bplusplus 1.1.0__py3-none-any.whl → 1.2.0__py3-none-any.whl

This diff represents the content of publicly available package versions that have been released to one of the supported registries. The information contained in this diff is provided for informational purposes only and reflects changes between package versions as they appear in their respective public registries.

Potentially problematic release.

This version of bplusplus might be problematic.

- bplusplus/__init__.py +4 -2

- bplusplus/collect.py +69 -5

- bplusplus/hierarchical/test.py +670 -0

- bplusplus/hierarchical/train.py +676 -0

- bplusplus/prepare.py +228 -64

- bplusplus/resnet/test.py +473 -0

- bplusplus/resnet/train.py +329 -0

- bplusplus-1.2.0.dist-info/METADATA +249 -0

- bplusplus-1.2.0.dist-info/RECORD +12 -0

- bplusplus/yolov5detect/__init__.py +0 -1

- bplusplus/yolov5detect/detect.py +0 -444

- bplusplus/yolov5detect/export.py +0 -1530

- bplusplus/yolov5detect/insect.yaml +0 -8

- bplusplus/yolov5detect/models/__init__.py +0 -0

- bplusplus/yolov5detect/models/common.py +0 -1109

- bplusplus/yolov5detect/models/experimental.py +0 -130

- bplusplus/yolov5detect/models/hub/anchors.yaml +0 -56

- bplusplus/yolov5detect/models/hub/yolov3-spp.yaml +0 -52

- bplusplus/yolov5detect/models/hub/yolov3-tiny.yaml +0 -42

- bplusplus/yolov5detect/models/hub/yolov3.yaml +0 -52

- bplusplus/yolov5detect/models/hub/yolov5-bifpn.yaml +0 -49

- bplusplus/yolov5detect/models/hub/yolov5-fpn.yaml +0 -43

- bplusplus/yolov5detect/models/hub/yolov5-p2.yaml +0 -55

- bplusplus/yolov5detect/models/hub/yolov5-p34.yaml +0 -42

- bplusplus/yolov5detect/models/hub/yolov5-p6.yaml +0 -57

- bplusplus/yolov5detect/models/hub/yolov5-p7.yaml +0 -68

- bplusplus/yolov5detect/models/hub/yolov5-panet.yaml +0 -49

- bplusplus/yolov5detect/models/hub/yolov5l6.yaml +0 -61

- bplusplus/yolov5detect/models/hub/yolov5m6.yaml +0 -61

- bplusplus/yolov5detect/models/hub/yolov5n6.yaml +0 -61

- bplusplus/yolov5detect/models/hub/yolov5s-LeakyReLU.yaml +0 -50

- bplusplus/yolov5detect/models/hub/yolov5s-ghost.yaml +0 -49

- bplusplus/yolov5detect/models/hub/yolov5s-transformer.yaml +0 -49

- bplusplus/yolov5detect/models/hub/yolov5s6.yaml +0 -61

- bplusplus/yolov5detect/models/hub/yolov5x6.yaml +0 -61

- bplusplus/yolov5detect/models/segment/yolov5l-seg.yaml +0 -49

- bplusplus/yolov5detect/models/segment/yolov5m-seg.yaml +0 -49

- bplusplus/yolov5detect/models/segment/yolov5n-seg.yaml +0 -49

- bplusplus/yolov5detect/models/segment/yolov5s-seg.yaml +0 -49

- bplusplus/yolov5detect/models/segment/yolov5x-seg.yaml +0 -49

- bplusplus/yolov5detect/models/tf.py +0 -797

- bplusplus/yolov5detect/models/yolo.py +0 -495

- bplusplus/yolov5detect/models/yolov5l.yaml +0 -49

- bplusplus/yolov5detect/models/yolov5m.yaml +0 -49

- bplusplus/yolov5detect/models/yolov5n.yaml +0 -49

- bplusplus/yolov5detect/models/yolov5s.yaml +0 -49

- bplusplus/yolov5detect/models/yolov5x.yaml +0 -49

- bplusplus/yolov5detect/utils/__init__.py +0 -97

- bplusplus/yolov5detect/utils/activations.py +0 -134

- bplusplus/yolov5detect/utils/augmentations.py +0 -448

- bplusplus/yolov5detect/utils/autoanchor.py +0 -175

- bplusplus/yolov5detect/utils/autobatch.py +0 -70

- bplusplus/yolov5detect/utils/aws/__init__.py +0 -0

- bplusplus/yolov5detect/utils/aws/mime.sh +0 -26

- bplusplus/yolov5detect/utils/aws/resume.py +0 -41

- bplusplus/yolov5detect/utils/aws/userdata.sh +0 -27

- bplusplus/yolov5detect/utils/callbacks.py +0 -72

- bplusplus/yolov5detect/utils/dataloaders.py +0 -1385

- bplusplus/yolov5detect/utils/docker/Dockerfile +0 -73

- bplusplus/yolov5detect/utils/docker/Dockerfile-arm64 +0 -40

- bplusplus/yolov5detect/utils/docker/Dockerfile-cpu +0 -42

- bplusplus/yolov5detect/utils/downloads.py +0 -136

- bplusplus/yolov5detect/utils/flask_rest_api/README.md +0 -70

- bplusplus/yolov5detect/utils/flask_rest_api/example_request.py +0 -17

- bplusplus/yolov5detect/utils/flask_rest_api/restapi.py +0 -49

- bplusplus/yolov5detect/utils/general.py +0 -1294

- bplusplus/yolov5detect/utils/google_app_engine/Dockerfile +0 -25

- bplusplus/yolov5detect/utils/google_app_engine/additional_requirements.txt +0 -6

- bplusplus/yolov5detect/utils/google_app_engine/app.yaml +0 -16

- bplusplus/yolov5detect/utils/loggers/__init__.py +0 -476

- bplusplus/yolov5detect/utils/loggers/clearml/README.md +0 -222

- bplusplus/yolov5detect/utils/loggers/clearml/__init__.py +0 -0

- bplusplus/yolov5detect/utils/loggers/clearml/clearml_utils.py +0 -230

- bplusplus/yolov5detect/utils/loggers/clearml/hpo.py +0 -90

- bplusplus/yolov5detect/utils/loggers/comet/README.md +0 -250

- bplusplus/yolov5detect/utils/loggers/comet/__init__.py +0 -551

- bplusplus/yolov5detect/utils/loggers/comet/comet_utils.py +0 -151

- bplusplus/yolov5detect/utils/loggers/comet/hpo.py +0 -126

- bplusplus/yolov5detect/utils/loggers/comet/optimizer_config.json +0 -135

- bplusplus/yolov5detect/utils/loggers/wandb/__init__.py +0 -0

- bplusplus/yolov5detect/utils/loggers/wandb/wandb_utils.py +0 -210

- bplusplus/yolov5detect/utils/loss.py +0 -259

- bplusplus/yolov5detect/utils/metrics.py +0 -381

- bplusplus/yolov5detect/utils/plots.py +0 -517

- bplusplus/yolov5detect/utils/segment/__init__.py +0 -0

- bplusplus/yolov5detect/utils/segment/augmentations.py +0 -100

- bplusplus/yolov5detect/utils/segment/dataloaders.py +0 -366

- bplusplus/yolov5detect/utils/segment/general.py +0 -160

- bplusplus/yolov5detect/utils/segment/loss.py +0 -198

- bplusplus/yolov5detect/utils/segment/metrics.py +0 -225

- bplusplus/yolov5detect/utils/segment/plots.py +0 -152

- bplusplus/yolov5detect/utils/torch_utils.py +0 -482

- bplusplus/yolov5detect/utils/triton.py +0 -90

- bplusplus-1.1.0.dist-info/METADATA +0 -179

- bplusplus-1.1.0.dist-info/RECORD +0 -92

- {bplusplus-1.1.0.dist-info → bplusplus-1.2.0.dist-info}/LICENSE +0 -0

- {bplusplus-1.1.0.dist-info → bplusplus-1.2.0.dist-info}/WHEEL +0 -0

|

@@ -1,222 +0,0 @@

|

|

|

1

|

-

# ClearML Integration

|

|

2

|

-

|

|

3

|

-

<img align="center" src="https://github.com/thepycoder/clearml_screenshots/raw/main/logos_dark.png#gh-light-mode-only" alt="Clear|ML"><img align="center" src="https://github.com/thepycoder/clearml_screenshots/raw/main/logos_light.png#gh-dark-mode-only" alt="Clear|ML">

|

|

4

|

-

|

|

5

|

-

## About ClearML

|

|

6

|

-

|

|

7

|

-

[ClearML](https://clear.ml) is an [open-source](https://github.com/allegroai/clearml) toolbox designed to save you time ⏱️.

|

|

8

|

-

|

|

9

|

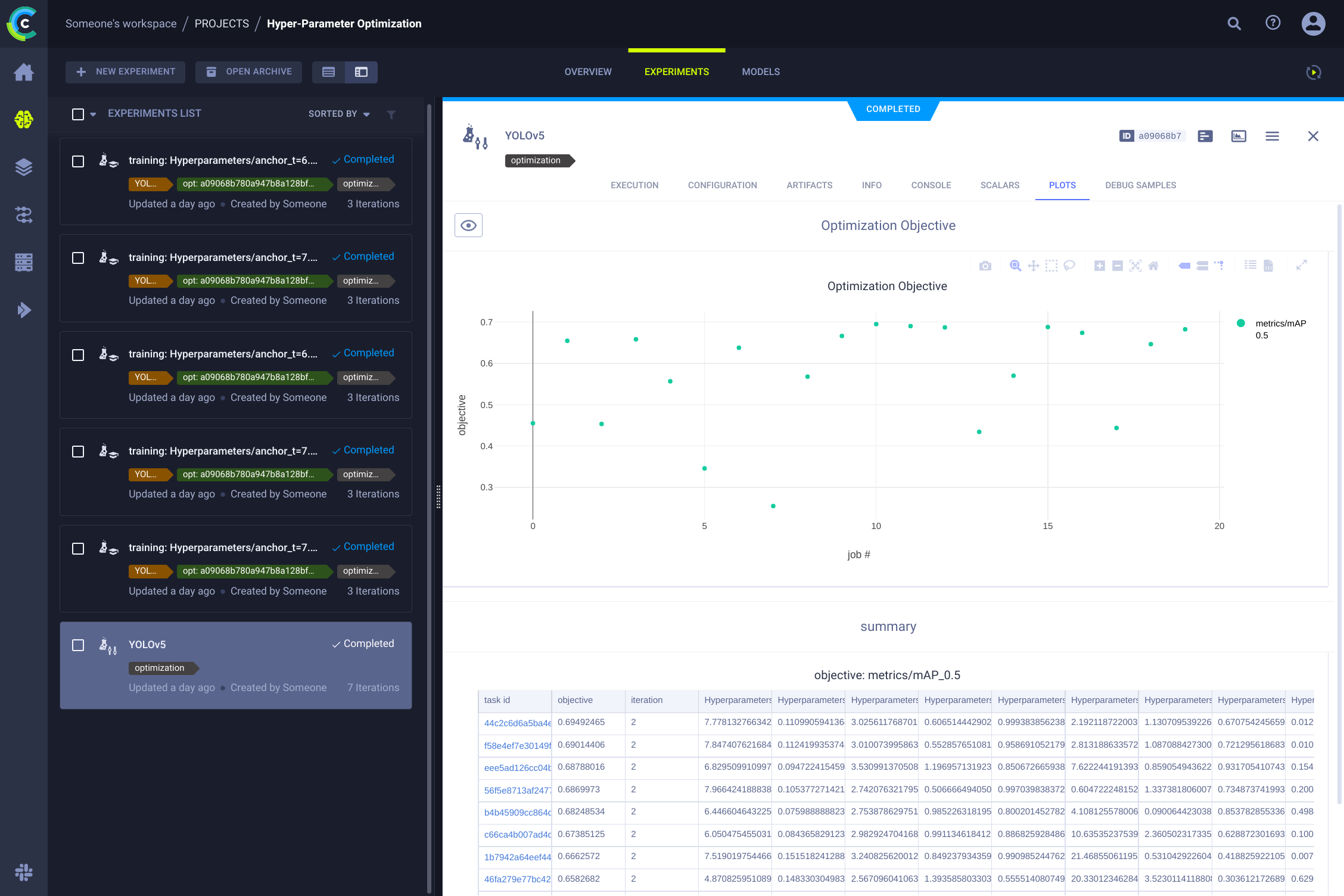

-

🔨 Track every YOLOv5 training run in the <b>experiment manager</b>

|

|

10

|

-

|

|

11

|

-

🔧 Version and easily access your custom training data with the integrated ClearML <b>Data Versioning Tool</b>

|

|

12

|

-

|

|

13

|

-

🔦 <b>Remotely train and monitor</b> your YOLOv5 training runs using ClearML Agent

|

|

14

|

-

|

|

15

|

-

🔬 Get the very best mAP using ClearML <b>Hyperparameter Optimization</b>

|

|

16

|

-

|

|

17

|

-

🔭 Turn your newly trained <b>YOLOv5 model into an API</b> with just a few commands using ClearML Serving

|

|

18

|

-

|

|

19

|

-

And so much more. It's up to you how many of these tools you want to use: you can stick to the experiment manager, or chain them all together into an impressive pipeline!

|

|

20

|

-

|

|

21

|

-

|

|

22

|

-

|

|

23

|

-

## 🦾 Setting Things Up

|

|

24

|

-

|

|

25

|

-

To keep track of your experiments and/or data, ClearML needs to communicate with a server. You have two options to get one:

|

|

26

|

-

|

|

27

|

-

Either sign up for free for the [ClearML Hosted Service](https://clear.ml), or set up your own server (see the [server deployment docs](https://clear.ml/docs/latest/docs/deploying_clearml/clearml_server)). Even the server is open-source, so even if you're dealing with sensitive data, you should be good to go!

|

|

28

|

-

|

|

29

|

-

1. Install the `clearml` python package:

|

|

30

|

-

|

|

31

|

-

```bash

|

|

32

|

-

pip install clearml

|

|

33

|

-

```

|

|

34

|

-

|

|

35

|

-

2. Connect the ClearML SDK to the server by [creating credentials](https://app.clear.ml/settings/workspace-configuration) (in the top right, go to Settings -> Workspace -> Create new credentials), then execute the command below and follow the instructions:

|

|

36

|

-

|

|

37

|

-

```bash

|

|

38

|

-

clearml-init

|

|

39

|

-

```

|

|

40

|

-

|

|

41

|

-

That's it! You're done 😎

|

|

42

|

-

|

|

43

|

-

## 🚀 Training YOLOv5 With ClearML

|

|

44

|

-

|

|

45

|

-

To enable ClearML experiment tracking, simply install the ClearML pip package.

|

|

46

|

-

|

|

47

|

-

```bash

|

|

48

|

-

pip install "clearml>=1.2.0"

|

|

49

|

-

```

|

|

50

|

-

|

|

51

|

-

This will enable integration with the YOLOv5 training script. Every training run from now on will be captured and stored by the ClearML experiment manager.

|

|

52

|

-

|

|

53

|

-

If you want to change the `project_name` or `task_name`, use the `--project` and `--name` arguments of the `train.py` script. By default the project will be called `YOLOv5` and the task `Training`. PLEASE NOTE: ClearML uses `/` as a delimiter for subprojects, so be careful when using `/` in your project name!
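The naming rule can be sketched in a few lines of Python. This is a minimal illustration that follows the defaulting logic visible in the removed `clearml_utils.py` further down in this diff; the helper names `resolve_clearml_names` and `split_subprojects` are hypothetical, and the sketch assumes the training script's own defaults are a `runs/...` project path and the name `exp`.

```python
from typing import List, Tuple


def resolve_clearml_names(project: str, name: str) -> Tuple[str, str]:
    """Return the (project_name, task_name) pair ClearML would receive.

    Hypothetical helper: a project path starting with "runs/" is treated as
    "not set by the user" and mapped to "YOLOv5"; the name "exp" is mapped
    to "Training".
    """
    project_name = "YOLOv5" if str(project).startswith("runs/") else project
    task_name = name if name != "exp" else "Training"
    return project_name, task_name


def split_subprojects(project_name: str) -> List[str]:
    """Show how a '/' in the project name is read as nested subprojects."""
    return [part for part in project_name.split("/") if part]


if __name__ == "__main__":
    print(resolve_clearml_names("runs/train", "exp"))
    print(resolve_clearml_names("my_project", "my_training"))
    print(split_subprojects("team/vision"))
```

Passing a name such as `team/vision` therefore yields two nesting levels rather than one project with a slash in its title.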

|

|

54

|

-

|

|

55

|

-

```bash

|

|

56

|

-

python train.py --img 640 --batch 16 --epochs 3 --data coco128.yaml --weights yolov5s.pt --cache

|

|

57

|

-

```

|

|

58

|

-

|

|

59

|

-

or with custom project and task name:

|

|

60

|

-

|

|

61

|

-

```bash

|

|

62

|

-

python train.py --project my_project --name my_training --img 640 --batch 16 --epochs 3 --data coco128.yaml --weights yolov5s.pt --cache

|

|

63

|

-

```

|

|

64

|

-

|

|

65

|

-

This will capture:

|

|

66

|

-

|

|

67

|

-

- Source code + uncommitted changes

|

|

68

|

-

- Installed packages

|

|

69

|

-

- (Hyper)parameters

|

|

70

|

-

- Model files (use `--save-period n` to save a checkpoint every n epochs)

|

|

71

|

-

- Console output

|

|

72

|

-

- Scalars (mAP_0.5, mAP_0.5:0.95, precision, recall, losses, learning rates, ...)

|

|

73

|

-

- General info such as machine details, runtime, creation date etc.

|

|

74

|

-

- All produced plots such as label correlogram and confusion matrix

|

|

75

|

-

- Images with bounding boxes per epoch

|

|

76

|

-

- Mosaic per epoch

|

|

77

|

-

- Validation images per epoch

|

|

78

|

-

- ...

|

|

79

|

-

|

|

80

|

-

That's a lot, right? 🤯 Now, we can visualize all of this information in the ClearML UI to get an overview of our training progress. Add custom columns to the table view (such as mAP_0.5) so you can easily sort on the best performing model. Or select multiple experiments and directly compare them!

|

|

81

|

-

|

|

82

|

-

There's even more we can do with all of this information, like hyperparameter optimization and remote execution, so keep reading if you want to see how that works!

|

|

83

|

-

|

|

84

|

-

## 🔗 Dataset Version Management

|

|

85

|

-

|

|

86

|

-

Versioning your data separately from your code is generally a good idea and makes it easy to acquire the latest version too. This repository supports supplying a dataset version ID, and it will make sure to get the data if it's not there yet. Next to that, this workflow also saves the used dataset ID as part of the task parameters, so you will always know for sure which data was used in which experiment!

|

|

87

|

-

|

|

88

|

-

|

|

89

|

-

|

|

90

|

-

### Prepare Your Dataset

|

|

91

|

-

|

|

92

|

-

The YOLOv5 repository supports a number of different datasets by using yaml files containing their information. By default datasets are downloaded to the `../datasets` folder in relation to the repository root folder. So if you downloaded the `coco128` dataset using the link in the yaml or with the scripts provided by yolov5, you get this folder structure:

|

|

93

|

-

|

|

94

|

-

```

|

|

95

|

-

..

|

|

96

|

-

|_ yolov5

|

|

97

|

-

|_ datasets

|

|

98

|

-

|_ coco128

|

|

99

|

-

|_ images

|

|

100

|

-

|_ labels

|

|

101

|

-

|_ LICENSE

|

|

102

|

-

|_ README.txt

|

|

103

|

-

```

|

|

104

|

-

|

|

105

|

-

But this can be any dataset you wish. Feel free to use your own, as long as you keep to this folder structure.

|

|

106

|

-

|

|

107

|

-

Next, ⚠️**copy the corresponding yaml file to the root of the dataset folder**⚠️. This yaml file contains the information ClearML will need to properly use the dataset. You can make this yourself too, of course; just follow the structure of the example yamls.

|

|

108

|

-

|

|

109

|

-

Basically we need the following keys: `path`, `train`, `test`, `val`, `nc`, `names`.
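A quick pre-upload check of the yaml contents might look like the sketch below. It works on an already-parsed dictionary so that it needs no third-party packages. The function name `missing_dataset_keys` is hypothetical, and the required set is an assumption taken from the assertion in the removed `clearml_utils.py` later in this diff, which checks `train`, `test`, `val`, `nc`, and `names` but not `path`.

```python
# Keys the removed loader asserts on; `path` is listed in the README text
# but is not part of that assertion, so it is left out here (assumption).
REQUIRED_KEYS = {"train", "test", "val", "nc", "names"}


def missing_dataset_keys(definition: dict) -> set:
    """Return the required keys that are absent from a parsed dataset yaml."""
    return REQUIRED_KEYS - set(definition)


if __name__ == "__main__":
    example = {
        "path": "../datasets/coco128",
        "train": "images/train2017",
        "val": "images/train2017",
        "test": None,
        "nc": 2,
        "names": ["person", "bicycle"],
    }
    # An empty set means every required key is present.
    print(missing_dataset_keys(example))
```

Note that a key may be present with an empty value, as `test` is here; the check only concerns whether the key exists.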

|

|

110

|

-

|

|

111

|

-

```

|

|

112

|

-

..

|

|

113

|

-

|_ yolov5

|

|

114

|

-

|_ datasets

|

|

115

|

-

|_ coco128

|

|

116

|

-

|_ images

|

|

117

|

-

|_ labels

|

|

118

|

-

|_ coco128.yaml # <---- HERE!

|

|

119

|

-

|_ LICENSE

|

|

120

|

-

|_ README.txt

|

|

121

|

-

```

|

|

122

|

-

|

|

123

|

-

### Upload Your Dataset

|

|

124

|

-

|

|

125

|

-

To get this dataset into ClearML as a versioned dataset, go to the dataset root folder and run the following command:

|

|

126

|

-

|

|

127

|

-

```bash

|

|

128

|

-

cd coco128

|

|

129

|

-

clearml-data sync --project YOLOv5 --name coco128 --folder .

|

|

130

|

-

```

|

|

131

|

-

|

|

132

|

-

The command `clearml-data sync` is actually a shorthand command. You could also run these commands one after the other:

|

|

133

|

-

|

|

134

|

-

```bash

|

|

135

|

-

# Optionally add --parent <parent_dataset_id> if you want to base

|

|

136

|

-

# this version on another dataset version, so no duplicate files are uploaded!

|

|

137

|

-

clearml-data create --name coco128 --project YOLOv5

|

|

138

|

-

clearml-data add --files .

|

|

139

|

-

clearml-data close

|

|

140

|

-

```

|

|

141

|

-

|

|

142

|

-

### Run Training Using A ClearML Dataset

|

|

143

|

-

|

|

144

|

-

Now that you have a ClearML dataset, you can very simply use it to train custom YOLOv5 🚀 models!

|

|

145

|

-

|

|

146

|

-

```bash

|

|

147

|

-

python train.py --img 640 --batch 16 --epochs 3 --data clearml://<your_dataset_id> --weights yolov5s.pt --cache

|

|

148

|

-

```
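The `clearml://` prefix is what marks the `--data` value as a dataset ID and not a yaml path. A minimal sketch of that dispatch follows; the removed loader in this diff strips the prefix with `str.replace`, whereas this version slices it off, and the function name `parse_dataset_id` is hypothetical.

```python
from typing import Optional

CLEARML_PREFIX = "clearml://"


def parse_dataset_id(data_arg: str) -> Optional[str]:
    """Return the dataset ID if the argument uses the clearml:// scheme.

    Returns None for ordinary values such as "coco128.yaml", which should
    be handled as local dataset definitions instead.
    """
    if not data_arg.startswith(CLEARML_PREFIX):
        return None
    return data_arg[len(CLEARML_PREFIX):]


if __name__ == "__main__":
    print(parse_dataset_id("clearml://abc123"))
    print(parse_dataset_id("coco128.yaml"))
```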

|

|

149

|

-

|

|

150

|

-

## 👀 Hyperparameter Optimization

|

|

151

|

-

|

|

152

|

-

Now that we have our experiments and data versioned, it's time to take a look at what we can build on top!

|

|

153

|

-

|

|

154

|

-

Using the code information, installed packages and environment details, the experiment itself is now **completely reproducible**. In fact, ClearML allows you to clone an experiment and even change its parameters. We can then just rerun it with these new parameters automatically. This is basically what HPO does!

|

|

155

|

-

|

|

156

|

-

To **run hyperparameter optimization locally**, we've included a pre-made script for you. Just make sure a training task has been run at least once so that it is in the ClearML experiment manager; we will essentially clone it and change its hyperparameters.

|

|

157

|

-

|

|

158

|

-

You'll need to fill in the ID of this `template task` in the script found at `utils/loggers/clearml/hpo.py` and then just run it :) You can change `task.execute_locally()` to `task.execute()` to put it in a ClearML queue and have a remote agent work on it instead.

|

|

159

|

-

|

|

160

|

-

```bash

|

|

161

|

-

# To use optuna, install it first, otherwise you can change the optimizer to just be RandomSearch

|

|

162

|

-

pip install optuna

|

|

163

|

-

python utils/loggers/clearml/hpo.py

|

|

164

|

-

```
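The comment above mentions falling back to random search. As a rough, library-free illustration of what that strategy does (this is not the ClearML optimizer itself), the sketch below samples each hyperparameter uniformly from a range and keeps the best-scoring trial. The two ranges are copied from the removed `hpo.py` in this diff; `toy_objective` is a hypothetical stand-in for a real training run that would report a metric such as mAP.

```python
import random
from typing import Callable, Dict, Tuple

# Ranges taken from the removed hpo.py: (min_value, max_value).
SEARCH_SPACE = {
    "Hyperparameters/lr0": (1e-5, 1e-1),
    "Hyperparameters/momentum": (0.6, 0.98),
}


def sample_trial(space: Dict[str, Tuple[float, float]], rng: random.Random) -> Dict[str, float]:
    """Draw one value per hyperparameter, uniformly within its range."""
    return {name: rng.uniform(low, high) for name, (low, high) in space.items()}


def random_search(
    objective: Callable[[Dict[str, float]], float],
    space: Dict[str, Tuple[float, float]],
    trials: int,
    seed: int = 0,
) -> Tuple[Dict[str, float], float]:
    """Run `trials` independent samples and return the best (params, score)."""
    rng = random.Random(seed)
    best_params, best_score = None, float("-inf")
    for _ in range(trials):
        params = sample_trial(space, rng)
        score = objective(params)
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score


def toy_objective(params: Dict[str, float]) -> float:
    """Hypothetical score: prefers a learning rate close to 0.01."""
    return -abs(params["Hyperparameters/lr0"] - 0.01)


if __name__ == "__main__":
    print(random_search(toy_objective, SEARCH_SPACE, trials=20))
```

In the real workflow each trial would be a clone of the template task with these values substituted, which is far more expensive than the toy objective used here.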

|

|

165

|

-

|

|

166

|

-

|

|

167

|

-

|

|

168

|

-

## 🤯 Remote Execution (advanced)

|

|

169

|

-

|

|

170

|

-

Running HPO locally is really handy, but what if we want to run our experiments on a remote machine instead? Maybe you have access to a very powerful GPU machine on-site, or you have some budget to use cloud GPUs. This is where the ClearML Agent comes into play. Check out what the agent can do here:

|

|

171

|

-

|

|

172

|

-

- [YouTube video](https://www.youtube.com/watch?v=MX3BrXnaULs&feature=youtu.be)

|

|

173

|

-

- [Documentation](https://clear.ml/docs/latest/docs/clearml_agent)

|

|

174

|

-

|

|

175

|

-

In short: every experiment tracked by the experiment manager contains enough information to reproduce it on a different machine (installed packages, uncommitted changes etc.). So a ClearML agent does just that: it listens to a queue for incoming tasks and when it finds one, it recreates the environment and runs it while still reporting scalars, plots etc. to the experiment manager.

|

|

176

|

-

|

|

177

|

-

You can turn any machine (a cloud VM, a local GPU machine, your own laptop ... ) into a ClearML agent by simply running:

|

|

178

|

-

|

|

179

|

-

```bash

|

|

180

|

-

clearml-agent daemon --queue <queues_to_listen_to> [--docker]

|

|

181

|

-

```
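The queue-and-worker idea described above can be pictured with a toy, in-process sketch. Nothing here is the ClearML agent's actual implementation: `drain_queue` and the `execute` callback are hypothetical, and unlike the real daemon, which keeps listening, this loop stops once the queue is empty.

```python
import queue
from typing import Callable, List


def drain_queue(task_queue: "queue.Queue", execute: Callable) -> List:
    """Pull tasks off the queue one at a time and run each of them.

    A real agent would recreate the task's environment before running it
    and would block while waiting for new work; this toy version simply
    returns when no tasks are left.
    """
    results = []
    while True:
        try:
            task = task_queue.get_nowait()
        except queue.Empty:
            break
        results.append(execute(task))
    return results


if __name__ == "__main__":
    pending = queue.Queue()
    for task_name in ["train-run-1", "train-run-2"]:
        pending.put(task_name)
    print(drain_queue(pending, lambda name: f"finished {name}"))
```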

|

|

182

|

-

|

|

183

|

-

### Cloning, Editing And Enqueuing

|

|

184

|

-

|

|

185

|

-

With our agent running, we can give it some work. Remember from the HPO section that we can clone a task and edit the hyperparameters? We can do that from the interface too!

|

|

186

|

-

|

|

187

|

-

🪄 Clone the experiment by right-clicking it

|

|

188

|

-

|

|

189

|

-

🎯 Edit the hyperparameters to what you wish them to be

|

|

190

|

-

|

|

191

|

-

⏳ Enqueue the task to any of the queues by right-clicking it

|

|

192

|

-

|

|

193

|

-

|

|

194

|

-

|

|

195

|

-

### Executing A Task Remotely

|

|

196

|

-

|

|

197

|

-

Now you can clone a task like we explained above, or simply mark your current script by adding `task.execute_remotely()` and on execution it will be put into a queue, for the agent to start working on!

|

|

198

|

-

|

|

199

|

-

To run the YOLOv5 training script remotely, all you have to do is add this line to the `train.py` script after the ClearML logger has been instantiated:

|

|

200

|

-

|

|

201

|

-

```python

|

|

202

|

-

# ...

|

|

203

|

-

# Loggers

|

|

204

|

-

data_dict = None

|

|

205

|

-

if RANK in {-1, 0}:

|

|

206

|

-

loggers = Loggers(save_dir, weights, opt, hyp, LOGGER) # loggers instance

|

|

207

|

-

if loggers.clearml:

|

|

208

|

-

loggers.clearml.task.execute_remotely(queue="my_queue") # <------ ADD THIS LINE

|

|

209

|

-

# data_dict is either None if the user did not choose a ClearML dataset, or is filled in by ClearML

|

|

210

|

-

data_dict = loggers.clearml.data_dict

|

|

211

|

-

# ...

|

|

212

|

-

```

|

|

213

|

-

|

|

214

|

-

When running the training script after this change, Python will run the script up until that line, after which it will package the code and send it to the queue instead!

|

|

215

|

-

|

|

216

|

-

### Autoscaling workers

|

|

217

|

-

|

|

218

|

-

ClearML comes with autoscalers too! This tool will automatically spin up new remote machines in the cloud of your choice (AWS, GCP, Azure) and turn them into ClearML agents for you whenever there are experiments detected in the queue. Once the tasks are processed, the autoscaler will automatically shut down the remote machines, and you stop paying!

|

|

219

|

-

|

|

220

|

-

Check out the autoscalers getting started video below.

|

|

221

|

-

|

|

222

|

-

[Watch the video](https://youtu.be/j4XVMAaUt3E)

|

|

File without changes

|

|

@@ -1,230 +0,0 @@

|

|

|

1

|

-

# Ultralytics YOLOv5 🚀, AGPL-3.0 license

|

|

2

|

-

"""Main Logger class for ClearML experiment tracking."""

|

|

3

|

-

|

|

4

|

-

import glob

|

|

5

|

-

import re

|

|

6

|

-

from pathlib import Path

|

|

7

|

-

|

|

8

|

-

import matplotlib.image as mpimg

|

|

9

|

-

import matplotlib.pyplot as plt

|

|

10

|

-

import numpy as np

|

|

11

|

-

import yaml

|

|

12

|

-

from ultralytics.utils.plotting import Annotator, colors

|

|

13

|

-

|

|

14

|

-

try:

|

|

15

|

-

import clearml

|

|

16

|

-

from clearml import Dataset, Task

|

|

17

|

-

|

|

18

|

-

assert hasattr(clearml, "__version__") # verify package import not local dir

|

|

19

|

-

except (ImportError, AssertionError):

|

|

20

|

-

clearml = None

|

|

21

|

-

|

|

22

|

-

|

|

23

|

-

def construct_dataset(clearml_info_string):

|

|

24

|

-

"""Load in a clearml dataset and fill the internal data_dict with its contents."""

|

|

25

|

-

dataset_id = clearml_info_string.replace("clearml://", "")

|

|

26

|

-

dataset = Dataset.get(dataset_id=dataset_id)

|

|

27

|

-

dataset_root_path = Path(dataset.get_local_copy())

|

|

28

|

-

|

|

29

|

-

# We'll search for the yaml file definition in the dataset

|

|

30

|

-

yaml_filenames = list(glob.glob(str(dataset_root_path / "*.yaml")) + glob.glob(str(dataset_root_path / "*.yml")))

|

|

31

|

-

if len(yaml_filenames) > 1:

|

|

32

|

-

raise ValueError(

|

|

33

|

-

"More than one yaml file was found in the dataset root, cannot determine which one contains "

|

|

34

|

-

"the dataset definition this way."

|

|

35

|

-

)

|

|

36

|

-

elif not yaml_filenames:

|

|

37

|

-

raise ValueError(

|

|

38

|

-

"No yaml definition found in dataset root path, check that there is a correct yaml file "

|

|

39

|

-

"inside the dataset root path."

|

|

40

|

-

)

|

|

41

|

-

with open(yaml_filenames[0]) as f:

|

|

42

|

-

dataset_definition = yaml.safe_load(f)

|

|

43

|

-

|

|

44

|

-

assert set(

|

|

45

|

-

dataset_definition.keys()

|

|

46

|

-

).issuperset(

|

|

47

|

-

{"train", "test", "val", "nc", "names"}

|

|

48

|

-

), "The right keys were not found in the yaml file, make sure it at least has the following keys: ('train', 'test', 'val', 'nc', 'names')"

|

|

49

|

-

|

|

50

|

-

data_dict = {

|

|

51

|

-

"train": (

|

|

52

|

-

str((dataset_root_path / dataset_definition["train"]).resolve()) if dataset_definition["train"] else None

|

|

53

|

-

)

|

|

54

|

-

}

|

|

55

|

-

data_dict["test"] = (

|

|

56

|

-

str((dataset_root_path / dataset_definition["test"]).resolve()) if dataset_definition["test"] else None

|

|

57

|

-

)

|

|

58

|

-

data_dict["val"] = (

|

|

59

|

-

str((dataset_root_path / dataset_definition["val"]).resolve()) if dataset_definition["val"] else None

|

|

60

|

-

)

|

|

61

|

-

data_dict["nc"] = dataset_definition["nc"]

|

|

62

|

-

data_dict["names"] = dataset_definition["names"]

|

|

63

|

-

|

|

64

|

-

return data_dict

|

|

65

|

-

|

|

66

|

-

|

|

67

|

-

class ClearmlLogger:

|

|

68

|

-

"""

|

|

69

|

-

Log training runs, datasets, models, and predictions to ClearML.

|

|

70

|

-

|

|

71

|

-

This logger sends information to ClearML at app.clear.ml or to your own hosted server. By default, this information

|

|

72

|

-

includes hyperparameters, system configuration and metrics, model metrics, code information and basic data metrics

|

|

73

|

-

and analyses.

|

|

74

|

-

|

|

75

|

-

By providing additional command line arguments to train.py, datasets, models and predictions can also be logged.

|

|

76

|

-

"""

|

|

77

|

-

|

|

78

|

-

def __init__(self, opt, hyp):

|

|

79

|

-

"""

|

|

80

|

-

- Initialize ClearML Task, this object will capture the experiment

|

|

81

|

-

- Upload dataset version to ClearML Data if opt.upload_dataset is True.

|

|

82

|

-

|

|

83

|

-

Arguments:

|

|

84

|

-

opt (namespace) -- Commandline arguments for this run

|

|

85

|

-

hyp (dict) -- Hyperparameters for this run

|

|

86

|

-

|

|

87

|

-

"""

|

|

88

|

-

self.current_epoch = 0

|

|

89

|

-

# Keep track of the logged images to enforce a per-epoch limit

|

|

90

|

-

self.current_epoch_logged_images = set()

|

|

91

|

-

# Maximum number of images to log to clearML per epoch

|

|

92

|

-

self.max_imgs_to_log_per_epoch = 16

|

|

93

|

-

# Get the interval of epochs when bounding box images should be logged

|

|

94

|

-

# Only for detection task though!

|

|

95

|

-

if "bbox_interval" in opt:

|

|

96

|

-

self.bbox_interval = opt.bbox_interval

|

|

97

|

-

self.clearml = clearml

|

|

98

|

-

self.task = None

|

|

99

|

-

self.data_dict = None

|

|

100

|

-

if self.clearml:

|

|

101

|

-

self.task = Task.init(

|

|

102

|

-

project_name="YOLOv5" if str(opt.project).startswith("runs/") else opt.project,

|

|

103

|

-

task_name=opt.name if opt.name != "exp" else "Training",

|

|

104

|

-

tags=["YOLOv5"],

|

|

105

|

-

output_uri=True,

|

|

106

|

-

reuse_last_task_id=opt.exist_ok,

|

|

107

|

-

auto_connect_frameworks={"pytorch": False, "matplotlib": False},

|

|

108

|

-

# We disconnect pytorch auto-detection, because we added manual model save points in the code

|

|

109

|

-

)

|

|

110

|

-

# ClearML's hooks will already grab all general parameters

|

|

111

|

-

# Only the hyperparameters coming from the yaml config file

|

|

112

|

-

# will have to be added manually!

|

|

113

|

-

self.task.connect(hyp, name="Hyperparameters")

|

|

114

|

-

self.task.connect(opt, name="Args")

|

|

115

|

-

|

|

116

|

-

# Make sure the code is easily remotely runnable by setting the docker image to use by the remote agent

|

|

117

|

-

self.task.set_base_docker(

|

|

118

|

-

"ultralytics/yolov5:latest",

|

|

119

|

-

docker_arguments='--ipc=host -e="CLEARML_AGENT_SKIP_PYTHON_ENV_INSTALL=1"',

|

|

120

|

-

docker_setup_bash_script="pip install clearml",

|

|

121

|

-

)

|

|

122

|

-

|

|

123

|

-

# Get ClearML Dataset Version if requested

|

|

124

|

-

if opt.data.startswith("clearml://"):

|

|

125

|

-

# data_dict should have the following keys:

|

|

126

|

-

# names, nc (number of classes), test, train, val (all three relative paths to ../datasets)

|

|

127

|

-

self.data_dict = construct_dataset(opt.data)

|

|

128

|

-

# Set data to data_dict because wandb will crash without this information and opt is the best way

|

|

129

|

-

# to give it to them

|

|

130

|

-

opt.data = self.data_dict

|

|

131

|

-

|

|

132

|

-

def log_scalars(self, metrics, epoch):

|

|

133

|

-

"""

|

|

134

|

-

Log scalars/metrics to ClearML.

|

|

135

|

-

|

|

136

|

-

Arguments:

|

|

137

|

-

metrics (dict) Metrics in dict format: {"metrics/mAP": 0.8, ...}

|

|

138

|

-

epoch (int) iteration number for the current set of metrics

|

|

139

|

-

"""

|

|

140

|

-

for k, v in metrics.items():

|

|

141

|

-

title, series = k.split("/")

|

|

142

|

-

self.task.get_logger().report_scalar(title, series, v, epoch)

|

|

143

|

-

|

|

144

|

-

def log_model(self, model_path, model_name, epoch=0):

|

|

145

|

-

"""

|

|

146

|

-

Log model weights to ClearML.

|

|

147

|

-

|

|

148

|

-

Arguments:

|

|

149

|

-

model_path (PosixPath or str) Path to the model weights

|

|

150

|

-

model_name (str) Name of the model visible in ClearML

|

|

151

|

-

epoch (int) Iteration / epoch of the model weights

|

|

152

|

-

"""

|

|

153

|

-

self.task.update_output_model(

|

|

154

|

-

model_path=str(model_path), name=model_name, iteration=epoch, auto_delete_file=False

|

|

155

|

-

)

|

|

156

|

-

|

|

157

|

-

def log_summary(self, metrics):

|

|

158

|

-

"""

|

|

159

|

-

Log final metrics to a summary table.

|

|

160

|

-

|

|

161

|

-

Arguments:

|

|

162

|

-

metrics (dict) Metrics in dict format: {"metrics/mAP": 0.8, ...}

|

|

163

|

-

"""

|

|

164

|

-

for k, v in metrics.items():

|

|

165

|

-

self.task.get_logger().report_single_value(k, v)

|

|

166

|

-

|

|

167

|

-

def log_plot(self, title, plot_path):

|

|

168

|

-

"""

|

|

169

|

-

Log image as plot in the plot section of ClearML.

|

|

170

|

-

|

|

171

|

-

Arguments:

|

|

172

|

-

title (str) Title of the plot

|

|

173

|

-

plot_path (PosixPath or str) Path to the saved image file

|

|

174

|

-

"""

|

|

175

|

-

img = mpimg.imread(plot_path)

|

|

176

|

-

fig = plt.figure()

|

|

177

|

-

ax = fig.add_axes([0, 0, 1, 1], frameon=False, aspect="auto", xticks=[], yticks=[]) # no ticks

|

|

178

|

-

ax.imshow(img)

|

|

179

|

-

|

|

180

|

-

self.task.get_logger().report_matplotlib_figure(title, "", figure=fig, report_interactive=False)

|

|

181

|

-

|

|

182

|

-

def log_debug_samples(self, files, title="Debug Samples"):

|

|

183

|

-

"""

|

|

184

|

-

Log files (images) as debug samples in the ClearML task.

|

|

185

|

-

|

|

186

|

-

Arguments:

|

|

187

|

-

files (List(PosixPath)) a list of file paths in PosixPath format

|

|

188

|

-

title (str) A title that groups together images with the same values

|

|

189

|

-

"""

|

|

190

|

-

for f in files:

|

|

191

|

-

if f.exists():

|

|

192

|

-

it = re.search(r"_batch(\d+)", f.name)

|

|

193

|

-

iteration = int(it.groups()[0]) if it else 0

|

|

194

|

-

self.task.get_logger().report_image(

|

|

195

|

-

title=title, series=f.name.replace(f"_batch{iteration}", ""), local_path=str(f), iteration=iteration

|

|

196

|

-

)

|

|

197

|

-

|

|

198

|

-

def log_image_with_boxes(self, image_path, boxes, class_names, image, conf_threshold=0.25):

|

|

199

|

-

"""

|

|

200

|

-

Draw the bounding boxes on a single image and report the result as a ClearML debug sample.

|

|

201

|

-

|

|

202

|

-

Arguments:

|

|

203

|

-

image_path (PosixPath) the path to the original image file

|

|

204

|

-

boxes (list): list of scaled predictions in the format - [xmin, ymin, xmax, ymax, confidence, class]

|

|

205

|

-

class_names (dict): dict containing mapping of class int to class name

|

|

206

|

-

image (Tensor): A torch tensor containing the actual image data

|

|

207

|

-

"""

|

|

208

|

-

if (

|

|

209

|

-

len(self.current_epoch_logged_images) < self.max_imgs_to_log_per_epoch

|

|

210

|

-

and self.current_epoch >= 0

|

|

211

|

-

and (self.current_epoch % self.bbox_interval == 0 and image_path not in self.current_epoch_logged_images)

|

|

212

|

-

):

|

|

213

|

-

im = np.ascontiguousarray(np.moveaxis(image.mul(255).clamp(0, 255).byte().cpu().numpy(), 0, 2))

|

|

214

|

-

annotator = Annotator(im=im, pil=True)

|

|

215

|

-

for i, (conf, class_nr, box) in enumerate(zip(boxes[:, 4], boxes[:, 5], boxes[:, :4])):

|

|

216

|

-

color = colors(i)

|

|

217

|

-

|

|

218

|

-

class_name = class_names[int(class_nr)]

|

|

219

|

-

confidence_percentage = round(float(conf) * 100, 2)

|

|

220

|

-

label = f"{class_name}: {confidence_percentage}%"

|

|

221

|

-

|

|

222

|

-

if conf > conf_threshold:

|

|

223

|

-

annotator.rectangle(box.cpu().numpy(), outline=color)

|

|

224

|

-

annotator.box_label(box.cpu().numpy(), label=label, color=color)

|

|

225

|

-

|

|

226

|

-

annotated_image = annotator.result()

|

|

227

|

-

self.task.get_logger().report_image(

|

|

228

|

-

title="Bounding Boxes", series=image_path.name, iteration=self.current_epoch, image=annotated_image

|

|

229

|

-

)

|

|

230

|

-

self.current_epoch_logged_images.add(image_path)

|

|

@@ -1,90 +0,0 @@

-# Ultralytics YOLOv5 🚀, AGPL-3.0 license
-
-from clearml import Task
-
-# Connecting ClearML with the current process,
-# from here on everything is logged automatically
-from clearml.automation import HyperParameterOptimizer, UniformParameterRange
-from clearml.automation.optuna import OptimizerOptuna
-
-task = Task.init(
-    project_name="Hyper-Parameter Optimization",
-    task_name="YOLOv5",
-    task_type=Task.TaskTypes.optimizer,
-    reuse_last_task_id=False,
-)
-
-# Example use case:
-optimizer = HyperParameterOptimizer(
-    # This is the experiment we want to optimize
-    base_task_id="<your_template_task_id>",
-    # here we define the hyper-parameters to optimize
-    # Notice: The parameter name should exactly match what you see in the UI: <section_name>/<parameter>
-    # For Example, here we see in the base experiment a section Named: "General"
-    # under it a parameter named "batch_size", this becomes "General/batch_size"
-    # If you have `argparse` for example, then arguments will appear under the "Args" section,
-    # and you should instead pass "Args/batch_size"
-    hyper_parameters=[
-        UniformParameterRange("Hyperparameters/lr0", min_value=1e-5, max_value=1e-1),
-        UniformParameterRange("Hyperparameters/lrf", min_value=0.01, max_value=1.0),
-        UniformParameterRange("Hyperparameters/momentum", min_value=0.6, max_value=0.98),
-        UniformParameterRange("Hyperparameters/weight_decay", min_value=0.0, max_value=0.001),
-        UniformParameterRange("Hyperparameters/warmup_epochs", min_value=0.0, max_value=5.0),
-        UniformParameterRange("Hyperparameters/warmup_momentum", min_value=0.0, max_value=0.95),
-        UniformParameterRange("Hyperparameters/warmup_bias_lr", min_value=0.0, max_value=0.2),
-        UniformParameterRange("Hyperparameters/box", min_value=0.02, max_value=0.2),
-        UniformParameterRange("Hyperparameters/cls", min_value=0.2, max_value=4.0),
-        UniformParameterRange("Hyperparameters/cls_pw", min_value=0.5, max_value=2.0),
-        UniformParameterRange("Hyperparameters/obj", min_value=0.2, max_value=4.0),
-        UniformParameterRange("Hyperparameters/obj_pw", min_value=0.5, max_value=2.0),
-        UniformParameterRange("Hyperparameters/iou_t", min_value=0.1, max_value=0.7),
-        UniformParameterRange("Hyperparameters/anchor_t", min_value=2.0, max_value=8.0),
-        UniformParameterRange("Hyperparameters/fl_gamma", min_value=0.0, max_value=4.0),
-        UniformParameterRange("Hyperparameters/hsv_h", min_value=0.0, max_value=0.1),
-        UniformParameterRange("Hyperparameters/hsv_s", min_value=0.0, max_value=0.9),
-        UniformParameterRange("Hyperparameters/hsv_v", min_value=0.0, max_value=0.9),
-        UniformParameterRange("Hyperparameters/degrees", min_value=0.0, max_value=45.0),
-        UniformParameterRange("Hyperparameters/translate", min_value=0.0, max_value=0.9),
-        UniformParameterRange("Hyperparameters/scale", min_value=0.0, max_value=0.9),
-        UniformParameterRange("Hyperparameters/shear", min_value=0.0, max_value=10.0),
-        UniformParameterRange("Hyperparameters/perspective", min_value=0.0, max_value=0.001),
-        UniformParameterRange("Hyperparameters/flipud", min_value=0.0, max_value=1.0),
-        UniformParameterRange("Hyperparameters/fliplr", min_value=0.0, max_value=1.0),
-        UniformParameterRange("Hyperparameters/mosaic", min_value=0.0, max_value=1.0),
-        UniformParameterRange("Hyperparameters/mixup", min_value=0.0, max_value=1.0),
-        UniformParameterRange("Hyperparameters/copy_paste", min_value=0.0, max_value=1.0),
-    ],
-    # this is the objective metric we want to maximize/minimize
-    objective_metric_title="metrics",
-    objective_metric_series="mAP_0.5",
-    # now we decide if we want to maximize it or minimize it (accuracy we maximize)
-    objective_metric_sign="max",
-    # let us limit the number of concurrent experiments,
-    # this in turn will make sure we don't bombard the scheduler with experiments.
-    # if we have an auto-scaler connected, this, by proxy, will limit the number of machine
-    max_number_of_concurrent_tasks=1,
-    # this is the optimizer class (actually doing the optimization)
-    # Currently, we can choose from GridSearch, RandomSearch or OptimizerBOHB (Bayesian optimization Hyper-Band)
-    optimizer_class=OptimizerOptuna,
-    # If specified only the top K performing Tasks will be kept, the others will be automatically archived
-    save_top_k_tasks_only=5,  # 5,
-    compute_time_limit=None,
-    total_max_jobs=20,
-    min_iteration_per_job=None,
-    max_iteration_per_job=None,
-)
-
-# report every 10 seconds, this is way too often, but we are testing here
-optimizer.set_report_period(10 / 60)
-# You can also use the line below instead to run all the optimizer tasks locally, without using queues or agent
-# an_optimizer.start_locally(job_complete_callback=job_complete_callback)
-# set the time limit for the optimization process (2 hours)
-optimizer.set_time_limit(in_minutes=120.0)
-# Start the optimization process in the local environment
-optimizer.start_locally()
-# wait until process is done (notice we are controlling the optimization process in the background)
-optimizer.wait()
-# make sure background optimization stopped
-optimizer.stop()
-
-print("We are done, good bye")
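The removed script names every hyper-parameter as `<section_name>/<parameter>` and gives each one a uniform range between a minimum and a maximum. The sketch below illustrates that naming scheme and uniform sampling using only the standard library. It is not ClearML's API: `UniformRange`, `qualified_name`, and `sample_config` are hypothetical stand-ins, and plain random sampling is swapped in here for the Optuna-based optimizer the script used.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class UniformRange:
    """Hypothetical stand-in for a uniform search range (not ClearML's class)."""

    name: str
    min_value: float
    max_value: float

    def sample(self, rng: random.Random) -> float:
        # Draw one value uniformly between the two bounds.
        return rng.uniform(self.min_value, self.max_value)


def qualified_name(section: str, parameter: str) -> str:
    """Join section and parameter in the '<section_name>/<parameter>' form."""
    return f"{section}/{parameter}"


# A small subset of the ranges listed in the removed script.
SEARCH_SPACE = [
    UniformRange(qualified_name("Hyperparameters", "lr0"), 1e-5, 1e-1),
    UniformRange(qualified_name("Hyperparameters", "momentum"), 0.6, 0.98),
    UniformRange(qualified_name("Hyperparameters", "weight_decay"), 0.0, 0.001),
]


def sample_config(space, rng):
    """Return one candidate configuration keyed by qualified parameter name."""
    return {r.name: r.sample(rng) for r in space}


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so repeated runs draw the same trials
    for trial in range(3):
        print(trial, sample_config(SEARCH_SPACE, rng))
```

A real optimizer would feed each sampled configuration into a cloned training task and compare the resulting objective metric; this sketch stops at producing the candidate configurations.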