@lobehub/chat 0.133.0 → 0.133.2

This diff represents the content of publicly available package versions that have been released to one of the supported registries. The information contained in this diff is provided for informational purposes only and reflects changes between package versions as they appear in their respective public registries.

- package/.eslintrc.js +16 -0

- package/CHANGELOG.md +1392 -2240

- package/README.md +7 -3

- package/README.zh-CN.md +7 -3

- package/contributing/Home.md +20 -28

- package/contributing/_Sidebar.md +1 -9

- package/docs/self-hosting/advanced/analytics.zh-CN.mdx +4 -2

- package/docs/self-hosting/advanced/authentication.mdx +32 -33

- package/docs/self-hosting/advanced/authentication.zh-CN.mdx +31 -34

- package/docs/self-hosting/advanced/upstream-sync.mdx +23 -26

- package/docs/self-hosting/advanced/upstream-sync.zh-CN.mdx +23 -26

- package/docs/self-hosting/environment-variables/basic.mdx +12 -14

- package/docs/self-hosting/environment-variables/basic.zh-CN.mdx +13 -15

- package/docs/self-hosting/environment-variables/model-provider.mdx +6 -2

- package/docs/self-hosting/environment-variables/model-provider.zh-CN.mdx +8 -5

- package/docs/self-hosting/environment-variables.mdx +11 -0

- package/docs/self-hosting/environment-variables.zh-CN.mdx +10 -0

- package/docs/self-hosting/examples/azure-openai.mdx +7 -7

- package/docs/self-hosting/examples/azure-openai.zh-CN.mdx +7 -7

- package/docs/self-hosting/faq/no-v1-suffix.mdx +0 -1

- package/docs/self-hosting/faq/proxy-with-unable-to-verify-leaf-signature.mdx +0 -1

- package/docs/self-hosting/platform/docker-compose.mdx +73 -79

- package/docs/self-hosting/platform/docker-compose.zh-CN.mdx +80 -86

- package/docs/self-hosting/platform/docker.mdx +96 -101

- package/docs/self-hosting/platform/docker.zh-CN.mdx +102 -107

- package/docs/self-hosting/platform/netlify.mdx +66 -145

- package/docs/self-hosting/platform/netlify.zh-CN.mdx +64 -143

- package/docs/self-hosting/platform/repocloud.mdx +7 -9

- package/docs/self-hosting/platform/repocloud.zh-CN.mdx +10 -12

- package/docs/self-hosting/platform/sealos.mdx +7 -9

- package/docs/self-hosting/platform/sealos.zh-CN.mdx +10 -12

- package/docs/self-hosting/platform/vercel.mdx +13 -15

- package/docs/self-hosting/platform/vercel.zh-CN.mdx +12 -15

- package/docs/self-hosting/platform/zeabur.mdx +7 -9

- package/docs/self-hosting/platform/zeabur.zh-CN.mdx +10 -12

- package/docs/self-hosting/start.mdx +17 -0

- package/docs/self-hosting/start.zh-CN.mdx +17 -0

- package/docs/usage/agents/concepts.mdx +0 -1

- package/docs/usage/agents/custom-agent.mdx +5 -7

- package/docs/usage/agents/custom-agent.zh-CN.mdx +3 -3

- package/docs/usage/agents/model.mdx +9 -4

- package/docs/usage/agents/model.zh-CN.mdx +5 -5

- package/docs/usage/agents/prompt.mdx +8 -9

- package/docs/usage/agents/prompt.zh-CN.mdx +7 -8

- package/docs/usage/agents/topics.mdx +1 -1

- package/docs/usage/features/agent-market.mdx +13 -11

- package/docs/usage/features/agent-market.zh-CN.mdx +7 -8

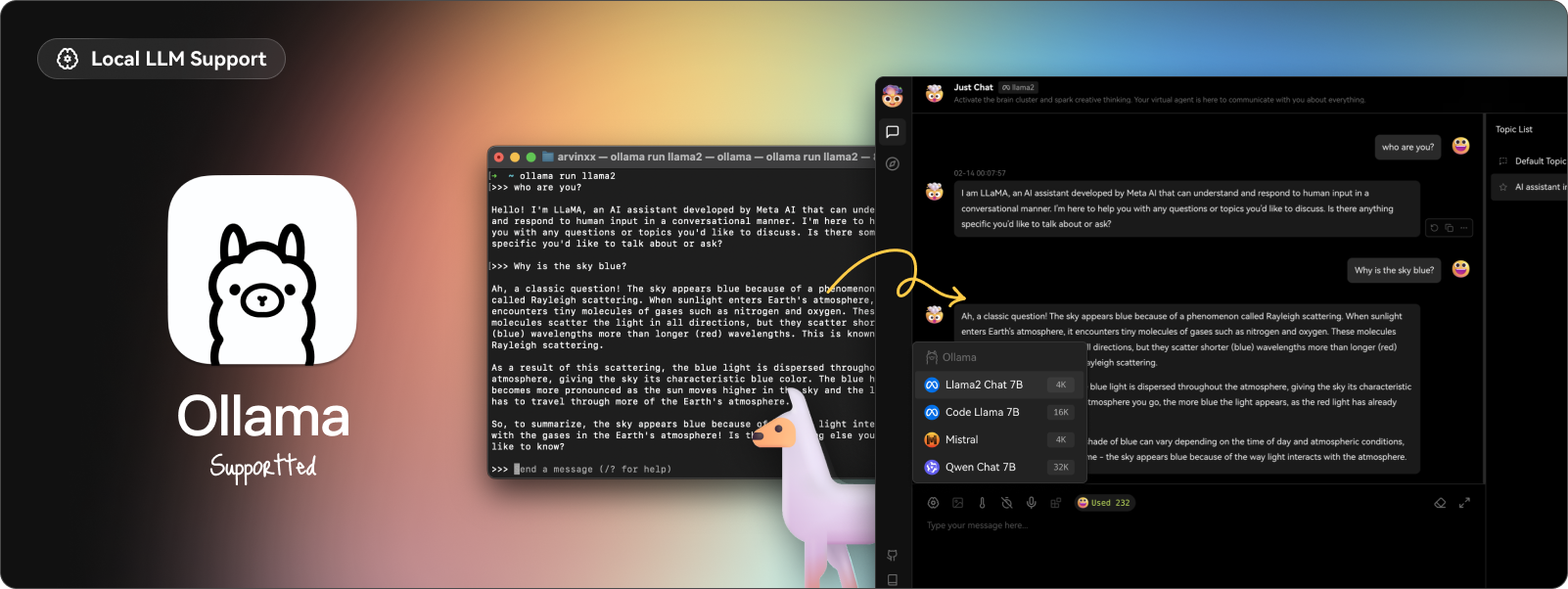

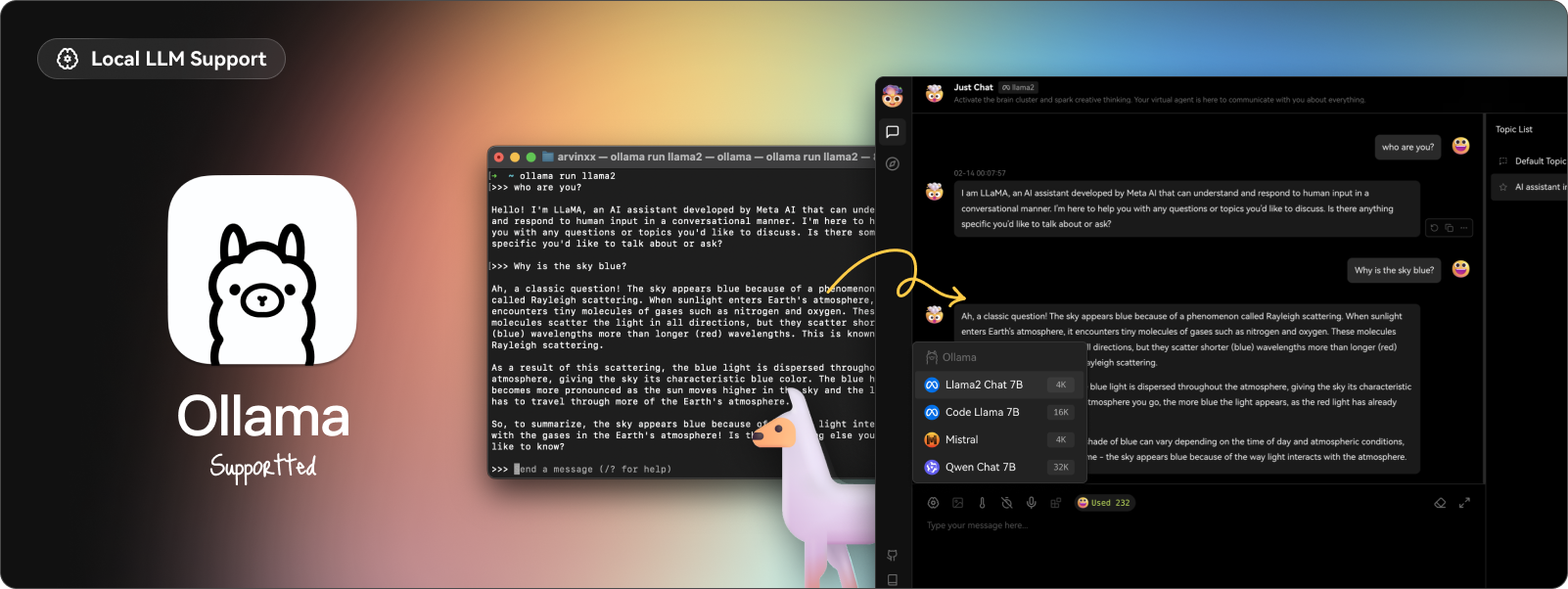

- package/docs/usage/features/local-llm.mdx +6 -5

- package/docs/usage/features/local-llm.zh-CN.mdx +8 -5

- package/docs/usage/features/mobile.mdx +5 -5

- package/docs/usage/features/mobile.zh-CN.mdx +1 -5

- package/docs/usage/features/multi-ai-providers.mdx +6 -6

- package/docs/usage/features/multi-ai-providers.zh-CN.mdx +3 -7

- package/docs/usage/features/plugin-system.mdx +20 -18

- package/docs/usage/features/plugin-system.zh-CN.mdx +20 -22

- package/docs/usage/features/pwa.mdx +9 -11

- package/docs/usage/features/pwa.zh-CN.mdx +5 -11

- package/docs/usage/features/text-to-image.mdx +5 -1

- package/docs/usage/features/text-to-image.zh-CN.mdx +1 -1

- package/docs/usage/features/theme.mdx +5 -5

- package/docs/usage/features/theme.zh-CN.mdx +1 -5

- package/docs/usage/features/tts.mdx +6 -1

- package/docs/usage/features/tts.zh-CN.mdx +1 -1

- package/docs/usage/features/vision.mdx +7 -3

- package/docs/usage/features/vision.zh-CN.mdx +1 -1

- package/docs/usage/plugins/basic-usage.mdx +2 -2

- package/docs/usage/plugins/custom-plugin.mdx +2 -2

- package/docs/usage/plugins/custom-plugin.zh-CN.mdx +2 -2

- package/docs/usage/plugins/{plugin-development.mdx → development.mdx} +7 -7

- package/docs/usage/plugins/{plugin-development.zh-CN.mdx → development.zh-CN.mdx} +7 -7

- package/docs/usage/plugins/{plugin-store.mdx → store.mdx} +0 -1

- package/docs/usage/plugins/store.zh-CN.mdx +9 -0

- package/docs/usage/providers/ollama/gemma.mdx +22 -33

- package/docs/usage/providers/ollama/gemma.zh-CN.mdx +20 -32

- package/docs/usage/providers/ollama.mdx +34 -43

- package/docs/usage/providers/ollama.zh-CN.mdx +18 -29

- package/docs/usage/start.mdx +34 -0

- package/docs/usage/start.zh-CN.mdx +28 -0

- package/next-sitemap.config.mjs +2 -3

- package/package.json +24 -19

- package/src/app/settings/llm/Anthropic/index.tsx +2 -2

- package/src/app/settings/llm/index.tsx +4 -4

- package/src/config/modelProviders/ollama.ts +4 -4

- package/src/libs/agent-runtime/anthropic/index.test.ts +6 -10

- package/docs/usage/plugins/plugin-store.zh-CN.mdx +0 -9

- package/public/robots.txt +0 -4

|

@@ -7,26 +7,21 @@ import { Callout, Steps } from 'nextra/components';

|

|

|

7

7

|

## Vercel 部署流程

|

|

8

8

|

|

|

9

9

|

<Steps>

|

|

10

|

+

### 准备好你的 OpenAI API Key

|

|

10

11

|

|

|

11

|

-

|

|

12

|

+

前往 [OpenAI API Key](https://platform.openai.com/account/api-keys) 获取你的 OpenAI API Key

|

|

12

13

|

|

|

13

|

-

|

|

14

|

+

### 点击下方按钮进行部署

|

|

14

15

|

|

|

15

|

-

|

|

16

|

+

[![][deploy-button-image]][deploy-link]

|

|

16

17

|

|

|

17

|

-

|

|

18

|

+

直接使用 GitHub 账号登录即可,记得在环境变量页填入 `OPENAI_API_KEY`(必填)和 `ACCESS_CODE`(推荐);

|

|

18

19

|

|

|

19

|

-

|

|

20

|

+

### 部署完毕后,即可开始使用

|

|

20

21

|

|

|

21

|

-

|

|

22

|

-

[deploy-link]: https://vercel.com/new/clone?repository-url=https%3A%2F%2Fgithub.com%2Flobehub%2Flobe-chat&env=OPENAI_API_KEY,ACCESS_CODE&envDescription=Find%20your%20OpenAI%20API%20Key%20by%20click%20the%20right%20Learn%20More%20button.%20%7C%20Access%20Code%20can%20protect%20your%20website&envLink=https%3A%2F%2Fplatform.openai.com%2Faccount%2Fapi-keys&project-name=lobe-chat&repository-name=lobe-chat

|

|

23

|

-

|

|

24

|

-

### 部署完毕后,即可开始使用

|

|

25

|

-

|

|

26

|

-

### 绑定自定义域名(可选)

|

|

27

|

-

|

|

28

|

-

Vercel 分配的域名 DNS 在某些区域被污染了,绑定自定义域名即可直连。

|

|

22

|

+

### 绑定自定义域名(可选)

|

|

29

23

|

|

|

24

|

+

Vercel 分配的域名 DNS 在某些区域被污染了,绑定自定义域名即可直连。

|

|

30

25

|

</Steps>

|

|

31

26

|

|

|

32

27

|

## 自动同步更新

|

|

@@ -34,6 +29,8 @@ Vercel 分配的域名 DNS 在某些区域被污染了,绑定自定义域名

|

|

|

34

29

|

如果你根据上述中的一键部署步骤部署了自己的项目,你可能会发现总是被提示 “有可用更新”。这是因为 Vercel 默认为你创建新项目而非 fork 本项目,这将导致无法准确检测更新。

|

|

35

30

|

|

|

36

31

|

<Callout>

|

|

37

|

-

我们建议按照 [📘 LobeChat

|

|

38

|

-

自部署保持更新](/zh/self-hosting/advanced/upstream-sync) 步骤重新部署。

|

|

32

|

+

我们建议按照 [📘 LobeChat 自部署保持更新](/zh/self-hosting/advanced/upstream-sync) 步骤重新部署。

|

|

39

33

|

</Callout>

|

|

34

|

+

|

|

35

|

+

[deploy-button-image]: https://vercel.com/button

|

|

36

|

+

[deploy-link]: https://vercel.com/new/clone?repository-url=https%3A%2F%2Fgithub.com%2Flobehub%2Flobe-chat&env=OPENAI_API_KEY,ACCESS_CODE&envDescription=Find%20your%20OpenAI%20API%20Key%20by%20click%20the%20right%20Learn%20More%20button.%20%7C%20Access%20Code%20can%20protect%20your%20website&envLink=https%3A%2F%2Fplatform.openai.com%2Faccount%2Fapi-keys&project-name=lobe-chat&repository-name=lobe-chat

|

|

@@ -7,21 +7,19 @@ If you want to deploy LobeChat on Zeabur, you can follow the steps below:

|

|

|

7

7

|

## Zeabur Deployment Process

|

|

8

8

|

|

|

9

9

|

<Steps>

|

|

10

|

+

### Prepare your OpenAI API Key

|

|

10

11

|

|

|

11

|

-

|

|

12

|

+

Go to [OpenAI API Key](https://platform.openai.com/account/api-keys) to get your OpenAI API Key.

|

|

12

13

|

|

|

13

|

-

|

|

14

|

+

### Click the button below to deploy

|

|

14

15

|

|

|

15

|

-

|

|

16

|

+

[![][deploy-button-image]][deploy-link]

|

|

16

17

|

|

|

17

|

-

|

|

18

|

+

### Once deployed, you can start using it

|

|

18

19

|

|

|

19

|

-

###

|

|

20

|

-

|

|

21

|

-

### Bind a custom domain (optional)

|

|

22

|

-

|

|

23

|

-

You can use the subdomain provided by Zeabur, or choose to bind a custom domain. Currently, the domains provided by Zeabur are not affected by DNS pollution, and most regions can connect directly.

|

|

20

|

+

### Bind a custom domain (optional)

|

|

24

21

|

|

|

22

|

+

You can use the subdomain provided by Zeabur, or choose to bind a custom domain. Currently, the domains provided by Zeabur are not affected by DNS pollution, and most regions can connect directly.

|

|

25

23

|

</Steps>

|

|

26

24

|

|

|

27

25

|

[deploy-button-image]: https://zeabur.com/button.svg

|

|

@@ -7,22 +7,20 @@ import { Steps } from 'nextra/components';

|

|

|

7

7

|

## Zeabur 部署流程

|

|

8

8

|

|

|

9

9

|

<Steps>

|

|

10

|

+

### 准备好你的 OpenAI API Key

|

|

10

11

|

|

|

11

|

-

|

|

12

|

+

前往 [OpenAI API Key](https://platform.openai.com/account/api-keys) 获取你的 OpenAI API Key

|

|

12

13

|

|

|

13

|

-

|

|

14

|

+

### 点击下方按钮进行部署

|

|

14

15

|

|

|

15

|

-

|

|

16

|

+

[![][deploy-button-image]][deploy-link]

|

|

16

17

|

|

|

17

|

-

|

|

18

|

+

### 部署完毕后,即可开始使用

|

|

18

19

|

|

|

19

|

-

|

|

20

|

-

[deploy-link]: https://zeabur.com/templates/VZGGTI

|

|

21

|

-

|

|

22

|

-

### 部署完毕后,即可开始使用

|

|

23

|

-

|

|

24

|

-

### 绑定自定义域名(可选)

|

|

25

|

-

|

|

26

|

-

你可以使用 Zeabur 提供的子域名,也可以选择绑定自定义域名。目前 Zeabur 提供的域名还未被污染,大多数地区都可以直连。

|

|

20

|

+

### 绑定自定义域名(可选)

|

|

27

21

|

|

|

22

|

+

你可以使用 Zeabur 提供的子域名,也可以选择绑定自定义域名。目前 Zeabur 提供的域名还未被污染,大多数地区都可以直连。

|

|

28

23

|

</Steps>

|

|

24

|

+

|

|

25

|

+

[deploy-button-image]: https://zeabur.com/button.svg

|

|

26

|

+

[deploy-link]: https://zeabur.com/templates/VZGGTI

|

|

@@ -0,0 +1,17 @@

|

|

|

1

|

+

# Build your own Lobe Chat

|

|

2

|

+

|

|

3

|

+

Choose your favorite platform to get started.

|

|

4

|

+

|

|

5

|

+

## Choose your deployment platform

|

|

6

|

+

|

|

7

|

+

LobeChat supports multiple deployment platforms, including Vercel, Docker, and Docker Compose, so you can choose the one that suits you.

|

|

8

|

+

|

|

9

|

+

<PlatformCards

|

|

10

|

+

docker={'Docker'}

|

|

11

|

+

dockerCompose={'Docker Compose'}

|

|

12

|

+

netlify={'Netlify'}

|

|

13

|

+

repocloud={'RepoCloud'}

|

|

14

|

+

sealos={'SealOS'}

|

|

15

|

+

vercel={'Vercel'}

|

|

16

|

+

zeabur={'Zeabur'}

|

|

17

|

+

/>

|

|

@@ -0,0 +1,17 @@

|

|

|

1

|

+

# 构建属于自己的 Lobe Chat

|

|

2

|

+

|

|

3

|

+

选择自己喜爱的平台,构建属于自己的 Lobe Chat。

|

|

4

|

+

|

|

5

|

+

## 选择部署平台

|

|

6

|

+

|

|

7

|

+

LobeChat 支持多种部署平台,包括 Vercel、Docker 和 Docker Compose 等,你可以选择适合自己的部署平台进行部署。

|

|

8

|

+

|

|

9

|

+

<PlatformCards

|

|

10

|

+

docker={'Docker 部署'}

|

|

11

|

+

dockerCompose={'Docker Compose 部署'}

|

|

12

|

+

netlify={'Netlify 部署'}

|

|

13

|

+

repocloud={'Repocloud 部署'}

|

|

14

|

+

sealos={'SealOS 部署'}

|

|

15

|

+

vercel={'Vercel 部署'}

|

|

16

|

+

zeabur={'Zeabur 部署'}

|

|

17

|

+

/>

|

|

@@ -15,4 +15,3 @@ Therefore, in LobeChat, we have introduced the concept of **assistants**. An ass

|

|

|

15

15

|

|

|

16

16

|

|

|

17

17

|

At the same time, we have integrated topics into each assistant. The benefit of this approach is that each assistant has an independent topic list. You can choose the corresponding assistant based on the current task and quickly switch between historical conversation records. This method is more in line with users' habits in common chat software, improving interaction efficiency.

|

|

18

|

-

|

|

@@ -17,8 +17,8 @@ When you need to handle specific tasks, you need to consider creating a custom a

|

|

|

17

17

|

|

|

18

18

|

|

|

19

19

|

<Callout type={'info'}>

|

|

20

|

-

**Quick Setup Tip**: You can conveniently modify the Prompt through the quick edit button in the

|

|

21

|

-

|

|

20

|

+

**Quick Setup Tip**: You can conveniently modify the Prompt through the quick edit button in the

|

|

21

|

+

sidebar.

|

|

22

22

|

</Callout>

|

|

23

23

|

|

|

24

24

|

|

|

@@ -26,9 +26,7 @@ When you need to handle specific tasks, you need to consider creating a custom a

|

|

|

26

26

|

If you want to understand Prompt writing tips and common model parameter settings, you can continue to view:

|

|

27

27

|

|

|

28

28

|

<Cards>

|

|

29

|

-

<Cards.Card href={'/en/usage/agents/prompt'} title={'Prompt User Guide'}

|

|

30

|

-

|

|

31

|

-

|

|

32

|

-

title={'Large Language Model User Guide'}

|

|

33

|

-

></Cards.Card>

|

|

29

|

+

<Cards.Card href={'/en/usage/agents/prompt'} title={'Prompt User Guide'} />

|

|

30

|

+

|

|

31

|

+

<Cards.Card href={'/en/usage/agents/model'} title={'Large Language Model User Guide'} />

|

|

34

32

|

</Cards>

|

|

@@ -18,7 +18,6 @@ import { Callout, Cards } from 'nextra/components';

|

|

|

18

18

|

|

|

19

19

|

<Callout type={'info'}>

|

|

20

20

|

**快捷设置技巧**: 可以通过侧边栏的快捷编辑按钮进行 Prompt 的便捷修改

|

|

21

|

-

|

|

22

21

|

</Callout>

|

|

23

22

|

|

|

24

23

|

|

|

@@ -26,6 +25,7 @@ import { Callout, Cards } from 'nextra/components';

|

|

|

26

25

|

如果你希望理解 Prompt 编写技巧和常见的模型参数设置,可以继续查看:

|

|

27

26

|

|

|

28

27

|

<Cards>

|

|

29

|

-

<Cards.Card href={'/zh/usage/agents/prompt'} title={'Prompt 使用指南'}

|

|

30

|

-

|

|

28

|

+

<Cards.Card href={'/zh/usage/agents/prompt'} title={'Prompt 使用指南'} />

|

|

29

|

+

|

|

30

|

+

<Cards.Card href={'/zh/usage/agents/model'} title={'大语言模型使用指南'} />

|

|

31

31

|

</Cards>

|

|

@@ -1,3 +1,8 @@

|

|

|

1

|

+

---

|

|

2

|

+

title: LLM Guide

|

|

3

|

+

description: Explore the capabilities of ChatGPT models from gpt-3.5-turbo to gpt-4-32k, understanding their speed, context limits, and cost. Learn about model parameters like temperature and top-p for better output.

|

|

4

|

+

---

|

|

5

|

+

|

|

1

6

|

import { Callout } from 'nextra/components';

|

|

2

7

|

|

|

3

8

|

# Model Guide

|

|

@@ -32,10 +37,10 @@ This parameter controls the randomness of the model's output. The higher the val

|

|

|

32

37

|

|

|

33

38

|

### `top_p`

|

|

34

39

|

|

|

35

|

-

|

|

40

|

+

Top_p (nucleus sampling) is also a sampling parameter, but it samples differently from temperature. Before producing each token, the model scores a set of candidate tokens and ranks them by score. In top-p sampling mode the candidate list is dynamic: candidates are taken from the top of the ranking until they cover the chosen percentage, and the next token is picked from that set. Top_p introduces randomness in token selection, allowing other high-scoring tokens to have a chance of being selected, rather than always choosing the highest-scoring one.

|

|

36

41

|

|

|

37

42

|

<Callout>

|

|

38

|

-

|

|

43

|

+

Top_p is similar to randomness, and it is generally not recommended to change it together with the

|

|

39

44

|

randomness of temperature.

|

|

40

45

|

</Callout>
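The top-p behaviour described above can be sketched in a few lines. This is a minimal illustration, not LobeChat's or any provider's actual implementation: the function names `top_p_candidates` and `sample_top_p`, the toy probability table, and the rule "keep the highest-ranked tokens until their cumulative probability reaches `top_p`" are assumptions made for the example.

```python
import random


def top_p_candidates(probs, top_p):
    """Keep the highest-probability tokens until their cumulative mass reaches top_p."""
    ranked = sorted(probs.items(), key=lambda item: item[1], reverse=True)
    kept, cumulative = [], 0.0
    for token, p in ranked:
        kept.append((token, p))
        cumulative += p
        if cumulative >= top_p:
            break
    return kept


def sample_top_p(probs, top_p, rng):
    """Sample one token from the dynamic candidate list, weighted by probability."""
    kept = top_p_candidates(probs, top_p)
    tokens = [token for token, _ in kept]
    weights = [p for _, p in kept]
    return rng.choices(tokens, weights=weights, k=1)[0]


# Hypothetical next-token distribution, for illustration only.
probs = {"sunny": 0.5, "cloudy": 0.3, "rainy": 0.15, "snowy": 0.05}

print([token for token, _ in top_p_candidates(probs, 0.7)])  # ['sunny', 'cloudy']
print(sample_top_p(probs, 0.7, random.Random(0)))            # one of the two candidates
```

With a lower `top_p` the candidate list shrinks toward the single best token; with `top_p` close to 1 almost every token stays eligible, which is why it overlaps with temperature in effect.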

|

|

41

46

|

|

|

@@ -61,8 +66,8 @@ The presence penalty parameter can be seen as a punishment for repetitive conten

|

|

|

61

66

|

|

|

62

67

|

It is a mechanism that penalizes words in proportion to how often they have already appeared in the text, reducing the likelihood of the model repeating the same word. The larger the value, the less likely repeated words become; negative values encourage repetition, as the examples below show.

|

|

63

68

|

|

|

64

|

-

- `-2.0` When the morning news started broadcasting, I found that my TV now now now now now now now now now now now now now now now now now now now now now now now now now now now now now now now now now now now now now now now now now now now

|

|

65

|

-

- `-1.0` He always watches the news in the early morning, in front of the TV watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch

|

|

69

|

+

- `-2.0` When the morning news started broadcasting, I found that my TV now now now now now now now now now now now now now now now now now now now now now now now now now now now now now now now now now now now now now now now now now now now **(The highest frequency word is "now", accounting for 44.79%)**

|

|

70

|

+

- `-1.0` He always watches the news in the early morning, in front of the TV watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch watch **(The highest frequency word is "watch", accounting for 57.69%)**

|

|

66

71

|

- `0.0` When the morning sun poured into the small diner, a tired postman appeared at the door, carrying a bag of letters in his hands. The owner warmly prepared a breakfast for him, and he started sorting the mail while enjoying his breakfast. **(The highest frequency word is "of", accounting for 8.45%)**

|

|

67

72

|

- `1.0` A girl in deep sleep was woken up by a warm ray of sunshine, she saw the first ray of morning light, surrounded by birdsong and flowers, everything was full of vitality. **(The highest frequency word is "of", accounting for 5.45%)**

|

|

68

73

|

- `2.0` Every morning, he would sit on the balcony to have breakfast. Under the soft setting sun, everything looked very peaceful. However, one day, when he was about to pick up his breakfast, an optimistic little bird flew by, bringing him a good mood for the day. **(The highest frequency word is "of", accounting for 4.94%)**
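To make the effect of the sign and size of the value concrete, here is a rough sketch of a frequency penalty applied to token scores. It assumes the commonly described form in which each token's score is reduced by its occurrence count multiplied by the penalty; the function name `apply_frequency_penalty` and the toy scores are hypothetical, and real providers may combine this with other adjustments.

```python
from collections import Counter


def apply_frequency_penalty(logits, generated_tokens, frequency_penalty):
    """Lower each token's score by (times already generated) * frequency_penalty."""
    counts = Counter(generated_tokens)
    return {
        token: logit - counts[token] * frequency_penalty
        for token, logit in logits.items()
    }


# Hypothetical scores for the next token, after "now" was generated three times.
logits = {"now": 2.0, "broke": 1.5, "flickered": 1.0}
history = ["now", "now", "now"]

penalised = apply_frequency_penalty(logits, history, 1.0)
boosted = apply_frequency_penalty(logits, history, -2.0)

print(penalised["now"])  # -1.0: a positive penalty pushes the repeated word down
print(boosted["now"])    # 8.0: a negative penalty makes the repeated word even more likely
```

Under these assumptions, a value of `-2.0` rewards the already repeated word on every step, which matches the runaway repetition in the `-2.0` and `-1.0` samples above.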

|

|

@@ -31,7 +31,7 @@ LLM 看似很神奇,但本质还是一个概率问题,神经网络根据输

|

|

|

31

31

|

|

|

32

32

|

### `top_p`

|

|

33

33

|

|

|

34

|

-

核采样 top_p 也是采样参数,跟 temperature 不一样的采样方式。模型在输出之前,会生成一堆 token,这些 token 根据质量高低排名,核采样模式中候选词列表是动态的,从 tokens 里按百分比选择候选词。

|

|

34

|

+

核采样 top_p 也是采样参数,跟 temperature 不一样的采样方式。模型在输出之前,会生成一堆 token,这些 token 根据质量高低排名,核采样模式中候选词列表是动态的,从 tokens 里按百分比选择候选词。 top_p 为选择 token 引入了随机性,让其他高分的 token 有被选择的机会,不会总是选最高分的。

|

|

35

35

|

|

|

36

36

|

<Callout>top_p 与随机性类似,一般来说不建议和随机性 temperature 一起更改</Callout>

|

|

37

37

|

|

|

@@ -57,8 +57,8 @@ Presence Penalty 参数可以看作是对生成文本中重复内容的一种惩

|

|

|

57

57

|

|

|

58

58

|

是一种机制,通过对文本中频繁出现的新词汇施加惩罚,以减少模型重复同一词语的可能性,值越大,越有可能降低重复字词。

|

|

59

59

|

|

|

60

|

-

- `-2.0` 当早间新闻开始播出,我发现我家电视现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在

|

|

61

|

-

- `-1.0` 他总是在清晨看新闻,在电视前看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看

|

|

60

|

+

- `-2.0` 当早间新闻开始播出,我发现我家电视现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在现在 *(频率最高的词是 “现在”,占比 44.79%)*

|

|

61

|

+

- `-1.0` 他总是在清晨看新闻,在电视前看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看看 *(频率最高的词是 “看”,占比 57.69%)*

|

|

62

62

|

- `0.0` 当清晨的阳光洒进小餐馆时,一名疲倦的邮递员出现在门口,他的手中提着一袋信件。店主热情地为他准备了一份早餐,他在享用早餐的同时开始整理邮件。**(频率最高的词是 “的”,占比 8.45%)**

|

|

63

|

-

- `1.0`

|

|

64

|

-

- `2.0` 每天早上,他都会在阳台上坐着吃早餐。在柔和的夕阳照耀下,一切看起来都非常宁静。然而有一天,当他准备端起早餐的时候,一只乐观的小鸟飞过,给他带来了一天的好心情。

|

|

63

|

+

- `1.0` 一个深度睡眠的女孩被一阵温暖的阳光唤醒,她看到了早晨的第一缕阳光,周围是鸟语花香,一切都充满了生机。*(频率最高的词是 “的”,占比 5.45%)*

|

|

64

|

+

- `2.0` 每天早上,他都会在阳台上坐着吃早餐。在柔和的夕阳照耀下,一切看起来都非常宁静。然而有一天,当他准备端起早餐的时候,一只乐观的小鸟飞过,给他带来了一天的好心情。 *(频率最高的词是 “的”,占比 4.94%)*

|

|

@@ -11,12 +11,12 @@ Generative AI is very useful, but it requires human guidance. In most cases, gen

|

|

|

11

11

|

<Callout type={'info'}>

|

|

12

12

|

A structured prompt refers to the construction of the prompt having a clear logic and structure.

|

|

13

13

|

For example, if you want the model to generate an article, your prompt may need to include the

|

|

14

|

-

article's topic, outline, and style.

|

|

14

|

+

article's topic, outline, and style.

|

|

15

15

|

</Callout>

|

|

16

16

|

|

|

17

17

|

Let's look at a basic discussion prompt example:

|

|

18

18

|

|

|

19

|

-

>

|

|

19

|

+

> *"What are the most urgent environmental issues facing our planet, and what actions can individuals take to help address these issues?"*

|

|

20

20

|

|

|

21

21

|

We can convert it into a simple assistant prompt by placing "Answer the following question:" at the front.

|

|

22

22

|

|

|

@@ -38,19 +38,18 @@ The second prompt generates longer output and better structure. The use of the t

|

|

|

38

38

|

## How to Improve Quality and Effectiveness

|

|

39

39

|

|

|

40

40

|

<Callout type={'info'}>

|

|

41

|

-

There are several ways to improve the quality and effectiveness of prompts:

|

|

42

|

-

|

|

43

|

-

- **Be Clear About Your Needs:** The model's output will strive to meet your needs, so if your needs are not clear, the output may not meet expectations.

|

|

44

|

-

- **Use Correct Grammar and Spelling:** The model will try to mimic your language style, so if your language style is problematic, the output may also be problematic.

|

|

45

|

-

- **Provide Sufficient Contextual Information:** The model will generate output based on the contextual information you provide, so if the information is insufficient, it may not produce the desired results.

|

|

41

|

+

There are several ways to improve the quality and effectiveness of prompts:

|

|

46

42

|

|

|

43

|

+

- **Be Clear About Your Needs:** The model's output will strive to meet your needs, so if your needs are not clear, the output may not meet expectations.

|

|

44

|

+

- **Use Correct Grammar and Spelling:** The model will try to mimic your language style, so if your language style is problematic, the output may also be problematic.

|

|

45

|

+

- **Provide Sufficient Contextual Information:** The model will generate output based on the contextual information you provide, so if the information is insufficient, it may not produce the desired results.

|

|

47

46

|

</Callout>

|

|

48

47

|

|

|

49

48

|

After formulating effective prompts for discussing issues, you now need to refine the generated results. This may involve adjusting the output to fit constraints such as word count or combining concepts from different generated results.

|

|

50

49

|

|

|

51

50

|

A simple method of iteration is to generate multiple outputs and review them to understand the concepts and structures being used. Once the outputs have been evaluated, you can select the most suitable ones and combine them into a coherent response. Another iterative method is to start small and **gradually expand**. This requires more than one prompt: an initial prompt for drafting the initial one or two paragraphs, followed by additional prompts to expand on the content already written. Here is a potential philosophical discussion prompt:

|

|

52

51

|

|

|

53

|

-

>

|

|

52

|

+

> *"Is mathematics an invention or a discovery? Use careful reasoning to explain your answer."*

|

|

54

53

|

|

|

55

54

|

Add it to a simple prompt as follows:

|

|

56

55

|

|

|

@@ -93,4 +92,4 @@ Using the prompt extensions, we can iteratively write and iterate at each step.

|

|

|

93

92

|

|

|

94

93

|

## Further Reading

|

|

95

94

|

|

|

96

|

-

- **Learn Prompting**: https://learnprompting.org/en-US/docs/intro

|

|

95

|

+

- **Learn Prompting**: [https://learnprompting.org/en-US/docs/intro](https://learnprompting.org/en-US/docs/intro)

|

|

@@ -15,7 +15,7 @@ import { Callout } from 'nextra/components';

|

|

|

15

15

|

|

|

16

16

|

让我们看一个基本的讨论问题的例子:

|

|

17

17

|

|

|

18

|

-

>

|

|

18

|

+

> *"我们星球面临的最紧迫的环境问题是什么,个人可以采取哪些措施来帮助解决这些问题?"*

|

|

19

19

|

|

|

20

20

|

我们可以将其转化为简单的助手提示,将回答以下问题:放在前面。

|

|

21

21

|

|

|

@@ -39,19 +39,18 @@ import { Callout } from 'nextra/components';

|

|

|

39

39

|

## 如何提升其质量和效果

|

|

40

40

|

|

|

41

41

|

<Callout type={'info'}>

|

|

42

|

-

提升 prompt 质量和效果的方法主要有以下几点:

|

|

43

|

-

|

|

44

|

-

- **尽量明确你的需求:** 模型的输出会尽可能满足你的需求,所以如果你的需求不明确,输出可能会不如预期。

|

|

45

|

-

- **使用正确的语法和拼写:** 模型会尽可能模仿你的语言风格,所以如果你的语言风格有问题,输出可能也会有问题。

|

|

46

|

-

- **提供足够的上下文信息:** 模型会根据你提供的上下文信息生成输出,所以如果你提供的上下文信息不足,可能无法生成你想要的结果。

|

|

42

|

+

提升 prompt 质量和效果的方法主要有以下几点:

|

|

47

43

|

|

|

44

|

+

- **尽量明确你的需求:** 模型的输出会尽可能满足你的需求,所以如果你的需求不明确,输出可能会不如预期。

|

|

45

|

+

- **使用正确的语法和拼写:** 模型会尽可能模仿你的语言风格,所以如果你的语言风格有问题,输出可能也会有问题。

|

|

46

|

+

- **提供足够的上下文信息:** 模型会根据你提供的上下文信息生成输出,所以如果你提供的上下文信息不足,可能无法生成你想要的结果。

|

|

48

47

|

</Callout>

|

|

49

48

|

|

|

50

49

|

在为讨论问题制定有效的提示后,您现在需要细化生成的结果。这可能涉及到调整输出以符合诸如字数等限制,或将不同生成的结果的概念组合在一起。

|

|

51

50

|

|

|

52

51

|

迭代的一个简单方法是生成多个输出并查看它们,以了解正在使用的概念和结构。一旦评估了输出,您就可以选择最合适的输出并将它们组合成一个连贯的回答。另一种迭代的方法是逐步开始,然后**逐步扩展**。这需要不止一个提示:一个起始提示,用于撰写最初的一两段,然后是其他提示,以扩展已经写过的内容。以下是一个潜在的哲学讨论问题:

|

|

53

52

|

|

|

54

|

-

>

|

|

53

|

+

> *"数学是发明还是发现?用仔细的推理来解释你的答案。"*

|

|

55

54

|

|

|

56

55

|

将其添加到一个简单的提示中,如下所示:

|

|

57

56

|

|

|

@@ -94,4 +93,4 @@ import { Callout } from 'nextra/components';

|

|

|

94

93

|

|

|

95

94

|

## 扩展阅读

|

|

96

95

|

|

|

97

|

-

- **Learn Prompting**: https://learnprompting.org/zh-Hans/docs/intro

|

|

96

|

+

- **Learn Prompting**: [https://learnprompting.org/zh-Hans/docs/intro](https://learnprompting.org/zh-Hans/docs/intro)

|

|

@@ -3,4 +3,4 @@

|

|

|

3

3

|

|

|

4

4

|

|

|

5

5

|

- **Save Topic:** During a conversation, if you want to save the current context and start a new topic, you can click the save button next to the send button.

|

|

6

|

-

- **Topic List:** Clicking on a topic in the list allows for quick switching of historical conversation records and continuing the conversation. You can also use the star icon <kbd>⭐️</kbd> to pin favorite topics to the top, or use the more button on the right to rename or delete topics.

|

|

6

|

+

- **Topic List:** Clicking on a topic in the list allows for quick switching of historical conversation records and continuing the conversation. You can also use the star icon <kbd>⭐️</kbd> to pin favorite topics to the top, or use the more button on the right to rename or delete topics.

|

|

@@ -1,13 +1,16 @@

|

|

|

1

|

+

---

|

|

2

|

+

title: Assistant Market

|

|

3

|

+

---

|

|

4

|

+

|

|

1

5

|

import { Callout } from 'nextra/components';

|

|

2

6

|

|

|

3

|

-

#

|

|

7

|

+

# Assistant Market

|

|

4

8

|

|

|

5

9

|

<Image

|

|

6

|

-

alt={'

|

|

10

|

+

alt={'Assistant Market'}

|

|

7

11

|

src={

|

|

8

12

|

'https://github-production-user-asset-6210df.s3.amazonaws.com/17870709/268670869-f1ffbf66-42b6-42cf-a937-9ce1f8328514.png'

|

|

9

13

|

}

|

|

10

|

-

cover

|

|

11

14

|

/>

|

|

12

15

|

|

|

13

16

|

In LobeChat's Assistant Market, creators can discover a vibrant and innovative community that brings together numerous carefully designed assistants. These assistants not only play a crucial role in work scenarios but also provide great convenience in the learning process. Our market is not just a showcase platform, but also a collaborative space. Here, everyone can contribute their wisdom and share their personally developed assistants.

|

|

@@ -17,7 +20,7 @@ In LobeChat's Assistant Market, creators can discover a vibrant and innovative c

|

|

|

17

20

|

our platform. We particularly emphasize that LobeChat has established a sophisticated automated

|

|

18

21

|

internationalization (i18n) workflow, which excels in seamlessly converting your assistants into

|

|

19

22

|

multiple language versions. This means that regardless of the language your users are using, they

|

|

20

|

-

can seamlessly experience your assistant.

|

|

23

|

+

can seamlessly experience your assistant.

|

|

21

24

|

</Callout>

|

|

22

25

|

|

|

23

26

|

<Callout>

|

|

@@ -28,14 +31,13 @@ In LobeChat's Assistant Market, creators can discover a vibrant and innovative c

|

|

|

28

31

|

|

|

29

32

|

## Assistant Examples

|

|

30

33

|

|

|

31

|

-

| Recently Added

|

|

32

|

-

|

|

|

33

|

-

| [Copywriting](https://chat-preview.lobehub.com/market?agent=copywriting)<br/><sup>By **[pllz7](https://github.com/pllz7)** on **2024-02-14**</sup>

|

|

34

|

-

| [Private Domain Operation Expert](https://chat-preview.lobehub.com/market?agent=gl-syyy)<br/><sup>By **[guling-io](https://github.com/guling-io)** on **2024-02-14**</sup> | Proficient in private domain operation, traffic acquisition, conversion, and content planning, familiar with marketing theories and related classic works.<br/>`Private domain operation` `Traffic acquisition` `Conversion` `Content planning` |

|

|

35

|

-

| [Self-media Operation Expert](https://chat-preview.lobehub.com/market?agent=gl-zmtyy)<br/><sup>By **[guling-io](https://github.com/guling-io)** on **2024-02-14**</sup>

|

|

36

|

-

| [Product Description](https://chat-preview.lobehub.com/market?agent=product-description)<br/><sup>By **[pllz7](https://github.com/pllz7)** on **2024-02-14**</sup>

|

|

34

|

+

| Recently Added | Assistant Description |

|

|

35

|

+

| --------------------------------------------------------------------------------------------------------------------------------------------------------------------------- | ------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------ |

|

|

36

|

+

| [Copywriting](https://chat-preview.lobehub.com/market?agent=copywriting)<br /><sup>By **[pllz7](https://github.com/pllz7)** on **2024-02-14**</sup> | Proficient in persuasive copywriting and consumer psychology<br />`E-commerce` |

|

|

37

|

+

| [Private Domain Operation Expert](https://chat-preview.lobehub.com/market?agent=gl-syyy)<br /><sup>By **[guling-io](https://github.com/guling-io)** on **2024-02-14**</sup> | Proficient in private domain operation, traffic acquisition, conversion, and content planning, familiar with marketing theories and related classic works.<br />`Private domain operation` `Traffic acquisition` `Conversion` `Content planning` |

|

|

38

|

+

| [Self-media Operation Expert](https://chat-preview.lobehub.com/market?agent=gl-zmtyy)<br /><sup>By **[guling-io](https://github.com/guling-io)** on **2024-02-14**</sup> | Proficient in self-media operation and content creation<br />`Self-media operation` `Social media` `Content creation` `Fan growth` `Brand promotion` |

|

|

39

|

+

| [Product Description](https://chat-preview.lobehub.com/market?agent=product-description)<br /><sup>By **[pllz7](https://github.com/pllz7)** on **2024-02-14**</sup> | Create captivating product descriptions to improve e-commerce sales performance<br />`E-commerce` |

|

|

37

40

|

|

|

38

41

|

> 📊 Total agents: [<kbd>**177**</kbd> ](https://github.com/lobehub/lobe-chat-agents)

|

|

39

42

|

|

|

40

43

|

[submit-agents-link]: https://github.com/lobehub/lobe-chat-agents

|

|

41

|

-

[submit-agents-shield]: https://img.shields.io/badge/🤖/🏪_submit_agent-%E2%86%92-c4f042?labelColor=black&style=for-the-badge

@@ -4,10 +4,10 @@ import { Callout } from 'nextra/components';
 
 <Image
 alt={'助手市场'}
+cover
 src={
 'https://github-production-user-asset-6210df.s3.amazonaws.com/17870709/268670869-f1ffbf66-42b6-42cf-a937-9ce1f8328514.png'
 }
-cover
 />
 
 在 LobeChat 的助手市场中,创作者们可以发现一个充满活力和创新的社区,它汇聚了众多精心设计的助手,这些助手不仅在工作场景中发挥着重要作用,也在学习过程中提供了极大的便利。我们的市场不仅是一个展示平台,更是一个协作的空间。在这里,每个人都可以贡献自己的智慧,分享个人开发的助手。

@@ -25,14 +25,13 @@ import { Callout } from 'nextra/components';
 
 ## 助手示例
 
-| 最近新增
-|
-| [产品文案撰写](https://chat-preview.lobehub.com/market?agent=copywriting)<br/><sup>By **[pllz7](https://github.com/pllz7)** on **2024-02-14**</sup>
-| [私域运营专家](https://chat-preview.lobehub.com/market?agent=gl-syyy)<br/><sup>By **[guling-io](https://github.com/guling-io)** on **2024-02-14**</sup>
-| [自媒体运营专家](https://chat-preview.lobehub.com/market?agent=gl-zmtyy)<br/><sup>By **[guling-io](https://github.com/guling-io)** on **2024-02-14**</sup> | 擅长自媒体运营与内容创作<br/>`自媒体运营` `社交媒体` `内容创作` `粉丝增长` `品牌推广`
-| [产品描述](https://chat-preview.lobehub.com/market?agent=product-description)<br/><sup>By **[pllz7](https://github.com/pllz7)** on **2024-02-14**</sup> | 打造引人入胜的产品描述,提升电子商务销售业绩<br/>`电子商务`
+| 最近新增 | 助手说明 |
+| ---------------------------------------------------------------------------------------------------------------------------------------------------- | --------------------------------------------------------------------- |
+| [产品文案撰写](https://chat-preview.lobehub.com/market?agent=copywriting)<br /><sup>By **[pllz7](https://github.com/pllz7)** on **2024-02-14**</sup> | 精通有说服力的文案撰写和消费者心理学<br />`电子商务` |
+| [私域运营专家](https://chat-preview.lobehub.com/market?agent=gl-syyy)<br /><sup>By **[guling-io](https://github.com/guling-io)** on **2024-02-14**</sup> | 擅长私域运营、引流、承接、转化和内容策划,熟悉营销理论和相关经典著作。<br />`私域运营` `引流` `承接` `转化` `内容策划` |
+| [自媒体运营专家](https://chat-preview.lobehub.com/market?agent=gl-zmtyy)<br /><sup>By **[guling-io](https://github.com/guling-io)** on **2024-02-14**</sup> | 擅长自媒体运营与内容创作<br />`自媒体运营` `社交媒体` `内容创作` `粉丝增长` `品牌推广` |
+| [产品描述](https://chat-preview.lobehub.com/market?agent=product-description)<br /><sup>By **[pllz7](https://github.com/pllz7)** on **2024-02-14**</sup> | 打造引人入胜的产品描述,提升电子商务销售业绩<br />`电子商务` |
 
 > 📊 Total agents: [<kbd>**177**</kbd> ](https://github.com/lobehub/lobe-chat-agents)
 
 [submit-agents-link]: https://github.com/lobehub/lobe-chat-agents
-[submit-agents-shield]: https://img.shields.io/badge/🤖/🏪_submit_agent-%E2%86%92-c4f042?labelColor=black&style=for-the-badge

@@ -1,12 +1,13 @@
+---
+title: Local LLM
+image: https://github.com/lobehub/lobe-chat/assets/28616219/8292b22e-1f4f-478b-b510-32c7883c1c6d
+---
+
 import { Callout } from 'nextra/components';
 
 # Local Large Language Model (LLM) Support
 
-<Image
-alt={'Ollama Local Large Language Model (LLM) Support'}
-src={'https://github.com/lobehub/lobe-chat/assets/28616219/ca9a21bc-ea6c-4c90-bf4a-fa53b4fb2b5c'}
-cover
-/>
+<Image alt={'Ollama Local Large Language Model (LLM) Support'} cover src={'https://github.com/lobehub/lobe-chat/assets/28616219/ca9a21bc-ea6c-4c90-bf4a-fa53b4fb2b5c'} />
 
 <Callout>Available in >=0.127.0, currently only supports Docker deployment</Callout>
 

@@ -1,12 +1,15 @@
+---
+title: 本地大语言模型(Local)
+description: LobeChat 支持本地 LLM,使用 Ollama AI集成带来高效智能沟通。体验本地大语言模型的隐私性、安全性和即时交流
+image: https://github.com/lobehub/lobe-chat/assets/28616219/8292b22e-1f4f-478b-b510-32c7883c1c6d
+tags: 本地大语言模型,LLM,LobeChat v0.127.0,Ollama AI,Docker 部署
+---
+
 import { Callout } from 'nextra/components';
 
 # 支持本地大语言模型(LLM)
 
-<Image
-alt={'Ollama 支持本地大语言模型'}
-src={'https://github.com/lobehub/lobe-chat/assets/28616219/ca9a21bc-ea6c-4c90-bf4a-fa53b4fb2b5c'}
-cover
-/>
+<Image alt={'Ollama 支持本地大语言模型'} cover src={'https://github.com/lobehub/lobe-chat/assets/28616219/ca9a21bc-ea6c-4c90-bf4a-fa53b4fb2b5c'} />
 
 <Callout>在 >=v0.127.0 版本中可用,目前仅支持 Docker 部署</Callout>
 

@@ -1,10 +1,10 @@
+---
+title: Mobile Device Adaptation
+---
+
 # Mobile Device Adaptation
 
-<Image
-alt={'Mobile Device Adaptation'}
-src={'https://github.com/lobehub/lobe-chat/assets/28616219/11110732-8d5a-4049-b556-c2561cb66182'}
-cover
-/>
+<Image alt={'Mobile Device Adaptation'} cover src={'https://github.com/lobehub/lobe-chat/assets/28616219/11110732-8d5a-4049-b556-c2561cb66182'} />
 
 LobeChat has undergone a series of optimized designs for mobile devices to enhance the user's mobile experience.
 

@@ -1,10 +1,6 @@
 # 移动设备适配
 
-<Image
-alt={'移动端设备适配'}
-src={'https://github.com/lobehub/lobe-chat/assets/28616219/11110732-8d5a-4049-b556-c2561cb66182'}
-cover
-/>
+<Image alt={'移动端设备适配'} cover src={'https://github.com/lobehub/lobe-chat/assets/28616219/11110732-8d5a-4049-b556-c2561cb66182'} />
 
 LobeChat 针对移动设备进行了一系列的优化设计,以提升用户的移动体验。
 

@@ -1,12 +1,12 @@
+---
+title: Multi AI Providers
+---
+
 import { Callout } from 'nextra/components';
 
 # Multi-Model Service Provider Support
 
-<Image
-alt={'Multi-Model Service Provider Support'}
-src={'https://github.com/lobehub/lobe-chat/assets/28616219/b164bc54-8ba2-4c1e-b2f2-f4d7f7e7a551'}
-cover
-/>
+<Image alt={'Multi-Model Service Provider Support'} cover src={'https://github.com/lobehub/lobe-chat/assets/28616219/b164bc54-8ba2-4c1e-b2f2-f4d7f7e7a551'} />
 
 <Callout>Available in version 0.123.0 and later</Callout>
 

@@ -29,4 +29,4 @@ At the same time, we are also planning to support more model service providers,
 
 
 
-To meet the specific needs of users, LobeChat also supports the use of local models based on [Ollama](https://ollama.ai), allowing users to flexibly use their own or third-party models. For more details, see [Local Model Support](/
+To meet the specific needs of users, LobeChat also supports the use of local models based on [Ollama](https://ollama.ai), allowing users to flexibly use their own or third-party models. For more details, see [Local Model Support](/en/usage/features/local-llm).

@@ -2,15 +2,11 @@ import { Callout } from 'nextra/components';
 
 # 多模型服务商支持
 
-<Image
-alt={'多模型服务商支持'}
-src={'https://github.com/lobehub/lobe-chat/assets/28616219/b164bc54-8ba2-4c1e-b2f2-f4d7f7e7a551'}
-cover
-/>
+<Image alt={'多模型服务商支持'} cover src={'https://github.com/lobehub/lobe-chat/assets/28616219/b164bc54-8ba2-4c1e-b2f2-f4d7f7e7a551'} />
 
 <Callout>在 0.123.0 及以后版本中可用</Callout>
 
-在 LobeChat 的不断发展过程中,我们深刻理解到在提供AI会话服务时模型服务商的多样性对于满足社区需求的重要性。因此,我们不再局限于单一的模型服务商,而是拓展了对多种模型服务商的支持,以便为用户提供更为丰富和多样化的会话选择。
+在 LobeChat 的不断发展过程中,我们深刻理解到在提供 AI 会话服务时模型服务商的多样性对于满足社区需求的重要性。因此,我们不再局限于单一的模型服务商,而是拓展了对多种模型服务商的支持,以便为用户提供更为丰富和多样化的会话选择。
 
 通过这种方式,LobeChat 能够更灵活地适应不同用户的需求,同时也为开发者提供了更为广泛的选择空间。
 

@@ -29,4 +25,4 @@ import { Callout } from 'nextra/components';
 
 
 
-为了满足特定用户的需求,LobeChat 还基于 [Ollama](https://ollama.ai) 支持了本地模型的使用,让用户能够更灵活地使用自己的或第三方的模型,详见 [本地模型支持](/zh/features/local-llm)。
+为了满足特定用户的需求,LobeChat 还基于 [Ollama](https://ollama.ai) 支持了本地模型的使用,让用户能够更灵活地使用自己的或第三方的模型,详见 [本地模型支持](/zh/usage/features/local-llm)。

|