@juicesharp/rpiv-voice 1.4.2

- package/CHANGELOG.md +45 -0

- package/LICENSE +21 -0

- package/README.md +116 -0

- package/audio/error-log.ts +37 -0

- package/audio/hallucination-filter.ts +71 -0

- package/audio/mic-source.ts +38 -0

- package/audio/model-download.ts +268 -0

- package/audio/pcm.ts +45 -0

- package/audio/sherpa-onnx-node.d.ts +55 -0

- package/audio/stt-engine.ts +117 -0

- package/command/pipeline-runner.ts +238 -0

- package/command/splash-runner.ts +72 -0

- package/command/voice-command.ts +251 -0

- package/config/voice-config.ts +80 -0

- package/docs/cover.png +0 -0

- package/docs/cover.svg +173 -0

- package/docs/equalizer.svg +86 -0

- package/docs/overlay.jpg +0 -0

- package/docs/overlay.png +0 -0

- package/docs/vertical-cover.png +0 -0

- package/docs/vertical-cover.svg +239 -0

- package/index.ts +66 -0

- package/locales/de.json +39 -0

- package/locales/en.json +42 -0

- package/locales/es.json +39 -0

- package/locales/fr.json +39 -0

- package/locales/pt-BR.json +39 -0

- package/locales/pt.json +39 -0

- package/locales/ru.json +39 -0

- package/locales/uk.json +39 -0

- package/package.json +94 -0

- package/state/i18n-bridge.ts +51 -0

- package/state/key-router.ts +46 -0

- package/state/screen-intent.ts +27 -0

- package/state/selectors/contract.ts +13 -0

- package/state/selectors/derivations.ts +9 -0

- package/state/selectors/focus.ts +6 -0

- package/state/selectors/projections.ts +112 -0

- package/state/state-reducer.ts +197 -0

- package/state/state.ts +48 -0

- package/state/status-intent.ts +23 -0

- package/state/voice-session.ts +176 -0

- package/view/component-binding.ts +24 -0

- package/view/components/equalizer-view.ts +237 -0

- package/view/components/settings-field-view.ts +77 -0

- package/view/components/settings-form-view.ts +26 -0

- package/view/components/splash-view.ts +98 -0

- package/view/components/status-bar-view.ts +112 -0

- package/view/components/transcript-view.ts +50 -0

- package/view/overlay-view.ts +82 -0

- package/view/props-adapter.ts +29 -0

- package/view/screen-content-strategy.ts +58 -0

- package/view/stateful-view.ts +7 -0

package/CHANGELOG.md
ADDED
@@ -0,0 +1,45 @@
+# Changelog
+
+All notable changes to `@juicesharp/rpiv-voice` are documented here.
+
+Format: [Keep a Changelog](https://keepachangelog.com/en/1.1.0/).
+Versioning: [Semantic Versioning](https://semver.org/spec/v2.0.0.html).
+
+## [1.4.2] - 2026-05-11
+
+### Changed
+- Published to npm — install via `pi install npm:@juicesharp/rpiv-voice`.
+
+## [1.4.1] - 2026-05-11
+
+## [1.4.0] - 2026-05-10
+
+## [1.3.1] - 2026-05-10
+
+### Added
+- Settings screen with ↑/↓ field navigation, equalizer toggle (default off), and auto-persist on close.
+- Live audio-level equalizer bar in the overlay chrome.
+
+### Changed
+- README rewritten with feature overview, key-binding table, and configuration reference.
+
+### Fixed
+- Preflight stage tag preserved across voice-session initialization.
+
+## [1.3.0] - 2026-05-08
+
+### Added
+- `/voice` command for local speech-to-text dictation backed by sherpa-onnx Whisper, with mic capture, live transcript overlay, and settings screen.
+- Rolling partial transcript shown in real time while speaking.
+- Download progress indicator with percent and byte counter on the model splash screen.
+- Configurable keybinding support for the cancel action (no longer hardcoded to Escape).
+
+### Changed
+- Package marked `private: true` (opt-in install only; not part of the auto-install bundle).
+- Internal constants and helper functions extracted for readability (magic numbers named, idioms centralized).
+
+### Fixed
+- Whisper spurious terminal punctuation reduced via longer VAD hangover and trailing-silence padding.
+- Partial model installs are rolled back on failure; stale model directories detected and re-downloaded automatically.
+- STT recognition failures logged to `~/.config/rpiv-voice/errors.log` instead of being silently swallowed.
+- Download splash text uses a simplified Whisper model name.

package/LICENSE
ADDED
@@ -0,0 +1,21 @@
+MIT License
+
+Copyright (c) 2026 juicesharp
+
+Permission is hereby granted, free of charge, to any person obtaining a copy
+of this software and associated documentation files (the "Software"), to deal
+in the Software without restriction, including without limitation the rights
+to use, copy, modify, merge, publish, distribute, sublicense, and/or sell
+copies of the Software, and to permit persons to whom the Software is
+furnished to do so, subject to the following conditions:
+
+The above copyright notice and this permission notice shall be included in all
+copies or substantial portions of the Software.
+
+THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR
+IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,
+FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE
+AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER
+LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,
+OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE
+SOFTWARE.

package/README.md

ADDED

|

@@ -0,0 +1,116 @@

|

|

|

1

|

+

# rpiv-voice

|

|

2

|

+

|

|

3

|

+

<div align="center">

|

|

4

|

+

<a href="https://github.com/juicesharp/rpiv-mono/tree/main/packages/rpiv-voice">

|

|

5

|

+

<picture>

|

|

6

|

+

<img src="https://raw.githubusercontent.com/juicesharp/rpiv-mono/main/packages/rpiv-voice/docs/cover.png" alt="rpiv-voice cover" width="50%">

|

|

7

|

+

</picture>

|

|

8

|

+

</a>

|

|

9

|

+

</div>

|

|

10

|

+

|

|

11

|

+

[](https://opensource.org/licenses/MIT)

|

|

12

|

+

|

|

13

|

+

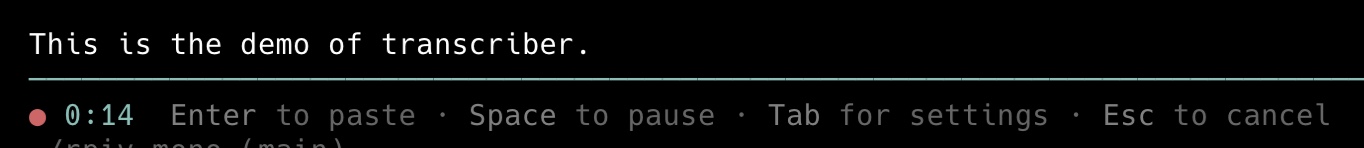

Talk to [Pi Agent](https://github.com/badlogic/pi-mono) instead of typing. `rpiv-voice` adds the `/voice` slash command — open the overlay, speak, hit `Enter`, and your transcript drops straight into Pi's editor. Speech-to-text runs **entirely on your machine** via [sherpa-onnx](https://github.com/k2-fsa/sherpa-onnx) Whisper (base multilingual int8). No cloud, no API keys, no telemetry.

|

|

14

|

+

|

|

15

|

+

|

|

16

|

+

|

|

17

|

+

## Features

|

|

18

|

+

|

|

19

|

+

- **100% on-device** — audio never leaves your laptop. No accounts, no API keys, no network calls after the first model download.

|

|

20

|

+

- **~99 languages, autodetected** — Whisper base multilingual handles the full Whisper language set with per-utterance autodetection. Switch languages mid-session without changing settings.

|

|

21

|

+

- **Live transcript** — committed lines render as you finish phrases, with a dim rolling partial showing the still-active utterance in real time. What you see is what gets pasted (no waiting for a "proper" final).

|

|

22

|

+

- **VAD-driven chunking** — Silero voice-activity detection breaks long monologues at natural pauses, so latency stays bounded even on a 5-minute rant.

|

|

23

|

+

- **Settings screen built-in** — `Tab` flips to a settings panel showing your active mic, detected language, and a hallucination filter toggle. `Ctrl-S` to save, `Esc` or `Tab` to return to dictation.

|

|

24

|

+

- **Whisper hallucination filter** — strips spurious "Thanks for watching", "[Music]", and repeating-token loops that Whisper sometimes emits on silence. Toggle off if you're dictating short single words.

|

|

25

|

+

- **Pause / resume** — hit `Space` to mute the mic without closing the overlay; great for stepping aside mid-thought.

|

|

26

|

+

- **Localized UI** — overlay, status bar, and settings render in German, English, Spanish, French, Portuguese (European + Brazilian), Russian, and Ukrainian when [`@juicesharp/rpiv-i18n`](https://www.npmjs.com/package/@juicesharp/rpiv-i18n) is installed. Falls back to English when it isn't.

|

|

27

|

+

- **Honest first-run UX** — the splash overlay shows download progress (percent + bytes), then `Extracting…`, `Verifying…`, `Loading engine…`, `Initializing mic…` before the dictation overlay opens. Half-loaded states never reach you.

|

|

28

|

+

- **Configurable cancel keybinding** — bind cancel to whatever your fingers prefer; no longer hardcoded to `Esc`.

|

|

29

|

+

- **Errors persisted, not swallowed** — recognition failures land in `~/.config/rpiv-voice/errors.log` so you can see why a phrase didn't transcribe.

|

|

30

|

+

|

|

31

|

+

## Install

|

|

32

|

+

|

|

33

|

+

`rpiv-voice` is **opt-in** — it's not part of `/rpiv-setup` because the native deps (sherpa-onnx, decibri) are heavyweight. Install it directly:

|

|

34

|

+

|

|

35

|

+

```sh

|

|

36

|

+

pi install npm:@juicesharp/rpiv-voice

|

|

37

|

+

```

|

|

38

|

+

|

|

39

|

+

Then restart your Pi session.

|

|

40

|

+

|

|

41

|

+

### Optional: localized UI

|

|

42

|

+

|

|

43

|

+

Install `@juicesharp/rpiv-i18n` alongside it to flip the overlay, status bar, and settings strings to your active locale:

|

|

44

|

+

|

|

45

|

+

```sh

|

|

46

|

+

pi install npm:@juicesharp/rpiv-i18n

|

|

47

|

+

```

|

|

48

|

+

|

|

49

|

+

`/languages` switches the locale live — no restart.

|

|

50

|

+

|

|

51

|

+

## Usage

|

|

52

|

+

|

|

53

|

+

Type `/voice` in Pi's input — the overlay opens with a recording glyph, a session timer, and `Listening…`.

|

|

54

|

+

|

|

55

|

+

| Key | Action |

|

|

56

|

+

|---|---|

|

|

57

|

+

| *(speak)* | Equalizer animates; transcript fills in live as Whisper decodes |

|

|

58

|

+

| `Enter` | Close overlay, paste transcript into the Pi editor |

|

|

59

|

+

| `Esc` | Close overlay, paste nothing (configurable — see below) |

|

|

60

|

+

| `Space` | Pause / resume the mic |

|

|

61

|

+

| `Tab` | Flip between dictation and settings screens |

|

|

62

|

+

| `Ctrl-S` *(in Settings)* | Save settings to disk |

|

|

63

|

+

|

|

64

|

+

The dim trailing text after the committed transcript is the rolling partial — it's already part of what will paste, so you can hit `Enter` the moment you're done.

|

|

65

|

+

|

|

66

|

+

### First run

|

|

67

|

+

|

|

68

|

+

The first time you run `/voice`, the splash overlay downloads the Whisper base multilingual model (~198 MB compressed, ~157 MB on disk) into `~/.pi/models/whisper-base/`. Subsequent runs load directly from disk in under a second. If a previous download was interrupted, the stale model directory is detected and re-downloaded automatically.
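
The "percent + bytes" progress readout described for the first-run splash can be illustrated with a small sketch. The helper name `formatProgress` and the `NN% (X.X / Y.Y MB)` layout are assumptions made for illustration; the README does not specify the actual string the splash renders.

```typescript
// Hypothetical helper: builds a progress readout from cumulative byte counts.
// The output layout is an assumption, not the package's real splash text.
function formatProgress(bytesReceived: number, totalBytes?: number): string {
  const mb = (n: number): string => (n / (1024 * 1024)).toFixed(1);
  if (totalBytes === undefined || totalBytes <= 0) {
    // Without a known total only the running byte count can be shown.
    return `${mb(bytesReceived)} MB`;
  }
  // Floor and clamp so the readout never exceeds 100%.
  const percent = Math.min(100, Math.floor((bytesReceived / totalBytes) * 100));
  return `${percent}% (${mb(bytesReceived)} / ${mb(totalBytes)} MB)`;
}

console.log(formatProgress(50 * 1024 * 1024, 100 * 1024 * 1024));
console.log(formatProgress(1024 * 1024));
```

When the total size is unknown the sketch degrades to a byte counter only, which is one plausible way to handle a response without a size header.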

+
+## Configuration
+
+`rpiv-voice` works without any config file. To customize, drop a JSON file at `~/.config/rpiv-voice/voice.json`:
+
+```json
+{
+  "hallucinationFilterEnabled": false
+}
+```
+
+| Field | Default | Effect |
+|---|---|---|
+| `hallucinationFilterEnabled` | `true` | When `false`, keeps Whisper's "Thanks for watching" / "[Music]" / repeating-token loops. Useful when dictating short single words that the filter might mistake for noise. |
+
+You can also flip the toggle interactively from the **Settings** screen (`Tab` from dictation, `Ctrl-S` to save).
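
As an illustration of the "works without any config file" behavior, the sketch below resolves the documented field against its default. The `VoiceConfig` shape, the `resolveVoiceConfig` name, and the choice to fall back to defaults on invalid JSON are assumptions; only the field name and its default of `true` come from the README.

```typescript
// Hypothetical config shape; `hallucinationFilterEnabled` is the only documented field.
interface VoiceConfig {
  hallucinationFilterEnabled: boolean;
}

const DEFAULT_CONFIG: VoiceConfig = { hallucinationFilterEnabled: true };

// Takes the text of voice.json (or undefined when the file is absent) and
// returns a complete config. Falling back on bad input is an assumed policy.
function resolveVoiceConfig(jsonText: string | undefined): VoiceConfig {
  if (jsonText === undefined) return { ...DEFAULT_CONFIG };
  let raw: unknown;
  try {
    raw = JSON.parse(jsonText);
  } catch {
    return { ...DEFAULT_CONFIG };
  }
  if (typeof raw !== "object" || raw === null) return { ...DEFAULT_CONFIG };
  const value = (raw as Record<string, unknown>).hallucinationFilterEnabled;
  return {
    hallucinationFilterEnabled:
      typeof value === "boolean" ? value : DEFAULT_CONFIG.hallucinationFilterEnabled,
  };
}

console.log(resolveVoiceConfig(undefined));
console.log(resolveVoiceConfig('{"hallucinationFilterEnabled": false}'));
```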

+
+The microphone is the OS default input — `rpiv-voice` does not expose device selection. The bundled Whisper base multilingual model is loaded from `~/.pi/models/whisper-base/`; alternative models aren't supported today.
+
+## Privacy
+
+- **No cloud STT.** Audio is decoded on your CPU via sherpa-onnx; nothing leaves the machine.
+- **No telemetry.** No usage events, no crash reports, no install pings. Errors are written to a local log only.
+- **No API keys.** Nothing to provision, nothing to revoke.
+- **Network only on first run** — to download the model. After that, `/voice` works offline.
+
+## Requirements
+
+- [Pi Agent CLI](https://www.npmjs.com/package/@earendil-works/pi-coding-agent)
+- A working microphone reachable by [`decibri`](https://www.npmjs.com/package/decibri) (mic permission granted to your terminal on macOS)
+- ~200 MB free disk under `~/.pi/models/whisper-base/`
+- Network access on first run only
+
+## Troubleshooting
+
+- **"Microphone init failed"** on the splash — grant your terminal app microphone access (System Settings → Privacy & Security → Microphone on macOS), then re-run `/voice`.
+- **Transcript looks like "Thanks for watching"** — Whisper hallucinated on near-silence; either speak louder/closer, or leave the hallucination filter on (the default).
+- **`/voice` not found** — restart your Pi session after install. If it's still missing, confirm the entry exists in `~/.pi/agent/settings.json`.
+- **Recognition errors** — check `~/.config/rpiv-voice/errors.log` for the underlying sherpa-onnx error.
+
+## Related packages
+
+- [`@juicesharp/rpiv-i18n`](https://www.npmjs.com/package/@juicesharp/rpiv-i18n) — localizes the `/voice` overlay UI.
+- [`@juicesharp/rpiv-pi`](https://www.npmjs.com/package/@juicesharp/rpiv-pi) — umbrella + `/rpiv-setup` for the rest of the `rpiv-*` family.
+
+## License
+
+MIT — see [LICENSE](./LICENSE).

package/audio/error-log.ts
ADDED
@@ -0,0 +1,37 @@
+/**
+ * error-log — append-only diagnostic sink for the dictation pipeline.
+ *
+ * The pipeline cannot write to stderr (corrupts the active TUI render) and
+ * cannot surface failures via `notify` without churning the chat history. So
+ * recognition errors went silent, leaving users with mysterious gaps in the
+ * transcript and no breadcrumbs.
+ *
+ * This module appends one line per failure to
+ * `~/.config/rpiv-voice/errors.log`. Writes are best-effort and synchronous —
+ * a write failure is itself swallowed (we cannot log the log failure without
+ * re-entering the same hazard).
+ */
+
+import { appendFileSync, mkdirSync } from "node:fs";
+import { homedir } from "node:os";
+import { join } from "node:path";
+
+const LOG_DIR = join(homedir(), ".config", "rpiv-voice");
+const LOG_PATH = join(LOG_DIR, "errors.log");
+
+export function getErrorLogPath(): string {
+	return LOG_PATH;
+}
+
+export function appendErrorLog(scope: string, err: unknown): void {
+	try {
+		mkdirSync(LOG_DIR, { recursive: true });
+		const message = err instanceof Error ? `${err.name}: ${err.message}` : String(err);
+		const line = `${new Date().toISOString()} [${scope}] ${message}\n`;
+		appendFileSync(LOG_PATH, line, "utf-8");
+	} catch {
+		// Best-effort: a logging-path failure must never re-throw into the
+		// dictation pipeline. The TUI is the user's only feedback channel and
+		// stderr would corrupt it.
+	}
+}
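
To make the log line shape concrete, the sketch below reproduces the formatting step of `appendErrorLog` as a pure function, without touching the file system. `formatErrorLine`, its injectable `now` parameter, and the `"stt"` scope value are hypothetical and exist only so the output is deterministic.

```typescript
// Hypothetical pure helper mirroring the "<ISO timestamp> [<scope>] <message>"
// line built inside appendErrorLog. `now` is injectable for repeatable output.
function formatErrorLine(scope: string, err: unknown, now: Date = new Date()): string {
  const message = err instanceof Error ? `${err.name}: ${err.message}` : String(err);
  return `${now.toISOString()} [${scope}] ${message}\n`;
}

const fixedTime = new Date("2026-05-11T12:00:00.000Z");
// prints: 2026-05-11T12:00:00.000Z [stt] TypeError: bad input
process.stdout.write(formatErrorLine("stt", new TypeError("bad input"), fixedTime));
```

Non-`Error` values go through `String(err)`, so a thrown string such as `"boom"` is logged verbatim after the scope tag.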

package/audio/hallucination-filter.ts
ADDED
@@ -0,0 +1,71 @@
+// Post-recognition filter for Whisper's well-known silence/near-silence
+// hallucinations. sherpa-onnx-node (and sherpa-onnx itself) don't expose the
+// openai-whisper decoder thresholds — `no_speech_threshold`,
+// `logprob_threshold`, `compression_ratio_threshold` — so we filter the
+// output instead. Combined with the input-side RMS gate this catches the
+// long tail without an engine swap.
+
+// Phrases Whisper emits as the *entire* output of a quiet segment. Matched
+// against the normalized form (lowercase, punctuation+symbols stripped, whitespace
+// collapsed) so "Thanks for watching!" and "thanks for watching." both hit.
+const HALLUCINATION_PHRASES: ReadonlySet<string> = new Set([
+	"thanks for watching",
+	"thanks for watching everyone",
+	"thank you for watching",
+	"please subscribe",
+	"please like and subscribe",
+	"see you in the next video",
+	"see you next time",
+	"ill see you in the next video",
+	"subtitles by the amaraorg community",
+	"transcribed by esoteric",
+	"music",
+	"applause",
+	"silence",
+	"you",
+	"thank you",
+	"bye",
+	// Frequent non-English variants (Whisper's multilingual decoder picks these
+	// up on quiet segments regardless of the actual spoken language):
+	"ご視聴ありがとうございました",
+	"次回もお楽しみに",
+	"시청해주셔서 감사합니다",
+	"다음 영상에서 만나요",
+]);
+
+export function isHallucination(text: string): boolean {
+	const normalized = normalize(text);
+	if (!normalized) return true;
+	if (HALLUCINATION_PHRASES.has(normalized)) return true;
+	if (isRepetitionLoop(normalized)) return true;
+	return false;
+}
+
+function normalize(text: string): string {
+	return text
+		.toLowerCase()
+		.replace(/[\p{P}\p{S}]/gu, "")
+		.replace(/\s+/g, " ")
+		.trim();
+}
+
+// Catches "1/2 1/2 1/2 1/2", "ok ok ok ok", "yes no yes no yes no" — the
+// decoder loop output Whisper falls into on borderline-silent input. Single-
+// token repeats need 4 reps so real emphasis ("yeah yeah yeah") is preserved.
+function isRepetitionLoop(normalized: string): boolean {
+	const tokens = normalized.split(" ").filter(Boolean);
+	if (tokens.length < 4) return false;
+	for (let groupSize = 1; groupSize <= 3; groupSize++) {
+		const minRepeats = groupSize === 1 ? 4 : 3;
+		if (tokens.length < groupSize * minRepeats) continue;
+		let allMatch = true;
+		for (let i = groupSize; i < tokens.length; i++) {
+			if (tokens[i] !== tokens[i % groupSize]) {
+				allMatch = false;
+				break;
+			}
+		}
+		if (allMatch) return true;
+	}
+	return false;
+}
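
A few worked inputs may help show where the filter draws the line. The block below is a condensed, standalone restatement of the same normalize / phrase-set / repetition-loop logic (with a shortened, illustrative phrase set) so it can run on its own; it is a sketch for experimentation, not the package's module.

```typescript
// Shortened phrase set for illustration; the real list is longer.
const PHRASES: ReadonlySet<string> = new Set(["thanks for watching", "music", "thank you"]);

function normalize(text: string): string {
  return text.toLowerCase().replace(/[\p{P}\p{S}]/gu, "").replace(/\s+/g, " ").trim();
}

// True when the whole token sequence is one group of 1-3 tokens repeated:
// at least 4 repeats for a single token, at least 3 for longer groups.
function isRepetitionLoop(normalized: string): boolean {
  const tokens = normalized.split(" ").filter(Boolean);
  if (tokens.length < 4) return false;
  for (let groupSize = 1; groupSize <= 3; groupSize++) {
    const minRepeats = groupSize === 1 ? 4 : 3;
    if (tokens.length < groupSize * minRepeats) continue;
    if (tokens.every((tok, i) => tok === tokens[i % groupSize])) return true;
  }
  return false;
}

function isHallucination(text: string): boolean {
  const n = normalize(text);
  return n === "" || PHRASES.has(n) || isRepetitionLoop(n);
}

const samples = [
  "Thanks for watching!",     // filtered: whole output matches a phrase
  "[Music]",                  // filtered: brackets are stripped first
  "ok ok ok ok",              // filtered: single token repeated 4 times
  "yes no yes no yes no",     // filtered: 2-token group repeated 3 times
  "yeah yeah yeah",           // kept: only 3 repeats of a single token
  "Thank you for the update", // kept: phrase match must cover the entire text
];
for (const s of samples) console.log(JSON.stringify(s), isHallucination(s));
```

Because phrases are matched against the entire normalized text, a sentence that merely begins with "thank you" passes through, while a bare "Thank you." would be dropped. That is the trade-off the README mentions for short single-word dictation.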

package/audio/mic-source.ts
ADDED
@@ -0,0 +1,38 @@
+export const TARGET_SAMPLE_RATE = 16000;
+export const FRAMES_PER_BUFFER = 1600;
+
+const VAD_THRESHOLD = 0.5;
+// Hangover before emitting `silence`. decibri's 300 ms default flushed mid-
+// clause at natural breath pauses, which forced Whisper to "complete" an
+// unterminated phrase with a spurious period. 700 ms eliminated that but felt
+// laggy at the user-perceived "I stopped → text appears" gap. 500 ms is the
+// LiveKit value: covers most natural breath pauses, keeps the perceived gap
+// to ~half a second, and the transcribing spinner now papers over the rest.
+const VAD_HOLDOFF_MS = 500;
+
+export interface DecibriLike {
+	on(event: "data", listener: (chunk: Buffer) => void): unknown;
+	on(event: "speech" | "silence", listener: () => void): unknown;
+	once(event: "end" | "error" | "close", listener: (err?: Error) => void): unknown;
+	stop(): void;
+}
+
+interface DecibriCtor {
+	new (opts: Record<string, unknown>): DecibriLike;
+}
+
+export async function createMic(): Promise<DecibriLike> {
+	// decibri ships as CJS (`module.exports = Decibri`); under ESM the ctor lands on `.default`.
+	const mod = (await import("decibri")) as { default: DecibriCtor };
+	const Decibri = mod.default;
+	return new Decibri({
+		sampleRate: TARGET_SAMPLE_RATE,
+		channels: 1,
+		framesPerBuffer: FRAMES_PER_BUFFER,
+		format: "int16",
+		vad: true,
+		vadMode: "silero",
+		vadThreshold: VAD_THRESHOLD,
+		vadHoldoff: VAD_HOLDOFF_MS,
+	});
+}
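
The capture constants above imply some simple arithmetic that is worth spelling out. The sketch below derives the duration and byte size of one buffer and how many buffers the 500 ms hangover spans. It assumes each `data` chunk carries exactly `FRAMES_PER_BUFFER` mono int16 frames, which the options suggest but the source does not state, and `BYTES_PER_SAMPLE` is a name introduced here.

```typescript
const TARGET_SAMPLE_RATE = 16000; // Hz
const FRAMES_PER_BUFFER = 1600;   // frames per buffer
const VAD_HOLDOFF_MS = 500;       // silence hangover
const BYTES_PER_SAMPLE = 2;       // int16, one channel (assumed chunk layout)

// Duration of one buffer: 1600 frames at 16 kHz.
const bufferMs = (FRAMES_PER_BUFFER * 1000) / TARGET_SAMPLE_RATE;
// Size of one buffer in bytes for mono int16 audio.
const bufferBytes = FRAMES_PER_BUFFER * BYTES_PER_SAMPLE;
// Number of buffers of continuous silence covered by the hangover.
const holdoffBuffers = VAD_HOLDOFF_MS / bufferMs;

console.log({ bufferMs, bufferBytes, holdoffBuffers });
// prints: { bufferMs: 100, bufferBytes: 3200, holdoffBuffers: 5 }
```

Under these assumptions, audio arrives in 100 ms steps, so the hangover corresponds to roughly five consecutive quiet buffers before `silence` fires.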

@@ -0,0 +1,268 @@

|

|

|

1

|

+

/**

|

|

2

|

+

* model-download — fetches the multilingual Whisper base model archive into

|

|

3

|

+

* `~/.pi/models/whisper-base/`, extracts it, prunes unused fp32 duplicates,

|

|

4

|

+

* and writes a sentinel file marking the install complete.

|

|

5

|

+

*

|

|

6

|

+

* The upstream archive ships BOTH fp32 (~290 MB) and int8 (~155 MB) variants

|

|

7

|

+

* in one tarball. We use int8 for CPU inference, so we delete the fp32

|

|

8

|

+

* duplicates after extraction to keep on-disk usage to ~157 MB.

|

|

9

|

+

*

|

|

10

|

+

* Progress is surfaced phase-by-phase (downloading → extracting → verifying);

|

|

11

|

+

* we deliberately don't forward per-chunk fetch progress, because callers

|

|

12

|

+

* pipe phase strings into a single-line ctx.ui.setStatus and per-chunk would

|

|

13

|

+

* spam the status surface.

|

|

14

|

+

*/

|

|

15

|

+

|

|

16

|

+

import { execFile } from "node:child_process";

|

|

17

|

+

import { createWriteStream, existsSync, mkdirSync, rmSync, writeFileSync } from "node:fs";

|

|

18

|

+

import { homedir } from "node:os";

|

|

19

|

+

import { join } from "node:path";

|

|

20

|

+

import { Readable, Transform } from "node:stream";

|

|

21

|

+

import { pipeline } from "node:stream/promises";

|

|

22

|

+

import { promisify } from "node:util";

|

|

23

|

+

import { t } from "../state/i18n-bridge.js";

|

|

24

|

+

|

|

25

|

+

const execFileAsync = promisify(execFile);

|

|

26

|

+

|

|

27

|

+

// ── Paths ────────────────────────────────────────────────────────────────────

|

|

28

|

+

const MODEL_DIR_NAME = "whisper-base";

|

|

29

|

+

export const MODELS_DIR = join(homedir(), ".pi", "models");

|

|

30

|

+

export const WHISPER_BASE_DIR = join(MODELS_DIR, MODEL_DIR_NAME);

|

|

31

|

+

export const SENTINEL_FILE = ".download-complete";

|

|

32

|

+

|

|

33

|

+

// ── Source archive ───────────────────────────────────────────────────────────

|

|

34

|

+

// Approx archive size on the wire is ~198 MB; the splash now shows the exact

|

|

35

|

+

// total once Content-Length is parsed, so we no longer encode the estimate

|

|

36

|

+

// in any user-facing string. Kept here for documentation only.

|

|

37

|

+

const MODEL_RELEASE_TAG = "asr-models";

|

|

38

|

+

const MODEL_ARCHIVE_NAME = "sherpa-onnx-whisper-base.tar.bz2";

|

|

39

|

+

const MODEL_URL = `https://github.com/k2-fsa/sherpa-onnx/releases/download/${MODEL_RELEASE_TAG}/${MODEL_ARCHIVE_NAME}`;

|

|

40

|

+

|

|

41

|

+

// ── Files we keep (int8 quantized) ──────────────────────────────────────────

|

|

42

|

+

const ENCODER_FILE = "base-encoder.int8.onnx";

|

|

43

|

+

const DECODER_FILE = "base-decoder.int8.onnx";

|

|

44

|

+

const TOKENS_FILE = "base-tokens.txt";

|

|

45

|

+

const REQUIRED_FILES: readonly string[] = [ENCODER_FILE, DECODER_FILE, TOKENS_FILE];

|

|

46

|

+

|

|

47

|

+

// ── Files we delete after extraction (fp32 dupes we don't need on CPU) ──────

|

|

48

|

+

const FP32_ENCODER_FILE = "base-encoder.onnx";

|

|

49

|

+

const FP32_DECODER_FILE = "base-decoder.onnx";

|

|

50

|

+

const FP32_DUPLICATE_FILES: readonly string[] = [FP32_ENCODER_FILE, FP32_DECODER_FILE];

|

|

51

|

+

|

|

52

|

+

// ── Tar invocation ───────────────────────────────────────────────────────────

|

|

53

|

+

const TAR_BIN = "tar";

|

|

54

|

+

// `--strip-components=1` flattens sherpa's top-level wrapper directory so the

|

|

55

|

+

// REQUIRED_FILES land directly inside WHISPER_BASE_DIR.

|

|

56

|

+

const TAR_FLAGS: readonly string[] = ["-xjf"];

|

|

57

|

+

const TAR_STRIP_FLAG = "--strip-components=1";

|

|

58

|

+

|

|

59

|

+

// ── Status messages ──────────────────────────────────────────────────────────

|

|

60

|

+

// Resolved at progress-emit time (not module load) so live `/languages` flips

|

|

61

|

+

// take effect mid-download.

|

|

62

|

+

const msgDownloading = (): string => t("splash.downloading", "Downloading Whisper…");

|

|

63

|

+

const msgExtracting = (): string => t("splash.extracting", "Extracting model files…");

|

|

64

|

+

const msgVerifying = (): string => t("splash.verifying", "Verifying model files…");

|

|

65

|

+

|

|

66

|

+

// ── Public API ───────────────────────────────────────────────────────────────

|

|

67

|

+

|

|

68

|

+

export interface DownloadProgress {

|

|

69

|

+

phase: "downloading" | "extracting" | "verifying";

|

|

70

|

+

/** 0-100 integer when total size is known. Omitted when the server didn't

|

|

71

|

+

* send a Content-Length, or when the phase isn't byte-bounded. */

|

|

72

|

+

percent?: number;

|

|

73

|

+

/** Bytes received so far during the download phase (cumulative). */

|

|

74

|

+

bytesReceived?: number;

|

|

75

|

+

/** Total expected bytes when known via Content-Length. */

|

|

76

|

+

totalBytes?: number;

|

|

77

|

+

message?: string;

|

|

78

|

+

}

|

|

79

|

+

export type ProgressCallback = (progress: DownloadProgress) => void;

|

|

80

|

+

|

|

81

|

+

// Bound how often we surface byte-count updates: terminals re-flow on every

|

|

82

|

+

// emit and a fast network can fire chunks at >1 kHz, which would burn CPU on

|

|

83

|

+

// no-op renders. 200 ms feels lively without being chatty.

|

|

84

|

+

const PROGRESS_THROTTLE_MS = 200;

|

|

85

|

+

|

|

86

|

+

export interface ModelPaths {

|

|

87

|

+

encoderPath: string;

|

|

88

|

+

decoderPath: string;

|

|

89

|

+

tokensPath: string;

|

|

90

|

+

}

|

|

91

|

+

|

|

92

|

+

export type ModelInstallStage = "download" | "extract" | "verify";

|

|

93

|

+

|

|

94

|

+

/**

|

|

95

|

+

* Tagged failure surface for `ensureModelDownloaded` — lets callers distinguish

|

|

96

|

+

* "couldn't fetch the bytes" (network / HTTP) from "got the bytes but the

|

|

97

|

+

* archive was bad" (tar exit, missing file). Diagnostics matter: previously

|

|

98

|

+

* every stage rolled up to the same "check your internet connection" string.

|

|

99

|

+

*/

|

|

100

|

+

export class ModelInstallError extends Error {

|

|

101

|

+

constructor(

|

|

102

|

+

readonly stage: ModelInstallStage,

|

|

103

|

+

cause: unknown,

|

|

104

|

+

) {

|

|

105

|

+

super(`model install failed at ${stage}`, { cause: cause as Error });

|

|

106

|

+

this.name = "ModelInstallError";

|

|

107

|

+

}

|

|

108

|

+

}

|

|

109

|

+

|

|

110

|

+

export function isModelDownloaded(): boolean {

|

|

111

|

+

return existsSync(join(WHISPER_BASE_DIR, SENTINEL_FILE));

|

|

112

|

+

}

|

|

113

|

+

|

|

114

|

+

export function getModelPaths(): ModelPaths {

|

|

115

|

+

return {

|

|

116

|

+

encoderPath: join(WHISPER_BASE_DIR, ENCODER_FILE),

|

|

117

|

+

decoderPath: join(WHISPER_BASE_DIR, DECODER_FILE),

|

|

118

|

+

tokensPath: join(WHISPER_BASE_DIR, TOKENS_FILE),

|

|

119

|

+

};

|

|

120

|

+

}

|

|

121

|

+

|

|

122

|

+

/**

|

|

123

|

+

* Re-runs the post-extraction file existence check against an "already

|

|

124

|

+

* downloaded" install. The sentinel only proves the *previous* run wrote it —

|

|

125

|

+

* a user (or another tool) can have removed a required `.onnx` since then,

|

|

126

|

+

* which would otherwise surface as an opaque native crash inside

|

|

127

|

+

* sherpa-onnx's `OfflineRecognizer` constructor. Callers should call this

|

|

128

|

+

* after `isModelDownloaded()` returns true and *before* loading the engine,

|

|

129

|

+

* and on failure should `removeModelInstall()` so the next launch redownloads.

|

|

130

|

+

*/

|

|

131

|

+

export function assertModelIntact(): void {

|

|

132

|

+

verifyModelFiles();

|

|

133

|

+

}

|

|

134

|

+

|

|

135

|

+

/** Wipe the entire model directory — used to recover from any partial /

|

|

136

|

+

* corrupt install state. Idempotent and silent on missing dir. */

|

|

137

|

+

export function removeModelInstall(): void {

|

|

138

|

+

rmSync(WHISPER_BASE_DIR, { recursive: true, force: true });

|

|

139

|

+

}

|

+
+export async function ensureModelDownloaded(onProgress: ProgressCallback, signal?: AbortSignal): Promise<ModelPaths> {
+	if (isModelDownloaded()) return getModelPaths();
+
+	mkdirSync(WHISPER_BASE_DIR, { recursive: true });
+	const archivePath = join(WHISPER_BASE_DIR, MODEL_ARCHIVE_NAME);
+
+	// Any failure between mkdir and writeSentinel leaves a half-populated
+	// directory (partial archive, partially-extracted .onnx, etc.) but no
+	// sentinel — so the next run would re-enter this function and overwrite,
+	// but only after wasting bandwidth. Wiping the dir on failure makes that
+	// redownload start from a clean slate and prevents a hypothetical
+	// race where another caller observes the partial state mid-run.
+	try {
+		onProgress({ phase: "downloading", message: msgDownloading() });
+		try {
+			let lastEmitMs = 0;
+			await downloadArchive(MODEL_URL, archivePath, signal, (stats) => {
+				const now = Date.now();
+				const isFinal = stats.totalBytes !== undefined && stats.bytesReceived >= stats.totalBytes;
+				if (!isFinal && now - lastEmitMs < PROGRESS_THROTTLE_MS) return;
+				lastEmitMs = now;
+				const percent =
+					stats.totalBytes && stats.totalBytes > 0
+						? Math.min(100, Math.floor((stats.bytesReceived / stats.totalBytes) * 100))
+						: undefined;
+				onProgress({
+					phase: "downloading",
+					message: msgDownloading(),
+					percent,
+					bytesReceived: stats.bytesReceived,
+					totalBytes: stats.totalBytes,
+				});
+			});
+		} catch (err) {
+			throw new ModelInstallError("download", err);
+		}
+
+		onProgress({ phase: "extracting", message: msgExtracting() });
+		try {
+			await extractArchive(archivePath, WHISPER_BASE_DIR);
+			rmSync(archivePath, { force: true });
+			pruneFp32Duplicates();
+		} catch (err) {
+			throw new ModelInstallError("extract", err);
+		}
+
+		onProgress({ phase: "verifying", message: msgVerifying() });
+		try {
+			verifyModelFiles();
+		} catch (err) {
+			throw new ModelInstallError("verify", err);
+		}
+
+		writeSentinel();
+		return getModelPaths();
+	} catch (err) {
+		removeModelInstall();
+		throw err;
+	}
+}
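The progress throttling inside the download callback can be isolated into a small helper to see how it behaves. The sketch below is an illustration under assumptions: `makeThrottle`, its injectable `now` clock, and the 100 ms interval are hypothetical and only mirror the `lastEmitMs` / `isFinal` logic visible in the diff.

```typescript
interface Stats {
	bytesReceived: number;
	totalBytes?: number;
}

// Wraps `emit` so that intermediate updates are dropped when they arrive less
// than `intervalMs` after the previous emitted one. The final update (received
// bytes have reached the known total) always passes through.
function makeThrottle(
	intervalMs: number,
	emit: (stats: Stats) => void,
	now: () => number = Date.now,
): (stats: Stats) => void {
	let lastEmitMs = 0;
	return (stats) => {
		const t = now();
		const isFinal = stats.totalBytes !== undefined && stats.bytesReceived >= stats.totalBytes;
		if (!isFinal && t - lastEmitMs < intervalMs) return;
		lastEmitMs = t;
		emit(stats);
	};
}

// Demo with a fake clock so the outcome does not depend on real time.
let clock = 1000;
const seen: number[] = [];
const report = makeThrottle(100, (s) => seen.push(s.bytesReceived), () => clock);

report({ bytesReceived: 10, totalBytes: 40 }); // first update: emitted
clock = 1050;
report({ bytesReceived: 20, totalBytes: 40 }); // 50 ms later: dropped
clock = 1120;
report({ bytesReceived: 30, totalBytes: 40 }); // 120 ms after last emit: emitted
clock = 1130;
report({ bytesReceived: 40, totalBytes: 40 }); // final update: emitted despite the interval

// seen is now [10, 30, 40]
```

Letting the final update bypass the interval means a consumer that shows a percentage should reach 100 even when the last chunk lands right after a previous emit. When the total is unknown, no update counts as final and only the interval applies.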

+
+// ── Internals ────────────────────────────────────────────────────────────────
+
+interface DownloadStats {
+	bytesReceived: number;
+	totalBytes?: number;
+}
+
+async function downloadArchive(
+	url: string,
+	destPath: string,
+	signal: AbortSignal | undefined,
+	onStats?: (stats: DownloadStats) => void,
+): Promise<void> {
+	const response = await fetch(url, { signal });
+	if (!response.ok || !response.body) {
+		throw new Error(`Model download failed: HTTP ${response.status}`);
+	}
+
+	// Servers occasionally omit `Content-Length` for chunked / proxied
+	// responses; downstream we treat undefined as "unknown total" and the
+	// splash falls back to a byte-counter without a percentage.
+	const totalBytes = parsePositiveInt(response.headers.get("content-length"));
+
+	let bytesReceived = 0;
+	const tap = new Transform({
+		transform(chunk: Buffer, _enc, cb) {
+			bytesReceived += chunk.length;
+			onStats?.({ bytesReceived, totalBytes });
+			cb(null, chunk);
+		},
+	});
+
+	const out = createWriteStream(destPath);
+	await pipeline(Readable.fromWeb(response.body as never), tap, out, { signal });
+}

+
+const DECIMAL_RADIX = 10;
+
+function parsePositiveInt(raw: string | null | undefined): number | undefined {
+	if (!raw) return undefined;
+	const value = Number.parseInt(raw, DECIMAL_RADIX);
+	return Number.isFinite(value) && value > 0 ? value : undefined;
+}
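To make the "unknown total" fallback concrete, the sketch below runs a copy of `parsePositiveInt` over a few header values and derives a percentage the same way the download callback does. `percentOf` and the sample inputs are hypothetical additions for illustration only.

```typescript
// Same logic as the helper in the diff, repeated so the example is self-contained.
function parsePositiveInt(raw: string | null | undefined): number | undefined {
	if (!raw) return undefined;
	const value = Number.parseInt(raw, 10);
	return Number.isFinite(value) && value > 0 ? value : undefined;
}

// Hypothetical helper: a whole-number percentage capped at 100, or undefined
// when the total is unknown.
function percentOf(bytesReceived: number, totalBytes?: number): number | undefined {
	return totalBytes && totalBytes > 0
		? Math.min(100, Math.floor((bytesReceived / totalBytes) * 100))
		: undefined;
}

const headerValues: Array<string | null> = ["1048576", "0", "-5", "abc", "", null, "12abc"];
const parsed = headerValues.map((raw) => parsePositiveInt(raw));
// "1048576" parses to 1048576; "0", "-5", "abc", "" and null all yield undefined.
// "12abc" yields 12, because parseInt stops at the first non-digit character.

const half = percentOf(512, 1024); // 50
const capped = percentOf(2000, 1024); // 100, capped by Math.min
const unknown = percentOf(512, parsePositiveInt(null)); // undefined
```

The `"12abc"` case is worth keeping in mind: `Number.parseInt` accepts a numeric prefix, so a malformed header with trailing characters would still produce a total. A stricter check would be a design choice beyond what the diff shows.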

+
+async function extractArchive(archivePath: string, destDir: string): Promise<void> {
+	await execFileAsync(TAR_BIN, [...TAR_FLAGS, archivePath, "-C", destDir, TAR_STRIP_FLAG]);
+}
+
+// The Whisper archive ships fp32 + int8 side-by-side (~290 MB of fp32 we
+// don't use on CPU). Drop them so the install settles around ~157 MB.
+function pruneFp32Duplicates(): void {
+	for (const name of FP32_DUPLICATE_FILES) {
+		rmSync(join(WHISPER_BASE_DIR, name), { force: true });
+	}
+}
+
+function verifyModelFiles(): void {
+	for (const name of REQUIRED_FILES) {
+		if (!existsSync(join(WHISPER_BASE_DIR, name))) {
+			throw new Error(`Model verification failed: missing ${name}`);
+		}
+	}
+}
+
+function writeSentinel(): void {
+	writeFileSync(join(WHISPER_BASE_DIR, SENTINEL_FILE), "", "utf-8");
+}
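The structure of `ensureModelDownloaded()` (each stage wrapped in its own `try` that tags the failure, plus one outer `catch` that wipes the directory) can be reduced to a synchronous sketch. `ModelInstallError` is defined outside this part of the diff, so the `StageError` class, its `reason` field, and `runStages` below are hypothetical stand-ins rather than the package's types.

```typescript
type Stage = "download" | "extract" | "verify";

// Hypothetical stand-in for the package's error type: records which stage failed.
class StageError extends Error {
	readonly stage: Stage;
	readonly reason: unknown;
	constructor(stage: Stage, reason: unknown) {
		super(`install failed during ${stage}`);
		this.stage = stage;
		this.reason = reason;
	}
}

// Runs the steps in order. A failing step is rethrown tagged with its stage,
// and the shared cleanup runs once before the error leaves the function.
function runStages(steps: Array<[Stage, () => void]>, cleanup: () => void): void {
	try {
		for (const [stage, step] of steps) {
			try {
				step();
			} catch (err) {
				throw new StageError(stage, err);
			}
		}
	} catch (err) {
		cleanup();
		throw err;
	}
}

// Demo: the second stage fails, so the third never runs and cleanup fires once.
const log: string[] = [];
let caught: StageError | undefined;
try {
	runStages(
		[
			["download", () => { log.push("download"); }],
			["extract", () => { throw new Error("tar exited with code 2"); }],
			["verify", () => { log.push("verify"); }],
		],
		() => { log.push("cleanup"); },
	);
} catch (err) {
	caught = err as StageError;
}
// log is ["download", "cleanup"] and caught.stage is "extract"
```

Keeping the cleanup in a single outer handler means a new stage only needs its own tagging `try`, and the wipe cannot be forgotten for it.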

package/audio/pcm.ts

ADDED

@@ -0,0 +1,45 @@
+// decibri delivers Int16 LE; the STT engine wants Float32 in [-1, +1].
+
+const BYTES_PER_INT16_SAMPLE = 2;
+const INT16_FULL_SCALE = 0x8000;
+
+export function bufferToFloat32(buf: Buffer): Float32Array {
+	const sampleCount = buf.length / BYTES_PER_INT16_SAMPLE;
+	const samples = new Float32Array(sampleCount);
+	for (let i = 0, j = 0; i < buf.length; i += BYTES_PER_INT16_SAMPLE, j++) {
+		samples[j] = buf.readInt16LE(i) / INT16_FULL_SCALE;
+	}
+	return samples;
+}
+
+export function computeRmsInt16(buf: Buffer): number {
+	const sampleCount = Math.floor(buf.length / BYTES_PER_INT16_SAMPLE);
+	if (sampleCount === 0) return 0;
+	let sumSquares = 0;
+	for (let i = 0; i < sampleCount; i++) {
+		const s = buf.readInt16LE(i * BYTES_PER_INT16_SAMPLE) / INT16_FULL_SCALE;
+		sumSquares += s * s;
+	}
+	const rms = Math.sqrt(sumSquares / sampleCount);
+	return clamp01(rms);
+}
+
+export function computeRmsFloat32(samples: Float32Array): number {
+	if (samples.length === 0) return 0;
+	let sumSquares = 0;
+	for (let i = 0; i < samples.length; i++) sumSquares += samples[i] * samples[i];
+	return Math.sqrt(sumSquares / samples.length);
+}
+
+export function clamp01(n: number): number {
+	if (n < 0) return 0;
+	if (n > 1) return 1;
+	return n;
+}
+
+export const BYTES_PER_INT16 = BYTES_PER_INT16_SAMPLE;
+
+/** How many Int16 samples sit in a raw PCM chunk. */
+export function samplesInInt16Chunk(chunk: Buffer): number {
+	return chunk.length / BYTES_PER_INT16_SAMPLE;
+}
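The Int16-to-Float32 scaling and the RMS computation in `pcm.ts` can be tried on a handful of hand-picked samples. The sketch below is an assumption-labelled variant, not the package's code: it reads from a `Uint8Array` through a `DataView` instead of `Buffer.readInt16LE` (a Node `Buffer` is a `Uint8Array`, so it could be passed as well), and it floors the sample count so a stray trailing byte is ignored. Whether decibri can ever deliver an odd-length chunk is not stated in the source. The names `int16LeToFloat32` and `rms` are hypothetical.

```typescript
const INT16_FULL_SCALE = 0x8000; // 32768, so -32768 maps to exactly -1

// Converts little-endian signed 16-bit PCM bytes to floats in [-1, +1).
function int16LeToFloat32(bytes: Uint8Array): Float32Array {
	const view = new DataView(bytes.buffer, bytes.byteOffset, bytes.byteLength);
	const sampleCount = Math.floor(bytes.byteLength / 2); // ignore a trailing odd byte
	const out = new Float32Array(sampleCount);
	for (let i = 0; i < sampleCount; i++) {
		out[i] = view.getInt16(i * 2, true) / INT16_FULL_SCALE; // true = little-endian
	}
	return out;
}

// Root mean square of the samples; 0 for an empty input.
function rms(samples: Float32Array): number {
	if (samples.length === 0) return 0;
	let sumSquares = 0;
	samples.forEach((s) => {
		sumSquares += s * s;
	});
	return Math.sqrt(sumSquares / samples.length);
}

// Four samples (0, 16384, -32768, 32767) followed by one stray byte.
const raw = new Uint8Array([0x00, 0x00, 0x00, 0x40, 0x00, 0x80, 0xff, 0x7f, 0x01]);
const floats = int16LeToFloat32(raw);
// floats holds 0, 0.5, -1 and 32767 / 32768 (just under 1)

const level = rms(new Float32Array([0.5, -0.5])); // 0.5
```

Dividing by `0x8000` rather than `0x7fff` keeps the negative extreme at exactly -1 and leaves the positive extreme just below +1, which matches the constant used in the diff.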