schema-search 0.1.10__py3-none-any.whl

This diff represents the content of publicly available package versions that have been released to one of the supported registries. The information contained in this diff is provided for informational purposes only and reflects changes between package versions as they appear in their respective public registries.

- schema_search/__init__.py +26 -0

- schema_search/chunkers/__init__.py +6 -0

- schema_search/chunkers/base.py +95 -0

- schema_search/chunkers/factory.py +31 -0

- schema_search/chunkers/llm.py +54 -0

- schema_search/chunkers/markdown.py +25 -0

- schema_search/embedding_cache/__init__.py +5 -0

- schema_search/embedding_cache/base.py +40 -0

- schema_search/embedding_cache/bm25.py +63 -0

- schema_search/embedding_cache/factory.py +20 -0

- schema_search/embedding_cache/inmemory.py +122 -0

- schema_search/graph_builder.py +69 -0

- schema_search/mcp_server.py +81 -0

- schema_search/metrics.py +33 -0

- schema_search/rankers/__init__.py +5 -0

- schema_search/rankers/base.py +45 -0

- schema_search/rankers/cross_encoder.py +40 -0

- schema_search/rankers/factory.py +11 -0

- schema_search/schema_extractor.py +135 -0

- schema_search/schema_search.py +276 -0

- schema_search/search/__init__.py +15 -0

- schema_search/search/base.py +85 -0

- schema_search/search/bm25.py +48 -0

- schema_search/search/factory.py +61 -0

- schema_search/search/fuzzy.py +56 -0

- schema_search/search/hybrid.py +82 -0

- schema_search/search/semantic.py +49 -0

- schema_search/types.py +57 -0

- schema_search/utils/__init__.py +0 -0

- schema_search/utils/lazy_import.py +26 -0

- schema_search-0.1.10.dist-info/METADATA +308 -0

- schema_search-0.1.10.dist-info/RECORD +40 -0

- schema_search-0.1.10.dist-info/WHEEL +5 -0

- schema_search-0.1.10.dist-info/entry_points.txt +2 -0

- schema_search-0.1.10.dist-info/licenses/LICENSE +21 -0

- schema_search-0.1.10.dist-info/top_level.txt +2 -0

- tests/__init__.py +0 -0

- tests/test_integration.py +352 -0

- tests/test_llm_sql_generation.py +320 -0

- tests/test_spider_eval.py +488 -0

|

@@ -0,0 +1,61 @@

|

|

|

1

|

+

from typing import Callable, Dict, Optional

|

|

2

|

+

|

|

3

|

+

from schema_search.search.semantic import SemanticSearchStrategy

|

|

4

|

+

from schema_search.search.fuzzy import FuzzySearchStrategy

|

|

5

|

+

from schema_search.search.bm25 import BM25SearchStrategy

|

|

6

|

+

from schema_search.search.hybrid import HybridSearchStrategy

|

|

7

|

+

from schema_search.search.base import BaseSearchStrategy

|

|

8

|

+

from schema_search.embedding_cache import BaseEmbeddingCache

|

|

9

|

+

from schema_search.rankers.base import BaseRanker

|

|

10

|

+

|

|

11

|

+

|

|

12

|

+

def create_search_strategy(

|

|

13

|

+

config: Dict,

|

|

14

|

+

get_embedding_cache: Callable[[], BaseEmbeddingCache],

|

|

15

|

+

get_bm25_cache: Callable,

|

|

16

|

+

get_reranker: Callable[[], Optional[BaseRanker]],

|

|

17

|

+

strategy_type: Optional[str],

|

|

18

|

+

) -> BaseSearchStrategy:

|

|

19

|

+

search_config = config["search"]

|

|

20

|

+

strategy_type = strategy_type or search_config["strategy"]

|

|

21

|

+

|

|

22

|

+

initial_top_k = search_config["initial_top_k"]

|

|

23

|

+

rerank_top_k = search_config["rerank_top_k"]

|

|

24

|

+

|

|

25

|

+

reranker = get_reranker()

|

|

26

|

+

|

|

27

|

+

if strategy_type == "semantic":

|

|

28

|

+

return SemanticSearchStrategy(

|

|

29

|

+

embedding_cache=get_embedding_cache(),

|

|

30

|

+

initial_top_k=initial_top_k,

|

|

31

|

+

rerank_top_k=rerank_top_k,

|

|

32

|

+

reranker=reranker,

|

|

33

|

+

)

|

|

34

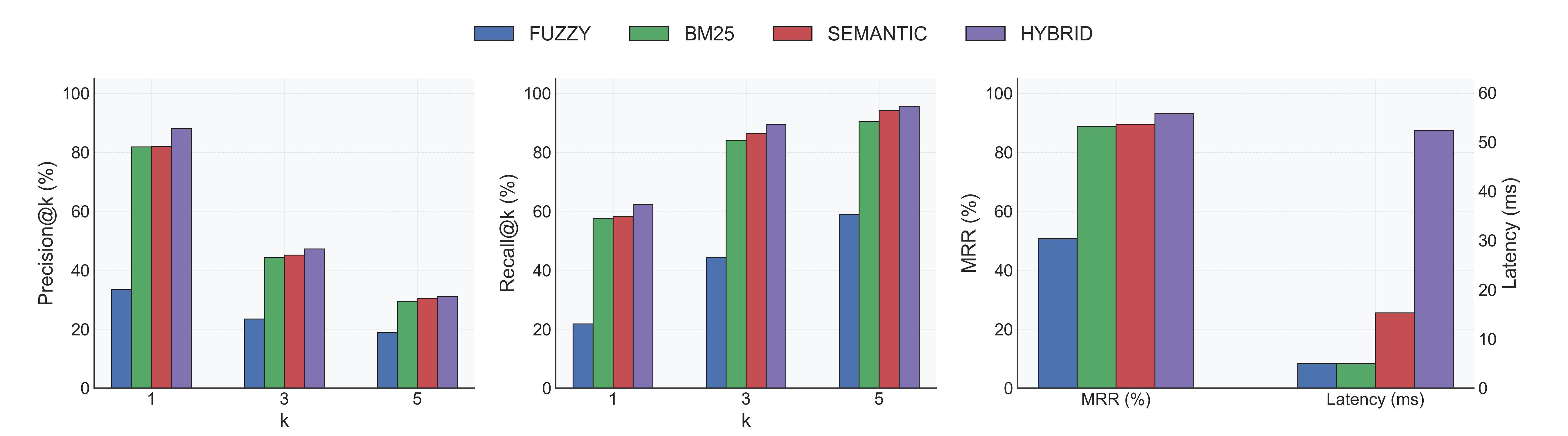

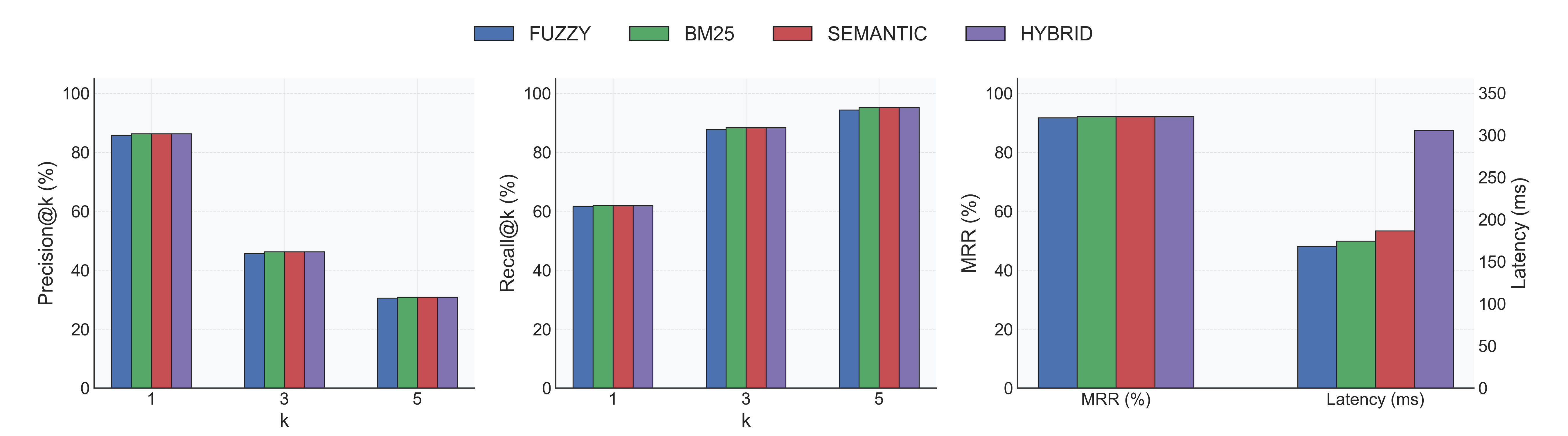

|

+

|

|

35

|

+

if strategy_type == "bm25":

|

|

36

|

+

return BM25SearchStrategy(

|

|

37

|

+

bm25_cache=get_bm25_cache(),

|

|

38

|

+

initial_top_k=initial_top_k,

|

|

39

|

+

rerank_top_k=rerank_top_k,

|

|

40

|

+

reranker=reranker,

|

|

41

|

+

)

|

|

42

|

+

|

|

43

|

+

if strategy_type == "fuzzy":

|

|

44

|

+

return FuzzySearchStrategy(

|

|

45

|

+

initial_top_k=initial_top_k,

|

|

46

|

+

rerank_top_k=rerank_top_k,

|

|

47

|

+

reranker=reranker,

|

|

48

|

+

)

|

|

49

|

+

|

|

50

|

+

if strategy_type == "hybrid":

|

|

51

|

+

semantic_weight = search_config["semantic_weight"]

|

|

52

|

+

return HybridSearchStrategy(

|

|

53

|

+

embedding_cache=get_embedding_cache(),

|

|

54

|

+

bm25_cache=get_bm25_cache(),

|

|

55

|

+

initial_top_k=initial_top_k,

|

|

56

|

+

rerank_top_k=rerank_top_k,

|

|

57

|

+

reranker=reranker,

|

|

58

|

+

semantic_weight=semantic_weight,

|

|

59

|

+

)

|

|

60

|

+

|

|

61

|

+

raise ValueError(f"Unknown search strategy: {strategy_type}")

|

|

@@ -0,0 +1,56 @@

|

|

|

1

|

+

from typing import Dict, List, Optional

|

|

2

|

+

|

|

3

|

+

from rapidfuzz import fuzz

|

|

4

|

+

|

|

5

|

+

from schema_search.search.base import BaseSearchStrategy

|

|

6

|

+

from schema_search.types import TableSchema, SearchResultItem

|

|

7

|

+

from schema_search.chunkers import Chunk

|

|

8

|

+

from schema_search.graph_builder import GraphBuilder

|

|

9

|

+

from schema_search.rankers.base import BaseRanker

|

|

10

|

+

|

|

11

|

+

|

|

12

|

+

class FuzzySearchStrategy(BaseSearchStrategy):

|

|

13

|

+

def __init__(

|

|

14

|

+

self, initial_top_k: int, rerank_top_k: int, reranker: Optional[BaseRanker]

|

|

15

|

+

):

|

|

16

|

+

super().__init__(reranker, initial_top_k, rerank_top_k)

|

|

17

|

+

|

|

18

|

+

def _initial_ranking(

|

|

19

|

+

self,

|

|

20

|

+

query: str,

|

|

21

|

+

schemas: Dict[str, TableSchema],

|

|

22

|

+

chunks: List[Chunk],

|

|

23

|

+

graph_builder: GraphBuilder,

|

|

24

|

+

hops: int,

|

|

25

|

+

) -> List[SearchResultItem]:

|

|

26

|

+

scored_tables: List[tuple[str, float]] = []

|

|

27

|

+

|

|

28

|

+

for table_name, schema in schemas.items():

|

|

29

|

+

searchable_text = self._build_searchable_text(table_name, schema)

|

|

30

|

+

score = fuzz.ratio(query, searchable_text, score_cutoff=0) / 100.0

|

|

31

|

+

scored_tables.append((table_name, score))

|

|

32

|

+

|

|

33

|

+

scored_tables.sort(key=lambda x: x[1], reverse=True)

|

|

34

|

+

|

|

35

|

+

results: List[SearchResultItem] = []

|

|

36

|

+

for table_name, score in scored_tables[: self.initial_top_k]:

|

|

37

|

+

result = self._build_result_item(

|

|

38

|

+

table_name=table_name,

|

|

39

|

+

score=score,

|

|

40

|

+

schema=schemas[table_name],

|

|

41

|

+

matched_chunks=[],

|

|

42

|

+

graph_builder=graph_builder,

|

|

43

|

+

hops=hops,

|

|

44

|

+

)

|

|

45

|

+

results.append(result)

|

|

46

|

+

|

|

47

|

+

return results

|

|

48

|

+

|

|

49

|

+

def _build_searchable_text(self, table_name: str, schema: TableSchema) -> str:

|

|

50

|

+

parts = [table_name]

|

|

51

|

+

|

|

52

|

+

if schema["indices"]:

|

|

53

|

+

for idx in schema["indices"]:

|

|

54

|

+

parts.append(idx["name"])

|

|

55

|

+

|

|

56

|

+

return " ".join(parts)

|

|

@@ -0,0 +1,82 @@

|

|

|

1

|

+

from typing import Dict, List, Optional, TYPE_CHECKING

|

|

2

|

+

|

|

3

|

+

import numpy as np

|

|

4

|

+

|

|

5

|

+

from schema_search.search.base import BaseSearchStrategy

|

|

6

|

+

from schema_search.types import TableSchema, SearchResultItem

|

|

7

|

+

from schema_search.chunkers import Chunk

|

|

8

|

+

from schema_search.graph_builder import GraphBuilder

|

|

9

|

+

from schema_search.embedding_cache import BaseEmbeddingCache

|

|

10

|

+

from schema_search.rankers.base import BaseRanker

|

|

11

|

+

|

|

12

|

+

if TYPE_CHECKING:

|

|

13

|

+

from schema_search.embedding_cache.bm25 import BM25Cache

|

|

14

|

+

|

|

15

|

+

|

|

16

|

+

class HybridSearchStrategy(BaseSearchStrategy):

|

|

17

|

+

def __init__(

|

|

18

|

+

self,

|

|

19

|

+

embedding_cache: BaseEmbeddingCache,

|

|

20

|

+

bm25_cache: "BM25Cache",

|

|

21

|

+

initial_top_k: int,

|

|

22

|

+

rerank_top_k: int,

|

|

23

|

+

reranker: Optional[BaseRanker],

|

|

24

|

+

semantic_weight: float,

|

|

25

|

+

):

|

|

26

|

+

super().__init__(reranker, initial_top_k, rerank_top_k)

|

|

27

|

+

assert 0 <= semantic_weight <= 1, "semantic_weight must be between 0 and 1"

|

|

28

|

+

self.embedding_cache = embedding_cache

|

|

29

|

+

self.bm25_cache = bm25_cache

|

|

30

|

+

self.semantic_weight = semantic_weight

|

|

31

|

+

self.bm25_weight = 1 - semantic_weight

|

|

32

|

+

|

|

33

|

+

def _initial_ranking(

|

|

34

|

+

self,

|

|

35

|

+

query: str,

|

|

36

|

+

schemas: Dict[str, TableSchema],

|

|

37

|

+

chunks: List[Chunk],

|

|

38

|

+

graph_builder: GraphBuilder,

|

|

39

|

+

hops: int,

|

|

40

|

+

) -> List[SearchResultItem]:

|

|

41

|

+

query_embedding = self.embedding_cache.encode_query(query)

|

|

42

|

+

semantic_scores = self.embedding_cache.compute_similarities(query_embedding)

|

|

43

|

+

|

|

44

|

+

bm25_scores = self.bm25_cache.get_scores(query)

|

|

45

|

+

|

|

46

|

+

semantic_min = semantic_scores.min()

|

|

47

|

+

semantic_max = semantic_scores.max()

|

|

48

|

+

semantic_range = semantic_max - semantic_min

|

|

49

|

+

if semantic_range > 0:

|

|

50

|

+

semantic_scores_norm = (semantic_scores - semantic_min) / semantic_range

|

|

51

|

+

else:

|

|

52

|

+

semantic_scores_norm = np.zeros_like(semantic_scores)

|

|

53

|

+

|

|

54

|

+

bm25_min = bm25_scores.min()

|

|

55

|

+

bm25_max = bm25_scores.max()

|

|

56

|

+

bm25_range = bm25_max - bm25_min

|

|

57

|

+

if bm25_range > 0:

|

|

58

|

+

bm25_scores_norm = (bm25_scores - bm25_min) / bm25_range

|

|

59

|

+

else:

|

|

60

|

+

bm25_scores_norm = np.zeros_like(bm25_scores)

|

|

61

|

+

|

|

62

|

+

hybrid_scores = (

|

|

63

|

+

self.semantic_weight * semantic_scores_norm

|

|

64

|

+

+ self.bm25_weight * bm25_scores_norm

|

|

65

|

+

)

|

|

66

|

+

|

|

67

|

+

top_indices = hybrid_scores.argsort()[::-1][: self.initial_top_k]

|

|

68

|

+

|

|

69

|

+

results: List[SearchResultItem] = []

|

|

70

|

+

for idx in top_indices:

|

|

71

|

+

chunk = chunks[idx]

|

|

72

|

+

result = self._build_result_item(

|

|

73

|

+

table_name=chunk.table_name,

|

|

74

|

+

score=float(hybrid_scores[idx]),

|

|

75

|

+

schema=schemas[chunk.table_name],

|

|

76

|

+

matched_chunks=[chunk.content],

|

|

77

|

+

graph_builder=graph_builder,

|

|

78

|

+

hops=hops,

|

|

79

|

+

)

|

|

80

|

+

results.append(result)

|

|

81

|

+

|

|

82

|

+

return results

|

|

@@ -0,0 +1,49 @@

|

|

|

1

|

+

from typing import Dict, List, Optional

|

|

2

|

+

|

|

3

|

+

import numpy as np

|

|

4

|

+

|

|

5

|

+

from schema_search.search.base import BaseSearchStrategy

|

|

6

|

+

from schema_search.types import TableSchema, SearchResultItem

|

|

7

|

+

from schema_search.chunkers import Chunk

|

|

8

|

+

from schema_search.graph_builder import GraphBuilder

|

|

9

|

+

from schema_search.embedding_cache import BaseEmbeddingCache

|

|

10

|

+

from schema_search.rankers.base import BaseRanker

|

|

11

|

+

|

|

12

|

+

|

|

13

|

+

class SemanticSearchStrategy(BaseSearchStrategy):

|

|

14

|

+

def __init__(

|

|

15

|

+

self,

|

|

16

|

+

embedding_cache: BaseEmbeddingCache,

|

|

17

|

+

initial_top_k: int,

|

|

18

|

+

rerank_top_k: int,

|

|

19

|

+

reranker: Optional[BaseRanker],

|

|

20

|

+

):

|

|

21

|

+

super().__init__(reranker, initial_top_k, rerank_top_k)

|

|

22

|

+

self.embedding_cache = embedding_cache

|

|

23

|

+

|

|

24

|

+

def _initial_ranking(

|

|

25

|

+

self,

|

|

26

|

+

query: str,

|

|

27

|

+

schemas: Dict[str, TableSchema],

|

|

28

|

+

chunks: List[Chunk],

|

|

29

|

+

graph_builder: GraphBuilder,

|

|

30

|

+

hops: int,

|

|

31

|

+

) -> List[SearchResultItem]:

|

|

32

|

+

query_embedding = self.embedding_cache.encode_query(query)

|

|

33

|

+

embedding_scores = self.embedding_cache.compute_similarities(query_embedding)

|

|

34

|

+

top_indices = embedding_scores.argsort()[::-1][: self.initial_top_k]

|

|

35

|

+

|

|

36

|

+

results: List[SearchResultItem] = []

|

|

37

|

+

for idx in top_indices:

|

|

38

|

+

chunk = chunks[idx]

|

|

39

|

+

result = self._build_result_item(

|

|

40

|

+

table_name=chunk.table_name,

|

|

41

|

+

score=float(embedding_scores[idx]),

|

|

42

|

+

schema=schemas[chunk.table_name],

|

|

43

|

+

matched_chunks=[chunk.content],

|

|

44

|

+

graph_builder=graph_builder,

|

|

45

|

+

hops=hops,

|

|

46

|

+

)

|

|

47

|

+

results.append(result)

|

|

48

|

+

|

|

49

|

+

return results

|

schema_search/types.py

ADDED

|

@@ -0,0 +1,57 @@

|

|

|

1

|

+

from typing import TypedDict, List, Literal, Optional

|

|

2

|

+

|

|

3

|

+

|

|

4

|

+

SearchType = Literal["semantic", "fuzzy", "bm25", "hybrid"]

|

|

5

|

+

|

|

6

|

+

|

|

7

|

+

class ColumnInfo(TypedDict):

|

|

8

|

+

name: str

|

|

9

|

+

type: str

|

|

10

|

+

nullable: bool

|

|

11

|

+

default: Optional[str]

|

|

12

|

+

|

|

13

|

+

|

|

14

|

+

class ForeignKeyInfo(TypedDict):

|

|

15

|

+

constrained_columns: List[str]

|

|

16

|

+

referred_table: str

|

|

17

|

+

referred_columns: List[str]

|

|

18

|

+

|

|

19

|

+

|

|

20

|

+

class IndexInfo(TypedDict):

|

|

21

|

+

name: str

|

|

22

|

+

columns: List[str]

|

|

23

|

+

unique: bool

|

|

24

|

+

|

|

25

|

+

|

|

26

|

+

class ConstraintInfo(TypedDict):

|

|

27

|

+

name: Optional[str]

|

|

28

|

+

columns: List[str]

|

|

29

|

+

|

|

30

|

+

|

|

31

|

+

class TableSchema(TypedDict):

|

|

32

|

+

name: str

|

|

33

|

+

primary_keys: List[str]

|

|

34

|

+

columns: Optional[List[ColumnInfo]]

|

|

35

|

+

foreign_keys: Optional[List[ForeignKeyInfo]]

|

|

36

|

+

indices: Optional[List[IndexInfo]]

|

|

37

|

+

unique_constraints: Optional[List[ConstraintInfo]]

|

|

38

|

+

check_constraints: Optional[List[ConstraintInfo]]

|

|

39

|

+

|

|

40

|

+

|

|

41

|

+

class IndexResult(TypedDict):

|

|

42

|

+

tables: int

|

|

43

|

+

chunks: int

|

|

44

|

+

latency_sec: float

|

|

45

|

+

|

|

46

|

+

|

|

47

|

+

class SearchResultItem(TypedDict):

|

|

48

|

+

table: str

|

|

49

|

+

score: float

|

|

50

|

+

schema: TableSchema

|

|

51

|

+

matched_chunks: List[str]

|

|

52

|

+

related_tables: List[str]

|

|

53

|

+

|

|

54

|

+

|

|

55

|

+

class SearchResult(TypedDict):

|

|

56

|

+

results: List[SearchResultItem]

|

|

57

|

+

latency_sec: float

|

|

File without changes

|

|

@@ -0,0 +1,26 @@

|

|

|

1

|

+

from typing import Any

|

|

2

|

+

from importlib import import_module

|

|

3

|

+

|

|

4

|

+

|

|

5

|

+

def lazy_import_check(module_name: str, extra_name: str, feature: str) -> Any:

|

|

6

|

+

"""

|

|

7

|

+

Lazily import a module and provide helpful error if missing.

|

|

8

|

+

|

|

9

|

+

Args:

|

|

10

|

+

module_name: Python module to import (e.g., "sentence_transformers")

|

|

11

|

+

extra_name: pip extra name (e.g., "semantic")

|

|

12

|

+

feature: User-facing feature description (e.g., "semantic search")

|

|

13

|

+

|

|

14

|

+

Returns:

|

|

15

|

+

Imported module

|

|

16

|

+

|

|

17

|

+

Raises:

|

|

18

|

+

ImportError: With installation instructions if module not found

|

|

19

|

+

"""

|

|

20

|

+

try:

|

|

21

|

+

return import_module(module_name)

|

|

22

|

+

except ImportError as e:

|

|

23

|

+

raise ImportError(

|

|

24

|

+

f"'{module_name}' is required for {feature}. "

|

|

25

|

+

f"Install with: pip install schema-search[{extra_name}]"

|

|

26

|

+

) from e

|

|

@@ -0,0 +1,308 @@

|

|

|

1

|

+

Metadata-Version: 2.4

|

|

2

|

+

Name: schema-search

|

|

3

|

+

Version: 0.1.10

|

|

4

|

+

Summary: Natural language database schema search with graph-aware semantic retrieval

|

|

5

|

+

Home-page: https://adibhasan.com/blog/schema-search/

|

|

6

|

+

Author: Adib Hasan

|

|

7

|

+

Classifier: Development Status :: 3 - Alpha

|

|

8

|

+

Classifier: Intended Audience :: Developers

|

|

9

|

+

Classifier: License :: OSI Approved :: MIT License

|

|

10

|

+

Classifier: Programming Language :: Python :: 3

|

|

11

|

+

Classifier: Programming Language :: Python :: 3.10

|

|

12

|

+

Classifier: Programming Language :: Python :: 3.11

|

|

13

|

+

Requires-Python: >=3.10

|

|

14

|

+

Description-Content-Type: text/markdown

|

|

15

|

+

License-File: LICENSE

|

|

16

|

+

Requires-Dist: sqlalchemy>=1.4.0

|

|

17

|

+

Requires-Dist: networkx>=2.8.0

|

|

18

|

+

Requires-Dist: bm25s>=0.2.0

|

|

19

|

+

Requires-Dist: numpy>=1.21.0

|

|

20

|

+

Requires-Dist: pyyaml>=6.0

|

|

21

|

+

Requires-Dist: tqdm>=4.65.0

|

|

22

|

+

Requires-Dist: rapidfuzz>=3.0.0

|

|

23

|

+

Provides-Extra: semantic

|

|

24

|

+

Requires-Dist: sentence-transformers>=2.2.0; extra == "semantic"

|

|

25

|

+

Provides-Extra: llm

|

|

26

|

+

Requires-Dist: openai>=1.0.0; extra == "llm"

|

|

27

|

+

Provides-Extra: mcp

|

|

28

|

+

Requires-Dist: fastmcp>=2.0.0; extra == "mcp"

|

|

29

|

+

Provides-Extra: test

|

|

30

|

+

Requires-Dist: pytest>=7.0.0; extra == "test"

|

|

31

|

+

Requires-Dist: python-dotenv>=1.0.0; extra == "test"

|

|

32

|

+

Requires-Dist: psutil>=5.9.0; extra == "test"

|

|

33

|

+

Requires-Dist: datasets>=2.0.0; extra == "test"

|

|

34

|

+

Provides-Extra: postgres

|

|

35

|

+

Requires-Dist: psycopg2-binary>=2.9.0; extra == "postgres"

|

|

36

|

+

Provides-Extra: mysql

|

|

37

|

+

Requires-Dist: pymysql>=1.0.0; extra == "mysql"

|

|

38

|

+

Provides-Extra: snowflake

|

|

39

|

+

Requires-Dist: snowflake-sqlalchemy>=1.4.0; extra == "snowflake"

|

|

40

|

+

Requires-Dist: snowflake-connector-python>=3.0.0; extra == "snowflake"

|

|

41

|

+

Provides-Extra: bigquery

|

|

42

|

+

Requires-Dist: sqlalchemy-bigquery>=1.6.0; extra == "bigquery"

|

|

43

|

+

Dynamic: author

|

|

44

|

+

Dynamic: classifier

|

|

45

|

+

Dynamic: description

|

|

46

|

+

Dynamic: description-content-type

|

|

47

|

+

Dynamic: home-page

|

|

48

|

+

Dynamic: license-file

|

|

49

|

+

Dynamic: provides-extra

|

|

50

|

+

Dynamic: requires-dist

|

|

51

|

+

Dynamic: requires-python

|

|

52

|

+

Dynamic: summary

|

|

53

|

+

|

|

54

|

+

# Schema Search

|

|

55

|

+

|

|

56

|

+

An MCP Server for Natural Language Search over RDBMS Schemas. Find exact tables you need, with all their relationships mapped out, in milliseconds. No vector database setup is required.

|

|

57

|

+

|

|

58

|

+

## Why

|

|

59

|

+

|

|

60

|

+

You have 200 tables in your database. Someone asks "where are user refunds stored?"

|

|

61

|

+

|

|

62

|

+

You could:

|

|

63

|

+

- Grep through SQL files for 20 minutes

|

|

64

|

+

- Pass the full schema to an LLM and watch it struggle with 200 tables

|

|

65

|

+

|

|

66

|

+

Or **build schematic embeddings of your tables, store in-memory, and query in natural language in an MCP server**.

|

|

67

|

+

|

|

68

|

+

### Benefits

|

|

69

|

+

- No vector database setup is required

|

|

70

|

+

- Small memory footprint -- easily scales up to 1000 tables and 10,000+ columns.

|

|

71

|

+

- Millisecond query latency

|

|

72

|

+

|

|

73

|

+

## Install

|

|

74

|

+

|

|

75

|

+

**Fast by default** - Base install uses only BM25/fuzzy search (no PyTorch):

|

|

76

|

+

|

|

77

|

+

```bash

|

|

78

|

+

# Minimal install (BM25 + fuzzy only, ~10MB)

|

|

79

|

+

pip install "schema-search[postgres]"

|

|

80

|

+

|

|

81

|

+

# With semantic/hybrid search support (~500MB with PyTorch)

|

|

82

|

+

pip install "schema-search[postgres,semantic]"

|

|

83

|

+

|

|

84

|

+

# With LLM chunking

|

|

85

|

+

pip install "schema-search[postgres,semantic,llm]"

|

|

86

|

+

|

|

87

|

+

# With MCP server

|

|

88

|

+

pip install "schema-search[postgres,semantic,mcp]"

|

|

89

|

+

|

|

90

|

+

# Other databases

|

|

91

|

+

pip install "schema-search[mysql,semantic]" # MySQL

|

|

92

|

+

pip install "schema-search[snowflake,semantic]" # Snowflake

|

|

93

|

+

pip install "schema-search[bigquery,semantic]" # BigQuery

|

|

94

|

+

```

|

|

95

|

+

|

|

96

|

+

**Extras:**

|

|

97

|

+

- `[semantic]`: Enables semantic/hybrid search and CrossEncoder reranking (adds sentence-transformers)

|

|

98

|

+

- `[llm]`: Enables LLM-based schema chunking (adds openai)

|

|

99

|

+

- `[mcp]`: MCP server support (adds fastmcp)

|

|

100

|

+

|

|

101

|

+

## Configuration

|

|

102

|

+

|

|

103

|

+

Edit [`config.yml`](https://github.com/Neehan/schema-search/blob/main/config.yml):

|

|

104

|

+

|

|

105

|

+

```yaml

|

|

106

|

+

logging:

|

|

107

|

+

level: "WARNING"

|

|

108

|

+

|

|

109

|

+

embedding:

|

|

110

|

+

location: "memory" # Options: "memory", "vectordb" (coming soon)

|

|

111

|

+

model: "multi-qa-MiniLM-L6-cos-v1"

|

|

112

|

+

metric: "cosine" # Options: "cosine", "euclidean", "manhattan", "dot"

|

|

113

|

+

batch_size: 32

|

|

114

|

+

show_progress: false

|

|

115

|

+

cache_dir: "/tmp/.schema_search_cache"

|

|

116

|

+

|

|

117

|

+

chunking:

|

|

118

|

+

strategy: "raw" # Options: "raw", "llm"

|

|

119

|

+

max_tokens: 256

|

|

120

|

+

overlap_tokens: 50

|

|

121

|

+

model: "gpt-4o-mini"

|

|

122

|

+

|

|

123

|

+

search:

|

|

124

|

+

# Search strategy: "semantic" (embeddings), "bm25" (BM25 lexical), "fuzzy" (fuzzy string matching), "hybrid" (semantic + bm25)

|

|

125

|

+

strategy: "bm25"

|

|

126

|

+

initial_top_k: 20

|

|

127

|

+

rerank_top_k: 5

|

|

128

|

+

semantic_weight: 0.67 # For hybrid search (bm25_weight = 1 - semantic_weight)

|

|

129

|

+

hops: 1 # Number of foreign key hops for graph expansion (0-2 recommended)

|

|

130

|

+

|

|

131

|

+

reranker:

|

|

132

|

+

# CrossEncoder model for reranking. Set to null to disable reranking

|

|

133

|

+

model: null # "Alibaba-NLP/gte-reranker-modernbert-base"

|

|

134

|

+

|

|

135

|

+

schema:

|

|

136

|

+

include_columns: true

|

|

137

|

+

include_indices: true

|

|

138

|

+

include_foreign_keys: true

|

|

139

|

+

include_constraints: true

|

|

140

|

+

```

|

|

141

|

+

|

|

142

|

+

|

|

143

|

+

## MCP Server

|

|

144

|

+

|

|

145

|

+

Integrate with Claude Desktop or any MCP client.

|

|

146

|

+

|

|

147

|

+

### Setup

|

|

148

|

+

|

|

149

|

+

Add to your MCP config (e.g., `~/.cursor/mcp.json` or Claude Desktop config):

|

|

150

|

+

|

|

151

|

+

**Using uv (Recommended):**

|

|

152

|

+

```json

|

|

153

|

+

{

|

|

154

|

+

"mcpServers": {

|

|

155

|

+

"schema-search": {

|

|

156

|

+

"command": "uvx",

|

|

157

|

+

"args": [

|

|

158

|

+

"schema-search[postgres,mcp]",

|

|

159

|

+

"postgresql://user:pass@localhost/db",

|

|

160

|

+

"optional/path/to/config.yml",

|

|

161

|

+

"optional llm_api_key",

|

|

162

|

+

"optional llm_base_url"

|

|

163

|

+

]

|

|

164

|

+

}

|

|

165

|

+

}

|

|

166

|

+

}

|

|

167

|

+

```

|

|

168

|

+

|

|

169

|

+

**Using pip:**

|

|

170

|

+

```json

|

|

171

|

+

{

|

|

172

|

+

"mcpServers": {

|

|

173

|

+

"schema-search": {

|

|

174

|

+

// conda: /Users/<username>/opt/miniconda3/envs/<your env>/bin/schema-search",

|

|

175

|

+

"command": "path/to/schema-search",

|

|

176

|

+

"args": [

|

|

177

|

+

"postgresql://user:pass@localhost/db",

|

|

178

|

+

"optional/path/to/config.yml",

|

|

179

|

+

"optional llm_api_key",

|

|

180

|

+

"optional llm_base_url"

|

|

181

|

+

]

|

|

182

|

+

}

|

|

183

|

+

}

|

|

184

|

+

}

|

|

185

|

+

```

|

|

186

|

+

|

|

187

|

+

|

|

188

|

+

The LLM API key and base url are only required if you use LLM-generated schema summaries (`config.chunking.strategy = 'llm'`).

|

|

189

|

+

|

|

190

|

+

### CLI Usage

|

|

191

|

+

|

|

192

|

+

```bash

|

|

193

|

+

schema-search "postgresql://user:pass@localhost/db" "optional/path/to/config.yml"

|

|

194

|

+

```

|

|

195

|

+

|

|

196

|

+

Optional args: `[config_path] [llm_api_key] [llm_base_url]`

|

|

197

|

+

|

|

198

|

+

The server exposes `schema_search(query, hops, limit)` for natural language schema queries.

|

|

199

|

+

|

|

200

|

+

## Python Use

|

|

201

|

+

|

|

202

|

+

```python

|

|

203

|

+

from sqlalchemy import create_engine

|

|

204

|

+

from schema_search import SchemaSearch

|

|

205

|

+

|

|

206

|

+

engine = create_engine("postgresql://user:pass@localhost/db")

|

|

207

|

+

search = SchemaSearch(

|

|

208

|

+

engine=engine,

|

|

209

|

+

config_path="optional/path/to/config.yml", # default: config.yml

|

|

210

|

+

llm_api_key="optional llm api key",

|

|

211

|

+

llm_base_url="optional llm base url"

|

|

212

|

+

)

|

|

213

|

+

|

|

214

|

+

search.index(force=False) # default is False

|

|

215

|

+

results = search.search("where are user refunds stored?")

|

|

216

|

+

|

|

217

|

+

for result in results['results']:

|

|

218

|

+

print(result['table']) # "refund_transactions"

|

|

219

|

+

print(result['schema']) # Full column info, types, constraints

|

|

220

|

+

print(result['related_tables']) # ["users", "payments", "transactions"]

|

|

221

|

+

|

|

222

|

+

# Override hops, limit, search strategy

|

|

223

|

+

results = search.search("user_table", hops=1, limit=5, search_type="hybrid")

|

|

224

|

+

|

|

225

|

+

```

|

|

226

|

+

|

|

227

|

+

`SchemaSearch.index()` automatically detects schema changes and refreshes cached metadata, so you rarely need to force a reindex manually.

|

|

228

|

+

|

|

229

|

+

## Search Strategies

|

|

230

|

+

|

|

231

|

+

Schema Search supports four search strategies:

|

|

232

|

+

|

|

233

|

+

- **bm25**: Lexical search using BM25 ranking algorithm (no ML dependencies)

|

|

234

|

+

- **fuzzy**: String matching on table/column names using fuzzy matching (no ML dependencies)

|

|

235

|

+

- **semantic**: Embedding-based similarity search using sentence transformers (requires `[semantic]`)

|

|

236

|

+

- **hybrid**: Combines semantic and bm25 scores (default: 67% semantic, 33% bm25) (requires `[semantic]`)

|

|

237

|

+

|

|

238

|

+

Each strategy performs its own initial ranking, then optionally applies CrossEncoder reranking if `reranker.model` is configured (requires `[semantic]`). Set `reranker.model` to `null` to disable reranking.

|

|

239

|

+

|

|

240

|

+

## Performance Comparison

|

|

241

|

+

We [benchmarked](/tests/test_spider_eval.py) on the Spider dataset (1,234 train queries across 18 databases) using the default `config.yml`.

|

|

242

|

+

|

|

243

|

+

**Memory:** The embedding model requires ~90 MB and the optional reranker adds ~155 MB. Actual process memory depends on your Python runtime.

|

|

244

|

+

|

|

245

|

+

### Without Reranker (`reranker.model: null`)

|

|

246

|

+

|

|

247

|

+

- **Indexing:** 0.22s ± 0.08s per database (18 total).

|

|

248

|

+

- **Accuracy:** Hybrid leads with Recall@1 62% / MRR 0.93; Semantic follows at Recall@1 58% / MRR 0.89.

|

|

249

|

+

- **Latency:** BM25 and Fuzzy return in ~5ms; Semantic spends ~15ms; Hybrid (semantic + fuzzy) averages 52ms.

|

|

250

|

+

- **Fuzzy baseline:** Recall@1 22%, highlighting the need for semantic signals on natural-language queries.

|

|

251

|

+

|

|

252

|

+

### With Reranker (`Alibaba-NLP/gte-reranker-modernbert-base`)

|

|

253

|

+

|

|

254

|

+

- **Indexing:** 0.25s ± 0.05s per database (same 18 DBs).

|

|

255

|

+

- **Accuracy:** All strategies converge around Recall@1 62% and MRR ≈ 0.92; Fuzzy jumps from 51% → 92% MRR.

|

|

256

|

+

- **Latency trade-off:** Extra CrossEncoder pass lifts per-query latency to ~0.18–0.29s depending on strategy.

|

|

257

|

+

- **Recommendation:** Enable the reranker when accuracy matters most; disable it for ultra-low-latency lookups.

|

|

258

|

+

|

|

259

|

+

|

|

260

|

+

You can override the search strategy, hops, and limit at query time:

|

|

261

|

+

|

|

262

|

+

```python

|

|

263

|

+

# Use fuzzy search instead of default

|

|

264

|

+

results = search.search("user_table", search_type="fuzzy")

|

|

265

|

+

|

|

266

|

+

# Use BM25 for keyword-based search

|

|

267

|

+

results = search.search("transactions payments", search_type="bm25")

|

|

268

|

+

|

|

269

|

+

# Use hybrid for best of both worlds

|

|

270

|

+

results = search.search("where are user refunds?", search_type="hybrid")

|

|

271

|

+

|

|

272

|

+

# Override hops and limit

|

|

273

|

+

results = search.search("user refunds", hops=2, limit=10) # Expand 2 hops, return 10 tables

|

|

274

|

+

|

|

275

|

+

# Disable graph expansion

|

|

276

|

+

results = search.search("user_table", hops=0) # Only direct matches, no foreign key traversal

|

|

277

|

+

```

|

|

278

|

+

|

|

279

|

+

### LLM Chunking

|

|

280

|

+

|

|

281

|

+

Use LLM to generate semantic summaries instead of raw schema text (requires `[llm]` extra):

|

|

282

|

+

|

|

283

|

+

1. Install: `pip install "schema-search[postgres,llm]"`

|

|

284

|

+

2. Set `strategy: "llm"` in `config.yml`

|

|

285

|

+

3. Pass API credentials:

|

|

286

|

+

|

|

287

|

+

```python

|

|

288

|

+

search = SchemaSearch(

|

|

289

|

+

engine,

|

|

290

|

+

llm_api_key="sk-...",

|

|

291

|

+

llm_base_url="https://api.openai.com/v1/" # optional

|

|

292

|

+

)

|

|

293

|

+

```

|

|

294

|

+

|

|

295

|

+

## How It Works

|

|

296

|

+

|

|

297

|

+

1. **Extract schemas** from database using SQLAlchemy inspector

|

|

298

|

+

2. **Chunk schemas** into digestible pieces (markdown or LLM-generated summaries)

|

|

299

|

+

3. **Initial search** using selected strategy (semantic/BM25/fuzzy)

|

|

300

|

+

4. **Expand via foreign keys** to find related tables (configurable hops)

|

|

301

|

+

5. **Optional reranking** with CrossEncoder to refine results

|

|

302

|

+

6. Return top tables with full schema and relationships

|

|

303

|

+

|

|

304

|

+

Cache stored in `/tmp/.schema_search_cache/` (configurable in `config.yml`)

|

|

305

|

+

|

|

306

|

+

## License

|

|

307

|

+

|

|

308

|

+

MIT

|

|

@@ -0,0 +1,40 @@

|

|

|

1

|

+

schema_search/__init__.py,sha256=06680k1q7pUf1m-1MNhKJGgHyT2NYiyJTLUIOP74dJY,486

|

|

2

|

+

schema_search/graph_builder.py,sha256=oKiVdVI_EB_ZmnxNiIV7Dt-jyKjV8B1RlbiSWpOSe30,2140

|

|

3

|

+

schema_search/mcp_server.py,sha256=uFTGONeQ8Zib9r2zw-YO_uzZgVdIVh-_o8deMmNA2i0,2241

|

|

4

|

+

schema_search/metrics.py,sha256=veyPo23aysiU_1MCwTVbBcVNreZFr_RGJwMCKBq1RAs,913

|

|

5

|

+

schema_search/schema_extractor.py,sha256=tpFF5FNPT694qZNoPZoRBjMSZySDt0CxUU0Ljtno6Z8,4280

|

|

6

|

+

schema_search/schema_search.py,sha256=VubGHXDyHQp9VPf4VXfC-oGFHkRQlgyV8hPsXS_XJwA,9260

|

|

7

|

+

schema_search/types.py,sha256=0CbG57j6orJawBaKjAMG28sfFARh3jSusoQ6gsA4PRc,1156

|

|

8

|

+

schema_search/chunkers/__init__.py,sha256=nBZZCZHIvqpmWBR5Noef7j5yyTEcFZz_ZNZuDCYuQt0,314

|

|

9

|

+

schema_search/chunkers/base.py,sha256=J7K-EO5SCZ9x0m7mnpjCjud9z5mLyq5zB-wt1ziHheY,2841

|

|

10

|

+

schema_search/chunkers/factory.py,sha256=ue2M9MUIEtLZN_3sHXumHmUKXDrYD4Hu7L6EIYgBk1s,1108

|

|

11

|

+

schema_search/chunkers/llm.py,sha256=VSBxfL5SBu7q4583wvWrYOqjkyiL33CBfl4FvZFH4xw,1808

|

|

12

|

+

schema_search/chunkers/markdown.py,sha256=LBFr9E3LVQV0UPW6X-qyNlfTYSfJGE8oxCl_FzbkAEw,940

|

|

13

|

+

schema_search/embedding_cache/__init__.py,sha256=cOOPGCcQqw_ywnMxcZbOJXfHHoqTRVS1Mj638kHjJoU,299

|

|

14

|

+

schema_search/embedding_cache/base.py,sha256=D9Izn3h319_HQIz9I_SrjWrdemnYg4aV8dQ0rppa3Y4,978

|

|

15

|

+

schema_search/embedding_cache/bm25.py,sha256=D_wiXWnigJhnOU_wD3p4xbjtUB70sL_7yYrV6eAuTy0,1940

|

|

16

|

+

schema_search/embedding_cache/factory.py,sha256=XJ6_PJV0Vsk-XNwW0xFJcuMO12vNExOhDcrgrZEH_u8,739

|

|

17

|

+

schema_search/embedding_cache/inmemory.py,sha256=_1gQzrjAk0HQJI-6ZmmJLRL5bYDfNx_3WCE9mabjZNA,3991

|

|

18

|

+

schema_search/rankers/__init__.py,sha256=0pNYKAvWSuspeksB9uaCLYuXD82rT7jqT_jEBXzJ-rw,238

|

|

19

|

+

schema_search/rankers/base.py,sha256=HpYM_ljsRseMXJPgjKcDse58VdVRhg0aAYgmLJm-rZU,1467

|

|

20

|

+

schema_search/rankers/cross_encoder.py,sha256=AT8-vgO0iqhFW-6GgkqDH1kCMjBDmEwpPpDqjVGW-qg,1563

|

|

21

|

+

schema_search/rankers/factory.py,sha256=EVwd_kaHyg4TlVji1gt6Qb9BQS7D8kP7DsSq8DoNt4M,368

|

|

22

|

+

schema_search/search/__init__.py,sha256=pWMX755FaxAr0hbaZp4Qk2V8KH5W4CDjSbxFRavMmgw,545

|

|

23

|

+

schema_search/search/base.py,sha256=XmG8UewFfr0f4bEw0aMZPVoX2HxInGHZ-5ebB1x9ZEY,2573

|

|

24

|

+

schema_search/search/bm25.py,sha256=jQHRFTuKGhIcSt3UdM7JVd5MIbpsuIi9uAevRn6ranE,1539

|

|

25

|

+

schema_search/search/factory.py,sha256=wgcx-xnZ8c7uSvu6oP3Fpoabd2Gl8FyJxn7zu3zZYMs,2062

|

|

26

|

+

schema_search/search/fuzzy.py,sha256=Urn2GtJ5h6j0R3HsRkrMfQCLSTU8jtGaHdfYXL_Nb3A,1865

|

|

27

|

+

schema_search/search/hybrid.py,sha256=T1O46SLCPgpCOnTw2bznnCWmqP9EUkUBLqu5AeQu7oQ,2864

|

|

28

|

+

schema_search/search/semantic.py,sha256=brw7x2hZMCep6QK7WWMT451RnpVcSMuNIZtp51kC6Bo,1673

|

|

29

|

+

schema_search/utils/__init__.py,sha256=47DEQpj8HBSa-_TImW-5JCeuQeRkm5NMpJWZG3hSuFU,0

|

|

30

|

+

schema_search/utils/lazy_import.py,sha256=ZDF7gZ1axIBp-U4r5NqA4YgR3iYTBm1eEA1a93LfUdA,813

|

|

31

|

+

schema_search-0.1.10.dist-info/licenses/LICENSE,sha256=jOHFAJEjJCD7iBjS2dBe73X5IGDJdAWGosGOUxfCHTM,1067

|

|

32

|

+

tests/__init__.py,sha256=47DEQpj8HBSa-_TImW-5JCeuQeRkm5NMpJWZG3hSuFU,0

|

|

33

|

+

tests/test_integration.py,sha256=8Iiq9NAwAxMoZcnfR19oOcBEGTyIOmt6nSafG6LWpj0,11959

|

|

34

|

+

tests/test_llm_sql_generation.py,sha256=bj6iwTqXfNEvlrSXnbPxbrgEM2nscbrmYHbT-rNBJZ4,11834

|

|

35

|

+

tests/test_spider_eval.py,sha256=aSN9Mh01E_R3uqFgP9gbBwys1K-iBv81Cw76eoiUK98,15442

|

|

36

|

+

schema_search-0.1.10.dist-info/METADATA,sha256=4Px4pxsTDssJqOy6CQC4TtLy3L3Z5snWJiqVMKksynU,10436

|

|

37

|

+

schema_search-0.1.10.dist-info/WHEEL,sha256=_zCd3N1l69ArxyTb8rzEoP9TpbYXkqRFSNOD5OuxnTs,91

|

|

38

|

+

schema_search-0.1.10.dist-info/entry_points.txt,sha256=9FAtZWOuIlmRNBPX_v7bn8x_aUcfojAKWU6ruSo48GM,64

|

|

39

|

+

schema_search-0.1.10.dist-info/top_level.txt,sha256=NZTdQFHoJMezNIhtZICGPOuXlCXQkQduQV925Oqf4sk,20

|

|

40

|

+

schema_search-0.1.10.dist-info/RECORD,,

|