liger-kernel 0.1.0__py3-none-any.whl → 0.1.1__py3-none-any.whl

This diff represents the content of publicly available package versions that have been released to one of the supported registries. The information contained in this diff is provided for informational purposes only and reflects changes between package versions as they appear in their respective public registries.

- liger_kernel-0.1.1.dist-info/METADATA +245 -0

- {liger_kernel-0.1.0.dist-info → liger_kernel-0.1.1.dist-info}/RECORD +6 -6

- liger_kernel-0.1.0.dist-info/METADATA +0 -16

- {liger_kernel-0.1.0.dist-info → liger_kernel-0.1.1.dist-info}/LICENSE +0 -0

- {liger_kernel-0.1.0.dist-info → liger_kernel-0.1.1.dist-info}/NOTICE +0 -0

- {liger_kernel-0.1.0.dist-info → liger_kernel-0.1.1.dist-info}/WHEEL +0 -0

- {liger_kernel-0.1.0.dist-info → liger_kernel-0.1.1.dist-info}/top_level.txt +0 -0

|

@@ -0,0 +1,245 @@

|

|

|

1

|

+

Metadata-Version: 2.1

|

|

2

|

+

Name: liger-kernel

|

|

3

|

+

Version: 0.1.1

|

|

4

|

+

Summary: Efficient Triton kernels for LLM Training

|

|

5

|

+

Home-page: https://github.com/linkedin/Liger-Kernel

|

|

6

|

+

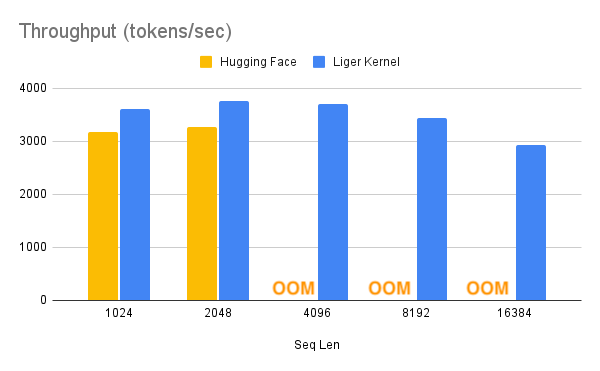

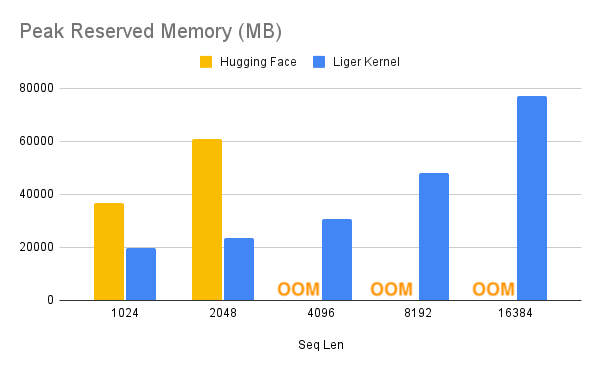

License: BSD-2-Clause

|

|

7

|

+

Keywords: triton,kernels,LLM training,deep learning,Hugging Face,PyTorch,GPU optimization

|

|

8

|

+

Classifier: Development Status :: 4 - Beta

|

|

9

|

+

Classifier: Intended Audience :: Developers

|

|

10

|

+

Classifier: Intended Audience :: Science/Research

|

|

11

|

+

Classifier: Intended Audience :: Education

|

|

12

|

+

Classifier: License :: OSI Approved :: BSD License

|

|

13

|

+

Classifier: Programming Language :: Python :: 3

|

|

14

|

+

Classifier: Programming Language :: Python :: 3.8

|

|

15

|

+

Classifier: Programming Language :: Python :: 3.9

|

|

16

|

+

Classifier: Programming Language :: Python :: 3.10

|

|

17

|

+

Classifier: Topic :: Software Development :: Libraries

|

|

18

|

+

Classifier: Topic :: Scientific/Engineering :: Artificial Intelligence

|

|

19

|

+

Description-Content-Type: text/markdown

|

|

20

|

+

License-File: LICENSE

|

|

21

|

+

License-File: NOTICE

|

|

22

|

+

Requires-Dist: torch>=2.1.2

|

|

23

|

+

Requires-Dist: triton>=2.3.0

|

|

24

|

+

Requires-Dist: transformers>=4.40.1

|

|

25

|

+

Provides-Extra: dev

|

|

26

|

+

Requires-Dist: matplotlib>=3.7.2; extra == "dev"

|

|

27

|

+

Requires-Dist: flake8>=4.0.1.1; extra == "dev"

|

|

28

|

+

Requires-Dist: black>=24.4.2; extra == "dev"

|

|

29

|

+

Requires-Dist: isort>=5.13.2; extra == "dev"

|

|

30

|

+

Requires-Dist: pre-commit>=3.7.1; extra == "dev"

|

|

31

|

+

Requires-Dist: torch-tb-profiler>=0.4.1; extra == "dev"

|

|

32

|

+

|

|

33

|

+

# Liger Kernel: Efficient Triton Kernels for LLM Training

|

|

34

|

+

|

|

35

|

+

[Downloads](https://pepy.tech/project/liger-kernel) | [PyPI version](https://badge.fury.io/py/liger-kernel) | [PyPI nightly version](https://badge.fury.io/py/liger-kernel-nightly)

|

|

36

|

+

|

|

37

|

+

|

|

38

|

+

[Installation](#installation) | [Getting Started](#getting-started) | [Examples](#examples) | [APIs](#apis) | [Structure](#structure) | [Contributing](#contributing)

|

|

39

|

+

|

|

40

|

+

**Liger (LinkedIn GPU Efficient Runtime) Kernel** is a collection of Triton kernels designed specifically for LLM training. It can increase multi-GPU **training throughput by 20%** and reduce **memory usage by 60%**. We have implemented **Hugging Face-compatible** `RMSNorm`, `RoPE`, `SwiGLU`, `CrossEntropy`, and `FusedLinearCrossEntropy`, with more to come. The kernels work out of the box with [Flash Attention](https://github.com/Dao-AILab/flash-attention), [PyTorch FSDP](https://pytorch.org/tutorials/intermediate/FSDP_tutorial.html), and [Microsoft DeepSpeed](https://github.com/microsoft/DeepSpeed). We welcome contributions from the community to gather the best kernels for LLM training.

|

|

41

|

+

|

|

42

|

+

## Supercharge Your Model with Liger Kernel

|

|

43

|

+

|

|

44

|

+

|

|

45

|

+

|

|

46

|

+

|

|

47

|

+

With one line of code, Liger Kernel can increase throughput by more than 20% and reduce memory usage by 60%, thereby enabling longer context lengths, larger batch sizes, and massive vocabularies.

|

|

48

|

+

|

|

49

|

+

|

|

50

|

+

| Speed Up | Memory Reduction |

|

|

51

|

+

|--------------------------|-------------------------|

|

|

52

|

+

| (benchmark plot not included) | (benchmark plot not included) |

|

|

53

|

+

|

|

54

|

+

> **Note:**

|

|

55

|

+

> - Benchmark conditions: LLaMA 3-8B, Batch Size = 8, Data Type = `bf16`, Optimizer = AdamW, Gradient Checkpointing = True, Distributed Strategy = FSDP1 on 8 A100s.

|

|

56

|

+

> - Hugging Face models start to OOM at a 4K context length, whereas Hugging Face + Liger Kernel scales up to 16K.

|

|

57

|

+

|

|

58

|

+

## Examples

|

|

59

|

+

|

|

60

|

+

### Basic

|

|

61

|

+

|

|

62

|

+

| **Example** | **Description** | **Lightning Studio** |

|

|

63

|

+

|------------------------------------------------|---------------------------------------------------------------------------------------------------|----------------------|

|

|

64

|

+

| [**Hugging Face Trainer**](https://github.com/linkedin/Liger-Kernel/tree/main/examples/huggingface) | Train LLaMA 3-8B ~20% faster with over 40% memory reduction on Alpaca dataset using 4 A100s with FSDP | TBA |

|

|

65

|

+

| [**Lightning Trainer**](https://github.com/linkedin/Liger-Kernel/tree/main/examples/lightning) | Increase throughput by 15% and reduce memory usage by 40% with LLaMA 3-8B on the MMLU dataset using 8 A100s with DeepSpeed ZeRO3 | TBA |

|

|

66

|

+

|

|

67

|

+

### Advanced

|

|

68

|

+

|

|

69

|

+

| **Example** | **Description** | **Lightning Studio** |

|

|

70

|

+

|------------------------------------------------|---------------------------------------------------------------------------------------------------|----------------------|

|

|

71

|

+

| [**Medusa Multi-head LLM (Retraining Phase)**](https://github.com/linkedin/Liger-Kernel/tree/main/examples/medusa) | Reduce memory usage by 80% with 5 LM heads and improve throughput by 40% using 8 A100s with FSDP | TBA |

|

|

72

|

+

|

|

73

|

+

## Key Features

|

|

74

|

+

|

|

75

|

+

- **Ease of use:** Simply patch your Hugging Face model with one line of code, or compose your own model using our Liger Kernel modules.

|

|

76

|

+

- **Time and memory efficient:** In the same spirit as Flash-Attn, but for layers like **RMSNorm**, **RoPE**, **SwiGLU**, and **CrossEntropy**! Increases multi-GPU training throughput by 20% and reduces memory usage by 60% with **kernel fusion**, **in-place replacement**, and **chunking** techniques.

|

|

77

|

+

- **Exact:** Computation is exact—no approximations! Both forward and backward passes are implemented with rigorous unit tests and undergo convergence testing against training runs without Liger Kernel to ensure accuracy.

|

|

78

|

+

- **Lightweight:** Liger Kernel has minimal dependencies: the kernels themselves need only Torch and Triton, and the package metadata additionally lists `transformers`, which the Hugging Face patching APIs rely on. Say goodbye to dependency headaches!

|

|

79

|

+

- **Multi-GPU supported:** Compatible with multi-GPU setups (PyTorch FSDP, DeepSpeed, DDP, etc.).

|

|

80

|

+

|

|

81

|

+

## Target Audiences

|

|

82

|

+

|

|

83

|

+

- **Researchers**: Looking to compose models using efficient and reliable kernels for frontier experiments.

|

|

84

|

+

- **ML Practitioners**: Focused on maximizing GPU training efficiency with optimal, high-performance kernels.

|

|

85

|

+

- **Curious Novices**: Eager to learn how to write reliable Triton kernels to enhance training efficiency.

|

|

86

|

+

|

|

87

|

+

|

|

88

|

+

## Installation

|

|

89

|

+

|

|

90

|

+

### Dependencies

|

|

91

|

+

|

|

92

|

+

- `torch >= 2.1.2`

|

|

93

|

+

- `triton >= 2.3.0`

|

|

94

|

+

- `transformers >= 4.40.1`
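Before installing, it can help to check whether an existing environment already meets these minimums. The following is a minimal sketch using only the standard library; `release_tuple` and `check_minimums` are hypothetical helper names, and the version comparison is deliberately simplified (it looks only at the leading numeric release segment and is not a full PEP 440 implementation).

```python
from importlib import metadata

# Minimum versions copied from the dependency list above.
MINIMUMS = {"torch": "2.1.2", "triton": "2.3.0", "transformers": "4.40.1"}


def release_tuple(version: str) -> tuple:
    """Keep the leading numeric parts, e.g. '2.5.0.dev20240731+cu118' -> (2, 5, 0)."""
    parts = []
    for piece in version.split("+")[0].split("."):
        if not piece.isdigit():
            break
        parts.append(int(piece))
    return tuple(parts)


def check_minimums(minimums=MINIMUMS):
    """Return {package: (installed_version_or_None, meets_minimum)}."""
    report = {}
    for name, minimum in minimums.items():
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            report[name] = (None, False)
            continue
        report[name] = (installed, release_tuple(installed) >= release_tuple(minimum))
    return report


if __name__ == "__main__":
    for name, (installed, ok) in check_minimums().items():
        print(f"{name}: installed={installed} meets_minimum={ok}")
```

For strict comparisons that handle pre-release and development versions, the `packaging` library's version parser is the more robust choice; it is left out here to keep the sketch dependency-free.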

|

|

95

|

+

|

|

96

|

+

To install the stable version:

|

|

97

|

+

|

|

98

|

+

```bash
$ pip install liger-kernel
```

|

|

101

|

+

|

|

102

|

+

To install the nightly version:

|

|

103

|

+

|

|

104

|

+

```bash
$ pip install liger-kernel-nightly
```

|

|

107

|

+

|

|

108

|

+

## Getting Started

|

|

109

|

+

|

|

110

|

+

### 1. Patch Existing Hugging Face Models

|

|

111

|

+

|

|

112

|

+

Using the [patching APIs](#patching), you can swap the layers of a Hugging Face model for optimized Liger kernels.

|

|

113

|

+

|

|

114

|

+

```python
import transformers
from liger_kernel.transformers import apply_liger_kernel_to_llama

# Monkey-patch the Hugging Face LLaMA implementation with the optimized Liger kernels.
# Call this before the model is instantiated, so that the patched classes are the ones that get built.
apply_liger_kernel_to_llama()

model = transformers.AutoModelForCausalLM.from_pretrained("<some llama model>")
```
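Class-level monkey-patching generally affects only objects constructed after the patch is applied. The toy sketch below illustrates that ordering effect in plain Python. Every name in it (`toy_lib`, `SlowNorm`, `FastNorm`, `build_model`, `apply_patch`) is hypothetical, and it contains no Liger Kernel or `transformers` code.

```python
import types

# Hypothetical stand-in for a model library's module namespace.
toy_lib = types.SimpleNamespace()


class SlowNorm:
    name = "slow"


class FastNorm:
    name = "fast"


toy_lib.Norm = SlowNorm


def build_model():
    """Look up the layer class at construction time, as a model's __init__ would."""
    return {"norm": toy_lib.Norm()}


def apply_patch():
    """Rebind the class on the module; already-built instances are not touched."""
    toy_lib.Norm = FastNorm


built_before = build_model()  # constructed before the patch
apply_patch()
built_after = build_model()   # constructed after the patch

print(built_before["norm"].name)
print(built_after["norm"].name)
```

Only the model built after `apply_patch()` picks up the replacement class, which is why a class-rebinding patch is applied before the model is loaded.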

|

|

123

|

+

|

|

124

|

+

### 2. Compose Your Own Model

|

|

125

|

+

|

|

126

|

+

You can take individual [kernels](#kernels) to compose your models.

|

|

127

|

+

|

|

128

|

+

```python
from liger_kernel.transformers import LigerFusedLinearCrossEntropyLoss
import torch.nn as nn
import torch

model = nn.Linear(128, 256).cuda()

# fuses linear + cross entropy layers together and performs chunk-by-chunk computation to reduce memory
loss_fn = LigerFusedLinearCrossEntropyLoss()

input = torch.randn(4, 128, requires_grad=True, device="cuda")
target = torch.randint(256, (4, ), device="cuda")

loss = loss_fn(model.weight, input, target)
loss.backward()
```

|

|

144

|

+

|

|

145

|

+

|

|

146

|

+

## Structure

|

|

147

|

+

|

|

148

|

+

### Source Code

|

|

149

|

+

|

|

150

|

+

- `ops/`: Core Triton operations.

|

|

151

|

+

- `transformers/`: PyTorch `nn.Module` implementations built on Triton operations, compliant with the `transformers` API.

|

|

152

|

+

|

|

153

|

+

### Tests

|

|

154

|

+

|

|

155

|

+

- `transformers/`: Correctness tests for the Triton-based layers.

|

|

156

|

+

- `convergence/`: Patches Hugging Face models with all kernels, runs multiple iterations, and compares weights, logits, and loss layer-by-layer.

|

|

157

|

+

|

|

158

|

+

### Benchmark

|

|

159

|

+

|

|

160

|

+

- `benchmark/`: Execution time and memory benchmarks compared to Hugging Face layers.

|

|

161

|

+

|

|

162

|

+

## APIs

|

|

163

|

+

|

|

164

|

+

### Patching

|

|

165

|

+

|

|

166

|

+

| **Model** | **API** | **Supported Operations** |

|

|

167

|

+

|-------------|--------------------------------------------------------------|-------------------------------------------------------------------------|

|

|

168

|

+

| LLaMA (2 & 3) | `liger_kernel.transformers.apply_liger_kernel_to_llama` | RoPE, RMSNorm, SwiGLU, CrossEntropyLoss, FusedLinearCrossEntropy |

|

|

169

|

+

| Mistral | `liger_kernel.transformers.apply_liger_kernel_to_mistral` | RoPE, RMSNorm, SwiGLU, CrossEntropyLoss |

|

|

170

|

+

| Mixtral | `liger_kernel.transformers.apply_liger_kernel_to_mixtral` | RoPE, RMSNorm, SwiGLU, CrossEntropyLoss |

|

|

171

|

+

| Gemma2 | `liger_kernel.transformers.apply_liger_kernel_to_gemma` | RoPE, RMSNorm, GeGLU, CrossEntropyLoss |

|

|

172

|

+

|

|

173

|

+

### Kernels

|

|

174

|

+

|

|

175

|

+

| **Kernel** | **API** |

|

|

176

|

+

|---------------------------------|-------------------------------------------------------------|

|

|

177

|

+

| RMSNorm | `liger_kernel.transformers.LigerRMSNorm` |

|

|

178

|

+

| RoPE | `liger_kernel.transformers.liger_rotary_pos_emb` |

|

|

179

|

+

| SwiGLU | `liger_kernel.transformers.LigerSwiGLUMLP` |

|

|

180

|

+

| GeGLU | `liger_kernel.transformers.LigerGEGLUMLP` |

|

|

181

|

+

| CrossEntropy | `liger_kernel.transformers.LigerCrossEntropyLoss` |

|

|

182

|

+

| FusedLinearCrossEntropy | `liger_kernel.transformers.LigerFusedLinearCrossEntropyLoss`|

|

|

183

|

+

|

|

184

|

+

- **RMSNorm**: [RMSNorm](https://arxiv.org/pdf/1910.07467), which normalizes activations using their root mean square, is implemented by fusing the normalization and scaling steps into a single Triton kernel, and achieves ~3X speedup with ~3X peak memory reduction.

|

|

185

|

+

- **RoPE**: [Rotary Positional Embedding](https://arxiv.org/pdf/2104.09864) is implemented by fusing the rotary embedding of the query and key into a single kernel with in-place replacement, and achieves ~3X speedup with ~3X peak memory reduction.

|

|

186

|

+

- **SwiGLU**: [Swish Gated Linear Units](https://arxiv.org/pdf/2002.05202), given by
$$\text{SwiGLU}(x)=\text{Swish}_{\beta}(xW+b)\otimes(xV+c),$$
is implemented by fusing the elementwise multiplication (denoted by $\otimes$) into a single kernel with in-place replacement, and achieves speed parity with ~1.5X peak memory reduction.

|

|

189

|

+

- **GeGLU**: [GELU Gated Linear Units](https://arxiv.org/pdf/2002.05202), given by
$$\text{GeGLU}(x)=\text{GELU}(xW+b)\otimes(xV+c),$$
is implemented by fusing the elementwise multiplication into a single kernel with in-place replacement, and achieves speed parity with ~1.5X peak memory reduction. Note that the [tanh approximation form of GELU](https://pytorch.org/docs/stable/generated/torch.nn.GELU.html) is used.

|

|

192

|

+

- **CrossEntropy**: [Cross entropy loss](https://pytorch.org/docs/stable/generated/torch.nn.CrossEntropyLoss.html) is implemented by computing both the loss and gradient in the forward pass with inplace replacement of input to reduce the peak memory by avoiding simultaneous materialization of both input logits and gradient. It achieves >2X speedup and >4X memory reduction for common vocab sizes (e.g., 32K, 128K, etc.).

|

|

193

|

+

<!-- TODO: verify vocab sizes are accurate -->

|

|

194

|

+

- **FusedLinearCrossEntropy**: Peak memory usage of cross entropy loss is further improved by fusing the model head with the CE loss and chunking the input for block-wise loss and gradient calculation, a technique inspired by [Efficient Cross Entropy](https://github.com/mgmalek/efficient_cross_entropy). It achieves >4X memory reduction for 128k vocab size. **This is highly effective for large batch size, large sequence length, and large vocabulary sizes.** Please refer to the [Medusa example](https://github.com/linkedin/Liger-Kernel/tree/main/examples/medusa) for individual kernel usage.
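The two cross-entropy ideas described above (producing the gradient in the same pass as the loss, and processing the linear head chunk by chunk) can be illustrated in plain Python. This is a reference sketch of the math only, not Liger's Triton implementation: the function names are hypothetical, it uses nested lists instead of tensors, and it returns only the gradient with respect to the inputs, not the weights.

```python
import math


def ce_loss_and_grad(logits, target):
    """Return (loss, d loss / d logits) for one row; the gradient is softmax(logits) - one_hot(target)."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    probs = [e / total for e in exps]
    grad = list(probs)
    grad[target] -= 1.0
    return -math.log(probs[target]), grad


def chunked_linear_ce(weight, inputs, targets, chunk_size):
    """Mean cross entropy of (inputs @ weight^T) against targets, computed chunk by chunk.

    weight: vocab x hidden, inputs: n x hidden, targets: n class indices.
    Returns (mean_loss, grad_inputs), where grad_inputs is n x hidden.
    """
    n, hidden = len(inputs), len(weight[0])
    total_loss, grad_inputs = 0.0, []
    for start in range(0, n, chunk_size):
        rows = inputs[start:start + chunk_size]
        # Only this chunk's logits are alive at once, never the full n x vocab matrix.
        chunk_logits = [[sum(w * x for w, x in zip(w_row, row)) for w_row in weight] for row in rows]
        for logits, target in zip(chunk_logits, targets[start:start + chunk_size]):
            loss, grad_logits = ce_loss_and_grad(logits, target)
            total_loss += loss
            # Chain rule through the linear layer: dL/dx = W^T (dL/dlogits), scaled for the mean.
            grad_inputs.append(
                [sum(weight[v][h] * grad_logits[v] for v in range(len(weight))) / n for h in range(hidden)]
            )
    return total_loss / n, grad_inputs


weight = [[0.5, -1.0], [1.5, 0.25], [-0.75, 2.0]]  # vocab = 3, hidden = 2
inputs = [[1.0, 2.0], [0.0, -1.0], [3.0, 0.5], [-2.0, 1.0]]
targets = [2, 0, 1, 2]

full_loss, full_grad = chunked_linear_ce(weight, inputs, targets, chunk_size=len(inputs))
chunk_loss, chunk_grad = chunked_linear_ce(weight, inputs, targets, chunk_size=2)
print(abs(full_loss - chunk_loss) < 1e-12)
```

The chunk size changes only how many rows of logits exist at once; the loss and gradients match the unchunked computation, which is the sense in which chunking trades no accuracy for memory.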

|

|

195

|

+

|

|

196

|

+

|

|

197

|

+

<!-- TODO: be more specific about batch size -->

|

|

198

|

+

> **Note:**

|

|

199

|

+

> Reported speedups and memory reductions are with respect to the LLaMA 3-8B Hugging Face layer implementations. All models use 4K hidden size and 4K sequence length and are evaluated based on memory usage and wall time for the forward+backward pass on a single NVIDIA A100 80G GPU using small batch sizes. Liger kernels exhibit more efficient scaling to larger batch sizes, detailed further in the [Benchmark](./benchmark) folder.

|

|

200

|

+

|

|

201

|

+

## Note on ML Compiler

|

|

202

|

+

|

|

203

|

+

### 1. Torch Compile

|

|

204

|

+

|

|

205

|

+

Since Liger Kernel is 100% Triton-based, it works seamlessly with [`torch.compile`](https://pytorch.org/tutorials/intermediate/torch_compile_tutorial.html). In the benchmark below, adding Liger Kernel on top of Torch Compile cuts reserved memory by more than half (66.4 GB to 31.0 GB) while throughput stays within about 2% (3780 vs. 3702 tokens/sec).

|

|

206

|

+

|

|

207

|

+

| Configuration | Throughput (tokens/sec) | Memory Reserved (GB) |

|

|

208

|

+

|--------------------------------|----------------------------|-------------------------|

|

|

209

|

+

| Torch Compile | 3780 | 66.4 |

|

|

210

|

+

| Torch Compile + Liger Kernel | 3702 | 31.0 |

|

|

211

|

+

|

|

212

|

+

> **Note:**

|

|

213

|

+

> 1. Benchmark conditions: LLaMA 3-8B, Batch Size = 8, Seq Len = 4096, Data Type = `bf16`, Optimizer = AdamW, Gradient Checkpointing = True, Distributed Strategy = FSDP1 on 8 A100s.

|

|

214

|

+

> 2. Tested on torch `2.5.0.dev20240731+cu118`
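The relative changes implied by the table can be worked out directly from its four numbers. The short calculation below uses only the values reported above; the variable names are illustrative.

```python
# Values copied from the Torch Compile benchmark table above.
baseline = {"throughput": 3780, "memory_gb": 66.4}
with_liger = {"throughput": 3702, "memory_gb": 31.0}

memory_reduction = 1 - with_liger["memory_gb"] / baseline["memory_gb"]
throughput_change = with_liger["throughput"] / baseline["throughput"] - 1

print(f"memory reduction: {memory_reduction:.1%}")
print(f"throughput change: {throughput_change:.1%}")
```

This works out to roughly a 53% drop in reserved memory and a throughput difference of about 2% in this configuration.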

|

|

215

|

+

|

|

216

|

+

### 2. Lightning Thunder

|

|

217

|

+

|

|

218

|

+

*WIP*

|

|

219

|

+

|

|

220

|

+

## Contributing

|

|

221

|

+

|

|

222

|

+

[CONTRIBUTING GUIDE](https://github.com/linkedin/Liger-Kernel/blob/main/CONTRIBUTING.md)

|

|

223

|

+

|

|

224

|

+

## Acknowledgement

|

|

225

|

+

|

|

226

|

+

- [flash-attn](https://github.com/Dao-AILab/flash-attention) and [Unsloth](https://github.com/unslothai/unsloth) for inspiration in Triton kernels for training

|

|

227

|

+

- [tiny shakespeare dataset](https://raw.githubusercontent.com/karpathy/char-rnn/master/data/tinyshakespeare/input.txt) by Andrej Karpathy for convergence testing

|

|

228

|

+

- [Efficient Cross Entropy](https://github.com/mgmalek/efficient_cross_entropy) for lm_head + cross entropy inspiration

|

|

229

|

+

|

|

230

|

+

|

|

231

|

+

## License

|

|

232

|

+

|

|

233

|

+

[BSD 2-CLAUSE](https://github.com/linkedin/Liger-Kernel/blob/main/LICENSE)

|

|

234

|

+

|

|

235

|

+

## Cite this work

|

|

236

|

+

|

|

237

|

+

Biblatex entry:

|

|

238

|

+

```bib
@software{liger2024,
  title  = {Liger-Kernel: Efficient Triton Kernels for LLM Training},
  author = {Hsu, Pin-Lun and Dai, Yun and Kothapalli, Vignesh and Song, Qingquan and Tang, Shao and Zhu, Siyu},
  url    = {https://github.com/linkedin/Liger-Kernel},
  year   = {2024}
}
```

|

|

@@ -19,9 +19,9 @@ liger_kernel/transformers/model/__init__.py,sha256=47DEQpj8HBSa-_TImW-5JCeuQeRkm

|

|

|

19

19

|

liger_kernel/transformers/model/llama.py,sha256=4mfVTMrY7T-xiJeQJe02hBVnAwNCKlvLGp49gj6TWiU,5298

|

|

20

20

|

liger_kernel/triton/__init__.py,sha256=yfRe0zMb47QnqjecZWG7LnanfCTzeku7SgWRAwNVmzU,101

|

|

21

21

|

liger_kernel/triton/monkey_patch.py,sha256=5BcGKTtdqeYchypBIBopGIWPx1-cFALz7sOKoEsqXJ0,1584

|

|

22

|

-

liger_kernel-0.1.

|

|

23

|

-

liger_kernel-0.1.

|

|

24

|

-

liger_kernel-0.1.

|

|

25

|

-

liger_kernel-0.1.

|

|

26

|

-

liger_kernel-0.1.

|

|

27

|

-

liger_kernel-0.1.

|

|

22

|

+

liger_kernel-0.1.1.dist-info/LICENSE,sha256=OhzLDHJ0to4a8sodVLELZiCFylZ1NAAYLs-HrjPy0ag,1312

|

|

23

|

+

liger_kernel-0.1.1.dist-info/METADATA,sha256=jkp8JFT7zDNqf4-i0HQruXWhd-RGjJ8pTbCsM_K2ftI,14533

|

|

24

|

+

liger_kernel-0.1.1.dist-info/NOTICE,sha256=BXkXY9aWvEy_7MAB57zDu1z8uMYT1i1l9B6EpHuBa8s,173

|

|

25

|

+

liger_kernel-0.1.1.dist-info/WHEEL,sha256=eOLhNAGa2EW3wWl_TU484h7q1UNgy0JXjjoqKoxAAQc,92

|

|

26

|

+

liger_kernel-0.1.1.dist-info/top_level.txt,sha256=2eghu4hA3LnkM7ElW92tQ8zegWKgSbeo-k-aGe1YnvY,13

|

|

27

|

+

liger_kernel-0.1.1.dist-info/RECORD,,
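Each RECORD row above has the form `path,sha256=<digest>,<size>`, where the digest is the URL-safe base64 encoding of the file's SHA-256 hash with the trailing `=` padding removed, as in the wheel format. The sketch below shows how such a row can be recomputed to verify a file; `record_entry` is a hypothetical helper name, not part of any packaging tool.

```python
import base64
import hashlib


def record_entry(path: str, data: bytes) -> str:
    """Format one RECORD row: path, sha256=<urlsafe base64 digest without '=' padding>, size in bytes."""
    digest = hashlib.sha256(data).digest()
    encoded = base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")
    return f"{path},sha256={encoded},{len(data)}"


print(record_entry("pkg/__init__.py", b""))
```

For empty input the digest begins `47DEQpj8HBSa-_TImW-5JCeuQeRkm`, the same prefix that appears for `liger_kernel/transformers/model/__init__.py` in the hunk header above. The RECORD file's own row carries no hash or size, which is why it ends in two commas.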

|

|

@@ -1,16 +0,0 @@

|

|

|

1

|

-

Metadata-Version: 2.1

|

|

2

|

-

Name: liger-kernel

|

|

3

|

-

Version: 0.1.0

|

|

4

|

-

License-File: LICENSE

|

|

5

|

-

License-File: NOTICE

|

|

6

|

-

Requires-Dist: torch>=2.1.2

|

|

7

|

-

Requires-Dist: triton>=2.3.0

|

|

8

|

-

Requires-Dist: transformers>=4.40.1

|

|

9

|

-

Provides-Extra: dev

|

|

10

|

-

Requires-Dist: matplotlib>=3.7.2; extra == "dev"

|

|

11

|

-

Requires-Dist: flake8>=4.0.1.1; extra == "dev"

|

|

12

|

-

Requires-Dist: black>=24.4.2; extra == "dev"

|

|

13

|

-

Requires-Dist: isort>=5.13.2; extra == "dev"

|

|

14

|

-

Requires-Dist: pre-commit>=3.7.1; extra == "dev"

|

|

15

|

-

Requires-Dist: torch-tb-profiler>=0.4.1; extra == "dev"

|

|

16

|

-

|

|

File without changes

|

|

File without changes

|

|

File without changes

|

|

File without changes

|