cobweb-launcher 1.1.23__py3-none-any.whl → 1.2.1__py3-none-any.whl

This diff represents the content of publicly available package versions that have been released to one of the supported registries. The information contained in this diff is provided for informational purposes only and reflects changes between package versions as they appear in their respective public registries.

Potentially problematic release.

This version of cobweb-launcher might be problematic.

- cobweb/__init__.py +1 -1

- cobweb/base/__init__.py +1 -1

- cobweb/base/item.py +7 -0

- cobweb/constant.py +23 -1

- cobweb/crawlers/__init__.py +1 -2

- cobweb/crawlers/crawler.py +160 -0

- cobweb/launchers/__init__.py +1 -1

- cobweb/launchers/launcher.py +39 -53

- cobweb/launchers/launcher_air.py +88 -0

- cobweb/launchers/launcher_pro.py +83 -36

- cobweb/pipelines/__init__.py +3 -2

- cobweb/pipelines/pipeline.py +60 -0

- cobweb/pipelines/pipeline_console.py +22 -0

- cobweb/pipelines/pipeline_loghub.py +34 -0

- cobweb/setting.py +6 -6

- cobweb/utils/tools.py +2 -2

- cobweb_launcher-1.2.1.dist-info/METADATA +204 -0

- cobweb_launcher-1.2.1.dist-info/RECORD +37 -0

- cobweb_launcher-1.1.23.dist-info/METADATA +0 -48

- cobweb_launcher-1.1.23.dist-info/RECORD +0 -32

- {cobweb_launcher-1.1.23.dist-info → cobweb_launcher-1.2.1.dist-info}/LICENSE +0 -0

- {cobweb_launcher-1.1.23.dist-info → cobweb_launcher-1.2.1.dist-info}/WHEEL +0 -0

- {cobweb_launcher-1.1.23.dist-info → cobweb_launcher-1.2.1.dist-info}/top_level.txt +0 -0

cobweb/__init__.py

CHANGED

@@ -1,2 +1,2 @@
-from .launchers import
+from .launchers import LauncherAir, LauncherPro
 from .constant import CrawlerModel

cobweb/base/__init__.py

CHANGED

cobweb/base/item.py

CHANGED

cobweb/constant.py

CHANGED

@@ -30,6 +30,24 @@ class DealModel:

 class LogTemplate:

+    console_item = """
+----------------------- start - console pipeline -----------------
+Seed details \n{seed_detail}
+Parse details \n{parse_detail}
+----------------------- end - console pipeline ------------------
+    """
+
+    launcher_air_polling = """
+----------------------- start - polling log: {task} -----------------
+In-memory queue
+Seed count: {doing_len}
+Pending: {todo_len}
+Consumed: {done_len}
+Storage queue
+Pending upload: {upload_len}
+----------------------- end - polling log: {task} ------------------
+    """
+
     launcher_pro_polling = """
 ----------------------- start - polling log: {task} -----------------
 In-memory queue
@@ -69,4 +87,8 @@ class LogTemplate:
 response
 status : {status} \n{response}
 ------------------------------------------------------------------
-    """
+    """
+
+    @staticmethod
+    def log_info(item: dict) -> str:
+        return "\n".join([" " * 12 + f"{str(k).ljust(14)}: {str(v)}" for k, v in item.items()])
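The new `log_info` helper is easiest to read with a concrete input. The sketch below copies its expression into a standalone function (the sample dictionary is made up for illustration) so the 12-space indent and the 14-character key column are visible:

```python
def log_info(item: dict) -> str:
    """Render each key/value pair on its own line, indented by 12 spaces."""
    return "\n".join(
        " " * 12 + f"{str(k).ljust(14)}: {str(v)}" for k, v in item.items()
    )


# Illustrative input only; real callers pass seed.to_dict or similar.
rendered = log_info({"url": "https://example.com", "retry": 0})
print(rendered)
```

Keys longer than 14 characters are not truncated by `ljust`, so the colon column only lines up for short keys.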

cobweb/crawlers/__init__.py

CHANGED

@@ -1,2 +1 @@
-from .
-from .file_crawler import FileCrawlerAir
+from .crawler import Crawler

cobweb/crawlers/crawler.py

ADDED

@@ -0,0 +1,160 @@
+import json
+import threading
+import time
+import traceback
+from inspect import isgenerator
+from typing import Union, Callable, Mapping
+
+from cobweb.constant import DealModel, LogTemplate
+from cobweb.base import (
+    Queue,
+    Seed,
+    BaseItem,
+    Request,
+    Response,
+    ConsoleItem,
+    logger
+)
+
+
+class Crawler(threading.Thread):
+
+    def __init__(
+            self,
+            stop: threading.Event,
+            pause: threading.Event,
+            launcher_queue: Union[Mapping[str, Queue]],
+            custom_func: Union[Mapping[str, Callable]],
+            thread_num: int,
+            max_retries: int
+    ):
+        super().__init__()
+
+        self._stop = stop
+        self._pause = pause
+        self._new = launcher_queue["new"]
+        self._todo = launcher_queue["todo"]
+        self._done = launcher_queue["done"]
+        self._upload = launcher_queue["upload"]
+
+        for func_name, _callable in custom_func.items():
+            if isinstance(_callable, Callable):
+                self.__setattr__(func_name, _callable)
+
+        self.thread_num = thread_num
+        self.max_retries = max_retries
+
+    @staticmethod
+    def request(seed: Seed) -> Union[Request, BaseItem]:
+        yield Request(seed.url, seed, timeout=5)
+
+    @staticmethod
+    def download(item: Request) -> Union[Seed, BaseItem, Response, str]:
+        response = item.download()
+        yield Response(item.seed, response, **item.to_dict)
+
+    @staticmethod
+    def parse(item: Response) -> BaseItem:
+        upload_item = item.to_dict
+        upload_item["text"] = item.response.text
+        yield ConsoleItem(item.seed, data=json.dumps(upload_item, ensure_ascii=False))
+
+    # def get_seed(self) -> Seed:
+    #     return self._todo.pop()
+
+    def distribute(self, item, seed):
+        if isinstance(item, BaseItem):
+            self._upload.push(item)
+        elif isinstance(item, Seed):
+            self._new.push(item)
+        elif isinstance(item, str) and item == DealModel.poll:
+            self._todo.push(seed)
+        elif isinstance(item, str) and item == DealModel.done:
+            self._done.push(seed)
+        elif isinstance(item, str) and item == DealModel.fail:
+            seed.params.seed_status = DealModel.fail
+            self._done.push(seed)
+        else:
+            raise TypeError("yield value type error!")
+
+    def spider(self):
+        while not self._stop.is_set():
+
+            seed = self._todo.pop()
+
+            if not seed:
+                time.sleep(1)
+                continue
+
+            elif seed.params.retry >= self.max_retries:
+                seed.params.seed_status = DealModel.fail
+                self._done.push(seed)
+                continue
+
+            seed_detail_log_info = LogTemplate.log_info(seed.to_dict)
+
+            try:
+                request_iterators = self.request(seed)
+
+                if not isgenerator(request_iterators):
+                    raise TypeError("request function isn't a generator!")
+
+                iterator_status = False
+
+                for request_item in request_iterators:
+
+                    iterator_status = True
+
+                    if isinstance(request_item, Request):
+                        iterator_status = False
+                        download_iterators = self.download(request_item)
+                        if not isgenerator(download_iterators):
+                            raise TypeError("download function isn't a generator")
+
+                        for download_item in download_iterators:
+                            iterator_status = True
+                            if isinstance(download_item, Response):
+                                iterator_status = False
+                                logger.info(LogTemplate.download_info.format(
+                                    detail=seed_detail_log_info,
+                                    retry=seed.params.retry,
+                                    priority=seed.params.priority,
+                                    seed_version=seed.params.seed_version,
+                                    identifier=seed.identifier or "",
+                                    status=download_item.response,
+                                    response=LogTemplate.log_info(download_item.to_dict)
+                                ))
+                                parse_iterators = self.parse(download_item)
+                                if not isgenerator(parse_iterators):
+                                    raise TypeError("parse function isn't a generator")
+                                for parse_item in parse_iterators:
+                                    iterator_status = True
+                                    if isinstance(parse_item, Response):
+                                        raise TypeError("upload_item can't be a Response instance")
+                                    self.distribute(parse_item, seed)
+                            else:
+                                self.distribute(download_item, seed)
+                    else:
+                        self.distribute(request_item, seed)
+
+                if not iterator_status:
+                    raise ValueError("request/download/parse function yield value error!")
+            except Exception as e:
+                logger.info(LogTemplate.download_exception.format(
+                    detail=seed_detail_log_info,
+                    retry=seed.params.retry,
+                    priority=seed.params.priority,
+                    seed_version=seed.params.seed_version,
+                    identifier=seed.identifier or "",
+                    exception=''.join(traceback.format_exception(type(e), e, e.__traceback__))
+                ))
+                seed.params.retry += 1
+                self._todo.push(seed)
+            finally:
+                time.sleep(0.1)
+        logger.info("spider thread close")
+
+    def run(self):
+        for index in range(self.thread_num):
+            threading.Thread(name=f"spider_{index}", target=self.spider).start()
+
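The core of the new `Crawler.spider` loop is a three-stage generator chain: `request` yields `Request` objects, `download` turns each into a `Response`, and `parse` yields items to store. Anything else a stage yields is handed to `distribute`. The sketch below illustrates only that contract. The classes `Seed`, `Request`, `Response`, and `Item` are simplified stand-ins rather than the `cobweb.base` types, `run_chain` is a hypothetical helper, and retries, logging, and the `iterator_status` bookkeeping are left out.

```python
from inspect import isgenerator


class Seed:
    def __init__(self, url):
        self.url = url


class Request:
    def __init__(self, url, seed):
        self.url, self.seed = url, seed


class Response:
    def __init__(self, seed, text):
        self.seed, self.text = seed, text


class Item:
    def __init__(self, seed, data):
        self.seed, self.data = seed, data


def request(seed):
    yield Request(seed.url, seed)


def download(req):
    # No network access here: the "page" is synthesised from the URL.
    yield Response(req.seed, f"<html>{req.url}</html>")


def parse(resp):
    yield Item(resp.seed, {"length": len(resp.text)})


def _as_generator(value, stage):
    # Mirrors the isgenerator() checks in the diff.
    if not isgenerator(value):
        raise TypeError(f"{stage} function isn't a generator")
    return value


def run_chain(seed, request_fn=request, download_fn=download, parse_fn=parse):
    """Chain the three stages; return every value not fed to a later stage."""
    out = []
    for req in _as_generator(request_fn(seed), "request"):
        if not isinstance(req, Request):
            out.append(req)
            continue
        for resp in _as_generator(download_fn(req), "download"):
            if not isinstance(resp, Response):
                out.append(resp)
                continue
            for parsed in _as_generator(parse_fn(resp), "parse"):
                if isinstance(parsed, Response):
                    raise TypeError("parse must not yield a Response")
                out.append(parsed)
    return out


items = run_chain(Seed("https://example.com"))
print(items[0].data)
```

Because each stage must be a generator function, a custom function that returns a list instead of using `yield` is rejected with `TypeError`, just as in the diff.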

cobweb/launchers/__init__.py

CHANGED

@@ -1,2 +1,2 @@
-from .
+from .launcher_air import LauncherAir
 from .launcher_pro import LauncherPro

cobweb/launchers/launcher.py

CHANGED

@@ -15,15 +15,16 @@ class Launcher(threading.Thread):
     __DOING__ = {}

     __CUSTOM_FUNC__ = {
-        "download": None,
-        "
-        "parse": None,
+        # "download": None,
+        # "request": None,
+        # "parse": None,
     }

     __LAUNCHER_QUEUE__ = {
         "new": Queue(),
         "todo": Queue(),
         "done": Queue(),
+        "upload": Queue()
     }

     __LAUNCHER_FUNC__ = [
@@ -76,9 +77,13 @@ class Launcher(threading.Thread):
         self._done_queue_max_size = setting.DONE_QUEUE_MAX_SIZE
         self._upload_queue_max_size = setting.UPLOAD_QUEUE_MAX_SIZE

+        self._spider_thread_num = setting.SPIDER_MAX_RETRIES
+        self._spider_max_retries = setting.SPIDER_THREAD_NUM
+
         self._done_model = setting.DONE_MODEL
+        self._task_model = setting.TASK_MODEL

-        self._upload_queue = Queue()
+        # self._upload_queue = Queue()

     @property
     def start_seeds(self):
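Note that the two added assignments in the hunk at new lines 80-81 look crossed: `_spider_thread_num` is read from `SPIDER_MAX_RETRIES` and `_spider_max_retries` from `SPIDER_THREAD_NUM`. Whether this is intentional is not stated in the diff. If it is not, the wiring the names suggest would look like the sketch below, where `LauncherSettings` is a hypothetical class and the setting values are invented for illustration (the real defaults in `cobweb/setting.py` are not shown here).

```python
from types import SimpleNamespace

# Hypothetical values, not the package defaults.
setting = SimpleNamespace(SPIDER_THREAD_NUM=10, SPIDER_MAX_RETRIES=5)


class LauncherSettings:
    """Holds only the two spider-related attributes discussed above."""

    def __init__(self, setting):
        # Each attribute reads the setting whose name matches it.
        self._spider_thread_num = setting.SPIDER_THREAD_NUM
        self._spider_max_retries = setting.SPIDER_MAX_RETRIES


cfg = LauncherSettings(setting)
print(cfg._spider_thread_num, cfg._spider_max_retries)
```

With the assignments as released, a configuration like the one above would start 5 spider threads and allow 10 retries per seed.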

@@ -120,8 +125,8 @@ class Launcher(threading.Thread):
         Custom parse function; xxxItem is a user-defined storage item type
         use case:
             from cobweb.base import Request, Response
-            @launcher.
-            def
+            @launcher.parse
+            def parse(item: Response) -> BaseItem:
                 ...
                 yield xxxItem(seed, **kwargs)
         """
@@ -133,6 +138,33 @@ class Launcher(threading.Thread):
         for seed in seeds:
             self.__DOING__.pop(seed, None)

+    def _execute(self):
+        for func_name in self.__LAUNCHER_FUNC__:
+            threading.Thread(name=func_name, target=getattr(self, func_name)).start()
+            time.sleep(2)
+
+    def run(self):
+        threading.Thread(target=self._execute_heartbeat).start()
+
+        self._Crawler(
+            stop=self._stop, pause=self._pause,
+            launcher_queue=self.__LAUNCHER_QUEUE__,
+            custom_func=self.__CUSTOM_FUNC__,
+            thread_num = self._spider_thread_num,
+            max_retries = self._spider_max_retries
+        ).start()
+
+        self._Pipeline(
+            stop=self._stop, pause=self._pause,
+            upload=self.__LAUNCHER_QUEUE__["upload"],
+            done=self.__LAUNCHER_QUEUE__["done"],
+            upload_size=self._upload_queue_max_size,
+            wait_seconds=self._upload_queue_wait_seconds
+        ).start()
+
+        self._execute()
+        self._polling()
+
     def _execute_heartbeat(self):
         pass

@@ -151,52 +183,6 @@ class Launcher(threading.Thread):
     def _delete(self):
         pass

-    def _execute(self):
-        for func_name in self.__LAUNCHER_FUNC__:
-            threading.Thread(name=func_name, target=getattr(self, func_name)).start()
-            time.sleep(2)
-
     def _polling(self):
+        pass

-        check_emtpy_times = 0
-
-        while not self._stop.is_set():
-
-            queue_not_empty_count = 0
-
-            for q in self.__LAUNCHER_QUEUE__.values():
-                if q.length != 0:
-                    queue_not_empty_count += 1
-
-            if self._pause.is_set() and queue_not_empty_count != 0:
-                self._pause.clear()
-                self._execute()
-
-            elif queue_not_empty_count == 0:
-                check_emtpy_times += 1
-            else:
-                check_emtpy_times = 0
-
-            if check_emtpy_times > 2:
-                check_emtpy_times = 0
-                self.__DOING__ = {}
-                self._pause.set()
-
-    def run(self):
-        threading.Thread(target=self._execute_heartbeat).start()
-
-        self._Crawler(
-            upload_queue=self._upload_queue,
-            custom_func=self.__CUSTOM_FUNC__,
-            launcher_queue=self.__LAUNCHER_QUEUE__,
-        ).start()
-
-        self._Pipeline(
-            upload_queue=self._upload_queue,
-            done_queue=self.__LAUNCHER_QUEUE__["done"],
-            upload_queue_size=self._upload_queue_max_size,
-            upload_wait_seconds=self._upload_queue_wait_seconds
-        ).start()
-
-        self._execute()
-        self._polling()

cobweb/launchers/launcher_air.py

ADDED

@@ -0,0 +1,88 @@
+import time
+
+from cobweb.constant import LogTemplate
+from cobweb.base import logger
+from .launcher import Launcher
+
+
+class LauncherAir(Launcher):
+
+    def _scheduler(self):
+        if self.start_seeds:
+            self.__LAUNCHER_QUEUE__['todo'].push(self.start_seeds)
+
+    def _insert(self):
+        while not self._pause.is_set():
+            seeds = {}
+            status = self.__LAUNCHER_QUEUE__['new'].length < self._new_queue_max_size
+            for _ in range(self._new_queue_max_size):
+                seed = self.__LAUNCHER_QUEUE__['new'].pop()
+                if not seed:
+                    break
+                seeds[seed.to_string] = seed.params.priority
+            if seeds:
+                self.__LAUNCHER_QUEUE__['todo'].push(seeds)
+            if status:
+                time.sleep(self._new_queue_wait_seconds)
+
+    def _delete(self):
+        while not self._pause.is_set():
+            seeds = []
+            status = self.__LAUNCHER_QUEUE__['done'].length < self._done_queue_max_size
+
+            for _ in range(self._done_queue_max_size):
+                seed = self.__LAUNCHER_QUEUE__['done'].pop()
+                if not seed:
+                    break
+                seeds.append(seed.to_string)
+
+            if seeds:
+                self._remove_doing_seeds(seeds)
+
+            if status:
+                time.sleep(self._done_queue_wait_seconds)
+
+    def _polling(self):
+
+        check_emtpy_times = 0
+
+        while not self._stop.is_set():
+
+            queue_not_empty_count = 0
+            pooling_wait_seconds = 30
+
+            for q in self.__LAUNCHER_QUEUE__.values():
+                if q.length != 0:
+                    queue_not_empty_count += 1
+
+            if queue_not_empty_count == 0:
+                pooling_wait_seconds = 3
+                if self._pause.is_set():
+                    check_emtpy_times = 0
+                    if not self._task_model:
+                        logger.info("Done! Ready to close thread...")
+                        self._stop.set()
+                elif check_emtpy_times > 2:
+                    self.__DOING__ = {}
+                    self._pause.set()
+                else:
+                    logger.info(
+                        "check whether the task is complete, "
+                        f"reset times {3 - check_emtpy_times}"
+                    )
+                    check_emtpy_times += 1
+            elif self._pause.is_set():
+                self._pause.clear()
+                self._execute()
+            else:
+                logger.info(LogTemplate.launcher_air_polling.format(
+                    task=self.task,
+                    doing_len=len(self.__DOING__.keys()),
+                    todo_len=self.__LAUNCHER_QUEUE__['todo'].length,
+                    done_len=self.__LAUNCHER_QUEUE__['done'].length,
+                    upload_len=self.__LAUNCHER_QUEUE__['upload'].length,
+                ))
+
+            time.sleep(pooling_wait_seconds)
+
+
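The branching in `LauncherAir._polling` is easier to follow as a pure decision function. The sketch below restates one pass of the loop under that reading. `polling_step` and its action labels (`"stop"`, `"idle"`, `"pause"`, `"recheck"`, `"resume"`, `"log"`) are hypothetical names introduced here, and the nesting is inferred from Python syntax because the extracted diff lost its indentation.

```python
def polling_step(queues_empty, paused, task_model, empty_checks):
    """Return (action, empty_checks, wait_seconds) for one polling pass."""
    if queues_empty:
        if paused:
            # Already paused with nothing queued: stop unless running as a
            # long-lived task (task_model truthy).
            return ("idle" if task_model else "stop"), 0, 3
        if empty_checks > 2:
            # Three consecutive empty checks have passed: pause the workers.
            return "pause", empty_checks, 3
        return "recheck", empty_checks + 1, 3
    if paused:
        # Work arrived while paused: resume the launcher threads.
        return "resume", empty_checks, 30
    return "log", empty_checks, 30


# Simulate four passes with empty queues and no pause set.
checks, actions = 0, []
for _ in range(4):
    action, checks, wait = polling_step(True, False, False, checks)
    actions.append(action)
print(actions)
```

Under this reading the launcher pauses on the fourth consecutive empty pass and, when `task_model` is falsy, sets the stop event on the pass after that.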

cobweb/launchers/launcher_pro.py

CHANGED

@@ -3,19 +3,19 @@ import threading

 from cobweb.db import RedisDB
 from cobweb.base import Seed, logger
-from cobweb.launchers import Launcher
 from cobweb.constant import DealModel, LogTemplate
+from .launcher import Launcher


 class LauncherPro(Launcher):

     def __init__(self, task, project, custom_setting=None, **kwargs):
         super().__init__(task, project, custom_setting, **kwargs)
-        self.
-        self.
-        self.
-        self.
-        self.
+        self._todo_key = "{%s:%s}:todo" % (project, task)
+        self._done_key = "{%s:%s}:done" % (project, task)
+        self._fail_key = "{%s:%s}:fail" % (project, task)
+        self._heartbeat_key = "heartbeat:%s_%s" % (project, task)
+        self._reset_lock_key = "lock:reset:%s_%s" % (project, task)
         self._db = RedisDB()

         self._heartbeat_start_event = threading.Event()
@@ -23,12 +23,12 @@ class LauncherPro(Launcher):

     @property
     def heartbeat(self):
-        return self._db.exists(self.
+        return self._db.exists(self._heartbeat_key)

     def _execute_heartbeat(self):
         while not self._stop.is_set():
             if self._heartbeat_start_event.is_set():
-                self._db.setex(self.
+                self._db.setex(self._heartbeat_key, 3)
             time.sleep(2)

     def _reset(self):
@@ -39,15 +39,15 @@ class LauncherPro(Launcher):
         while not self._pause.is_set():
             reset_wait_seconds = 30
             start_reset_time = int(time.time())
-            if self._db.lock(self.
+            if self._db.lock(self._reset_lock_key, t=120):
                 if not self.heartbeat:
                     self._heartbeat_start_event.set()

                 _min = -int(time.time()) + self._seed_reset_seconds \
                     if self.heartbeat or not init else "-inf"

-                self._db.members(self.
-                self._db.delete(self.
+                self._db.members(self._todo_key, 0, _min=_min, _max="(0")
+                self._db.delete(self._reset_lock_key)

                 ttl = 120 - int(time.time()) + start_reset_time
                 reset_wait_seconds = max(ttl, 1)
@@ -61,14 +61,14 @@ class LauncherPro(Launcher):
         if self.start_seeds:
             self.__LAUNCHER_QUEUE__['todo'].push(self.start_seeds)
         while not self._pause.is_set():
-            if not self._db.zcount(self.
+            if not self._db.zcount(self._todo_key, 0, "(1000"):
                 time.sleep(self._scheduler_wait_seconds)
                 continue
             if self.__LAUNCHER_QUEUE__['todo'].length >= self._todo_queue_size:
                 time.sleep(self._todo_queue_full_wait_seconds)
                 continue
             members = self._db.members(
-                self.
+                self._todo_key, int(time.time()),
                 count=self._todo_queue_size,
                 _min=0, _max="(1000"
             )
@@ -90,7 +90,7 @@ class LauncherPro(Launcher):
                     break
                 seeds[seed.to_string] = seed.params.priority
             if seeds:
-                self._db.zadd(self.
+                self._db.zadd(self._todo_key, seeds, nx=True)
             if status:
                 time.sleep(self._new_queue_wait_seconds)

@@ -102,7 +102,7 @@ class LauncherPro(Launcher):
             if self.__DOING__:
                 refresh_time = int(time.time())
                 seeds = {k:-refresh_time - v / 1000 for k, v in self.__DOING__.items()}
-                self._db.zadd(self.
+                self._db.zadd(self._todo_key, item=seeds, xx=True)
             time.sleep(30)

     def _delete(self):
@@ -124,13 +124,13 @@ class LauncherPro(Launcher):
                 else:
                     seeds.append(seed.to_string)
             if seeds:
-                self._db.zrem(self.
+                self._db.zrem(self._todo_key, *seeds)
                 self._remove_doing_seeds(seeds)
             if s_seeds:
-                self._db.done([self.
+                self._db.done([self._todo_key, self._done_key], *s_seeds)
                 self._remove_doing_seeds(s_seeds)
             if f_seeds:
-                self._db.done([self.
+                self._db.done([self._todo_key, self._fail_key], *f_seeds)
                 self._remove_doing_seeds(f_seeds)

             if status:
@@ -141,32 +141,79 @@ class LauncherPro(Launcher):
         while not self._stop.is_set():
             queue_not_empty_count = 0
             pooling_wait_seconds = 30
+
             for q in self.__LAUNCHER_QUEUE__.values():
                 if q.length != 0:
                     queue_not_empty_count += 1
-            if self._pause.is_set():
-                self._pause.clear()
-                self._execute()
-            elif queue_not_empty_count == 0:
-                pooling_wait_seconds = 5
-                check_emtpy_times += 1
-            else:
-                check_emtpy_times = 0

-            if
-
-                self.
-
-
-
+            if queue_not_empty_count == 0:
+                pooling_wait_seconds = 3
+                if self._pause.is_set():
+                    check_emtpy_times = 0
+                    if not self._task_model:
+                        logger.info("Done! ready to close thread...")
+                        self._stop.set()
+                    elif self._db.zcount(self._todo_key, _min=0, _max="(1000"):
+                        logger.info(f"Recovery {self.task} task run!")
+                        self._pause.clear()
+                        self._execute()
+                    else:
+                        logger.info("pause! waiting for resume...")
+                elif check_emtpy_times > 2:
+                    self.__DOING__ = {}
+                    self._pause.set()
+                else:
+                    logger.info(
+                        "check whether the task is complete, "
+                        f"reset times {3 - check_emtpy_times}"
+                    )
+                    check_emtpy_times += 1
+            # elif self._pause.is_set():
+            #     self._pause.clear()
+            #     self._execute()
+            else:
                 logger.info(LogTemplate.launcher_pro_polling.format(
                     task=self.task,
                     doing_len=len(self.__DOING__.keys()),
                     todo_len=self.__LAUNCHER_QUEUE__['todo'].length,
                     done_len=self.__LAUNCHER_QUEUE__['done'].length,
-                    redis_seed_count=self._db.zcount(self.
-                    redis_todo_len=self._db.zcount(self.
-                    redis_doing_len=self._db.zcount(self.
-                    upload_len=self.
+                    redis_seed_count=self._db.zcount(self._todo_key, "-inf", "+inf"),
+                    redis_todo_len=self._db.zcount(self._todo_key, 0, "(1000"),
+                    redis_doing_len=self._db.zcount(self._todo_key, "-inf", "(0"),
+                    upload_len=self.__LAUNCHER_QUEUE__['upload'].length,
                 ))
+
             time.sleep(pooling_wait_seconds)
+            # if self._pause.is_set():
+            #     self._pause.clear()
+            #     self._execute()
+            #
+            # elif queue_not_empty_count == 0:
+            #     pooling_wait_seconds = 5
+            #     check_emtpy_times += 1
+            # else:
+            #     check_emtpy_times = 0
+            #
+            # if not self._db.zcount(self._todo, _min=0, _max="(1000") and check_emtpy_times > 2:
+            #     check_emtpy_times = 0
+            #     self.__DOING__ = {}
+            #     self._pause.set()
+            #
+            # time.sleep(pooling_wait_seconds)
+            #
+            # if not self._pause.is_set():
+            #     logger.info(LogTemplate.launcher_pro_polling.format(
+            #         task=self.task,
+            #         doing_len=len(self.__DOING__.keys()),
+            #         todo_len=self.__LAUNCHER_QUEUE__['todo'].length,
+            #         done_len=self.__LAUNCHER_QUEUE__['done'].length,
+            #         redis_seed_count=self._db.zcount(self._todo, "-inf", "+inf"),
+            #         redis_todo_len=self._db.zcount(self._todo, 0, "(1000"),
+            #         redis_doing_len=self._db.zcount(self._todo, "-inf", "(0"),
+            #         upload_len=self.__LAUNCHER_QUEUE__['upload'].length,
+            #     ))
+            # elif not self._task_model:
+            #     self._stop.set()
+
+        logger.info("Done! Ready to close thread...")
+
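Two conventions in `LauncherPro` are worth spelling out. First, the `todo`, `done`, and `fail` keys share a `{project:task}` prefix in braces. In Redis Cluster, a brace-delimited hash tag makes keys hash to the same slot, which multi-key operations need, though the diff does not say whether that is the motivation. Second, a single sorted set holds both pending and in-flight seeds, told apart by score: the `zcount` calls treat scores in `[0, 1000)` as "todo" and negative scores as "doing", and the refresh step writes `-refresh_time - priority / 1000`. The sketch below restates both. `build_keys`, `doing_score`, and `classify` are hypothetical helper names, and the project and task values are made up.

```python
import time


def build_keys(project, task):
    """Key names as formatted in LauncherPro.__init__."""
    return {
        "todo": "{%s:%s}:todo" % (project, task),
        "done": "{%s:%s}:done" % (project, task),
        "fail": "{%s:%s}:fail" % (project, task),
        "heartbeat": "heartbeat:%s_%s" % (project, task),
        "reset_lock": "lock:reset:%s_%s" % (project, task),
    }


def doing_score(priority, now=None):
    """Score for an in-flight seed: negative timestamp minus priority / 1000."""
    now = int(time.time()) if now is None else now
    return -now - priority / 1000


def classify(score):
    """Bucket a score the way the zcount ranges in the diff do."""
    if score < 0:
        return "doing"
    if score < 1000:
        return "todo"
    return "other"


keys = build_keys("demo", "news")
print(keys["todo"], classify(300), classify(doing_score(300, now=1_700_000_000)))
```

Keeping the priority in the fractional part of the negative score means the original priority can, in principle, be recovered when a stale in-flight seed is reset.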

cobweb/pipelines/__init__.py

CHANGED

@@ -1,2 +1,3 @@
-from .
-from .
+from .pipeline import Pipeline
+from .pipeline_console import Console
+from .pipeline_loghub import Loghub

cobweb/pipelines/pipeline.py

ADDED

@@ -0,0 +1,60 @@
+import time
+import threading
+
+from abc import ABC, abstractmethod
+from cobweb.base import BaseItem, Queue, logger
+
+
+class Pipeline(threading.Thread, ABC):
+
+    def __init__(
+            self,
+            stop: threading.Event,
+            pause: threading.Event,
+            upload: Queue, done: Queue,
+            upload_size: int,
+            wait_seconds: int
+    ):
+        super().__init__()
+        self._stop = stop
+        self._pause = pause
+        self._upload = upload
+        self._done = done
+
+        self.upload_size = upload_size
+        self.wait_seconds = wait_seconds
+
+    @abstractmethod
+    def build(self, item: BaseItem) -> dict:
+        pass
+
+    @abstractmethod
+    def upload(self, table: str, data: list) -> bool:
+        pass
+
+    def run(self):
+        while not self._stop.is_set():
+            status = self._upload.length < self.upload_size
+            if status:
+                time.sleep(self.wait_seconds)
+            data_info, seeds = {}, []
+            for _ in range(self.upload_size):
+                item = self._upload.pop()
+                if not item:
+                    break
+                data = self.build(item)
+                seeds.append(item.seed)
+                data_info.setdefault(item.table, []).append(data)
+            for table, datas in data_info.items():
+                try:
+                    self.upload(table, datas)
+                    status = True
+                except Exception as e:
+                    logger.info(e)
+                    status = False
+            if status:
+                self._done.push(seeds)
+
+        logger.info("upload pipeline close!")
+
+
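`Pipeline.run` does two things per pass: it drains up to `upload_size` items and groups the built rows by `item.table`, then uploads each table's batch. The sketch below isolates those two steps. `Item`, `drain_batch`, and `upload_all` are hypothetical stand-ins, not cobweb APIs. It also departs from the diff in one respect, named plainly: in the diff `status` is overwritten on every table, so it reflects only the last upload, whereas `upload_all` here reports success only if every table succeeded.

```python
from collections import namedtuple

# Hypothetical stand-in for cobweb.base.BaseItem.
Item = namedtuple("Item", "table seed data")


def drain_batch(pending, upload_size, build):
    """Pop up to upload_size items and group the built rows by table."""
    data_info, seeds = {}, []
    for _ in range(upload_size):
        if not pending:
            break
        item = pending.pop(0)
        seeds.append(item.seed)
        data_info.setdefault(item.table, []).append(build(item))
    return data_info, seeds


def upload_all(data_info, upload):
    """Upload every table; True only if no upload raised."""
    ok = True
    for table, rows in data_info.items():
        try:
            upload(table, rows)
        except Exception:
            ok = False
    return ok


pending = [Item("pages", "s1", 1), Item("links", "s2", 2), Item("pages", "s3", 3)]
batches, seeds = drain_batch(pending, upload_size=2, build=lambda item: {"v": item.data})
sent = []
ok = upload_all(batches, lambda table, rows: sent.append((table, rows)))
print(batches, seeds, ok)
```

Only when every batch is accepted would the corresponding seeds be safe to push to the done queue.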

cobweb/pipelines/pipeline_console.py

ADDED

@@ -0,0 +1,22 @@
+from cobweb.base import ConsoleItem, logger
+from cobweb.constant import LogTemplate
+from cobweb.pipelines import Pipeline
+
+
+class Console(Pipeline):
+
+    def build(self, item: ConsoleItem):
+        return {
+            "seed": item.seed.to_dict,
+            "data": item.to_dict
+        }
+
+    def upload(self, table, datas):
+        for data in datas:
+            parse_detail = LogTemplate.log_info(data["data"])
+            if len(parse_detail) > 500:
+                parse_detail = parse_detail[:500] + " ...\n" + " " * 12 + "-- Text is too long and details are omitted!"
+            logger.info(LogTemplate.console_item.format(
+                seed_detail=LogTemplate.log_info(data["seed"]),
+                parse_detail=parse_detail
+            ))

|
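The `Console.upload` method in this hunk truncates any parsed detail longer than 500 characters and appends a notice. A small standalone sketch of that rule, assuming a hypothetical helper name `truncate_detail` (the package inlines this logic instead):

```python
MAX_LEN = 500  # same threshold as the Console pipeline in the hunk above


def truncate_detail(text, limit=MAX_LEN):
    """Cut long text and append the notice used by the Console pipeline."""
    if len(text) > limit:
        notice = "-- Text is too long and details are omitted!"
        # 12 spaces indent the notice, matching the string built in the hunk.
        return text[:limit] + " ...\n" + " " * 12 + notice
    return text


short = truncate_detail("abc")
long_text = truncate_detail("x" * 600)
```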

@@ -0,0 +1,34 @@

|

|

|

1

|

+

import json

|

|

2

|

+

|

|

3

|

+

from cobweb import setting

|

|

4

|

+

from cobweb.base import BaseItem

|

|

5

|

+

from cobweb.pipelines import Pipeline

|

|

6

|

+

from aliyun.log import LogClient, LogItem, PutLogsRequest

|

|

7

|

+

|

|

8

|

+

|

|

9

|

+

class Loghub(Pipeline):

|

|

10

|

+

|

|

11

|

+

def __init__(self, *args, **kwargs):

|

|

12

|

+

super().__init__(*args, **kwargs)

|

|

13

|

+

self.client = LogClient(**setting.LOGHUB_CONFIG)

|

|

14

|

+

|

|

15

|

+

def build(self, item: BaseItem):

|

|

16

|

+

log_item = LogItem()

|

|

17

|

+

temp = item.to_dict

|

|

18

|

+

for key, value in temp.items():

|

|

19

|

+

if not isinstance(value, str):

|

|

20

|

+

temp[key] = json.dumps(value, ensure_ascii=False)

|

|

21

|

+

contents = sorted(temp.items())

|

|

22

|

+

log_item.set_contents(contents)

|

|

23

|

+

return log_item

|

|

24

|

+

|

|

25

|

+

def upload(self, table, datas):

|

|

26

|

+

request = PutLogsRequest(

|

|

27

|

+

project=setting.LOGHUB_PROJECT,

|

|

28

|

+

logstore=table,

|

|

29

|

+

topic=setting.LOGHUB_TOPIC,

|

|

30

|

+

source=setting.LOGHUB_SOURCE,

|

|

31

|

+

logitems=datas,

|

|

32

|

+

compress=True

|

|

33

|

+

)

|

|

34

|

+

self.client.put_logs(request=request)

|
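`Loghub.build` in this hunk converts every non-string value to a JSON string and sorts the resulting key/value pairs before handing them to `LogItem.set_contents`. The sketch below reproduces only that conversion without the Aliyun SDK. The function name `to_log_contents` is hypothetical, and the copy of the input dict is an addition of this sketch.

```python
import json


def to_log_contents(record):
    """Return sorted (key, value) pairs with every value coerced to str."""
    temp = dict(record)  # copy so the caller's dict is not mutated
    for key, value in temp.items():
        if not isinstance(value, str):
            # ensure_ascii=False keeps non-ASCII text readable in the log.
            temp[key] = json.dumps(value, ensure_ascii=False)
    return sorted(temp.items())


contents = to_log_contents(
    {"url": "https://example.com", "status": 200, "tags": ["a", "b"]}
)
```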

cobweb/setting.py

CHANGED

|

@@ -30,8 +30,8 @@ OSS_MIN_UPLOAD_SIZE = 1024

|

|

|

30

30

|

# Crawler selection

|

|

31

31

|

CRAWLER = "cobweb.crawlers.Crawler"

|

|

32

32

|

|

|

33

|

-

#

|

|

34

|

-

PIPELINE = "cobweb.pipelines.

|

|

33

|

+

# Data storage pipeline

|

|

34

|

+

PIPELINE = "cobweb.pipelines.pipeline_console.Console"

|

|

35

35

|

|

|

36

36

|

|

|

37

37

|

# Launcher wait time

|

|

@@ -52,12 +52,12 @@ UPLOAD_QUEUE_MAX_SIZE = 100 # upload queue length

|

|

|

52

52

|

# DONE_MODEL IN (0, 1), seed completion mode

|

|

53

53

|

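DONE_MODEL = 0 # 0: a successfully consumed seed is removed from the queue, a failed seed is added to the failure queue; 1: a successfully consumed seed is added to the success queue, a failed seed is added to the failure queue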

|

|

54

54

|

|

|

55

|

-

# DOWNLOAD_MODEL IN (0, 1), download mode

|

|

56

|

-

DOWNLOAD_MODEL = 0 # 0: general download; 1: file download

|

|

57

|

-

|

|

58

55

|

# spider

|

|

59

56

|

SPIDER_THREAD_NUM = 10

|

|

60

57

|

SPIDER_MAX_RETRIES = 5

|

|

61

58

|

|

|

59

|

+

# Task mode

|

|

60

|

+

TASK_MODEL = 0 # 0: one-off, 1: resident

|

|

61

|

+

|

|

62

62

|

# Content-type filter for file download responses

|

|

63

|

-

FILE_FILTER_CONTENT_TYPE = ["text/html", "application/xhtml+xml"]

|

|

63

|

+

# FILE_FILTER_CONTENT_TYPE = ["text/html", "application/xhtml+xml"]

|
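The `CRAWLER` and `PIPELINE` settings in this hunk are dotted import paths such as `"cobweb.pipelines.pipeline_console.Console"`. The body of cobweb's own `dynamic_load_class` is not shown in this diff, so the following is only a sketch of how such a path can be resolved with the standard library. `load_class` is a hypothetical name, and a standard-library class is used so the example runs without cobweb installed.

```python
import importlib


def load_class(dotted_path):
    """Resolve 'package.module.ClassName' to the class object."""
    # Split on the last dot: everything before it is the module path.
    module_path, _, class_name = dotted_path.rpartition(".")
    module = importlib.import_module(module_path)
    return getattr(module, class_name)


# Demonstrated with a standard-library class instead of a cobweb pipeline.
loaded = load_class("collections.OrderedDict")
```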

cobweb/utils/tools.py

CHANGED

|

@@ -38,5 +38,5 @@ def dynamic_load_class(model_info):

|

|

|

38

38

|

raise TypeError()

|

|

39

39

|

|

|

40

40

|

|

|

41

|

-

def download_log_info(item:dict) -> str:

|

|

42

|

-

|

|

41

|

+

# def download_log_info(item:dict) -> str:

|

|

42

|

+

# return "\n".join([" " * 12 + f"{str(k).ljust(14)}: {str(v)}" for k, v in item.items()])

|

|
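The helper commented out in this hunk rendered a dict as indented, key-aligned lines. A standalone sketch of the same one-line expression, using the placeholder name `format_log_info`:

```python
def format_log_info(item):
    """Render a dict as indented, key-aligned lines, one per entry."""
    return "\n".join(
        # 12 spaces of indent, then the key left-justified to 14 columns.
        " " * 12 + f"{str(k).ljust(14)}: {str(v)}"
        for k, v in item.items()
    )


text = format_log_info({"url": "https://example.com", "status": 200})
```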

@@ -0,0 +1,204 @@

|

|

|

1

|

+

Metadata-Version: 2.1

|

|

2

|

+

Name: cobweb-launcher

|

|

3

|

+

Version: 1.2.1

|

|

4

|

+

Summary: spider_hole

|

|

5

|

+

Home-page: https://github.com/Juannie-PP/cobweb

|

|

6

|

+

Author: Juannie-PP

|

|

7

|

+

Author-email: 2604868278@qq.com

|

|

8

|

+

License: MIT

|

|

9

|

+

Keywords: cobweb-launcher, cobweb

|

|

10

|

+

Platform: UNKNOWN

|

|

11

|

+

Classifier: Programming Language :: Python :: 3

|

|

12

|

+

Requires-Python: >=3.7

|

|

13

|

+

Description-Content-Type: text/markdown

|

|

14

|

+

License-File: LICENSE

|

|

15

|

+

Requires-Dist: requests (>=2.19.1)

|

|

16

|

+

Requires-Dist: oss2 (>=2.18.1)

|

|

17

|

+

Requires-Dist: redis (>=4.4.4)

|

|

18

|

+

Requires-Dist: aliyun-log-python-sdk

|

|

19

|

+

|

|

20

|

+

# cobweb

|

|

21

|

+

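cobweb is a Python-based distributed crawler scheduling framework. It currently supports distributed and single-machine crawling, as well as custom databases, custom data storage, and custom data processing.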

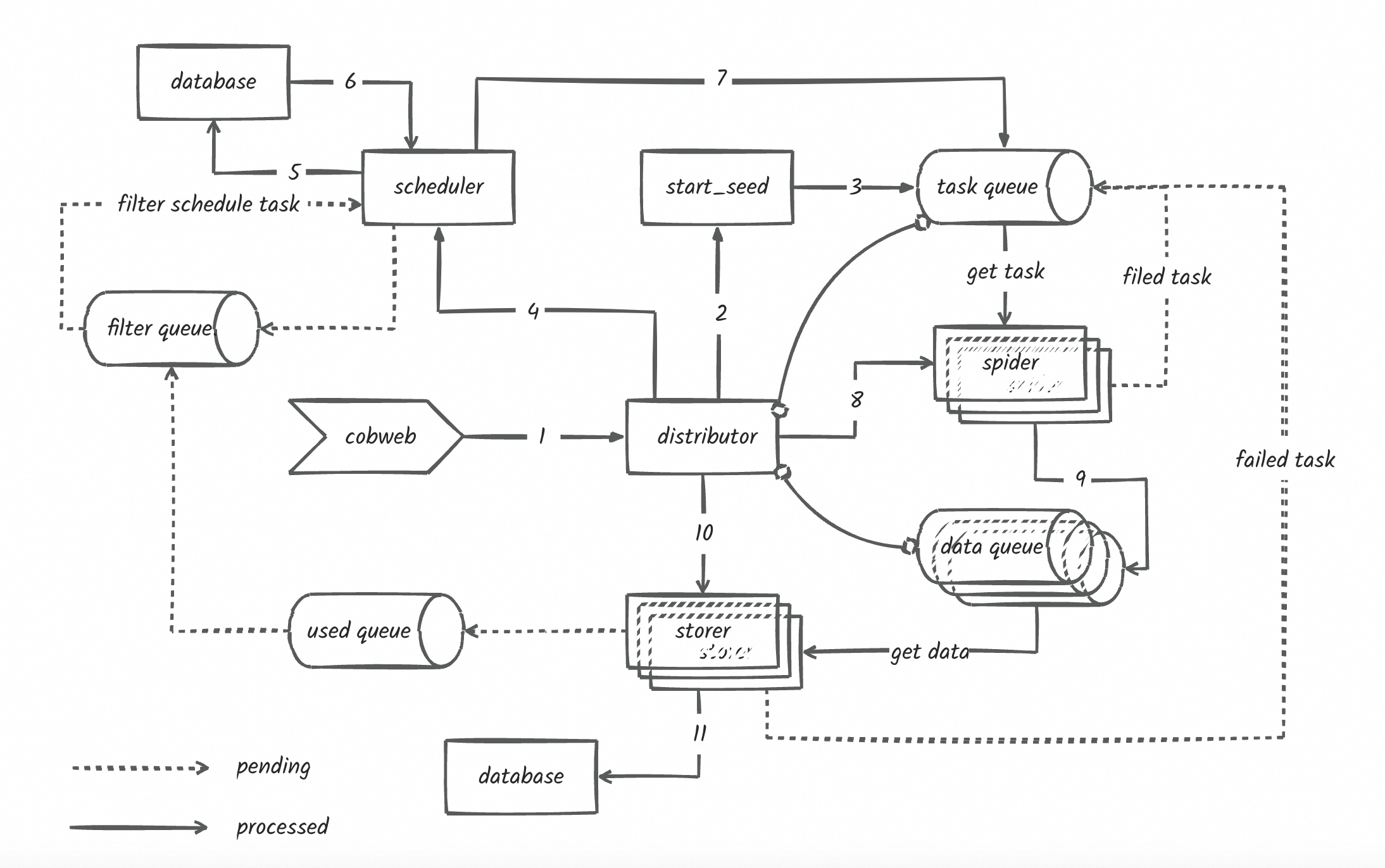

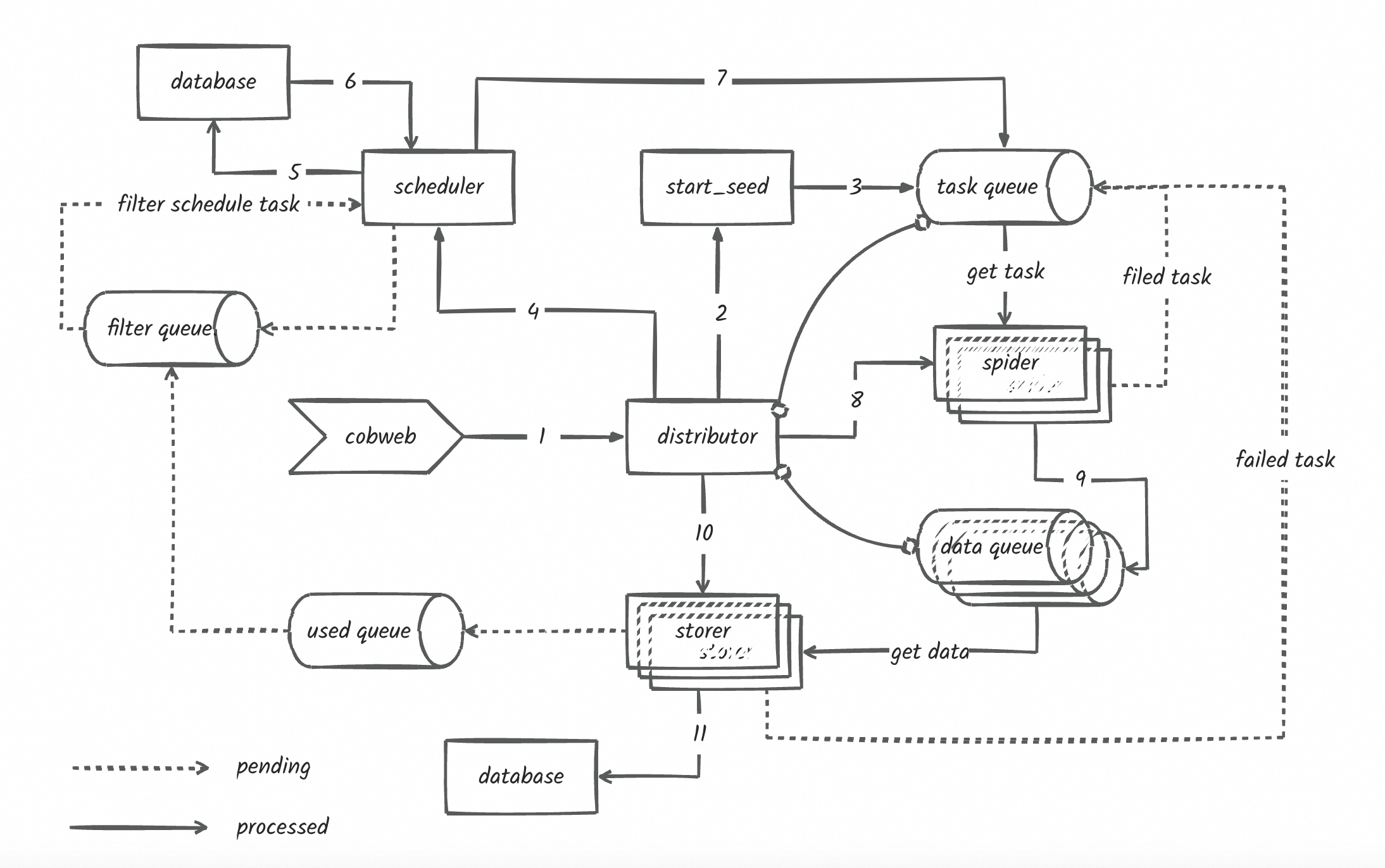

|

|

22

|

+

|

|

23

|

+

cobweb consists of three modules and one configuration file: the Launcher, the Crawler, the Pipeline (storage), and the setting configuration file.

|

|

24

|

+

1. Launcher: starts crawler tasks and controls their execution flow, along with data storage and data processing.

|

|

25

|

+

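The framework provides two launcher modes, LauncherAir and LauncherPro, which correspond to single-machine crawling and distributed scheduling respectively.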

|

|

26

|

+

2. Crawler: controls the crawl flow, data download, and data processing.

|

|

27

|

+

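The framework provides a basic crawler for this purpose. Users can also define custom request, download, and parse methods when creating a task; see the usage section for details.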

|

|

28

|

+

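3. Pipeline: stores the crawled data and supports custom data storage and data processing. The framework provides two storage backends, Console and Loghub; users can also subclass the abstract Pipeline class to define their own.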

|

|

29

|

+

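4. setting configuration file: configures the crawler, the pipeline, queue lengths, the number of crawl threads, and other parameters. The framework provides defaults, which users can override.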

|

|

30

|

+

## Installation

|

|

31

|

+

```

|

|

32

|

+

pip3 install --upgrade cobweb-launcher

|

|

33

|

+

```

|

|

34

|

+

## Usage

|

|

35

|

+

### 1. Creating a task

|

|

36

|

+

- Creating a LauncherAir task

|

|

37

|

+

```python

|

|

38

|

+

from cobweb import LauncherAir

|

|

39

|

+

|

|

40

|

+

# Create the launcher

|

|

41

|

+

app = LauncherAir(task="test", project="test")

|

|

42

|

+

|

|

43

|

+

# Set the crawl seeds

|

|

44

|

+

app.SEEDS = [{

|

|

45

|

+

"url": "https://www.baidu.com"

|

|

46

|

+

}]

|

|

47

|

+

...

|

|

48

|

+

# Start the task

|

|

49

|

+

app.start()

|

|

50

|

+

```

|

|

51

|

+

- Creating a LauncherPro task

|

|

52

|

+

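LauncherPro relies on redis for distributed scheduling. To use it, either configure the environment variables or set the redis configuration in a custom setting file; section 2 on custom configuration parameters explains how.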

|

|

53

|

+

```python

|

|

54

|

+

from cobweb import LauncherPro

|

|

55

|

+

|

|

56

|

+

# Create the launcher

|

|

57

|

+

app = LauncherPro(

|

|

58

|

+

task="test",

|

|

59

|

+

project="test"

|

|

60

|

+

)

|

|

61

|

+

...

|

|

62

|

+

# Start the task

|

|

63

|

+

app.start()

|

|

64

|

+

```

|

|

65

|

+

### 2. Custom configuration parameters

|

|

66

|

+

- Via a custom setting file, passed as an import-statement string

|

|

67

|

+

> Default configuration file: import cobweb.setting

|

|

68

|

+

> Not recommended! This currently has bugs; use at your own risk...

|

|

69

|

+

For example, with a custom setting.py file created in the same directory:

|

|

70

|

+

```python

|

|

71

|

+

from cobweb import LauncherAir

|

|

72

|

+

|

|

73

|

+

app = LauncherAir(

|

|

74

|

+

task="test",

|

|

75

|

+

project="test",

|

|

76

|

+

setting="import setting"

|

|

77

|

+

)

|

|

78

|

+

|

|

79

|

+

...

|

|

80

|

+

|

|

81

|

+

app.start()

|

|

82

|

+

```

|

|

83

|

+

- Overriding individual values in setting

|

|

84

|

+

```python

|

|

85

|

+

from cobweb import LauncherPro

|

|

86

|

+

|

|

87

|

+

# Create the launcher

|

|

88

|

+

app = LauncherPro(

|

|

89

|

+

task="test",

|

|

90

|

+

project="test",

|

|

91

|

+

REDIS_CONFIG = {

|

|

92

|

+

"host": ...,

|

|

93

|

+

"password":...,

|

|

94

|

+

"port": ...,

|

|

95

|

+

"db": ...

|

|

96

|

+

}

|

|

97

|

+

)

|

|

98

|

+

...

|

|

99

|

+

# Start the task

|

|

100

|

+

app.start()

|

|

101

|

+

```

|

|

102

|

+

### 3. Custom request

|

|

103

|

+

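`@app.request` is a decorator that registers a custom request method. The method runs before the request is sent and returns a Request object or an object derived from BaseItem, which controls the request parameters.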

|

|

104

|

+

```python

|

|

105

|

+

from typing import Union

|

|

106

|

+

from cobweb import LauncherAir

|

|

107

|

+

from cobweb.base import Seed, Request, BaseItem

|

|

108

|

+

|

|

109

|

+

app = LauncherAir(

|

|

110

|

+

task="test",

|

|

111

|

+

project="test"

|

|

112

|

+

)

|

|

113

|

+

|

|

114

|

+

...

|

|

115

|

+

|

|

116

|

+

@app.request

|

|

117

|

+

def request(seed: Seed) -> Union[Request, BaseItem]:

|

|

118

|

+

# Customize headers, proxies, request parameters, and so on here

|

|

119

|

+

proxies = {"http": ..., "https": ...}

|

|

120

|

+

yield Request(seed.url, seed, ..., proxies=proxies, timeout=15)

|

|

121

|

+

# yield xxxItem(seed, ...) # skip request and parsing and go straight to data storage

|

|

122

|

+

|

|

123

|

+

...

|

|

124

|

+

|

|

125

|

+

app.start()

|

|

126

|

+

```

|

|

127

|

+

> Default request method

|

|

128

|

+

> def request(seed: Seed) -> Union[Request, BaseItem]:

|

|

129

|

+

> yield Request(seed.url, seed, timeout=5)

|

|

130

|

+

### 4. Custom download

|

|

131

|

+

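`@app.download` is a decorator that registers a custom download method. The method runs when the request is made and returns a Response object or an object derived from BaseItem, which controls the request parameters.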

|

|

132

|

+

```python

|

|

133

|

+

from typing import Union

|

|

134

|

+

from cobweb import LauncherAir

|

|

135

|

+

from cobweb.base import Request, Response, BaseItem

|

|

136

|

+

|

|

137

|

+

app = LauncherAir(

|

|

138

|

+

task="test",

|

|

139

|

+

project="test"

|

|

140

|

+

)

|

|

141

|

+

|

|

142

|

+

...

|

|

143

|

+

|

|

144

|

+

@app.download

|

|

145

|

+

def download(item: Request) -> Union[BaseItem, Response]:

|

|

146

|

+

...

|

|

147

|

+

response = ...

|

|

148

|

+

...

|

|

149

|

+

yield Response(item.seed, response, ...) # return a Response object for parsing

|

|

150

|

+

# yield xxxItem(seed, ...) # skip request and parsing and go straight to data storage

|

|

151

|

+

|

|

152

|

+

...

|

|

153

|

+

|

|

154

|

+

app.start()

|

|

155

|

+

```

|

|

156

|

+

> Default download method

|

|

157

|

+

> def download(item: Request) -> Union[Seed, BaseItem, Response, str]:

|

|

158

|

+

> response = item.download()

|

|

159

|

+

> yield Response(item.seed, response, **item.to_dict)

|

|

160

|

+

### 5. Custom parsing

|

|

161

|

+

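Custom parsing consists of a storage data class and a parse method. The data class derives from BaseItem and declares the storage table name and fields;

|

|

162

|

+

the parse method yields objects derived from BaseItem, and those yielded objects drive the data storage flow.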

|

|

163

|

+

```python

|

|

164

|

+

from typing import Union

|

|

165

|

+

from cobweb import LauncherAir

|

|

166

|

+

from cobweb.base import Seed, Response, BaseItem

|

|

167

|

+

|

|

168

|

+

class TestItem(BaseItem):

|

|

169

|

+

__TABLE__ = "test_data" # table name

|

|

170

|

+

__FIELDS__ = "field1, field2, field3" # field names

|

|

171

|

+

|

|

172

|

+

app = LauncherAir(

|

|

173

|

+

task="test",

|

|

174

|

+

project="test"

|

|

175

|

+

)

|

|

176

|

+

|

|

177

|

+

...

|

|

178

|

+

|

|

179

|

+

@app.parse

|

|

180

|

+

def parse(item: Response) -> Union[Seed, BaseItem]:

|

|

181

|

+

...

|

|

182

|

+

yield TestItem(item.seed, field1=..., field2=..., field3=...)

|

|

183

|

+

# yield Seed(...) # build a new seed and push it to the consumption queue

|

|

184

|

+

|

|

185

|

+

...

|

|

186

|

+

|

|

187

|

+

app.start()

|

|

188

|

+

```

|

|

189

|

+

> Default parse method

|

|

190

|

+

> def parse(item: Request) -> Union[Seed, BaseItem]:

|

|

191

|

+

> upload_item = item.to_dict

|

|

192

|

+

> upload_item["text"] = item.response.text

|

|

193

|

+

> yield ConsoleItem(item.seed, data=json.dumps(upload_item, ensure_ascii=False))

|

|

194

|

+

## To do

|

|

195

|

+

- Improve the queues: use the queue's wait() mechanism to synchronize execution across modules?

|

|

196

|

+

- Improve logging: in single-machine mode, write scheduling and saved data to files, and emit structured logs for each task

|

|

197

|

+

- Deduplication filtering (Bloom filter, etc.)

|

|

198

|

+

- Loss prevention in single-machine mode

|

|

199

|

+

- Improve excel, mysql, and redis data support

|

|

200

|

+

|

|

201

|

+

> The flow chart has not been updated!

|

|

202

|

+

|

|

203

|

+

|

|

204

|

+

|

|
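The README in this hunk registers generator functions through `@app.request`, `@app.download`, and `@app.parse`. The sketch below illustrates that decorator-registration pattern in isolation. `MiniLauncher` is a hypothetical stand-in and is not cobweb's launcher; it omits the download step, the queues, and threading, and uses plain dicts in place of `Seed`, `Request`, and `BaseItem`.

```python
class MiniLauncher:
    """Hypothetical stand-in; not cobweb's Launcher implementation."""

    def __init__(self):
        # Defaults are used until a decorator replaces them.
        self._request = lambda seed: iter([seed])
        self._parse = lambda req: iter([req])

    def request(self, func):
        """Register a custom request generator and return it unchanged."""
        self._request = func
        return func

    def parse(self, func):
        """Register a custom parse generator and return it unchanged."""
        self._parse = func
        return func

    def run(self, seed):
        """Feed every yielded request into parse and collect the results."""
        results = []
        for req in self._request(seed):
            results.extend(self._parse(req))
        return results


app = MiniLauncher()


@app.request
def request(seed):
    # Build request parameters from the seed.
    yield {"url": seed["url"], "timeout": 15}


@app.parse
def parse(req):
    # Emit one record per request; a dict stands in for a BaseItem subclass.
    yield {"table": "test_data", "url": req["url"]}


items = app.run({"url": "https://www.baidu.com"})
```

Because each registered function is a generator, one seed can yield several requests and one response can yield several items.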

@@ -0,0 +1,37 @@

|

|

|

1

|

+

cobweb/__init__.py,sha256=uMHyf4Fekbyw2xBCbkA8R0LwCpBJf5p_7pWbh60ZWYk,83

|

|

2

|

+

cobweb/constant.py,sha256=zy3XYsc1qp2B76_Fn_hVQ8eGHlPBd3OFlZK2cryE6FY,2839

|

|

3

|

+

cobweb/setting.py,sha256=zOO1cA_zQd4Q0CzY_tdSfdo-10L4QIVpm4382wbP5BQ,1906

|

|

4

|

+

cobweb/base/__init__.py,sha256=4gwWWQ0Q8cYG9cD7Lwf4XMqRGc5M_mapS3IczR6zeCE,222

|

|

5

|

+

cobweb/base/common_queue.py,sha256=W7PPZZFl52j3Mc916T0imHj7oAUelA6aKJwW-FecDPE,872

|

|

6

|

+

cobweb/base/decorators.py,sha256=wDCaQ94aAZGxks9Ljc0aXq6omDXT1_yzFy83ZW6VbVI,930

|

|

7

|

+

cobweb/base/item.py,sha256=hYheVTV2Bozp4iciJpE2ZwBIXkaqBg4QQkRccP8yoVk,1049

|

|

8

|

+

cobweb/base/log.py,sha256=L01hXdk3L2qEm9X1FOXQ9VmWIoHSELe0cyZvrdAN61A,2003

|

|

9

|

+

cobweb/base/request.py,sha256=tEkgMVUfdQI-kZuzWuiit9P_q4Q9-_RZh9aXXpc0314,2352

|

|

10

|

+

cobweb/base/response.py,sha256=eB1DWMXFCpn3cJ3yzgCRU1WeZAdayGDohRgdjdMUFN4,406

|

|

11

|

+

cobweb/base/seed.py,sha256=Uz_VBRlAxNYQcFHk3tsZFMlU96yPOedHaWGTvk-zKd8,2908

|

|

12

|

+

cobweb/crawlers/__init__.py,sha256=msvkB9mTpsgyj8JfNMsmwAcpy5kWk_2NrO1Adw2Hkw0,29

|

|

13

|

+

cobweb/crawlers/base_crawler.py,sha256=ee_WSDnPQpPTk6wlFuY2UEx5L3hcsAZFcr6i3GLSry8,5751

|

|

14

|

+

cobweb/crawlers/crawler.py,sha256=_4bU4rlg-oQTztWibtAEKY4nzFxpZ-FBu6KuwhEkewE,6003

|

|

15

|

+

cobweb/crawlers/file_crawler.py,sha256=2Sjbdgxzqd41WykKUQE3QQlGai3T8k-pmHNmPlTchjQ,4454

|

|

16

|

+

cobweb/db/__init__.py,sha256=ut0iEyBLjcJL06WNG_5_d4hO5PJWvDrKWMkDOdmgh2M,30

|

|

17

|

+

cobweb/db/redis_db.py,sha256=NNI2QkRV1hEZI-z-COEncXt88z3pZN6wusKlcQzc8V4,4304

|

|

18

|

+

cobweb/exceptions/__init__.py,sha256=E9SHnJBbhD7fOgPFMswqyOf8SKRDrI_i25L0bSpohvk,32

|

|

19

|

+

cobweb/exceptions/oss_db_exception.py,sha256=iP_AImjNHT3-Iv49zCFQ3rdLnlvuHa3h2BXApgrOYpA,636

|

|

20

|

+

cobweb/launchers/__init__.py,sha256=af0Y6wrGX8SQZ7w7XL2sOtREjCT3dwad-uCc3nIontY,76

|

|

21

|

+

cobweb/launchers/launcher.py,sha256=_oPoFNXp3Rrf5eK2tcHtwHZi2pKbuNPBf_VRvj9dJUc,5379

|

|

22