arize-phoenix 3.16.1__py3-none-any.whl → 7.7.0__py3-none-any.whl

This diff represents the content of publicly available package versions that have been released to one of the supported registries. The information contained in this diff is provided for informational purposes only and reflects changes between package versions as they appear in their respective public registries.

Potentially problematic release.

This version of arize-phoenix might be problematic.

- arize_phoenix-7.7.0.dist-info/METADATA +261 -0

- arize_phoenix-7.7.0.dist-info/RECORD +345 -0

- {arize_phoenix-3.16.1.dist-info → arize_phoenix-7.7.0.dist-info}/WHEEL +1 -1

- arize_phoenix-7.7.0.dist-info/entry_points.txt +3 -0

- phoenix/__init__.py +86 -14

- phoenix/auth.py +309 -0

- phoenix/config.py +675 -45

- phoenix/core/model.py +32 -30

- phoenix/core/model_schema.py +102 -109

- phoenix/core/model_schema_adapter.py +48 -45

- phoenix/datetime_utils.py +24 -3

- phoenix/db/README.md +54 -0

- phoenix/db/__init__.py +4 -0

- phoenix/db/alembic.ini +85 -0

- phoenix/db/bulk_inserter.py +294 -0

- phoenix/db/engines.py +208 -0

- phoenix/db/enums.py +20 -0

- phoenix/db/facilitator.py +113 -0

- phoenix/db/helpers.py +159 -0

- phoenix/db/insertion/constants.py +2 -0

- phoenix/db/insertion/dataset.py +227 -0

- phoenix/db/insertion/document_annotation.py +171 -0

- phoenix/db/insertion/evaluation.py +191 -0

- phoenix/db/insertion/helpers.py +98 -0

- phoenix/db/insertion/span.py +193 -0

- phoenix/db/insertion/span_annotation.py +158 -0

- phoenix/db/insertion/trace_annotation.py +158 -0

- phoenix/db/insertion/types.py +256 -0

- phoenix/db/migrate.py +86 -0

- phoenix/db/migrations/data_migration_scripts/populate_project_sessions.py +199 -0

- phoenix/db/migrations/env.py +114 -0

- phoenix/db/migrations/script.py.mako +26 -0

- phoenix/db/migrations/versions/10460e46d750_datasets.py +317 -0

- phoenix/db/migrations/versions/3be8647b87d8_add_token_columns_to_spans_table.py +126 -0

- phoenix/db/migrations/versions/4ded9e43755f_create_project_sessions_table.py +66 -0

- phoenix/db/migrations/versions/cd164e83824f_users_and_tokens.py +157 -0

- phoenix/db/migrations/versions/cf03bd6bae1d_init.py +280 -0

- phoenix/db/models.py +807 -0

- phoenix/exceptions.py +5 -1

- phoenix/experiments/__init__.py +6 -0

- phoenix/experiments/evaluators/__init__.py +29 -0

- phoenix/experiments/evaluators/base.py +158 -0

- phoenix/experiments/evaluators/code_evaluators.py +184 -0

- phoenix/experiments/evaluators/llm_evaluators.py +473 -0

- phoenix/experiments/evaluators/utils.py +236 -0

- phoenix/experiments/functions.py +772 -0

- phoenix/experiments/tracing.py +86 -0

- phoenix/experiments/types.py +726 -0

- phoenix/experiments/utils.py +25 -0

- phoenix/inferences/__init__.py +0 -0

- phoenix/{datasets → inferences}/errors.py +6 -5

- phoenix/{datasets → inferences}/fixtures.py +49 -42

- phoenix/{datasets/dataset.py → inferences/inferences.py} +121 -105

- phoenix/{datasets → inferences}/schema.py +11 -11

- phoenix/{datasets → inferences}/validation.py +13 -14

- phoenix/logging/__init__.py +3 -0

- phoenix/logging/_config.py +90 -0

- phoenix/logging/_filter.py +6 -0

- phoenix/logging/_formatter.py +69 -0

- phoenix/metrics/__init__.py +5 -4

- phoenix/metrics/binning.py +4 -3

- phoenix/metrics/metrics.py +2 -1

- phoenix/metrics/mixins.py +7 -6

- phoenix/metrics/retrieval_metrics.py +2 -1

- phoenix/metrics/timeseries.py +5 -4

- phoenix/metrics/wrappers.py +9 -3

- phoenix/pointcloud/clustering.py +5 -5

- phoenix/pointcloud/pointcloud.py +7 -5

- phoenix/pointcloud/projectors.py +5 -6

- phoenix/pointcloud/umap_parameters.py +53 -52

- phoenix/server/api/README.md +28 -0

- phoenix/server/api/auth.py +44 -0

- phoenix/server/api/context.py +152 -9

- phoenix/server/api/dataloaders/__init__.py +91 -0

- phoenix/server/api/dataloaders/annotation_summaries.py +139 -0

- phoenix/server/api/dataloaders/average_experiment_run_latency.py +54 -0

- phoenix/server/api/dataloaders/cache/__init__.py +3 -0

- phoenix/server/api/dataloaders/cache/two_tier_cache.py +68 -0

- phoenix/server/api/dataloaders/dataset_example_revisions.py +131 -0

- phoenix/server/api/dataloaders/dataset_example_spans.py +38 -0

- phoenix/server/api/dataloaders/document_evaluation_summaries.py +144 -0

- phoenix/server/api/dataloaders/document_evaluations.py +31 -0

- phoenix/server/api/dataloaders/document_retrieval_metrics.py +89 -0

- phoenix/server/api/dataloaders/experiment_annotation_summaries.py +79 -0

- phoenix/server/api/dataloaders/experiment_error_rates.py +58 -0

- phoenix/server/api/dataloaders/experiment_run_annotations.py +36 -0

- phoenix/server/api/dataloaders/experiment_run_counts.py +49 -0

- phoenix/server/api/dataloaders/experiment_sequence_number.py +44 -0

- phoenix/server/api/dataloaders/latency_ms_quantile.py +188 -0

- phoenix/server/api/dataloaders/min_start_or_max_end_times.py +85 -0

- phoenix/server/api/dataloaders/project_by_name.py +31 -0

- phoenix/server/api/dataloaders/record_counts.py +116 -0

- phoenix/server/api/dataloaders/session_io.py +79 -0

- phoenix/server/api/dataloaders/session_num_traces.py +30 -0

- phoenix/server/api/dataloaders/session_num_traces_with_error.py +32 -0

- phoenix/server/api/dataloaders/session_token_usages.py +41 -0

- phoenix/server/api/dataloaders/session_trace_latency_ms_quantile.py +55 -0

- phoenix/server/api/dataloaders/span_annotations.py +26 -0

- phoenix/server/api/dataloaders/span_dataset_examples.py +31 -0

- phoenix/server/api/dataloaders/span_descendants.py +57 -0

- phoenix/server/api/dataloaders/span_projects.py +33 -0

- phoenix/server/api/dataloaders/token_counts.py +124 -0

- phoenix/server/api/dataloaders/trace_by_trace_ids.py +25 -0

- phoenix/server/api/dataloaders/trace_root_spans.py +32 -0

- phoenix/server/api/dataloaders/user_roles.py +30 -0

- phoenix/server/api/dataloaders/users.py +33 -0

- phoenix/server/api/exceptions.py +48 -0

- phoenix/server/api/helpers/__init__.py +12 -0

- phoenix/server/api/helpers/dataset_helpers.py +217 -0

- phoenix/server/api/helpers/experiment_run_filters.py +763 -0

- phoenix/server/api/helpers/playground_clients.py +948 -0

- phoenix/server/api/helpers/playground_registry.py +70 -0

- phoenix/server/api/helpers/playground_spans.py +455 -0

- phoenix/server/api/input_types/AddExamplesToDatasetInput.py +16 -0

- phoenix/server/api/input_types/AddSpansToDatasetInput.py +14 -0

- phoenix/server/api/input_types/ChatCompletionInput.py +38 -0

- phoenix/server/api/input_types/ChatCompletionMessageInput.py +24 -0

- phoenix/server/api/input_types/ClearProjectInput.py +15 -0

- phoenix/server/api/input_types/ClusterInput.py +2 -2

- phoenix/server/api/input_types/CreateDatasetInput.py +12 -0

- phoenix/server/api/input_types/CreateSpanAnnotationInput.py +18 -0

- phoenix/server/api/input_types/CreateTraceAnnotationInput.py +18 -0

- phoenix/server/api/input_types/DataQualityMetricInput.py +5 -2

- phoenix/server/api/input_types/DatasetExampleInput.py +14 -0

- phoenix/server/api/input_types/DatasetSort.py +17 -0

- phoenix/server/api/input_types/DatasetVersionSort.py +16 -0

- phoenix/server/api/input_types/DeleteAnnotationsInput.py +7 -0

- phoenix/server/api/input_types/DeleteDatasetExamplesInput.py +13 -0

- phoenix/server/api/input_types/DeleteDatasetInput.py +7 -0

- phoenix/server/api/input_types/DeleteExperimentsInput.py +7 -0

- phoenix/server/api/input_types/DimensionFilter.py +4 -4

- phoenix/server/api/input_types/GenerativeModelInput.py +17 -0

- phoenix/server/api/input_types/Granularity.py +1 -1

- phoenix/server/api/input_types/InvocationParameters.py +162 -0

- phoenix/server/api/input_types/PatchAnnotationInput.py +19 -0

- phoenix/server/api/input_types/PatchDatasetExamplesInput.py +35 -0

- phoenix/server/api/input_types/PatchDatasetInput.py +14 -0

- phoenix/server/api/input_types/PerformanceMetricInput.py +5 -2

- phoenix/server/api/input_types/ProjectSessionSort.py +29 -0

- phoenix/server/api/input_types/SpanAnnotationSort.py +17 -0

- phoenix/server/api/input_types/SpanSort.py +134 -69

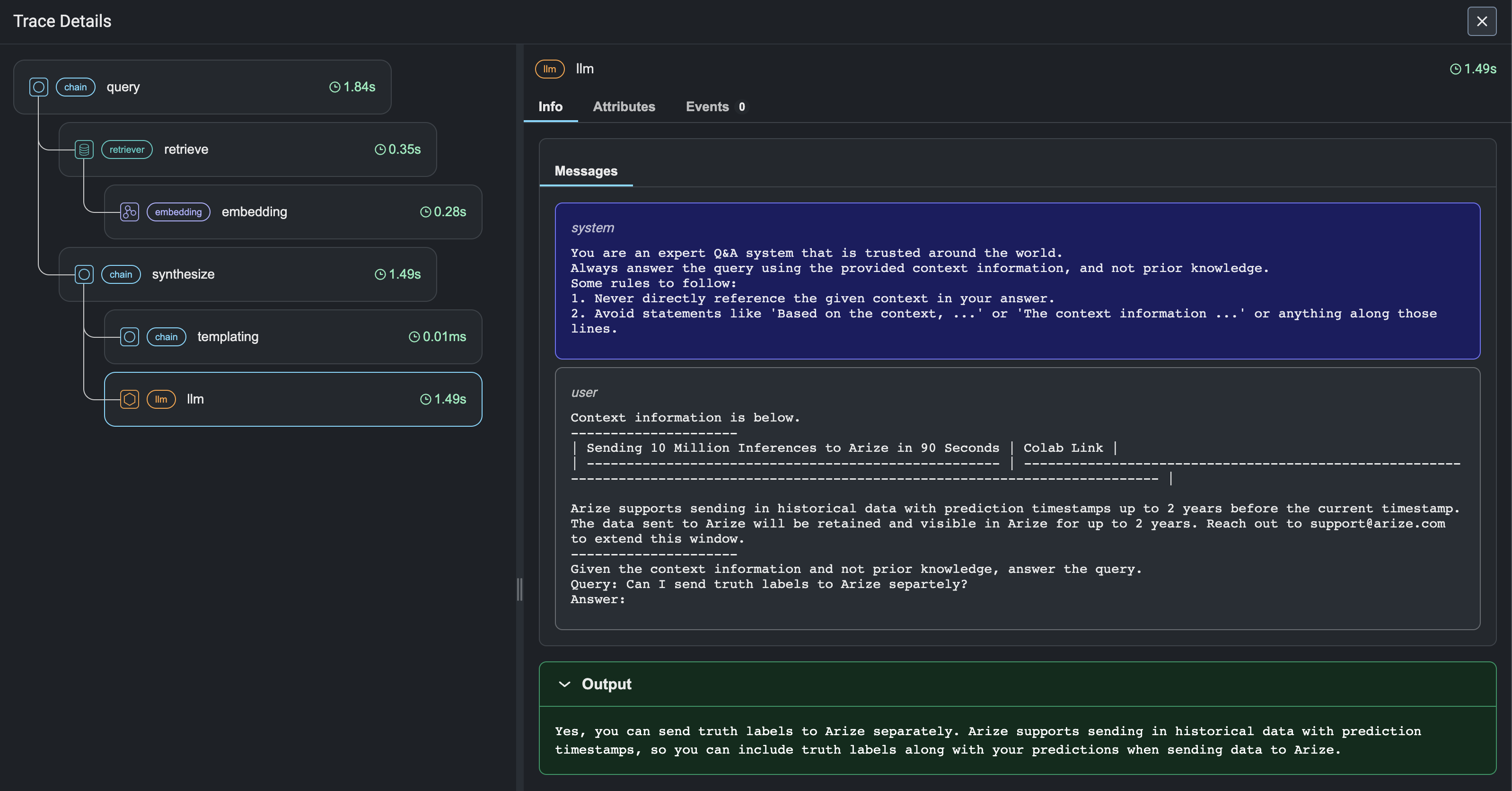

- phoenix/server/api/input_types/TemplateOptions.py +10 -0

- phoenix/server/api/input_types/TraceAnnotationSort.py +17 -0

- phoenix/server/api/input_types/UserRoleInput.py +9 -0

- phoenix/server/api/mutations/__init__.py +28 -0

- phoenix/server/api/mutations/api_key_mutations.py +167 -0

- phoenix/server/api/mutations/chat_mutations.py +593 -0

- phoenix/server/api/mutations/dataset_mutations.py +591 -0

- phoenix/server/api/mutations/experiment_mutations.py +75 -0

- phoenix/server/api/{types/ExportEventsMutation.py → mutations/export_events_mutations.py} +21 -18

- phoenix/server/api/mutations/project_mutations.py +57 -0

- phoenix/server/api/mutations/span_annotations_mutations.py +128 -0

- phoenix/server/api/mutations/trace_annotations_mutations.py +127 -0

- phoenix/server/api/mutations/user_mutations.py +329 -0

- phoenix/server/api/openapi/__init__.py +0 -0

- phoenix/server/api/openapi/main.py +17 -0

- phoenix/server/api/openapi/schema.py +16 -0

- phoenix/server/api/queries.py +738 -0

- phoenix/server/api/routers/__init__.py +11 -0

- phoenix/server/api/routers/auth.py +284 -0

- phoenix/server/api/routers/embeddings.py +26 -0

- phoenix/server/api/routers/oauth2.py +488 -0

- phoenix/server/api/routers/v1/__init__.py +64 -0

- phoenix/server/api/routers/v1/datasets.py +1017 -0

- phoenix/server/api/routers/v1/evaluations.py +362 -0

- phoenix/server/api/routers/v1/experiment_evaluations.py +115 -0

- phoenix/server/api/routers/v1/experiment_runs.py +167 -0

- phoenix/server/api/routers/v1/experiments.py +308 -0

- phoenix/server/api/routers/v1/pydantic_compat.py +78 -0

- phoenix/server/api/routers/v1/spans.py +267 -0

- phoenix/server/api/routers/v1/traces.py +208 -0

- phoenix/server/api/routers/v1/utils.py +95 -0

- phoenix/server/api/schema.py +44 -241

- phoenix/server/api/subscriptions.py +597 -0

- phoenix/server/api/types/Annotation.py +21 -0

- phoenix/server/api/types/AnnotationSummary.py +55 -0

- phoenix/server/api/types/AnnotatorKind.py +16 -0

- phoenix/server/api/types/ApiKey.py +27 -0

- phoenix/server/api/types/AuthMethod.py +9 -0

- phoenix/server/api/types/ChatCompletionMessageRole.py +11 -0

- phoenix/server/api/types/ChatCompletionSubscriptionPayload.py +46 -0

- phoenix/server/api/types/Cluster.py +25 -24

- phoenix/server/api/types/CreateDatasetPayload.py +8 -0

- phoenix/server/api/types/DataQualityMetric.py +31 -13

- phoenix/server/api/types/Dataset.py +288 -63

- phoenix/server/api/types/DatasetExample.py +85 -0

- phoenix/server/api/types/DatasetExampleRevision.py +34 -0

- phoenix/server/api/types/DatasetVersion.py +14 -0

- phoenix/server/api/types/Dimension.py +32 -31

- phoenix/server/api/types/DocumentEvaluationSummary.py +9 -8

- phoenix/server/api/types/EmbeddingDimension.py +56 -49

- phoenix/server/api/types/Evaluation.py +25 -31

- phoenix/server/api/types/EvaluationSummary.py +30 -50

- phoenix/server/api/types/Event.py +20 -20

- phoenix/server/api/types/ExampleRevisionInterface.py +14 -0

- phoenix/server/api/types/Experiment.py +152 -0

- phoenix/server/api/types/ExperimentAnnotationSummary.py +13 -0

- phoenix/server/api/types/ExperimentComparison.py +17 -0

- phoenix/server/api/types/ExperimentRun.py +119 -0

- phoenix/server/api/types/ExperimentRunAnnotation.py +56 -0

- phoenix/server/api/types/GenerativeModel.py +9 -0

- phoenix/server/api/types/GenerativeProvider.py +85 -0

- phoenix/server/api/types/Inferences.py +80 -0

- phoenix/server/api/types/InferencesRole.py +23 -0

- phoenix/server/api/types/LabelFraction.py +7 -0

- phoenix/server/api/types/MimeType.py +2 -2

- phoenix/server/api/types/Model.py +54 -54

- phoenix/server/api/types/PerformanceMetric.py +8 -5

- phoenix/server/api/types/Project.py +407 -142

- phoenix/server/api/types/ProjectSession.py +139 -0

- phoenix/server/api/types/Segments.py +4 -4

- phoenix/server/api/types/Span.py +221 -176

- phoenix/server/api/types/SpanAnnotation.py +43 -0

- phoenix/server/api/types/SpanIOValue.py +15 -0

- phoenix/server/api/types/SystemApiKey.py +9 -0

- phoenix/server/api/types/TemplateLanguage.py +10 -0

- phoenix/server/api/types/TimeSeries.py +19 -15

- phoenix/server/api/types/TokenUsage.py +11 -0

- phoenix/server/api/types/Trace.py +154 -0

- phoenix/server/api/types/TraceAnnotation.py +45 -0

- phoenix/server/api/types/UMAPPoints.py +7 -7

- phoenix/server/api/types/User.py +60 -0

- phoenix/server/api/types/UserApiKey.py +45 -0

- phoenix/server/api/types/UserRole.py +15 -0

- phoenix/server/api/types/node.py +4 -112

- phoenix/server/api/types/pagination.py +156 -57

- phoenix/server/api/utils.py +34 -0

- phoenix/server/app.py +864 -115

- phoenix/server/bearer_auth.py +163 -0

- phoenix/server/dml_event.py +136 -0

- phoenix/server/dml_event_handler.py +256 -0

- phoenix/server/email/__init__.py +0 -0

- phoenix/server/email/sender.py +97 -0

- phoenix/server/email/templates/__init__.py +0 -0

- phoenix/server/email/templates/password_reset.html +19 -0

- phoenix/server/email/types.py +11 -0

- phoenix/server/grpc_server.py +102 -0

- phoenix/server/jwt_store.py +505 -0

- phoenix/server/main.py +305 -116

- phoenix/server/oauth2.py +52 -0

- phoenix/server/openapi/__init__.py +0 -0

- phoenix/server/prometheus.py +111 -0

- phoenix/server/rate_limiters.py +188 -0

- phoenix/server/static/.vite/manifest.json +87 -0

- phoenix/server/static/assets/components-Cy9nwIvF.js +2125 -0

- phoenix/server/static/assets/index-BKvHIxkk.js +113 -0

- phoenix/server/static/assets/pages-CUi2xCVQ.js +4449 -0

- phoenix/server/static/assets/vendor-DvC8cT4X.js +894 -0

- phoenix/server/static/assets/vendor-DxkFTwjz.css +1 -0

- phoenix/server/static/assets/vendor-arizeai-Do1793cv.js +662 -0

- phoenix/server/static/assets/vendor-codemirror-BzwZPyJM.js +24 -0

- phoenix/server/static/assets/vendor-recharts-_Jb7JjhG.js +59 -0

- phoenix/server/static/assets/vendor-shiki-Cl9QBraO.js +5 -0

- phoenix/server/static/assets/vendor-three-DwGkEfCM.js +2998 -0

- phoenix/server/telemetry.py +68 -0

- phoenix/server/templates/index.html +82 -23

- phoenix/server/thread_server.py +3 -3

- phoenix/server/types.py +275 -0

- phoenix/services.py +27 -18

- phoenix/session/client.py +743 -68

- phoenix/session/data_extractor.py +31 -7

- phoenix/session/evaluation.py +3 -9

- phoenix/session/session.py +263 -219

- phoenix/settings.py +22 -0

- phoenix/trace/__init__.py +2 -22

- phoenix/trace/attributes.py +338 -0

- phoenix/trace/dsl/README.md +116 -0

- phoenix/trace/dsl/filter.py +663 -213

- phoenix/trace/dsl/helpers.py +73 -21

- phoenix/trace/dsl/query.py +574 -201

- phoenix/trace/exporter.py +24 -19

- phoenix/trace/fixtures.py +368 -32

- phoenix/trace/otel.py +71 -219

- phoenix/trace/projects.py +3 -2

- phoenix/trace/schemas.py +33 -11

- phoenix/trace/span_evaluations.py +21 -16

- phoenix/trace/span_json_decoder.py +6 -4

- phoenix/trace/span_json_encoder.py +2 -2

- phoenix/trace/trace_dataset.py +47 -32

- phoenix/trace/utils.py +21 -4

- phoenix/utilities/__init__.py +0 -26

- phoenix/utilities/client.py +132 -0

- phoenix/utilities/deprecation.py +31 -0

- phoenix/utilities/error_handling.py +3 -2

- phoenix/utilities/json.py +109 -0

- phoenix/utilities/logging.py +8 -0

- phoenix/utilities/project.py +2 -2

- phoenix/utilities/re.py +49 -0

- phoenix/utilities/span_store.py +0 -23

- phoenix/utilities/template_formatters.py +99 -0

- phoenix/version.py +1 -1

- arize_phoenix-3.16.1.dist-info/METADATA +0 -495

- arize_phoenix-3.16.1.dist-info/RECORD +0 -178

- phoenix/core/project.py +0 -619

- phoenix/core/traces.py +0 -96

- phoenix/experimental/evals/__init__.py +0 -73

- phoenix/experimental/evals/evaluators.py +0 -413

- phoenix/experimental/evals/functions/__init__.py +0 -4

- phoenix/experimental/evals/functions/classify.py +0 -453

- phoenix/experimental/evals/functions/executor.py +0 -353

- phoenix/experimental/evals/functions/generate.py +0 -138

- phoenix/experimental/evals/functions/processing.py +0 -76

- phoenix/experimental/evals/models/__init__.py +0 -14

- phoenix/experimental/evals/models/anthropic.py +0 -175

- phoenix/experimental/evals/models/base.py +0 -170

- phoenix/experimental/evals/models/bedrock.py +0 -221

- phoenix/experimental/evals/models/litellm.py +0 -134

- phoenix/experimental/evals/models/openai.py +0 -448

- phoenix/experimental/evals/models/rate_limiters.py +0 -246

- phoenix/experimental/evals/models/vertex.py +0 -173

- phoenix/experimental/evals/models/vertexai.py +0 -186

- phoenix/experimental/evals/retrievals.py +0 -96

- phoenix/experimental/evals/templates/__init__.py +0 -50

- phoenix/experimental/evals/templates/default_templates.py +0 -472

- phoenix/experimental/evals/templates/template.py +0 -195

- phoenix/experimental/evals/utils/__init__.py +0 -172

- phoenix/experimental/evals/utils/threads.py +0 -27

- phoenix/server/api/helpers.py +0 -11

- phoenix/server/api/routers/evaluation_handler.py +0 -109

- phoenix/server/api/routers/span_handler.py +0 -70

- phoenix/server/api/routers/trace_handler.py +0 -60

- phoenix/server/api/types/DatasetRole.py +0 -23

- phoenix/server/static/index.css +0 -6

- phoenix/server/static/index.js +0 -7447

- phoenix/storage/span_store/__init__.py +0 -23

- phoenix/storage/span_store/text_file.py +0 -85

- phoenix/trace/dsl/missing.py +0 -60

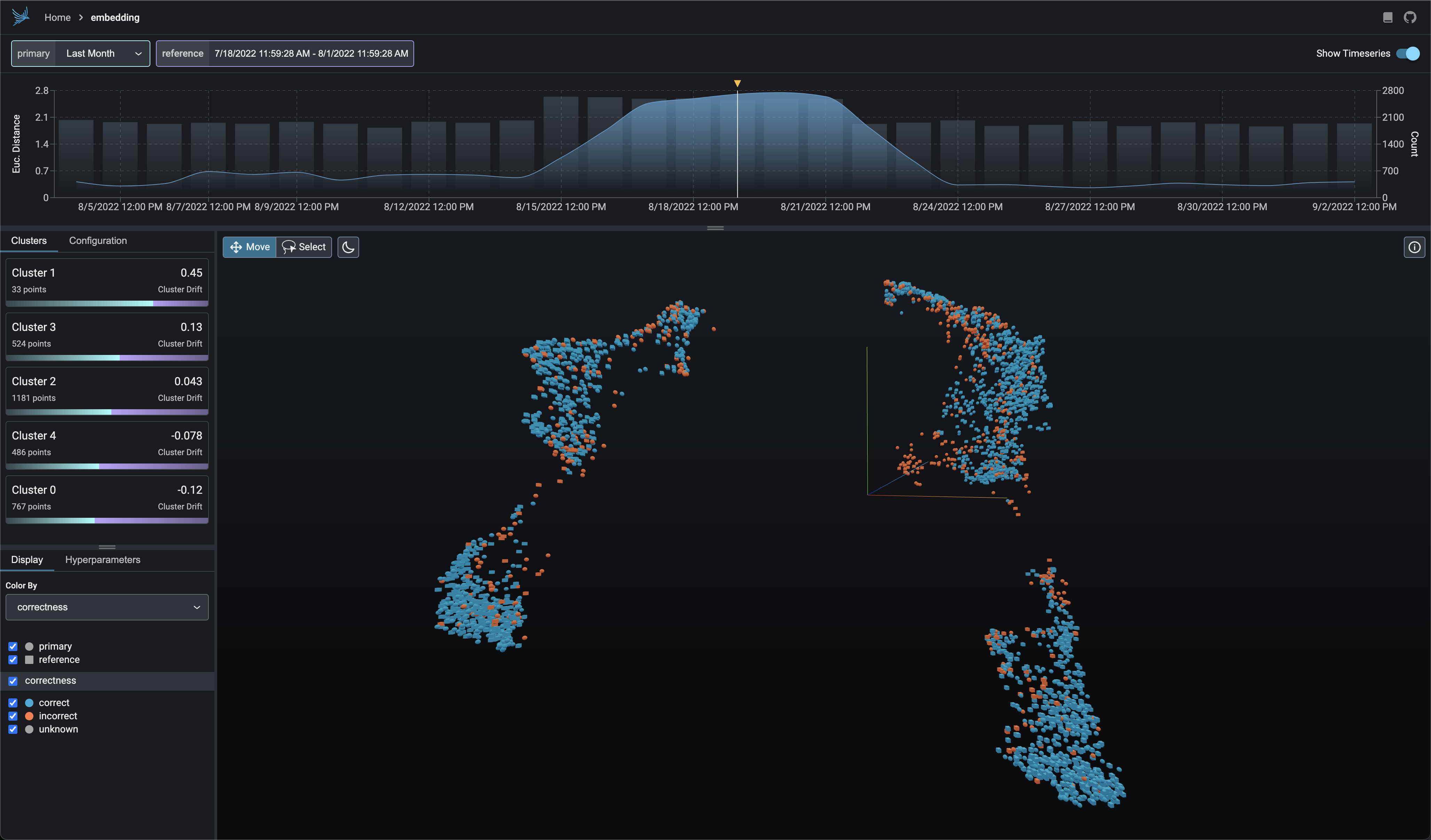

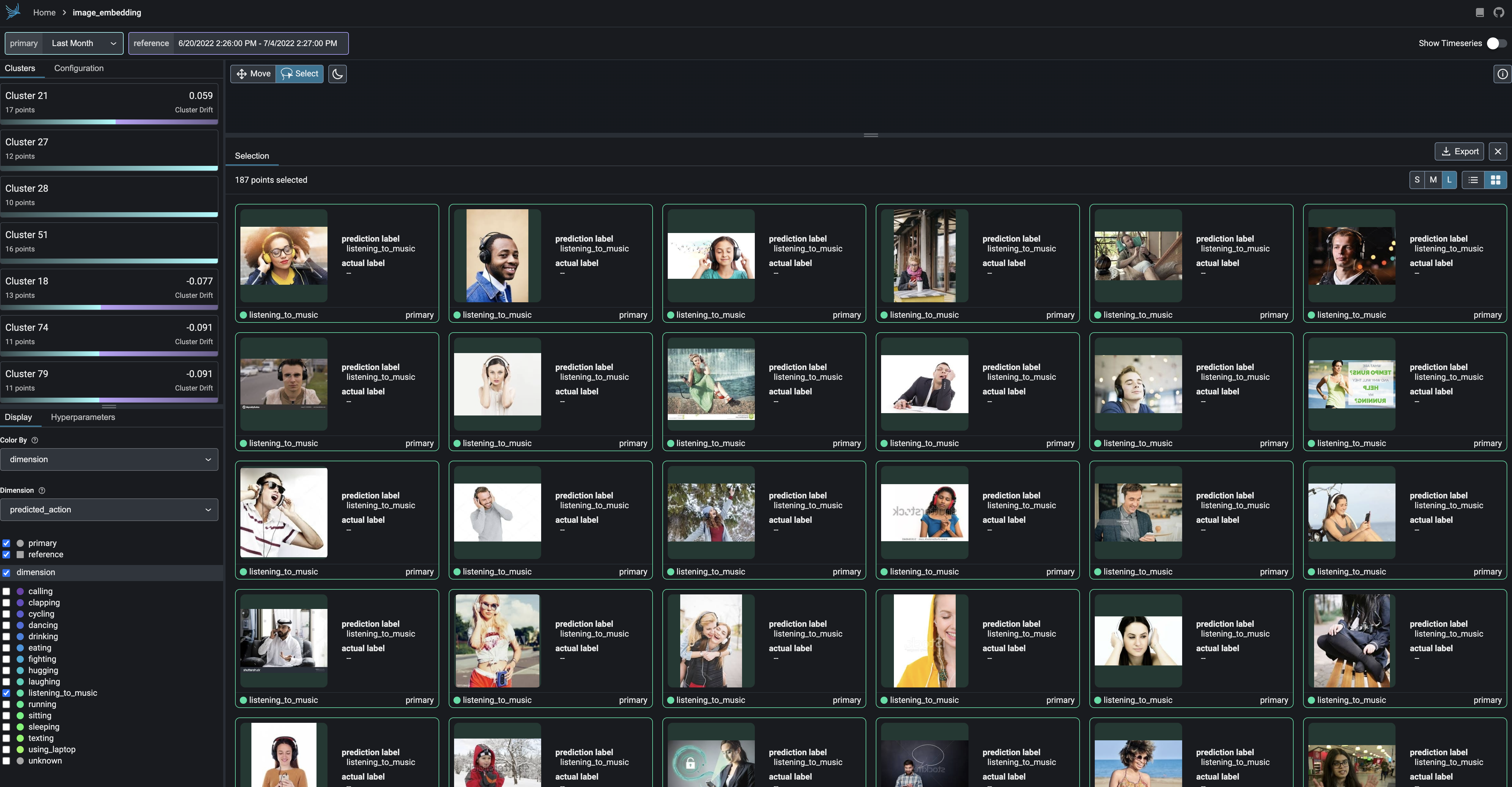

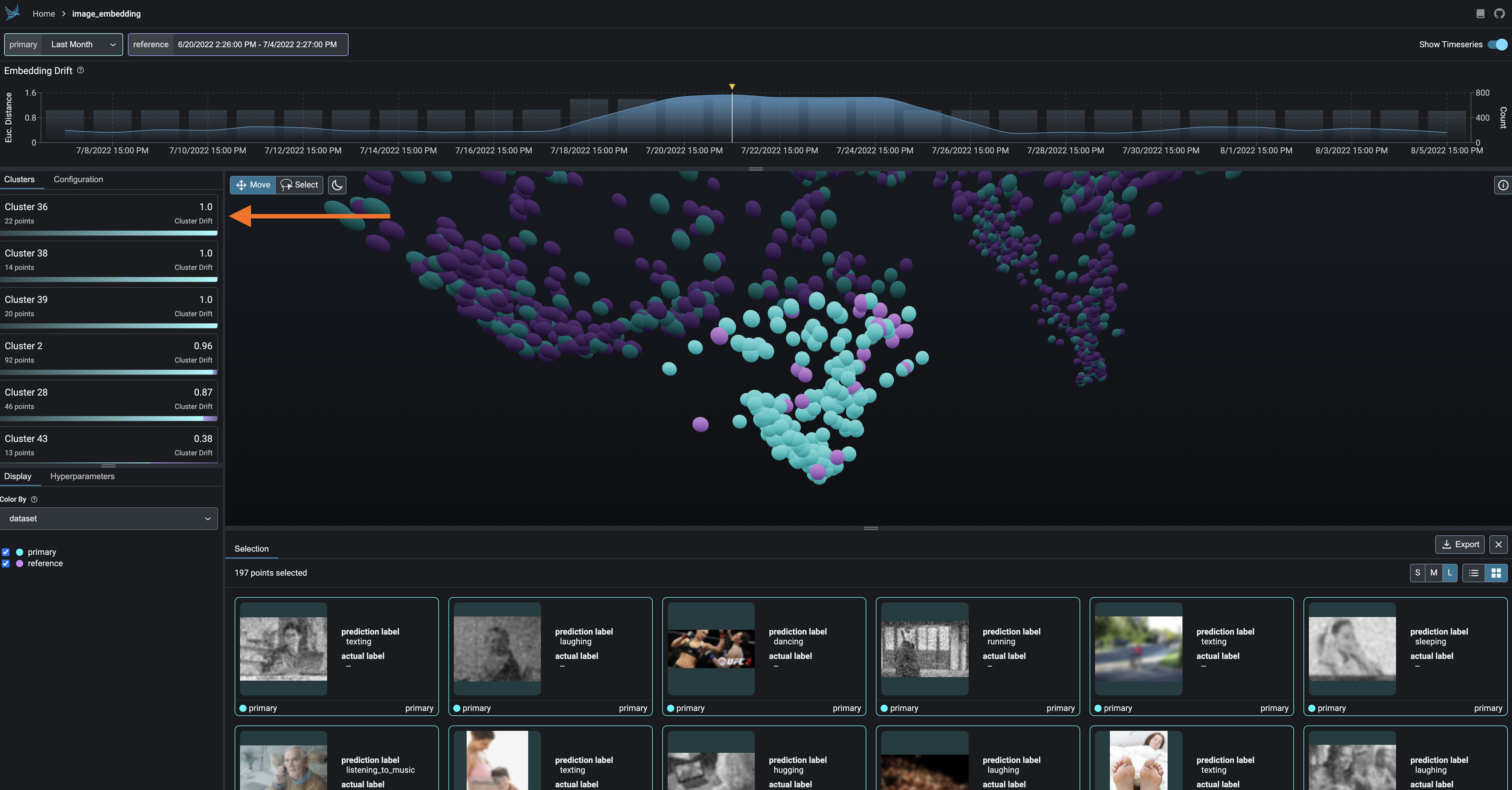

- phoenix/trace/langchain/__init__.py +0 -3

- phoenix/trace/langchain/instrumentor.py +0 -35

- phoenix/trace/llama_index/__init__.py +0 -3

- phoenix/trace/llama_index/callback.py +0 -102

- phoenix/trace/openai/__init__.py +0 -3

- phoenix/trace/openai/instrumentor.py +0 -30

- {arize_phoenix-3.16.1.dist-info → arize_phoenix-7.7.0.dist-info}/licenses/IP_NOTICE +0 -0

- {arize_phoenix-3.16.1.dist-info → arize_phoenix-7.7.0.dist-info}/licenses/LICENSE +0 -0

- /phoenix/{datasets → db/insertion}/__init__.py +0 -0

- /phoenix/{experimental → db/migrations}/__init__.py +0 -0

- /phoenix/{storage → db/migrations/data_migration_scripts}/__init__.py +0 -0

phoenix/utilities/template_formatters.py

ADDED

@@ -0,0 +1,99 @@
+import re
+from abc import ABC, abstractmethod
+from collections.abc import Iterable
+from string import Formatter
+from typing import Any
+
+
+class TemplateFormatter(ABC):
+    @abstractmethod
+    def parse(self, template: str) -> set[str]:
+        """
+        Parse the template and return a set of variable names.
+        """
+        raise NotImplementedError
+
+    def format(self, template: str, **variables: Any) -> str:
+        """
+        Formats the template with the given variables.
+        """
+        template_variable_names = self.parse(template)
+        if missing_template_variables := template_variable_names - set(variables.keys()):
+            raise TemplateFormatterError(
+                f"Missing template variable(s): {', '.join(missing_template_variables)}"
+            )
+        return self._format(template, template_variable_names, **variables)
+
+    @abstractmethod
+    def _format(self, template: str, variable_names: Iterable[str], **variables: Any) -> str:
+        raise NotImplementedError
+
+
+class NoOpFormatter(TemplateFormatter):
+    """
+    No-op template formatter.
+
+    Examples:
+
+    >>> formatter = NoOpFormatter()
+    >>> formatter.format("hello")
+    'hello'
+    """
+
+    def parse(self, template: str) -> set[str]:
+        return set()
+
+    def _format(self, template: str, *args: Any, **variables: Any) -> str:
+        return template
+
+
+class FStringTemplateFormatter(TemplateFormatter):
+    """
+    Regular f-string template formatter.
+
+    Examples:
+
+    >>> formatter = FStringTemplateFormatter()
+    >>> formatter.format("{hello}", hello="world")
+    'world'
+    """
+
+    def parse(self, template: str) -> set[str]:
+        return set(field_name for _, field_name, _, _ in Formatter().parse(template) if field_name)
+
+    def _format(self, template: str, variable_names: Iterable[str], **variables: Any) -> str:
+        return template.format(**variables)
+
+
+class MustacheTemplateFormatter(TemplateFormatter):
+    """
+    Mustache template formatter.
+
+    Examples:
+
+    >>> formatter = MustacheTemplateFormatter()
+    >>> formatter.format("{{ hello }}", hello="world")
+    'world'
+    """
+
+    PATTERN = re.compile(r"(?<!\\){{\s*(\w+)\s*}}")
+
+    def parse(self, template: str) -> set[str]:
+        return set(match for match in re.findall(self.PATTERN, template))
+
+    def _format(self, template: str, variable_names: Iterable[str], **variables: Any) -> str:
+        for variable_name in variable_names:
+            template = re.sub(
+                pattern=rf"(?<!\\){{{{\s*{variable_name}\s*}}}}",
+                repl=variables[variable_name],
+                string=template,
+            )
+        return template
+
+
+class TemplateFormatterError(Exception):
+    """
+    An error raised when template formatting fails.
+    """
+
+    pass
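The following is a minimal, standalone sketch of the parse-then-substitute flow that `MustacheTemplateFormatter` follows in the hunk above. It reuses the `PATTERN` regex shown in the diff. The `parse` and `render` functions are hypothetical helpers written for illustration and are not part of the `phoenix` package. Substituting through a function replacement, and raising `ValueError`, are assumptions of this sketch rather than what the packaged code does.

```python
import re

# Same regex as MustacheTemplateFormatter.PATTERN in the diff:
# matches {{ name }} unless the opening braces are preceded by a backslash.
PATTERN = re.compile(r"(?<!\\){{\s*(\w+)\s*}}")


def parse(template: str) -> set[str]:
    """Return the variable names used by unescaped {{ name }} placeholders."""
    return set(PATTERN.findall(template))


def render(template: str, **variables: str) -> str:
    """Hypothetical helper: fill every placeholder or fail on missing names."""
    missing = parse(template) - set(variables)
    if missing:
        raise ValueError(f"Missing template variable(s): {', '.join(sorted(missing))}")
    # A function replacement inserts each value literally, so backslashes
    # in the value are not treated as regex replacement escapes.
    return PATTERN.sub(lambda match: variables[match.group(1)], template)


if __name__ == "__main__":
    print(parse("{{ a }} and \\{{ b }}"))
    print(render("Hello, {{ name }}!", name="world"))
```

The function replacement is a deliberate choice in this sketch: when `re.sub` receives a string as `repl`, it processes backslash escapes in that string, so a value such as `C:\new` would not be inserted verbatim.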

phoenix/version.py

CHANGED

@@ -1 +1 @@
-__version__ = "
+__version__ = "7.7.0"

@@ -1,495 +0,0 @@

|

|

|

1

|

-

Metadata-Version: 2.3

|

|

2

|

-

Name: arize-phoenix

|

|

3

|

-

Version: 3.16.1

|

|

4

|

-

Summary: AI Observability and Evaluation

|

|

5

|

-

Project-URL: Documentation, https://docs.arize.com/phoenix/

|

|

6

|

-

Project-URL: Issues, https://github.com/Arize-ai/phoenix/issues

|

|

7

|

-

Project-URL: Source, https://github.com/Arize-ai/phoenix

|

|

8

|

-

Author-email: Arize AI <phoenix-devs@arize.com>

|

|

9

|

-

License-Expression: Elastic-2.0

|

|

10

|

-

License-File: IP_NOTICE

|

|

11

|

-

License-File: LICENSE

|

|

12

|

-

Keywords: Explainability,Monitoring,Observability

|

|

13

|

-

Classifier: Programming Language :: Python

|

|

14

|

-

Classifier: Programming Language :: Python :: 3.8

|

|

15

|

-

Classifier: Programming Language :: Python :: 3.9

|

|

16

|

-

Classifier: Programming Language :: Python :: 3.10

|

|

17

|

-

Classifier: Programming Language :: Python :: 3.11

|

|

18

|

-

Classifier: Programming Language :: Python :: 3.12

|

|

19

|

-

Requires-Python: <3.13,>=3.8

|

|

20

|

-

Requires-Dist: ddsketch

|

|

21

|

-

Requires-Dist: hdbscan>=0.8.33

|

|

22

|

-

Requires-Dist: jinja2

|

|

23

|

-

Requires-Dist: numpy

|

|

24

|

-

Requires-Dist: openinference-instrumentation-langchain>=0.1.12

|

|

25

|

-

Requires-Dist: openinference-instrumentation-llama-index>=1.2.0

|

|

26

|

-

Requires-Dist: openinference-instrumentation-openai>=0.1.4

|

|

27

|

-

Requires-Dist: openinference-semantic-conventions>=0.1.5

|

|

28

|

-

Requires-Dist: opentelemetry-exporter-otlp

|

|

29

|

-

Requires-Dist: opentelemetry-proto

|

|

30

|

-

Requires-Dist: opentelemetry-sdk

|

|

31

|

-

Requires-Dist: pandas

|

|

32

|

-

Requires-Dist: protobuf<5.0,>=3.20

|

|

33

|

-

Requires-Dist: psutil

|

|

34

|

-

Requires-Dist: pyarrow

|

|

35

|

-

Requires-Dist: requests

|

|

36

|

-

Requires-Dist: scikit-learn

|

|

37

|

-

Requires-Dist: scipy

|

|

38

|

-

Requires-Dist: sortedcontainers

|

|

39

|

-

Requires-Dist: starlette

|

|

40

|

-

Requires-Dist: strawberry-graphql==0.208.2

|

|

41

|

-

Requires-Dist: tqdm

|

|

42

|

-

Requires-Dist: typing-extensions>=4.5; python_version < '3.12'

|

|

43

|

-

Requires-Dist: typing-extensions>=4.6; python_version >= '3.12'

|

|

44

|

-

Requires-Dist: umap-learn

|

|

45

|

-

Requires-Dist: uvicorn

|

|

46

|

-

Requires-Dist: wrapt

|

|

47

|

-

Provides-Extra: dev

|

|

48

|

-

Requires-Dist: anthropic; extra == 'dev'

|

|

49

|

-

Requires-Dist: arize[autoembeddings,llm-evaluation]; extra == 'dev'

|

|

50

|

-

Requires-Dist: gcsfs; extra == 'dev'

|

|

51

|

-

Requires-Dist: google-cloud-aiplatform>=1.3; extra == 'dev'

|

|

52

|

-

Requires-Dist: hatch; extra == 'dev'

|

|

53

|

-

Requires-Dist: jupyter; extra == 'dev'

|

|

54

|

-

Requires-Dist: langchain>=0.0.334; extra == 'dev'

|

|

55

|

-

Requires-Dist: litellm>=1.0.3; extra == 'dev'

|

|

56

|

-

Requires-Dist: llama-index>=0.10.3; extra == 'dev'

|

|

57

|

-

Requires-Dist: nbqa; extra == 'dev'

|

|

58

|

-

Requires-Dist: pandas-stubs<=2.0.2.230605; extra == 'dev'

|

|

59

|

-

Requires-Dist: pre-commit; extra == 'dev'

|

|

60

|

-

Requires-Dist: pytest-asyncio; extra == 'dev'

|

|

61

|

-

Requires-Dist: pytest-cov; extra == 'dev'

|

|

62

|

-

Requires-Dist: pytest-lazy-fixture; extra == 'dev'

|

|

63

|

-

Requires-Dist: pytest==7.4.4; extra == 'dev'

|

|

64

|

-

Requires-Dist: ruff==0.3.0; extra == 'dev'

|

|

65

|

-

Requires-Dist: strawberry-graphql[debug-server]==0.208.2; extra == 'dev'

|

|

66

|

-

Provides-Extra: evals

|

|

67

|

-

Requires-Dist: arize-phoenix-evals>=0.3.0; extra == 'evals'

|

|

68

|

-

Provides-Extra: experimental

|

|

69

|

-

Requires-Dist: tenacity; extra == 'experimental'

|

|

70

|

-

Provides-Extra: llama-index

|

|

71

|

-

Requires-Dist: llama-index-callbacks-arize-phoenix>=0.1.2; extra == 'llama-index'

|

|

72

|

-

Requires-Dist: llama-index==0.10.3; extra == 'llama-index'

|

|

73

|

-

Requires-Dist: openinference-instrumentation-llama-index>=1.2.0; extra == 'llama-index'

|

|

74

|

-

Description-Content-Type: text/markdown

|

|

75

|

-

|

|

76

|

-

<p align="center">

|

|

77

|

-

<a target="_blank" href="https://phoenix.arize.com" style="background:none">

|

|

78

|

-

<img alt="phoenix logo" src="https://storage.googleapis.com/arize-assets/phoenix/assets/phoenix-logo-light.svg" width="auto" height="200"></img>

|

|

79

|

-

</a>

|

|

80

|

-

<br/>

|

|

81

|

-

<br/>

|

|

82

|

-

<a href="https://docs.arize.com/phoenix/">

|

|

83

|

-

<img src="https://img.shields.io/static/v1?message=Docs&logo=data:image/png;base64,iVBORw0KGgoAAAANSUhEUgAAAIAAAACACAYAAADDPmHLAAAG4ElEQVR4nO2d4XHjNhCFcTf+b3ZgdWCmgmMqOKUC0xXYrsBOBVEqsFRB7ApCVRCygrMriFQBM7h5mNlwKBECARLg7jeDscamSQj7sFgsQfBL27ZK4MtXsT1vRADMEQEwRwTAHBEAc0QAzBEBMEcEwBwRAHNEAMwRATBnjAByFGE+MqVUMcYOY24GVUqpb/h8VErVKAf87QNFcEcbd4WSw+D6803njHscO5sATmGEURGBiCj6yUlv1uX2gv91FsDViArbcA2RUKF8QhAV8RQc0b15DcOt0VaTE1oAfWj3dYdCBfGGsmSM0XX5HsP3nEMAXbqCeCdiOERQPx9og5exGJ0S4zRQN9KrUupfpdQWjZciure/YIj7K0bjqwTyAHdovA805iqCOg2xgnB1nZ97IvaoSCURdIPG/IHGjTH/YAz/A8KdJai7lBQzgbpx/0Hg6DT18UzWMXxSjMkDrElPNEmKfAbl6znwI3IMU/OCa0/1nfckwWaSbvWYYDnEsvCMJDNckhqu7GCMKWYOBXp9yPGd5kvqUAKf6rkAk7M2SY9QDXdEr9wEOr9x96EiejMFnixBNteDISsyNw7hHRqc22evWcP4vt39O85bzZH30AKg4+eo8cQRI4bHAJ7hyYM3CNHrG9RrimSXuZmUkZjN/O6nAPpcwCcJNmipAle2QM/1GU3vITCXhvY91u9geN/jOY27VuTnYL1PCeAcRhwh7/Bl8Ai+IuxPiOCShtfX/sPDtY8w+sZjby86dw6dBeoigD7obd/Ko6fI4BF8DA9HnGdrcU0fLt+n4dfE6H5jpjYcVdu2L23b5lpjHoo+18FDbcszddF1rUee/4C6ZiO+80rHZmjDoIQUQLdRtm3brkcKIUPjjqVPBIUHgW1GGN4YfawAL2IqAVB8iEE31tvIelARlCPPVaFOLoIupzY6xVcM4MoRUyHXyHhslH6PaPl5RP1Lh4UsOeKR2e8dzC0Aiuvc2Nx3fwhfxf/hknouUYbWUk5GTAIwmOh5e+H0cor8vEL91hfOdEqINLq1AV+RKImJ6869f9tFIBVc6y7gd3lHfWyNX0LEr7EuDElhRdAlQjig0e/RU31xxDltM4pF7IY3pLIgxAhhgzF/iC2M0Hi4dkOGlyGMd/g7dsMbUlsR9ICe9WhxbA3DjRkSdjiHzQzlBSKNJsCzIcUlYdfI0dcWS8LMkPDkcJ0n/O+Qyy/IAtDkSPnp4Fu4WpthQR/zm2VcoI/51fI28iYld9/HEh4Pf7D0Bm845pwIPnHMUJSf45pT5x68s5T9AW6INzhHDeP1BYcNMew5SghkinWOwVnaBhHGG5ybMn70zBDe8buh8X6DqV0Sa/5tWOIOIbcWQ8KBiGBnMb/P0OuTd/lddCrY5jn/VLm3nL+fY4X4YREuv8vS9wh6HSkAExMs0viKySZRd44iyOH2FzPe98Fll7A7GNMmjay4GF9BAKGXesfCN0sRsDG+YrhP4O2ACFgZXzHdKPL2RMJoxc34ivFOod3AMMNUj5XxFfOtYrUIXvB5MandS+G+V/AzZ+MrEcBPlpoFtUIEwBwRAG+OIgDe1CIA5ogAmCMCYI4IgDkiAOaIAJgjAmCOCIA5IgDmiACYIwJgjgiAOSIA5ogAmCMCYI4IgDkiAOaIAJgjAmCOCIA5IgDmiACYIwJgjgiAOSIA5ogAmCMCYI4IgDkiAOaIAJgjAmDOVYBXvwvxQV8NWJOd0esvJ94babZaz7B5ovldxnlDpYhp0JFr/KTlLKcEMMQKpcDPXIQxGXsYmhZnXAXQh/EWBQrr3bc80mATyyrEvs4+BdBHgbdxFOIhrDkSg1/6Iu2LCS0AyoqI4ftUF00EY/Q3h1fRj2JKA
VCMGErmnsH1lfnemEsAlByvgl0z2qx5B8OPCuB8EIMADBlEEOV79j1whNE3c/X2PmISAGUNr7CEmUSUhjfEKgBDAY+QohCiNrwhdgEYzPv7UxkadvBg0RrekMrNoAozh3vLN4DPhc7S/WL52vkoSO1u4BZC+DOCulC0KJ/gqWaP7C8hlSGgjxyCmDuPsEePT/KuasrrAcyr4H+f6fq01yd7Sz1lD0CZ2hs06PVJufs+lrIiyLwufjfBtXYpjvWnWIoHoJSYe4dIK/t4HX1ULFEACkPCm8e8wXFJvZ6y1EWhJkDcWxw7RINzLc74auGrgg8e4oIm9Sh/CA7LwkvHqaIJ9pLI6Lmy1BigDy2EV8tjdzh+8XB6MGSLKH4INsZXDJ8MGhIBK+Mrpo+GnRIBO+MrZjFAFxoTNBwCvj6u4qvSZJiM3iNX4yvmHoA9Sh4PF0QAzBEBMEcEwBwRAHNEAMwRAXBGKfUfr5hKvglRfO4AAAAASUVORK5CYII=&labelColor=grey&color=blue&logoColor=white&label=%20"/>

|

|

84

|

-

</a>

|

|

85

|

-

<a target="_blank" href="https://join.slack.com/t/arize-ai/shared_invite/zt-1px8dcmlf-fmThhDFD_V_48oU7ALan4Q">

|

|

86

|

-

<img src="https://img.shields.io/static/v1?message=Community&logo=slack&labelColor=grey&color=blue&logoColor=white&label=%20"/>

|

|

87

|

-

</a>

|

|

88

|

-

<a target="_blank" href="https://twitter.com/ArizePhoenix">

|

|

89

|

-

<img src="https://img.shields.io/badge/-ArizePhoenix-blue.svg?color=blue&labelColor=gray&logo=twitter">

|

|

90

|

-

</a>

|

|

91

|

-

<a target="_blank" href="https://pypi.org/project/arize-phoenix/">

|

|

92

|

-

<img src="https://img.shields.io/pypi/v/arize-phoenix?color=blue">

|

|

93

|

-

</a>

|

|

94

|

-

<a target="_blank" href="https://anaconda.org/conda-forge/arize-phoenix">

|

|

95

|

-

<img src="https://img.shields.io/conda/vn/conda-forge/arize-phoenix.svg?color=blue">

|

|

96

|

-

</a>

|

|

97

|

-

<a target="_blank" href="https://pypi.org/project/arize-phoenix/">

|

|

98

|

-

<img src="https://img.shields.io/pypi/pyversions/arize-phoenix">

|

|

99

|

-

</a>

|

|

100

|

-

<a target="_blank" href="https://hub.docker.com/repository/docker/arizephoenix/phoenix/general">

|

|

101

|

-

<img src="https://img.shields.io/docker/v/arizephoenix/phoenix?sort=semver&logo=docker&label=image&color=blue">

|

|

102

|

-

</a>

|

|

103

|

-

</p>

|

|

104

|

-

|

|

105

|

-

|

|

106

|

-

|

|

107

|

-

Phoenix provides MLOps and LLMOps insights at lightning speed with zero-config observability. Phoenix provides a notebook-first experience for monitoring your models and LLM Applications by providing:

|

|

108

|

-

|

|

109

|

-

- **LLM Traces** - Trace through the execution of your LLM Application to understand the internals of your LLM Application and to troubleshoot problems related to things like retrieval and tool execution.

|

|

110

|

-

- **LLM Evals** - Leverage the power of large language models to evaluate your generative model or application's relevance, toxicity, and more.

|

|

111

|

-

- **Embedding Analysis** - Explore embedding point-clouds and identify clusters of high drift and performance degradation.

|

|

112

|

-

- **RAG Analysis** - Visualize your generative application's search and retrieval process to identify problems and improve your RAG pipeline.

|

|

113

|

-

- **Structured Data Analysis** - Statistically analyze your structured data by performing A/B analysis, temporal drift analysis, and more.

|

|

114

|

-

|

|

115

|

-

**Table of Contents**

|

|

116

|

-

|

|

117

|

-

- [Installation](#installation)

|

|

118

|

-

- [LLM Traces](#llm-traces)

|

|

119

|

-

- [Tracing with LlamaIndex](#tracing-with-llamaindex)

|

|

120

|

-

- [Tracing with LangChain](#tracing-with-langchain)

|

|

121

|

-

- [LLM Evals](#llm-evals)

|

|

122

|

-

- [Embedding Analysis](#embedding-analysis)

|

|

123

|

-

- [UMAP-based Exploratory Data Analysis](#umap-based-exploratory-data-analysis)

|

|

124

|

-

- [Cluster-driven Drift and Performance Analysis](#cluster-driven-drift-and-performance-analysis)

|

|

125

|

-

- [Exportable Clusters](#exportable-clusters)

|

|

126

|

-

- [Retrieval-Augmented Generation Analysis](#retrieval-augmented-generation-analysis)

|

|

127

|

-

- [Structured Data Analysis](#structured-data-analysis)

|

|

128

|

-

- [Deploying Phoenix](#deploying-phoenix)

|

|

129

|

-

- [Breaking Changes](#breaking-changes)

|

|

130

|

-

- [Community](#community)

|

|

131

|

-

- [Thanks](#thanks)

|

|

132

|

-

- [Copyright, Patent, and License](#copyright-patent-and-license)

|

|

133

|

-

|

|

134

|

-

## Installation

|

|

135

|

-

|

|

136

|

-

Install Phoenix via `pip` or `conda`, along with any of its subpackages.

|

|

137

|

-

|

|

138

|

-

```shell

|

|

139

|

-

pip install arize-phoenix[evals]

|

|

140

|

-

```

|

|

141

|

-

|

|

142

|

-

> [!NOTE]

|

|

143

|

-

> The above will install Phoenix and its `evals` subpackage. To just install phoenix's evaluation package, you can run `pip install arize-phoenix-evals` instead.

|

|

144

|

-

|

|

145

|

-

## LLM Traces

|

|

146

|

-

|

|

147

|

-

|

|

148

|

-

|

|

149

|

-

With the advent of powerful LLMs, it is now possible to build LLM Applications that can perform complex tasks like summarization, translation, question answering, and more. However, these applications are often difficult to debug and troubleshoot because they have an extensive surface area: search and retrieval via vector stores, embedding generation, usage of external tools, and so on. Phoenix provides a tracing framework that allows you to trace through the execution of your LLM Application hierarchically. This allows you to understand the internals of your LLM Application and to troubleshoot the complex components of your application. Phoenix is built on top of the OpenInference tracing standard and uses it to trace, export, and collect critical information about your LLM Application in the form of `spans`. For more details on the OpenInference tracing standard, see the [OpenInference Specification](https://github.com/Arize-ai/openinference).
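To make the idea of hierarchical tracing concrete, the sketch below models spans as plain records linked by a parent ID and renders them as an indented tree. The `Span` class, its field names, and the example span names are illustrative assumptions; they are not the OpenInference schema or Phoenix's API.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Span:
    # Hypothetical, simplified span record (not the OpenInference schema).
    span_id: str
    parent_id: Optional[str]
    name: str
    latency_ms: float


def render_tree(spans: List[Span]) -> List[str]:
    """Return one indented line per span, with children listed under their parent."""
    children: Dict[Optional[str], List[Span]] = {}
    for span in spans:
        children.setdefault(span.parent_id, []).append(span)

    lines: List[str] = []

    def walk(parent_id: Optional[str], depth: int) -> None:
        for span in children.get(parent_id, []):
            lines.append(f"{'  ' * depth}{span.name} ({span.latency_ms:.0f} ms)")
            walk(span.span_id, depth + 1)

    walk(None, 0)  # root spans have no parent
    return lines


# A made-up trace of a single RAG query.
trace = [
    Span("1", None, "query", 1200.0),
    Span("2", "1", "retrieve", 300.0),
    Span("3", "2", "embedding", 80.0),
    Span("4", "1", "llm", 850.0),
]
tree_lines = render_tree(trace)
print("\n".join(tree_lines))
```

Reading the tree top-down shows which component (retrieval, embedding, or the LLM call) accounts for most of the latency of a request.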

|

|

150

|

-

|

|

151

|

-

### Tracing with LlamaIndex

|

|

152

|

-

|

|

153

|

-

[Open in Colab](https://colab.research.google.com/github/Arize-ai/phoenix/blob/main/tutorials/tracing/llama_index_tracing_tutorial.ipynb) [View on GitHub](https://github.com/Arize-ai/phoenix/blob/main/tutorials/tracing/llama_index_tracing_tutorial.ipynb)

|

|

154

|

-

|

|

155

|

-

|

|

156

|

-

|

|

157

|

-

To extract traces from your LlamaIndex application, you will have to add Phoenix's `OpenInferenceTraceCallback` to your LlamaIndex application. A callback (in this case an OpenInference `Tracer`) is a class that automatically accumulates `spans` that trace your application as it executes. The OpenInference `Tracer` is specifically designed to work with Phoenix and by default exports the traces to a locally running Phoenix server.

|

|

158

|

-

|

|

159

|

-

```shell

|

|

160

|

-

# Install phoenix as well as llama_index and your LLM of choice

|

|

161

|

-

pip install "arize-phoenix[evals]" "openai>=1" "llama-index>=0.10.3" "openinference-instrumentation-llama-index>=1.0.0" "llama-index-callbacks-arize-phoenix>=0.1.2" llama-index-llms-openai

|

|

162

|

-

```

|

|

163

|

-

|

|

164

|

-

Launch Phoenix in a notebook and view the traces of your LlamaIndex application in the Phoenix UI.

|

|

165

|

-

|

|

166

|

-

```python

|

|

167

|

-

import os

|

|

168

|

-

import phoenix as px

|

|

169

|

-

from llama_index.core import (

|

|

170

|

-

Settings,

|

|

171

|

-

VectorStoreIndex,

|

|

172

|

-

SimpleDirectoryReader,

|

|

173

|

-

set_global_handler,

|

|

174

|

-

)

|

|

175

|

-

from llama_index.embeddings.openai import OpenAIEmbedding

|

|

176

|

-

from llama_index.llms.openai import OpenAI

|

|

177

|

-

|

|

178

|

-

os.environ["OPENAI_API_KEY"] = "YOUR_OPENAI_API_KEY"

|

|

179

|

-

|

|

180

|

-

# To view traces in Phoenix, you will first have to start a Phoenix server. You can do this by running the following:

|

|

181

|

-

session = px.launch_app()

|

|

182

|

-

|

|

183

|

-

|

|

184

|

-

# Once you have started a Phoenix server, you can start your LlamaIndex application and configure it to send traces to Phoenix. To do this, you will have to configure Phoenix as the global handler.

|

|

185

|

-

|

|

186

|

-

set_global_handler("arize_phoenix")

|

|

187

|

-

|

|

188

|

-

|

|

189

|

-

# LlamaIndex application initialization may vary

|

|

190

|

-

# depending on your application

|

|

191

|

-

Settings.llm = OpenAI(model="gpt-4-turbo-preview")

|

|

192

|

-

Settings.embed_model = OpenAIEmbedding(model="text-embedding-ada-002")

|

|

193

|

-

|

|

194

|

-

|

|

195

|

-

# Load your data and create an index. Note you usually want to store your index in a persistent store like a database or the file system

|

|

196

|

-

documents = SimpleDirectoryReader("YOUR_DATA_DIRECTORY").load_data()

|

|

197

|

-

index = VectorStoreIndex.from_documents(documents)

|

|

198

|

-

|

|

199

|

-

query_engine = index.as_query_engine()

|

|

200

|

-

|

|

201

|

-

# Query your LlamaIndex application

|

|

202

|

-

query_engine.query("What is the meaning of life?")

|

|

203

|

-

query_engine.query("Why did the cow jump over the moon?")

|

|

204

|

-

|

|

205

|

-

# View the traces in the Phoenix UI

|

|

206

|

-

px.active_session().url

|

|

207

|

-

```

|

|

208

|

-

|

|

209

|

-

### Tracing with LangChain

|

|

210

|

-

|

|

211

|

-

[Open in Colab](https://colab.research.google.com/github/Arize-ai/phoenix/blob/main/tutorials/tracing/langchain_tracing_tutorial.ipynb) [View on GitHub](https://github.com/Arize-ai/phoenix/blob/main/tutorials/tracing/langchain_tracing_tutorial.ipynb)

|

|

212

|

-

|

|

213

|

-

To extract traces from your LangChain application, you will have to add Phoenix's OpenInference Tracer to your LangChain application. A tracer is a class that automatically accumulates traces as your application executes. The OpenInference Tracer is specifically designed to work with Phoenix and by default exports the traces to a locally running Phoenix server.

|

|

214

|

-

|

|

215

|

-

```shell

|

|

216

|

-

# Install phoenix as well as langchain and your LLM of choice

|

|

217

|

-

pip install arize-phoenix langchain openai

|

|

218

|

-

|

|

219

|

-

```

|

|

220

|

-

|

|

221

|

-

Launch Phoenix in a notebook and view the traces of your LangChain application in the Phoenix UI.

|

|

222

|

-

|

|

223

|

-

```python

|

|

224

|

-

import phoenix as px

|

|

225

|

-

import pandas as pd

|

|

226

|

-

import numpy as np

|

|

227

|

-

|

|

228

|

-

# Launch phoenix

|

|

229

|

-

session = px.launch_app()

|

|

230

|

-

|

|

231

|

-

# Once you have started a Phoenix server, you can start your LangChain application with the OpenInferenceTracer as a callback. To do this, you will have to instrument your LangChain application with the tracer:

|

|

232

|

-

|

|

233

|

-

from phoenix.trace.langchain import LangChainInstrumentor

|

|

234

|

-

|

|

235

|

-

# By default, the traces will be exported to the locally running Phoenix server.

|

|

236

|

-

LangChainInstrumentor().instrument()

|

|

237

|

-

|

|

238

|

-

# Initialize your LangChain application

|

|

239

|

-

from langchain.chains import RetrievalQA

|

|

240

|

-

from langchain.chat_models import ChatOpenAI

|

|

241

|

-

from langchain.embeddings import OpenAIEmbeddings

|

|

242

|

-

from langchain.retrievers import KNNRetriever

|

|

243

|

-

|

|

244

|

-

embeddings = OpenAIEmbeddings(model="text-embedding-ada-002")

|

|

245

|

-

documents_df = pd.read_parquet(

|

|

246

|

-

"http://storage.googleapis.com/arize-assets/phoenix/datasets/unstructured/llm/context-retrieval/langchain-pinecone/database.parquet"

|

|

247

|

-

)

|

|

248

|

-

knn_retriever = KNNRetriever(

|

|

249

|

-

index=np.stack(documents_df["text_vector"]),

|

|

250

|

-

texts=documents_df["text"].tolist(),

|

|

251

|

-

embeddings=OpenAIEmbeddings(),

|

|

252

|

-

)

|

|

253

|

-

chain_type = "stuff" # stuff, refine, map_reduce, and map_rerank

|

|

254

|

-

chat_model_name = "gpt-3.5-turbo"

|

|

255

|

-

llm = ChatOpenAI(model_name=chat_model_name)

|

|

256

|

-

chain = RetrievalQA.from_chain_type(

|

|

257

|

-

llm=llm,

|

|

258

|

-

chain_type=chain_type,

|

|

259

|

-

retriever=knn_retriever,

|

|

260

|

-

)

|

|

261

|

-

|

|

262

|

-

# Instrument the execution of the runs with the tracer. By default the tracer uses an HTTPExporter

|

|

263

|

-

query = "What is euclidean distance?"

|

|

264

|

-

response = chain.run(query)  # the instrumentor above captures traces; no callback argument is needed

|

|

265

|

-

|

|

266

|

-

# By instrumenting LangChain, we've created a one-way data connection between your LLM application and Phoenix.

|

|

267

|

-

|

|

268

|

-

# To view the traces in Phoenix, simply open the UI in your browser.

|

|

269

|

-

session.url

|

|

270

|

-

```

|

|

271

|

-

|

|

272

|

-

## LLM Evals

|

|

273

|

-

|

|

274

|

-

[Open in Colab](https://colab.research.google.com/github/Arize-ai/phoenix/blob/main/tutorials/evals/evaluate_relevance_classifications.ipynb) [View on GitHub](https://github.com/Arize-ai/phoenix/blob/main/tutorials/evals/evaluate_relevance_classifications.ipynb)

|

|

275

|

-

|

|

276

|

-

Phoenix provides tooling to evaluate LLM applications, including tools to determine the relevance or irrelevance of documents retrieved by a retrieval-augmented generation (RAG) application, whether or not the response is toxic, and much more.

|

|

277

|

-

|

|

278

|

-

Phoenix's approach to LLM evals is notable for the following reasons:

|

|

279

|

-

|

|

280

|

-

- Includes pre-tested templates and convenience functions for a set of common Eval “tasks”

|

|

281

|

-

- Data science rigor applied to the testing of model and template combinations

|

|

282

|

-

- Designed to run as fast as possible on batches of data

|

|

283

|

-

- Includes benchmark datasets and tests for each eval function

|

|

284

|

-

|

|

285

|

-

Here is an example of running the RAG relevance eval on a dataset of Wikipedia questions and answers:

|

|

286

|

-

|

|

287

|

-

```shell

|

|

288

|

-

# Install phoenix as well as the evals subpackage

|

|

289

|

-

pip install 'arize-phoenix[evals]' ipython matplotlib openai pycm scikit-learn

|

|

290

|

-

```

|

|

291

|

-

|

|

292

|

-

```python

|

|

293

|

-

from phoenix.evals import (

|

|

294

|

-

RAG_RELEVANCY_PROMPT_TEMPLATE,

|

|

295

|

-

RAG_RELEVANCY_PROMPT_RAILS_MAP,

|

|

296

|

-

OpenAIModel,

|

|

297

|

-

download_benchmark_dataset,

|

|

298

|

-

llm_classify,

|

|

299

|

-

)

|

|

300

|

-

from sklearn.metrics import precision_recall_fscore_support, confusion_matrix, ConfusionMatrixDisplay

|

|

301

|

-

|

|

302

|

-

# Download the benchmark golden dataset

|

|

303

|

-

df = download_benchmark_dataset(

|

|

304

|

-

task="binary-relevance-classification", dataset_name="wiki_qa-train"

|

|

305

|

-

)

|

|

306

|

-

# Sample and re-name the columns to match the template

|

|

307

|

-

df = df.sample(100)

|

|

308

|

-

df = df.rename(

|

|

309

|

-

columns={

|

|

310

|

-

"query_text": "input",

|

|

311

|

-

"document_text": "reference",

|

|

312

|

-

},

|

|

313

|

-

)

|

|

314

|

-

model = OpenAIModel(

|

|

315

|

-

model="gpt-4",

|

|

316

|

-

temperature=0.0,

|

|

317

|

-

)

|

|

318

|

-

rails = list(RAG_RELEVANCY_PROMPT_RAILS_MAP.values())

|

|

319

|

-

df[["eval_relevance"]] = llm_classify(df, model, RAG_RELEVANCY_PROMPT_TEMPLATE, rails)

|

|

320

|

-

# The golden dataset labels are True/False; map them to "relevant" / "irrelevant"

|

|

321

|

-

# We can then use scikit-learn to compare against the template's output, which uses the same format

|

|

322

|

-

y_true = df["relevant"].map({True: "relevant", False: "irrelevant"})

|

|

323

|

-

y_pred = df["eval_relevance"]

|

|

324

|

-

|

|

325

|

-

# Compute Per-Class Precision, Recall, F1 Score, Support

|

|

326

|

-

precision, recall, f1, support = precision_recall_fscore_support(y_true, y_pred)

|

|

327

|

-

```
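For readers who want to see what the per-class metrics in the last line of the example measure, here is a small pure-Python sketch that computes precision, recall, F1, and support for string labels. The helper `per_class_metrics` and the toy label lists are hypothetical; in practice, scikit-learn's `precision_recall_fscore_support` does this for you.

```python
from typing import Dict, List, Tuple


def per_class_metrics(
    y_true: List[str], y_pred: List[str]
) -> Dict[str, Tuple[float, float, float, int]]:
    """Return {label: (precision, recall, f1, support)} for each true label."""
    metrics = {}
    for label in sorted(set(y_true)):
        pairs = list(zip(y_true, y_pred))
        tp = sum(t == label and p == label for t, p in pairs)
        fp = sum(t != label and p == label for t, p in pairs)
        fn = sum(t == label and p != label for t, p in pairs)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        denominator = precision + recall
        f1 = 2 * precision * recall / denominator if denominator else 0.0
        metrics[label] = (precision, recall, f1, tp + fn)
    return metrics


# Toy labels standing in for the golden labels and the eval output.
y_true = ["relevant", "relevant", "irrelevant", "irrelevant", "relevant"]
y_pred = ["relevant", "irrelevant", "irrelevant", "relevant", "relevant"]
metrics = per_class_metrics(y_true, y_pred)
for label, (precision, recall, f1, support) in metrics.items():
    print(f"{label}: precision={precision:.2f} recall={recall:.2f} f1={f1:.2f} support={support}")
```

Per-class numbers matter here because relevance datasets are often imbalanced, so a single accuracy figure can hide poor performance on the rarer class.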

|

|

328

|

-

|

|

329

|

-

To learn more about LLM Evals, see the [Evals documentation](https://docs.arize.com/phoenix/concepts/llm-evals/).

|

|

330

|

-

|

|

331

|

-

## Embedding Analysis

|

|

332

|

-

|

|

333

|

-

[Open in Colab](https://colab.research.google.com/github/Arize-ai/phoenix/blob/main/tutorials/image_classification_tutorial.ipynb) [View on GitHub](https://github.com/Arize-ai/phoenix/blob/main/tutorials/image_classification_tutorial.ipynb)

|

|

334

|

-

|

|

335

|

-

Explore UMAP point-clouds at times of high drift and performance degradation and identify clusters of problematic data.

|

|

336

|

-

|

|

337

|

-

|

|

338

|

-

|

|

339

|

-

Embedding analysis is critical for understanding the behavior of your NLP, CV, and LLM Apps that use embeddings. Phoenix provides an A/B testing framework to help you understand how your embeddings are changing over time and how they are changing between different versions of your model (`prod` vs `train`, `champion` vs `challenger`).

|

|

340

|

-

|

|

341

|

-

```python

|

|

342

|

-

# Import libraries.

|

|

343

|

-

from dataclasses import replace

|

|

344

|

-

import pandas as pd

|

|

345

|

-

import phoenix as px

|

|

346

|

-

|

|

347

|

-

# Download curated datasets and load them into pandas DataFrames.

|

|

348

|

-

train_df = pd.read_parquet(

|

|

349

|

-

"https://storage.googleapis.com/arize-assets/phoenix/datasets/unstructured/cv/human-actions/human_actions_training.parquet"

|

|

350

|

-

)

|

|

351

|

-

prod_df = pd.read_parquet(

|

|

352

|

-

"https://storage.googleapis.com/arize-assets/phoenix/datasets/unstructured/cv/human-actions/human_actions_production.parquet"

|

|

353

|

-

)

|

|

354

|

-

|

|

355

|

-

# Define schemas that tell Phoenix which columns of your DataFrames correspond to features, predictions, actuals (i.e., ground truth), embeddings, etc.

|

|

356

|

-

train_schema = px.Schema(

|

|

357

|

-

prediction_id_column_name="prediction_id",

|

|

358

|

-

timestamp_column_name="prediction_ts",

|

|

359

|

-

prediction_label_column_name="predicted_action",

|

|

360

|

-

actual_label_column_name="actual_action",

|

|

361

|

-

embedding_feature_column_names={

|

|

362

|

-

"image_embedding": px.EmbeddingColumnNames(

|

|

363

|

-

vector_column_name="image_vector",

|

|

364

|

-

link_to_data_column_name="url",

|

|

365

|

-

),

|

|

366

|

-

},

|

|

367

|

-

)

|

|

368

|

-

prod_schema = replace(train_schema, actual_label_column_name=None)

|

|

369

|

-

|

|

370

|

-

# Define your production and training datasets.

|

|

371

|

-

prod_ds = px.Dataset(prod_df, prod_schema)

|

|

372

|

-

train_ds = px.Dataset(train_df, train_schema)

|

|

373

|

-

|

|

374

|

-

# Launch Phoenix.

|

|

375

|

-

session = px.launch_app(prod_ds, train_ds)

|

|

376

|

-

|

|

377

|

-

# View the Phoenix UI in the browser

|

|

378

|

-

session.url

|

|

379

|

-

```
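One simple way to quantify how far production embeddings have moved from training embeddings is the Euclidean distance between the two centroids. The sketch below computes that with the standard library only (Python 3.8+ for `math.dist`). The function names and toy vectors are assumptions for illustration, and this is not presented as Phoenix's exact drift computation.

```python
import math
from typing import List, Sequence


def centroid(vectors: Sequence[Sequence[float]]) -> List[float]:
    """Average the vectors coordinate by coordinate."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def centroid_drift(
    primary: Sequence[Sequence[float]], reference: Sequence[Sequence[float]]
) -> float:
    """Euclidean distance between the centroids of two embedding sets."""
    return math.dist(centroid(primary), centroid(reference))


# Toy 2-D "embeddings"; real embeddings have hundreds of dimensions.
train_vectors = [[0.0, 0.0], [2.0, 0.0]]  # centroid is (1, 0)
prod_vectors = [[4.0, 4.0], [4.0, 4.0]]   # centroid is (4, 4)
drift = centroid_drift(prod_vectors, train_vectors)
print(drift)
```

A value near zero suggests the two sets occupy the same region of embedding space, while a growing value over time is a cue to inspect the point-cloud for new clusters.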

|

|

380

|

-

|

|

381

|

-

### UMAP-based Exploratory Data Analysis

|

|

382

|

-

|

|

383

|

-

Color your UMAP point-clouds by your model's dimensions, drift, and performance to identify problematic cohorts.

|

|

384

|

-

|

|

385

|

-

|

|

386

|

-

|

|

387

|

-

### Cluster-driven Drift and Performance Analysis

|

|

388

|

-

|

|

389

|

-

Break apart your data into clusters of high drift or poor performance using HDBSCAN.

|

|

390

|

-

|

|

391

|

-

|

|

392

|

-

|

|

393

|

-

### Exportable Clusters

|

|

394

|

-

|

|

395

|

-

Export your clusters to `parquet` files or dataframes for further analysis and fine-tuning.

|

|

396

|

-

|

|

397

|

-

## Retrieval-Augmented Generation Analysis

|

|

398

|

-

|

|

399

|

-

[Open in Colab](https://colab.research.google.com/github/Arize-ai/phoenix/blob/main/tutorials/llama_index_search_and_retrieval_tutorial.ipynb) [View on GitHub](https://github.com/Arize-ai/phoenix/blob/main/tutorials/llama_index_search_and_retrieval_tutorial.ipynb)

|

|

400

|

-

|

|

401

|

-

|

|

402

|

-

|

|

403

|

-

Search and retrieval is a critical component of many LLM Applications as it allows you to extend the LLM's capabilities to encompass knowledge about private data. This process is known as RAG (retrieval-augmented generation), and a vector store is often used to store chunks of documents encoded as embeddings so that they can be retrieved at inference time.

|

|

404

|

-

|

|

405

|

-

To help you better understand your RAG application, Phoenix allows you to upload a corpus of your knowledge base along with your LLM application's inferences to help you troubleshoot hard-to-find bugs with retrieval.
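As a rough illustration of the retrieval step described above, the sketch below ranks document chunks by cosine similarity to a query embedding. The tiny in-memory store, the hand-written embeddings, and the function names are all hypothetical; a real application would use an embedding model and a proper vector store.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def retrieve(
    query_vector: Sequence[float], store: Dict[str, Sequence[float]], k: int = 2
) -> List[Tuple[str, float]]:
    """Return the k chunks most similar to the query, best first."""
    scored = [(text, cosine_similarity(query_vector, vec)) for text, vec in store.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)[:k]


# Hand-written 3-D vectors standing in for real embeddings.
store = {
    "Euclidean distance measures straight-line distance.": [1.0, 0.0, 0.0],
    "Cosine similarity compares vector direction.": [0.6, 0.8, 0.0],
    "The cow jumped over the moon.": [0.0, 0.0, 1.0],
}
query_vector = [1.0, 0.1, 0.0]
top_chunks = retrieve(query_vector, store, k=2)
for text, score in top_chunks:
    print(f"{score:.3f}  {text}")
```

When an answer is wrong, inspecting which chunks were retrieved and how close their scores were is often the quickest way to tell whether the fault lies in retrieval or in generation.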

|

|

406

|

-

|

|

407

|

-

## Structured Data Analysis

|

|

408

|

-

|

|

409

|

-

[Open in Colab](https://colab.research.google.com/github/Arize-ai/phoenix/blob/main/tutorials/credit_card_fraud_tutorial.ipynb) [View on GitHub](https://github.com/Arize-ai/phoenix/blob/main/tutorials/credit_card_fraud_tutorial.ipynb)

|

|

410

|

-

|

|

411

|

-

Phoenix provides a suite of tools for analyzing structured data. These tools allow you to perform A/B analysis, temporal drift analysis, and more.

|

|

412

|

-

|

|

413

|

-

|

|

414

|

-

|

|

415

|

-

```python

|

|

416

|

-

import pandas as pd

|

|

417

|

-

import phoenix as px

|

|

418

|

-

|

|

419

|

-

# Perform A/B analysis on your training and production datasets

|

|

420

|

-

train_df = pd.read_parquet(

|

|

421

|

-

"http://storage.googleapis.com/arize-assets/phoenix/datasets/structured/credit-card-fraud/credit_card_fraud_train.parquet",

|

|

422

|

-

)

|

|

423

|

-

prod_df = pd.read_parquet(

|

|

424

|

-

"http://storage.googleapis.com/arize-assets/phoenix/datasets/structured/credit-card-fraud/credit_card_fraud_production.parquet",

|

|

425

|

-

)

|

|

426

|

-

|

|

427

|

-

# Describe the data for analysis

|

|

428

|

-

schema = px.Schema(

|

|

429

|

-

prediction_id_column_name="prediction_id",

|

|

430

|

-

prediction_label_column_name="predicted_label",

|

|

431

|

-

prediction_score_column_name="predicted_score",

|

|

432

|

-

actual_label_column_name="actual_label",

|

|

433

|

-

timestamp_column_name="prediction_timestamp",

|

|

434

|

-

feature_column_names=feature_column_names,  # assumes a list of feature column names defined beforehand

|

|

435

|

-

tag_column_names=["age"],

|

|

436

|

-

)

|

|

437

|

-

|

|

438

|

-

# Define your production and training datasets.

|

|

439

|

-

prod_ds = px.Dataset(dataframe=prod_df, schema=schema, name="production")

|

|

440

|

-

train_ds = px.Dataset(dataframe=train_df, schema=schema, name="training")

|

|

441

|

-

|

|

442

|

-

# Launch Phoenix for analysis

|

|

443

|

-

session = px.launch_app(primary=prod_ds, reference=train_ds)

|

|

444

|

-

```
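To give a feel for what a drift statistic between a training and a production dataset looks like, here is a sketch of the population stability index (PSI) for one numeric feature. PSI is used as a stand-in example; the text above does not say which drift metrics Phoenix computes, and the bin edges, smoothing constant, and sample values are assumptions.

```python
import math
from typing import List, Sequence


def bin_fractions(
    values: Sequence[float], edges: Sequence[float], eps: float = 1e-6
) -> List[float]:
    """Fraction of values in each bin [edges[i], edges[i+1]); the last bin is closed.

    Values outside the edges are ignored in the counts.
    """
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(counts)):
            is_last = i == len(counts) - 1
            if edges[i] <= v < edges[i + 1] or (is_last and v == edges[-1]):
                counts[i] += 1
                break
    # eps avoids log(0) and division by zero for empty bins.
    return [max(c / len(values), eps) for c in counts]


def psi(reference: Sequence[float], primary: Sequence[float], edges: Sequence[float]) -> float:
    """Population stability index of `primary` relative to `reference`."""
    ref = bin_fractions(reference, edges)
    cur = bin_fractions(primary, edges)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


edges = [0.0, 50.0, 100.0]
train_amounts = [10.0, 20.0, 60.0, 70.0]  # 50% low, 50% high
prod_amounts = [10.0, 60.0, 70.0, 80.0]   # 25% low, 75% high
same = psi(train_amounts, train_amounts, edges)
shifted = psi(train_amounts, prod_amounts, edges)
print(same, shifted)
```

Identical distributions give a PSI of zero, and the value grows as the production distribution moves away from the training one, which is the kind of signal a temporal drift chart tracks per time window.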

|

|

445

|

-

|

|

446

|

-

## Deploying Phoenix

|

|

447

|

-

|

|

448

|

-

<a target="_blank" href="https://hub.docker.com/repository/docker/arizephoenix/phoenix/general">

|

|

449

|

-

<img src="https://img.shields.io/docker/v/arizephoenix/phoenix?sort=semver&logo=docker&label=image&color=blue">

|

|

450

|

-

</a>

|

|

451

|

-

|

|

452

|

-

<img src="https://storage.googleapis.com/arize-assets/phoenix/assets/images/deployment.png" title="How phoenix can collect traces from an LLM application"/>

|

|

453

|

-

|

|

454

|

-

Phoenix's notebook-first approach to observability makes it a great tool to use during experimentation and pre-production. However, at some point you are going to want to ship your application to production and continue to monitor it as it runs. Phoenix is made up of two components that can be deployed independently:

|

|

455

|

-

|

|

456

|

-

- **Trace Instrumentation**: A set of plugins that can be added to your application's startup process. These plugins (known as instrumentations) automatically collect spans for your application and export them for collection and visualization. For Phoenix, all the instrumentors are managed via a single repository called [OpenInference](https://github.com/Arize-ai/openinference).

|

|

457

|

-

- **Trace Collector**: The Phoenix server acts as a trace collector and application that helps you troubleshoot your application in real time. You can pull the latest images of Phoenix from [Docker Hub](https://hub.docker.com/repository/docker/arizephoenix/phoenix/general).

|

|

458

|

-

|

|

459

|

-

To run Phoenix tracing in production, follow these steps:

|

|

460

|

-

|

|

461

|

-

- **Set up a Server**: Set up your LLM application to run on a server ([examples](https://github.com/Arize-ai/openinference/tree/main/python/examples))

|

|

462

|

-

- **Instrument**: Add [OpenInference](https://github.com/Arize-ai/openinference) Instrumentation to your server

|

|

463

|

-

- **Observe**: Run the Phoenix server as a side-car or a standalone instance and point your tracing instrumentation to the phoenix server
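The last step, pointing instrumentation at a Phoenix server, might look roughly like the sketch below. The helper `collector_endpoint` and the host name are hypothetical, and the sketch assumes that the instrumentation reads the collector URL from the `PHOENIX_COLLECTOR_ENDPOINT` environment variable and that the server listens on port 6006; consult the deployment guide for the settings your version uses.

```python
import os


def collector_endpoint(host: str, port: int = 6006, scheme: str = "http") -> str:
    """Build the base URL of a Phoenix server (hypothetical helper)."""
    return f"{scheme}://{host}:{port}"


# Assumption: the tracing instrumentation picks up the collector URL from
# this environment variable, so it must be set before instrumenting.
endpoint = collector_endpoint("phoenix.internal.example")
os.environ["PHOENIX_COLLECTOR_ENDPOINT"] = endpoint
print(endpoint)
```

Keeping the endpoint in the environment rather than in code lets the same application image run against a side-car in one deployment and a standalone instance in another.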

|

|

464

|

-

|

|

465

|

-

For more information on deploying Phoenix, see the [Phoenix Deployment Guide](https://docs.arize.com/phoenix/deployment/deploying-phoenix).

|

|

466

|

-

|

|

467

|

-

## Breaking Changes

|

|

468

|

-

|

|

469

|

-

See the [migration guide](./MIGRATION.md) for a list of breaking changes.

|

|

470

|

-

|

|

471

|

-

## Community

|

|

472

|

-

|

|

473

|

-

Join our community to connect with thousands of machine learning practitioners and ML observability enthusiasts.

|

|

474

|

-

|

|

475

|

-

- 🌍 Join our [Slack community](https://join.slack.com/t/arize-ai/shared_invite/zt-1px8dcmlf-fmThhDFD_V_48oU7ALan4Q).

|

|

476

|

-

- 💡 Ask questions and provide feedback in the _#phoenix-support_ channel.

|

|

477

|

-

- 🌟 Leave a star on our [GitHub](https://github.com/Arize-ai/phoenix).

|

|

478

|

-

- 🐞 Report bugs with [GitHub Issues](https://github.com/Arize-ai/phoenix/issues).

|

|

479

|

-

- 🐣 Follow us on [Twitter](https://twitter.com/ArizePhoenix).

|

|

480

|

-

- 💌️ Sign up for our [mailing list](https://phoenix.arize.com/#updates).

|

|

481

|

-

- 🗺️ Check out our [roadmap](https://github.com/orgs/Arize-ai/projects/45) to see where we're heading next.

|

|

482

|

-

- 🎓 Learn the fundamentals of ML observability with our [introductory](https://arize.com/ml-observability-fundamentals/) and [advanced](https://arize.com/blog-course/) courses.

|

|

483

|

-

|

|

484

|

-

## Thanks

|

|

485

|

-

|

|

486

|

-

- [UMAP](https://github.com/lmcinnes/umap) For unlocking the ability to visualize and reason about embeddings

|

|

487

|

-

- [HDBSCAN](https://github.com/scikit-learn-contrib/hdbscan) For providing a clustering algorithm to aid in the discovery of drift and performance degradation

|

|

488

|

-

|

|

489

|

-

## Copyright, Patent, and License

|

|

490

|

-

|

|

491

|

-

Copyright 2023 Arize AI, Inc. All Rights Reserved.

|

|

492

|

-

|

|

493

|

-

Portions of this code are patent protected by one or more U.S. Patents. See [IP_NOTICE](https://github.com/Arize-ai/phoenix/blob/main/IP_NOTICE).

|

|

494

|

-

|

|

495

|

-

This software is licensed under the terms of the Elastic License 2.0 (ELv2). See [LICENSE](https://github.com/Arize-ai/phoenix/blob/main/LICENSE).

|