TransferQueue 0.1.1.dev0__py3-none-any.whl

This diff represents the content of publicly available package versions that have been released to one of the supported registries. The information contained in this diff is provided for informational purposes only and reflects changes between package versions as they appear in their respective public registries.

- recipe/simple_use_case/async_demo.py +331 -0

- recipe/simple_use_case/sync_demo.py +220 -0

- tests/test_async_simple_storage_manager.py +339 -0

- tests/test_client.py +423 -0

- tests/test_controller.py +274 -0

- tests/test_controller_data_partitions.py +513 -0

- tests/test_kv_storage_manager.py +92 -0

- tests/test_put.py +327 -0

- tests/test_samplers.py +492 -0

- tests/test_serial_utils_on_cpu.py +202 -0

- tests/test_simple_storage_unit.py +443 -0

- tests/test_storage_client_factory.py +45 -0

- transfer_queue/__init__.py +48 -0

- transfer_queue/client.py +611 -0

- transfer_queue/controller.py +1187 -0

- transfer_queue/metadata.py +460 -0

- transfer_queue/sampler/__init__.py +19 -0

- transfer_queue/sampler/base.py +74 -0

- transfer_queue/sampler/grpo_group_n_sampler.py +157 -0

- transfer_queue/sampler/sequential_sampler.py +75 -0

- transfer_queue/storage/__init__.py +25 -0

- transfer_queue/storage/clients/__init__.py +24 -0

- transfer_queue/storage/clients/base.py +22 -0

- transfer_queue/storage/clients/factory.py +55 -0

- transfer_queue/storage/clients/yuanrong_client.py +118 -0

- transfer_queue/storage/managers/__init__.py +23 -0

- transfer_queue/storage/managers/base.py +460 -0

- transfer_queue/storage/managers/factory.py +43 -0

- transfer_queue/storage/managers/simple_backend_manager.py +611 -0

- transfer_queue/storage/managers/yuanrong_manager.py +18 -0

- transfer_queue/storage/simple_backend.py +451 -0

- transfer_queue/utils/__init__.py +13 -0

- transfer_queue/utils/serial_utils.py +240 -0

- transfer_queue/utils/utils.py +132 -0

- transfer_queue/utils/zmq_utils.py +170 -0

- transfer_queue/version/version +1 -0

- transferqueue-0.1.1.dev0.dist-info/METADATA +327 -0

- transferqueue-0.1.1.dev0.dist-info/RECORD +41 -0

- transferqueue-0.1.1.dev0.dist-info/WHEEL +5 -0

- transferqueue-0.1.1.dev0.dist-info/licenses/LICENSE +202 -0

- transferqueue-0.1.1.dev0.dist-info/top_level.txt +4 -0

|

@@ -0,0 +1,327 @@

|

|

|

1

|

+

Metadata-Version: 2.4

|

|

2

|

+

Name: TransferQueue

|

|

3

|

+

Version: 0.1.1.dev0

|

|

4

|

+

Summary: TransferQueue: An Asynchronous Streaming Data Management Module

|

|

5

|

+

Author-email: The TransferQueue Team <hanzy19@tsinghua.org.cn>

|

|

6

|

+

License: Apache-2.0

|

|

7

|

+

Requires-Python: >=3.10

|

|

8

|

+

Description-Content-Type: text/markdown

|

|

9

|

+

License-File: LICENSE

|

|

10

|

+

Requires-Dist: ray[default]

|

|

11

|

+

Requires-Dist: tensordict>=0.10.0

|

|

12

|

+

Requires-Dist: pyzmq

|

|

13

|

+

Requires-Dist: hydra-core

|

|

14

|

+

Requires-Dist: numpy<2.0.0

|

|

15

|

+

Requires-Dist: msgspec

|

|

16

|

+

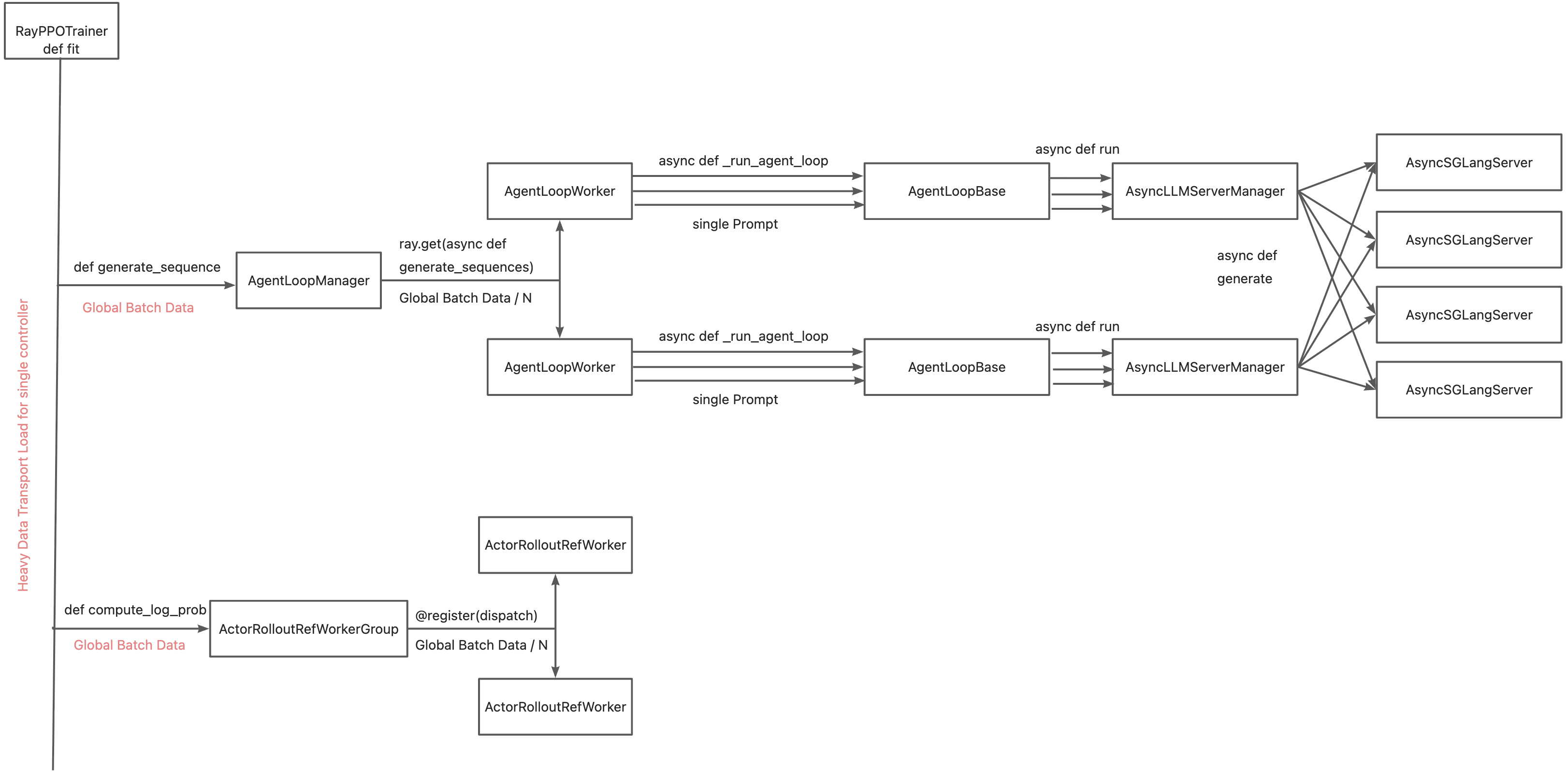

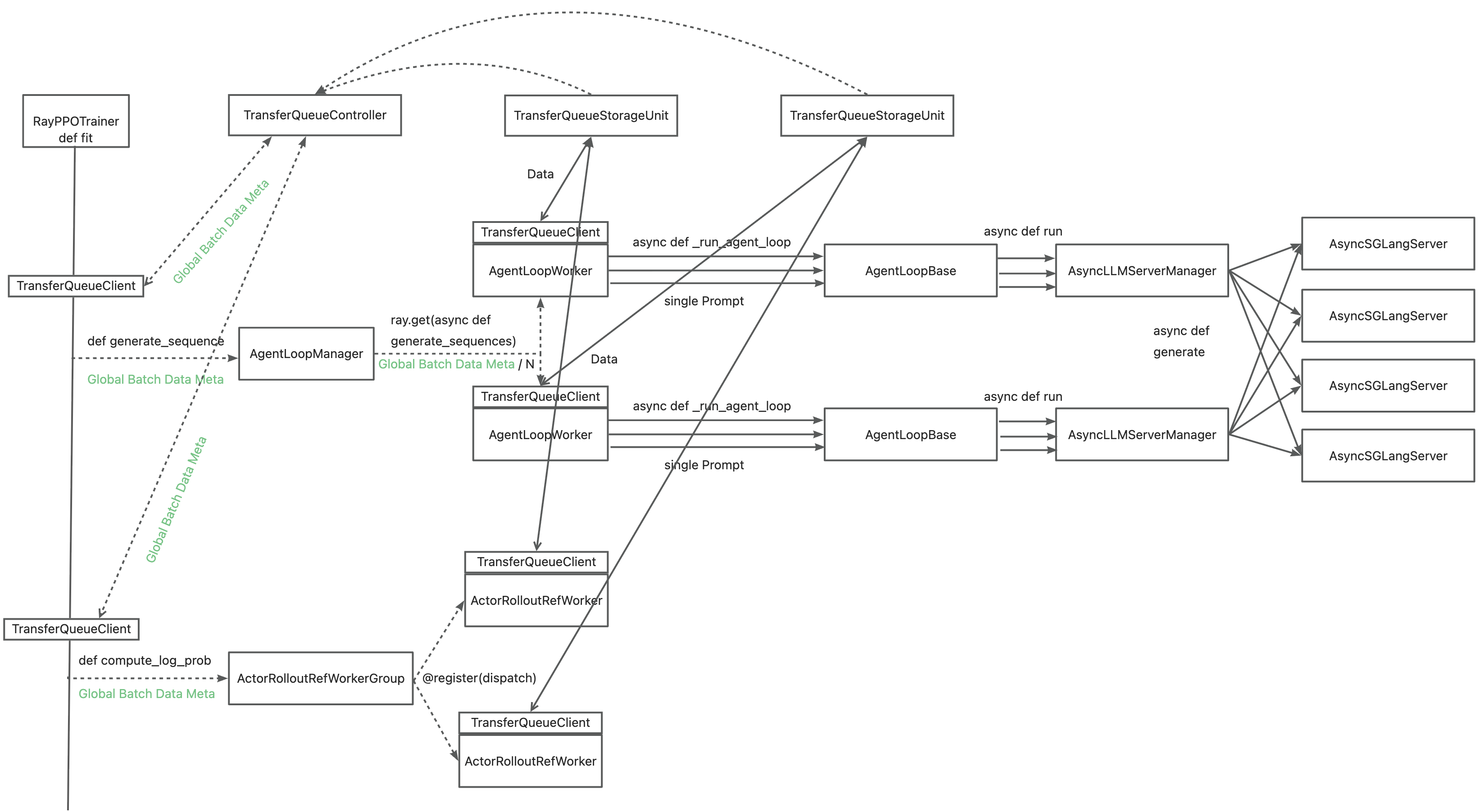

Requires-Dist: psutil

|

|

17

|

+

Provides-Extra: test

|

|

18

|

+

Requires-Dist: pytest>=7.0.0; extra == "test"

|

|

19

|

+

Requires-Dist: pytest-asyncio>=0.20.0; extra == "test"

|

|

20

|

+

Dynamic: license-file

|

|

21

|

+

|

|

22

|

+

<div align="center">

|

|

23

|

+

<h2 align="center">

|

|

24

|

+

TransferQueue: An asynchronous streaming data management module for efficient post-training

|

|

25

|

+

</h2>

|

|

26

|

+

<a href="https://arxiv.org/abs/2507.01663" target="_blank"><strong>Paper</strong></a>

|

|

27

|

+

| <a href="https://zhuanlan.zhihu.com/p/1930244241625449814" target="_blank"><strong>Zhihu</strong></a>

|

|

28

|

+

<br />

|

|

29

|

+

<br />

|

|

30

|

+

|

|

31

|

+

<a href="https://deepwiki.com/TransferQueue/TransferQueue"><img src="https://devin.ai/assets/deepwiki-badge.png" alt="Ask DeepWiki.com" style="height:20px;"></a>

|

|

32

|

+

[](https://github.com/TransferQueue/TransferQueue/stargazers/)

|

|

33

|

+

[](https://github.com/TransferQueue/TransferQueue/graphs/commit-activity)

|

|

34

|

+

|

|

35

|

+

</div>

|

|

36

|

+

<br/>

|

|

37

|

+

|

|

38

|

+

<h2 id="overview">🎉 Overview</h2>

|

|

39

|

+

|

|

40

|

+

TransferQueue is a high-performance data storage and transfer module with panoramic data visibility and streaming scheduling capabilities, optimized for efficient dataflow in post-training workflows.

|

|

41

|

+

|

|

42

|

+

<p align="center">

|

|

43

|

+

<img src="https://cdn.nlark.com/yuque/0/2025/png/23208217/1761356010763-b05751d3-f975-4890-ba59-c8d753cf95f2.png" width="70%">

|

|

44

|

+

</p>

|

|

45

|

+

|

|

46

|

+

TransferQueue offers **fine-grained, sample-level** data management and **load-balancing** (in progress) capabilities, serving as a data gateway that decouples explicit data dependencies across computational tasks. This enables a divide-and-conquer approach that significantly simplifies the design of the algorithm controller.

|

|

47

|

+

|

|

48

|

+

<p align="center">

|

|

49

|

+

<img src="https://cdn.nlark.com/yuque/0/2025/png/23208217/1758696791245-fa7baf96-46af-4c19-8606-28ffadc4556c.png" width="70%">

|

|

50

|

+

</p>

|

|

51

|

+

|

|

52

|

+

<h2 id="updates">🔄 Updates</h2>

|

|

53

|

+

|

|

54

|

+

- **Nov 10, 2025**: We disentangle the data retrieval logic from `TransferQueueController` in [PR#101](https://github.com/TransferQueue/TransferQueue/pull/101). Now you can implement your own `Sampler` to control how data is consumed.

|

|

55

|

+

- **Nov 5, 2025**: We provide a `KVStorageManager` that simplifies the integration with KV-based storage backends [PR#96](https://github.com/TransferQueue/TransferQueue/pull/96). The first available KV-based backend is [Yuanrong](https://gitee.com/openeuler/yuanrong-datasystem).

|

|

56

|

+

- **Nov 4, 2025**: Data partition capability is available in [PR#98](https://github.com/TransferQueue/TransferQueue/pull/98). Now you can define logical data partitions to manage your train/val/test datasets.

|

|

57

|

+

- **Oct 25, 2025**: We make storage backends pluggable in [PR#66](https://github.com/TransferQueue/TransferQueue/pull/66). You can try to integrate your own storage backend with TransferQueue now!

|

|

58

|

+

- **Oct 21, 2025**: Official integration into verl is ready in [verl/pulls/3649](https://github.com/volcengine/verl/pull/3649). Subsequent PRs will optimize the single-controller architecture by fully decoupling data and control flows.

|

|

59

|

+

- **July 22, 2025**: We present a series of Chinese blogs on <a href="https://zhuanlan.zhihu.com/p/1930244241625449814">Zhihu 1</a>, <a href="https://zhuanlan.zhihu.com/p/1933259599953232589">2</a>.

|

|

60

|

+

- **July 21, 2025**: We started an RFC in the verl community [verl/RFC#2662](https://github.com/volcengine/verl/discussions/2662).

|

|

61

|

+

- **July 2, 2025**: We published the paper [AsyncFlow](https://arxiv.org/abs/2507.01663).

|

|

62

|

+

|

|

63

|

+

<h2 id="components">🧩 Components</h2>

|

|

64

|

+

|

|

65

|

+

### Control Plane: Panoramic Data Management

|

|

66

|

+

|

|

67

|

+

In the control plane, `TransferQueueController` tracks the **production status** and **consumption status** of each training sample as metadata. When all the required data fields are ready (i.e., written to the `TransferQueueStorageManager`), we know that this data sample can be consumed by downstream tasks.

|

|

68

|

+

|

|

69

|

+

For consumption status, we keep a separate consumption record for each computational task (e.g., `generate_sequences`, `compute_log_prob`). Therefore, even when different computational tasks require the same data field, they can consume the data independently without interfering with each other.
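
The bookkeeping described above can be pictured with a small, self-contained sketch. It is not the `TransferQueueController` implementation: the class name `StatusTracker` and its methods are hypothetical, and only the idea (per-sample production status, per-task consumption status) comes from the text.

```python
from collections import defaultdict


class StatusTracker:
    """Toy model of per-sample production and per-task consumption status."""

    def __init__(self) -> None:
        # sample index -> set of fields that have been written to storage
        self.produced: dict[int, set[str]] = defaultdict(set)
        # task name -> set of sample indexes that task has already consumed
        self.consumed: dict[str, set[int]] = defaultdict(set)

    def mark_produced(self, index: int, field: str) -> None:
        self.produced[index].add(field)

    def mark_consumed(self, task_name: str, indexes: list[int]) -> None:
        self.consumed[task_name].update(indexes)

    def ready_indexes(self, task_name: str, data_fields: list[str]) -> list[int]:
        """Samples whose required fields all exist and that this task has not consumed."""
        required = set(data_fields)
        done = self.consumed[task_name]
        return sorted(
            idx
            for idx, fields in self.produced.items()
            if required <= fields and idx not in done
        )


tracker = StatusTracker()
for idx in range(3):
    tracker.mark_produced(idx, "input_ids")
tracker.mark_produced(0, "responses")

# Two tasks read the same field but keep independent consumption records.
tracker.mark_consumed("generate_sequences", [0, 1])
ready_for_generate = tracker.ready_indexes("generate_sequences", ["input_ids"])
ready_for_log_prob = tracker.ready_indexes("compute_log_prob", ["input_ids"])
ready_with_responses = tracker.ready_indexes("compute_log_prob", ["input_ids", "responses"])
```

Consuming samples 0 and 1 in `generate_sequences` leaves them available to `compute_log_prob`, and a sample only becomes ready for a task once every field that task asks for has been produced.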

|

|

70

|

+

|

|

71

|

+

<p align="center">

|

|

72

|

+

<img src="https://cdn.nlark.com/yuque/0/2025/png/23208217/1758696820173-456c1784-42ba-40c8-a292-2ff1401f49c5.png" width="70%">

|

|

73

|

+

</p>

|

|

74

|

+

|

|

75

|

+

To make the data retrieval process more customizable, we provide a `Sampler` class that allows users to define their own data retrieval and consumption logic. Refer to the [Customize](#customize) section for details.

|

|

76

|

+

|

|

77

|

+

> In the future, we plan to support **load-balancing** and **dynamic batching** capabilities in the control plane. Additionally, we will support data management for disaggregated frameworks where each rank manages data retrieval by itself rather than being coordinated by a single controller.

|

|

78

|

+

|

|

79

|

+

### Data Plane: Distributed Data Storage

|

|

80

|

+

|

|

81

|

+

In the data plane, we provide a pluggable design that enables TransferQueue to integrate with different storage backends according to user requirements.

|

|

82

|

+

|

|

83

|

+

Specifically, we provide a `TransferQueueStorageManager` abstraction class that defines the core APIs as follows:

|

|

84

|

+

|

|

85

|

+

- `async def put_data(self, data: TensorDict, metadata: BatchMeta) -> None`

|

|

86

|

+

- `async def get_data(self, metadata: BatchMeta) -> TensorDict`

|

|

87

|

+

- `async def clear_data(self, metadata: BatchMeta) -> None`

|

|

88

|

+

|

|

89

|

+

This class encapsulates the core interaction logic within the TransferQueue system. You only need to write a simple subclass to integrate your own storage backend. Refer to the [Customize](#customize) section for details.
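
To make the shape of such a subclass concrete, here is a minimal sketch under simplifying assumptions. It does not import TransferQueue: `ToyBatchMeta` is a hypothetical stand-in for `BatchMeta`, plain dicts of lists stand in for `TensorDict`, and `ToyStorageManager` only mirrors the three coroutine names listed above rather than the real base class.

```python
import asyncio
from abc import ABC, abstractmethod
from dataclasses import dataclass


@dataclass
class ToyBatchMeta:
    """Hypothetical stand-in for BatchMeta: which rows and fields a batch covers."""

    global_indexes: list[int]
    fields: list[str]


class ToyStorageManager(ABC):
    """Mirrors the three core coroutines listed above, with simplified types."""

    @abstractmethod
    async def put_data(self, data: dict[str, list], metadata: ToyBatchMeta) -> None: ...

    @abstractmethod
    async def get_data(self, metadata: ToyBatchMeta) -> dict[str, list]: ...

    @abstractmethod
    async def clear_data(self, metadata: ToyBatchMeta) -> None: ...


class InMemoryManager(ToyStorageManager):
    """Adapter over a plain dict keyed by (global index, field name)."""

    def __init__(self) -> None:
        self._cells: dict[tuple[int, str], object] = {}

    async def put_data(self, data: dict[str, list], metadata: ToyBatchMeta) -> None:
        for field in metadata.fields:
            for pos, idx in enumerate(metadata.global_indexes):
                self._cells[(idx, field)] = data[field][pos]

    async def get_data(self, metadata: ToyBatchMeta) -> dict[str, list]:
        return {
            field: [self._cells[(idx, field)] for idx in metadata.global_indexes]
            for field in metadata.fields
        }

    async def clear_data(self, metadata: ToyBatchMeta) -> None:
        for field in metadata.fields:
            for idx in metadata.global_indexes:
                self._cells.pop((idx, field), None)


async def demo() -> tuple[dict[str, list], int]:
    manager = InMemoryManager()
    meta = ToyBatchMeta(global_indexes=[4, 7], fields=["input_ids"])
    await manager.put_data({"input_ids": [[1, 2], [3, 4]]}, meta)
    fetched = await manager.get_data(meta)
    await manager.clear_data(meta)
    return fetched, len(manager._cells)


fetched, remaining = asyncio.run(demo())
```

A real backend would replace the dict with calls into its own storage client while keeping the same three entry points.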

|

|

90

|

+

|

|

91

|

+

Currently, we support the following storage backends:

|

|

92

|

+

|

|

93

|

+

- SimpleStorageUnit: A basic CPU-memory storage backend with minimal data format constraints that is easy to use.

|

|

94

|

+

- [MoonCakeStore](https://github.com/kvcache-ai/Mooncake): A high-performance, KV-based hierarchical storage that supports RDMA transport between GPU and DRAM.

|

|

95

|

+

- [Yuanrong](https://gitee.com/openeuler/yuanrong-datasystem): An Ascend-native data system that provides hierarchical storage interfaces including HBM/DRAM/SSD.

|

|

96

|

+

- [Ray Direct Transport](https://docs.ray.io/en/master/ray-core/direct-transport.html): Ray's new feature that allows Ray to store and pass objects directly between Ray actors.

|

|

97

|

+

|

|

98

|

+

Among them, `SimpleStorageUnit` serves as our default storage backend, coordinated by the `AsyncSimpleStorageManager` class. Each storage unit can be deployed on a separate node, allowing for distributed data management.

|

|

99

|

+

|

|

100

|

+

`SimpleStorageUnit` employs a 2D data structure as follows:

|

|

101

|

+

|

|

102

|

+

- Each row corresponds to a training sample, assigned a unique index within the corresponding global batch.

|

|

103

|

+

- Each column represents the input/output data fields for computational tasks.

|

|

104

|

+

|

|

105

|

+

This data structure design is motivated by the computational characteristics of the post-training process, where each training sample is generated in a relayed manner across task pipelines. It provides an accurate addressing capability, which allows fine-grained, concurrent data read/write operations in a streaming manner.
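
As a rough illustration of this row/column addressing, the sketch below models a storage unit as one list per field, indexed by row. `ToyStorageUnit` and its `put`/`get` methods are hypothetical names for this example only and are not the `SimpleStorageUnit` API.

```python
class ToyStorageUnit:
    """Rows are sample indexes, columns are field names; each cell is addressed directly."""

    def __init__(self, num_rows: int) -> None:
        self.num_rows = num_rows
        self.columns: dict[str, list] = {}

    def put(self, field: str, rows: list[int], values: list) -> None:
        # Create the column lazily, then write only the addressed cells.
        column = self.columns.setdefault(field, [None] * self.num_rows)
        for row, value in zip(rows, values):
            column[row] = value

    def get(self, fields: list[str], rows: list[int]) -> dict[str, list]:
        return {f: [self.columns[f][r] for r in rows] for f in fields}


unit = ToyStorageUnit(num_rows=4)
unit.put("prompt", [0, 1, 2, 3], ["p0", "p1", "p2", "p3"])
# A downstream task fills in only the rows it has finished, in any order.
unit.put("response", [2, 0], ["r2", "r0"])
partial = unit.get(["prompt", "response"], [0, 2])
```

Because each write touches only the addressed cells, a later task in the pipeline can add its output column for some rows while other rows are still being generated.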

|

|

106

|

+

|

|

107

|

+

<p align="center">

|

|

108

|

+

<img src="https://cdn.nlark.com/yuque/0/2025/png/23208217/1758696805154-3817011f-84e6-40d0-a80c-58b7e3e5f6a7.png" width="70%">

|

|

109

|

+

</p>

|

|

110

|

+

|

|

111

|

+

### User Interface: Asynchronous & Synchronous Client

|

|

112

|

+

|

|

113

|

+

The interaction workflow of the TransferQueue system is as follows:

|

|

114

|

+

|

|

115

|

+

1. A process sends a read request to the `TransferQueueController`.

|

|

116

|

+

2. `TransferQueueController` scans the production and consumption metadata for each sample (row) and dynamically assembles the metadata for a micro-batch according to the load-balancing policy. This mechanism enables sample-level data scheduling.

|

|

117

|

+

3. The process retrieves the actual data from distributed storage units using the metadata provided by the controller.
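
The three steps above can be sketched as a two-phase read: ask a controller for metadata, then fetch the payload from storage. Everything in this example is a toy. `ToyController`, `read_batch`, and the `index % num_units` placement rule are assumptions made for illustration and do not describe how TransferQueue actually places or schedules data.

```python
class ToyController:
    """Holds only metadata: which rows are ready and which each task has consumed."""

    def __init__(self, num_units: int) -> None:
        self.num_units = num_units
        self.ready: list[int] = []
        self.consumed: dict[str, set[int]] = {}

    def get_meta(self, task_name: str, batch_size: int) -> list[tuple[int, int]]:
        seen = self.consumed.setdefault(task_name, set())
        picked = [i for i in self.ready if i not in seen][:batch_size]
        seen.update(picked)
        # Each entry says where a row lives: (storage unit id, global index).
        return [(i % self.num_units, i) for i in picked]


def read_batch(controller: ToyController, units: list[dict], task_name: str, batch_size: int) -> list:
    meta = controller.get_meta(task_name, batch_size)  # steps 1 and 2: metadata only
    return [units[unit_id][index] for unit_id, index in meta]  # step 3: fetch payloads


units: list[dict] = [{}, {}]  # two storage units, each a dict of index -> payload
controller = ToyController(num_units=len(units))
for index in range(5):
    units[index % 2][index] = f"sample-{index}"
    controller.ready.append(index)

first = read_batch(controller, units, "compute_log_prob", batch_size=2)
second = read_batch(controller, units, "compute_log_prob", batch_size=2)
```

The controller never touches the payload itself, which is the point of the split: only small metadata passes through the central component.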

|

|

118

|

+

|

|

119

|

+

To simplify the usage of TransferQueue, we have encapsulated this process into `AsyncTransferQueueClient` and `TransferQueueClient`. These clients provide both asynchronous and synchronous interfaces for data transfer, allowing users to easily integrate TransferQueue into their framework.

|

|

120

|

+

|

|

121

|

+

> In the future, we will provide a `StreamingDataLoader` interface for disaggregated frameworks as discussed in [issue#85](https://github.com/TransferQueue/TransferQueue/issues/85) and [verl/RFC#2662](https://github.com/volcengine/verl/discussions/2662). Leveraging this abstraction, each rank can automatically get its own data like `DataLoader` in PyTorch. The TransferQueue system will handle the underlying data scheduling and transfer logic caused by different parallelism strategies, significantly simplifying the design of disaggregated frameworks.

|

|

122

|

+

|

|

123

|

+

<h2 id="show-cases">🔥 Showcases</h2>

|

|

124

|

+

|

|

125

|

+

### General Usage

|

|

126

|

+

|

|

127

|

+

The primary interaction points are `AsyncTransferQueueClient` and `TransferQueueClient`, serving as the communication interface with the TransferQueue system.

|

|

128

|

+

|

|

129

|

+

Core interfaces:

|

|

130

|

+

|

|

131

|

+

- `(async_)get_meta(data_fields: list[str], batch_size: int, global_step: int, get_n_samples: bool, task_name: str) -> BatchMeta`

|

|

132

|

+

- `(async_)get_data(metadata: BatchMeta) -> TensorDict`

|

|

133

|

+

- `(async_)put(data: TensorDict, metadata: BatchMeta, global_step)`

|

|

134

|

+

- `(async_)clear(global_step: int)`

|

|

135

|

+

|

|

136

|

+

We will soon release a detailed tutorial and API documentation.

|

|

137

|

+

|

|

138

|

+

### Collocated Example

|

|

139

|

+

|

|

140

|

+

#### verl

|

|

141

|

+

The primary motivation for integrating TransferQueue into verl now is to **alleviate the data transfer bottleneck of the single controller `RayPPOTrainer`**. Currently, all `DataProto` objects must be routed through `RayPPOTrainer`, which makes it a single-point bottleneck for the whole post-training system.

|

|

142

|

+

|

|

143

|

+

|

|

144

|

+

|

|

145

|

+

Leveraging TransferQueue, we separate experience data transfer from metadata dispatch by:

|

|

146

|

+

|

|

147

|

+

- Replacing `DataProto` with `BatchMeta` (metadata) and `TensorDict` (actual data) structures

|

|

148

|

+

- Preserving verl's original Dispatch/Collect logic via BatchMeta (maintaining single-controller debuggability)

|

|

149

|

+

- Accelerating data transfer through TransferQueue's distributed storage units

|

|

150

|

+

|

|

151

|

+

|

|

152

|

+

|

|

153

|

+

You may refer to the [recipe](https://github.com/TransferQueue/TransferQueue/tree/dev/recipe/simple_use_case), where we mimic the verl usage in both async and sync scenarios. Official integration into verl is also available now at [verl/pulls/3649](https://github.com/volcengine/verl/pull/3649) (with subsequent PRs to further optimize the integration).

|

|

154

|

+

|

|

155

|

+

### Disaggregated Example

|

|

156

|

+

|

|

157

|

+

Work in progress :)

|

|

158

|

+

|

|

159

|

+

<p align="center">

|

|

160

|

+

<img src="https://cdn.nlark.com/yuque/0/2025/png/23208217/1758696840817-14ba4c3b-b96e-4390-ac7c-4ecf7b8c0ac3.png" width="70%">

|

|

161

|

+

</p>

|

|

162

|

+

|

|

163

|

+

<h2 id="quick-start">🚀 Quick Start</h2>

|

|

164

|

+

|

|

165

|

+

### Use Python package

|

|

166

|

+

We will soon release the Python package on PyPI.

|

|

167

|

+

|

|

168

|

+

### Build wheel package from source code

|

|

169

|

+

|

|

170

|

+

Follow these steps to build and install:

|

|

171

|

+

1. Clone the source code from the GitHub repository

|

|

172

|

+

```bash
git clone https://github.com/TransferQueue/TransferQueue/
cd TransferQueue
```

|

|

176

|

+

|

|

177

|

+

2. Install dependencies

|

|

178

|

+

```bash
pip install -r requirements.txt
```

|

|

181

|

+

|

|

182

|

+

3. Build and install

|

|

183

|

+

```bash
python -m build --wheel
pip install dist/*.whl
```

|

|

187

|

+

|

|

188

|

+

<h2 id="performance">📊 Performance</h2>

|

|

189

|

+

|

|

190

|

+

<p align="center">

|

|

191

|

+

<img src="https://cdn.nlark.com/yuque/0/2025/png/23208217/1761294403612-76ca20a7-9108-42fc-b3f5-60f84d70f39b.png" width="100%">

|

|

192

|

+

</p>

|

|

193

|

+

|

|

194

|

+

> Note: The above benchmark for TransferQueue is based on our naive `SimpleStorageUnit` backend. By introducing high-performance storage backends and optimizing serialization/deserialization, we expect to achieve even better performance. Contributions from the community are warmly welcome!

|

|

195

|

+

|

|

196

|

+

For detailed performance benchmarks, please refer to [this blog](https://www.yuque.com/haomingzi-lfse7/hlx5g0/obi4ovmy9wf08zz3?singleDoc#).

|

|

197

|

+

|

|

198

|

+

<h2 id="customize"> 🛠️ Customize TransferQueue</h2>

|

|

199

|

+

|

|

200

|

+

### Define your own data retrieval logic

|

|

201

|

+

We provide a `BaseSampler` abstraction class, which defines the following interface:

|

|

202

|

+

|

|

203

|

+

```python3
from abc import ABC, abstractmethod
from typing import Any


class BaseSampler(ABC):
    # Excerpt: only the abstract `sample` method is shown.
    @abstractmethod
    def sample(
        self,
        ready_indexes: list[int],
        batch_size: int,
        *args: Any,
        **kwargs: Any,
    ) -> tuple[list[int], list[int]]:
        """Sample a batch of indices from the ready indices.

        Args:
            ready_indexes: List of global indices for which all required fields of the
                corresponding samples have been produced, and the samples are not labeled as
                consumed in the corresponding task.
            batch_size: Number of samples to select
            *args: Additional positional arguments for specific sampler implementations
            **kwargs: Additional keyword arguments for specific sampler implementations

        Returns:
            List of sampled global indices of length batch_size
            List of global indices of length batch_size that should be labeled as consumed
            (will never be retrieved in the future)

        Raises:
            ValueError: If batch_size is invalid or ready_indexes is insufficient
        """
        raise NotImplementedError("Subclasses must implement sample")
```

|

|

232

|

+

|

|

233

|

+

In this design, we separate data retrieval and data consumption through the two return values, which enables us to easily control sample replacement. We have implemented two reference designs: `SequentialSampler` and `GRPOGroupNSampler`.
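
To show how the two return values control replacement, here is a minimal sketch of two samplers that follow the `sample` signature above. They are illustrative only: the `Toy*` classes do not subclass the real `BaseSampler` (so the example runs without TransferQueue installed), they are not the library's `SequentialSampler`, and returning an empty consumed list is an assumption about what the controller accepts.

```python
import random
from typing import Any


class ToySequentialSampler:
    """Takes the first batch_size ready indexes and marks all of them consumed."""

    def sample(
        self, ready_indexes: list[int], batch_size: int, *args: Any, **kwargs: Any
    ) -> tuple[list[int], list[int]]:
        if batch_size <= 0 or len(ready_indexes) < batch_size:
            raise ValueError("batch_size is invalid or ready_indexes is insufficient")
        sampled = ready_indexes[:batch_size]
        # Sampled and consumed are identical: no sample is ever served twice.
        return sampled, sampled


class ToyReplacementSampler:
    """Draws with replacement and consumes nothing, so rows stay available."""

    def __init__(self, seed: int = 0) -> None:
        self._rng = random.Random(seed)

    def sample(
        self, ready_indexes: list[int], batch_size: int, *args: Any, **kwargs: Any
    ) -> tuple[list[int], list[int]]:
        if batch_size <= 0 or not ready_indexes:
            raise ValueError("batch_size is invalid or ready_indexes is insufficient")
        sampled = [self._rng.choice(ready_indexes) for _ in range(batch_size)]
        # Empty consumed list (assumption): the same rows can be drawn again later.
        return sampled, []


ready = [10, 11, 12, 13]
seq_sampled, seq_consumed = ToySequentialSampler().sample(ready, 2)
rep_sampled, rep_consumed = ToyReplacementSampler(seed=0).sample(ready, 6)
```

The only difference between the two policies is the second return value, which is what lets a sampler decide whether a retrieved sample remains available.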

|

|

234

|

+

|

|

235

|

+

The `Sampler` class or instance should be passed to the `TransferQueueController` during initialization. During each `get_meta` call, you can provide dynamic sampling parameters to the `Sampler`.

|

|

236

|

+

|

|

237

|

+

```python3
from transfer_queue import TransferQueueController, TransferQueueClient, GRPOGroupNSampler, process_zmq_server_info

# Option 1: Pass the sampler class to the TransferQueueController
controller = TransferQueueController.remote(GRPOGroupNSampler)

# Option 2: Pass the sampler instance to the TransferQueueController (if you need custom configuration)
# `YourOwnSampler` and `config` are placeholders for your own sampler class and its settings
your_own_sampler = YourOwnSampler(config)
controller = TransferQueueController.remote(your_own_sampler)

# Use the sampler
# `client` is an already-initialized TransferQueueClient (setup omitted here)
batch_meta = client.get_meta(
    data_fields=["input_ids", "attention_mask"],
    batch_size=8,
    partition_id="train_0",
    task_name="generate_sequences",
    sampling_config={"n_samples_per_prompt": 4},  # Put the required sampling parameters here
)
```

|

|

256

|

+

|

|

257

|

+

### How to integrate a new storage backend

|

|

258

|

+

|

|

259

|

+

The data plane is organized as follows:

|

|

260

|

+

```text
transfer_queue/
├── storage/
│   ├── __init__.py
│   ├── simple_backend.py             # SimpleStorageUnit, StorageUnitData, StorageMetaGroup
│   ├── managers/                     # Managers are upper level interfaces that encapsulate the interaction logic with TQ system.
│   │   ├── __init__.py
│   │   ├── base.py                   # TransferQueueStorageManager, KVStorageManager
│   │   ├── simple_backend_manager.py # AsyncSimpleStorageManager
│   │   ├── yuanrong_manager.py       # YuanrongStorageManager
│   │   ├── mooncake_manager.py       # MooncakeStorageManager
│   │   └── factory.py                # TransferQueueStorageManagerFactory
│   └── clients/                      # Clients are lower level interfaces that directly manipulate the target storage backend.
│       ├── __init__.py
│       ├── base.py                   # TransferQueueStorageKVClient
│       ├── yuanrong_client.py        # YRStorageClient
│       ├── mooncake_client.py        # MooncakeStoreClient
│       └── factory.py                # TransferQueueStorageClientFactory
```

|

|

279

|

+

|

|

280

|

+

To integrate TransferQueue with a custom storage backend, start by implementing a subclass that inherits from `TransferQueueStorageManager`. This subclass acts as an adapter between the TransferQueue system and the target storage backend. For KV-based storage backends, you can simply inherit from `KVStorageManager`, which can serve as the general manager for all KV-based backends.

|

|

281

|

+

|

|

282

|

+

Distributed storage backends often come with their own native clients serving as the interface of the storage system. In such cases, a low-level adapter for this client can be written, following the examples provided in the `storage/clients` directory.

|

|

283

|

+

|

|

284

|

+

Factory classes are provided for both `StorageManager` and `StorageClient` to facilitate easy integration. Adding necessary descriptions of required parameters in the factory class helps enhance the overall user experience.
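
The manager/client/factory layering can be sketched as follows. This is a generic registry pattern written for illustration, not the code in `storage/managers/factory.py` or `storage/clients/factory.py`: `register_client`, `create_client`, `ToyKVClient`, `ToyKVManager`, and the `namespace` parameter are all hypothetical.

```python
from typing import Callable

_CLIENT_REGISTRY: dict[str, type] = {}


def register_client(name: str) -> Callable[[type], type]:
    """Class decorator that makes a client discoverable by name."""

    def decorator(cls: type) -> type:
        _CLIENT_REGISTRY[name] = cls
        return cls

    return decorator


def create_client(name: str, config: dict):
    """Factory entry point; lists the known names when the lookup fails."""
    if name not in _CLIENT_REGISTRY:
        raise ValueError(f"Unknown client {name!r}; available: {sorted(_CLIENT_REGISTRY)}")
    return _CLIENT_REGISTRY[name](config)


@register_client("toy_kv")
class ToyKVClient:
    """Low-level adapter: the only layer that touches the backend's native API."""

    def __init__(self, config: dict) -> None:
        self.namespace = config["namespace"]  # required parameter, fails early if missing
        self._store: dict[str, object] = {}

    def put(self, keys: list[str], values: list) -> None:
        for key, value in zip(keys, values):
            self._store[f"{self.namespace}/{key}"] = value

    def get(self, keys: list[str]) -> list:
        return [self._store[f"{self.namespace}/{key}"] for key in keys]


class ToyKVManager:
    """Upper-level manager: maps (global index, field) pairs to flat keys."""

    def __init__(self, client: ToyKVClient) -> None:
        self.client = client

    @staticmethod
    def _keys(indexes: list[int], field: str) -> list[str]:
        return [f"{index}@{field}" for index in indexes]

    def put_field(self, indexes: list[int], field: str, values: list) -> None:
        self.client.put(self._keys(indexes, field), values)

    def get_field(self, indexes: list[int], field: str) -> list:
        return self.client.get(self._keys(indexes, field))


manager = ToyKVManager(create_client("toy_kv", {"namespace": "demo"}))
manager.put_field([0, 1], "input_ids", [[1, 2], [3, 4]])
roundtrip = manager.get_field([1, 0], "input_ids")
```

Keeping key construction in the manager and backend calls in the client means a new KV backend only needs a new client class plus a registry entry.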

|

|

285

|

+

|

|

286

|

+

<h2 id="contribution"> ✏️ Contribution Guide</h2>

|

|

287

|

+

|

|

288

|

+

<span style="color: #FF0000;">**Contributions are warmly welcome!**</span>

|

|

289

|

+

|

|

290

|

+

New ideas, feature suggestions, and user experience feedback are all encouraged—feel free to submit issues or PRs. We will respond as soon as possible.

|

|

291

|

+

|

|

292

|

+

We recommend using pre-commit to keep code formatting consistent.

|

|

293

|

+

|

|

294

|

+

```bash
# install pre-commit
pip install pre-commit

# run the following command in your repo folder, then fix any failing checks before committing your code
pre-commit install && pre-commit run --all-files --show-diff-on-failure --color=always
```

|

|

301

|

+

|

|

302

|

+

<h2 id="roadmap"> 🛣️ Roadmap</h2>

|

|

303

|

+

|

|

304

|

+

- [ ] Support data rewrite for partial rollout & agentic post-training

|

|

305

|

+

- [x] Provide a general storage abstraction layer `TransferQueueStorageManager` to manage distributed storage units, which simplifies `Client` design and makes it possible to introduce different storage backends ([PR#66](https://github.com/TransferQueue/TransferQueue/pull/66), [issue#72](https://github.com/TransferQueue/TransferQueue/issues/72))

|

|

306

|

+

- [x] Implement `AsyncSimpleStorageManager` as the default storage backend based on the `TransferQueueStorageManager` abstraction

|

|

307

|

+

- [x] Provide a `KVStorageManager` to cover all the KV based storage backends ([PR#96](https://github.com/TransferQueue/TransferQueue/pull/96))

|

|

308

|

+

- [x] Support topic-based data partitioning to maintain train/val/test data simultaneously ([PR#98](https://github.com/TransferQueue/TransferQueue/pull/98))

|

|

309

|

+

- [ ] Release the first stable version through PyPI

|

|

310

|

+

- [ ] Support disaggregated framework (each rank retrieves its own data without going through a centralized node)

|

|

311

|

+

- [ ] Provide a `StreamingDataLoader` interface for disaggregated framework

|

|

312

|

+

- [ ] Support load-balancing and dynamic batching

|

|

313

|

+

- [ ] Support high-performance storage backends for RDMA transmission (e.g., [MoonCakeStore](https://github.com/kvcache-ai/Mooncake), [Ray Direct Transport](https://docs.ray.io/en/master/ray-core/direct-transport.html)...)

|

|

314

|

+

- [ ] High-performance serialization and deserialization

|

|

315

|

+

- [ ] More documentation, examples and tutorials

|

|

316

|

+

|

|

317

|

+

<h2 id="citation">📑 Citation</h2>

|

|

318

|

+

Please cite our paper if you find this repo useful:

|

|

319

|

+

|

|

320

|

+

```bibtex
@article{han2025asyncflow,
  title={AsyncFlow: An Asynchronous Streaming RL Framework for Efficient LLM Post-Training},
  author={Han, Zhenyu and You, Ansheng and Wang, Haibo and Luo, Kui and Yang, Guang and Shi, Wenqi and Chen, Menglong and Zhang, Sicheng and Lan, Zeshun and Deng, Chunshi and others},
  journal={arXiv preprint arXiv:2507.01663},
  year={2025}
}
```

|

|

@@ -0,0 +1,41 @@

|

|

|

1

|

+

recipe/simple_use_case/async_demo.py,sha256=nkxGPBUyD32p4xEcYzzRSuUfsi1OVoDQcrer71piefg,13591

|

|

2

|

+

recipe/simple_use_case/sync_demo.py,sha256=yn6i2-G1hwdFJ2_K46zYbWLJKAYEqtZhfVPLEwkgsE8,8629

|

|

3

|

+

tests/test_async_simple_storage_manager.py,sha256=CJaLiqXp7bDu9rHDeDvoA3dycLbIuaoU2e9NfrTyVPo,12346

|

|

4

|

+

tests/test_client.py,sha256=74Pm1D4SI_GCg0Kxwm5Wqa4ppSfc57mpHJGuIdtNUrs,15325

|

|

5

|

+

tests/test_controller.py,sha256=ZcvFCC3jSnNN_fEerjA37RQv0SSO0Xh8vjcL2mvF03o,11084

|

|

6

|

+

tests/test_controller_data_partitions.py,sha256=qZxMHerMwKIwyRmT8FZke8TEd80Z9vAkBzU_k5Jz1bY,19185

|

|

7

|

+

tests/test_kv_storage_manager.py,sha256=Eh6xykdhLBMpxikXfRHxn1crhLsQjn9QGa0O7TLO-5o,3582

|

|

8

|

+

tests/test_put.py,sha256=WnRKCGPXmRITAvbD8KWlZor4jpvv6sdg2gg3NCw9gyQ,10453

|

|

9

|

+

tests/test_samplers.py,sha256=CvYqfmbHEWWa1RyymztCAn0GcitAPOBbfJ4ud1VvO2o,19168

|

|

10

|

+

tests/test_serial_utils_on_cpu.py,sha256=Hju5yAV52JP-cPw-PT_jsut-y6J_7lUX_SbG5EO1lNQ,7379

|

|

11

|

+

tests/test_simple_storage_unit.py,sha256=Sczhw2bdCfTfa7RwnpW-aKCV1of8mO6z3l3q2PDzZCA,16535

|

|

12

|

+

tests/test_storage_client_factory.py,sha256=U0gS_l4bc_bP7K_uPhy8UlJ186HyTrA10TSJNdf5SBM,1708

|

|

13

|

+

transfer_queue/__init__.py,sha256=68c0sBfqHPqTa7OdzO4sAZB52XvwtjpwLqP9BWAh4fA,1535

|

|

14

|

+

transfer_queue/client.py,sha256=zDlH1beWwRbjz0a7S8QH9IOJ1wp5yEQ36XYwFwJTXOY,24949

|

|

15

|

+

transfer_queue/controller.py,sha256=gFS3OMM7giSqwypA7qmMFZ_AxG22LIpCQIblbqC_Gm8,48988

|

|

16

|

+

transfer_queue/metadata.py,sha256=LHQhM7vixw5QcA05nZcqWbxSh7mhJ2ETJOo_1gFb3eI,17645

|

|

17

|

+

transfer_queue/sampler/__init__.py,sha256=1oauDy2Dwb5GXhKi7tl5DWAHv8i4t2MQK1S4U36Sy4g,788

|

|

18

|

+

transfer_queue/sampler/base.py,sha256=wFti4dNJb3YArYpGzxA_YDfyUTdTG8wVz6HclPDyZPw,3299

|

|

19

|

+

transfer_queue/sampler/grpo_group_n_sampler.py,sha256=Kq3hGAz8mboBNvw4Dj0P8lP6Qs8TDojx81fxSh57w28,6566

|

|

20

|

+

transfer_queue/sampler/sequential_sampler.py,sha256=TY0eB-uFLUskwoNMgu3AvuF4G2KDkgjOkrlXZHy4Pls,2780

|

|

21

|

+

transfer_queue/storage/__init__.py,sha256=559q9ZOMLLhHXil5-iY3aLPnACoJLnZnKf-E0lvpQdk,978

|

|

22

|

+

transfer_queue/storage/simple_backend.py,sha256=n62dq3ApBu9cqO-lqIpNfLnoqGeZKkF1ztSQ7EY5EBI,18844

|

|

23

|

+

transfer_queue/storage/clients/__init__.py,sha256=s7kaQVy_FDqFCXz8GkHHmzkvcU3AeF0zzbcYxmbsayw,903

|

|

24

|

+

transfer_queue/storage/clients/base.py,sha256=xXd9JBeTmW8tN4wsPocHhW-ERUEzx2YyYHZrtuQQIdI,690

|

|

25

|

+

transfer_queue/storage/clients/factory.py,sha256=lPOG8oMAgaTbrzkogcOULPJnGywa0F-m4vskkOQZhnU,2137

|

|

26

|

+

transfer_queue/storage/clients/yuanrong_client.py,sha256=rWOPQPLgHLav7cFdGCr8CeVUsQKb7SiVhjgxPti6Z9A,4595

|

|

27

|

+

transfer_queue/storage/managers/__init__.py,sha256=y5x5OzZwK_YormdHdzc-smnJNew3niuqU2g_kkaiXIk,876

|

|

28

|

+

transfer_queue/storage/managers/base.py,sha256=ntlo6sLWIbTiAOUxgJTUyjLl5m4HBuAUUNCYZxq9BFM,20352

|

|

29

|

+

transfer_queue/storage/managers/factory.py,sha256=58kp2mCKz1K8Ea7RWMsWxdDhN3y4ZhgE-G647AKq7-I,1752

|

|

30

|

+

transfer_queue/storage/managers/simple_backend_manager.py,sha256=fCj-0BnS3RbzPn03KEcxmUWbxZVWK11cfxpj8tSf-yY,27381

|

|

31

|

+

transfer_queue/storage/managers/yuanrong_manager.py,sha256=NjHC3LBW0fQwm30Oq_qEKoCQEq8oWO0D-AobcpQNPNg,777

|

|

32

|

+

transfer_queue/utils/__init__.py,sha256=vki-5RVaRBKxVc6Q7XPQox3VNPio2DvJYvRz0SZtu-w,586

|

|

33

|

+

transfer_queue/utils/serial_utils.py,sha256=9ZgsytTp-441YKtIRFqyH5NhNifSqRKa2h0FI44ltcc,10200

|

|

34

|

+

transfer_queue/utils/utils.py,sha256=Pno4h3WjX_eT7q4xiVV6Jkhquc39Fp-Ycsg1cv0qNKQ,4544

|

|

35

|

+

transfer_queue/utils/zmq_utils.py,sha256=jCg2pQfvy_IYdGyZq4nvL4CAwjJc7Li0Trp3T3GDBMg,5118

|

|

36

|

+

transfer_queue/version/version,sha256=6GX4KkA_FGXCFr4Z-Mw9dUky3PbKBVWPbSJCCVmVPlw,9

|

|

37

|

+

transferqueue-0.1.1.dev0.dist-info/licenses/LICENSE,sha256=WNHhf_5RCaeuKWyq_K39vmp9F28LxKsB4SpomwSZ2L0,11357

|

|

38

|

+

transferqueue-0.1.1.dev0.dist-info/METADATA,sha256=3YY8wlygPXiXf5Ct4x6Is4ezv6-ZR1bwnL3tyTxZf7E,19531

|

|

39

|

+

transferqueue-0.1.1.dev0.dist-info/WHEEL,sha256=_zCd3N1l69ArxyTb8rzEoP9TpbYXkqRFSNOD5OuxnTs,91

|

|

40

|

+

transferqueue-0.1.1.dev0.dist-info/top_level.txt,sha256=BiBclu7jWJ0AZ35vUr3hN9_cg8JL9EiH_hjFxquMxtw,33

|

|

41

|

+

transferqueue-0.1.1.dev0.dist-info/RECORD,,

|

|

@@ -0,0 +1,202 @@

|

|

|

1

|

+

|

|

2

|

+

Apache License

|

|

3

|

+

Version 2.0, January 2004

|

|

4

|

+

http://www.apache.org/licenses/

|

|

5

|

+

|

|

6

|

+

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

|

|

7

|

+

|

|

8

|

+

1. Definitions.

|

|

9

|

+

|

|

10

|

+

"License" shall mean the terms and conditions for use, reproduction,

|

|

11

|

+

and distribution as defined by Sections 1 through 9 of this document.

|

|

12

|

+

|

|

13

|

+

"Licensor" shall mean the copyright owner or entity authorized by

|

|

14

|

+

the copyright owner that is granting the License.

|

|

15

|

+

|

|

16

|

+

"Legal Entity" shall mean the union of the acting entity and all

|

|

17

|

+

other entities that control, are controlled by, or are under common

|

|

18

|

+

control with that entity. For the purposes of this definition,

|

|

19

|

+

"control" means (i) the power, direct or indirect, to cause the

|

|

20

|

+

direction or management of such entity, whether by contract or

|

|

21

|

+

otherwise, or (ii) ownership of fifty percent (50%) or more of the

|

|

22

|

+

outstanding shares, or (iii) beneficial ownership of such entity.

|

|

23

|

+

|

|

24

|

+

"You" (or "Your") shall mean an individual or Legal Entity

|

|

25

|

+

exercising permissions granted by this License.

|

|

26

|

+

|

|

27

|

+

"Source" form shall mean the preferred form for making modifications,

|

|

28

|

+

including but not limited to software source code, documentation

|

|

29

|

+

source, and configuration files.

|

|

30

|

+

|

|

31

|

+

"Object" form shall mean any form resulting from mechanical

|

|

32

|

+

transformation or translation of a Source form, including but

|

|

33

|

+

not limited to compiled object code, generated documentation,

|

|

34

|

+

and conversions to other media types.

|

|

35

|

+

|

|

36

|

+

"Work" shall mean the work of authorship, whether in Source or

|

|

37

|

+

Object form, made available under the License, as indicated by a

|

|

38

|

+

copyright notice that is included in or attached to the work

|

|

39

|

+

(an example is provided in the Appendix below).

|

|

40

|

+

|

|

41

|

+

"Derivative Works" shall mean any work, whether in Source or Object

|

|

42

|

+

form, that is based on (or derived from) the Work and for which the

|

|

43

|

+

editorial revisions, annotations, elaborations, or other modifications

|

|

44

|

+

represent, as a whole, an original work of authorship. For the purposes

|

|

45

|

+

of this License, Derivative Works shall not include works that remain

|

|

46

|

+

separable from, or merely link (or bind by name) to the interfaces of,

|

|

47

|

+

the Work and Derivative Works thereof.

|

|

48

|

+

|

|

49

|

+

"Contribution" shall mean any work of authorship, including

|

|

50

|

+

the original version of the Work and any modifications or additions

|

|

51

|

+

to that Work or Derivative Works thereof, that is intentionally

|

|

52

|

+

submitted to Licensor for inclusion in the Work by the copyright owner

|

|

53

|

+

or by an individual or Legal Entity authorized to submit on behalf of

|

|

54

|

+

the copyright owner. For the purposes of this definition, "submitted"

|

|

55

|

+

means any form of electronic, verbal, or written communication sent

|

|

56

|

+

to the Licensor or its representatives, including but not limited to

|

|

57

|

+

communication on electronic mailing lists, source code control systems,

|

|

58

|

+

and issue tracking systems that are managed by, or on behalf of, the

|

|

59

|

+

Licensor for the purpose of discussing and improving the Work, but

|

|

60

|

+

excluding communication that is conspicuously marked or otherwise

|

|

61

|

+

designated in writing by the copyright owner as "Not a Contribution."

|

|

62

|

+

|

|

63

|

+

"Contributor" shall mean Licensor and any individual or Legal Entity

|

|

64

|

+

on behalf of whom a Contribution has been received by Licensor and

|

|

65

|

+

subsequently incorporated within the Work.

|

|

66

|

+

|

|

67

|

+

2. Grant of Copyright License. Subject to the terms and conditions of

|

|

68

|

+

this License, each Contributor hereby grants to You a perpetual,

|

|

69

|

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

|

70

|

+

copyright license to reproduce, prepare Derivative Works of,

|

|

71

|

+

publicly display, publicly perform, sublicense, and distribute the

|

|

72

|

+

Work and such Derivative Works in Source or Object form.

|

|

73

|

+

|

|

74

|

+

3. Grant of Patent License. Subject to the terms and conditions of

|

|

75

|

+

this License, each Contributor hereby grants to You a perpetual,

|

|

76

|

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

|

77

|

+

(except as stated in this section) patent license to make, have made,

|

|

78

|

+

use, offer to sell, sell, import, and otherwise transfer the Work,

|

|

79

|

+

where such license applies only to those patent claims licensable

|

|

80

|

+

by such Contributor that are necessarily infringed by their

|

|

81

|

+

Contribution(s) alone or by combination of their Contribution(s)

|

|

82

|

+

with the Work to which such Contribution(s) was submitted. If You

|

|

83

|

+

institute patent litigation against any entity (including a

|

|

84

|

+

cross-claim or counterclaim in a lawsuit) alleging that the Work

|

|

85

|

+

or a Contribution incorporated within the Work constitutes direct

|

|

86

|

+

or contributory patent infringement, then any patent licenses

|

|

87

|

+

granted to You under this License for that Work shall terminate

|

|

88

|

+

as of the date such litigation is filed.

|

|

89

|

+

|

|

90

|

+

4. Redistribution. You may reproduce and distribute copies of the

|

|

91

|

+

Work or Derivative Works thereof in any medium, with or without

|

|

92

|

+

modifications, and in Source or Object form, provided that You

|

|

93

|

+

meet the following conditions:

|

|

94

|

+

|

|

95

|

+

(a) You must give any other recipients of the Work or

|

|

96

|

+

Derivative Works a copy of this License; and

|

|

97

|

+

|

|

98

|

+

(b) You must cause any modified files to carry prominent notices

|

|

99

|

+

stating that You changed the files; and

|

|

100

|

+

|

|

101

|

+

(c) You must retain, in the Source form of any Derivative Works

|

|

102

|

+

that You distribute, all copyright, patent, trademark, and

|

|

103

|

+

attribution notices from the Source form of the Work,

|

|

104

|

+

excluding those notices that do not pertain to any part of

|

|

105

|

+

the Derivative Works; and

|

|

106

|

+

|

|

107

|

+

(d) If the Work includes a "NOTICE" text file as part of its

|

|

108

|

+

distribution, then any Derivative Works that You distribute must

|

|

109

|

+

include a readable copy of the attribution notices contained

|

|

110

|

+

within such NOTICE file, excluding those notices that do not

|

|

111

|

+

pertain to any part of the Derivative Works, in at least one

|

|

112

|

+

of the following places: within a NOTICE text file distributed

|

|

113

|

+

as part of the Derivative Works; within the Source form or

|

|

114

|

+

documentation, if provided along with the Derivative Works; or,

|

|

115

|

+

within a display generated by the Derivative Works, if and

|

|

116

|

+

wherever such third-party notices normally appear. The contents

|

|

117

|

+

of the NOTICE file are for informational purposes only and

|

|

118

|

+

do not modify the License. You may add Your own attribution

|

|

119

|

+

notices within Derivative Works that You distribute, alongside

|

|

120

|

+

or as an addendum to the NOTICE text from the Work, provided

|

|

121

|

+

that such additional attribution notices cannot be construed

|

|

122

|

+

as modifying the License.

|

|

123

|

+

|

|

124

|

+

You may add Your own copyright statement to Your modifications and

|

|

125

|

+

may provide additional or different license terms and conditions

|

|

126

|

+

for use, reproduction, or distribution of Your modifications, or

|

|

127

|

+

for any such Derivative Works as a whole, provided Your use,

|

|

128

|

+

reproduction, and distribution of the Work otherwise complies with

|

|

129

|

+

the conditions stated in this License.

|

|

130

|

+

|

|

131

|

+

5. Submission of Contributions. Unless You explicitly state otherwise,

|

|

132

|

+

any Contribution intentionally submitted for inclusion in the Work

|

|

133

|

+

by You to the Licensor shall be under the terms and conditions of

|

|

134

|

+

this License, without any additional terms or conditions.

|

|

135

|

+

Notwithstanding the above, nothing herein shall supersede or modify

|

|

136

|

+

the terms of any separate license agreement you may have executed

|

|

137

|

+

with Licensor regarding such Contributions.

|

|

138

|

+

|

|

139

|

+

6. Trademarks. This License does not grant permission to use the trade

|

|

140

|

+

names, trademarks, service marks, or product names of the Licensor,

|

|

141

|

+

except as required for reasonable and customary use in describing the

|

|

142

|

+

origin of the Work and reproducing the content of the NOTICE file.

|

|

143

|

+

|

|

144

|

+

7. Disclaimer of Warranty. Unless required by applicable law or

|

|

145

|

+

agreed to in writing, Licensor provides the Work (and each

|

|

146

|

+

Contributor provides its Contributions) on an "AS IS" BASIS,

|

|

147

|

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

|

|

148

|

+

implied, including, without limitation, any warranties or conditions

|

|

149

|

+

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

|

|

150

|

+

PARTICULAR PURPOSE. You are solely responsible for determining the

|

|

151

|

+

appropriateness of using or redistributing the Work and assume any

|

|

152

|

+

risks associated with Your exercise of permissions under this License.

|

|

153

|

+

|

|

154

|

+

8. Limitation of Liability. In no event and under no legal theory,

|

|

155

|

+

whether in tort (including negligence), contract, or otherwise,

|

|

156

|

+

unless required by applicable law (such as deliberate and grossly

|

|

157

|

+

negligent acts) or agreed to in writing, shall any Contributor be

|

|

158

|

+

liable to You for damages, including any direct, indirect, special,

|

|

159

|

+

incidental, or consequential damages of any character arising as a

|

|

160

|

+

result of this License or out of the use or inability to use the

|

|

161

|

+

Work (including but not limited to damages for loss of goodwill,

|

|

162

|

+

work stoppage, computer failure or malfunction, or any and all

|

|

163

|

+

other commercial damages or losses), even if such Contributor

|

|

164

|

+

has been advised of the possibility of such damages.

|

|

165

|

+

|

|

166

|

+

9. Accepting Warranty or Additional Liability. While redistributing

|

|

167

|

+

the Work or Derivative Works thereof, You may choose to offer,

|

|

168

|

+

and charge a fee for, acceptance of support, warranty, indemnity,

|

|

169

|

+

or other liability obligations and/or rights consistent with this

|

|

170

|

+

License. However, in accepting such obligations, You may act only

|

|

171

|

+

on Your own behalf and on Your sole responsibility, not on behalf

|

|

172

|

+

of any other Contributor, and only if You agree to indemnify,

|

|

173

|

+

defend, and hold each Contributor harmless for any liability

|

|

174

|

+

incurred by, or claims asserted against, such Contributor by reason

|

|

175

|

+

of your accepting any such warranty or additional liability.

|

|

176

|

+

|

|

177

|

+

END OF TERMS AND CONDITIONS

|

|

178

|

+

|

|

179

|

+

APPENDIX: How to apply the Apache License to your work.

|

|

180

|

+

|

|

181

|

+

To apply the Apache License to your work, attach the following

|

|

182

|

+

boilerplate notice, with the fields enclosed by brackets "[]"

|

|

183

|

+

replaced with your own identifying information. (Don't include

|

|

184

|

+

the brackets!) The text should be enclosed in the appropriate

|

|

185

|

+

comment syntax for the file format. We also recommend that a

|

|

186

|

+

file or class name and description of purpose be included on the

|

|

187

|

+

same "printed page" as the copyright notice for easier

|

|

188

|

+

identification within third-party archives.

|

|

189

|

+

|

|

190

|

+

Copyright [yyyy] [name of copyright owner]

|

|

191

|

+

|

|

192

|

+

Licensed under the Apache License, Version 2.0 (the "License");

|

|

193

|

+

you may not use this file except in compliance with the License.

|

|

194

|

+

You may obtain a copy of the License at

|

|

195

|

+

|

|

196

|

+

http://www.apache.org/licenses/LICENSE-2.0

|

|

197

|

+

|

|

198

|

+

Unless required by applicable law or agreed to in writing, software

|

|

199

|

+

distributed under the License is distributed on an "AS IS" BASIS,

|

|

200

|

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

|

201

|

+

See the License for the specific language governing permissions and

|

|

202

|

+

limitations under the License.

|