nvicode 0.1.5 → 0.1.6

- package/README.md +17 -15

- package/dist/cli.js +223 -62

- package/dist/config.js +12 -3

- package/dist/models.js +30 -5

- package/dist/proxy.js +58 -3

- package/dist/usage.js +27 -1

- package/package.json +2 -2

package/README.md

CHANGED

@@ -1,6 +1,7 @@
-#
+# Navicode - one click Nvidia NIM to Claude code connection
 
-Run Claude Code through NVIDIA-hosted models using a
+Run Claude Code through NVIDIA-hosted models or OpenRouter using a simple CLI wrapper.
+Use top open-source model APIs on NVIDIA Build for free, with `nvicode` paced to `40 RPM` by default.
 
 Supported environments:
 - macOS

|

@@ -16,21 +17,16 @@ Install the published package:

|

|

|

16

17

|

npm install -g nvicode

|

|

17

18

|

```

|

|

18

19

|

|

|

19

|

-

|

|

20

|

+

Set up provider, key, and model:

|

|

20

21

|

|

|

21

|

-

|

|

22

|

-

|

|

23

|

-

```sh

|

|

24

|

-

nvicode auth

|

|

25

|

-

```

|

|

26

|

-

|

|

27

|

-

Choose a model:

|

|

22

|

+

- NVIDIA: get a free key from [NVIDIA Build API Keys](https://build.nvidia.com/settings/api-keys)

|

|

23

|

+

- OpenRouter: use your OpenRouter API key

|

|

28

24

|

|

|

29

25

|

```sh

|

|

30

26

|

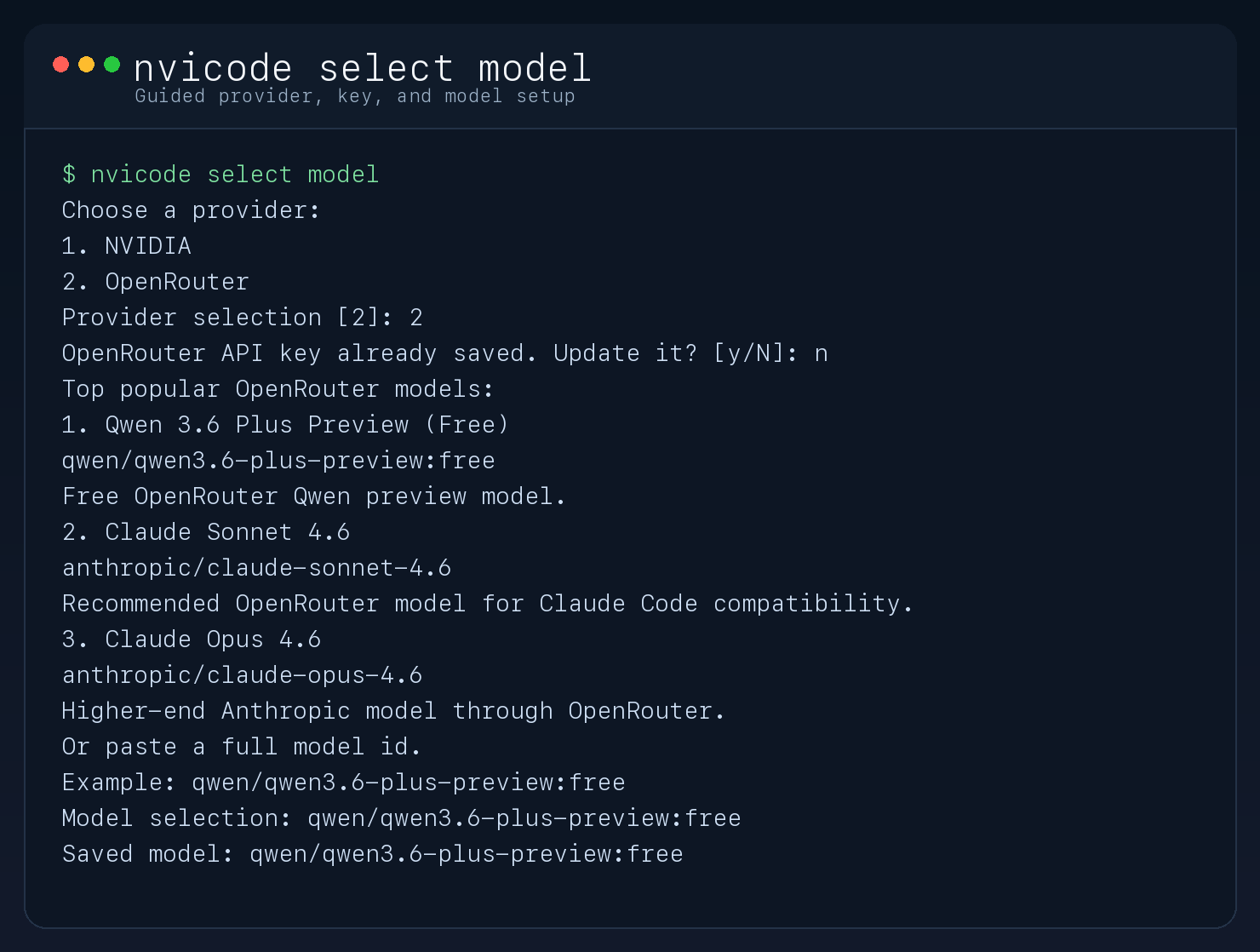

nvicode select model

|

|

31

27

|

```

|

|

32

28

|

|

|

33

|

-

Launch Claude Code through

|

|

29

|

+

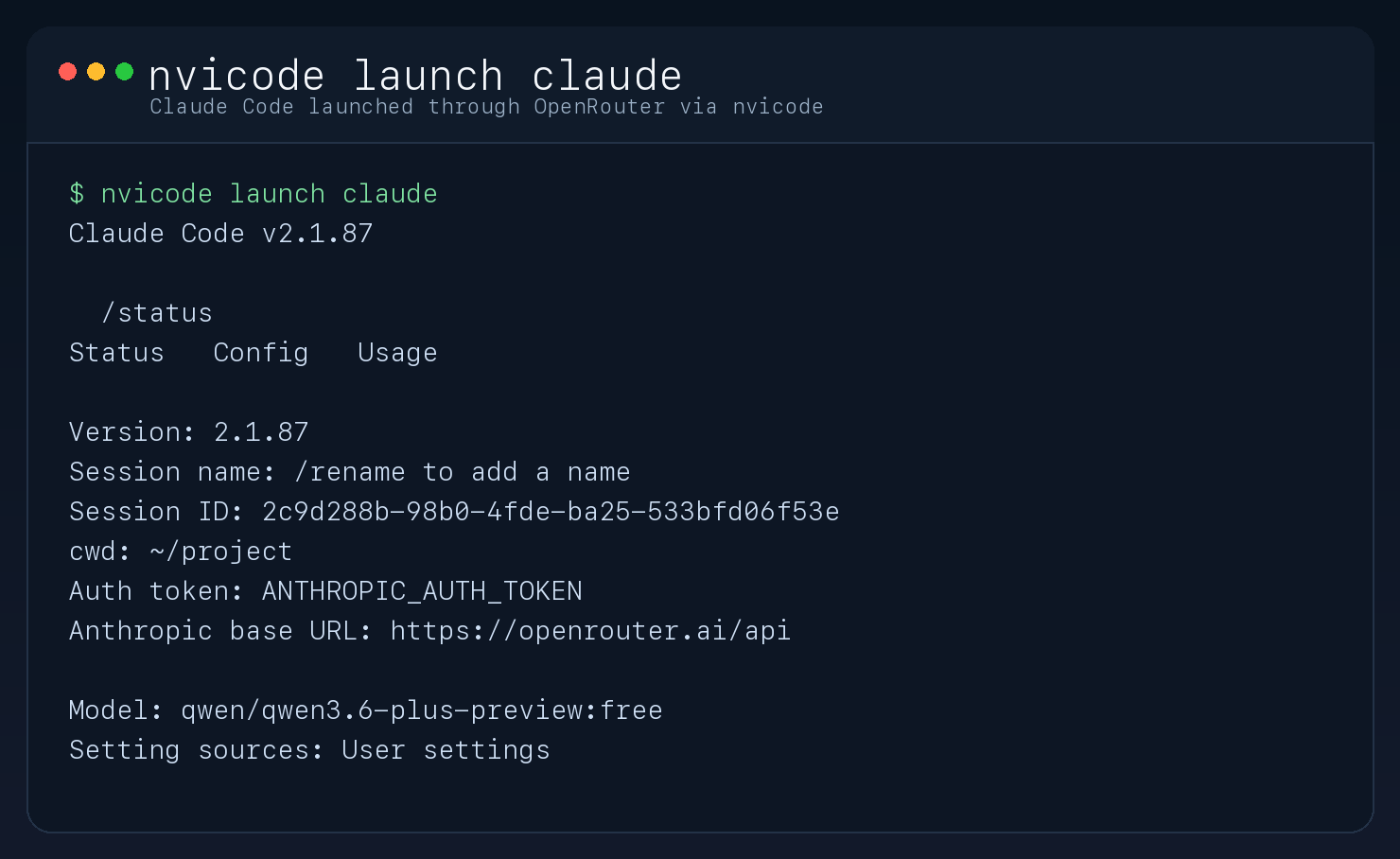

Launch Claude Code through your selected provider:

|

|

34

30

|

|

|

35

31

|

```sh

|

|

36

32

|

nvicode launch claude

|

@@ -46,7 +42,7 @@ nvicode launch claude
 
 
 
-### Launch Claude Code through
+### Launch Claude Code through your selected provider
 
 
 

@@ -64,12 +60,18 @@ nvicode auth
 nvicode launch claude -p "Reply with exactly OK"
 ```
 
-
+Provider behavior:
+- NVIDIA: starts a local proxy on `127.0.0.1:8788`, points Claude Code at it with `ANTHROPIC_BASE_URL`, and forwards requests to NVIDIA `chat/completions`.
+- OpenRouter: points Claude Code directly at `https://openrouter.ai/api` using OpenRouter credentials and Anthropic-compatible model ids.
+
+In an interactive terminal, `nvicode usage` refreshes live every 2 seconds. When piped or redirected, it prints a single snapshot.
 
-
+`nvicode select model` now asks for provider, optional API key update, and model choice in one guided flow.
+If no API key is saved for the active provider yet, `nvicode` prompts for one on first use.
 By default, the proxy paces upstream NVIDIA requests at `40 RPM`. Override that with `NVICODE_MAX_RPM` if your account has a different limit.
 The usage dashboard compares your local NVIDIA run cost against Claude Opus 4.6 at `$5 / MTok input` and `$25 / MTok output`, based on Anthropic pricing as of `2026-03-30`.
 If your NVIDIA endpoint is not free, override local cost estimates with `NVICODE_INPUT_USD_PER_MTOK` and `NVICODE_OUTPUT_USD_PER_MTOK`.
+Local `usage`, `activity`, and `dashboard` commands are available for NVIDIA proxy sessions. OpenRouter sessions use OpenRouter's direct connection path instead.
 
 ## Requirements
 

|
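The cost comparison described in the README lines above is plain per-million-token arithmetic. The following is a minimal sketch of it, not the package's own code: `costUsd` and `compareRun` are hypothetical names, the baseline rates are the ones the README quotes for Claude Opus 4.6, and the provider rates default to 0 on the assumption of a free NVIDIA endpoint (the README says they can be overridden through `NVICODE_INPUT_USD_PER_MTOK` and `NVICODE_OUTPUT_USD_PER_MTOK`).

```javascript
// Hypothetical helpers for illustration; the package's real accounting is not shown in this part of the diff.
const costUsd = (inputTokens, outputTokens, inputUsdPerMTok, outputUsdPerMTok) =>
    (inputTokens / 1_000_000) * inputUsdPerMTok +
    (outputTokens / 1_000_000) * outputUsdPerMTok;

const compareRun = (inputTokens, outputTokens, provider = { input: 0, output: 0 }) => {
    // Cost at the configured provider rates (assumed 0 for a free endpoint).
    const providerCostUsd = costUsd(inputTokens, outputTokens, provider.input, provider.output);
    // README baseline: Claude Opus 4.6 at $5 / MTok input and $25 / MTok output.
    const compareCostUsd = costUsd(inputTokens, outputTokens, 5, 25);
    return { providerCostUsd, compareCostUsd, savingsUsd: compareCostUsd - providerCostUsd };
};

// 2M input tokens and 400k output tokens on a free endpoint.
console.log(compareRun(2_000_000, 400_000));
```

Passing a third argument such as `{ input: 1, output: 2 }` models a paid endpoint, in which case the savings shrink by the provider cost.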

@@ -92,4 +94,4 @@ npm link
 - `thinking` is disabled by default because some NVIDIA reasoning models can consume the entire output budget and return no visible answer to Claude Code.
 - The proxy supports basic text, tool calls, tool results, and token count estimation.
 - The proxy includes upstream request pacing and retries on NVIDIA `429` responses.
-- Claude Code remains the frontend; the selected
+- Claude Code remains the frontend; the selected provider/model becomes the backend.

|
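The README mentions request pacing at `40 RPM` with an `NVICODE_MAX_RPM` override, but the pacing code itself is not part of the hunks shown here. One common way to get that behavior is a minimum-interval pacer. The sketch below is an assumption about the general technique, not the proxy's actual implementation, and `createPacer` is a hypothetical name.

```javascript
// Hypothetical pacer: spaces request start times at least 60_000 / maxRpm ms apart.
const createPacer = (maxRpm = 40) => {
    const intervalMs = 60_000 / maxRpm;
    let nextSlot = 0;
    // Returns how long a request arriving at `now` (ms) should wait before going upstream.
    return (now) => {
        const startAt = Math.max(now, nextSlot);
        nextSlot = startAt + intervalMs;
        return startAt - now;
    };
};

// Mirror the documented override: use NVICODE_MAX_RPM when it is a positive number, else 40.
const envRpm = Number(process.env.NVICODE_MAX_RPM);
const waitFor = createPacer(Number.isFinite(envRpm) && envRpm > 0 ? envRpm : 40);

// Three requests arriving at 0 ms, 0 ms and 100 ms: later ones are delayed into their own slots.
console.log([0, 0, 100].map((now) => waitFor(now)));
```

At 40 RPM the slot width is 1500 ms, so a burst is spread out rather than rejected; retrying on `429` would sit on top of this and is not modeled here.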

package/dist/cli.js

CHANGED

@@ -7,18 +7,18 @@ import path from "node:path";
 import process from "node:process";
 import { spawn } from "node:child_process";
 import { fileURLToPath } from "node:url";
-import { getNvicodePaths, loadConfig, saveConfig, } from "./config.js";
+import { getActiveApiKey, getActiveModel, getNvicodePaths, loadConfig, saveConfig, } from "./config.js";
 import { createProxyServer } from "./proxy.js";
-import {
+import { getRecommendedModels } from "./models.js";
 import { filterRecordsSince, formatDuration, formatInteger, formatTimestamp, formatUsd, readUsageRecords, summarizeUsage, } from "./usage.js";
 const __filename = fileURLToPath(import.meta.url);
 const usage = () => {
     console.log(`nvicode
 
 Commands:
-nvicode select model
-nvicode models Show recommended
-nvicode auth Save or update
+nvicode select model Guided provider, key, and model selection
+nvicode models Show recommended models for the active provider
+nvicode auth Save or update the API key for the active provider
 nvicode config Show current nvicode config
 nvicode usage Show token usage and cost comparison
 nvicode activity Show recent request activity

@@ -40,6 +40,7 @@ const getPathExts = () => {
         .map((ext) => ext.toLowerCase());
 };
 const unique = (values) => [...new Set(values)];
+const getProviderLabel = (provider) => provider === "openrouter" ? "OpenRouter" : "NVIDIA";
 const question = async (prompt) => {
     const rl = createInterface({
         input: process.stdin,

@@ -52,28 +53,85 @@ const question = async (prompt) => {
         rl.close();
     }
 };
+const promptProviderSelection = async (initialProvider) => {
+    console.log("Choose a provider:");
+    console.log("1. NVIDIA");
+    console.log(" Uses the local nvicode proxy and usage dashboard.");
+    console.log("2. OpenRouter");
+    console.log(" Uses Claude Code direct Anthropic-compatible connection.");
+    const defaultChoice = initialProvider === "openrouter" ? "2" : "1";
+    const answer = (await question(`Provider selection [${defaultChoice}]: `)).toLowerCase();
+    const normalized = answer || defaultChoice;
+    if (normalized === "1" || normalized === "nvidia") {
+        return "nvidia";
+    }
+    if (normalized === "2" ||
+        normalized === "openrouter" ||
+        normalized === "open-router") {
+        return "openrouter";
+    }
+    throw new Error("Provider selection is required.");
+};
+const promptApiKeyUpdate = async (config, provider) => {
+    const providerLabel = getProviderLabel(provider);
+    const currentApiKey = provider === "openrouter" ? config.openrouterApiKey : config.nvidiaApiKey;
+    if (currentApiKey) {
+        const answer = (await question(`${providerLabel} API key already saved. Update it? [y/N]: `)).toLowerCase();
+        if (answer !== "y" && answer !== "yes") {
+            return provider === "openrouter"
+                ? { openrouterApiKey: currentApiKey, nvidiaApiKey: config.nvidiaApiKey }
+                : { nvidiaApiKey: currentApiKey, openrouterApiKey: config.openrouterApiKey };
+        }
+        const nextKey = await question(`${providerLabel} API key (press Enter or type "skip" to keep current): `);
+        if (!nextKey || nextKey.toLowerCase() === "skip") {
+            return provider === "openrouter"
+                ? { openrouterApiKey: currentApiKey, nvidiaApiKey: config.nvidiaApiKey }
+                : { nvidiaApiKey: currentApiKey, openrouterApiKey: config.openrouterApiKey };
+        }
+        return provider === "openrouter"
+            ? { openrouterApiKey: nextKey, nvidiaApiKey: config.nvidiaApiKey }
+            : { nvidiaApiKey: nextKey, openrouterApiKey: config.openrouterApiKey };
+    }
+    const nextKey = await question(`${providerLabel} API key (press Enter or type "skip" to skip): `);
+    if (!nextKey || nextKey.toLowerCase() === "skip") {
+        return {
+            nvidiaApiKey: config.nvidiaApiKey,
+            openrouterApiKey: config.openrouterApiKey,
+        };
+    }
+    return provider === "openrouter"
+        ? { openrouterApiKey: nextKey, nvidiaApiKey: config.nvidiaApiKey }
+        : { nvidiaApiKey: nextKey, openrouterApiKey: config.openrouterApiKey };
+};
 const ensureConfigured = async () => {
     let config = await loadConfig();
     let changed = false;
-
+    const providerLabel = getProviderLabel(config.provider);
+    const activeApiKey = getActiveApiKey(config);
+    const activeModel = getActiveModel(config);
+    if (!activeApiKey) {
         if (!process.stdin.isTTY) {
-            throw new Error(
+            throw new Error(`Missing ${providerLabel} API key. Run \`nvicode auth\` first.`);
         }
-        const apiKey = await question(
+        const apiKey = await question(`${providerLabel} API key: `);
         if (!apiKey) {
-            throw new Error(
+            throw new Error(`${providerLabel} API key is required.`);
         }
         config = {
             ...config,
-
+            ...(config.provider === "openrouter"
+                ? { openrouterApiKey: apiKey }
+                : { nvidiaApiKey: apiKey }),
         };
         changed = true;
     }
-    if (!
-        const [first] = await getRecommendedModels(config.
+    if (!activeModel) {
+        const [first] = await getRecommendedModels(config.provider, getActiveApiKey(config));
         config = {
             ...config,
-
+            ...(config.provider === "openrouter"
+                ? { openrouterModel: first?.id || "anthropic/claude-sonnet-4.6" }
+                : { nvidiaModel: first?.id || "moonshotai/kimi-k2.5" }),
         };
         changed = true;
     }

|
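On every path, `promptApiKeyUpdate` returns both key fields and changes at most the one that belongs to the chosen provider. The sketch below restates that invariant as a pure function so it can be checked without a terminal prompt. `keyPatchFor` is a hypothetical helper, not part of the package, and the key values are made up.

```javascript
// Hypothetical helper: builds the same { nvidiaApiKey, openrouterApiKey } patch the guided flow returns.
const keyPatchFor = (config, provider, nextKey) => {
    const patch = {
        nvidiaApiKey: config.nvidiaApiKey,
        openrouterApiKey: config.openrouterApiKey,
    };
    // Empty input or the literal "skip" keeps whatever was already saved.
    if (!nextKey || nextKey.toLowerCase() === "skip") {
        return patch;
    }
    // Only the field that matches the selected provider is overwritten.
    const field = provider === "openrouter" ? "openrouterApiKey" : "nvidiaApiKey";
    return { ...patch, [field]: nextKey };
};

const saved = { nvidiaApiKey: "nv-old", openrouterApiKey: "" };
console.log(keyPatchFor(saved, "openrouter", "or-new"));
console.log(keyPatchFor(saved, "nvidia", "skip"));
```

Because the patch always carries both fields, spreading it over the loaded config (as `runSelectModel` does with `...keyPatch`) cannot drop the other provider's key.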

@@ -84,22 +142,28 @@ const ensureConfigured = async () => {
 };
 const runAuth = async () => {
     const config = await loadConfig();
-    const
-
-
+    const providerLabel = getProviderLabel(config.provider);
+    const currentApiKey = getActiveApiKey(config);
+    const apiKey = await question(currentApiKey
+        ? `${providerLabel} API key (leave blank to keep current): `
+        : `${providerLabel} API key: `);
+    if (!apiKey && currentApiKey) {
+        console.log(`Kept existing ${providerLabel} API key.`);
         return;
     }
     if (!apiKey) {
-        throw new Error(
+        throw new Error(`${providerLabel} API key is required.`);
     }
     await saveConfig({
         ...config,
-
+        ...(config.provider === "openrouter"
+            ? { openrouterApiKey: apiKey }
+            : { nvidiaApiKey: apiKey }),
     });
-    console.log(
+    console.log(`Saved ${providerLabel} API key.`);
 };
-const printModels = async (apiKey) => {
-    const models =
+const printModels = async (provider, apiKey) => {
+    const models = await getRecommendedModels(provider, apiKey || "");
     models.forEach((model, index) => {
         console.log(`${index + 1}. ${model.label}`);
         console.log(` ${model.id}`);

@@ -107,11 +171,20 @@ const printModels = async (apiKey) => {
     });
 };
 const runSelectModel = async () => {
-    const config = await
-    const
-
-    await
-
+    const config = await loadConfig();
+    const provider = await promptProviderSelection(config.provider);
+    const providerLabel = getProviderLabel(provider);
+    const keyPatch = await promptApiKeyUpdate(config, provider);
+    const nextConfig = await saveConfig({
+        ...config,
+        ...keyPatch,
+        provider,
+    });
+    const models = await getRecommendedModels(provider, getActiveApiKey(nextConfig));
+    console.log(`Top popular ${providerLabel} models:`);
+    await printModels(provider, getActiveApiKey(nextConfig));
+    console.log("Or paste a full model id.");
+    console.log("Example: qwen/qwen3.6-plus-preview:free");
     const answer = await question("Model selection: ");
     const index = Number(answer);
     const chosenModel = Number.isInteger(index) && index >= 1 && index <= models.length

@@ -121,8 +194,10 @@ const runSelectModel = async () => {
         throw new Error("Model selection is required.");
     }
     await saveConfig({
-        ...
-
+        ...nextConfig,
+        ...(provider === "openrouter"
+            ? { openrouterModel: chosenModel }
+            : { nvidiaModel: chosenModel }),
     });
     console.log(`Saved model: ${chosenModel}`);
 };

@@ -132,36 +207,46 @@ const runConfig = async () => {
     console.log(`Config file: ${paths.configFile}`);
     console.log(`State dir: ${paths.stateDir}`);
     console.log(`Usage log: ${paths.usageLogFile}`);
-    console.log(`
+    console.log(`Provider: ${getProviderLabel(config.provider)}`);
+    console.log(`Model: ${getActiveModel(config)}`);
     console.log(`Proxy port: ${config.proxyPort}`);
     console.log(`Max RPM: ${config.maxRequestsPerMinute}`);
     console.log(`Thinking: ${config.thinking ? "on" : "off"}`);
-    console.log(`
+    console.log(`NVIDIA key: ${config.nvidiaApiKey ? "saved" : "missing"}`);
+    console.log(`OpenRouter key: ${config.openrouterApiKey ? "saved" : "missing"}`);
 };
 const printUsageBlock = (label, records) => {
     const summary = summarizeUsage(records);
     console.log(label);
     console.log(`Requests: ${formatInteger(summary.requests)} (${formatInteger(summary.successes)} ok, ${formatInteger(summary.errors)} error)`);
-    console.log(`
-    console.log(`
+    console.log(`Turn input tokens: ${formatInteger(summary.turnInputTokens)}`);
+    console.log(`Billed input tokens: ${formatInteger(summary.inputTokens)}`);
+    console.log(`Turn output tokens: ${formatInteger(summary.turnOutputTokens)}`);
+    console.log(`Billed output tokens: ${formatInteger(summary.outputTokens)}`);
     console.log(`NVIDIA cost: ${formatUsd(summary.providerCostUsd)}`);
-    console.log(`Opus 4.6 equivalent: ${formatUsd(summary.compareCostUsd)}`);
     console.log(`Estimated savings: ${formatUsd(summary.savingsUsd)}`);
 };
-const
+const getUsageView = async () => {
     const records = await readUsageRecords();
     if (records.length === 0) {
-
-
+        return [
+            "nvicode usage",
+            "",
+            "No usage recorded yet.",
+            "Keep this open and new activity will appear automatically.",
+        ].join("\n");
     }
     const now = Date.now();
     const latestPricing = records[0]?.pricing;
+    const lines = ["nvicode usage", ""];
     if (latestPricing) {
-
-
-
-
-
+        lines.push("Pricing basis:");
+        lines.push(`- NVIDIA configured cost: ${formatUsd(latestPricing.providerInputUsdPerMTok)} / MTok input, ${formatUsd(latestPricing.providerOutputUsdPerMTok)} / MTok output`);
+        lines.push(`- ${latestPricing.compareModel}: ${formatUsd(latestPricing.compareInputUsdPerMTok)} / MTok input, ${formatUsd(latestPricing.compareOutputUsdPerMTok)} / MTok output`);
+        lines.push(`- Comparison source: ${latestPricing.comparePricingSource} (${latestPricing.comparePricingUpdatedAt})`);
+        lines.push("- In/Out columns show current-turn tokens.");
+        lines.push("- Billed In/Billed Out include the full Claude Code request context.");
+        lines.push("");
     }
     const windows = [
         { label: "Last 1 hour", durationMs: 1 * 60 * 60 * 1000 },

@@ -176,34 +261,87 @@ const runUsage = async () => {
         return {
             window: window.label,
             requests: `${formatInteger(summary.requests)} (${formatInteger(summary.successes)} ok/${formatInteger(summary.errors)} err)`,
-            inputTokens: formatInteger(summary.
-
+            inputTokens: formatInteger(summary.turnInputTokens),
+            billedInputTokens: formatInteger(summary.inputTokens),
+            outputTokens: formatInteger(summary.turnOutputTokens),
+            billedOutputTokens: formatInteger(summary.outputTokens),
             nvidiaCost: formatUsd(summary.providerCostUsd),
             savings: formatUsd(summary.savingsUsd),
         };
     });
-
+    lines.push(`Snapshot: ${formatTimestamp(new Date(now).toISOString())}`);
+    lines.push("");
+    lines.push("Window Requests In Tok Billed In Out Tok Billed Out NVIDIA Saved");
     rows.forEach((row) => {
-
+        lines.push(`${row.window.padEnd(13)} ${row.requests.padEnd(16)} ${row.inputTokens.padStart(8)} ${row.billedInputTokens.padStart(11)} ${row.outputTokens.padStart(8)} ${row.billedOutputTokens.padStart(11)} ${row.nvidiaCost.padStart(10)} ${row.savings.padStart(10)}`);
     });
+    return lines.join("\n");
+};
+const sleep = async (ms) => new Promise((resolve) => setTimeout(resolve, ms));
+const clearTerminal = () => {
+    process.stdout.write("\x1b[2J\x1b[H");
+};
+const runUsage = async () => {
+    const config = await loadConfig();
+    if (config.provider === "openrouter") {
+        console.log("OpenRouter uses a direct Claude Code connection.");
+        console.log("Local nvicode usage stats are only available for NVIDIA proxy sessions.");
+        console.log("Use the OpenRouter activity dashboard for OpenRouter usage.");
+        return;
+    }
+    const interactive = process.stdout.isTTY && process.stdin.isTTY;
+    if (!interactive) {
+        console.log(await getUsageView());
+        return;
+    }
+    let stopped = false;
+    const stop = () => {
+        stopped = true;
+    };
+    process.on("SIGINT", stop);
+    process.on("SIGTERM", stop);
+    try {
+        while (!stopped) {
+            clearTerminal();
+            process.stdout.write(await getUsageView());
+            process.stdout.write("\n\nRefreshing every 2s. Press Ctrl+C to exit.\n");
+            await sleep(2_000);
+        }
+    }
+    finally {
+        process.off("SIGINT", stop);
+        process.off("SIGTERM", stop);
+    }
 };
 const runActivity = async () => {
+    const config = await loadConfig();
+    if (config.provider === "openrouter") {
+        console.log("OpenRouter uses a direct Claude Code connection.");
+        console.log("Local nvicode activity logs are only available for NVIDIA proxy sessions.");
+        return;
+    }
     const records = await readUsageRecords();
     if (records.length === 0) {
         console.log("No activity recorded yet.");
         return;
     }
-    console.log("Timestamp Status Model
+    console.log("Timestamp Status Model In Tok Bill In Out Tok Bill Out Latency NVIDIA Saved");
     for (const record of records.slice(0, 15)) {
-        const model = record.model.length >
+        const model = record.model.length > 28 ? `${record.model.slice(0, 25)}...` : record.model;
         const status = record.status === "success" ? "ok" : "error";
-        console.log(`${formatTimestamp(record.timestamp).padEnd(21)} ${status.padEnd(6)} ${model.padEnd(
+        console.log(`${formatTimestamp(record.timestamp).padEnd(21)} ${status.padEnd(6)} ${model.padEnd(29)} ${formatInteger(record.turnInputTokens ?? record.visibleInputTokens ?? record.inputTokens).padStart(7)} ${formatInteger(record.inputTokens).padStart(8)} ${formatInteger(record.turnOutputTokens ?? record.visibleOutputTokens ?? record.outputTokens).padStart(8)} ${formatInteger(record.outputTokens).padStart(8)} ${formatDuration(record.latencyMs).padStart(8)} ${formatUsd(record.providerCostUsd).padStart(10)} ${formatUsd(record.savingsUsd).padStart(10)}`);
         if (record.error) {
             console.log(` error: ${record.error}`);
         }
     }
 };
 const runDashboard = async () => {
+    const config = await loadConfig();
+    if (config.provider === "openrouter") {
+        console.log("OpenRouter uses a direct Claude Code connection.");
+        console.log("Local nvicode dashboards are only available for NVIDIA proxy sessions.");
+        return;
+    }
     const records = await readUsageRecords();
     if (records.length === 0) {
         console.log("No usage recorded yet.");

|
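The activity table in `runActivity` reads current-turn counts through a nullish-coalescing chain, presumably so that records lacking the newer `turn*` fields still render with a sensible value. Below is a small sketch of that fallback order, using a hypothetical `turnInputTokens` helper and made-up records.

```javascript
// Hypothetical helper mirroring the fallback order used for the "In Tok" column.
const turnInputTokens = (record) =>
    record.turnInputTokens ?? record.visibleInputTokens ?? record.inputTokens;

// Made-up records: a new-style one, one with only the billed count, and one with a real zero.
const records = [
    { turnInputTokens: 120, inputTokens: 9_800 },
    { inputTokens: 4_200 },
    { turnInputTokens: 0, inputTokens: 50 },
];

for (const record of records) {
    // `??` only falls through on null/undefined, so a legitimate 0 is kept.
    console.log(String(turnInputTokens(record)).padStart(7), String(record.inputTokens).padStart(8));
}
```

Using `??` rather than `||` matters for the third record: with `||`, a turn count of 0 would be replaced by the billed count.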

@@ -371,19 +509,39 @@ const spawnClaudeProcess = (claudeBinary, args, env) => {
 };
 const runLaunchClaude = async (args) => {
     const config = await ensureConfigured();
-    await ensureProxyRunning(config);
     const claudeBinary = await resolveClaudeBinary();
-    const
-
-
-
-
-
-
-
-
-
-
+    const activeModel = getActiveModel(config);
+    const activeApiKey = getActiveApiKey(config);
+    const env = config.provider === "openrouter"
+        ? {
+            ...process.env,
+            ANTHROPIC_BASE_URL: "https://openrouter.ai/api",
+            ANTHROPIC_AUTH_TOKEN: activeApiKey,
+            ANTHROPIC_API_KEY: "",
+            ANTHROPIC_MODEL: activeModel,
+            ANTHROPIC_DEFAULT_SONNET_MODEL: activeModel,
+            ANTHROPIC_DEFAULT_OPUS_MODEL: activeModel,
+            ANTHROPIC_DEFAULT_HAIKU_MODEL: activeModel,
+            CLAUDE_CODE_SUBAGENT_MODEL: activeModel,
+            CLAUDE_CODE_DISABLE_EXPERIMENTAL_BETAS: "1",
+        }
+        : (() => {
+            return {
+                ...process.env,
+                ANTHROPIC_BASE_URL: `http://127.0.0.1:${config.proxyPort}`,
+                ANTHROPIC_AUTH_TOKEN: config.proxyToken,
+                ANTHROPIC_API_KEY: "",
+                ANTHROPIC_MODEL: activeModel,
+                CLAUDE_CODE_DISABLE_EXPERIMENTAL_BETAS: "1",
+                ANTHROPIC_CUSTOM_MODEL_OPTION: activeModel,
+                ANTHROPIC_CUSTOM_MODEL_OPTION_NAME: "nvicode custom model",
+                ANTHROPIC_CUSTOM_MODEL_OPTION_DESCRIPTION: "Claude Code via local NVIDIA gateway",
+            };
+        })();
+    if (config.provider === "nvidia") {
+        await ensureProxyRunning(config);
+    }
+    const child = spawnClaudeProcess(claudeBinary, args, env);
     await new Promise((resolve, reject) => {
         child.on("exit", (code, signal) => {
             if (signal) {

@@ -398,12 +556,15 @@ const runLaunchClaude = async (args) => {
 };
 const runServe = async () => {
     const config = await ensureConfigured();
+    if (config.provider !== "nvidia") {
+        throw new Error("`nvicode serve` is only available for the NVIDIA provider.");
+    }
     const server = createProxyServer(config);
     await new Promise((resolve, reject) => {
         server.once("error", reject);
         server.listen(config.proxyPort, "127.0.0.1", () => resolve());
     });
-    console.error(`nvicode proxy listening on http://127.0.0.1:${config.proxyPort} using ${config.
+    console.error(`nvicode proxy listening on http://127.0.0.1:${config.proxyPort} using ${config.nvidiaModel}`);
     const shutdown = () => {
         server.close(() => process.exit(0));
     };

@@ -423,7 +584,7 @@ const main = async () => {
     }
     if (command === "models") {
         const config = await loadConfig();
-        await printModels(config.
+        await printModels(config.provider, getActiveApiKey(config) || undefined);
         return;
     }
     if (command === "auth") {

|

package/dist/config.js

CHANGED

@@ -3,7 +3,9 @@ import { promises as fs } from "node:fs";
 import os from "node:os";
 import path from "node:path";
 const DEFAULT_PROXY_PORT = 8788;
-const
+const DEFAULT_PROVIDER = "nvidia";
+const DEFAULT_NVIDIA_MODEL = "moonshotai/kimi-k2.5";
+const DEFAULT_OPENROUTER_MODEL = "anthropic/claude-sonnet-4.6";
 const DEFAULT_MAX_REQUESTS_PER_MINUTE = 40;
 const getEnvNumber = (name) => {
     const raw = process.env[name];

@@ -54,9 +56,14 @@ export const getNvicodePaths = () => {
 };
 const withDefaults = (config) => {
     const envMaxRequestsPerMinute = getEnvNumber("NVICODE_MAX_RPM");
+    const legacyApiKey = config.apiKey?.trim() || "";
+    const legacyModel = config.model?.trim() || DEFAULT_NVIDIA_MODEL;
     return {
-
-
+        provider: config.provider === "openrouter" ? "openrouter" : DEFAULT_PROVIDER,
+        nvidiaApiKey: config.nvidiaApiKey?.trim() || legacyApiKey,
+        nvidiaModel: config.nvidiaModel?.trim() || legacyModel,
+        openrouterApiKey: config.openrouterApiKey?.trim() || "",
+        openrouterModel: config.openrouterModel?.trim() || DEFAULT_OPENROUTER_MODEL,
         proxyPort: Number.isInteger(config.proxyPort) && config.proxyPort > 0
             ? config.proxyPort
             : DEFAULT_PROXY_PORT,

@@ -97,3 +104,5 @@ export const updateConfig = async (patch) => {
         ...patch,
     });
 };
+export const getActiveApiKey = (config) => config.provider === "openrouter" ? config.openrouterApiKey : config.nvidiaApiKey;
+export const getActiveModel = (config) => config.provider === "openrouter" ? config.openrouterModel : config.nvidiaModel;

|
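`withDefaults` appears to double as a migration path: the `legacyApiKey` and `legacyModel` names suggest that an older config with top-level `apiKey` and `model` is mapped onto the new NVIDIA-specific fields. The sketch below reproduces only the provider-related fields from the hunk above under that assumption. `normalizeProviderFields` is a hypothetical name and the input config is made up.

```javascript
// Reduced copy of the provider-related part of withDefaults, for illustration only.
const DEFAULT_NVIDIA_MODEL = "moonshotai/kimi-k2.5";
const DEFAULT_OPENROUTER_MODEL = "anthropic/claude-sonnet-4.6";

const normalizeProviderFields = (config) => {
    // Older configs stored a single key and model at the top level.
    const legacyApiKey = config.apiKey?.trim() || "";
    const legacyModel = config.model?.trim() || DEFAULT_NVIDIA_MODEL;
    return {
        // Anything other than "openrouter" falls back to the NVIDIA default.
        provider: config.provider === "openrouter" ? "openrouter" : "nvidia",
        nvidiaApiKey: config.nvidiaApiKey?.trim() || legacyApiKey,
        nvidiaModel: config.nvidiaModel?.trim() || legacyModel,
        openrouterApiKey: config.openrouterApiKey?.trim() || "",
        openrouterModel: config.openrouterModel?.trim() || DEFAULT_OPENROUTER_MODEL,
    };
};

// A made-up older config: the old key and model end up under the NVIDIA fields.
console.log(normalizeProviderFields({ apiKey: " nvapi-example ", model: "some/model" }));
```

One consequence worth noting: because both model fields always receive a default, `getActiveModel` never returns an empty string for a config that went through this normalization.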

package/dist/models.js

CHANGED

@@ -1,4 +1,4 @@
-export const
+export const NVIDIA_CURATED_MODELS = [
     {
         id: "moonshotai/kimi-k2.5",
         label: "Kimi K2.5",

@@ -30,6 +30,28 @@ export const CURATED_MODELS = [
         description: "Smaller coding-focused Qwen model.",
     },
 ];
+export const OPENROUTER_CURATED_MODELS = [
+    {
+        id: "qwen/qwen3.6-plus-preview:free",
+        label: "Qwen 3.6 Plus Preview (Free)",
+        description: "Free OpenRouter Qwen preview model.",
+    },
+    {
+        id: "anthropic/claude-sonnet-4.6",
+        label: "Claude Sonnet 4.6",
+        description: "Recommended OpenRouter model for Claude Code compatibility.",
+    },
+    {
+        id: "anthropic/claude-opus-4.6",
+        label: "Claude Opus 4.6",
+        description: "Higher-end Anthropic model through OpenRouter.",
+    },
+    {
+        id: "anthropic/claude-haiku-4.5",
+        label: "Claude Haiku 4.5",
+        description: "Faster lower-cost Anthropic model through OpenRouter.",
+    },
+];
 const MODELS_URL = "https://integrate.api.nvidia.com/v1/models";
 export const fetchAvailableModelIds = async (apiKey) => {
     const response = await fetch(MODELS_URL, {

@@ -49,13 +71,16 @@ export const fetchAvailableModelIds = async (apiKey) => {
     }
     return ids;
 };
-export const getRecommendedModels = async (apiKey) => {
+export const getRecommendedModels = async (provider, apiKey) => {
+    if (provider === "openrouter") {
+        return OPENROUTER_CURATED_MODELS;
+    }
     try {
         const available = await fetchAvailableModelIds(apiKey);
-        const curated =
-        return curated.length > 0 ? curated :
+        const curated = NVIDIA_CURATED_MODELS.filter((model) => available.has(model.id));
+        return curated.length > 0 ? curated : NVIDIA_CURATED_MODELS;
     }
     catch {
-        return
+        return NVIDIA_CURATED_MODELS;
     }
 };

|
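The selection logic in the new `getRecommendedModels(provider, apiKey)` can be restated as a synchronous sketch. This is not the shipped function: `selectModels` is a hypothetical name, the two lists are shortened stand-ins for `NVIDIA_CURATED_MODELS` and `OPENROUTER_CURATED_MODELS`, and a `null` argument stands in for the `catch` branch that handles a failed call to `fetchAvailableModelIds`.

```javascript
// Shortened placeholder lists; "example/not-listed" is a made-up id.
const nvidiaModels = [
    { id: "moonshotai/kimi-k2.5", label: "Kimi K2.5" },
    { id: "example/not-listed", label: "Hypothetical entry" },
];
const openrouterModels = [
    { id: "anthropic/claude-sonnet-4.6", label: "Claude Sonnet 4.6" },
];

// `available` is a Set of model ids, or null to represent a failed lookup.
const selectModels = (provider, available) => {
    if (provider === "openrouter") {
        return openrouterModels; // static list, no availability check
    }
    if (available === null) {
        return nvidiaModels; // mirrors the catch branch in the diff
    }
    const curated = nvidiaModels.filter((model) => available.has(model.id));
    // An empty intersection falls back to the full curated list.
    return curated.length > 0 ? curated : nvidiaModels;
};

const filtered = selectModels("nvidia", new Set(["moonshotai/kimi-k2.5"]));
const noOverlap = selectModels("nvidia", new Set(["some/other-model"]));
const failedLookup = selectModels("nvidia", null);
const openrouterList = selectModels("openrouter", null);

console.log(filtered.length, noOverlap.length, failedLookup.length, openrouterList.length);
```

In this version the OpenRouter branch returns before any network call, so the OpenRouter list is never checked against what the account can actually access.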

package/dist/proxy.js

CHANGED

|

@@ -336,9 +336,58 @@ const estimateTokens = (payload) => {

|

|

|

336

336

|

const raw = JSON.stringify(payload);

|

|

337

337

|

return Math.max(1, Math.ceil(raw.length / 4));

|

|

338

338

|

};

|

|

339

|

+

const getCurrentTurnMessages = (messages) => {

|

|

340

|

+

const entries = messages ?? [];

|

|

341

|

+

for (let index = entries.length - 1; index >= 0; index -= 1) {

|

|

342

|

+

if (entries[index]?.role === "assistant") {

|

|

343

|

+

return entries.slice(index + 1);

|

|

344

|

+

}

|

|

345

|

+

}

|

|

346

|

+

return entries;

|

|

347

|

+

};

|

|

348

|

+

const extractPromptInput = (messages) => {

|

|

349

|

+

const parts = [];

|

|

350

|

+

for (const message of messages) {

|

|

351

|

+

if (message.role !== "user") {

|

|

352

|

+

continue;

|

|

353

|

+

}

|

|

354

|

+

if (typeof message.content === "string") {

|

|

355

|

+

if (message.content.trim().length > 0) {

|

|

356

|

+

parts.push(message.content);

|

|

357

|

+

}

|

|

358

|

+

continue;

|

|

359

|

+

}

|

|

360

|

+

for (const block of message.content) {

|

|

361

|

+

if (block.type === "text" && block.text.trim().length > 0) {

|

|

362

|

+

parts.push(block.text);

|

|

363

|

+

continue;

|

|

364

|

+

}

|

|

365

|

+

if (block.type === "image" && block.source?.data) {

|

|

366

|

+

parts.push({

|

|

367

|

+

type: "image_url",

|

|

368

|

+

image_url: {

|

|

369

|

+

url: `data:${block.source.media_type || "application/octet-stream"};base64,${block.source.data}`,

|

|

370

|

+

},

|

|

371

|

+

});

|

|

372

|

+

}

|

|

373

|

+

}

|

|

374

|

+

}

|

|

375

|

+

return parts;

|

|

376

|

+

};

|

|

377

|

+

const estimateTurnInputTokens = (payload) => {

|

|

378

|

+

const currentTurnMessages = getCurrentTurnMessages(payload.messages);

|

|

379

|

+

const promptInput = extractPromptInput(currentTurnMessages);

|

|

380

|

+

if (promptInput.length === 0) {

|

|

381

|

+

return 0;

|

|

382

|

+

}

|

|

383

|

+

return estimateTokens({

|

|

384

|

+

prompt: promptInput,

|

|

385

|

+

});

|

|

386

|

+

};

|

|

387

|

+

const estimateTurnOutputTokens = (content) => estimateTokens(content);

|

|

339

388

|

const resolveTargetModel = (config, payload) => payload.model && payload.model.includes("/") && !payload.model.startsWith("claude-")

|

|

340

389

|

? payload.model

|

|

341

|

-

: config.

|

|

390

|

+

: config.nvidiaModel;

|

|

342

391

|

const callNvidia = async (config, scheduleRequest, payload) => {

|

|

343

392

|

const targetModel = resolveTargetModel(config, payload);

|

|

344

393

|

const requestBody = {

|

|

@@ -374,7 +423,7 @@ const callNvidia = async (config, scheduleRequest, payload) => {

|

|

|

374

423

|

const response = await fetch(NVIDIA_URL, {

|

|

375

424

|

method: "POST",

|

|

376

425

|

headers: {

|

|

377

|

-

Authorization: `Bearer ${config.

|

|

426

|

+

Authorization: `Bearer ${config.nvidiaApiKey}`,

|

|

378

427

|

Accept: "application/json",

|

|

379

428

|

"Content-Type": "application/json",

|

|

380

429

|

},

|

|

@@ -412,7 +461,7 @@ export const createProxyServer = (config) => {

|

|

|

412

461

|

if (url.pathname === "/health") {

|

|

413

462

|

sendJson(response, 200, {

|

|

414

463

|

ok: true,

|

|

415

|

-

model: config.

|

|

464

|

+

model: config.nvidiaModel,

|

|

416

465

|

port: config.proxyPort,

|

|

417

466

|

thinking: config.thinking,

|

|

418

467

|

maxRequestsPerMinute: config.maxRequestsPerMinute,

|

|

@@ -445,12 +494,14 @@ export const createProxyServer = (config) => {

|

|

|

445

494

|

messages: payload.messages ?? [],

|

|

446

495

|

tools: payload.tools ?? [],

|

|

447

496

|

});

|

|

497

|

+

const estimatedTurnInputTokens = estimateTurnInputTokens(payload);

|

|

448

498

|

const startedAt = Date.now();

|

|

449

499

|

const pricing = getPricingSnapshot();

|

|

450

500

|

try {

|

|

451

501

|

const { upstream } = await callNvidia(config, scheduleNvidiaRequest, payload);

|

|

452

502

|

const choice = upstream.choices?.[0];

|

|

453

503

|

const mappedContent = mapResponseContent(choice);

|

|

504

|

+

const estimatedTurnOutputTokens = estimateTurnOutputTokens(mappedContent);

|

|

454

505

|

const anthropicResponse = {

|

|

455

506

|

id: upstream.id || `msg_${randomUUID()}`,

|

|

456

507

|

type: "message",

|

|

@@ -470,6 +521,8 @@ export const createProxyServer = (config) => {

|

|

|

470

521

|

model: targetModel,

|

|

471

522

|

inputTokens: anthropicResponse.usage.input_tokens,

|

|

472

523

|

outputTokens: anthropicResponse.usage.output_tokens,

|

|

524

|

+

turnInputTokens: estimatedTurnInputTokens,

|

|

525

|

+

turnOutputTokens: estimatedTurnOutputTokens,

|

|

473

526

|

latencyMs: Date.now() - startedAt,

|

|

474

527

|

stopReason: anthropicResponse.stop_reason,

|

|

475

528

|

pricing,

|

|

@@ -570,6 +623,8 @@ export const createProxyServer = (config) => {

|

|

|

570

623

|

model: targetModel,

|

|

571

624

|

inputTokens: estimatedInputTokens,

|

|

572

625

|

outputTokens: 0,

|

|

626

|

+

turnInputTokens: estimatedTurnInputTokens,

|

|

627

|

+

turnOutputTokens: 0,

|

|

573

628

|

latencyMs: Date.now() - startedAt,

|

|

574

629

|

error: message,

|

|

575

630

|

pricing,

|
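The per-turn input estimate added to `proxy.js` counts only the messages that follow the last assistant message. The sketch below copies `estimateTokens` and `getCurrentTurnMessages` from the diff and pairs them with a simplified extractor. `extractUserText` is a hypothetical stand-in for `extractPromptInput` that handles string content and text blocks only and leaves out the image branch.

```javascript
// Copied from the diff: roughly one token per four characters of JSON.
const estimateTokens = (payload) => {
    const raw = JSON.stringify(payload);
    return Math.max(1, Math.ceil(raw.length / 4));
};

// Copied from the diff: everything after the last assistant message.
const getCurrentTurnMessages = (messages) => {
    const entries = messages ?? [];
    for (let index = entries.length - 1; index >= 0; index -= 1) {
        if (entries[index]?.role === "assistant") {
            return entries.slice(index + 1);
        }
    }
    return entries;
};

// Simplified, hypothetical extractor: user text only, no image blocks.
const extractUserText = (messages) => {
    const parts = [];
    for (const message of messages) {
        if (message.role !== "user") continue;
        const blocks = typeof message.content === "string"
            ? [{ type: "text", text: message.content }]
            : message.content;
        for (const block of blocks) {
            if (block.type === "text" && block.text.trim().length > 0) {
                parts.push(block.text);
            }
        }
    }
    return parts;
};

const conversation = [
    { role: "user", content: "first question" },
    { role: "assistant", content: "first answer" },
    { role: "user", content: "follow-up" },
];

const currentTurn = getCurrentTurnMessages(conversation);
const turnTokens = estimateTokens({ prompt: extractUserText(currentTurn) });
const fullTokens = estimateTokens({ messages: conversation });

console.log(currentTurn.length, turnTokens, fullTokens);
```

Here the turn estimate covers only the final `"follow-up"` message, while the whole-conversation estimate grows with every earlier exchange. That difference is presumably why the usage record now carries both figures.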

package/dist/usage.js

CHANGED

|

@@ -26,7 +26,7 @@ export const getPricingSnapshot = () => ({

|

|

|

26

26

|

});

|

|

27

27

|

export const estimateCostUsd = (inputTokens, outputTokens, inputUsdPerMTok, outputUsdPerMTok) => (inputTokens / 1_000_000) * inputUsdPerMTok +

|

|

28

28

|

(outputTokens / 1_000_000) * outputUsdPerMTok;

|

|

29

|

-

export const buildUsageRecord = ({ id, timestamp = new Date().toISOString(), status, model, inputTokens, outputTokens, latencyMs, stopReason, error, pricing = getPricingSnapshot(), }) => {

|

|

29

|

+

export const buildUsageRecord = ({ id, timestamp = new Date().toISOString(), status, model, inputTokens, outputTokens, turnInputTokens, turnOutputTokens, visibleInputTokens, visibleOutputTokens, latencyMs, stopReason, error, pricing = getPricingSnapshot(), }) => {

|

|

30

30

|

const providerCostUsd = estimateCostUsd(inputTokens, outputTokens, pricing.providerInputUsdPerMTok, pricing.providerOutputUsdPerMTok);

|

|

31

31

|

const compareCostUsd = estimateCostUsd(inputTokens, outputTokens, pricing.compareInputUsdPerMTok, pricing.compareOutputUsdPerMTok);

|

|

32

32

|

return {

|

|

@@ -36,6 +36,18 @@ export const buildUsageRecord = ({ id, timestamp = new Date().toISOString(), sta

|

|

|

36

36

|

model,

|

|

37

37

|

inputTokens,

|

|

38

38

|

outputTokens,

|

|

39

|

+

...(turnInputTokens !== undefined

|

|

40

|

+

? { turnInputTokens }

|

|

41

|

+

: {}),

|

|

42

|

+

...(turnOutputTokens !== undefined

|

|

43

|

+

? { turnOutputTokens }

|

|

44

|

+

: {}),

|

|

45

|

+

...(visibleInputTokens !== undefined

|

|

46

|

+

? { visibleInputTokens }

|

|

47

|

+

: {}),

|

|

48

|

+

...(visibleOutputTokens !== undefined

|

|

49

|

+

? { visibleOutputTokens }

|

|

50

|

+

: {}),

|

|

39

51

|

latencyMs,

|

|

40

52

|

providerCostUsd,

|

|

41

53

|

compareCostUsd,

|

|

@@ -75,6 +87,16 @@ export const summarizeUsage = (records) => records.reduce((summary, record) => {

|

|

|

75

87

|

summary.errors += record.status === "error" ? 1 : 0;

|

|

76

88

|

summary.inputTokens += record.inputTokens;

|

|

77

89

|

summary.outputTokens += record.outputTokens;

|

|

90

|

+

summary.turnInputTokens +=

|

|

91

|

+

record.turnInputTokens ??

|

|

92

|

+

record.visibleInputTokens ??

|

|

93

|

+

record.inputTokens;

|

|

94

|

+

summary.turnOutputTokens +=

|

|

95

|

+

record.turnOutputTokens ??

|

|

96

|

+

record.visibleOutputTokens ??

|

|

97

|

+

record.outputTokens;

|

|

98

|

+

summary.visibleInputTokens += record.visibleInputTokens ?? record.inputTokens;

|

|

99

|

+

summary.visibleOutputTokens += record.visibleOutputTokens ?? record.outputTokens;

|

|

78

100

|

summary.providerCostUsd += record.providerCostUsd;

|

|

79

101

|

summary.compareCostUsd += record.compareCostUsd;

|

|

80

102

|

summary.savingsUsd += record.savingsUsd;

|

|

@@ -85,6 +107,10 @@ export const summarizeUsage = (records) => records.reduce((summary, record) => {

|

|

|

85

107

|

errors: 0,

|

|

86

108

|

inputTokens: 0,

|

|

87

109

|

outputTokens: 0,

|

|

110

|

+

turnInputTokens: 0,

|

|

111

|

+

turnOutputTokens: 0,

|

|

112

|

+

visibleInputTokens: 0,

|

|

113

|

+

visibleOutputTokens: 0,

|

|

88

114

|

providerCostUsd: 0,

|

|

89

115

|

compareCostUsd: 0,

|

|

90

116

|

savingsUsd: 0,

|
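The `summarizeUsage` changes use a `??` chain so that records written before the turn fields existed still contribute to the new totals. The sketch below isolates that fallback. `summarizeTurnTokens` is a hypothetical reduced version that tracks only the two turn totals, and the token counts are made-up sample values.

```javascript
// Reduced version of the reducer: turn fields first, then visible fields,
// then the raw request totals.
const summarizeTurnTokens = (records) => records.reduce((summary, record) => {
    summary.turnInputTokens +=
        record.turnInputTokens ??
            record.visibleInputTokens ??
            record.inputTokens;
    summary.turnOutputTokens +=
        record.turnOutputTokens ??
            record.visibleOutputTokens ??
            record.outputTokens;
    return summary;
}, { turnInputTokens: 0, turnOutputTokens: 0 });

const sampleRecords = [
    // Older record without turn or visible fields: falls back to raw totals.
    { inputTokens: 1200, outputTokens: 80 },
    // Record with only visible fields: those are used.
    { inputTokens: 1500, outputTokens: 90, visibleInputTokens: 40, visibleOutputTokens: 30 },
    // Error-style record: turnOutputTokens is 0, which `??` keeps as 0.
    { inputTokens: 1800, outputTokens: 0, turnInputTokens: 12, turnOutputTokens: 0 },
];

const turnSummary = summarizeTurnTokens(sampleRecords);
console.log(turnSummary);
```

Using `??` rather than `||` matters for the error path, where `turnOutputTokens` is recorded as `0`. With `||`, that zero would be skipped and the chain would move on to the next field.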

package/package.json

CHANGED

|

@@ -1,7 +1,7 @@

|

|

|

1

1

|

{

|

|

2

2

|

"name": "nvicode",

|

|

3

|

-

"version": "0.1.

|

|

4

|

-

"description": "Run Claude Code through NVIDIA-hosted models using a

|

|

3

|

+

"version": "0.1.6",

|

|

4

|

+

"description": "Run Claude Code through NVIDIA-hosted models or OpenRouter using a simple CLI wrapper.",

|

|

5

5

|

"author": "Dinesh Potla",

|

|

6

6

|

"keywords": [

|

|

7

7

|

"claude-code",

|