nvicode 0.1.1 → 0.1.5

This diff represents the content of publicly available package versions that have been released to one of the supported registries. The information contained in this diff is provided for informational purposes only and reflects changes between package versions as they appear in their respective public registries.

- package/README.md +31 -1

- package/dist/cli.js +201 -24

- package/dist/config.js +54 -11

- package/dist/proxy.js +199 -115

- package/dist/usage.js +120 -0

- package/package.json +1 -1

package/README.md

CHANGED

@@ -2,6 +2,12 @@
 
 Run Claude Code through NVIDIA-hosted models using a local Anthropic-compatible gateway.
 
+Supported environments:
+- macOS
+- Ubuntu/Linux
+- WSL
+- Native Windows with Claude Code installed and working from PowerShell, CMD, or Git Bash
+
 ## Quickstart
 
 Install the published package:

@@ -12,6 +18,8 @@ npm install -g nvicode
 
 Save your NVIDIA API key:
 
+Get a free key from [NVIDIA Build API Keys](https://build.nvidia.com/settings/api-keys).
+
 ```sh
 nvicode auth
 ```

@@ -28,11 +36,28 @@ Launch Claude Code through NVIDIA:

|

|

|

28

36

|

nvicode launch claude

|

|

29

37

|

```

|

|

30

38

|

|

|

39

|

+

## Screenshots

|

|

40

|

+

|

|

41

|

+

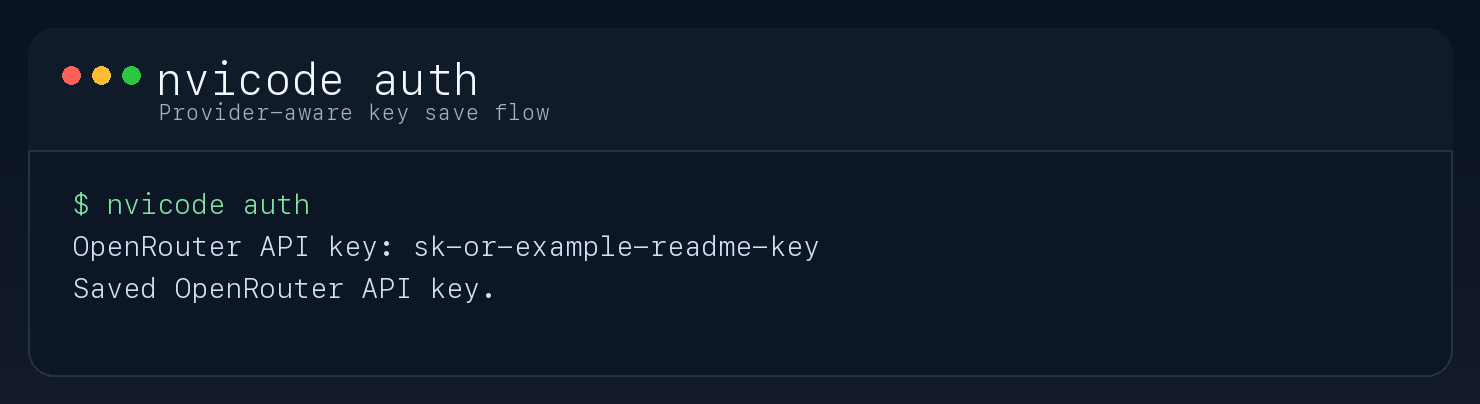

### Save your API key

|

|

42

|

+

|

|

43

|

+

|

|

44

|

+

|

|

45

|

+

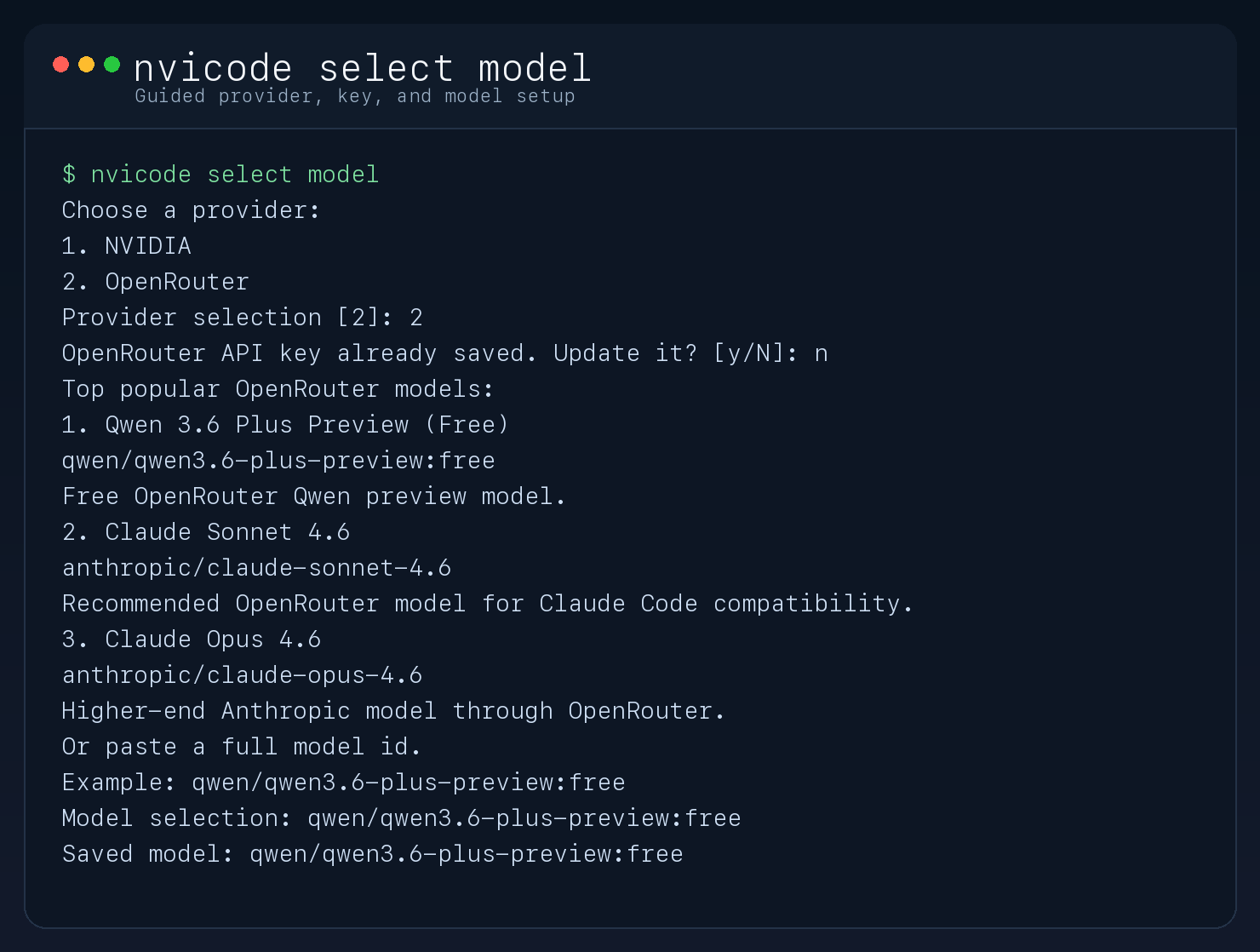

### Choose a model

|

|

46

|

+

|

|

47

|

+

|

|

48

|

+

|

|

49

|

+

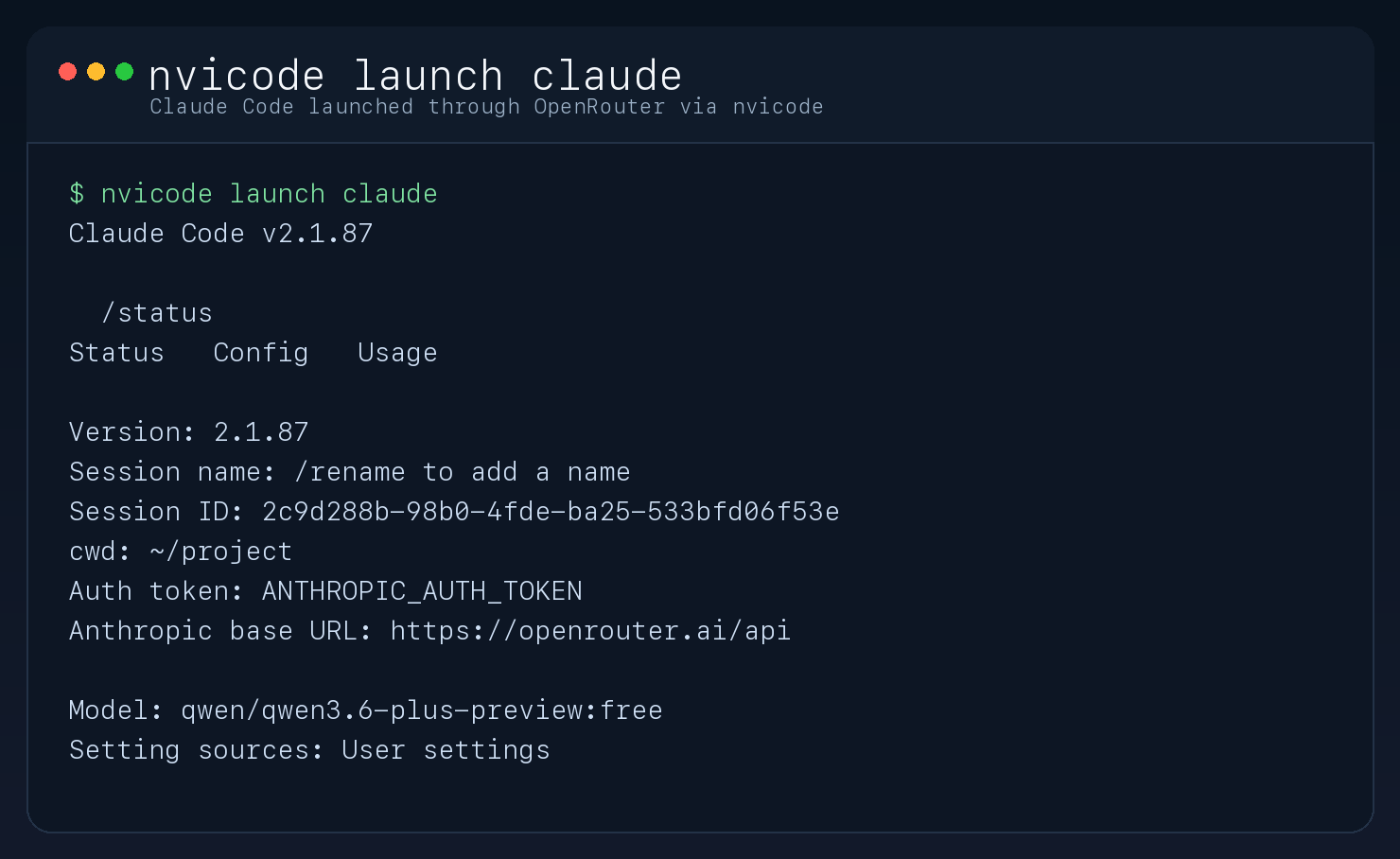

### Launch Claude Code through NVIDIA

|

|

50

|

+

|

|

51

|

+

|

|

52

|

+

|

|

31

53

|

## Commands

|

|

32

54

|

|

|

33

55

|

Useful commands:

|

|

34

56

|

|

|

35

57

|

```sh

|

|

58

|

+

nvicode dashboard

|

|

59

|

+

nvicode usage

|

|

60

|

+

nvicode activity

|

|

36

61

|

nvicode models

|

|

37

62

|

nvicode config

|

|

38

63

|

nvicode auth

|

|

@@ -42,11 +67,15 @@ nvicode launch claude -p "Reply with exactly OK"
 The launcher starts a local proxy on `127.0.0.1:8788`, points Claude Code at it with `ANTHROPIC_BASE_URL`, and forwards requests to NVIDIA `chat/completions`.
 
 If no NVIDIA API key is saved yet, `nvicode` prompts for one on first use.
+By default, the proxy paces upstream NVIDIA requests at `40 RPM`. Override that with `NVICODE_MAX_RPM` if your account has a different limit.
+The usage dashboard compares your local NVIDIA run cost against Claude Opus 4.6 at `$5 / MTok input` and `$25 / MTok output`, based on Anthropic pricing as of `2026-03-30`.
+If your NVIDIA endpoint is not free, override local cost estimates with `NVICODE_INPUT_USD_PER_MTOK` and `NVICODE_OUTPUT_USD_PER_MTOK`.
 
 ## Requirements
 
 - Claude Code must already be installed on the machine.
 - Node.js 20 or newer is required to install `nvicode`.
+- On native Windows, Claude Code itself requires Git for Windows. See the [Claude Code setup docs](https://code.claude.com/docs/en/setup).
 
 ## Local Development
 

@@ -55,11 +84,12 @@ These steps are only for contributors working from a git checkout. End users do
 ```sh
 npm install
 npm run build
-
+npm link
 ```
 
 ## Notes
 
 - `thinking` is disabled by default because some NVIDIA reasoning models can consume the entire output budget and return no visible answer to Claude Code.
 - The proxy supports basic text, tool calls, tool results, and token count estimation.
+- The proxy includes upstream request pacing and retries on NVIDIA `429` responses.
 - Claude Code remains the frontend; the selected NVIDIA model becomes the backend.
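
The cost comparison described in the new README paragraphs comes down to per-million-token arithmetic. The following is a minimal sketch, not the code in `usage.js` (whose body is not part of this excerpt). The names `compareRun`, `costUsd`, and `readRate` are hypothetical. Treating an unset or invalid `NVICODE_*_USD_PER_MTOK` value as `0`, meaning a free endpoint, is also an assumption.

```javascript
// Hypothetical sketch of the README's cost comparison; not the usage.js implementation.
// All rates are USD per million tokens (MTok).
const OPUS_4_6_PRICING = { inputUsdPerMTok: 5, outputUsdPerMTok: 25 }; // rates quoted in the README

// Assumption: an unset or non-numeric override means the NVIDIA endpoint is free.
const readRate = (env, name) => {
  const parsed = Number(env[name]);
  return env[name] && Number.isFinite(parsed) && parsed >= 0 ? parsed : 0;
};

const costUsd = ({ inputTokens, outputTokens }, { inputUsdPerMTok, outputUsdPerMTok }) =>
  (inputTokens / 1_000_000) * inputUsdPerMTok + (outputTokens / 1_000_000) * outputUsdPerMTok;

const compareRun = (usage, env = {}) => {
  const provider = {
    inputUsdPerMTok: readRate(env, "NVICODE_INPUT_USD_PER_MTOK"),
    outputUsdPerMTok: readRate(env, "NVICODE_OUTPUT_USD_PER_MTOK"),
  };
  const providerCostUsd = costUsd(usage, provider);
  const compareCostUsd = costUsd(usage, OPUS_4_6_PRICING);
  return { providerCostUsd, compareCostUsd, savingsUsd: compareCostUsd - providerCostUsd };
};

// 200,000 input + 20,000 output tokens at Opus rates: 0.2 * $5 + 0.02 * $25 = $1.00 + $0.50 = $1.50
const free = compareRun({ inputTokens: 200_000, outputTokens: 20_000 });
const paid = compareRun(
  { inputTokens: 200_000, outputTokens: 20_000 },
  { NVICODE_INPUT_USD_PER_MTOK: "0.5", NVICODE_OUTPUT_USD_PER_MTOK: "1" },
);
console.log(free.savingsUsd, paid.providerCostUsd);
```

With the overrides in the second call, the NVIDIA side costs 0.2 × $0.50 + 0.02 × $1 = $0.12, so the reported savings drop to $1.38.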

package/dist/cli.js

CHANGED

@@ -10,6 +10,7 @@ import { fileURLToPath } from "node:url";
 import { getNvicodePaths, loadConfig, saveConfig, } from "./config.js";
 import { createProxyServer } from "./proxy.js";
 import { CURATED_MODELS, getRecommendedModels } from "./models.js";
+import { filterRecordsSince, formatDuration, formatInteger, formatTimestamp, formatUsd, readUsageRecords, summarizeUsage, } from "./usage.js";
 const __filename = fileURLToPath(import.meta.url);
 const usage = () => {
     console.log(`nvicode

@@ -19,10 +20,26 @@ Commands:
 nvicode models Show recommended coding models
 nvicode auth Save or update NVIDIA API key
 nvicode config Show current nvicode config
+nvicode usage Show token usage and cost comparison
+nvicode activity Show recent request activity
+nvicode dashboard Show usage summary and recent activity
 nvicode launch claude [...] Launch Claude Code through nvicode
 nvicode serve Run the local proxy in the foreground
 `);
 };
+const isWindows = process.platform === "win32";
+const getPathExts = () => {
+    if (!isWindows) {
+        return [""];
+    }
+    const raw = process.env.PATHEXT || ".COM;.EXE;.BAT;.CMD";
+    return raw
+        .split(";")
+        .map((ext) => ext.trim())
+        .filter(Boolean)
+        .map((ext) => ext.toLowerCase());
+};
+const unique = (values) => [...new Set(values)];
 const question = async (prompt) => {
     const rl = createInterface({
         input: process.stdin,
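
On Windows, `getPathExts` turns `PATHEXT` into a lowercase extension list, and `buildExecutableCandidates` appends those extensions to extension-less names. The sketch below restates that logic as one pure function so it can run on any OS. The name `expandExecutableCandidates` and the explicit `platform`, `pathext`, and `sep` parameters are illustrative: the package itself reads `process.platform` and `process.env.PATHEXT` and joins with `path.join`. The extension regex only approximates `path.extname`.

```javascript
// Hypothetical pure version of getPathExts + buildExecutableCandidates.
// platform, pathext, and sep are explicit so the logic can run on any OS.
const expandExecutableCandidates = (dir, name, { platform, pathext, sep = "/" }) => {
  const base = `${dir}${sep}${name}`;
  // Outside Windows the executable bit decides, so only the bare name is tried.
  if (platform !== "win32") return [base];
  // A name that already carries an extension (e.g. "claude.exe") is used as-is.
  // This regex approximates path.extname, which ignores a leading dot.
  if (/[^.]\.[^.\\/]+$/.test(name)) return [base];
  const exts = (pathext || ".COM;.EXE;.BAT;.CMD")
    .split(";")
    .map((ext) => ext.trim())
    .filter(Boolean)
    .map((ext) => ext.toLowerCase());
  // A Set keeps insertion order: bare name first, then PATHEXT order, duplicates dropped.
  return [...new Set([base, ...exts.map((ext) => `${base}${ext}`)])];
};

const win = expandExecutableCandidates("C:\\bin", "claude", {
  platform: "win32",
  pathext: ".COM;.EXE;.BAT;.CMD",
  sep: "\\",
});
console.log(win);
```

Because `unique` in the package is also built on `Set`, duplicate `PATHEXT` entries (for example `.EXE;.exe`) collapse to a single candidate, and the bare name is always checked first.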

@@ -114,11 +131,91 @@ const runConfig = async () => {
     const paths = getNvicodePaths();
     console.log(`Config file: ${paths.configFile}`);
     console.log(`State dir: ${paths.stateDir}`);
+    console.log(`Usage log: ${paths.usageLogFile}`);
     console.log(`Model: ${config.model}`);
     console.log(`Proxy port: ${config.proxyPort}`);
+    console.log(`Max RPM: ${config.maxRequestsPerMinute}`);
     console.log(`Thinking: ${config.thinking ? "on" : "off"}`);
     console.log(`API key: ${config.apiKey ? "saved" : "missing"}`);
 };
+const printUsageBlock = (label, records) => {
+    const summary = summarizeUsage(records);
+    console.log(label);
+    console.log(`Requests: ${formatInteger(summary.requests)} (${formatInteger(summary.successes)} ok, ${formatInteger(summary.errors)} error)`);
+    console.log(`Input tokens: ${formatInteger(summary.inputTokens)}`);
+    console.log(`Output tokens: ${formatInteger(summary.outputTokens)}`);
+    console.log(`NVIDIA cost: ${formatUsd(summary.providerCostUsd)}`);
+    console.log(`Opus 4.6 equivalent: ${formatUsd(summary.compareCostUsd)}`);
+    console.log(`Estimated savings: ${formatUsd(summary.savingsUsd)}`);
+};
+const runUsage = async () => {
+    const records = await readUsageRecords();
+    if (records.length === 0) {
+        console.log("No usage recorded yet.");
+        return;
+    }
+    const now = Date.now();
+    const latestPricing = records[0]?.pricing;
+    if (latestPricing) {
+        console.log("Pricing basis:");
+        console.log(`- NVIDIA configured cost: ${formatUsd(latestPricing.providerInputUsdPerMTok)} / MTok input, ${formatUsd(latestPricing.providerOutputUsdPerMTok)} / MTok output`);
+        console.log(`- ${latestPricing.compareModel}: ${formatUsd(latestPricing.compareInputUsdPerMTok)} / MTok input, ${formatUsd(latestPricing.compareOutputUsdPerMTok)} / MTok output`);
+        console.log(`- Comparison source: ${latestPricing.comparePricingSource} (${latestPricing.comparePricingUpdatedAt})`);
+        console.log("");
+    }
+    const windows = [
+        { label: "Last 1 hour", durationMs: 1 * 60 * 60 * 1000 },
+        { label: "Last 6 hours", durationMs: 6 * 60 * 60 * 1000 },
+        { label: "Last 12 hours", durationMs: 12 * 60 * 60 * 1000 },
+        { label: "Last 1 day", durationMs: 24 * 60 * 60 * 1000 },
+        { label: "Last 1 week", durationMs: 7 * 24 * 60 * 60 * 1000 },
+        { label: "Last 1 month", durationMs: 30 * 24 * 60 * 60 * 1000 },
+    ];
+    const rows = windows.map((window) => {
+        const summary = summarizeUsage(filterRecordsSince(records, now - window.durationMs));
+        return {
+            window: window.label,
+            requests: `${formatInteger(summary.requests)} (${formatInteger(summary.successes)} ok/${formatInteger(summary.errors)} err)`,
+            inputTokens: formatInteger(summary.inputTokens),
+            outputTokens: formatInteger(summary.outputTokens),
+            nvidiaCost: formatUsd(summary.providerCostUsd),
+            savings: formatUsd(summary.savingsUsd),
+        };
+    });
+    console.log("Window Requests Input Tok Output Tok NVIDIA Saved");
+    rows.forEach((row) => {
+        console.log(`${row.window.padEnd(13)} ${row.requests.padEnd(16)} ${row.inputTokens.padStart(10)} ${row.outputTokens.padStart(11)} ${row.nvidiaCost.padStart(10)} ${row.savings.padStart(10)}`);
+    });
+};
+const runActivity = async () => {
+    const records = await readUsageRecords();
+    if (records.length === 0) {
+        console.log("No activity recorded yet.");
+        return;
+    }
+    console.log("Timestamp Status Model In Tok Out Tok Latency NVIDIA Saved");
+    for (const record of records.slice(0, 15)) {
+        const model = record.model.length > 30 ? `${record.model.slice(0, 27)}...` : record.model;
+        const status = record.status === "success" ? "ok" : "error";
+        console.log(`${formatTimestamp(record.timestamp).padEnd(21)} ${status.padEnd(6)} ${model.padEnd(31)} ${formatInteger(record.inputTokens).padStart(7)} ${formatInteger(record.outputTokens).padStart(8)} ${formatDuration(record.latencyMs).padStart(8)} ${formatUsd(record.providerCostUsd).padStart(10)} ${formatUsd(record.savingsUsd).padStart(10)}`);
+        if (record.error) {
+            console.log(` error: ${record.error}`);
+        }
+    }
+};
+const runDashboard = async () => {
+    const records = await readUsageRecords();
+    if (records.length === 0) {
+        console.log("No usage recorded yet.");
+        return;
+    }
+    const last7Days = filterRecordsSince(records, Date.now() - 7 * 24 * 60 * 60 * 1000);
+    printUsageBlock("Usage (7d)", last7Days);
+    console.log("");
+    console.log("Recent activity");
+    console.log("");
+    await runActivity();
+};
 const waitForHealthyProxy = async (port) => {
     for (let attempt = 0; attempt < 50; attempt += 1) {
         try {
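
`runUsage` re-filters the full record list once per time window and summarizes each subset. A minimal sketch of that pattern follows. It assumes `usage.jsonl` holds one JSON record per line with an ISO-8601 `timestamp`. `parseJsonl`, `filterSince`, and `summarize` are hypothetical stand-ins for `readUsageRecords`, `filterRecordsSince`, and `summarizeUsage`, whose implementations are not shown here. The field names are the ones `cli.js` reads from each record.

```javascript
// Hypothetical stand-ins for readUsageRecords / filterRecordsSince / summarizeUsage.
// Assumes usage.jsonl stores one JSON object per line with an ISO-8601 `timestamp`.
const parseJsonl = (text) =>
  text
    .split("\n")
    .filter((line) => line.trim() !== "")
    .map((line) => JSON.parse(line));

const filterSince = (records, sinceMs) =>
  records.filter((record) => Date.parse(record.timestamp) >= sinceMs);

const summarize = (records) =>
  records.reduce(
    (sum, record) => {
      const ok = record.status === "success";
      return {
        requests: sum.requests + 1,
        successes: sum.successes + (ok ? 1 : 0),
        errors: sum.errors + (ok ? 0 : 1),
        inputTokens: sum.inputTokens + (record.inputTokens ?? 0),
        outputTokens: sum.outputTokens + (record.outputTokens ?? 0),
        savingsUsd: sum.savingsUsd + (record.savingsUsd ?? 0),
      };
    },
    { requests: 0, successes: 0, errors: 0, inputTokens: 0, outputTokens: 0, savingsUsd: 0 },
  );

const HOUR_MS = 60 * 60 * 1000;
const now = Date.parse("2026-03-30T12:00:00Z");
const records = parseJsonl([
  '{"timestamp":"2026-03-30T11:30:00Z","status":"success","inputTokens":1000,"outputTokens":200,"savingsUsd":0.01}',
  '{"timestamp":"2026-03-30T02:00:00Z","status":"error","inputTokens":500,"outputTokens":0,"savingsUsd":0}',
].join("\n"));

// Same pattern as runUsage: re-filter the full list for each window, then summarize.
const lastHour = summarize(filterSince(records, now - 1 * HOUR_MS)); // only the 11:30 record
const last12Hours = summarize(filterSince(records, now - 12 * HOUR_MS)); // both records
console.log(lastHour, last12Hours);
```

Filtering the whole list for every window costs O(windows × records). That is simple and adequate for a local log of this size.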

@@ -147,6 +244,7 @@ const ensureProxyRunning = async (config) => {
             ...process.env,
         },
         stdio: ["ignore", logFd, logFd],
+        windowsHide: true,
     });
     child.unref();
     await fs.writeFile(paths.pidFile, `${child.pid}\n`);

@@ -156,17 +254,63 @@ const ensureProxyRunning = async (config) => {
 };
 const isExecutable = async (filePath) => {
     try {
-        await fs.access(filePath, constants.X_OK);
+        await fs.access(filePath, isWindows ? constants.F_OK : constants.X_OK);
         return true;
     }
     catch {
         return false;
     }
 };
+const buildExecutableCandidates = (entry, name) => {
+    const base = path.join(entry, name);
+    if (!isWindows) {
+        return [base];
+    }
+    if (path.extname(name)) {
+        return [base];
+    }
+    return unique([base, ...getPathExts().map((ext) => `${base}${ext}`)]);
+};
+const resolveClaudeVersionEntry = async (entryPath) => {
+    if (await isExecutable(entryPath)) {
+        return entryPath;
+    }
+    const nestedCandidates = isWindows
+        ? ["claude.exe", "claude.cmd", "claude.bat", "claude"]
+        : ["claude"];
+    for (const candidateName of nestedCandidates) {
+        const candidate = path.join(entryPath, candidateName);
+        if (await isExecutable(candidate)) {
+            return candidate;
+        }
+    }
+    return null;
+};
 const resolveClaudeBinary = async () => {
-    const
-
-
+    const nativeNames = isWindows
+        ? ["claude-native.exe", "claude-native.cmd", "claude-native.bat", "claude-native"]
+        : ["claude-native"];
+    for (const name of nativeNames) {
+        const nativeInPath = await findExecutableInPath(name);
+        if (nativeInPath) {
+            return nativeInPath;
+        }
+    }
+    const homeBinCandidates = isWindows
+        ? [
+            path.join(os.homedir(), ".local", "bin", "claude.exe"),
+            path.join(os.homedir(), ".local", "bin", "claude.cmd"),
+            path.join(os.homedir(), ".local", "bin", "claude.bat"),
+            path.join(os.homedir(), ".local", "bin", "claude"),
+        ]
+        : [
+            path.join(os.homedir(), ".local", "bin", "claude-native"),
+            path.join(os.homedir(), ".local", "bin", "claude"),
+        ];
+    for (const candidate of homeBinCandidates) {
+        if (await isExecutable(candidate)) {
+            return candidate;
+        }
     }
     const versionsDir = path.join(os.homedir(), ".local", "share", "claude", "versions");
     try {
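
After this change, `resolveClaudeBinary` tries four locations in order:

1. a `claude-native` executable on `PATH`;
2. launchers in `~/.local/bin`;
3. the newest entry under `~/.local/share/claude/versions`;
4. `claude` on `PATH`.

The pure sketch below encodes that order so it can be tested against a fake file system. `resolveClaudeSketch`, its parameters, and the string-based path joins are hypothetical.

One caveat is worth checking in the real code. `fs.access` with `X_OK` (or `F_OK` on Windows) also succeeds for a directory. If a versions entry is a directory, `resolveClaudeVersionEntry` may therefore return the directory itself before it tries the nested `claude` candidates. The sketch's `isExecutable` stand-in is assumed to return true only for files.

```javascript
// Hypothetical, side-effect-free model of the resolveClaudeBinary lookup order.
// findInPath(name) and isExecutable(path) are injected stand-ins for the real PATH
// scan and fs.access check; latestVersionEntry is the newest versions-dir entry or null.
const resolveClaudeSketch = ({ isWindows, homeDir, latestVersionEntry, findInPath, isExecutable }) => {
  const sep = isWindows ? "\\" : "/";
  const join = (...parts) => parts.join(sep);
  // Windows tries launcher extensions in the package's order: .exe, .cmd, .bat, bare name.
  const variants = (stem) =>
    isWindows ? [".exe", ".cmd", ".bat", ""].map((ext) => `${stem}${ext}`) : [stem];

  // 1. claude-native on PATH
  for (const name of variants("claude-native")) {
    const hit = findInPath(name);
    if (hit) return hit;
  }
  // 2. ~/.local/bin (POSIX: claude-native, then claude; Windows: claude.*)
  const homeBinNames = isWindows ? variants("claude") : ["claude-native", "claude"];
  for (const name of homeBinNames) {
    const candidate = join(homeDir, ".local", "bin", name);
    if (isExecutable(candidate)) return candidate;
  }
  // 3. newest versions entry: the entry itself, or a claude binary inside it
  if (latestVersionEntry) {
    const nested = variants("claude").map((name) => join(latestVersionEntry, name));
    for (const candidate of [latestVersionEntry, ...nested]) {
      if (isExecutable(candidate)) return candidate;
    }
  }
  // 4. plain claude on PATH
  for (const name of variants("claude")) {
    const hit = findInPath(name);
    if (hit) return hit;
  }
  throw new Error("Unable to locate Claude Code binary.");
};

const files = new Set(["/home/u/.local/bin/claude"]);
const found = resolveClaudeSketch({
  isWindows: false,
  homeDir: "/home/u",
  latestVersionEntry: null,
  findInPath: () => null,
  isExecutable: (p) => files.has(p),
});
console.log(found);
```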

@@ -176,15 +320,23 @@ const resolveClaudeBinary = async () => {
             sensitivity: "base",
         })).at(-1);
         if (latest) {
-
+            const resolved = await resolveClaudeVersionEntry(path.join(versionsDir, latest));
+            if (resolved) {
+                return resolved;
+            }
         }
     }
     catch {
         // continue
     }
-    const
-
-
+    const cliNames = isWindows
+        ? ["claude.exe", "claude.cmd", "claude.bat", "claude"]
+        : ["claude"];
+    for (const name of cliNames) {
+        const claudeInPath = await findExecutableInPath(name);
+        if (claudeInPath) {
+            return claudeInPath;
+        }
     }
     throw new Error("Unable to locate Claude Code binary.");
 };
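
The hunk shows only the tail of the comparator that picks the newest entry in `~/.local/share/claude/versions` (`sensitivity: "base", })).at(-1)`), so its exact options are not visible here. If the comparator also passes `numeric: true`, numeric collation is what ranks `2.0.10` above `2.0.9`. A plain string sort would not. The sketch below uses a hypothetical `pickLatestVersion` helper.

```javascript
// Hypothetical helper; `numeric: true` is an assumption, since only
// `sensitivity: "base"` is visible in the diff context.
const pickLatestVersion = (entries) =>
  [...entries]
    .sort((a, b) => a.localeCompare(b, undefined, { numeric: true, sensitivity: "base" }))
    .at(-1);

const entries = ["2.0.9", "2.0.10", "1.9.99"];
const latestNumeric = pickLatestVersion(entries); // "2.0.10"
// A default sort compares character by character, and "1" < "9", so "2.0.10" sorts before "2.0.9".
const latestPlain = [...entries].sort().at(-1); // "2.0.9"
console.log(latestNumeric, latestPlain);
```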

@@ -194,30 +346,43 @@ const findExecutableInPath = async (name) => {
         if (!entry) {
             continue;
         }
-        const candidate
-
-
+        for (const candidate of buildExecutableCandidates(entry, name)) {
+            if (await isExecutable(candidate)) {
+                return candidate;
+            }
         }
     }
     return null;
 };
+const spawnClaudeProcess = (claudeBinary, args, env) => {
+    if (isWindows && /\.(cmd|bat)$/i.test(claudeBinary)) {
+        return spawn(claudeBinary, args, {
+            stdio: "inherit",
+            env,
+            shell: true,
+            windowsHide: true,
+        });
+    }
+    return spawn(claudeBinary, args, {
+        stdio: "inherit",
+        env,
+        windowsHide: true,
+    });
+};
 const runLaunchClaude = async (args) => {
     const config = await ensureConfigured();
     await ensureProxyRunning(config);
     const claudeBinary = await resolveClaudeBinary();
-    const child =
-
-
-
-
-
-
-
-
-
-            ANTHROPIC_CUSTOM_MODEL_OPTION_NAME: "nvicode custom model",
-            ANTHROPIC_CUSTOM_MODEL_OPTION_DESCRIPTION: "Claude Code via local NVIDIA gateway",
-        },
+    const child = spawnClaudeProcess(claudeBinary, args, {
+        ...process.env,
+        ANTHROPIC_BASE_URL: `http://127.0.0.1:${config.proxyPort}`,
+        ANTHROPIC_AUTH_TOKEN: config.proxyToken,
+        ANTHROPIC_API_KEY: "",
+        ANTHROPIC_MODEL: config.model,
+        CLAUDE_CODE_DISABLE_EXPERIMENTAL_BETAS: "1",
+        ANTHROPIC_CUSTOM_MODEL_OPTION: config.model,
+        ANTHROPIC_CUSTOM_MODEL_OPTION_NAME: "nvicode custom model",
+        ANTHROPIC_CUSTOM_MODEL_OPTION_DESCRIPTION: "Claude Code via local NVIDIA gateway",
     });
     await new Promise((resolve, reject) => {
         child.on("exit", (code, signal) => {
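
`spawnClaudeProcess` adds `shell: true` only for `.cmd`/`.bat` launchers on Windows. A likely reason is the April 2024 Node.js security releases (CVE-2024-27980). Since those releases, `child_process.spawn` refuses to run batch files on Windows unless the `shell` option is set. With `shell: true`, however, `cmd.exe` interprets the arguments, so values containing spaces or metacharacters (such as a `-p` prompt) may need quoting.

The hypothetical `buildClaudeSpawnOptions` below returns the options and environment the launcher would pass, without spawning anything. For brevity it omits the three `ANTHROPIC_CUSTOM_MODEL_OPTION*` variables.

```javascript
// Hypothetical pure helper mirroring spawnClaudeProcess plus the env built in runLaunchClaude.
const buildClaudeSpawnOptions = (claudeBinary, { platform, baseEnv, proxyPort, proxyToken, model }) => {
  const env = {
    ...baseEnv,
    ANTHROPIC_BASE_URL: `http://127.0.0.1:${proxyPort}`, // the local nvicode proxy
    ANTHROPIC_AUTH_TOKEN: proxyToken,
    ANTHROPIC_API_KEY: "", // cleared as in the package, presumably so a real Anthropic key is not used
    ANTHROPIC_MODEL: model,
    CLAUDE_CODE_DISABLE_EXPERIMENTAL_BETAS: "1",
  };
  const options = { stdio: "inherit", env, windowsHide: true };
  // Batch-file launchers need a shell on Windows.
  if (platform === "win32" && /\.(cmd|bat)$/i.test(claudeBinary)) {
    options.shell = true;
  }
  return options;
};

const options = buildClaudeSpawnOptions("C:\\Users\\u\\.local\\bin\\claude.cmd", {
  platform: "win32",
  baseEnv: { PATH: "C:\\Windows", ANTHROPIC_API_KEY: "sk-example" },
  proxyPort: 8788,
  proxyToken: "example-token",
  model: "moonshotai/kimi-k2.5",
});
console.log(options.shell, options.env.ANTHROPIC_BASE_URL);
```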

@@ -269,6 +434,18 @@ const main = async () => {
         await runConfig();
         return;
     }
+    if (command === "usage") {
+        await runUsage();
+        return;
+    }
+    if (command === "activity") {
+        await runActivity();
+        return;
+    }
+    if (command === "dashboard") {
+        await runDashboard();
+        return;
+    }
     if ((command === "select" && rest[0] === "model") ||
         command === "select-model") {
         await runSelectModel();

package/dist/config.js

CHANGED

@@ -4,9 +4,43 @@ import os from "node:os";
 import path from "node:path";
 const DEFAULT_PROXY_PORT = 8788;
 const DEFAULT_MODEL = "moonshotai/kimi-k2.5";
+const DEFAULT_MAX_REQUESTS_PER_MINUTE = 40;
+const getEnvNumber = (name) => {
+    const raw = process.env[name];
+    if (!raw) {
+        return null;
+    }
+    const parsed = Number(raw);
+    if (!Number.isFinite(parsed) || parsed <= 0) {
+        return null;
+    }
+    return Math.floor(parsed);
+};
+const getDefaultConfigHome = () => {
+    if (process.env.XDG_CONFIG_HOME) {
+        return process.env.XDG_CONFIG_HOME;
+    }
+    if (process.platform === "win32") {
+        return (process.env.APPDATA ||
+            process.env.LOCALAPPDATA ||
+            path.join(os.homedir(), ".local", "share"));
+    }
+    return path.join(os.homedir(), ".local", "share");
+};
+const getDefaultStateHome = () => {
+    if (process.env.XDG_STATE_HOME) {
+        return process.env.XDG_STATE_HOME;
+    }
+    if (process.platform === "win32") {
+        return (process.env.LOCALAPPDATA ||
+            process.env.APPDATA ||
+            path.join(os.homedir(), ".local", "state"));
+    }
+    return path.join(os.homedir(), ".local", "state");
+};
 export const getNvicodePaths = () => {
-    const configHome =
-    const stateHome =
+    const configHome = getDefaultConfigHome();
+    const stateHome = getDefaultStateHome();
     const configDir = path.join(configHome, "nvicode");
     const stateDir = path.join(stateHome, "nvicode");
     return {
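
The new `getDefaultConfigHome` and `getDefaultStateHome` functions give the XDG variables priority on every platform. If those are unset, they fall back to `%APPDATA%`/`%LOCALAPPDATA%` on Windows and to `~/.local/...` elsewhere.

Note that the non-Windows config fallback is `~/.local/share`. The XDG Base Directory specification's default for `XDG_CONFIG_HOME` is `~/.config`. The state fallback, `~/.local/state`, does match the spec's `XDG_STATE_HOME` default.

The sketch below restates the resolution as a pure function with `env`, `platform`, and `homeDir` passed in. `resolveNvicodeDirs` is illustrative, and its string-based joins stand in for `path.join`.

```javascript
// Pure restatement of getDefaultConfigHome / getDefaultStateHome from config.js (0.1.5).
// env, platform, and homeDir are parameters here; joining with "/" or "\\" stands in for path.join.
const resolveNvicodeDirs = ({ env, platform, homeDir }) => {
  const isWindows = platform === "win32";
  const join = (...parts) => parts.join(isWindows ? "\\" : "/");
  const configHome =
    env.XDG_CONFIG_HOME ||
    (isWindows
      ? env.APPDATA || env.LOCALAPPDATA || join(homeDir, ".local", "share")
      : join(homeDir, ".local", "share"));
  const stateHome =
    env.XDG_STATE_HOME ||
    (isWindows
      ? env.LOCALAPPDATA || env.APPDATA || join(homeDir, ".local", "state")
      : join(homeDir, ".local", "state"));
  return { configDir: join(configHome, "nvicode"), stateDir: join(stateHome, "nvicode") };
};

// configDir "/home/u/.local/share/nvicode", stateDir "/home/u/.local/state/nvicode"
const linux = resolveNvicodeDirs({ env: {}, platform: "linux", homeDir: "/home/u" });
// configDir under Roaming (APPDATA), stateDir under Local (LOCALAPPDATA)
const windows = resolveNvicodeDirs({
  env: { APPDATA: "C:\\Users\\u\\AppData\\Roaming", LOCALAPPDATA: "C:\\Users\\u\\AppData\\Local" },
  platform: "win32",
  homeDir: "C:\\Users\\u",
});
console.log(linux, windows);
```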

@@ -15,17 +49,26 @@ export const getNvicodePaths = () => {
         stateDir,
         logFile: path.join(stateDir, "proxy.log"),
         pidFile: path.join(stateDir, "proxy.pid"),
+        usageLogFile: path.join(stateDir, "usage.jsonl"),
+    };
+};
+const withDefaults = (config) => {
+    const envMaxRequestsPerMinute = getEnvNumber("NVICODE_MAX_RPM");
+    return {
+        apiKey: config.apiKey?.trim() || "",
+        model: config.model?.trim() || DEFAULT_MODEL,
+        proxyPort: Number.isInteger(config.proxyPort) && config.proxyPort > 0
+            ? config.proxyPort
+            : DEFAULT_PROXY_PORT,
+        proxyToken: config.proxyToken?.trim() || randomUUID(),
+        thinking: config.thinking ?? false,
+        maxRequestsPerMinute: envMaxRequestsPerMinute ||
+            (Number.isInteger(config.maxRequestsPerMinute) &&
+                config.maxRequestsPerMinute > 0
+                ? config.maxRequestsPerMinute
+                : DEFAULT_MAX_REQUESTS_PER_MINUTE),
     };
 };
-const withDefaults = (config) => ({
-    apiKey: config.apiKey?.trim() || "",
-    model: config.model?.trim() || DEFAULT_MODEL,
-    proxyPort: Number.isInteger(config.proxyPort) && config.proxyPort > 0
-        ? config.proxyPort
-        : DEFAULT_PROXY_PORT,
-    proxyToken: config.proxyToken?.trim() || randomUUID(),
-    thinking: config.thinking ?? false,
-});
 export const loadConfig = async () => {
     const paths = getNvicodePaths();
     try {
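
In the new `withDefaults`, the rate limit is resolved in three tiers. A valid `NVICODE_MAX_RPM` wins. Otherwise a positive integer `maxRequestsPerMinute` from the saved config is used. If neither is present, the default of 40 applies.

`getEnvNumber` floors its result, and the code combines the tiers with `||`. As a result, a fractional override below 1 (for example `0.5`) floors to `0` and silently falls through to the config value.

The sketch restates that precedence with the environment value passed in explicitly. `resolveMaxRpm` and `parsePositiveInt` are hypothetical names.

```javascript
const DEFAULT_MAX_REQUESTS_PER_MINUTE = 40;

// Same rules as getEnvNumber in config.js, but taking the raw string directly.
const parsePositiveInt = (raw) => {
  if (!raw) return null;
  const parsed = Number(raw);
  return Number.isFinite(parsed) && parsed > 0 ? Math.floor(parsed) : null;
};

// env override > positive integer from the saved config > default of 40
const resolveMaxRpm = (envValue, configValue) =>
  parsePositiveInt(envValue) ||
  (Number.isInteger(configValue) && configValue > 0 ? configValue : DEFAULT_MAX_REQUESTS_PER_MINUTE);

console.log(resolveMaxRpm("25", 10), resolveMaxRpm(undefined, 10), resolveMaxRpm("0.5", 30));
```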

package/dist/proxy.js

CHANGED

@@ -1,6 +1,46 @@
 import { randomUUID } from "node:crypto";
 import { createServer } from "node:http";
+import { appendUsageRecord, buildUsageRecord, getPricingSnapshot, } from "./usage.js";
 const NVIDIA_URL = "https://integrate.api.nvidia.com/v1/chat/completions";
+const DEFAULT_RETRY_DELAY_MS = 2_000;
+const MAX_NVIDIA_RETRIES = 3;
+const sleep = async (ms) => {
+    if (ms <= 0) {
+        return;
+    }
+    await new Promise((resolve) => setTimeout(resolve, ms));
+};
+const parseRetryAfterMs = (value) => {
+    if (!value) {
+        return null;
+    }
+    const seconds = Number(value);
+    if (Number.isFinite(seconds) && seconds >= 0) {
+        return Math.ceil(seconds * 1000);
+    }
+    const timestamp = Date.parse(value);
+    if (Number.isNaN(timestamp)) {
+        return null;
+    }
+    return Math.max(0, timestamp - Date.now());
+};
+const createRequestScheduler = (maxRequestsPerMinute) => {
+    const intervalMs = Math.max(1, Math.ceil(60_000 / maxRequestsPerMinute));
+    let nextAvailableAt = 0;
+    let queue = Promise.resolve();
+    return async (task) => {
+        const runTask = async () => {
+            const now = Date.now();
+            const scheduledAt = Math.max(now, nextAvailableAt);
+            nextAvailableAt = scheduledAt + intervalMs;
+            await sleep(scheduledAt - now);
+            return task();
+        };
+        const result = queue.then(runTask, runTask);
+        queue = result.then(() => undefined, () => undefined);
+        return result;
+    };
+};
 const sendJson = (response, statusCode, payload) => {
     response.writeHead(statusCode, {
         "Content-Type": "application/json",
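
`createRequestScheduler` spaces upstream calls `ceil(60000 / maxRequestsPerMinute)` ms apart, which is 1,500 ms at the default 40 RPM. Each task is also chained onto the previous task's promise. As a result, the proxy has at most one NVIDIA call in flight, and a 429 retry that sleeps inside the scheduled task holds up every queued request.

The deterministic sketch below models only the pacing rule. It assumes each upstream call completes instantly and that arrival times are non-decreasing. `planSlots` is a hypothetical name.

```javascript
// Hypothetical model of the pacing rule in createRequestScheduler, assuming each
// upstream call finishes instantly: request k starts at max(arrival_k, start_{k-1} + interval).
const planSlots = (arrivalsMs, maxRequestsPerMinute) => {
  const intervalMs = Math.max(1, Math.ceil(60_000 / maxRequestsPerMinute));
  let nextAvailableAt = 0;
  return arrivalsMs.map((arrivedAt) => {
    const scheduledAt = Math.max(arrivedAt, nextAvailableAt);
    nextAvailableAt = scheduledAt + intervalMs;
    return scheduledAt;
  });
};

// Three requests arrive together at t = 0 and one at t = 5000 ms, at the default 40 RPM.
const slots = planSlots([0, 0, 0, 5000], 40); // [0, 1500, 3000, 5000]
console.log(slots);
```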

@@ -296,10 +336,11 @@ const estimateTokens = (payload) => {
     const raw = JSON.stringify(payload);
     return Math.max(1, Math.ceil(raw.length / 4));
 };
-const
-
-
-
+const resolveTargetModel = (config, payload) => payload.model && payload.model.includes("/") && !payload.model.startsWith("claude-")
+    ? payload.model
+    : config.model;
+const callNvidia = async (config, scheduleRequest, payload) => {
+    const targetModel = resolveTargetModel(config, payload);
     const requestBody = {
         model: targetModel,
         messages: mapMessages(payload),
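
Both helpers in this hunk are small enough to run in isolation.

- `resolveTargetModel` passes through request model ids that contain a `/` (like NVIDIA's `vendor/model` ids) and do not start with `claude-`. Every other id is replaced by the configured model.
- `estimateTokens` is a rough heuristic of about four characters of serialized JSON per token. It is the fallback when NVIDIA does not report `prompt_tokens`.

The copies below differ from the diff only in formatting. The model ids in the usage lines are placeholders.

```javascript
// Copied from proxy.js (0.1.5) so the routing rule can be tried directly.
const resolveTargetModel = (config, payload) =>
  payload.model && payload.model.includes("/") && !payload.model.startsWith("claude-")
    ? payload.model
    : config.model;

// Rough token estimate: about 4 characters of serialized JSON per token, minimum 1.
const estimateTokens = (payload) => {
  const raw = JSON.stringify(payload);
  return Math.max(1, Math.ceil(raw.length / 4));
};

const config = { model: "moonshotai/kimi-k2.5" };
const passedThrough = resolveTargetModel(config, { model: "example/model-a" }); // "example/model-a"
const replaced = resolveTargetModel(config, { model: "claude-sonnet" }); // "moonshotai/kimi-k2.5"
const estimate = estimateTokens({ messages: ["abcd"] }); // 21 characters of JSON -> 6
console.log(passedThrough, replaced, estimate);
```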

@@ -328,25 +369,38 @@ const callNvidia = async (config, payload) => {
             thinking: true,
         };
     }
-    const
-
-
-
-
-
-
-
-
-
-
-
-
+    const invoke = async () => {
+        for (let attempt = 0; attempt <= MAX_NVIDIA_RETRIES; attempt += 1) {
+            const response = await fetch(NVIDIA_URL, {
+                method: "POST",
+                headers: {
+                    Authorization: `Bearer ${config.apiKey}`,
+                    Accept: "application/json",
+                    "Content-Type": "application/json",
+                },
+                body: JSON.stringify(requestBody),
+            });
+            const raw = await response.text();
+            if (response.ok) {
+                return JSON.parse(raw);
+            }
+            if (response.status === 429 && attempt < MAX_NVIDIA_RETRIES) {
+                const retryAfterMs = parseRetryAfterMs(response.headers.get("retry-after")) ||
+                    DEFAULT_RETRY_DELAY_MS * 2 ** attempt;
+                await sleep(retryAfterMs);
+                continue;
+            }
+            throw new Error(`NVIDIA API HTTP ${response.status}: ${raw}`);
+        }
+        throw new Error("NVIDIA API retry loop exhausted unexpectedly.");
+    };
     return {
         targetModel,
-        upstream:
+        upstream: await scheduleRequest(invoke),
     };
 };
 export const createProxyServer = (config) => {
+    const scheduleNvidiaRequest = createRequestScheduler(config.maxRequestsPerMinute);
     return createServer(async (request, response) => {
         try {
             const url = new URL(request.url || "/", "http://127.0.0.1");
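
In the new `invoke` loop, a 429 response is retried up to `MAX_NVIDIA_RETRIES` (3) times. Each retry waits for the `Retry-After` value when it parses, and otherwise for `2000 * 2 ** attempt` ms. The fallback waits are therefore 2 s, 4 s, and 8 s (14 s in total) before the fourth and final attempt. Because the two values are combined with `||`, a `Retry-After: 0` is treated like a missing header.

The sketch below restates the delay calculation with the current time injected, so HTTP-date values are testable. `retryDelayMs` and the `nowMs` parameter are hypothetical.

```javascript
const DEFAULT_RETRY_DELAY_MS = 2_000;

// Same parsing rules as parseRetryAfterMs in proxy.js, but with `nowMs` passed in.
const parseRetryAfterMs = (value, nowMs) => {
  if (!value) return null;
  const seconds = Number(value); // delta-seconds form, e.g. "3"
  if (Number.isFinite(seconds) && seconds >= 0) return Math.ceil(seconds * 1000);
  const timestamp = Date.parse(value); // HTTP-date form
  return Number.isNaN(timestamp) ? null : Math.max(0, timestamp - nowMs);
};

// Fallback mirrors the 429 branch: exponential backoff starting at 2 s.
const retryDelayMs = (retryAfterHeader, attempt, nowMs) =>
  parseRetryAfterMs(retryAfterHeader, nowMs) || DEFAULT_RETRY_DELAY_MS * 2 ** attempt;

const now = Date.parse("Mon, 30 Mar 2026 12:00:00 GMT");
console.log(
  retryDelayMs("3", 0, now), // 3000
  retryDelayMs(null, 2, now), // 8000
  retryDelayMs("Mon, 30 Mar 2026 12:00:10 GMT", 0, now), // 10000
);
```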

@@ -361,6 +415,7 @@ export const createProxyServer = (config) => {
                     model: config.model,
                     port: config.proxyPort,
                     thinking: config.thinking,
+                    maxRequestsPerMinute: config.maxRequestsPerMinute,
                 });
                 return;
             }

@@ -384,114 +439,143 @@ export const createProxyServer = (config) => {

|

|

|

384

439

|

if (request.method === "POST" && url.pathname === "/v1/messages") {

|

|

385

440

|

const rawBody = await readRequestBody(request);

|

|

386

441

|

const payload = JSON.parse(rawBody);

|

|

387

|

-

const

|

|

388

|

-

const

|

|

389

|

-

|

|

390

|

-

|

|

391

|

-

|

|

392

|

-

type: "message",

|

|

393

|

-

role: "assistant",

|

|

394

|

-

model: targetModel,

|

|

395

|

-

content: mappedContent,

|

|

396

|

-

stop_reason: mapStopReason(choice?.finish_reason),

-            stop_sequence: null,
-            usage: {
-                input_tokens: upstream.usage?.prompt_tokens ??
-                    estimateTokens({
-                        system: payload.system ?? null,
-                        messages: payload.messages ?? [],
-                        tools: payload.tools ?? [],
-                    }),
-                output_tokens: upstream.usage?.completion_tokens ?? 0,
-            },
-        };
-        if (!payload.stream) {
-            sendJson(response, 200, anthropicResponse);
-            return;
-        }
-        response.writeHead(200, {
-            "Cache-Control": "no-cache, no-transform",
-            Connection: "keep-alive",
-            "Content-Type": "text/event-stream",
+        const targetModel = resolveTargetModel(config, payload);
+        const estimatedInputTokens = estimateTokens({
+            system: payload.system ?? null,
+            messages: payload.messages ?? [],
+            tools: payload.tools ?? [],
         });
-
-
-
-
-
-
+        const startedAt = Date.now();
+        const pricing = getPricingSnapshot();
+        try {
+            const { upstream } = await callNvidia(config, scheduleNvidiaRequest, payload);
+            const choice = upstream.choices?.[0];
+            const mappedContent = mapResponseContent(choice);
+            const anthropicResponse = {
+                id: upstream.id || `msg_${randomUUID()}`,
+                type: "message",
+                role: "assistant",
+                model: targetModel,
+                content: mappedContent,
+                stop_reason: mapStopReason(choice?.finish_reason),
+                stop_sequence: null,
                 usage: {
-                    input_tokens:
-                    output_tokens: 0,
+                    input_tokens: upstream.usage?.prompt_tokens ?? estimatedInputTokens,
+                    output_tokens: upstream.usage?.completion_tokens ?? 0,
                 },
-            }
-
-
-
-
-
-
-
-
-
+            };
+            await appendUsageRecord(buildUsageRecord({
+                id: anthropicResponse.id,
+                status: "success",
+                model: targetModel,
+                inputTokens: anthropicResponse.usage.input_tokens,
+                outputTokens: anthropicResponse.usage.output_tokens,
+                latencyMs: Date.now() - startedAt,
+                stopReason: anthropicResponse.stop_reason,
+                pricing,
+            }));
+            if (!payload.stream) {
+                sendJson(response, 200, anthropicResponse);
+                return;
+            }
+            response.writeHead(200, {
+                "Cache-Control": "no-cache, no-transform",
+                Connection: "keep-alive",
+                "Content-Type": "text/event-stream",
+            });
+            writeSse(response, "message_start", {
+                type: "message_start",
+                message: {
+                    ...anthropicResponse,
+                    content: [],
+                    stop_reason: null,
+                    usage: {
+                        input_tokens: anthropicResponse.usage.input_tokens,
+                        output_tokens: 0,
                     },
-            }
-
+                },
+            });
+            mappedContent.forEach((block, index) => {
+                if (block.type === "text") {
+                    writeSse(response, "content_block_start", {
+                        type: "content_block_start",
+                        index,
+                        content_block: {
+                            type: "text",
+                            text: "",
+                        },
+                    });
+                    for (const chunk of chunkText(block.text)) {
+                        writeSse(response, "content_block_delta", {
+                            type: "content_block_delta",
+                            index,
+                            delta: {
+                                type: "text_delta",
+                                text: chunk,
+                            },
+                        });
+                    }
+                    writeSse(response, "content_block_stop", {
+                        type: "content_block_stop",
+                        index,
+                    });
+                    return;
+                }
+                if (block.type === "tool_use") {
+                    writeSse(response, "content_block_start", {
+                        type: "content_block_start",
+                        index,
+                        content_block: {
+                            type: "tool_use",
+                            id: block.id,
+                            name: block.name,
+                            input: {},
+                        },
+                    });
                     writeSse(response, "content_block_delta", {
                         type: "content_block_delta",
                         index,
                         delta: {
-                            type: "
-
+                            type: "input_json_delta",
+                            partial_json: JSON.stringify(block.input ?? {}),
                         },
                     });
+                    writeSse(response, "content_block_stop", {
+                        type: "content_block_stop",
+                        index,
+                    });
                 }
-
-
-
-
-
-
-
-
-
-
-
-
-
-
-
-
-
-
-
-
-
-
-
-
-
-
-
-
-
-
-        }
-        writeSse(response, "message_delta", {
-            type: "message_delta",
-            delta: {
-                stop_reason: anthropicResponse.stop_reason,
-                stop_sequence: null,
-            },
-            usage: {
-                output_tokens: anthropicResponse.usage.output_tokens,
-            },
-        });
-        writeSse(response, "message_stop", {
-            type: "message_stop",
-        });
-        response.end();
-        return;
+            });
+            writeSse(response, "message_delta", {
+                type: "message_delta",
+                delta: {
+                    stop_reason: anthropicResponse.stop_reason,
+                    stop_sequence: null,
+                },
+                usage: {
+                    output_tokens: anthropicResponse.usage.output_tokens,
+                },
+            });
+            writeSse(response, "message_stop", {
+                type: "message_stop",
+            });
+            response.end();
+            return;
+        }
+        catch (error) {
+            const message = error instanceof Error ? error.message : String(error);
+            await appendUsageRecord(buildUsageRecord({
+                id: `err_${randomUUID()}`,
+                status: "error",
+                model: targetModel,
+                inputTokens: estimatedInputTokens,
+                outputTokens: 0,
+                latencyMs: Date.now() - startedAt,
+                error: message,
+                pricing,
+            }));
+            throw error;
+        }
     }
     sendAnthropicError(response, 404, "not_found_error", `Unsupported route: ${request.method || "GET"} ${url.pathname}`);
 }
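
In this version the handler still awaits the complete NVIDIA response; when the client sent `stream: true`, it then replays the finished message as Anthropic-style server-sent events in a fixed order. The sketch below illustrates that ordering and is not the package's code: it collects `[event, data]` pairs in an array instead of calling `writeSse` on an HTTP response, and `chunkTextSketch` is a hypothetical fixed-size splitter standing in for `chunkText`, whose behavior is not shown in this diff.

```javascript
// Hypothetical stand-in for the package's chunkText helper: fixed-size slices.
const chunkTextSketch = (text, size = 16) => {
  const chunks = [];
  for (let i = 0; i < text.length; i += size) {
    chunks.push(text.slice(i, i + size));
  }
  return chunks;
};

// Replays a complete (non-streamed) Anthropic message as an ordered list of SSE events.
const replayAsSse = (message) => {
  const events = [];
  const emit = (event, data) => events.push([event, data]);

  // message_start carries the envelope with empty content and zero output tokens.
  emit("message_start", {
    type: "message_start",
    message: {
      ...message,
      content: [],
      stop_reason: null,
      usage: { input_tokens: message.usage.input_tokens, output_tokens: 0 },
    },
  });

  message.content.forEach((block, index) => {
    if (block.type === "text") {
      emit("content_block_start", {
        type: "content_block_start",
        index,
        content_block: { type: "text", text: "" },
      });
      for (const chunk of chunkTextSketch(block.text)) {
        emit("content_block_delta", {
          type: "content_block_delta",
          index,
          delta: { type: "text_delta", text: chunk },
        });
      }
      emit("content_block_stop", { type: "content_block_stop", index });
    } else if (block.type === "tool_use") {
      emit("content_block_start", {
        type: "content_block_start",
        index,
        content_block: { type: "tool_use", id: block.id, name: block.name, input: {} },
      });
      // The whole tool input is sent as a single input_json_delta.
      emit("content_block_delta", {
        type: "content_block_delta",
        index,
        delta: { type: "input_json_delta", partial_json: JSON.stringify(block.input ?? {}) },
      });
      emit("content_block_stop", { type: "content_block_stop", index });
    }
  });

  // Final stop reason and output token count arrive last.
  emit("message_delta", {
    type: "message_delta",
    delta: { stop_reason: message.stop_reason, stop_sequence: null },
    usage: { output_tokens: message.usage.output_tokens },
  });
  emit("message_stop", { type: "message_stop" });
  return events;
};

// Illustrative message: one short text block followed by one tool call.
const events = replayAsSse({
  id: "msg_example",
  type: "message",
  role: "assistant",
  model: "example-model",
  content: [
    { type: "text", text: "Listing files." },
    { type: "tool_use", id: "toolu_1", name: "ls", input: { path: "." } },
  ],
  stop_reason: "tool_use",
  stop_sequence: null,
  usage: { input_tokens: 12, output_tokens: 5 },
});

console.log(events.map(([name]) => name).join("\n"));
```

Because the tool input goes out in one `input_json_delta` holding the whole serialized object, a client never sees a partially built tool call; the trade-off is that nothing reaches the client until the full upstream response has arrived.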

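The rewritten handler appends a `success` usage record after the upstream call returns, and an `error` record (estimated input tokens, zero output tokens) if anything in the `try` block throws, before rethrowing. A simplified, synchronous sketch of that pattern follows; `withUsageLogging`, the in-memory `log` array, and the injectable `now` clock are hypothetical, chosen so the example runs without a file system or network.

```javascript
// Hypothetical wrapper (not part of nvicode): records one entry on success,
// or one entry on failure before rethrowing. Synchronous for brevity.
const withUsageLogging = ({ log, now = Date.now, model, estimatedInputTokens }, call) => {
  const startedAt = now();
  try {
    const upstream = call();
    // `??` keeps a reported 0; only a missing count falls back.
    const inputTokens = upstream.usage?.prompt_tokens ?? estimatedInputTokens;
    const outputTokens = upstream.usage?.completion_tokens ?? 0;
    log.push({ status: "success", model, inputTokens, outputTokens, latencyMs: now() - startedAt });
    return upstream;
  } catch (error) {
    const message = error instanceof Error ? error.message : String(error);
    log.push({
      status: "error",
      model,
      inputTokens: estimatedInputTokens,
      outputTokens: 0,
      latencyMs: now() - startedAt,
      error: message,
    });
    throw error; // the caller still sees the original failure
  }
};

// Fake clock that advances 250 ms per reading, so latencies are predictable.
let clock = 0;
const fakeNow = () => (clock += 250);
const log = [];
const options = { log, now: fakeNow, model: "example-model", estimatedInputTokens: 40 };

// Upstream reports completion tokens but no prompt tokens, so the estimate is used for input.
withUsageLogging(options, () => ({ usage: { completion_tokens: 7 } }));

try {
  withUsageLogging(options, () => {
    throw new Error("upstream 429");
  });
} catch {
  // Expected: the wrapper rethrows, and the failure is already in `log`.
}

console.log(log);
```

Using `??` rather than `||` means a provider-reported count of `0` is kept instead of being replaced by the estimate. Since the success record is written inside the `try`, an exception thrown later in that block could also produce an `error` record for the same request.
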
package/dist/usage.js

ADDED

@@ -0,0 +1,120 @@
+import { promises as fs } from "node:fs";
+import { getNvicodePaths } from "./config.js";
+const OPUS_4_6_INPUT_USD_PER_MTOK = 5;
+const OPUS_4_6_OUTPUT_USD_PER_MTOK = 25;
+const OPUS_4_6_PRICING_SOURCE = "https://www.anthropic.com/claude/opus";
+const OPUS_4_6_PRICING_UPDATED_AT = "2026-03-30";
+const getEnvUsdRate = (name, fallback) => {
+    const raw = process.env[name];
+    if (raw === undefined || raw === null || raw.trim() === "") {
+        return fallback;
+    }
+    const parsed = Number(raw);
+    if (!Number.isFinite(parsed) || parsed < 0) {
+        return fallback;
+    }
+    return parsed;
+};
+export const getPricingSnapshot = () => ({
+    providerInputUsdPerMTok: getEnvUsdRate("NVICODE_INPUT_USD_PER_MTOK", 0),
+    providerOutputUsdPerMTok: getEnvUsdRate("NVICODE_OUTPUT_USD_PER_MTOK", 0),
+    compareModel: "Claude Opus 4.6",
+    compareInputUsdPerMTok: OPUS_4_6_INPUT_USD_PER_MTOK,
+    compareOutputUsdPerMTok: OPUS_4_6_OUTPUT_USD_PER_MTOK,
+    comparePricingSource: OPUS_4_6_PRICING_SOURCE,
+    comparePricingUpdatedAt: OPUS_4_6_PRICING_UPDATED_AT,
+});
+export const estimateCostUsd = (inputTokens, outputTokens, inputUsdPerMTok, outputUsdPerMTok) => (inputTokens / 1_000_000) * inputUsdPerMTok +
+    (outputTokens / 1_000_000) * outputUsdPerMTok;
+export const buildUsageRecord = ({ id, timestamp = new Date().toISOString(), status, model, inputTokens, outputTokens, latencyMs, stopReason, error, pricing = getPricingSnapshot(), }) => {
+    const providerCostUsd = estimateCostUsd(inputTokens, outputTokens, pricing.providerInputUsdPerMTok, pricing.providerOutputUsdPerMTok);
+    const compareCostUsd = estimateCostUsd(inputTokens, outputTokens, pricing.compareInputUsdPerMTok, pricing.compareOutputUsdPerMTok);
+    return {
+        id,
+        timestamp,
+        status,
+        model,
+        inputTokens,
+        outputTokens,
+        latencyMs,
+        providerCostUsd,
+        compareCostUsd,
+        savingsUsd: compareCostUsd - providerCostUsd,
+        stopReason: stopReason ?? null,
+        ...(error ? { error } : {}),
+        pricing,
+    };
+};
+export const appendUsageRecord = async (record) => {
+    const paths = getNvicodePaths();
+    await fs.mkdir(paths.stateDir, { recursive: true });
+    await fs.appendFile(paths.usageLogFile, `${JSON.stringify(record)}\n`, "utf8");
+};
+export const readUsageRecords = async () => {
+    const paths = getNvicodePaths();
+    try {
+        const raw = await fs.readFile(paths.usageLogFile, "utf8");
+        return raw
+            .split("\n")
+            .map((line) => line.trim())
+            .filter(Boolean)
+            .map((line) => JSON.parse(line))
+            .filter((record) => typeof record.timestamp === "string")
+            .sort((left, right) => right.timestamp.localeCompare(left.timestamp));
+    }
+    catch (error) {
+        if (error.code === "ENOENT") {
+            return [];
+        }
+        throw error;
+    }
+};
+export const summarizeUsage = (records) => records.reduce((summary, record) => {
+    summary.requests += 1;
+    summary.successes += record.status === "success" ? 1 : 0;
+    summary.errors += record.status === "error" ? 1 : 0;
+    summary.inputTokens += record.inputTokens;
+    summary.outputTokens += record.outputTokens;
+    summary.providerCostUsd += record.providerCostUsd;
+    summary.compareCostUsd += record.compareCostUsd;
+    summary.savingsUsd += record.savingsUsd;
+    return summary;
+}, {
+    requests: 0,
+    successes: 0,
+    errors: 0,
+    inputTokens: 0,
+    outputTokens: 0,
+    providerCostUsd: 0,
+    compareCostUsd: 0,
+    savingsUsd: 0,
+});
+export const filterRecordsSince = (records, sinceMs) => records.filter((record) => {
+    const timestamp = Date.parse(record.timestamp);
+    return !Number.isNaN(timestamp) && timestamp >= sinceMs;
+});
+const integerFormatter = new Intl.NumberFormat("en-US");
+const moneyFormatter = new Intl.NumberFormat("en-US", {
+    style: "currency",
+    currency: "USD",
+    minimumFractionDigits: 4,
+    maximumFractionDigits: 4,
+});
+export const formatInteger = (value) => integerFormatter.format(Math.round(value));
+export const formatUsd = (value) => moneyFormatter.format(value);
+export const formatDuration = (ms) => {
+    if (ms < 1_000) {
+        return `${ms}ms`;
+    }
+    if (ms < 60_000) {
+        return `${(ms / 1_000).toFixed(1)}s`;
+    }
+    return `${(ms / 60_000).toFixed(1)}m`;
+};
+export const formatTimestamp = (value) => {
+    const timestamp = new Date(value);
+    if (Number.isNaN(timestamp.getTime())) {
+        return value;
+    }
+    return timestamp.toISOString().replace("T", " ").slice(0, 19);
+};
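
`estimateCostUsd` prices token counts per million tokens (MTok). Because `NVICODE_INPUT_USD_PER_MTOK` and `NVICODE_OUTPUT_USD_PER_MTOK` default to `0`, the provider cost is `0` unless those variables are set, and `savingsUsd` then equals the full comparison cost at the Claude Opus 4.6 rates hard-coded in `usage.js` ($5 input, $25 output per MTok). A worked example with illustrative token counts, re-declaring the same formula so it runs on its own:

```javascript
// Same formula as estimateCostUsd in usage.js: rates are USD per million tokens.
const estimateCostUsd = (inputTokens, outputTokens, inputUsdPerMTok, outputUsdPerMTok) =>
  (inputTokens / 1_000_000) * inputUsdPerMTok + (outputTokens / 1_000_000) * outputUsdPerMTok;

// Illustrative counts, not real usage: 1,000,000 prompt tokens and 100,000 completion tokens.
const inputTokens = 1_000_000;
const outputTokens = 100_000;

// Provider rates fall back to 0 when the NVICODE_*_USD_PER_MTOK variables are unset.
const providerCostUsd = estimateCostUsd(inputTokens, outputTokens, 0, 0);
// Comparison rates taken from the OPUS_4_6_* constants in usage.js.
const compareCostUsd = estimateCostUsd(inputTokens, outputTokens, 5, 25);
const savingsUsd = compareCostUsd - providerCostUsd;

// 1 MTok x $5 + 0.1 MTok x $25 = $5 + $2.50 = $7.50
console.log({ providerCostUsd, compareCostUsd, savingsUsd });
```

With four fixed fraction digits, `formatUsd` would render this comparison cost as `$7.5000`.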

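`getEnvUsdRate` falls back to the default when the variable is unset, blank, non-numeric, infinite, or negative. The sketch below reproduces that logic but takes the environment as a plain object instead of reading `process.env` (an assumption made so the cases can be checked without touching the real environment); the rate values are illustrative only.

```javascript
// Mirrors getEnvUsdRate from usage.js, with the environment passed in explicitly.
const getUsdRate = (env, name, fallback) => {
  const raw = env[name];
  if (raw === undefined || raw === null || raw.trim() === "") {
    return fallback; // unset or blank
  }
  const parsed = Number(raw);
  if (!Number.isFinite(parsed) || parsed < 0) {
    return fallback; // e.g. "abc" (NaN), "Infinity", or a negative rate
  }
  return parsed;
};

// Illustrative values only.
const env = {
  NVICODE_INPUT_USD_PER_MTOK: "0.35",
  NVICODE_OUTPUT_USD_PER_MTOK: "-1",
};

console.log(getUsdRate(env, "NVICODE_INPUT_USD_PER_MTOK", 0)); // 0.35
console.log(getUsdRate(env, "NVICODE_OUTPUT_USD_PER_MTOK", 0)); // 0 (negative rejected)
```

Falling back silently, rather than failing, keeps a mistyped rate from breaking the proxy, at the cost of quietly reporting a provider cost of zero.
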
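
Usage records are stored one JSON object per line (JSONL) in `paths.usageLogFile`; `readUsageRecords` parses them newest-first, and `filterRecordsSince` plus `summarizeUsage` aggregate a time window. The sketch below runs the same steps on an in-memory string with made-up records and a fixed "now", so none of the numbers reflect real usage. `summarize` here is a trimmed copy of `summarizeUsage` that omits the provider and comparison cost totals for brevity.

```javascript
// Made-up JSONL in the shape written by appendUsageRecord (fields trimmed for brevity).
const raw = [
  '{"timestamp":"2026-03-30T10:00:00.000Z","status":"success","inputTokens":1000,"outputTokens":200,"savingsUsd":0.01}',
  '{"timestamp":"2026-03-28T09:00:00.000Z","status":"success","inputTokens":5000,"outputTokens":800,"savingsUsd":0.045}',
  '{"timestamp":"2026-03-30T11:30:00.000Z","status":"error","inputTokens":300,"outputTokens":0,"savingsUsd":0.0015}',
  "",
].join("\n");

// Same parsing steps as readUsageRecords: split, trim, drop blanks, parse, sort newest-first.
const records = raw
  .split("\n")
  .map((line) => line.trim())
  .filter(Boolean)
  .map((line) => JSON.parse(line))
  .filter((record) => typeof record.timestamp === "string")
  .sort((left, right) => right.timestamp.localeCompare(left.timestamp));

// Same window filter as filterRecordsSince.
const filterRecordsSince = (list, sinceMs) => list.filter((record) => {
  const timestamp = Date.parse(record.timestamp);
  return !Number.isNaN(timestamp) && timestamp >= sinceMs;
});

// Trimmed copy of summarizeUsage (cost totals other than savings omitted).
const summarize = (list) => list.reduce((summary, record) => {
  summary.requests += 1;
  summary.successes += record.status === "success" ? 1 : 0;
  summary.errors += record.status === "error" ? 1 : 0;
  summary.inputTokens += record.inputTokens;
  summary.outputTokens += record.outputTokens;
  summary.savingsUsd += record.savingsUsd;
  return summary;
}, { requests: 0, successes: 0, errors: 0, inputTokens: 0, outputTokens: 0, savingsUsd: 0 });

// "Last 24 hours" relative to a fixed, illustrative now.
const now = Date.parse("2026-03-30T12:00:00.000Z");
const lastDay = summarize(filterRecordsSince(records, now - 24 * 60 * 60 * 1000));

console.log(records.map((record) => record.timestamp)); // newest first
console.log(lastDay);
```

Sorting with `localeCompare` works here because every timestamp comes from `toISOString()` and therefore shares one fixed-width format.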