llm-usage-metrics 0.3.1 → 0.3.3

- package/README.md +174 -236

- package/dist/index.js +1576 -363

- package/dist/index.js.map +1 -1

- package/package.json +6 -4

package/README.md

CHANGED

|

@@ -1,341 +1,279 @@

|

|

|

1

|

-

|

|

2

|

-

|

|

3

|

-

[](https://deepwiki.com/ayagmar/llm-usage-metrics)

|

|

4

|

-

[](https://github.com/ayagmar/llm-usage-metrics/actions/workflows/ci.yml)

|

|

5

|

-

[](https://codecov.io/gh/ayagmar/llm-usage-metrics)

|

|

6

|

-

[](https://www.npmjs.com/package/llm-usage-metrics)

|

|

7

|

-

[](https://www.npmjs.com/package/llm-usage-metrics)

|

|

8

|

-

[](https://packagephobia.com/result?p=llm-usage-metrics)

|

|

1

|

+

<div align="center">

|

|

9

2

|

|

|

10

|

-

|

|

3

|

+

<img src="https://ayagmar.github.io/llm-usage-metrics/favicon.svg" width="64" height="64" alt="llm-usage-metrics logo">

|

|

11

4

|

|

|

12

|

-

-

|

|

13

|

-

- `~/.codex/sessions/**/*.jsonl`

|

|

14

|

-

- OpenCode SQLite DB (auto-discovered or provided via `--opencode-db`)

|

|

15

|

-

|

|

16

|

-

Reports are available for daily, weekly (Monday-start), and monthly periods.
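To make the "Monday-start" weekly period concrete, here is a minimal TypeScript sketch that maps a calendar date to the Monday opening its week. The function name `weekStartMonday` is hypothetical, and the sketch works on UTC dates only; how the tool itself buckets events (including `--timezone` handling) is not shown in this README and may differ.

```typescript
// Hypothetical helper: returns the ISO date (YYYY-MM-DD) of the Monday
// that starts the week containing `isoDate`. UTC only; timezones are ignored.
function weekStartMonday(isoDate: string): string {
  const date = new Date(`${isoDate}T00:00:00Z`);
  if (Number.isNaN(date.getTime())) {
    throw new Error(`Invalid date: ${isoDate}`);
  }
  // getUTCDay(): 0 = Sunday ... 6 = Saturday. Shift so Monday = 0 ... Sunday = 6.
  const daysSinceMonday = (date.getUTCDay() + 6) % 7;
  date.setUTCDate(date.getUTCDate() - daysSinceMonday);
  return date.toISOString().slice(0, 10);
}
```

With this convention a Sunday belongs to the week that began six days earlier, so `2026-03-01` (a Sunday) and `2026-02-24` (a Tuesday) both map to `2026-02-23`.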

|

|

17

|

-

|

|

18

|

-

**Documentation: [ayagmar.github.io/llm-usage-metrics](https://ayagmar.github.io/llm-usage-metrics/)**

|

|

5

|

+

# llm-usage-metrics

|

|

19

6

|

|

|

20

|

-

|

|

7

|

+

**Track and analyze your local LLM usage across coding agents**

|

|

21

8

|

|

|

22

|

-

|

|

9

|

+

[](https://deepwiki.com/ayagmar/llm-usage-metrics)

|

|

10

|

+

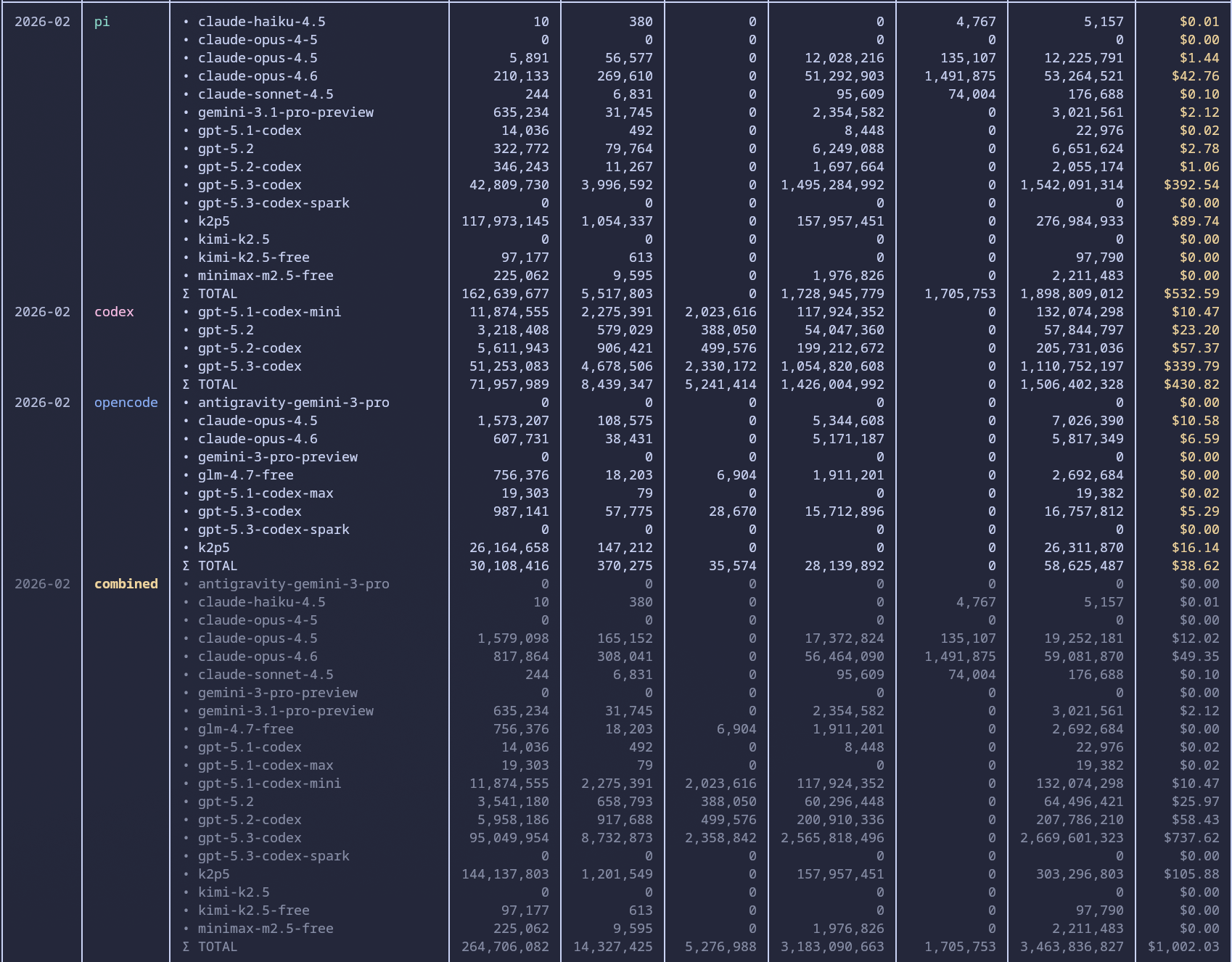

[](https://www.npmjs.com/package/llm-usage-metrics)

|

|

11

|

+

[](https://www.npmjs.com/package/llm-usage-metrics)

|

|

12

|

+

[](https://github.com/ayagmar/llm-usage-metrics/actions/workflows/ci.yml)

|

|

13

|

+

[](https://codecov.io/gh/ayagmar/llm-usage-metrics)

|

|

23

14

|

|

|

24

|

-

|

|

25

|

-

|

|

26

|

-

|

|

15

|

+

[📖 Documentation](https://ayagmar.github.io/llm-usage-metrics/) ·

|

|

16

|

+

[⚡ Quick Start](#quick-start) ·

|

|

17

|

+

[📊 Examples](#usage) ·

|

|

18

|

+

[🤝 Contributing](./CONTRIBUTING.md)

|

|

27

19

|

|

|

28

|

-

|

|

20

|

+

</div>

|

|

29

21

|

|

|

30

|

-

|

|

31

|

-

npx --yes llm-usage-metrics daily

|

|

32

|

-

```

|

|

22

|

+

---

|

|

33

23

|

|

|

34

|

-

|

|

24

|

+

Aggregate token usage and costs from your local coding agent sessions. Supports **pi**, **codex**, and **OpenCode** with zero configuration required.

|

|

35

25

|

|

|

36

|

-

|

|

26

|

+

## ✨ Features

|

|

37

27

|

|

|

38

|

-

-

|

|

39

|

-

-

|

|

40

|

-

-

|

|

28

|

+

- **Zero-Config Discovery** — Automatically finds `.pi`, `.codex`, and OpenCode session data

|

|

29

|

+

- **LiteLLM Pricing** — Real-time pricing sync with offline caching support

|

|

30

|

+

- **Flexible Reports** — Daily, weekly, and monthly aggregations

|

|

31

|

+

- **Efficiency Reports** — Correlate cost/tokens with repository commit outcomes

|

|

32

|

+

- **Multiple Outputs** — Terminal tables, JSON, or Markdown

|

|

33

|

+

- **Smart Filtering** — By source, provider, model, and date ranges

|

|

41

34

|

|

|

42

|

-

##

|

|

35

|

+

## 🚀 Quick Start

|

|

43

36

|

|

|

44

|

-

|

|

45

|

-

|

|

46

|

-

|

|

47

|

-

|

|

48

|

-

- uses a local cache (`<platform-cache-root>/llm-usage-metrics/update-check.json`; defaults to `~/.cache/llm-usage-metrics/update-check.json` on Linux when `XDG_CACHE_HOME` is unset) with a 1-hour default TTL

|

|

49

|

-

- optional session-scoped cache mode via `LLM_USAGE_UPDATE_CACHE_SCOPE=session`

|

|

50

|

-

- skips checks for `--help` / `--version` invocations

|

|

51

|

-

- skips checks when run through `npx`

|

|

52

|

-

- prompts for install + restart only in interactive TTY sessions

|

|

53

|

-

- prints a one-line notice in non-interactive sessions

|

|

37

|

+

```bash

|

|

38

|

+

# Install globally

|

|

39

|

+

npm install -g llm-usage-metrics

|

|

54

40

|

|

|

55

|

-

|

|

41

|

+

# Or run without installing

|

|

42

|

+

npx llm-usage-metrics daily

|

|

56

43

|

|

|

57

|

-

|

|

58

|

-

|

|

44

|

+

# Generate your first report

|

|

45

|

+

llm-usage daily

|

|

59

46

|

```

|

|

60

47

|

|

|

61

|

-

|

|

48

|

+

<div align="center">

|

|

62

49

|

|

|

63

|

-

|

|

50

|

+

|

|

64

51

|

|

|

65

|

-

|

|

66

|

-

- `LLM_USAGE_UPDATE_CACHE_SCOPE`: cache scope for update checks (`global` default, `session` to scope by terminal shell session)

|

|

67

|

-

- `LLM_USAGE_UPDATE_CACHE_SESSION_KEY`: optional custom session key when `LLM_USAGE_UPDATE_CACHE_SCOPE=session` (defaults to parent shell PID)

|

|

68

|

-

- `LLM_USAGE_UPDATE_CACHE_TTL_MS`: update-check cache TTL in milliseconds (clamped: `0..2592000000`; use `0` to check on every CLI run)

|

|

69

|

-

- `LLM_USAGE_UPDATE_FETCH_TIMEOUT_MS`: update-check network timeout in milliseconds (clamped: `200..30000`)

|

|

70

|

-

- `LLM_USAGE_PRICING_CACHE_TTL_MS`: pricing cache TTL in milliseconds (clamped: `60000..2592000000`)

|

|

71

|

-

- `LLM_USAGE_PRICING_FETCH_TIMEOUT_MS`: pricing fetch timeout in milliseconds (clamped: `200..30000`)

|

|

72

|

-

- `LLM_USAGE_PARSE_MAX_PARALLEL`: max concurrent file parses per source adapter (clamped: `1..64`)

|

|

73

|

-

- `LLM_USAGE_PARSE_CACHE_ENABLED`: enable file parse cache (`1` by default; accepts `1/0`, `true/false`, `yes/no`)

|

|

74

|

-

- `LLM_USAGE_PARSE_CACHE_TTL_MS`: file parse cache TTL in milliseconds (clamped: `3600000..2592000000`)

|

|

75

|

-

- `LLM_USAGE_PARSE_CACHE_MAX_ENTRIES`: max cached file parse entries (clamped: `100..20000`)

|

|

76

|

-

- `LLM_USAGE_PARSE_CACHE_MAX_BYTES`: max parse-cache file size in bytes (clamped: `1048576..536870912`)
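The "clamped" ranges above can be read as follows: a value outside the range is pulled to the nearest bound rather than rejected. The TypeScript sketch below illustrates that reading. The helper names, the `Env` type, the fallback handling for missing or non-numeric values, and the case-insensitive boolean parsing are all assumptions for illustration, not the package's actual code.

```typescript
type Env = Record<string, string | undefined>;

// Hypothetical helper: read an integer setting and clamp it into [min, max].
// Missing or non-numeric values fall back to `fallback` (an assumption).
function readClampedInt(env: Env, name: string, min: number, max: number, fallback: number): number {
  const raw = env[name];
  const parsed = raw === undefined ? Number.NaN : Number.parseInt(raw, 10);
  const value = Number.isNaN(parsed) ? fallback : parsed;
  return Math.min(max, Math.max(min, value));
}

// Hypothetical helper: accept the 1/0, true/false, yes/no spellings.
// Unrecognised or missing values fall back to `fallback`.
function readBoolean(env: Env, name: string, fallback: boolean): boolean {
  const raw = env[name]?.trim().toLowerCase();
  if (raw === "1" || raw === "true" || raw === "yes") return true;
  if (raw === "0" || raw === "false" || raw === "no") return false;
  return fallback;
}
```

For example, under these assumptions `LLM_USAGE_PARSE_MAX_PARALLEL=128` would be treated as `64`, and `LLM_USAGE_PARSE_MAX_PARALLEL=0` as `1`.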

|

|

52

|

+

</div>

|

|

77

53

|

|

|

78

|

-

|

|

54

|

+

## 📋 Supported Sources

|

|

79

55

|

|

|

80

|

-

|

|

56

|

+

| Source | Pattern | Discovery |

|

|

57

|

+

| ------------ | --------------------------------- | -------------------------------- |

|

|

58

|

+

| **pi** | `~/.pi/agent/sessions/**/*.jsonl` | Automatic |

|

|

59

|

+

| **codex** | `~/.codex/sessions/**/*.jsonl` | Automatic |

|

|

60

|

+

| **OpenCode** | `~/.opencode/opencode.db` | Auto or explicit `--opencode-db` |

|

|

81

61

|

|

|

82

|

-

|

|

62

|

+

OpenCode source support requires a Node.js 24+ runtime with the built-in `node:sqlite` module.

|

|

83

63

|

|

|

84

|

-

|

|

85

|

-

LLM_USAGE_PARSE_MAX_PARALLEL=16 LLM_USAGE_PRICING_FETCH_TIMEOUT_MS=8000 llm-usage monthly

|

|

86

|

-

```

|

|

64

|

+

## 🎯 Usage

|

|

87

65

|

|

|

88

|

-

|

|

89

|

-

|

|

90

|

-

### Daily report (default terminal table)

|

|

66

|

+

### Basic Reports

|

|

91

67

|

|

|

92

68

|

```bash

|

|

69

|

+

# Daily report (default terminal table)

|

|

93

70

|

llm-usage daily

|

|

94

|

-

```

|

|

95

71

|

|

|

96

|

-

|

|

97

|

-

|

|

98

|

-

```bash

|

|

72

|

+

# Weekly with timezone

|

|

99

73

|

llm-usage weekly --timezone Europe/Paris

|

|

100

|

-

```

|

|

101

74

|

|

|

102

|

-

|

|

103

|

-

|

|

104

|

-

```bash

|

|

75

|

+

# Monthly date range

|

|

105

76

|

llm-usage monthly --since 2026-01-01 --until 2026-01-31

|

|

106

77

|

```

|

|

107

78

|

|

|

108

|

-

###

|

|

109

|

-

|

|

110

|

-

```bash

|

|

111

|

-

llm-usage daily --markdown

|

|

112

|

-

```

|

|

113

|

-

|

|

114

|

-

### JSON output

|

|

79

|

+

### Output Formats

|

|

115

80

|

|

|

116

81

|

```bash

|

|

82

|

+

# JSON for pipelines

|

|

117

83

|

llm-usage daily --json

|

|

118

|

-

```

|

|

119

84

|

|

|

120

|

-

|

|

85

|

+

# Markdown for documentation

|

|

86

|

+

llm-usage daily --markdown

|

|

121

87

|

|

|

122

|

-

|

|

123

|

-

llm-usage monthly --

|

|

88

|

+

# Detailed per-model breakdown

|

|

89

|

+

llm-usage monthly --per-model-columns

|

|

124

90

|

```

|

|

125

91

|

|

|

126

|

-

###

|

|

92

|

+

### Efficiency Reports

|

|

127

93

|

|

|

128

94

|

```bash

|

|

129

|

-

|

|

130

|

-

|

|

131

|

-

|

|

132

|

-

Pricing behavior notes:

|

|

133

|

-

|

|

134

|

-

- LiteLLM is the active pricing source.

|

|

135

|

-

- explicit `costUsd: 0` events are re-priced from LiteLLM when model pricing is available.

|

|

136

|

-

- if all contributing events in a row have unresolved cost, the row `Cost` is rendered as `-`.

|

|

137

|

-

- if only part of a row cost is known, the row `Cost` is rendered as `~$...` to mark incomplete pricing.

|

|

138

|

-

- when pricing cannot be loaded from LiteLLM (or cache in offline mode), report generation fails fast.

|

|

95

|

+

# Daily efficiency in current repository

|

|

96

|

+

llm-usage efficiency daily

|

|

139

97

|

|

|

140

|

-

|

|

98

|

+

# Weekly efficiency for a specific repository path

|

|

99

|

+

llm-usage efficiency weekly --repo-dir /path/to/repo

|

|

141

100

|

|

|

142

|

-

|

|

143

|

-

llm-usage

|

|

101

|

+

# Include merge commits and export JSON

|

|

102

|

+

llm-usage efficiency monthly --include-merge-commits --json

|

|

144

103

|

```

|

|

145

104

|

|

|

146

|

-

|

|

105

|

+

Efficiency reports are repo-attributed: usage events are mapped to a Git repository root using source metadata (`cwd`/path info), and only events attributed to the selected repo are included in efficiency totals.
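One way to picture repo attribution is a path-prefix check on each event's recorded working directory. The TypeScript sketch below assumes POSIX paths and an already-resolved repository root; the `UsageEvent` shape and all function names are hypothetical, and the tool's real attribution logic (for example, how it resolves the Git root) may work differently.

```typescript
interface UsageEvent {
  cwd?: string;        // working directory recorded by the source, if any
  totalTokens: number;
}

// Hypothetical helper: drop trailing slashes from a POSIX path, keeping "/" intact.
function stripTrailingSlash(path: string): string {
  const trimmed = path.replace(/\/+$/, "");
  return trimmed === "" ? "/" : trimmed;
}

// True when `cwd` is the repository root itself or a directory below it.
function isInsideRepo(cwd: string, repoRoot: string): boolean {
  const dir = stripTrailingSlash(cwd);
  const root = stripTrailingSlash(repoRoot);
  return root === "/" || dir === root || dir.startsWith(`${root}/`);
}

// Keep only events that can be attributed to the selected repository.
// Events without a recorded `cwd` cannot be attributed and are dropped.
function attributeToRepo(events: UsageEvent[], repoRoot: string): UsageEvent[] {
  return events.filter((e) => e.cwd !== undefined && isInsideRepo(e.cwd, repoRoot));
}
```

Appending `/` before the prefix comparison keeps a sibling directory such as `/home/u/repo-other` from being counted as part of `/home/u/repo`.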

|

|

147

106

|

|

|

148

|

-

|

|

149

|

-

llm-usage daily --source-dir pi=/path/to/pi/sessions --source-dir codex=/path/to/codex/sessions

|

|

150

|

-

```

|

|

107

|

+

#### Reading efficiency output

|

|

151

108

|

|

|

152

|

-

|

|

109

|

+

- `Commits`, `+Lines`, `-Lines`, `ΔLines` come from local Git shortstat outcomes (for your configured Git author).

|

|

110

|

+

- `Input`, `Output`, `Reasoning`, `Cache Read`, `Cache Write`, `Total`, and `Cost` come from repo-attributed usage events.

|

|

111

|

+

- `All Tokens/Commit` uses `Total / Commits` and includes cache read/write tokens.

|

|

112

|

+

- `Non-Cache/Commit` uses `(Input + Output + Reasoning) / Commits` and excludes cache read/write tokens.

|

|

113

|

+

- `$/Commit` uses `Cost / Commits`.

|

|

114

|

+

- `$/1k Lines` uses `Cost / (ΔLines / 1000)`.

|

|

115

|

+

- `Commits/$` uses `Commits / Cost` (shown only when `Cost > 0`).

|

|

153

116

|

|

|

154

|

-

|

|

155

|

-

|

|

156

|

-

|

|

117

|

+

Efficiency period rows are emitted only when both Git outcomes and repo-attributed usage events exist for that period.

|

|

118

|

+

When a denominator is zero, derived values in emitted rows render as `-`.

|

|

119

|

+

When pricing is incomplete, terminal/markdown output prefixes affected USD metrics with `~`.

|

|

157

120

|

|

|

158

|

-

|

|

121

|

+

For source-by-source comparisons, run the same report per source:

|

|

159

122

|

|

|

160

123

|

```bash

|

|

161

|

-

llm-usage

|

|

124

|

+

llm-usage efficiency monthly --repo-dir /path/to/repo --source pi

|

|

125

|

+

llm-usage efficiency monthly --repo-dir /path/to/repo --source codex

|

|

126

|

+

llm-usage efficiency monthly --repo-dir /path/to/repo --source opencode

|

|

162

127

|

```

|

|

163

128

|

|

|

164

|

-

|

|

165

|

-

|

|

166

|

-

1. explicit `--opencode-db`

|

|

167

|

-

2. deterministic OS-specific default path candidates

|

|

129

|

+

Note: usage filters (`--source`, `--provider`, `--model`, `--pi-dir`, `--codex-dir`, `--opencode-db`, `--source-dir`) also constrain commit attribution: only commit days with matching repo-attributed usage events are counted.
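The derived columns described under "Reading efficiency output" can be sketched in TypeScript as below. The `EfficiencyRow` shape, the function names, and the two-decimal USD formatting are assumptions for illustration; only the formulas, the `-` rendering for zero denominators, and the `~` prefix for incomplete pricing come from the README text.

```typescript
interface EfficiencyRow {
  commits: number;
  deltaLines: number;   // ΔLines
  input: number;
  output: number;
  reasoning: number;
  total: number;        // includes cache read/write tokens
  costUsd: number;
}

// Divide, returning undefined when the denominator is zero so the caller can render "-".
function ratio(numerator: number, denominator: number): number | undefined {
  return denominator === 0 ? undefined : numerator / denominator;
}

function deriveEfficiency(row: EfficiencyRow) {
  return {
    allTokensPerCommit: ratio(row.total, row.commits),
    nonCachePerCommit: ratio(row.input + row.output + row.reasoning, row.commits),
    usdPerCommit: ratio(row.costUsd, row.commits),
    usdPer1kLines: ratio(row.costUsd, row.deltaLines / 1000),
    // Shown only when Cost > 0.
    commitsPerUsd: row.costUsd > 0 ? row.commits / row.costUsd : undefined,
  };
}

// Render a USD value: "-" when undefined, "~" prefix when pricing is incomplete.
function formatUsd(value: number | undefined, pricingIncomplete: boolean): string {
  if (value === undefined) return "-";
  return `${pricingIncomplete ? "~" : ""}$${value.toFixed(2)}`;
}
```

As a worked example, a row with 4 commits, 2,000 changed lines, 10,000 total tokens, and a cost of $2 gives 2,500 tokens per commit, $0.50 per commit, and $1.00 per 1k lines.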

|

|

168

130

|

|

|

169

|

-

|

|

131

|

+

### Filtering

|

|

170

132

|

|

|

171

133

|

```bash

|

|

172

|

-

|

|

173

|

-

|

|

174

|

-

|

|

175

|

-

OpenCode safety notes:

|

|

176

|

-

|

|

177

|

-

- OpenCode DB is opened in read-only mode

|

|

178

|

-

- unreadable/missing explicit paths fail fast with actionable errors

|

|

179

|

-

- OpenCode CLI is optional for troubleshooting and not required for runtime parsing

|

|

180

|

-

|

|

181

|

-

### Filter by source (`--source`)

|

|

182

|

-

|

|

183

|

-

Use `--source` to limit reports to one or more source ids.

|

|

184

|

-

|

|

185

|

-

Supported source ids:

|

|

134

|

+

# By source

|

|

135

|

+

llm-usage monthly --source pi,codex

|

|

186

136

|

|

|

187

|

-

|

|

188

|

-

-

|

|

189

|

-

- `opencode`

|

|

137

|

+

# By provider

|

|

138

|

+

llm-usage monthly --provider openai

|

|

190

139

|

|

|

191

|

-

|

|

140

|

+

# By model

|

|

141

|

+

llm-usage monthly --model claude

|

|

192

142

|

|

|

193

|

-

|

|

194

|

-

-

|

|

195

|

-

|

|

143

|

+

# Combined filters

|

|

144

|

+

llm-usage monthly --source opencode --provider openai --model gpt-4.1

|

|

145

|

+

```

|

|

196

146

|

|

|

197

|

-

|

|

147

|

+

### Custom Paths

|

|

198

148

|

|

|

199

149

|

```bash

|

|

200

|

-

#

|

|

201

|

-

llm-usage

|

|

150

|

+

# Custom directories

|

|

151

|

+

llm-usage daily --source-dir pi=/path/to/pi --source-dir codex=/path/to/codex

|

|

202

152

|

|

|

203

|

-

#

|

|

204

|

-

llm-usage

|

|

153

|

+

# Explicit OpenCode database

|

|

154

|

+

llm-usage daily --opencode-db /path/to/opencode.db

|

|

155

|

+

```

|

|

205

156

|

|

|

206

|

-

|

|

207

|

-

llm-usage monthly --source opencode

|

|

157

|

+

### Offline Mode

|

|

208

158

|

|

|

209

|

-

|

|

210

|

-

|

|

211

|

-

llm-usage monthly --

|

|

159

|

+

```bash

|

|

160

|

+

# Use cached pricing only

|

|

161

|

+

llm-usage monthly --pricing-offline

|

|

212

162

|

|

|

213

|

-

#

|

|

214

|

-

llm-usage monthly --

|

|

163

|

+

# Continue even if pricing fetch fails

|

|

164

|

+

llm-usage monthly --ignore-pricing-failures

|

|

215

165

|

```

|

|

216

166

|

|

|

217

|

-

|

|

218

|

-

|

|

219

|

-

Use `--provider` to keep only events whose provider contains the filter text.
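The "contains the filter text" rule amounts to a substring match. A minimal TypeScript sketch follows; the `ProviderEvent` shape and the function name are hypothetical, and treating the comparison as case-insensitive is an assumption, since the README states case-insensitivity only for `--model`.

```typescript
interface ProviderEvent {
  provider: string;
}

// Keep events whose provider contains `filterText`.
// Case-insensitive comparison is an assumption of this sketch.
function filterByProvider<T extends ProviderEvent>(events: T[], filterText: string): T[] {
  const needle = filterText.trim().toLowerCase();
  if (needle === "") return events; // no filter given: keep everything
  return events.filter((e) => e.provider.toLowerCase().includes(needle));
}
```

Because the match is a substring test, a filter of `openai` would also keep any provider id that merely contains `openai`.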

|

|

167

|

+

## 🧪 Production Benchmarks

|

|

220

168

|

|

|

221

|

-

|

|

169

|

+

Benchmarked on **February 24, 2026** on a local production machine:

|

|

222

170

|

|

|

223

|

-

-

|

|

224

|

-

-

|

|

225

|

-

-

|

|

171

|

+

- OS: CachyOS (Linux 6.19.2-2-cachyos)

|

|

172

|

+

- CPU: Intel Core Ultra 9 185H (22 logical CPUs)

|

|

173

|

+

- RAM: 62 GiB

|

|

174

|

+

- Storage: NVMe SSD

|

|

226

175

|

|

|

227

|

-

|

|

176

|

+

Compared commands:

|

|

228

177

|

|

|

229

178

|

```bash

|

|

230

|

-

|

|

179

|

+

ccusage-codex monthly

|

|

231

180

|

llm-usage monthly --provider openai

|

|

232

|

-

|

|

233

|

-

# GitHub Models providers

|

|

234

|

-

llm-usage monthly --provider github

|

|

235

|

-

|

|

236

|

-

# source + provider together

|

|

237

|

-

llm-usage monthly --source codex --provider openai

|

|

238

181

|

```

|

|

239

182

|

|

|

240

|

-

|

|

241

|

-

|

|

242

|

-

`--model` supports repeatable and comma-separated filters. Matching is case-insensitive.

|

|

243

|

-

|

|

244

|

-

Per filter value:

|

|

183

|

+

Timed benchmark summary (5 runs per scenario):

|

|

245

184

|

|

|

246

|

-

|

|

247

|

-

|

|

185

|

+

| Tool | Cache mode | Median (s) | Mean (s) |

|

|

186

|

+

| ------------------------------------------------------- | ---------- | ---------: | -------: |

|

|

187

|

+

| `ccusage-codex monthly` | no cache | 14.247 | 14.456 |

|

|

188

|

+

| `ccusage-codex monthly --offline` | with cache | 14.043 | 14.268 |

|

|

189

|

+

| `llm-usage monthly --provider openai` | no cache | 4.192 | 4.196 |

|

|

190

|

+

| `llm-usage monthly --provider openai --pricing-offline` | with cache | 0.793 | 0.784 |

|

|

248

191

|

|

|

249

|

-

|

|

192

|

+

On this dataset and machine (ratios computed from the median times):

|

|

250

193

|

|

|

251

|

-

|

|

252

|

-

|

|

253

|

-

llm-usage

|

|

194

|

+

- `llm-usage` is `3.40x` faster than `ccusage-codex` in no-cache mode.

|

|

195

|

+

- `llm-usage` is `17.71x` faster than `ccusage-codex` in cached mode.

|

|

196

|

+

- `llm-usage` improves `5.29x` with cache; `ccusage-codex` improves `1.01x`.
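The factors above can be reproduced from the median column of the benchmark table. The TypeScript sketch below does that arithmetic; the variable and function names are illustrative only, and the rounding to two decimals is an assumption that happens to match the published figures.

```typescript
// Median wall-clock times in seconds, copied from the benchmark table.
const medians = {
  ccusageNoCache: 14.247,
  ccusageCached: 14.043,
  llmUsageNoCache: 4.192,
  llmUsageCached: 0.793,
};

// Speed-up factor of `fast` relative to `slow`, rounded to two decimals.
function speedup(slow: number, fast: number): number {
  return Math.round((slow / fast) * 100) / 100;
}

const noCacheSpeedup = speedup(medians.ccusageNoCache, medians.llmUsageNoCache);    // 3.4
const cachedSpeedup = speedup(medians.ccusageCached, medians.llmUsageCached);       // 17.71
const llmUsageCacheGain = speedup(medians.llmUsageNoCache, medians.llmUsageCached); // 5.29
const ccusageCacheGain = speedup(medians.ccusageNoCache, medians.ccusageCached);    // 1.01
```

Using the mean column instead would give slightly different factors, so the published numbers appear to be median-based.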

|

|

254

197

|

|

|

255

|

-

|

|

256

|

-

llm-usage monthly --model claude-sonnet-4.5

|

|

198

|

+

Full methodology, cache-mode definition, and scope caveats are documented in the Astro docs: [Benchmarks](https://ayagmar.github.io/llm-usage-metrics/benchmarks/).

|

|

257

199

|

|

|

258

|

-

|

|

259

|

-

llm-usage monthly --model claude --model gpt-5

|

|

260

|

-

llm-usage monthly --model claude,gpt-5

|

|

200

|

+

Re-run benchmark locally:

|

|

261

201

|

|

|

262

|

-

|

|

263

|

-

|

|

202

|

+

```bash

|

|

203

|

+

pnpm run perf:production-benchmark -- --runs 5

|

|

264

204

|

```

|

|

265

205

|

|

|

266

|

-

|

|

267

|

-

|

|

268

|

-

Default output is compact (model names only in the Models column).

|

|

269

|

-

|

|

270

|

-

Use `--per-model-columns` to render per-model multiline metrics in each numeric column:

|

|

206

|

+

Generate machine-readable artifacts:

|

|

271

207

|

|

|

272

208

|

```bash

|

|

273

|

-

|

|

274

|

-

|

|

209

|

+

pnpm run perf:production-benchmark -- \

|

|

210

|

+

--runs 5 \

|

|

211

|

+

--json-output ./tmp/production-benchmark.json \

|

|

212

|

+

--markdown-output ./tmp/production-benchmark.md

|

|

275

213

|

```

|

|

276

214

|

|

|

277

|

-

##

|

|

278

|

-

|

|

279

|

-

### Terminal UI

|

|

215

|

+

## ⚙️ Configuration

|

|

280

216

|

|

|

281

|

-

|

|

217

|

+

### Environment Variables

|

|

282

218

|

|

|

283

|

-

|

|

284

|

-

|

|

285

|

-

|

|

286

|

-

|

|

287

|

-

|

|

288

|

-

|

|

289

|

-

- **Models displayed as bullet points** for better readability

|

|

290

|

-

- **Rounded table borders** and improved color scheme

|

|

219

|

+

| Variable | Description |

|

|

220

|

+

| -------------------------------- | --------------------------------- |

|

|

221

|

+

| `LLM_USAGE_SKIP_UPDATE_CHECK` | Skip update check (`1`) |

|

|

222

|

+

| `LLM_USAGE_PRICING_CACHE_TTL_MS` | Pricing cache duration |

|

|

223

|

+

| `LLM_USAGE_PARSE_MAX_PARALLEL` | Max parallel file parses (`1-64`) |

|

|

224

|

+

| `LLM_USAGE_PARSE_CACHE_ENABLED` | Enable parse cache (`1/0`) |

|

|

291

225

|

|

|

292

|

-

|

|

226

|

+

See the full environment variable reference in the [documentation](https://ayagmar.github.io/llm-usage-metrics/configuration/).

|

|

293

227

|

|

|

294

|

-

|

|

295

|

-

ℹ Found 12 session file(s) with 45 event(s)

|

|

296

|

-

• pi: 8 file(s), 32 events

|

|

297

|

-

• codex: 4 file(s), 13 events

|

|

298

|

-

ℹ Loaded pricing from cache

|

|

228

|

+

### Update Checks

|

|

299

229

|

|

|

300

|

-

|

|

301

|

-

│ Monthly Token Usage Report │

|

|

302

|

-

└────────────────────────────┘

|

|

230

|

+

The CLI performs lightweight update checks with smart defaults:

|

|

303

231

|

|

|

304

|

-

|

|

305

|

-

|

|

306

|

-

|

|

307

|

-

│ Feb 2026 │ pi │ • gpt-5.2 │

|

|

308

|

-

│ │ │ • gpt-5.2-codex │

|

|

309

|

-

╰────────────┴──────────┴──────────────────────╯

|

|

310

|

-

```

|

|

311

|

-

|

|

312

|

-

### Report structure

|

|

313

|

-

|

|

314

|

-

Each report includes:

|

|

232

|

+

- 1-hour cache TTL

|

|

233

|

+

- Skipped for `--help`, `--version`, and `npx` runs

|

|

234

|

+

- Prompts only in interactive TTY sessions

|

|

315

235

|

|

|

316

|

-

|

|

317

|

-

- a per-period combined subtotal row (only when multiple sources exist in that period)

|

|

318

|

-

- a final grand total row across all periods

|

|

236

|

+

Disable with:

|

|

319

237

|

|

|

320

|

-

|

|

321

|

-

|

|

322

|

-

|

|

323

|

-

- Source

|

|

324

|

-

- Models

|

|

325

|

-

- Input

|

|

326

|

-

- Output

|

|

327

|

-

- Reasoning

|

|

328

|

-

- Cache Read

|

|

329

|

-

- Cache Write

|

|

330

|

-

- Total

|

|

331

|

-

- Cost

|

|

238

|

+

```bash

|

|

239

|

+

LLM_USAGE_SKIP_UPDATE_CHECK=1 llm-usage daily

|

|

240

|

+

```
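The update-check rules in this section can be summarised as one decision function. The TypeScript sketch below is illustrative only: the `UpdateCheckContext` shape and the function name are hypothetical, and how the CLI actually detects an `npx` run or stores its cache timestamp is not described here.

```typescript
interface UpdateCheckContext {
  args: string[];            // CLI arguments
  skipEnvValue?: string;     // value of LLM_USAGE_SKIP_UPDATE_CHECK, if set
  runViaNpx: boolean;        // detection of npx runs is outside this sketch
  lastCheckedAtMs?: number;  // timestamp of the last cached check, if any
  nowMs: number;             // current time in milliseconds
  ttlMs?: number;            // cache TTL; defaults to one hour
}

const ONE_HOUR_MS = 60 * 60 * 1000;

function shouldCheckForUpdate(ctx: UpdateCheckContext): boolean {
  if (ctx.skipEnvValue === "1") return false;
  if (ctx.args.includes("--help") || ctx.args.includes("--version")) return false;
  if (ctx.runViaNpx) return false;
  const ttlMs = ctx.ttlMs ?? ONE_HOUR_MS;
  if (ctx.lastCheckedAtMs !== undefined && ctx.nowMs - ctx.lastCheckedAtMs < ttlMs) {
    return false; // cached result is still fresh
  }
  return true;
}
```

With a TTL of `0` the freshness test never passes, so every run that is not otherwise skipped performs a check.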

|

|

332

241

|

|

|

333

|

-

## Development

|

|

242

|

+

## 🛠️ Development

|

|

334

243

|

|

|

335

244

|

```bash

|

|

245

|

+

# Install dependencies

|

|

336

246

|

pnpm install

|

|

247

|

+

|

|

248

|

+

# Run quality checks

|

|

337

249

|

pnpm run lint

|

|

338

250

|

pnpm run typecheck

|

|

339

251

|

pnpm run test

|

|

340

252

|

pnpm run format:check

|

|

253

|

+

|

|

254

|

+

# Build

|

|

255

|

+

pnpm run build

|

|

256

|

+

|

|

257

|

+

# Run locally

|

|

258

|

+

pnpm cli daily

|

|

341

259

|

```

|

|

260

|

+

|

|

261

|

+

## 📚 Documentation

|

|

262

|

+

|

|

263

|

+

- **[Getting Started](https://ayagmar.github.io/llm-usage-metrics/getting-started/)** — Installation and first steps

|

|

264

|

+

- **[CLI Reference](https://ayagmar.github.io/llm-usage-metrics/cli-reference/)** — Complete command reference

|

|

265

|

+

- **[Efficiency](https://ayagmar.github.io/llm-usage-metrics/efficiency/)** — Efficiency report semantics and interpretation

|

|

266

|

+

- **[Data Sources](https://ayagmar.github.io/llm-usage-metrics/sources/)** — Source configuration

|

|

267

|

+

- **[Configuration](https://ayagmar.github.io/llm-usage-metrics/configuration/)** — Environment variables

|

|

268

|

+

- **[Benchmarks](https://ayagmar.github.io/llm-usage-metrics/benchmarks/)** — Production benchmark methodology and results

|

|

269

|

+

- **[Architecture](https://ayagmar.github.io/llm-usage-metrics/architecture/)** — Technical overview

|

|

270

|

+

|

|

271

|

+

## 🤝 Contributing

|

|

272

|

+

|

|

273

|

+

Contributions are welcome! See [CONTRIBUTING.md](./CONTRIBUTING.md) for guidelines.

|

|

274

|

+

|

|

275

|

+

The codebase is structured to add more sources through the `SourceAdapter` pattern.

|

|

276

|

+

|

|

277

|

+

## 📄 License

|

|

278

|

+

|

|

279

|

+

MIT © [Abdeslam Yagmar](https://github.com/ayagmar)

|