aicommit2 2.0.5 → 2.0.7

- package/README.md +110 -84

- package/dist/cli.mjs +53 -53

- package/package.json +3 -2

package/README.md

CHANGED

|

@@ -42,6 +42,7 @@ _aicommit2_ is a reactive CLI tool that automatically generates Git commit messa

|

|

|

42

42

|

- [Cohere](https://cohere.com/)

|

|

43

43

|

- [Groq](https://groq.com/)

|

|

44

44

|

- [Perplexity](https://docs.perplexity.ai/)

|

|

45

|

+

- [DeepSeek](https://www.deepseek.com/)

|

|

45

46

|

- [Huggingface **(Unofficial)**](https://huggingface.co/chat/)

|

|

46

47

|

|

|

47

48

|

### Local

|

|

@@ -216,20 +217,21 @@ model[]=codestral

|

|

|

216

217

|

The following settings can be applied to most models, but support may vary.

|

|

217

218

|

Please check the documentation for each specific model to confirm which settings are supported.

|

|

218

219

|

|

|

219

|

-

| Setting | Description | Default

|

|

220

|

-

|

|

221

|

-

| `systemPrompt` | System Prompt text | -

|

|

222

|

-

| `systemPromptPath` | Path to system prompt file | -

|

|

223

|

-

| `exclude` | Files to exclude from AI analysis | -

|

|

224

|

-

| `

|

|

225

|

-

| `

|

|

226

|

-

| `

|

|

227

|

-

| `

|

|

228

|

-

| `

|

|

229

|

-

| `

|

|

230

|

-

| `

|

|

231

|

-

| `

|

|

232

|

-

| `

|

|

220

|

+

| Setting | Description | Default |

|

|

221

|

+

|--------------------|---------------------------------------------------------------------|---------------|

|

|

222

|

+

| `systemPrompt` | System Prompt text | - |

|

|

223

|

+

| `systemPromptPath` | Path to system prompt file | - |

|

|

224

|

+

| `exclude` | Files to exclude from AI analysis | - |

|

|

225

|

+

| `type` | Type of commit message to generate | conventional |

|

|

226

|

+

| `locale` | Locale for the generated commit messages | en |

|

|

227

|

+

| `generate` | Number of commit messages to generate | 1 |

|

|

228

|

+

| `logging` | Enable logging | true |

|

|

229

|

+

| `ignoreBody` | Whether to omit the body from the commit message | true |

|

|

230

|

+

| `maxLength` | Maximum character length of the Subject of generated commit message | 50 |

|

|

231

|

+

| `timeout` | Request timeout (milliseconds) | 10000 |

|

|

232

|

+

| `temperature` | Model's creativity (0.0 - 2.0) | 0.7 |

|

|

233

|

+

| `maxTokens` | Maximum number of tokens to generate | 1024 |

|

|

234

|

+

| `topP` | Nucleus sampling | 1 |

|

|

233

235

|

|

|

234

236

|

> 👉 **Tip:** To set the General Settings for each model, use the following command.

|

|

235

237

|

> ```shell

|

|

@@ -266,35 +268,17 @@ aicommit2 config set exclude="*.ts,*.json"

|

|

|

266

268

|

```

|

|

267

269

|

|

|

268

270

|

> NOTE: The `exclude` option cannot be set per model. It is **only** supported in General Settings.

|

|

269

|

-

|

|

270

|

-

##### timeout

|

|

271

|

-

|

|

272

|

-

The timeout for network requests in milliseconds.

|

|

273

|

-

|

|

274

|

-

Default: `10_000` (10 seconds)

|

|

275

|

-

|

|

276

|

-

```sh

|

|

277

|

-

aicommit2 config set timeout=20000 # 20s

|

|

278

|

-

```

|

|

279

|

-

|

|

280

|

-

##### temperature

|

|

281

|

-

|

|

282

|

-

The temperature (0.0-2.0) is used to control the randomness of the output

|

|

283

|

-

|

|

284

|

-

Default: `0.7`

|

|

285

271

|

|

|

286

|

-

|

|

287

|

-

aicommit2 config set temperature=0.3

|

|

288

|

-

```

|

|

272

|

+

##### type

|

|

289

273

|

|

|

290

|

-

|

|

274

|

+

Default: `conventional`

|

|

291

275

|

|

|

292

|

-

|

|

276

|

+

Supported: `conventional`, `gitmoji`

|

|

293

277

|

|

|

294

|

-

|

|

278

|

+

The type of commit message to generate. Set this to "conventional" to generate commit messages that follow the Conventional Commits specification:

|

|

295

279

|

|

|

296

280

|

```sh

|

|

297

|

-

aicommit2 config set

|

|

281

|

+

aicommit2 config set type="conventional"

|

|

298

282

|

```

|

|

299

283

|

|

|

300

284

|

##### locale

|

|

@@ -319,18 +303,40 @@ Note, this will use more tokens as it generates more results.

|

|

|

319

303

|

aicommit2 config set generate=2

|

|

320

304

|

```

|

|

321

305

|

|

|

322

|

-

#####

|

|

306

|

+

##### logging

|

|

323

307

|

|

|

324

|

-

Default: `

|

|

308

|

+

Default: `true`

|

|

325

309

|

|

|

326

|

-

|

|

310

|

+

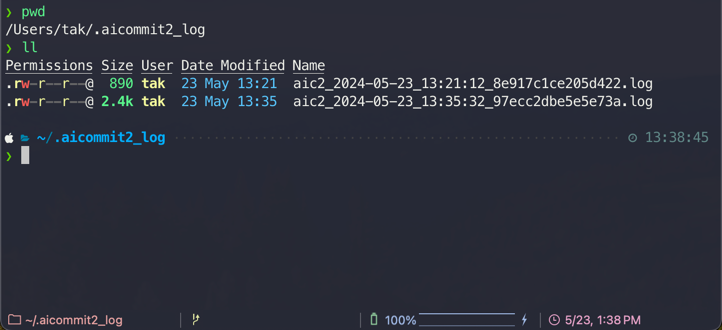

Option that allows users to decide whether to generate a log file capturing the responses.

|

|

311

|

+

The log files are stored in the `~/.aicommit2_log` directory (in the user's home directory).

|

|

327

312

|

|

|

328

|

-

|

|

313

|

+

|

|

314

|

+

|

|

315

|

+

- You can remove all logs with the command below.

|

|

329

316

|

|

|

330

317

|

```sh

|

|

331

|

-

aicommit2

|

|

318

|

+

aicommit2 log removeAll

|

|

319

|

+

```

|

|

320

|

+

|

|

321

|

+

##### ignoreBody

|

|

322

|

+

|

|

323

|

+

Default: `true`

|

|

324

|

+

|

|

325

|

+

This option determines whether the body is omitted from the commit message. To include a body in the message, set it to `false`.

|

|

326

|

+

|

|

327

|

+

```sh

|

|

328

|

+

aicommit2 config set ignoreBody="false"

|

|

332

329

|

```

|

|

333

330

|

|

|

331

|

+

|

|

332

|

+

|

|

333

|

+

|

|

334

|

+

```sh

|

|

335

|

+

aicommit2 config set ignoreBody="true"

|

|

336

|

+

```

|

|

337

|

+

|

|

338

|

+

|

|

339

|

+

|

|

334

340

|

##### maxLength

|

|

335

341

|

|

|

336

342

|

The maximum character length of the Subject of generated commit message

|

|

@@ -341,39 +347,63 @@ Default: `50`

|

|

|

341

347

|

aicommit2 config set maxLength=100

|

|

342

348

|

```

|

|

343

349

|

|

|

344

|

-

#####

|

|

350

|

+

##### timeout

|

|

345

351

|

|

|

346

|

-

|

|

352

|

+

The timeout for network requests in milliseconds.

|

|

347

353

|

|

|

348

|

-

|

|

349

|

-

The log files will be stored in the `~/.aicommit2_log` directory(user's home).

|

|

354

|

+

Default: `10_000` (10 seconds)

|

|

350

355

|

|

|

351

|

-

|

|

356

|

+

```sh

|

|

357

|

+

aicommit2 config set timeout=20000 # 20s

|

|

358

|

+

```

|

|

352

359

|

|

|

353

|

-

|

|

360

|

+

##### temperature

|

|

361

|

+

|

|

362

|

+

The temperature (0.0-2.0) is used to control the randomness of the output

|

|

363

|

+

|

|

364

|

+

Default: `0.7`

|

|

354

365

|

|

|

355

366

|

```sh

|

|

356

|

-

aicommit2

|

|

367

|

+

aicommit2 config set temperature=0.3

|

|

357

368

|

```

|

|

358

369

|

|

|

359

|

-

#####

|

|

370

|

+

##### maxTokens

|

|

360

371

|

|

|

361

|

-

|

|

372

|

+

The maximum number of tokens that the AI models can generate.

|

|

362

373

|

|

|

363

|

-

|

|

374

|

+

Default: `1024`

|

|

364

375

|

|

|

365

376

|

```sh

|

|

366

|

-

aicommit2 config set

|

|

377

|

+

aicommit2 config set maxTokens=3000

|

|

367

378

|

```

|

|

368

379

|

|

|

369

|

-

|

|

380

|

+

##### topP

|

|

381

|

+

|

|

382

|

+

Default: `1`

|

|

370

383

|

|

|

384

|

+

Nucleus sampling, where the model considers the results of the tokens with top_p probability mass.

|

|

371

385

|

|

|

372

386

|

```sh

|

|

373

|

-

aicommit2 config set

|

|

387

|

+

aicommit2 config set topP=0.2

|

|

374

388

|

```
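To make the `topP` description more concrete, here is a minimal sketch of how nucleus sampling narrows the candidate set before a token is drawn. It is not part of aicommit2: the `nucleus` function name and the token probabilities are made up for illustration, and the input is assumed to be sorted from most to least likely.

```sh
# Hypothetical helper, not part of aicommit2: print the tokens that form the
# nucleus for a given top_p. Arguments after top_p are token=probability
# pairs, assumed to be sorted from most to least likely.
nucleus() {
  top_p=$1
  shift
  printf '%s\n' "$@" | awk -F= -v p="$top_p" '
    { print $1; sum += $2 }   # keep the token and add its probability
    sum >= p { exit }         # stop once the kept mass reaches top_p
  '
}

# With a low top_p only the most likely token stays in the candidate set.
nucleus 0.2 the=0.5 a=0.3 an=0.15 this=0.05
```

With `topP=0.2` the sketch keeps only `the`; raising the value toward `1` lets progressively less likely tokens into the set, which is why lower values tend to give more predictable commit messages.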

|

|

375

389

|

|

|

376

|

-

|

|

390

|

+

## Available General Settings by Model

|

|

391

|

+

| | timeout | temperature | maxTokens | topP |

|

|

392

|

+

|:--------------------:|:-------:|:-----------:|:---------:|:----:|

|

|

393

|

+

| **OpenAI** | ✓ | ✓ | ✓ | ✓ |

|

|

394

|

+

| **Anthropic Claude** | | ✓ | ✓ | |

|

|

395

|

+

| **Gemini** | | ✓ | ✓ | |

|

|

396

|

+

| **Mistral AI** | ✓ | ✓ | ✓ | ✓ |

|

|

397

|

+

| **Codestral** | ✓ | ✓ | ✓ | ✓ |

|

|

398

|

+

| **Cohere** | | ✓ | ✓ | |

|

|

399

|

+

| **Groq** | ✓ | ✓ | ✓ | ✓ |

|

|

400

|

+

| **Perplexity** | ✓ | ✓ | ✓ | ✓ |

|

|

401

|

+

| **DeepSeek** | ✓ | ✓ | ✓ | ✓ |

|

|

402

|

+

| **Huggingface** | | | | |

|

|

403

|

+

| **Ollama** | ✓ | ✓ | | |

|

|

404

|

+

|

|

405

|

+

> All AI providers support the following options in General Settings.

|

|

406

|

+

> - systemPrompt, systemPromptPath, exclude, type, locale, generate, logging, ignoreBody, maxLength
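
The support matrix above can also be expressed as a small lookup, which may be handy in a setup script that wants to skip settings a provider ignores. This is only a sketch: `supports_setting` is a hypothetical helper, not an aicommit2 command, and the upper-case provider names follow the `PROVIDER.setting` prefixes used elsewhere in the README (`HUGGINGFACE` is an assumed spelling).

```sh
# Hypothetical helper, not part of aicommit2.
# Returns 0 if the provider honors the setting, 1 if it does not,
# and 2 for a provider that is not in the table.
supports_setting() {
  provider=$1
  setting=$2
  case "$setting" in
    timeout|temperature|maxTokens|topP) ;;  # only these vary by provider
    *) return 0 ;;                          # the rest are supported by all
  esac
  case "$provider" in
    OPENAI|MISTRAL|CODESTRAL|GROQ|PERPLEXITY|DEEPSEEK)
      return 0 ;;
    ANTHROPIC|GEMINI|COHERE)
      case "$setting" in temperature|maxTokens) return 0 ;; esac
      return 1 ;;
    OLLAMA)
      case "$setting" in timeout|temperature) return 0 ;; esac
      return 1 ;;
    HUGGINGFACE)
      return 1 ;;
    *)
      return 2 ;;
  esac
}

# Example: report whether a timeout is worth setting for Gemini.
if supports_setting GEMINI timeout; then
  echo "GEMINI uses timeout"
else
  echo "GEMINI does not use timeout"
fi
```

Keeping the provider-dependent settings in one `case` statement means a change to the table only needs to be mirrored in one place.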

|

|

377

407

|

|

|

378

408

|

## Model-Specific Settings

|

|

379

409

|

|

|

@@ -388,7 +418,6 @@ aicommit2 config set ignoreBody="true"

|

|

|

388

418

|

| `url` | API endpoint URL | https://api.openai.com |

|

|

389

419

|

| `path` | API path | /v1/chat/completions |

|

|

390

420

|

| `proxy` | Proxy settings | - |

|

|

391

|

-

| `topP` | Nucleus sampling | 1 |

|

|

392

421

|

|

|

393

422

|

##### OPENAI.key

|

|

394

423

|

|

|

@@ -482,6 +511,7 @@ aicommit2 config set OLLAMA.timeout=<timeout>

|

|

|

482

511

|

Ollama does not support the following options in General Settings.

|

|

483

512

|

|

|

484

513

|

- maxTokens

|

|

514

|

+

- topP

|

|

485

515

|

|

|

486

516

|

### HuggingFace

|

|

487

517

|

|

|

@@ -524,6 +554,7 @@ Huggingface does not support the following options in General Settings.

|

|

|

524

554

|

- maxTokens

|

|

525

555

|

- timeout

|

|

526

556

|

- temperature

|

|

557

|

+

- topP

|

|

527

558

|

|

|

528

559

|

### Gemini

|

|

529

560

|

|

|

@@ -558,6 +589,7 @@ aicommit2 config set GEMINI.model="gemini-1.5-pro-exp-0801"

|

|

|

558

589

|

Gemini does not support the following options in General Settings.

|

|

559

590

|

|

|

560

591

|

- timeout

|

|

592

|

+

- topP

|

|

561

593

|

|

|

562

594

|

### Anthropic

|

|

563

595

|

|

|

@@ -589,6 +621,7 @@ aicommit2 config set ANTHROPIC.model="claude-3-5-sonnet-20240620"

|

|

|

589

621

|

Anthropic does not support the following options in General Settings.

|

|

590

622

|

|

|

591

623

|

- timeout

|

|

624

|

+

- topP

|

|

592

625

|

|

|

593

626

|

### Mistral

|

|

594

627

|

|

|

@@ -596,7 +629,6 @@ Anthropic does not support the following options in General Settings.

|

|

|

596

629

|

|----------|------------------|----------------|

|

|

597

630

|

| `key` | API key | - |

|

|

598

631

|

| `model` | Model to use | `mistral-tiny` |

|

|

599

|

-

| `topP` | Nucleus sampling | 1 |

|

|

600

632

|

|

|

601

633

|

##### MISTRAL.key

|

|

602

634

|

|

|

@@ -622,23 +654,12 @@ Supported:

|

|

|

622

654

|

- `mistral-large-2402`

|

|

623

655

|

- `mistral-embed`

|

|

624

656

|

|

|

625

|

-

##### MISTRAL.topP

|

|

626

|

-

|

|

627

|

-

Default: `1`

|

|

628

|

-

|

|

629

|

-

Nucleus sampling, where the model considers the results of the tokens with top_p probability mass.

|

|

630

|

-

|

|

631

|

-

```sh

|

|

632

|

-

aicommit2 config set MISTRAL.topP=0.2

|

|

633

|

-

```

|

|

634

|

-

|

|

635

657

|

### Codestral

|

|

636

658

|

|

|

637

659

|

| Setting | Description | Default |

|

|

638

660

|

|---------|------------------|--------------------|

|

|

639

661

|

| `key` | API key | - |

|

|

640

662

|

| `model` | Model to use | `codestral-latest` |

|

|

641

|

-

| `topP` | Nucleus sampling | 1 |

|

|

642

663

|

|

|

643

664

|

##### CODESTRAL.key

|

|

644

665

|

|

|

@@ -656,16 +677,6 @@ Supported:

|

|

|

656

677

|

aicommit2 config set CODESTRAL.model="codestral-2405"

|

|

657

678

|

```

|

|

658

679

|

|

|

659

|

-

##### CODESTRAL.topP

|

|

660

|

-

|

|

661

|

-

Default: `1`

|

|

662

|

-

|

|

663

|

-

Nucleus sampling, where the model considers the results of the tokens with top_p probability mass.

|

|

664

|

-

|

|

665

|

-

```sh

|

|

666

|

-

aicommit2 config set CODESTRAL.topP=0.1

|

|

667

|

-

```

|

|

668

|

-

|

|

669

680

|

#### Cohere

|

|

670

681

|

|

|

671

682

|

| Setting | Description | Default |

|

|

@@ -696,6 +707,7 @@ aicommit2 config set COHERE.model="command-nightly"

|

|

|

696

707

|

Cohere does not support the following options in General Settings.

|

|

697

708

|

|

|

698

709

|

- timeout

|

|

710

|

+

- topP

|

|

699

711

|

|

|

700

712

|

### Groq

|

|

701

713

|

|

|

@@ -721,6 +733,8 @@ Supported:

|

|

|

721

733

|

- `llama3-8b-8192`

|

|

722

734

|

- `llama3-groq-70b-8192-tool-use-preview`

|

|

723

735

|

- `llama3-groq-8b-8192-tool-use-preview`

|

|

736

|

+

- `llama-guard-3-8b`

|

|

737

|

+

- `mixtral-8x7b-32768`

|

|

724

738

|

|

|

725

739

|

```sh

|

|

726

740

|

aicommit2 config set GROQ.model="llama3-8b-8192"

|

|

@@ -732,7 +746,6 @@ aicommit2 config set GROQ.model="llama3-8b-8192"

|

|

|

732

746

|

|----------|------------------|-----------------------------------|

|

|

733

747

|

| `key` | API key | - |

|

|

734

748

|

| `model` | Model to use | `llama-3.1-sonar-small-128k-chat` |

|

|

735

|

-

| `topP` | Nucleus sampling | 1 |

|

|

736

749

|

|

|

737

750

|

##### PERPLEXITY.key

|

|

738

751

|

|

|

@@ -758,14 +771,27 @@ Supported:

|

|

|

758

771

|

aicommit2 config set PERPLEXITY.model="llama-3.1-70b"

|

|

759

772

|

```

|

|

760

773

|

|

|

761

|

-

|

|

774

|

+

### DeepSeek

|

|

762

775

|

|

|

763

|

-

Default

|

|

776

|

+

| Setting | Description | Default |

|

|

777

|

+

|---------|------------------|--------------------|

|

|

778

|

+

| `key` | API key | - |

|

|

779

|

+

| `model` | Model to use | `deepseek-coder` |

|

|

764

780

|

|

|

765

|

-

|

|

781

|

+

##### DEEPSEEK.key

|

|

782

|

+

|

|

783

|

+

The DeepSeek API key. If you don't have one, please sign up and subscribe on the [DeepSeek Platform](https://platform.deepseek.com/).

|

|

784

|

+

|

|

785

|

+

##### DEEPSEEK.model

|

|

786

|

+

|

|

787

|

+

Default: `deepseek-coder`

|

|

788

|

+

|

|

789

|

+

Supported:

|

|

790

|

+

- `deepseek-coder`

|

|

791

|

+

- `deepseek-chat`

|

|

766

792

|

|

|

767

793

|

```sh

|

|

768

|

-

aicommit2 config set

|

|

794

|

+

aicommit2 config set DEEPSEEK.model="deepseek-chat"

|

|

769

795

|

```
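Putting the two DeepSeek settings together, a setup step might look like the sketch below. `configure_deepseek` is a hypothetical wrapper, and passing `DEEPSEEK.key` to `config set` is an assumption based on the `DEEPSEEK.model` example and the other `PROVIDER.setting` commands in the README.

```sh
# Hypothetical wrapper, not part of aicommit2.
# $1: DeepSeek API key; $2: model name (optional).
configure_deepseek() {
  key=$1
  model=${2:-deepseek-coder}   # default model listed in the README
  # Assumed form: DEEPSEEK.key is set the same way as DEEPSEEK.model.
  aicommit2 config set DEEPSEEK.key="$key"
  aicommit2 config set DEEPSEEK.model="$model"
}

# Example call, reading the key from a hypothetical environment variable:
# configure_deepseek "$DEEPSEEK_API_KEY" deepseek-chat
```

Reading the key from the environment rather than typing it inline keeps it out of the shell history.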

|

|

770

796

|

|

|

771

797

|

## Upgrading

|