aicommit2 1.13.0 → 2.0.1


- package/README.md +388 -376

- package/dist/cli.mjs +93 -89

- package/package.json +4 -4

package/README.md

CHANGED

|

@@ -1,6 +1,6 @@

|

|

|

1

1

|

<div align="center">

|

|

2

2

|

<div>

|

|

3

|

-

<img src="https://github.com/tak-bro/aicommit2/blob/main/img/

|

|

3

|

+

<img src="https://github.com/tak-bro/aicommit2/blob/main/img/demo-min.gif?raw=true" alt="AICommit2"/>

|

|

4

4

|

<h1 align="center">AICommit2</h1>

|

|

5

5

|

</div>

|

|

6

6

|

<p>

|

|

@@ -25,11 +25,11 @@ _aicommit2_ is a reactive CLI tool that automatically generates Git commit messa

|

|

|

25

25

|

|

|

26

26

|

## Key Features

|

|

27

27

|

|

|

28

|

-

- **Multi-AI Support**: Integrates with OpenAI, Anthropic Claude, Google Gemini, Mistral AI, Cohere, Groq

|

|

28

|

+

- **Multi-AI Support**: Integrates with OpenAI, Anthropic Claude, Google Gemini, Mistral AI, Cohere, Groq and more.

|

|

29

29

|

- **Local Model Support**: Use local AI models via Ollama.

|

|

30

30

|

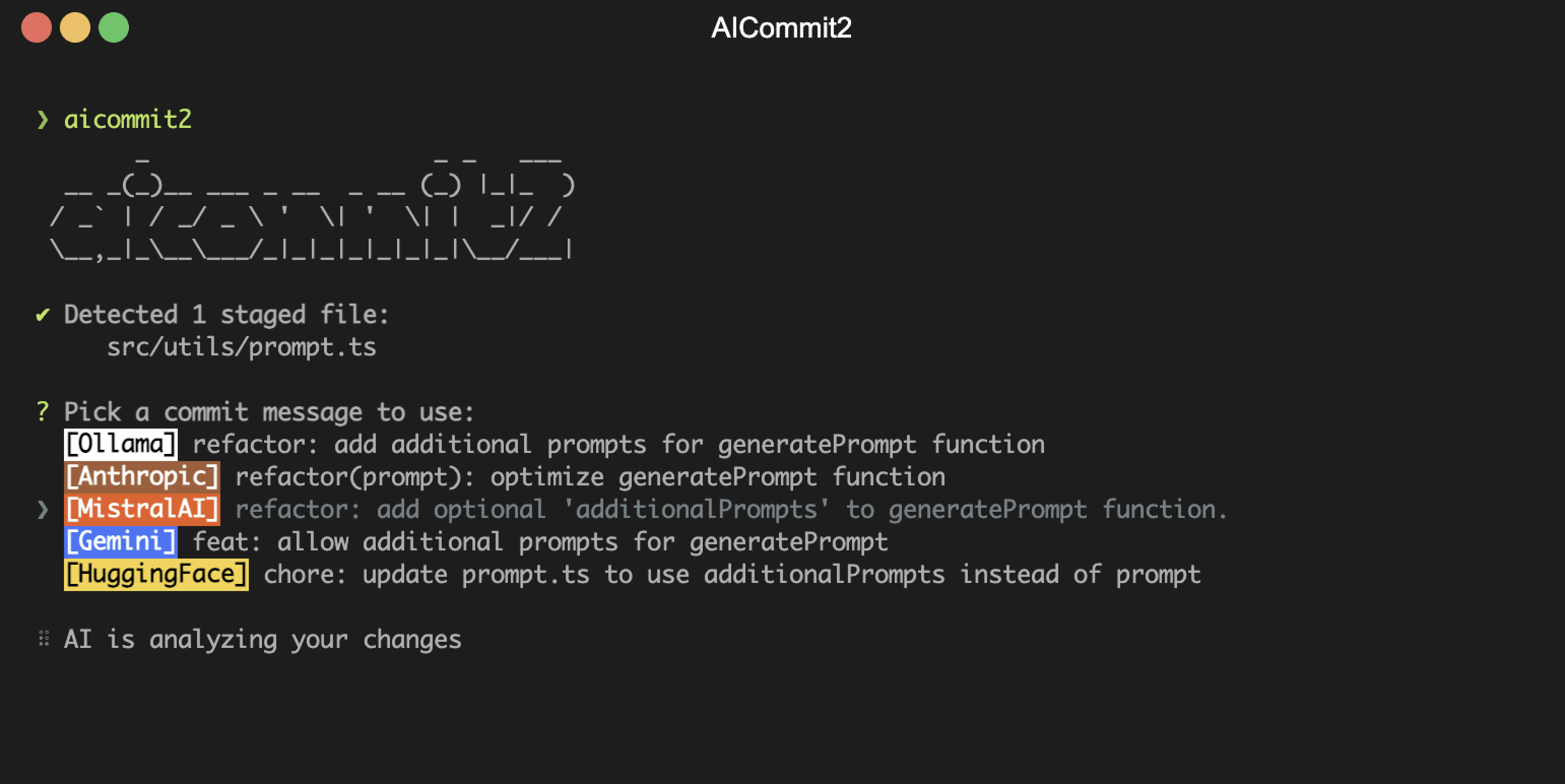

- **Reactive CLI**: Enables simultaneous requests to multiple AIs and selection of the best commit message.

|

|

31

31

|

- **Git Hook Integration**: Can be used as a prepare-commit-msg hook.

|

|

32

|

-

- **Custom

|

|

32

|

+

- **Custom Prompt**: Supports user-defined system prompt templates.

|

|

33

33

|

|

|

34

34

|

## Supported Providers

|

|

35

35

|

|

|

@@ -38,8 +38,7 @@ _aicommit2_ is a reactive CLI tool that automatically generates Git commit messa

|

|

|

38

38

|

- [OpenAI](https://openai.com/)

|

|

39

39

|

- [Anthropic Claude](https://console.anthropic.com/)

|

|

40

40

|

- [Gemini](https://gemini.google.com/)

|

|

41

|

-

- [Mistral AI](https://mistral.ai/)

|

|

42

|

-

- [Codestral **(Free till August 1, 2024)**](https://mistral.ai/news/codestral/)

|

|

41

|

+

- [Mistral AI](https://mistral.ai/) (including [Codestral](https://mistral.ai/news/codestral/))

|

|

43

42

|

- [Cohere](https://cohere.com/)

|

|

44

43

|

- [Groq](https://groq.com/)

|

|

45

44

|

- [Perplexity](https://docs.perplexity.ai/)

|

|

@@ -59,66 +58,22 @@ _aicommit2_ is a reactive CLI tool that automatically generates Git commit messa

|

|

|

59

58

|

npm install -g aicommit2

|

|

60

59

|

```

|

|

61

60

|

|

|

62

|

-

2.

|

|

61

|

+

2. Set up API keys (**at least ONE key must be set**):

|

|

63

62

|

|

|

64

|

-

It is not necessary to set all keys. **But at least one key must be set up.**

|

|

65

|

-

|

|

66

|

-

- [OpenAI](https://platform.openai.com/account/api-keys)

|

|

67

|

-

```sh

|

|

68

|

-

aicommit2 config set OPENAI_KEY=<your key>

|

|

69

|

-

```

|

|

70

|

-

|

|

71

|

-

- [Anthropic Claude](https://console.anthropic.com/)

|

|

72

|

-

```sh

|

|

73

|

-

aicommit2 config set ANTHROPIC_KEY=<your key>

|

|

74

|

-

```

|

|

75

|

-

|

|

76

|

-

- [Gemini](https://aistudio.google.com/app/apikey)

|

|

77

|

-

```sh

|

|

78

|

-

aicommit2 config set GEMINI_KEY=<your key>

|

|

79

|

-

```

|

|

80

|

-

|

|

81

|

-

- [Mistral AI](https://console.mistral.ai/)

|

|

82

|

-

```sh

|

|

83

|

-

aicommit2 config set MISTRAL_KEY=<your key>

|

|

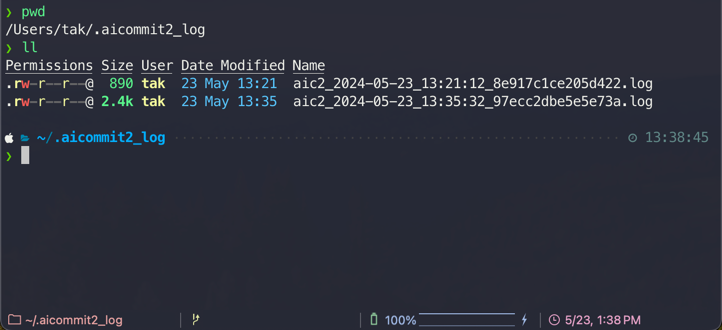

84

|

-

```

|

|

85

|

-

|

|

86

|

-

- [Codestral](https://console.mistral.ai/)

|

|

87

63

|

```sh

|

|

88

|

-

aicommit2 config set

|

|

64

|

+

aicommit2 config set OPENAI.key=<your key>

|

|

65

|

+

aicommit2 config set OLLAMA.model=<your local model>

|

|

66

|

+

# ... (similar commands for other providers)

|

|

89

67

|

```

|

|

90

68

|

|

|

91

|

-

|

|

92

|

-

```sh

|

|

93

|

-

aicommit2 config set COHERE_KEY=<your key>

|

|

94

|

-

```

|

|

95

|

-

|

|

96

|

-

- [Groq](https://console.groq.com)

|

|

97

|

-

```sh

|

|

98

|

-

aicommit2 config set GROQ_KEY=<your key>

|

|

99

|

-

```

|

|

100

|

-

|

|

101

|

-

- [Perplexity](https://docs.perplexity.ai/)

|

|

102

|

-

```sh

|

|

103

|

-

aicommit2 config set PERPLEXITY_KEY=<your key>

|

|

104

|

-

```

|

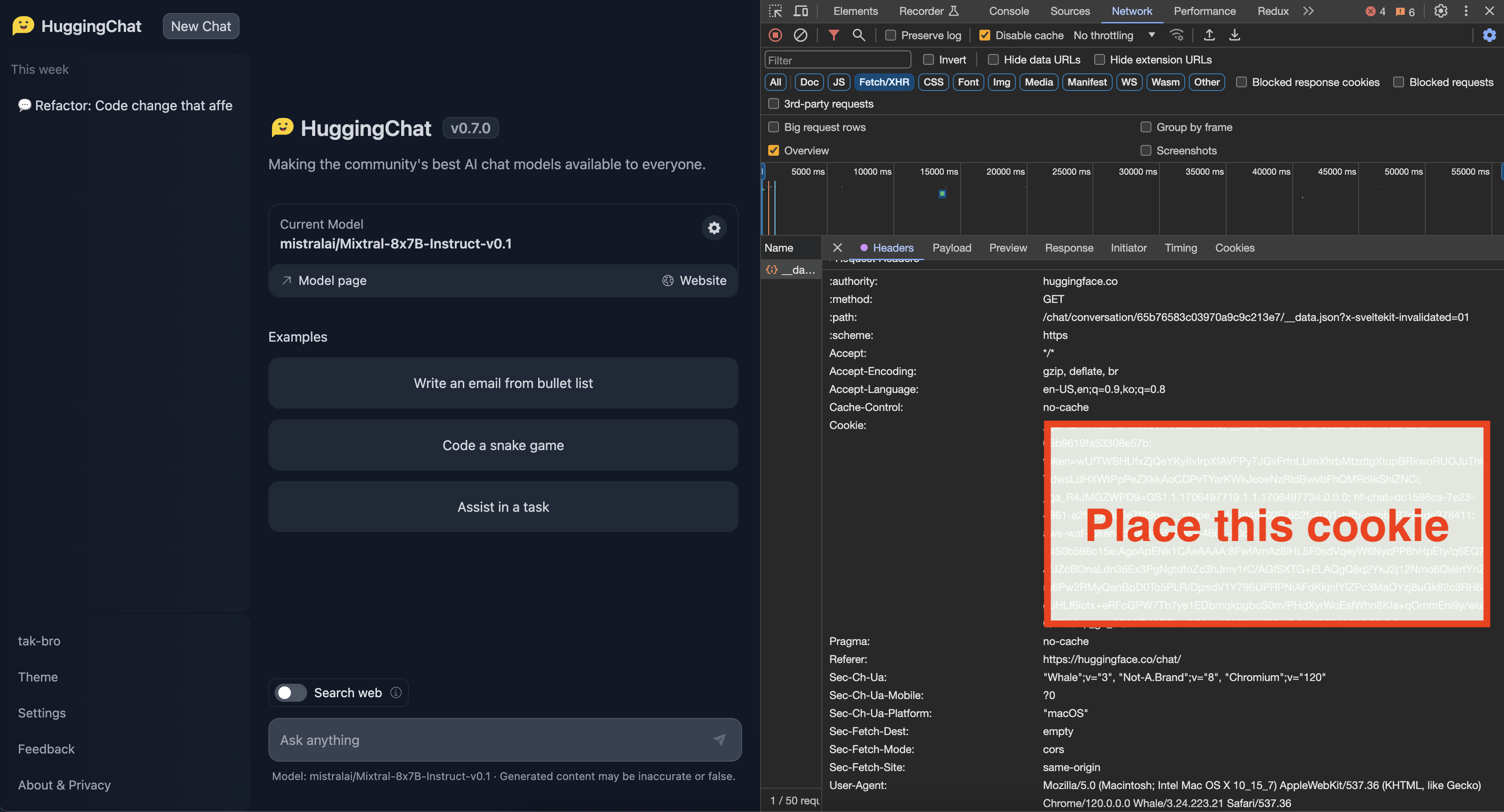

|

105

|

-

|

|

106

|

-

- [Huggingface **(Unofficial)**](https://github.com/tak-bro/aicommit2?tab=readme-ov-file#how-to-get-cookieunofficial-api)

|

|

107

|

-

```shell

|

|

108

|

-

# Please be cautious of Escape characters(\", \') in browser cookie string

|

|

109

|

-

aicommit2 config set HUGGINGFACE_COOKIE="<your browser cookie>"

|

|

110

|

-

```

|

|

111

|

-

|

|

112

|

-

This will create a `.aicommit2` file in your home directory.

|

|

113

|

-

|

|

114

|

-

> You may need to create an account and set up billing.

|

|

115

|

-

|

|

116

|

-

3. Run aicommit2 with your staged files in git repository:

|

|

69

|

+

3. Run _aicommit2_ with your staged files in git repository:

|

|

117

70

|

```shell

|

|

118

71

|

git add <files...>

|

|

119

72

|

aicommit2

|

|

120

73

|

```

|

|

121

74

|

|

|

75

|

+

> 👉 **Tip:** Use the `aic2` alias if `aicommit2` is too long for you.

|

|

76

|

+

|

|

122

77

|

## Using Locally

|

|

123

78

|

|

|

124

79

|

You can also use your model for free with [Ollama](https://ollama.com/) and it is available to use both Ollama and remote providers **simultaneously**.

|

|

@@ -126,18 +81,16 @@ You can also use your model for free with [Ollama](https://ollama.com/) and it i

|

|

|

126

81

|

1. Install Ollama from [https://ollama.com](https://ollama.com/)

|

|

127

82

|

|

|

128

83

|

2. Start it with your model

|

|

129

|

-

|

|

130

84

|

```shell

|

|

131

|

-

ollama run llama3 # model you want use. ex) codellama, deepseek-coder

|

|

85

|

+

ollama run llama3.1 # model you want use. ex) codellama, deepseek-coder

|

|

132

86

|

```

|

|

133

87

|

|

|

134

88

|

3. Set the model and host

|

|

135

|

-

|

|

136

89

|

```sh

|

|

137

|

-

aicommit2 config set

|

|

90

|

+

aicommit2 config set OLLAMA.model=<your model>

|

|

138

91

|

```

|

|

139

92

|

|

|

140

|

-

> If you want to use

|

|

93

|

+

> If you want to use Ollama, you must set **OLLAMA.model**.

|

|

141

94

|

|

|

142

95

|

4. Run _aicommit2_ with your staged files in git repository

|

|

143

96

|

```shell

|

|

@@ -172,202 +125,177 @@ For example, you can stage all changes in tracked files with as you commit:

|

|

|

172

125

|

aicommit2 --all # or -a

|

|

173

126

|

```

|

|

174

127

|

|

|

175

|

-

> 👉 **Tip:** Use the `aic2` alias if `aicommit2` is too long for you.

|

|

176

|

-

|

|

177

128

|

#### CLI Options

|

|

178

129

|

|

|

179

|

-

|

|

180

|

-

-

|

|

130

|

+

- `--locale` or `-l`: Locale to use for the generated commit messages (default: **en**)

|

|

131

|

+

- `--all` or `-a`: Automatically stage changes in tracked files for the commit (default: **false**)

|

|

132

|

+

- `--type` or `-t`: Git commit message format (default: **conventional**). It supports [`conventional`](https://conventionalcommits.org/) and [`gitmoji`](https://gitmoji.dev/)

|

|

133

|

+

- `--confirm` or `-y`: Skip confirmation when committing after message generation (default: **false**)

|

|

134

|

+

- `--clipboard` or `-c`: Copy the selected message to the clipboard (default: **false**).

|

|

135

|

+

- If you give this option, **_aicommit2_ will not commit**.

|

|

136

|

+

- `--generate` or `-g`: Number of messages to generate (default: **1**)

|

|

137

|

+

- **Warning**: This uses more tokens, meaning it costs more.

|

|

138

|

+

- `--exclude` or `-x`: Files to exclude from AI analysis

|

|

181

139

|

|

|

140

|

+

Example:

|

|

182

141

|

```sh

|

|

183

|

-

aicommit2 --locale

|

|

142

|

+

aicommit2 --locale "jp" --all --type "conventional" --generate 3 --clipboard --exclude "*.json" --exclude "*.ts"

|

|

184

143

|

```

|

|

185

144

|

|

|

186

|

-

|

|

187

|

-

- Number of messages to generate (Warning: generating multiple costs more) (default: **1**)

|

|

188

|

-

- Sometimes the recommended commit message isn't the best so you want it to generate a few to pick from. You can generate multiple commit messages at once by passing in the `--generate <i>` flag, where 'i' is the number of generated messages:

|

|

145

|

+

### Git hook

|

|

189

146

|

|

|

190

|

-

|

|

191

|

-

aicommit2 --generate <i> # or -g <i>

|

|

192

|

-

```

|

|

147

|

+

You can also integrate _aicommit2_ with Git via the [`prepare-commit-msg`](https://git-scm.com/docs/githooks#_prepare_commit_msg) hook. This lets you use Git like you normally would, and edit the commit message before committing.
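
As a rough illustration of what a `prepare-commit-msg` hook has to decide, the sketch below checks whether a commit message was already supplied. Git passes the message source as the hook's second argument (for example `message` when `-m` is used, empty for a plain `git commit`). The function name `needs_generated_message` is hypothetical, and this is not the hook that `aicommit2 hook install` writes; it only shows the general shape of such a check.

```sh
#!/bin/sh
# Git invokes the hook as: prepare-commit-msg <msg-file> [<source> [<sha>]]
# Hypothetical helper: succeed only when no message source was given,
# i.e. the user ran a plain `git commit` without -m, -F, a template, etc.
needs_generated_message() {
    source_kind=${1:-}
    [ -z "$source_kind" ]
}

# Inside a real hook this would be called with "$2".
if needs_generated_message ""; then
    echo "no message supplied: a generated message could be written to the message file"
fi
```

A hook built this way leaves commits made with `git commit -m "..."` untouched and only fills in the message file when Git would otherwise open an empty editor.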

|

|

193

148

|

|

|

194

|

-

|

|

149

|

+

#### Install

|

|

195

150

|

|

|

196

|

-

|

|

197

|

-

- Automatically stage changes in tracked files for the commit (default: **false**)

|

|

151

|

+

In the Git repository you want to install the hook in:

|

|

198

152

|

|

|

199

153

|

```sh

|

|

200

|

-

aicommit2

|

|

154

|

+

aicommit2 hook install

|

|

201

155

|

```

|

|

202

156

|

|

|

203

|

-

|

|

204

|

-

- Automatically stage changes in tracked files for the commit (default: **conventional**)

|

|

205

|

-

- it supports [`conventional`](https://conventionalcommits.org/) and [`gitmoji`](https://gitmoji.dev/)

|

|

206

|

-

|

|

207

|

-

```sh

|

|

208

|

-

aicommit2 --type conventional # or -t conventional

|

|

209

|

-

aicommit2 --type gitmoji # or -t gitmoji

|

|

210

|

-

```

|

|

157

|

+

#### Uninstall

|

|

211

158

|

|

|

212

|

-

|

|

213

|

-

- Skip confirmation when committing after message generation (default: **false**)

|

|

159

|

+

In the Git repository you want to uninstall the hook from:

|

|

214

160

|

|

|

215

161

|

```sh

|

|

216

|

-

aicommit2

|

|

162

|

+

aicommit2 hook uninstall

|

|

217

163

|

```

|

|

218

164

|

|

|

219

|

-

|

|

220

|

-

- Copy the selected message to the clipboard (default: **false**)

|

|

221

|

-

- This is a useful option when you don't want to commit through _aicommit2_.

|

|

222

|

-

- If you give this option, _aicommit2_ will not commit.

|

|

165

|

+

### Configuration

|

|

223

166

|

|

|

224

|

-

|

|

225

|

-

aicommit2 --clipboard # or -c

|

|

226

|

-

```

|

|

167

|

+

#### Reading and Setting Configuration

|

|

227

168

|

|

|

228

|

-

|

|

229

|

-

-

|

|

230

|

-

- Enable users to define and use their own prompts instead of relying solely on the default prompt

|

|

231

|

-

- Please see [Custom Prompt Template](#custom-prompt-template)

|

|

169

|

+

- READ: `aicommit2 config get <key>`

|

|

170

|

+

- SET: `aicommit2 config set <key>=<value>`

|

|

232

171

|

|

|

172

|

+

Example:

|

|

233

173

|

```sh

|

|

234

|

-

aicommit2

|

|

174

|

+

aicommit2 config get OPENAI

|

|

175

|

+

aicommit2 config get GEMINI.key

|

|

176

|

+

aicommit2 config set OPENAI.generate=3 GEMINI.temperature=0.5

|

|

235

177

|

```

|

|

236

178

|

|

|

237

|

-

|

|

238

|

-

|

|

239

|

-

You can also integrate _aicommit2_ with Git via the [`prepare-commit-msg`](https://git-scm.com/docs/githooks#_prepare_commit_msg) hook. This lets you use Git like you normally would, and edit the commit message before committing.

|

|

240

|

-

|

|

241

|

-

#### Install

|

|

242

|

-

|

|

243

|

-

In the Git repository you want to install the hook in:

|

|

179

|

+

#### How to Configure in detail

|

|

244

180

|

|

|

181

|

+

1. Command-line arguments: **use the format** `--[ModelName].[SettingKey]=value`

|

|

245

182

|

```sh

|

|

246

|

-

aicommit2

|

|

183

|

+

aicommit2 --OPENAI.locale="jp" --GEMINI.temperature="0.5"

|

|

247

184

|

```
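
To make the `--[ModelName].[SettingKey]=value` shape concrete, here is a minimal sketch that splits such an argument into its three parts using POSIX parameter expansion. The helper name `parse_override` is an assumption for illustration; it is not part of aicommit2, whose own argument parsing is not shown in this diff.

```sh
#!/bin/sh
# Hypothetical helper: split "--MODEL.key=value" into model, key and value.
parse_override() {
    arg=${1#--}         # drop the leading "--"
    model=${arg%%.*}    # text before the first "."
    rest=${arg#*.}      # text after the first "."
    key=${rest%%=*}     # text before the first "="
    value=${rest#*=}    # text after the first "="
    printf '%s %s %s\n' "$model" "$key" "$value"
}

parse_override '--OPENAI.locale=jp'   # prints: OPENAI locale jp
```

Splitting on the first `.` and the first `=` keeps values such as `0.5` intact, because the decimal point appears only after the key has already been separated.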

|

|

248

185

|

|

|

249

|

-

|

|

186

|

+

2. Configuration file: **use INI format in the `~/.aicommit2` file or use the `set` command**.

|

|

187

|

+

Example `~/.aicommit2`:

|

|

188

|

+

```ini

|

|

189

|

+

# General Settings

|

|

190

|

+

logging=true

|

|

191

|

+

generate=2

|

|

192

|

+

temperature=1.0

|

|

250

193

|

|

|

251

|

-

|

|

194

|

+

# Model-Specific Settings

|

|

195

|

+

[OPENAI]

|

|

196

|

+

key="<your-api-key>"

|

|

197

|

+

temperature=0.8

|

|

198

|

+

generate=1

|

|

199

|

+

systemPromptPath="<your-prompt-path>"

|

|

252

200

|

|

|

253

|

-

|

|

254

|

-

|

|

255

|

-

|

|

256

|

-

|

|

257

|

-

#### Usage

|

|

201

|

+

[GEMINI]

|

|

202

|

+

key="<your-api-key>"

|

|

203

|

+

generate=5

|

|

204

|

+

ignoreBody=false

|

|

258

205

|

|

|

259

|

-

|

|

260

|

-

|

|

261

|

-

|

|

262

|

-

|

|

263

|

-

git commit # Only generates a message when it's not passed in

|

|

206

|

+

[OLLAMA]

|

|

207

|

+

temperature=0.7

|

|

208

|

+

model[]=llama3.1

|

|

209

|

+

model[]=codestral

|

|

264

210

|

```

|

|

265

211

|

|

|

266

|

-

>

|

|

212

|

+

> The priority of settings is: **Command-line Arguments > Model-Specific Settings > General Settings > Default Values**.
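
The priority order can be read as "take the first value that is set". The sketch below models that rule with a hypothetical `resolve_setting` helper that receives the four candidate values in priority order; it is an illustration of the stated rule, not aicommit2's actual implementation.

```sh
#!/bin/sh
# Hypothetical helper: print the first non-empty argument.
# Argument order mirrors the documented priority:
#   1) command-line argument  2) model-specific setting
#   3) general setting        4) default value
resolve_setting() {
    for candidate in "$@"; do
        if [ -n "$candidate" ]; then
            printf '%s\n' "$candidate"
            return 0
        fi
    done
    return 1
}

# Using the example ~/.aicommit2 values for OPENAI: no CLI override,
# [OPENAI] temperature=0.8, general temperature=1.0, default 0.7.
resolve_setting "" "0.8" "1.0" "0.7"   # prints 0.8
```

Under the same rule, GEMINI in the example file has no model-specific `temperature`, so the general value `1.0` would apply unless a command-line argument overrides it.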

|

|

267

213

|

|

|

268

|

-

|

|

214

|

+

## General Settings

|

|

269

215

|

|

|

270

|

-

|

|

216

|

+

The following settings can be applied to most models, but support may vary.

|

|

217

|

+

Please check the documentation for each specific model to confirm which settings are supported.

|

|

271

218

|

|

|

272

|

-

|

|

219

|

+

| Setting | Description | Default |

|

|

220

|

+

|--------------------|---------------------------------------------------------------------|--------------|

|

|

221

|

+

| `systemPrompt` | System Prompt text | - |

|

|

222

|

+

| `systemPromptPath` | Path to system prompt file | - |

|

|

223

|

+

| `exclude` | Files to exclude from AI analysis | - |

|

|

224

|

+

| `timeout` | Request timeout (milliseconds) | 10000 |

|

|

225

|

+

| `temperature` | Model's creativity (0.0 - 2.0) | 0.7 |

|

|

226

|

+

| `maxTokens` | Maximum number of tokens to generate | 1024 |

|

|

227

|

+

| `locale` | Locale for the generated commit messages | en |

|

|

228

|

+

| `generate` | Number of commit messages to generate | 1 |

|

|

229

|

+

| `type` | Type of commit message to generate | conventional |

|

|

230

|

+

| `maxLength` | Maximum character length of the Subject of generated commit message | 50 |

|

|

231

|

+

| `logging` | Enable logging | true |

|

|

232

|

+

| `ignoreBody` | Whether the commit message includes body | true |

|

|

273

233

|

|

|

274

|

-

|

|

234

|

+

> 👉 **Tip:** To set the General Settings for each model, use the following command.

|

|

235

|

+

> ```shell

|

|

236

|

+

> aicommit2 config set OPENAI.locale="jp"

|

|

237

|

+

> aicommit2 config set CODESTRAL.type="gitmoji"

|

|

238

|

+

> aicommit2 config set GEMINI.ignoreBody=false

|

|

239

|

+

> ```

|

|

275

240

|

|

|

276

|

-

|

|

241

|

+

##### systemPrompt

|

|

242

|

+

- Allow users to specify a custom system prompt

|

|

277

243

|

|

|

278

244

|

```sh

|

|

279

|

-

aicommit2 config

|

|

245

|

+

aicommit2 config set systemPrompt="Generate git commit message."

|

|

280

246

|

```

|

|

281

247

|

|

|

282

|

-

|

|

248

|

+

> `systemPrompt` takes precedence over `systemPromptPath`; the two are never applied at the same time.
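
A minimal sketch of that precedence, assuming the prompt text and the prompt file path are already available as two strings. The function name `effective_system_prompt` is hypothetical and only illustrates the either/or rule stated here.

```sh
#!/bin/sh
# Hypothetical helper: choose the system prompt.
#   $1 = inline systemPrompt text (may be empty)
#   $2 = systemPromptPath (may be empty)
# The inline text wins; the file is read only when no inline text is set.
effective_system_prompt() {
    prompt_text=$1
    prompt_path=$2
    if [ -n "$prompt_text" ]; then
        printf '%s\n' "$prompt_text"
    elif [ -n "$prompt_path" ] && [ -r "$prompt_path" ]; then
        cat "$prompt_path"
    else
        return 1   # neither is set: the caller falls back to its default prompt
    fi
}

effective_system_prompt "Generate git commit message." "/path/to/user/prompt.txt"
```

Because the inline text is checked first, the file at the given path is never opened in this call, which matches the rule that the two settings do not combine.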

|

|

249

|

+

|

|

250

|

+

##### systemPromptPath

|

|

251

|

+

- Allow users to specify a custom file path for their own system prompt template

|

|

252

|

+

- Please see [Custom Prompt Template](#custom-prompt-template)

|

|

283

253

|

|

|

284

254

|

```sh

|

|

285

|

-

aicommit2 config

|

|

255

|

+

aicommit2 config set systemPromptPath="/path/to/user/prompt.txt"

|

|

286

256

|

```

|

|

287

257

|

|

|

288

|

-

|

|

258

|

+

##### exclude

|

|

259

|

+

|

|

260

|

+

- Files to exclude from AI analysis

|

|

261

|

+

- It is combined with the `--exclude` CLI option: files matched by either `--exclude` on the command line or the `exclude` general setting are excluded from analysis.

|

|

289

262

|

|

|

290

263

|

```sh

|

|

291

|

-

aicommit2 config

|

|

264

|

+

aicommit2 config set exclude="*.ts"

|

|

265

|

+

aicommit2 config set exclude="*.ts,*.json"

|

|

292

266

|

```

|

|

293

267

|

|

|

294

|

-

|

|

268

|

+

> NOTE: The `exclude` option cannot be set per model. It is **only** supported in General Settings.
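
To illustrate how a comma-separated `exclude` value such as `*.ts,*.json` could be applied, the sketch below splits the list and tests a file name against each pattern with shell `case` matching. The helper names are hypothetical, and shell pattern matching is an assumption here: the glob semantics aicommit2 actually uses may differ.

```sh
#!/bin/sh
# Hypothetical helper: turn "a,b,c" into one pattern per line.
split_patterns() {
    printf '%s\n' "$1" | tr ',' '\n'
}

# Hypothetical helper: succeed if FILE matches any pattern in the list.
#   $1 = file name, $2 = comma-separated pattern list
is_excluded() {
    file=$1
    set -f   # keep patterns like *.ts from expanding against the current directory
    for pattern in $(split_patterns "$2"); do
        case "$file" in
            $pattern) set +f; return 0 ;;
        esac
    done
    set +f
    return 1
}

# Patterns from the general setting and from --exclude can be joined with a comma.
config_exclude='*.ts'
cli_exclude='*.json'
if is_excluded "package.json" "$config_exclude,$cli_exclude"; then
    echo "package.json is excluded"
fi
```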

|

|

269

|

+

|

|

270

|

+

##### timeout

|

|

295

271

|

|

|

296

|

-

|

|

272

|

+

The timeout for network requests in milliseconds.

|

|

273

|

+

|

|

274

|

+

Default: `10_000` (10 seconds)

|

|

297

275

|

|

|

298

276

|

```sh

|

|

299

|

-

aicommit2 config set

|

|

277

|

+

aicommit2 config set timeout=20000 # 20s

|

|

300

278

|

```

|

|

301

279

|

|

|

302

|

-

|

|

280

|

+

##### temperature

|

|

303

281

|

|

|

304

|

-

|

|

305

|

-

aicommit2 config set OPENAI_KEY=<your-api-key>

|

|

306

|

-

```

|

|

282

|

+

The temperature (0.0-2.0) is used to control the randomness of the output

|

|

307

283

|

|

|

308

|

-

|

|

284

|

+

Default: `0.7`

|

|

309

285

|

|

|

310

286

|

```sh

|

|

311

|

-

aicommit2 config set

|

|

287

|

+

aicommit2 config set temperature=0.3

|

|

312

288

|

```

|

|

313

289

|

|

|

314

|

-

|

|

290

|

+

##### maxTokens

|

|

315

291

|

|

|

316

|

-

|

|

317

|

-

|----------------------|----------------------------------------|------------------------------------------------------------------------------------------------------------------------------------|

|

|

318

|

-

| `OPENAI_KEY` | N/A | The OpenAI API key |

|

|

319

|

-

| `OPENAI_MODEL` | `gpt-3.5-turbo` | The OpenAI Model to use |

|

|

320

|

-

| `OPENAI_URL` | `https://api.openai.com` | The OpenAI URL |

|

|

321

|

-

| `OPENAI_PATH` | `/v1/chat/completions` | The OpenAI request pathname |

|

|

322

|

-

| `ANTHROPIC_KEY` | N/A | The Anthropic API key |

|

|

323

|

-

| `ANTHROPIC_MODEL` | `claude-3-haiku-20240307` | The Anthropic Model to use |

|

|

324

|

-

| `GEMINI_KEY` | N/A | The Gemini API key |

|

|

325

|

-

| `GEMINI_MODEL` | `gemini-1.5-pro-latest` | The Gemini Model |

|

|

326

|

-

| `MISTRAL_KEY` | N/A | The Mistral API key |

|

|

327

|

-

| `MISTRAL_MODEL` | `mistral-tiny` | The Mistral Model to use |

|

|

328

|

-

| `CODESTRAL_KEY` | N/A | The Codestral API key |

|

|

329

|

-

| `CODESTRAL_MODEL` | `codestral-latest` | The Codestral Model to use |

|

|

330

|

-

| `COHERE_KEY` | N/A | The Cohere API Key |

|

|

331

|

-

| `COHERE_MODEL` | `command` | The identifier of the Cohere model |

|

|

332

|

-

| `GROQ_KEY` | N/A | The Groq API Key |

|

|

333

|

-

| `GROQ_MODEL` | `gemma-7b-it` | The Groq model name to use |

|

|

334

|

-

| `PERPLEXITY_KEY` | N/A | The Perplexity API key |

|

|

335

|

-

| `PERPLEXITY_MODEL` | `llama-3.1-sonar-small-128k-chat` | The Perplexity Model to use |

|

|

336

|

-

| `HUGGINGFACE_COOKIE` | N/A | The HuggingFace Cookie string |

|

|

337

|

-

| `HUGGINGFACE_MODEL` | `mistralai/Mixtral-8x7B-Instruct-v0.1` | The HuggingFace Model to use |

|

|

338

|

-

| `OLLAMA_MODEL` | N/A | The Ollama Model. It should be downloaded your local |

|

|

339

|

-

| `OLLAMA_HOST` | `http://localhost:11434` | The Ollama Host |

|

|

340

|

-

| `OLLAMA_TIMEOUT` | `100_000` ms | Request timeout for the Ollama |

|

|

341

|

-

| `locale` | `en` | Locale for the generated commit messages |

|

|

342

|

-

| `generate` | `1` | Number of commit messages to generate |

|

|

343

|

-

| `type` | `conventional` | Type of commit message to generate |

|

|

344

|

-

| `proxy` | N/A | Set a HTTP/HTTPS proxy to use for requests(only **OpenAI**) |

|

|

345

|

-

| `timeout` | `10_000` ms | Network request timeout |

|

|

346

|

-

| `max-length` | `50` | Maximum character length of the generated commit message(Subject) |

|

|

347

|

-

| `max-tokens` | `1024` | The maximum number of tokens that the AI models can generate (for **Open AI, Anthropic, Gemini, Mistral, Codestral**) |

|

|

348

|

-

| `temperature` | `0.7` | The temperature (0.0-2.0) is used to control the randomness of the output (for **Open AI, Anthropic, Gemini, Mistral, Codestral**) |

|

|

349

|

-

| `promptPath` | N/A | Allow users to specify a custom file path for their own prompt template |

|

|

350

|

-

| `logging` | `false` | Whether to log AI responses for debugging (true or false) |

|

|

351

|

-

| `ignoreBody` | `true` | Whether the commit message includes body (true or false) |

|

|

352

|

-

|

|

353

|

-

> **Currently, options are set universally. However, there are plans to develop the ability to set individual options in the future.**

|

|

354

|

-

|

|

355

|

-

### Available Options by Model

|

|

356

|

-

| | locale | generate | type | proxy | timeout | max-length | max-tokens | temperature | prompt |

|

|

357

|

-

|:--------------------:|:------:|:--------:|:-----:|:-----:|:----------------------:|:-----------:|:----------:|:-----------:|:------:|

|

|

358

|

-

| **OpenAI** | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ |

|

|

359

|

-

| **Anthropic Claude** | ✓ | ✓ | ✓ | | | ✓ | ✓ | ✓ | ✓ |

|

|

360

|

-

| **Gemini** | ✓ | ✓ | ✓ | | | ✓ | ✓ | ✓ | ✓ |

|

|

361

|

-

| **Mistral AI** | ✓ | ✓ | ✓ | | ✓ | ✓ | ✓ | ✓ | ✓ |

|

|

362

|

-

| **Codestral** | ✓ | ✓ | ✓ | | ✓ | ✓ | ✓ | ✓ | ✓ |

|

|

363

|

-

| **Cohere** | ✓ | ✓ | ✓ | | | ✓ | ✓ | ✓ | ✓ |

|

|

364

|

-

| **Groq** | ✓ | ✓ | ✓ | | ✓ | ✓ | | | ✓ |

|

|

365

|

-

| **Huggingface** | ✓ | ✓ | ✓ | | | ✓ | | | ✓ |

|

|

366

|

-

| **Perplexity** | ✓ | ✓ | ✓ | | ✓ | ✓ | ✓ | ✓ | ✓ |

|

|

367

|

-

| **Ollama** | ✓ | ✓ | ✓ | | ⚠<br/>(OLLAMA_TIMEOUT) | ✓ | | ✓ | ✓ |

|

|

292

|

+

The maximum number of tokens that the AI models can generate.

|

|

368

293

|

|

|

294

|

+

Default: `1024`

|

|

369

295

|

|

|

370

|

-

|

|

296

|

+

```sh

|

|

297

|

+

aicommit2 config set maxTokens=3000

|

|

298

|

+

```

|

|

371

299

|

|

|

372

300

|

##### locale

|

|

373

301

|

|

|

@@ -375,6 +303,10 @@ Default: `en`

|

|

|

375

303

|

|

|

376

304

|

The locale to use for the generated commit messages. Consult the list of codes in: https://wikipedia.org/wiki/List_of_ISO_639_language_codes.

|

|

377

305

|

|

|

306

|

+

```sh

|

|

307

|

+

aicommit2 config set locale="jp"

|

|

308

|

+

```

|

|

309

|

+

|

|

378

310

|

##### generate

|

|

379

311

|

|

|

380

312

|

Default: `1`

|

|

@@ -383,189 +315,251 @@ The number of commit messages to generate to pick from.

|

|

|

383

315

|

|

|

384

316

|

Note, this will use more tokens as it generates more results.

|

|

385

317

|

|

|

386

|

-

##### proxy

|

|

387

|

-

|

|

388

|

-

Set a HTTP/HTTPS proxy to use for requests.

|

|

389

|

-

|

|

390

|

-

To clear the proxy option, you can use the command (note the empty value after the equals sign):

|

|

391

|

-

|

|

392

|

-

> **Only supported within the OpenAI**

|

|

393

|

-

|

|

394

318

|

```sh

|

|

395

|

-

aicommit2 config set

|

|

319

|

+

aicommit2 config set generate=2

|

|

396

320

|

```

|

|

397

321

|

|

|

398

|

-

#####

|

|

322

|

+

##### type

|

|

399

323

|

|

|

400

|

-

|

|

324

|

+

Default: `conventional`

|

|

401

325

|

|

|

402

|

-

|

|

326

|

+

Supported: `conventional`, `gitmoji`

|

|

327

|

+

|

|

328

|

+

The type of commit message to generate. Set this to "conventional" to generate commit messages that follow the Conventional Commits specification:

|

|

403

329

|

|

|

404

330

|

```sh

|

|

405

|

-

aicommit2 config set

|

|

331

|

+

aicommit2 config set type="conventional"

|

|

406

332

|

```

|

|

407

333

|

|

|

408

|

-

#####

|

|

334

|

+

##### maxLength

|

|

409

335

|

|

|

410

|

-

The maximum character length of the generated commit message

|

|

336

|

+

The maximum character length of the Subject of generated commit message

|

|

411

337

|

|

|

412

338

|

Default: `50`

|

|

413

339

|

|

|

414

340

|

```sh

|

|

415

|

-

aicommit2 config set

|

|

341

|

+

aicommit2 config set maxLength=100

|

|

416

342

|

```

|

|

417

343

|

|

|

418

|

-

#####

|

|

419

|

-

|

|

420

|

-

Default: `conventional`

|

|

344

|

+

##### logging

|

|

421

345

|

|

|

422

|

-

|

|

346

|

+

Default: `true`

|

|

423

347

|

|

|

424

|

-

|

|

348

|

+

Option that allows users to decide whether to generate a log file capturing the responses.

|

|

349

|

+

The log files will be stored in the `~/.aicommit2_log` directory (in the user's home directory).

|

|

425

350

|

|

|

426

|

-

|

|

427

|

-

aicommit2 config set type=conventional

|

|

428

|

-

```

|

|

351

|

+

|

|

429

352

|

|

|

430

|

-

You can

|

|

353

|

+

- You can remove all logs with the command below.

|

|

431

354

|

|

|

432

355

|

```sh

|

|

433

|

-

aicommit2

|

|

356

|

+

aicommit2 log removeAll

|

|

434

357

|

```

|

|

435

358

|

|

|

436

|

-

#####

|

|

437

|

-

The maximum number of tokens that the AI models can generate.

|

|

359

|

+

##### ignoreBody

|

|

438

360

|

|

|

439

|

-

Default: `

|

|

361

|

+

Default: `true`

|

|

362

|

+

|

|

363

|

+

This option determines whether the commit message includes body. If you want to include body in message, you can set it to `false`.

|

|

440

364

|

|

|

441

365

|

```sh

|

|

442

|

-

aicommit2 config set

|

|

366

|

+

aicommit2 config set ignoreBody="false"

|

|

443

367

|

```

|

|

444

368

|

|

|

445

|

-

|

|

446

|

-

The temperature (0.0-2.0) is used to control the randomness of the output

|

|

369

|

+

|

|

447

370

|

|

|

448

|

-

Default: `0.7`

|

|

449

371

|

|

|

450

372

|

```sh

|

|

451

|

-

aicommit2 config set

|

|

373

|

+

aicommit2 config set ignoreBody="true"

|

|

452

374

|

```

|

|

453

375

|

|

|

454

|

-

|

|

455

|

-

- Allow users to specify a custom file path for their own prompt template

|

|

456

|

-

- Enable users to define and use their own prompts instead of relying solely on the default prompt

|

|

457

|

-

- Please see [Custom Prompt Template](#custom-prompt-template)

|

|

376

|

+

|

|

458

377

|

|

|

459

|

-

|

|

460

|

-

aicommit2 config set promptPath="/path/to/user/prompt.txt"

|

|

461

|

-

```

|

|

378

|

+

## Model-Specific Settings

|

|

462

379

|

|

|

463

|

-

|

|

380

|

+

> Some models mentioned below are subject to change.

|

|

464

381

|

|

|

465

|

-

|

|

382

|

+

### OpenAI

|

|

466

383

|

|

|

467

|

-

|

|

468

|

-

|

|

384

|

+

| Setting | Description | Default |

|

|

385

|

+

|--------------------|---------------------------------------------------------------------|------------------------|

|

|

386

|

+

| `key` | API key | - |

|

|

387

|

+

| `model` | Model to use | `gpt-3.5-turbo` |

|

|

388

|

+

| `url` | API endpoint URL | https://api.openai.com |

|

|

389

|

+

| `path` | API path | /v1/chat/completions |

|

|

390

|

+

| `proxy` | Proxy settings | - |

|

|

469

391

|

|

|

470

|

-

|

|

392

|

+

##### OPENAI.key

|

|

471

393

|

|

|

472

|

-

|

|

473

|

-

aicommit2 config set logging="true"

|

|

474

|

-

```

|

|

394

|

+

The OpenAI API key. You can retrieve it from [OpenAI API Keys page](https://platform.openai.com/account/api-keys).

|

|

475

395

|

|

|

476

|

-

- You can remove all logs with the command below.

|

|

477

|

-

|

|

478

396

|

```sh

|

|

479

|

-

aicommit2

|

|

397

|

+

aicommit2 config set OPENAI.key="your api key"

|

|

480

398

|

```

|

|

481

399

|

|

|

482

|

-

#####

|

|

400

|

+

##### OPENAI.model

|

|

483

401

|

|

|

484

|

-

Default: `

|

|

402

|

+

Default: `gpt-3.5-turbo`

|

|

485

403

|

|

|

486

|

-

|

|

404

|

+

The Chat Completions (`/v1/chat/completions`) model to use. Consult the list of models available in the [OpenAI Documentation](https://platform.openai.com/docs/models/model-endpoint-compatibility).

|

|

405

|

+

|

|

406

|

+

> Tip: If you have access, try upgrading to [`gpt-4`](https://platform.openai.com/docs/models/gpt-4) for next-level code analysis. It can handle double the input size, but comes at a higher cost. Check out OpenAI's website to learn more.

|

|

487

407

|

|

|

488

408

|

```sh

|

|

489

|

-

aicommit2 config set

|

|

409

|

+

aicommit2 config set OPENAI.model=gpt-4

|

|

490

410

|

```

|

|

491

411

|

|

|

492

|

-

|

|

412

|

+

##### OPENAI.url

|

|

493

413

|

|

|

414

|

+

Default: `https://api.openai.com`

|

|

415

|

+

|

|

416

|

+

The OpenAI URL. Both https and http protocols are supported, which allows you to run a local OpenAI-compatible server.

|

|

494

417

|

|

|

495

418

|

```sh

|

|

496

|

-

aicommit2 config set

|

|

419

|

+

aicommit2 config set OPENAI.url="<your-host>"

|

|

497

420

|

```

|

|

498

421

|

|

|

499

|

-

|

|

422

|

+

##### OPENAI.path

|

|

423

|

+

|

|

424

|

+

Default: `/v1/chat/completions`

|

|

425

|

+

|

|

426

|

+

The OpenAI Path.

|

|

500

427

|

|

|

501

428

|

### Ollama

|

|

502

429

|

|

|

503

|

-

|

|

430

|

+

| Setting | Description | Default |

|

|

431

|

+

|--------------------|------------------------------------------------------------------------------------------------------------------|------------------------|

|

|

432

|

+

| `model` | Model(s) to use (comma-separated list) | - |

|

|

433

|

+

| `host` | Ollama host URL | http://localhost:11434 |

|

|

434

|

+

| `timeout` | Request timeout (milliseconds) | 100_000 (100sec) |

|

|

435

|

+

|

|

436

|

+

##### OLLAMA.model

|

|

504

437

|

|

|

505

438

|

The Ollama Model. Please see [a list of models available](https://ollama.com/library)

|

|

506

439

|

|

|

507

440

|

```sh

|

|

508

|

-

aicommit2 config set

|

|

509

|

-

aicommit2 config set

|

|

441

|

+

aicommit2 config set OLLAMA.model="llama3.1"

|

|

442

|

+

aicommit2 config set OLLAMA.model="llama3,codellama" # for multiple models

|

|

443

|

+

|

|

444

|

+

aicommit2 config add OLLAMA.model="gemma2" # Only Ollama.model can be added.

|

|

510

445

|

```

|

|

511

446

|

|

|

512

|

-

|

|

447

|

+

> `OLLAMA.model` is a **string array**, so multiple Ollama models can be configured. Please see [this section](#loading-multiple-ollama-models).
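
The example `~/.aicommit2` file lists Ollama models as repeated `model[]=` entries, while the `set` command takes a comma-separated list. Assuming the two forms correspond one-to-one (this diff does not confirm how the tool writes the file), the relationship can be sketched with a hypothetical `to_ini_array` helper:

```sh
#!/bin/sh
# Hypothetical helper: print one "key[]=item" line per comma-separated item.
#   $1 = key name, $2 = comma-separated list
to_ini_array() {
    key=$1
    printf '%s\n' "$2" | tr ',' '\n' | while IFS= read -r item; do
        if [ -n "$item" ]; then
            printf '%s[]=%s\n' "$key" "$item"
        fi
    done
}

to_ini_array model "llama3,codellama"
# prints:
# model[]=llama3
# model[]=codellama
```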

|

|

448

|

+

|

|

449

|

+

##### OLLAMA.host

|

|

513

450

|

|

|

514

451

|

Default: `http://localhost:11434`

|

|

515

452

|

|

|

516

453

|

The Ollama host

|

|

517

454

|

|

|

518

455

|

```sh

|

|

519

|

-

aicommit2 config set

|

|

456

|

+

aicommit2 config set OLLAMA.host=<host>

|

|

520

457

|

```

|

|

521

458

|

|

|

522

|

-

#####

|

|

459

|

+

##### OLLAMA.timeout

|

|

523

460

|

|

|

524

461

|

Default: `100_000` (100 seconds)

|

|

525

462

|

|

|

526

|

-

Request timeout for the Ollama.

|

|

463

|

+

Request timeout for Ollama, in milliseconds.

|

|

527

464

|

|

|

528

465

|

```sh

|

|

529

|

-

aicommit2 config set

|

|

466

|

+

aicommit2 config set OLLAMA.timeout=<timeout>

|

|

530

467

|

```
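Because the timeout is given in milliseconds, it can help to start from seconds and convert. The sketch below assumes a 30-second limit, which is an arbitrary example value rather than a recommendation.

```sh
# Hypothetical choice: wait up to 30 seconds for Ollama to respond.
timeout_seconds=30

# OLLAMA.timeout is expressed in milliseconds, so convert before setting it.
timeout_ms=$((timeout_seconds * 1000))

# Apply the setting only when the aicommit2 CLI is available.
if command -v aicommit2 >/dev/null 2>&1; then
  aicommit2 config set OLLAMA.timeout="$timeout_ms"
fi

echo "$timeout_ms"
```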

|

|

531

468

|

|

|

532

|

-

|

|

469

|

+

##### Unsupported Options

|

|

533

470

|

|

|

534

|

-

|

|

471

|

+

Ollama does not support the following options in General Settings.

|

|

472

|

+

|

|

473

|

+

- maxTokens

|

|

535

474

|

|

|

536

|

-

|

|

475

|

+

### HuggingFace

|

|

537

476

|

|

|

538

|

-

|

|

477

|

+

| Setting | Description | Default |

|

|

478

|

+

|--------------------|------------------------------------------------------------------------------------------------------------------|----------------------------------------|

|

|

479

|

+

| `cookie` | Authentication cookie | - |

|

|

480

|

+

| `model` | Model to use | `CohereForAI/c4ai-command-r-plus` |

|

|

539

481

|

|

|

540

|

-

|

|

482

|

+

##### HUGGINGFACE.cookie

|

|

541

483

|

|

|

542

|

-

The Chat

|

|

484

|

+

The [Huggingface Chat](https://huggingface.co/chat/) cookie. Please see [how to get the cookie](https://github.com/tak-bro/aicommit2?tab=readme-ov-file#how-to-get-cookieunofficial-api).

|

|

543

485

|

|

|

544

|

-

|

|

486

|

+

```sh

|

|

487

|

+

# Be careful with escape characters (\", \') in the browser cookie string

|

|

488

|

+

aicommit2 config set HUGGINGFACE.cookie="your-cookie"

|

|

489

|

+

```
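One way to sidestep the escaping problem at the shell level is to hold the cookie in a single-quoted variable, since a POSIX shell treats everything inside single quotes literally. The cookie value below is a made-up placeholder, and this sketch only covers shell quoting; how _aicommit2_ itself parses the value is not addressed here.

```sh
# Hypothetical cookie value containing double quotes and a semicolon.
# Single quotes keep every character literal, so no backslash escaping is needed.
hf_cookie='token="abc123"; hf-chat=example'

# Apply the setting only when the aicommit2 CLI is available.
if command -v aicommit2 >/dev/null 2>&1; then
  aicommit2 config set HUGGINGFACE.cookie="$hf_cookie"
fi
```

A cookie that itself contains a single quote cannot be written this way and would need a different quoting approach.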

|

|

490

|

+

|

|

491

|

+

##### HUGGINGFACE.model

|

|

492

|

+

|

|

493

|

+

Default: `CohereForAI/c4ai-command-r-plus`

|

|

494

|

+

|

|

495

|

+

Supported:

|

|

496

|

+

- `CohereForAI/c4ai-command-r-plus`

|

|

497

|

+

- `meta-llama/Meta-Llama-3-70B-Instruct`

|

|

498

|

+

- `HuggingFaceH4/zephyr-orpo-141b-A35b-v0.1`

|

|

499

|

+

- `mistralai/Mixtral-8x7B-Instruct-v0.1`

|

|

500

|

+

- `NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO`

|

|

501

|

+

- `01-ai/Yi-1.5-34B-Chat`

|

|

502

|

+

- `mistralai/Mistral-7B-Instruct-v0.2`

|

|

503

|

+

- `microsoft/Phi-3-mini-4k-instruct`

|

|

545

504

|

|

|

546

505

|

```sh

|

|

547

|

-

aicommit2 config set

|

|

506

|

+

aicommit2 config set HUGGINGFACE.model="mistralai/Mistral-7B-Instruct-v0.2"

|

|

548

507

|

```

|

|

549

508

|

|

|

550

|

-

#####

|

|

509

|

+

##### Unsupported Options

|

|

551

510

|

|

|

552

|

-

|

|

511

|

+

HuggingFace does not support the following options in General Settings.

|

|

553

512

|

|

|

554

|

-

|

|

513

|

+

- maxTokens

|

|

514

|

+

- timeout

|

|

515

|

+

- temperature

|

|

555

516

|

|

|

556

|

-

|

|

517

|

+

### Gemini

|

|

557

518

|

|

|

558

|

-

Default

|

|

519

|

+

| Setting | Description | Default |

|

|

520

|

+

|--------------------|------------------------------------------------------------------------------------------------------------------|-------------------|

|

|

521

|

+

| `key` | API key | - |

|

|

522

|

+

| `model` | Model to use | `gemini-1.5-pro` |

|

|

559

523

|

|

|

560

|

-

|

|

524

|

+

##### GEMINI.key

|

|

525

|

+

|

|

526

|

+

The Gemini API key. If you don't have one, create a key in [Google AI Studio](https://aistudio.google.com/app/apikey).

|

|

527

|

+

|

|

528

|

+

```sh

|

|

529

|

+

aicommit2 config set GEMINI.key="your api key"

|

|

530

|

+

```
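To avoid typing the key directly on the command line, it can be read from an environment variable instead. The sketch below assumes the key was exported beforehand as `GEMINI_API_KEY`, a hypothetical variable name chosen for this example; _aicommit2_ is not documented here as reading it on its own.

```sh
# GEMINI_API_KEY is a hypothetical environment variable; export it beforehand.
# The ":-" fallback yields an empty string when the variable is unset.
gemini_key="${GEMINI_API_KEY:-}"

# Apply the setting only when a key is present and the CLI is available.
if [ -n "$gemini_key" ] && command -v aicommit2 >/dev/null 2>&1; then
  aicommit2 config set GEMINI.key="$gemini_key"
else
  echo "GEMINI_API_KEY is unset or aicommit2 is not installed; nothing changed." >&2
fi
```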

|

|

531

|

+

|

|

532

|

+

##### GEMINI.model

|

|

533

|

+

|

|

534

|

+

Default: `gemini-1.5-pro`

|

|

535

|

+

|

|

536

|

+

Supported:

|

|

537

|

+

- `gemini-1.5-pro`

|

|

538

|

+

- `gemini-1.5-flash`

|

|

539

|

+

- `gemini-1.5-pro-exp-0801`

|

|

540

|

+

|

|

541

|

+

```sh

|

|

542

|

+

aicommit2 config set GEMINI.model="gemini-1.5-pro-exp-0801"

|

|

543

|

+

```

|

|

544

|

+

|

|

545

|

+

##### Unsupported Options

|

|

546

|

+

|

|

547

|

+

Gemini does not support the following options in General Settings.

|

|

548

|

+

|

|

549

|

+

- timeout

|

|

561

550

|

|

|

562

|

-

### Anthropic

|

|

551

|

+

### Anthropic

|

|

563

552

|

|

|

564

|

-

|

|

553

|

+

| Setting | Description | Default |

|

|

554

|

+

|-------------|----------------|---------------------------|

|

|

555

|

+

| `key` | API key | - |

|

|

556

|

+

| `model` | Model to use | `claude-3-haiku-20240307` |

|

|

557

|

+

|

|

558

|

+

##### ANTHROPIC.key

|

|

565

559

|

|

|

566

560

|

The Anthropic API key. To get started with Anthropic Claude, request access to their API at [anthropic.com/earlyaccess](https://www.anthropic.com/earlyaccess).

|

|

567

561

|

|

|

568

|

-

#####

|

|

562

|

+

##### ANTHROPIC.model

|

|

569

563

|

|

|

570

564

|

Default: `claude-3-haiku-20240307`

|

|

571

565

|

|

|

@@ -573,37 +567,30 @@ Supported:

|

|

|

573

567

|

- `claude-3-haiku-20240307`

|

|

574

568

|

- `claude-3-sonnet-20240229`

|

|

575

569

|

- `claude-3-opus-20240229`

|

|

576

|

-

- `claude-

|

|

577

|

-

- `claude-2.0`

|

|

578

|

-

- `claude-instant-1.2`

|

|

570

|

+

- `claude-3-5-sonnet-20240620`

|

|

579

571

|

|

|

580

572

|

```sh

|

|

581

|

-

aicommit2 config set

|

|

573

|

+

aicommit2 config set ANTHROPIC.model="claude-3-5-sonnet-20240620"

|

|

582

574

|

```

|

|

583

575

|

|

|

584

|

-

|

|

576

|

+

##### Unsupported Options

|

|

585

577

|

|

|

586

|

-

|

|

578

|

+

Anthropic does not support the following options in General Settings.

|

|

587

579

|

|

|

588

|

-

|

|

580

|

+

- timeout

|

|

589

581

|

|

|

590

|

-

|

|

582

|

+

### Mistral

|

|

591

583

|

|

|

592

|

-

Default

|

|

584

|

+

| Setting | Description | Default |

|

|

585

|

+

|--------------------|------------------------------------------------------------------------------------------------------------------|----------------|

|

|

586

|

+

| `key` | API key | - |

|

|

587

|

+

| `model` | Model to use | `mistral-tiny` |

|

|

593

588

|

|

|

594

|

-

|

|

595

|

-

- `gemini-1.5-pro-latest`

|

|

596

|

-

- `gemini-1.5-flash-latest`

|

|

597

|

-

|

|

598

|

-

> The models mentioned above are subject to change.

|

|

599

|

-

|

|

600

|

-

### MISTRAL

|

|

601

|

-

|

|

602

|

-

##### MISTRAL_KEY

|

|

589

|

+

##### MISTRAL.key

|

|

603

590

|

|

|

604

591

|

The Mistral API key. If you don't have one, please sign up and subscribe in [Mistral Console](https://console.mistral.ai/).

|

|

605

592

|

|

|

606

|

-

#####

|

|

593

|

+

##### MISTRAL.model

|

|

607

594

|

|

|

608

595

|

Default: `mistral-tiny`

|

|

609

596

|

|

|

@@ -623,15 +610,18 @@ Supported:

|

|

|

623

610

|

- `mistral-large-2402`

|

|

624

611

|

- `mistral-embed`

|

|

625

612

|

|

|

626

|

-

|

|

613

|

+

### Codestral

|

|

627

614

|

|

|

628

|

-

|

|

615

|

+

| Setting | Description | Default |

|

|

616

|

+

|--------------------|------------------------------------------------------------------------------------------------------------------|--------------------|

|

|

617

|

+

| `key` | API key | - |

|

|

618

|

+

| `model` | Model to use | `codestral-latest` |

|

|

629

619

|

|

|

630

|

-

#####

|

|

620

|

+

##### CODESTRAL.key

|

|

631

621

|

|

|

632

622

|

The Codestral API key. If you don't have one, please sign up and subscribe in [Mistral Console](https://console.mistral.ai/codestral).

|

|

633

623

|

|

|

634

|

-

#####

|

|

624

|

+

##### CODESTRAL.model

|

|

635

625

|

|

|

636

626

|

Default: `codestral-latest`

|

|

637

627

|

|

|

@@ -639,51 +629,82 @@ Supported:

|

|

|

639

629

|

- `codestral-latest`

|

|

640

630

|

- `codestral-2405`

|

|

641

631

|

|

|

642

|

-

|

|

632

|

+

```sh

|

|

633

|

+

aicommit2 config set CODESTRAL.model="codestral-2405"

|

|

634

|

+

```

|

|

643

635

|

|

|

644

|

-

|

|

636

|

+

### Cohere

|

|

645

637

|

|

|

646

|

-

|

|

638

|

+

| Setting | Description | Default |

|

|

639

|

+

|--------------------|------------------------------------------------------------------------------------------------------------------|-------------|

|

|

640

|

+

| `key` | API key | - |

|

|

641

|

+

| `model` | Model to use | `command` |

|

|

642

|

+

|

|

643

|

+

##### COHERE.key

|

|

647

644

|

|

|

648

645

|

The Cohere API key. If you don't have one, please sign up and get the API key in [Cohere Dashboard](https://dashboard.cohere.com/).

|

|

649

646

|

|

|

650

|

-

#####

|

|

647

|

+

##### COHERE.model

|

|

651

648

|

|

|

652

649

|

Default: `command`

|

|

653

650

|

|

|

654

|

-

Supported:

|

|

651

|

+

Supported models:

|

|

655

652

|

- `command`

|

|

656

653

|

- `command-nightly`

|

|

657

654

|

- `command-light`

|

|

658

655

|

- `command-light-nightly`

|

|

659

656

|

|

|

660

|

-

|

|

657

|

+

```sh

|

|

658

|

+

aicommit2 config set COHERE.model="command-nightly"

|

|

659

|

+

```

|

|

660

|

+

|

|

661

|

+

##### Unsupported Options

|

|

662

|

+

|

|

663

|

+

Cohere does not support the following options in General Settings.

|

|

664

|

+

|

|

665

|

+

- timeout

|

|

661

666

|

|

|

662

667

|

### Groq

|

|

663

668

|

|

|

664

|

-

|

|

669

|

+

| Setting | Description | Default |

|

|

670

|

+

|--------------------|------------------------|----------------|

|

|

671

|

+

| `key` | API key | - |

|

|

672

|

+

| `model` | Model to use | `gemma2-9b-it` |

|

|

673

|

+

|

|

674

|

+

##### GROQ.key

|

|

665

675

|

|

|

666

676

|

The Groq API key. If you don't have one, please sign up and get the API key in [Groq Console](https://console.groq.com).

|

|

667

677

|

|

|

668

|

-

#####

|

|

678

|

+

##### GROQ.model

|

|

669

679

|

|

|

670

|

-

Default: `

|

|

680

|

+

Default: `gemma2-9b-it`

|

|

671

681

|

|

|

672

682

|

Supported:

|

|

673

|

-

- `

|

|

674

|

-

- `llama3-70b-8192`

|

|

675

|

-

- `mixtral-8x7b-32768`

|

|

683

|

+

- `gemma2-9b-it`

|

|

676

684

|

- `gemma-7b-it`

|

|

685

|

+

- `llama-3.1-70b-versatile`

|

|

686

|

+

- `llama-3.1-8b-instant`

|

|

687

|

+

- `llama3-70b-8192`

|

|

688

|

+

- `llama3-8b-8192`

|

|

689

|

+

- `llama3-groq-70b-8192-tool-use-preview`

|

|

690

|

+

- `llama3-groq-8b-8192-tool-use-preview`

|

|

677

691

|

|

|

678

|

-

|

|

692

|

+

```sh

|

|

693

|

+

aicommit2 config set GROQ.model="llama3-8b-8192"

|

|

694

|

+

```

|

|

679

695

|

|

|

680

696

|

### Perplexity

|

|

681

697

|

|

|

682

|

-

|

|

698

|

+

| Setting | Description | Default |

|

|

699

|

+

|--------------------|------------------|-----------------------------------|

|

|

700

|

+

| `key` | API key | - |

|

|

701

|

+

| `model` | Model to use | `llama-3.1-sonar-small-128k-chat` |

|

|

702

|

+

|

|

703

|

+

##### PERPLEXITY.key

|

|

683

704

|

|

|

684

|

-

The Perplexity API key. If you don't have one, please sign up and

|

|

705

|

+

The Perplexity API key. If you don't have one, please sign up and get the API key in [Perplexity](https://docs.perplexity.ai/).

|

|

685

706

|

|

|

686

|

-

#####

|

|

707

|

+

##### PERPLEXITY.model

|

|

687

708

|

|

|

688

709

|

Default: `llama-3.1-sonar-small-128k-chat`

|

|

689

710

|

|

|

@@ -699,27 +720,24 @@ Supported:

|

|

|

699

720

|

|

|

700

721

|

> The models mentioned above are subject to change.

|

|

701

722

|

|

|

702

|

-

|

|

723

|

+

```sh

|

|

724

|

+

aicommit2 config set PERPLEXITY.model="llama-3.1-70b"

|

|

725

|

+

```

|

|

703

726

|

|

|

704

|

-

|

|

727

|

+

#### Usage

|

|

705

728

|

|

|

706

|

-

|

|

729

|

+

1. Stage your files and commit:

|

|

707

730

|

|

|

708

|

-

|

|

731

|

+

```sh

|

|

732

|

+

git add <files...>

|

|

733

|

+

git commit # Only generates a message when it's not passed in

|

|

734

|

+

```

|

|

709

735

|

|

|

710

|

-

|

|

736

|

+

> If you ever want to write your own message instead of generating one, you can simply pass one in: `git commit -m "My message"`

|

|

711

737

|

|

|

712

|

-

|

|

713

|

-

- `CohereForAI/c4ai-command-r-plus`

|

|

714

|

-

- `meta-llama/Meta-Llama-3-70B-Instruct`

|

|

715

|

-

- `HuggingFaceH4/zephyr-orpo-141b-A35b-v0.1`

|

|

716

|

-

- `mistralai/Mixtral-8x7B-Instruct-v0.1`

|

|

717

|

-

- `NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO`

|

|

718

|

-

- `01-ai/Yi-1.5-34B-Chat`

|

|

719

|

-

- `mistralai/Mistral-7B-Instruct-v0.2`

|

|

720

|

-

- `microsoft/Phi-3-mini-4k-instruct`

|

|

738

|

+

2. _aicommit2_ generates the commit message and passes it back to Git, which opens it in the [configured editor](https://docs.github.com/en/get-started/getting-started-with-git/associating-text-editors-with-git) so you can review and edit it.

|

|

721

739

|

|

|

722

|

-

|

|

740

|

+

3. Save and close the editor to commit!

|

|

723

741

|

|

|

724

742

|

## Upgrading

|

|

725

743

|

|

|

@@ -737,23 +755,29 @@ npm update -g aicommit2

|

|

|

737

755

|

|

|

738

756

|

## Custom Prompt Template

|

|

739

757

|

|

|

740

|

-

_aicommit2_ supports custom prompt templates through the `

|

|

758

|

+

_aicommit2_ supports custom prompt templates through the `systemPromptPath` option. This feature allows you to define your own prompt structure, giving you more control over the commit message generation process.

|

|

741

759

|

|

|

742

|

-

### Using the

|

|

760

|

+

### Using the systemPromptPath Option

|

|

743

761

|

To use a custom prompt template, specify the path to your template file when running the tool:

|

|

762

|

+

|

|

744

763

|

```

|

|

745

|

-

aicommit2 config set

|

|

764

|

+

aicommit2 config set systemPromptPath="/path/to/user/prompt.txt"

|

|

765

|

+

aicommit2 config set OPENAI.systemPromptPath="/path/to/another-prompt.txt"

|

|

746

766

|

```

|

|

747

767

|

|

|

768

|

+

With the commands above, OpenAI uses the prompt in `another-prompt.txt`, and all other models use `prompt.txt`.
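A minimal end-to-end sketch of this setup is shown below: it creates a template file and registers it as the shared prompt. The directory name `.aicommit2-prompts` and the template text are hypothetical placeholders, not values required by _aicommit2_.

```sh
# Hypothetical location for the shared prompt template.
prompt_dir="${HOME:-/tmp}/.aicommit2-prompts"
prompt_file="$prompt_dir/prompt.txt"
mkdir -p "$prompt_dir"

# Placeholder template text; write whatever instructions suit your project.
cat > "$prompt_file" <<'EOF'
Write a concise commit message that summarizes the staged changes.
EOF

# Register the template only when the aicommit2 CLI is available.
if command -v aicommit2 >/dev/null 2>&1; then
  aicommit2 config set systemPromptPath="$prompt_file"
fi
```

A provider-specific template could be registered the same way by using a key such as `OPENAI.systemPromptPath` in place of `systemPromptPath`.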

|

|

769

|

+

|

|

770

|

+