@thinkbase/sdk 1.0.0

This diff represents the content of publicly available package versions that have been released to one of the supported registries. The information contained in this diff is provided for informational purposes only and reflects changes between package versions as they appear in their respective public registries.

- package/LICENSE +21 -0

- package/README.md +291 -0

- package/dist/client.d.ts +8 -0

- package/dist/client.js +16 -0

- package/dist/connectors/base.d.ts +9 -0

- package/dist/connectors/base.js +9 -0

- package/dist/connectors/factory.d.ts +5 -0

- package/dist/connectors/factory.js +29 -0

- package/dist/connectors/json.d.ts +7 -0

- package/dist/connectors/json.js +22 -0

- package/dist/connectors/mongodb.d.ts +9 -0

- package/dist/connectors/mongodb.js +39 -0

- package/dist/connectors/mysql.d.ts +8 -0

- package/dist/connectors/mysql.js +38 -0

- package/dist/connectors/postgres.d.ts +8 -0

- package/dist/connectors/postgres.js +35 -0

- package/dist/connectors/rest.d.ts +7 -0

- package/dist/connectors/rest.js +27 -0

- package/dist/context/builder.d.ts +11 -0

- package/dist/context/builder.js +40 -0

- package/dist/dataset.d.ts +21 -0

- package/dist/dataset.js +83 -0

- package/dist/embedding/chunker.d.ts +10 -0

- package/dist/embedding/chunker.js +41 -0

- package/dist/embedding/embedder.d.ts +9 -0

- package/dist/embedding/embedder.js +49 -0

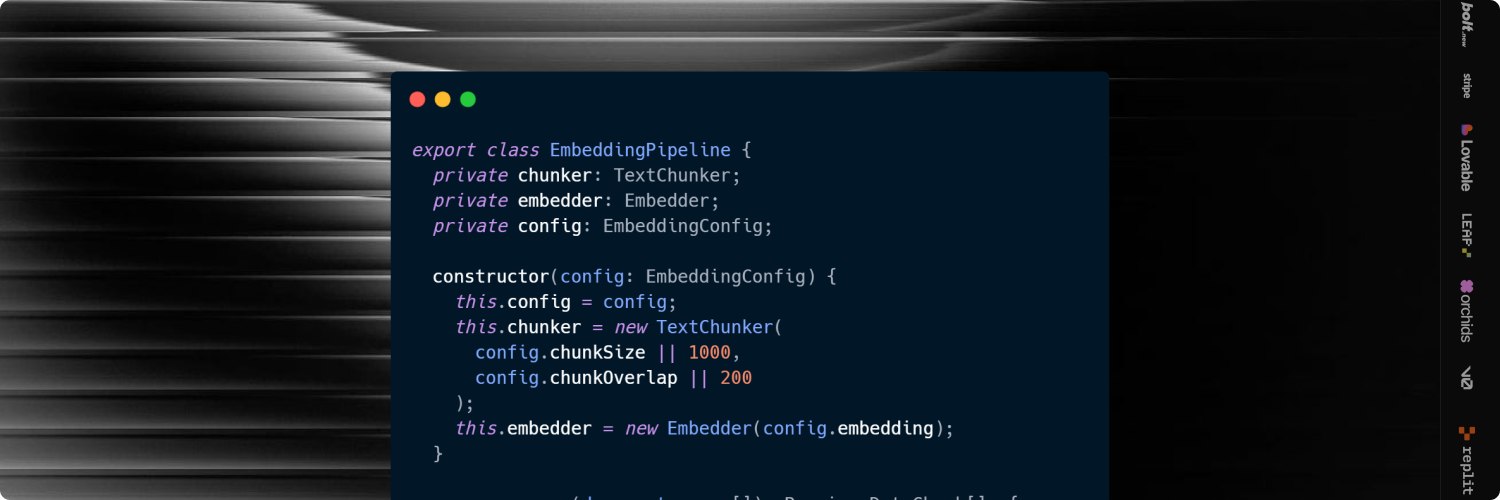

- package/dist/embedding/pipeline.d.ts +8 -0

- package/dist/embedding/pipeline.js +24 -0

- package/dist/index.d.ts +3 -0

- package/dist/index.js +22 -0

- package/dist/query/natural-language.d.ts +9 -0

- package/dist/query/natural-language.js +44 -0

- package/dist/search/vector.d.ts +8 -0

- package/dist/search/vector.js +65 -0

- package/dist/types.d.ts +48 -0

- package/dist/types.js +2 -0

- package/package.json +39 -0

package/LICENSE

ADDED

|

@@ -0,0 +1,21 @@

|

|

|

1

|

+

MIT License

|

|

2

|

+

|

|

3

|

+

Copyright (c) 2026 ThinkBase

|

|

4

|

+

|

|

5

|

+

Permission is hereby granted, free of charge, to any person obtaining a copy

|

|

6

|

+

of this software and associated documentation files (the "Software"), to deal

|

|

7

|

+

in the Software without restriction, including without limitation the rights

|

|

8

|

+

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

|

|

9

|

+

copies of the Software, and to permit persons to whom the Software is

|

|

10

|

+

furnished to do so, subject to the following conditions:

|

|

11

|

+

|

|

12

|

+

The above copyright notice and this permission notice shall be included in all

|

|

13

|

+

copies or substantial portions of the Software.

|

|

14

|

+

|

|

15

|

+

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

|

|

16

|

+

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

|

|

17

|

+

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

|

|

18

|

+

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

|

|

19

|

+

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

|

|

20

|

+

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

|

|

21

|

+

SOFTWARE.

|

package/README.md

ADDED

|

@@ -0,0 +1,291 @@

|

|

|

1

|

+

# ThinkBase SDK

|

|

2

|

+

|

|

3

|

+

|

|

4

|

+

|

|

5

|

+

Turn any data into structured, searchable, and AI-ready context with a single API.

|

|

6

|

+

|

|

7

|

+

<p align="center">

|

|

8

|

+

<img src="https://img.shields.io/badge/Node.js-339933?style=for-the-badge&logo=nodedotjs&logoColor=white" />

|

|

9

|

+

<img src="https://img.shields.io/badge/TypeScript-3178C6?style=for-the-badge&logo=typescript&logoColor=white" />

|

|

10

|

+

<img src="https://img.shields.io/badge/OpenAI-412991?style=for-the-badge&logo=openai&logoColor=white" />

|

|

11

|

+

<img src="https://img.shields.io/badge/PostgreSQL-4169E1?style=for-the-badge&logo=postgresql&logoColor=white" />

|

|

12

|

+

<img src="https://img.shields.io/badge/MySQL-4479A1?style=for-the-badge&logo=mysql&logoColor=white" />

|

|

13

|

+

<img src="https://img.shields.io/badge/MongoDB-47A248?style=for-the-badge&logo=mongodb&logoColor=white" />

|

|

14

|

+

<img src="https://img.shields.io/badge/REST_API-000000?style=for-the-badge&logo=fastapi&logoColor=white" />

|

|

15

|

+

<img src="https://img.shields.io/badge/JSON_Data-000000?style=for-the-badge&logo=json&logoColor=white" />

|

|

16

|

+

<img src="https://img.shields.io/badge/Vector_Search-FF6F00?style=for-the-badge&logo=semanticweb&logoColor=white" />

|

|

17

|

+

</p>

|

|

18

|

+

|

|

19

|

+

|

|

20

|

+

## Installation

|

|

21

|

+

|

|

22

|

+

```bash

|

|

23

|

+

npm i @thinkbase/sdk

|

|

24

|

+

```

|

|

25

|

+

|

|

26

|

+

## Quick Start

|

|

27

|

+

|

|

28

|

+

```typescript

|

|

29

|

+

import { ThinkBase } from '@thinkbase/sdk';

|

|

30

|

+

|

|

31

|

+

const thinkbase = new ThinkBase();

|

|

32

|

+

|

|

33

|

+

const dataset = thinkbase.createDataset({

|

|

34

|

+

source: 'postgres',

|

|

35

|

+

connection: process.env.DATABASE_URL

|

|

36

|

+

});

|

|

37

|

+

|

|

38

|

+

await dataset.sync({

|

|

39

|

+

embedding: 'openai:text-embedding-3-small'

|

|

40

|

+

});

|

|

41

|

+

|

|

42

|

+

const results = await dataset.search('top performing meme coins today');

|

|

43

|

+

```

|

|

44

|

+

|

|

45

|

+

## Core Features

|

|

46

|

+

|

|

47

|

+

### 1. Universal Data Connector

|

|

48

|

+

|

|

49

|

+

Connect to any data source instantly:

|

|

50

|

+

|

|

51

|

+

#### PostgreSQL

|

|

52

|

+

|

|

53

|

+

```typescript

|

|

54

|

+

const dataset = thinkbase.createDataset({

|

|

55

|

+

source: 'postgres',

|

|

56

|

+

connection: process.env.DATABASE_URL,

|

|

57

|

+

query: 'SELECT * FROM products WHERE active = true'

|

|

58

|

+

});

|

|

59

|

+

```

|

|

60

|

+

|

|

61

|

+

#### MySQL

|

|

62

|

+

|

|

63

|

+

```typescript

|

|

64

|

+

const dataset = thinkbase.createDataset({

|

|

65

|

+

source: 'mysql',

|

|

66

|

+

connection: process.env.MYSQL_URL,

|

|

67

|

+

table: 'users'

|

|

68

|

+

});

|

|

69

|

+

```

|

|

70

|

+

|

|

71

|

+

#### MongoDB

|

|

72

|

+

|

|

73

|

+

```typescript

|

|

74

|

+

const dataset = thinkbase.createDataset({

|

|

75

|

+

source: 'mongodb',

|

|

76

|

+

connection: process.env.MONGO_URL,

|

|

77

|

+

collection: 'documents'

|

|

78

|

+

});

|

|

79

|

+

```

|

|

80

|

+

|

|

81

|

+

#### REST API

|

|

82

|

+

|

|

83

|

+

```typescript

|

|

84

|

+

const dataset = thinkbase.createDataset({

|

|

85

|

+

source: 'rest',

|

|

86

|

+

url: 'https://api.example.com/data'

|

|

87

|

+

});

|

|

88

|

+

```

|

|

89

|

+

|

|

90

|

+

#### JSON Data

|

|

91

|

+

|

|

92

|

+

```typescript

|

|

93

|

+

const dataset = thinkbase.createDataset({

|

|

94

|

+

source: 'json',

|

|

95

|

+

data: [

|

|

96

|

+

{ id: 1, name: 'Product A', price: 100 },

|

|

97

|

+

{ id: 2, name: 'Product B', price: 200 }

|

|

98

|

+

]

|

|

99

|

+

});

|

|

100

|

+

```

|

|

101

|

+

|

|

102

|

+

### 2. Auto Embedding Pipeline

|

|

103

|

+

|

|

104

|

+

Automatically convert your data into AI-searchable vectors:

|

|

105

|

+

|

|

106

|

+

```typescript

|

|

107

|

+

await dataset.sync({

|

|

108

|

+

embedding: 'openai:text-embedding-3-small',

|

|

109

|

+

chunkSize: 1000,

|

|

110

|

+

chunkOverlap: 200,

|

|

111

|

+

batchSize: 100

|

|

112

|

+

});

|

|

113

|

+

```

|

|

114

|

+

|

|

115

|

+

Features:

|

|

116

|

+

- Smart text chunking with overlap

|

|

117

|

+

- Multi-model support (OpenAI, and more)

|

|

118

|

+

- Automatic background indexing

|

|

119

|

+

- Batch processing for efficiency

|

|

120

|

+

|

|

121

|

+

### 3. Semantic Search API

|

|

122

|

+

|

|

123

|

+

Query your data using natural language:

|

|

124

|

+

|

|

125

|

+

```typescript

|

|

126

|

+

const results = await dataset.search('top performing meme coins today', {

|

|

127

|

+

limit: 10,

|

|

128

|

+

threshold: 0.7,

|

|

129

|

+

hybrid: false

|

|

130

|

+

});

|

|

131

|

+

|

|

132

|

+

results.forEach(result => {

|

|

133

|

+

console.log(`Score: ${result.score}`);

|

|

134

|

+

console.log(`Content: ${result.content}`);

|

|

135

|

+

});

|

|

136

|

+

```

|

|

137

|

+

|

|

138

|

+

#### Hybrid Search

|

|

139

|

+

|

|

140

|

+

Combine vector similarity with keyword matching:

|

|

141

|

+

|

|

142

|

+

```typescript

|

|

143

|

+

const results = await dataset.search('important updates', {

|

|

144

|

+

limit: 5,

|

|

145

|

+

hybrid: true

|

|

146

|

+

});

|

|

147

|

+

```

|

|

148

|

+

|

|

149

|

+

### 4. AI Query Layer (Natural Language → Data)

|

|

150

|

+

|

|

151

|

+

Ask questions in plain English:

|

|

152

|

+

|

|

153

|

+

```typescript

|

|

154

|

+

const result = await dataset.ask('Which users spent the most last month?');

|

|

155

|

+

|

|

156

|

+

console.log('Generated SQL:', result.sql);

|

|

157

|

+

console.log('Results:', result.data);

|

|

158

|

+

```

|

|

159

|

+

|

|

160

|

+

This translates natural language into SQL and executes it safely.

|

|

161

|

+

|

|

162

|

+

### 5. Context Builder for LLM

|

|

163

|

+

|

|

164

|

+

Prepare optimized context for AI models:

|

|

165

|

+

|

|

166

|

+

```typescript

|

|

167

|

+

const context = await dataset.context({

|

|

168

|

+

query: 'analyze this token',

|

|

169

|

+

limit: 5,

|

|

170

|

+

maxTokens: 4000

|

|

171

|

+

});

|

|

172

|

+

|

|

173

|

+

const response = await openai.chat.completions.create({

|

|

174

|

+

model: 'gpt-4',

|

|

175

|

+

messages: [

|

|

176

|

+

{ role: 'system', content: context },

|

|

177

|

+

{ role: 'user', content: 'What are the key insights?' }

|

|

178

|

+

]

|

|

179

|

+

});

|

|

180

|

+

```

|

|

181

|

+

|

|

182

|

+

#### Structured Context

|

|

183

|

+

|

|

184

|

+

Get structured data instead of formatted text:

|

|

185

|

+

|

|

186

|

+

```typescript

|

|

187

|

+

const chunks = await dataset.contextStructured({

|

|

188

|

+

query: 'product analysis',

|

|

189

|

+

limit: 3

|

|

190

|

+

});

|

|

191

|

+

|

|

192

|

+

chunks.forEach(chunk => {

|

|

193

|

+

console.log(chunk.content);

|

|

194

|

+

console.log('Relevance:', chunk.score);

|

|

195

|

+

});

|

|

196

|

+

```

|

|

197

|

+

|

|

198

|

+

## Complete Example

|

|

199

|

+

|

|

200

|

+

```typescript

|

|

201

|

+

import { ThinkBase } from '@thinkbase/sdk';

|

|

202

|

+

|

|

203

|

+

async function main() {

|

|

204

|

+

const thinkbase = new ThinkBase();

|

|

205

|

+

|

|

206

|

+

const dataset = thinkbase.createDataset({

|

|

207

|

+

source: 'postgres',

|

|

208

|

+

connection: process.env.DATABASE_URL,

|

|

209

|

+

query: 'SELECT * FROM crypto_tokens'

|

|

210

|

+

});

|

|

211

|

+

|

|

212

|

+

await dataset.sync({

|

|

213

|

+

embedding: 'openai:text-embedding-3-small',

|

|

214

|

+

chunkSize: 1000

|

|

215

|

+

});

|

|

216

|

+

|

|

217

|

+

console.log('Stats:', dataset.getStats());

|

|

218

|

+

|

|

219

|

+

const searchResults = await dataset.search(

|

|

220

|

+

'best performing tokens today',

|

|

221

|

+

{ limit: 5, threshold: 0.75 }

|

|

222

|

+

);

|

|

223

|

+

|

|

224

|

+

console.log('Search Results:', searchResults);

|

|

225

|

+

|

|

226

|

+

const queryResult = await dataset.ask(

|

|

227

|

+

'What are the top 5 tokens by market cap?'

|

|

228

|

+

);

|

|

229

|

+

|

|

230

|

+

console.log('Query Results:', queryResult.data);

|

|

231

|

+

console.log('Generated SQL:', queryResult.sql);

|

|

232

|

+

|

|

233

|

+

const context = await dataset.context({

|

|

234

|

+

query: 'token performance analysis',

|

|

235

|

+

limit: 5,

|

|

236

|

+

maxTokens: 3000

|

|

237

|

+

});

|

|

238

|

+

|

|

239

|

+

console.log('LLM Context:', context);

|

|

240

|

+

|

|

241

|

+

await dataset.disconnect();

|

|

242

|

+

}

|

|

243

|

+

|

|

244

|

+

main().catch(console.error);

|

|

245

|

+

```

|

|

246

|

+

|

|

247

|

+

## Environment Variables

|

|

248

|

+

|

|

249

|

+

Set up your API keys:

|

|

250

|

+

|

|

251

|

+

```bash

|

|

252

|

+

OPENAI_API_KEY=your_openai_key

|

|

253

|

+

DATABASE_URL=postgresql://user:pass@host:5432/db

|

|

254

|

+

```

|

|

255

|

+

|

|

256

|

+

## API Reference

|

|

257

|

+

|

|

258

|

+

### ThinkBase

|

|

259

|

+

|

|

260

|

+

Main client for creating datasets.

|

|

261

|

+

|

|

262

|

+

```typescript

|

|

263

|

+

const thinkbase = new ThinkBase(config?: ThinkBaseConfig);

|

|

264

|

+

```

|

|

265

|

+

|

|

266

|

+

### Dataset

|

|

267

|

+

|

|

268

|

+

Main class for working with data.

|

|

269

|

+

|

|

270

|

+

#### Methods

|

|

271

|

+

|

|

272

|

+

- `sync(config: EmbeddingConfig)` - Process and embed data

|

|

273

|

+

- `search(query: string, options?: SearchOptions)` - Semantic search

|

|

274

|

+

- `ask(query: string)` - Natural language query

|

|

275

|

+

- `context(options: ContextOptions)` - Build LLM context

|

|

276

|

+

- `contextStructured(options: ContextOptions)` - Get structured context

|

|

277

|

+

- `getStats()` - Get dataset statistics

|

|

278

|

+

- `connect()` - Connect to data source

|

|

279

|

+

- `disconnect()` - Disconnect from data source

|

|

280

|

+

|

|

281

|

+

## TypeScript Support

|

|

282

|

+

|

|

283

|

+

Full TypeScript definitions included:

|

|

284

|

+

|

|

285

|

+

```typescript

|

|

286

|

+

import { ThinkBase, Dataset, SearchResult, QueryResult } from '@thinkbase/sdk';

|

|

287

|

+

```

|

|

288

|

+

|

|

289

|

+

## License

|

|

290

|

+

|

|

291

|

+

MIT

|

package/dist/client.d.ts

ADDED

|

@@ -0,0 +1,8 @@

|

|

|

1

|

+

import { Dataset } from './dataset';

|

|

2

|

+

import { ThinkBaseConfig, DataSourceConfig } from './types';

|

|

3

|

+

export declare class ThinkBase {

|

|

4

|

+

private config;

|

|

5

|

+

constructor(config?: ThinkBaseConfig);

|

|

6

|

+

createDataset(config: DataSourceConfig): Dataset;

|

|

7

|

+

getConfig(): ThinkBaseConfig;

|

|

8

|

+

}

|

package/dist/client.js

ADDED

|

@@ -0,0 +1,16 @@

|

|

|

1

|

+

"use strict";

|

|

2

|

+

Object.defineProperty(exports, "__esModule", { value: true });

|

|

3

|

+

exports.ThinkBase = void 0;

|

|

4

|

+

const dataset_1 = require("./dataset");

|

|

5

|

+

class ThinkBase {

|

|

6

|

+

constructor(config = {}) {

|

|

7

|

+

this.config = config;

|

|

8

|

+

}

|

|

9

|

+

createDataset(config) {

|

|

10

|

+

return new dataset_1.Dataset(config);

|

|

11

|

+

}

|

|

12

|

+

getConfig() {

|

|

13

|

+

return this.config;

|

|

14

|

+

}

|

|

15

|

+

}

|

|

16

|

+

exports.ThinkBase = ThinkBase;

|

package/dist/connectors/base.d.ts

ADDED

|

@@ -0,0 +1,9 @@

|

|

|

1

|

+

import { DataSourceConfig } from '../types';

|

|

2

|

+

export declare abstract class DataConnector {

|

|

3

|

+

protected config: DataSourceConfig;

|

|

4

|

+

constructor(config: DataSourceConfig);

|

|

5

|

+

abstract connect(): Promise<void>;

|

|

6

|

+

abstract disconnect(): Promise<void>;

|

|

7

|

+

abstract fetchData(): Promise<any[]>;

|

|

8

|

+

abstract getName(): string;

|

|

9

|

+

}

|

package/dist/connectors/factory.js

ADDED

|

@@ -0,0 +1,29 @@

|

|

|

1

|

+

"use strict";

|

|

2

|

+

Object.defineProperty(exports, "__esModule", { value: true });

|

|

3

|

+

exports.ConnectorFactory = void 0;

|

|

4

|

+

const postgres_1 = require("./postgres");

|

|

5

|

+

const mysql_1 = require("./mysql");

|

|

6

|

+

const mongodb_1 = require("./mongodb");

|

|

7

|

+

const rest_1 = require("./rest");

|

|

8

|

+

const json_1 = require("./json");

|

|

9

|

+

class ConnectorFactory {

|

|

10

|

+

static create(config) {

|

|

11

|

+

switch (config.source) {

|

|

12

|

+

case 'postgres':

|

|

13

|

+

return new postgres_1.PostgresConnector(config);

|

|

14

|

+

case 'mysql':

|

|

15

|

+

return new mysql_1.MySQLConnector(config);

|

|

16

|

+

case 'mongodb':

|

|

17

|

+

return new mongodb_1.MongoDBConnector(config);

|

|

18

|

+

case 'rest':

|

|

19

|

+

case 'graphql':

|

|

20

|

+

return new rest_1.RESTConnector(config);

|

|

21

|

+

case 'json':

|

|

22

|

+

case 'csv':

|

|

23

|

+

return new json_1.JSONConnector(config);

|

|

24

|

+

default:

|

|

25

|

+

throw new Error(`Unsupported data source: ${config.source}`);

|

|

26

|

+

}

|

|

27

|

+

}

|

|

28

|

+

}

|

|

29

|

+

exports.ConnectorFactory = ConnectorFactory;

|
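Note on the factory hunk above: the `switch` in `ConnectorFactory.create` has two fall-through cases, so `'graphql'` is served by the REST connector and `'csv'` by the JSON connector. The sketch below reproduces only that dispatch shape. `connectorNameFor` is a hypothetical helper written for this note, and the returned strings are the values the connectors report from `getName()` in this diff.

```typescript
// Hypothetical helper: maps a source string to the name reported by the
// connector that the factory's switch would select for it.
function connectorNameFor(source: string): string {
  switch (source) {
    case "postgres":
      return "PostgreSQL";
    case "mysql":
      return "MySQL";
    case "mongodb":
      return "MongoDB";
    case "rest":
    case "graphql": // falls through: handled by the REST connector
      return "REST API";
    case "json":
    case "csv": // falls through: handled by the JSON connector
      return "JSON";
    default:
      throw new Error(`Unsupported data source: ${source}`);
  }
}

console.log(connectorNameFor("graphql")); // "REST API"
console.log(connectorNameFor("csv")); // "JSON"
```

As far as the hunks in this diff show, neither connector does GraphQL-specific or CSV-specific handling, so those two source names behave exactly like `'rest'` and `'json'`.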

package/dist/connectors/json.js

ADDED

|

@@ -0,0 +1,22 @@

|

|

|

1

|

+

"use strict";

|

|

2

|

+

Object.defineProperty(exports, "__esModule", { value: true });

|

|

3

|

+

exports.JSONConnector = void 0;

|

|

4

|

+

const base_1 = require("./base");

|

|

5

|

+

class JSONConnector extends base_1.DataConnector {

|

|

6

|

+

async connect() {

|

|

7

|

+

// No connection needed for in-memory data

|

|

8

|

+

}

|

|

9

|

+

async disconnect() {

|

|

10

|

+

// No connection to close

|

|

11

|

+

}

|

|

12

|

+

async fetchData() {

|

|

13

|

+

if (!this.config.data) {

|

|

14

|

+

throw new Error('JSON data is required');

|

|

15

|

+

}

|

|

16

|

+

return Array.isArray(this.config.data) ? this.config.data : [this.config.data];

|

|

17

|

+

}

|

|

18

|

+

getName() {

|

|

19

|

+

return 'JSON';

|

|

20

|

+

}

|

|

21

|

+

}

|

|

22

|

+

exports.JSONConnector = JSONConnector;

|

package/dist/connectors/mongodb.js

ADDED

|

@@ -0,0 +1,39 @@

|

|

|

1

|

+

"use strict";

|

|

2

|

+

Object.defineProperty(exports, "__esModule", { value: true });

|

|

3

|

+

exports.MongoDBConnector = void 0;

|

|

4

|

+

const mongodb_1 = require("mongodb");

|

|

5

|

+

const base_1 = require("./base");

|

|

6

|

+

class MongoDBConnector extends base_1.DataConnector {

|

|

7

|

+

constructor() {

|

|

8

|

+

super(...arguments);

|

|

9

|

+

this.client = null;

|

|

10

|

+

this.db = null;

|

|

11

|

+

}

|

|

12

|

+

async connect() {

|

|

13

|

+

if (!this.config.connection) {

|

|

14

|

+

throw new Error('MongoDB connection string is required');

|

|

15

|

+

}

|

|

16

|

+

this.client = new mongodb_1.MongoClient(this.config.connection);

|

|

17

|

+

await this.client.connect();

|

|

18

|

+

this.db = this.client.db();

|

|

19

|

+

}

|

|

20

|

+

async disconnect() {

|

|

21

|

+

if (this.client) {

|

|

22

|

+

await this.client.close();

|

|

23

|

+

this.client = null;

|

|

24

|

+

this.db = null;

|

|

25

|

+

}

|

|

26

|

+

}

|

|

27

|

+

async fetchData() {

|

|

28

|

+

if (!this.db) {

|

|

29

|

+

throw new Error('Not connected to MongoDB');

|

|

30

|

+

}

|

|

31

|

+

const collection = this.config.collection || 'data';

|

|

32

|

+

const documents = await this.db.collection(collection).find({}).toArray();

|

|

33

|

+

return documents;

|

|

34

|

+

}

|

|

35

|

+

getName() {

|

|

36

|

+

return 'MongoDB';

|

|

37

|

+

}

|

|

38

|

+

}

|

|

39

|

+

exports.MongoDBConnector = MongoDBConnector;

|

package/dist/connectors/mysql.js

ADDED

|

@@ -0,0 +1,38 @@

|

|

|

1

|

+

"use strict";

|

|

2

|

+

var __importDefault = (this && this.__importDefault) || function (mod) {

|

|

3

|

+

return (mod && mod.__esModule) ? mod : { "default": mod };

|

|

4

|

+

};

|

|

5

|

+

Object.defineProperty(exports, "__esModule", { value: true });

|

|

6

|

+

exports.MySQLConnector = void 0;

|

|

7

|

+

const promise_1 = __importDefault(require("mysql2/promise"));

|

|

8

|

+

const base_1 = require("./base");

|

|

9

|

+

class MySQLConnector extends base_1.DataConnector {

|

|

10

|

+

constructor() {

|

|

11

|

+

super(...arguments);

|

|

12

|

+

this.connection = null;

|

|

13

|

+

}

|

|

14

|

+

async connect() {

|

|

15

|

+

if (!this.config.connection) {

|

|

16

|

+

throw new Error('MySQL connection string is required');

|

|

17

|

+

}

|

|

18

|

+

this.connection = await promise_1.default.createConnection(this.config.connection);

|

|

19

|

+

}

|

|

20

|

+

async disconnect() {

|

|

21

|

+

if (this.connection) {

|

|

22

|

+

await this.connection.end();

|

|

23

|

+

this.connection = null;

|

|

24

|

+

}

|

|

25

|

+

}

|

|

26

|

+

async fetchData() {

|

|

27

|

+

if (!this.connection) {

|

|

28

|

+

throw new Error('Not connected to MySQL');

|

|

29

|

+

}

|

|

30

|

+

const query = this.config.query || `SELECT * FROM ${this.config.table || 'data'}`;

|

|

31

|

+

const [rows] = await this.connection.execute(query);

|

|

32

|

+

return rows;

|

|

33

|

+

}

|

|

34

|

+

getName() {

|

|

35

|

+

return 'MySQL';

|

|

36

|

+

}

|

|

37

|

+

}

|

|

38

|

+

exports.MySQLConnector = MySQLConnector;

|

package/dist/connectors/postgres.js

ADDED

|

@@ -0,0 +1,35 @@

|

|

|

1

|

+

"use strict";

|

|

2

|

+

Object.defineProperty(exports, "__esModule", { value: true });

|

|

3

|

+

exports.PostgresConnector = void 0;

|

|

4

|

+

const pg_1 = require("pg");

|

|

5

|

+

const base_1 = require("./base");

|

|

6

|

+

class PostgresConnector extends base_1.DataConnector {

|

|

7

|

+

constructor() {

|

|

8

|

+

super(...arguments);

|

|

9

|

+

this.pool = null;

|

|

10

|

+

}

|

|

11

|

+

async connect() {

|

|

12

|

+

if (!this.config.connection) {

|

|

13

|

+

throw new Error('PostgreSQL connection string is required');

|

|

14

|

+

}

|

|

15

|

+

this.pool = new pg_1.Pool({ connectionString: this.config.connection });

|

|

16

|

+

}

|

|

17

|

+

async disconnect() {

|

|

18

|

+

if (this.pool) {

|

|

19

|

+

await this.pool.end();

|

|

20

|

+

this.pool = null;

|

|

21

|

+

}

|

|

22

|

+

}

|

|

23

|

+

async fetchData() {

|

|

24

|

+

if (!this.pool) {

|

|

25

|

+

throw new Error('Not connected to PostgreSQL');

|

|

26

|

+

}

|

|

27

|

+

const query = this.config.query || `SELECT * FROM ${this.config.table || 'data'}`;

|

|

28

|

+

const result = await this.pool.query(query);

|

|

29

|

+

return result.rows;

|

|

30

|

+

}

|

|

31

|

+

getName() {

|

|

32

|

+

return 'PostgreSQL';

|

|

33

|

+

}

|

|

34

|

+

}

|

|

35

|

+

exports.PostgresConnector = PostgresConnector;

|
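Note on the MySQL and PostgreSQL hunks above: both connectors use the configured `query` when present and otherwise build `SELECT * FROM <table>`, defaulting the table to `data`. The table name is interpolated directly into the SQL string. The sketch below mirrors that fallback expression. `QueryConfig`, `resolveQuery`, and `isPlainIdentifier` are hypothetical names for this note, and the identifier check is an assumption about what a cautious caller might add. It is not part of the package.

```typescript
// Local stand-in for the two config fields the connectors read.
interface QueryConfig {
  query?: string;
  table?: string;
}

// Mirrors the fallback used in both connectors' fetchData().
function resolveQuery(config: QueryConfig): string {
  return config.query || `SELECT * FROM ${config.table || "data"}`;
}

// Assumption: a conservative check a caller could run before passing `table`,
// since the connectors shown above do not validate or quote it.
function isPlainIdentifier(name: string): boolean {
  return /^[A-Za-z_][A-Za-z0-9_]*$/.test(name);
}

console.log(resolveQuery({})); // "SELECT * FROM data"
console.log(resolveQuery({ table: "users" })); // "SELECT * FROM users"
console.log(isPlainIdentifier("users; DROP TABLE x")); // false
```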

package/dist/connectors/rest.js

ADDED

|

@@ -0,0 +1,27 @@

|

|

|

1

|

+

"use strict";

|

|

2

|

+

var __importDefault = (this && this.__importDefault) || function (mod) {

|

|

3

|

+

return (mod && mod.__esModule) ? mod : { "default": mod };

|

|

4

|

+

};

|

|

5

|

+

Object.defineProperty(exports, "__esModule", { value: true });

|

|

6

|

+

exports.RESTConnector = void 0;

|

|

7

|

+

const axios_1 = __importDefault(require("axios"));

|

|

8

|

+

const base_1 = require("./base");

|

|

9

|

+

class RESTConnector extends base_1.DataConnector {

|

|

10

|

+

async connect() {

|

|

11

|

+

// No persistent connection needed for REST

|

|

12

|

+

}

|

|

13

|

+

async disconnect() {

|

|

14

|

+

// No persistent connection to close

|

|

15

|

+

}

|

|

16

|

+

async fetchData() {

|

|

17

|

+

if (!this.config.url) {

|

|

18

|

+

throw new Error('REST API URL is required');

|

|

19

|

+

}

|

|

20

|

+

const response = await axios_1.default.get(this.config.url);

|

|

21

|

+

return Array.isArray(response.data) ? response.data : [response.data];

|

|

22

|

+

}

|

|

23

|

+

getName() {

|

|

24

|

+

return 'REST API';

|

|

25

|

+

}

|

|

26

|

+

}

|

|

27

|

+

exports.RESTConnector = RESTConnector;

|
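Note on the REST and JSON hunks above: both connectors normalize their payload with `Array.isArray(x) ? x : [x]`, so a single object becomes a one-element array. A minimal sketch of that normalization follows. `toRecords` is a hypothetical name for this note.

```typescript
// Wraps a non-array payload in an array; arrays pass through unchanged.
function toRecords<T>(payload: T | T[]): T[] {
  return Array.isArray(payload) ? payload : [payload];
}

console.log(toRecords({ id: 1 }).length); // 1
console.log(toRecords([{ id: 1 }, { id: 2 }]).length); // 2

// An envelope object is treated as ONE record, not unwrapped.
console.log(toRecords({ items: [1, 2, 3] }).length); // 1
```

One consequence worth keeping in mind: an API that returns an envelope such as `{ items: [...] }` would be handed on as a single record under this rule, since nothing in the hunks above unwraps nested arrays.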

package/dist/context/builder.d.ts

ADDED

|

@@ -0,0 +1,11 @@

|

|

|

1

|

+

import { SearchResult, ContextOptions } from '../types';

|

|

2

|

+

import { DataChunk } from '../types';

|

|

3

|

+

export declare class ContextBuilder {

|

|

4

|

+

private search;

|

|

5

|

+

private embedder;

|

|

6

|

+

constructor(chunks: DataChunk[], embeddingModel: string);

|

|

7

|

+

build(options: ContextOptions): Promise<string>;

|

|

8

|

+

private formatContext;

|

|

9

|

+

private estimateTokens;

|

|

10

|

+

buildStructured(options: ContextOptions): Promise<SearchResult[]>;

|

|

11

|

+

}

|

package/dist/context/builder.js

ADDED

|

@@ -0,0 +1,40 @@

|

|

|

1

|

+

"use strict";

|

|

2

|

+

Object.defineProperty(exports, "__esModule", { value: true });

|

|

3

|

+

exports.ContextBuilder = void 0;

|

|

4

|

+

const embedder_1 = require("../embedding/embedder");

|

|

5

|

+

const vector_1 = require("../search/vector");

|

|

6

|

+

class ContextBuilder {

|

|

7

|

+

constructor(chunks, embeddingModel) {

|

|

8

|

+

this.search = new vector_1.VectorSearch(chunks);

|

|

9

|

+

this.embedder = new embedder_1.Embedder(embeddingModel);

|

|

10

|

+

}

|

|

11

|

+

async build(options) {

|

|

12

|

+

const queryEmbedding = await this.embedder.embed(options.query);

|

|

13

|

+

const results = this.search.search(queryEmbedding, options.limit || 5, 0.5);

|

|

14

|

+

return this.formatContext(results, options);

|

|

15

|

+

}

|

|

16

|

+

formatContext(results, options) {

|

|

17

|

+

const maxTokens = options.maxTokens || 4000;

|

|

18

|

+

let context = `Relevant context for: "${options.query}"\n\n`;

|

|

19

|

+

let currentTokens = this.estimateTokens(context);

|

|

20

|

+

for (let i = 0; i < results.length; i++) {

|

|

21

|

+

const result = results[i];

|

|

22

|

+

const chunk = `[${i + 1}] (score: ${result.score.toFixed(3)})\n${result.content}\n\n`;

|

|

23

|

+

const chunkTokens = this.estimateTokens(chunk);

|

|

24

|

+

if (currentTokens + chunkTokens > maxTokens) {

|

|

25

|

+

break;

|

|

26

|

+

}

|

|

27

|

+

context += chunk;

|

|

28

|

+

currentTokens += chunkTokens;

|

|

29

|

+

}

|

|

30

|

+

return context.trim();

|

|

31

|

+

}

|

|

32

|

+

estimateTokens(text) {

|

|

33

|

+

return Math.ceil(text.length / 4);

|

|

34

|

+

}

|

|

35

|

+

async buildStructured(options) {

|

|

36

|

+

const queryEmbedding = await this.embedder.embed(options.query);

|

|

37

|

+

return this.search.search(queryEmbedding, options.limit || 5, 0.5);

|

|

38

|

+

}

|

|

39

|

+

}

|

|

40

|

+

exports.ContextBuilder = ContextBuilder;

|
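Note on the context builder hunk above: `estimateTokens` approximates token count as one token per four characters, and `formatContext` appends scored chunks until the next one would exceed `maxTokens`. The sketch below restates that loop as a standalone function so the budget behavior can be tried in isolation. `ScoredChunk` is a local stand-in type, and the function signatures are simplified for this note.

```typescript
// Local stand-in for the two fields of a search result used here.
interface ScoredChunk {
  content: string;
  score: number;
}

// Same heuristic as the compiled code: about four characters per token.
function estimateTokens(text: string): number {
  return Math.ceil(text.length / 4);
}

function formatContext(query: string, results: ScoredChunk[], maxTokens = 4000): string {
  let context = `Relevant context for: "${query}"\n\n`;
  let used = estimateTokens(context);
  let index = 0;
  for (const result of results) {
    index += 1;
    const block = `[${index}] (score: ${result.score.toFixed(3)})\n${result.content}\n\n`;
    const cost = estimateTokens(block);
    if (used + cost > maxTokens) {
      break; // stop at the first block that would overflow the budget
    }
    context += block;
    used += cost;
  }
  return context.trim();
}

const sample: ScoredChunk[] = [
  { content: "alpha", score: 0.9 },
  { content: "beta", score: 0.8 },
];
console.log(formatContext("q", sample, 15)); // includes "alpha" but not "beta"
```

Two properties follow from the loop: the header line is always emitted, even when it alone exceeds the budget, and the loop stops at the first oversized block instead of skipping it and trying smaller ones later in the list.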

package/dist/dataset.d.ts

ADDED

|

@@ -0,0 +1,21 @@

|

|

|

1

|

+

import { DataSourceConfig, EmbeddingConfig, SearchOptions, ContextOptions, DataChunk, SearchResult, QueryResult } from './types';

|

|

2

|

+

export declare class Dataset {

|

|

3

|

+

private connector;

|

|

4

|

+

private chunks;

|

|

5

|

+

private embeddingConfig?;

|

|

6

|

+

private nlQuery?;

|

|

7

|

+

private contextBuilder?;

|

|

8

|

+

constructor(config: DataSourceConfig);

|

|

9

|

+

connect(): Promise<void>;

|

|

10

|

+

disconnect(): Promise<void>;

|

|

11

|

+

sync(config: EmbeddingConfig): Promise<void>;

|

|

12

|

+

search(query: string, options?: SearchOptions): Promise<SearchResult[]>;

|

|

13

|

+

ask(query: string): Promise<QueryResult>;

|

|

14

|

+

context(options: ContextOptions): Promise<string>;

|

|

15

|

+

contextStructured(options: ContextOptions): Promise<SearchResult[]>;

|

|

16

|

+

getChunks(): DataChunk[];

|

|

17

|

+

getStats(): {

|

|

18

|

+

totalChunks: number;

|

|

19

|

+

totalDocuments: number;

|

|

20

|

+

};

|

|

21

|

+

}

|

package/dist/dataset.js

ADDED

|

@@ -0,0 +1,83 @@

|

|

|

1

|

+

"use strict";

|

|

2

|

+

Object.defineProperty(exports, "__esModule", { value: true });

|

|

3

|

+

exports.Dataset = void 0;

|

|

4

|

+

const factory_1 = require("./connectors/factory");

|

|

5

|

+

const pipeline_1 = require("./embedding/pipeline");

|

|

6

|

+

const vector_1 = require("./search/vector");

|

|

7

|

+

const natural_language_1 = require("./query/natural-language");

|

|

8

|

+

const builder_1 = require("./context/builder");

|

|

9

|

+

const embedder_1 = require("./embedding/embedder");

|

|

10

|

+

class Dataset {

|

|

11

|

+

constructor(config) {

|

|

12

|

+

this.chunks = [];

|

|

13

|

+

this.connector = factory_1.ConnectorFactory.create(config);

|

|

14

|

+

}

|

|

15

|

+

async connect() {

|

|

16

|

+

await this.connector.connect();

|

|

17

|

+

}

|

|

18

|

+

async disconnect() {

|

|

19

|

+

await this.connector.disconnect();

|

|

20

|

+

}

|

|

21

|

+

async sync(config) {

|

|

22

|

+

this.embeddingConfig = config;

|

|

23

|

+

await this.connector.connect();

|

|

24

|

+

const data = await this.connector.fetchData();

|

|

25

|

+

await this.connector.disconnect();

|

|

26

|

+

const pipeline = new pipeline_1.EmbeddingPipeline(config);

|

|

27

|

+

this.chunks = await pipeline.process(data);

|

|

28

|

+

this.contextBuilder = new builder_1.ContextBuilder(this.chunks, config.embedding);

|

|

29

|

+

this.nlQuery = new natural_language_1.NaturalLanguageQuery();

|

|

30

|

+

}

|

|

31

|

+

async search(query, options = {}) {

+        if (!this.embeddingConfig) {
+            throw new Error('Dataset not synced. Call sync() first.');
+        }
+        const embedder = new embedder_1.Embedder(this.embeddingConfig.embedding);
+        const queryEmbedding = await embedder.embed(query);
+        const search = new vector_1.VectorSearch(this.chunks);
+        if (options.hybrid) {
+            const keywords = query.toLowerCase().split(' ');
+            return search.hybridSearch(queryEmbedding, keywords, options.limit || 10, 0.7);
+        }
+        return search.search(queryEmbedding, options.limit || 10, options.threshold || 0.7);
+    }
+    async ask(query) {
+        if (!this.nlQuery) {
+            this.nlQuery = new natural_language_1.NaturalLanguageQuery();
+        }
+        await this.connector.connect();
+        try {
+            const result = await this.nlQuery.executeQuery(query, async (sql) => {
+                return await this.connector.fetchData();
+            });
+            await this.connector.disconnect();
+            return result;
+        }
+        catch (error) {
+            await this.connector.disconnect();
+            throw error;
+        }
+    }
+    async context(options) {
+        if (!this.contextBuilder) {
+            throw new Error('Dataset not synced. Call sync() first.');
+        }
+        return this.contextBuilder.build(options);
+    }
+    async contextStructured(options) {
+        if (!this.contextBuilder) {
+            throw new Error('Dataset not synced. Call sync() first.');
+        }
+        return this.contextBuilder.buildStructured(options);
+    }
+    getChunks() {
+        return this.chunks;
+    }
+    getStats() {
+        return {
+            totalChunks: this.chunks.length,
+            totalDocuments: this.chunks.length
+        };
+    }
+}
+exports.Dataset = Dataset;
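Two details of `search()` above are easy to miss: `options.threshold || 0.7` replaces an explicit `threshold: 0` with the default, and `split(' ')` keeps empty strings when the query has repeated or trailing spaces. The sketch below shows one possible way to handle both. The helper names `resolveSearchOptions` and `extractKeywords` are hypothetical and not part of the package.

```javascript
// Hypothetical helpers, not part of @thinkbase/sdk.

// Resolve search options with `??` so that explicit falsy values such as
// threshold: 0 are kept; `||` would replace them with the default.
function resolveSearchOptions(options = {}) {
    return {
        limit: options.limit ?? 10,
        threshold: options.threshold ?? 0.7,
        hybrid: Boolean(options.hybrid)
    };
}

// Split a query into lowercase keywords, dropping empty tokens and duplicates.
function extractKeywords(query) {
    const tokens = query.toLowerCase().split(/\s+/).filter(t => t.length > 0);
    return [...new Set(tokens)];
}

// An explicit threshold of 0 survives the defaulting step.
const resolved = resolveSearchOptions({ threshold: 0 });

// The split used in the diff keeps empty strings for extra spaces.
const naiveTokens = 'vector  search '.toLowerCase().split(' ');

// The stricter tokenizer drops them and removes the repeated word.
const keywords = extractKeywords('Vector  search vector ');
```

Whether a threshold of 0 should be allowed is a design decision for the package; the sketch only shows how the two defaulting operators differ.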

@@ -0,0 +1,10 @@
+export declare class TextChunker {
+    private chunkSize;
+    private chunkOverlap;
+    constructor(chunkSize?: number, chunkOverlap?: number);
+    chunk(text: string): string[];
+    chunkDocuments(documents: any[]): Array<{
+        content: string;
+        metadata: any;
+    }>;
+}

@@ -0,0 +1,41 @@
+"use strict";
+Object.defineProperty(exports, "__esModule", { value: true });
+exports.TextChunker = void 0;
+class TextChunker {
+    constructor(chunkSize = 1000, chunkOverlap = 200) {
+        this.chunkSize = chunkSize;
+        this.chunkOverlap = chunkOverlap;
+    }
+    chunk(text) {
+        const chunks = [];
+        let start = 0;
+        while (start < text.length) {
+            const end = Math.min(start + this.chunkSize, text.length);
+            let chunk = text.slice(start, end);
+            if (end < text.length) {
+                const lastSpace = chunk.lastIndexOf(' ');
+                if (lastSpace > 0) {
+                    chunk = chunk.slice(0, lastSpace);
+                }
+            }
+            chunks.push(chunk.trim());
+            start += this.chunkSize - this.chunkOverlap;
+        }
+        return chunks.filter(c => c.length > 0);
+    }
+    chunkDocuments(documents) {
+        const chunkedDocs = [];
+        for (const doc of documents) {
+            const text = typeof doc === 'string' ? doc : JSON.stringify(doc);
+            const chunks = this.chunk(text);
+            for (const chunk of chunks) {
+                chunkedDocs.push({
+                    content: chunk,
+                    metadata: typeof doc === 'object' ? doc : {}
+                });
+            }
+        }
+        return chunkedDocs;
+    }
+}
+exports.TextChunker = TextChunker;
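The `chunk()` method above slides a window of `chunkSize` characters forward by `chunkSize - chunkOverlap` on each pass. If the overlap is equal to or larger than the size, that step is zero or negative and the loop cannot finish. The standalone sketch below mirrors the same logic with a guard added. The function name `chunkText` and the guard are assumptions for illustration, not the package's API.

```javascript
// Standalone sketch of the sliding-window chunking shown in the diff.
// `chunkText` is a hypothetical name; the step guard is an added assumption.
function chunkText(text, chunkSize = 1000, chunkOverlap = 200) {
    const step = chunkSize - chunkOverlap;
    if (step <= 0) {
        // Without this check the window would never advance.
        throw new RangeError('chunkOverlap must be smaller than chunkSize');
    }
    const chunks = [];
    for (let start = 0; start < text.length; start += step) {
        const end = Math.min(start + chunkSize, text.length);
        let chunk = text.slice(start, end);
        if (end < text.length) {
            // Prefer to cut at the last space so the chunk ends on a word.
            const lastSpace = chunk.lastIndexOf(' ');
            if (lastSpace > 0) {
                chunk = chunk.slice(0, lastSpace);
            }
        }
        chunks.push(chunk.trim());
    }
    // Drop chunks that became empty after trimming.
    return chunks.filter(c => c.length > 0);
}

// Small sizes make the window behaviour easy to inspect by hand.
const sample = chunkText('alpha beta gamma delta', 10, 4);
```

Because the start position advances by a fixed step regardless of where the previous chunk was cut, later windows can begin in the middle of a word; only the end of each chunk is aligned to a space.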

@@ -0,0 +1,49 @@
+"use strict";
+var __importDefault = (this && this.__importDefault) || function (mod) {
+    return (mod && mod.__esModule) ? mod : { "default": mod };
+};
+Object.defineProperty(exports, "__esModule", { value: true });
+exports.Embedder = void 0;
+const openai_1 = __importDefault(require("openai"));
+class Embedder {
+    constructor(model) {
+        this.client = null;
+        this.model = model;
+        if (this.isOpenAIModel()) {
+            this.client = new openai_1.default({
+                apiKey: process.env.OPENAI_API_KEY
+            });
+        }
+    }
+    isOpenAIModel() {
+        return this.model.startsWith('openai:');
+    }
+    getModelName() {
+        return this.model.replace('openai:', '');
+    }
+    async embed(text) {
+        if (this.isOpenAIModel() && this.client) {
+            const response = await this.client.embeddings.create({
+                model: this.getModelName(),
+                input: text
+            });
+            return response.data[0].embedding;
+        }
+        throw new Error(`Unsupported embedding model: ${this.model}`);
+    }
+    async embedBatch(texts, batchSize = 100) {
+        const embeddings = [];
+        for (let i = 0; i < texts.length; i += batchSize) {
+            const batch = texts.slice(i, i + batchSize);
+            if (this.isOpenAIModel() && this.client) {
+                const response = await this.client.embeddings.create({
+                    model: this.getModelName(),
+                    input: batch
+                });
+                embeddings.push(...response.data.map(d => d.embedding));
+            }
+        }
+        return embeddings;
+    }
+}
+exports.Embedder = Embedder;
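In the `Embedder` above, `embed()` throws for a model string without the `openai:` prefix, while `embedBatch()` has no fallback branch and returns an empty list in that case. The sketch below isolates the two pure steps involved, prefix handling and batch slicing, so they can be checked without calling any API. `parseModel` and `toBatches` are hypothetical names, and the model string is only an example value.

```javascript
// Hypothetical helpers mirroring two small steps of the Embedder in the diff.

// Split a "provider:model" string; only the "openai:" prefix is accepted,
// matching what isOpenAIModel() checks for.
function parseModel(model) {
    const prefix = 'openai:';
    if (!model.startsWith(prefix)) {
        throw new Error(`Unsupported embedding model: ${model}`);
    }
    return model.slice(prefix.length);
}

// Slice a list into consecutive batches of at most `batchSize` items.
function toBatches(items, batchSize = 100) {
    if (!Number.isInteger(batchSize) || batchSize <= 0) {
        throw new RangeError('batchSize must be a positive integer');
    }
    const batches = [];
    for (let i = 0; i < items.length; i += batchSize) {
        batches.push(items.slice(i, i + batchSize));
    }
    return batches;
}

// Example values only; the model name is an illustration.
const modelName = parseModel('openai:text-embedding-3-small');
const batches = toBatches(['a', 'b', 'c', 'd', 'e'], 2);
```

Validating the model once, before the batching loop, would make the batch path fail in the same way as the single-text path; whether that is desirable is left to the package authors.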

@@ -0,0 +1,24 @@
+"use strict";
+Object.defineProperty(exports, "__esModule", { value: true });
+exports.EmbeddingPipeline = void 0;
+const chunker_1 = require("./chunker");
+const embedder_1 = require("./embedder");
+class EmbeddingPipeline {
+    constructor(config) {
+        this.config = config;
+        this.chunker = new chunker_1.TextChunker(config.chunkSize || 1000, config.chunkOverlap || 200);
+        this.embedder = new embedder_1.Embedder(config.embedding);
+    }
+    async process(documents) {
+        const chunked = this.chunker.chunkDocuments(documents);
+        const texts = chunked.map(doc => doc.content);
+        const embeddings = await this.embedder.embedBatch(texts, this.config.batchSize || 100);
+        return chunked.map((doc, index) => ({
+            id: `chunk_${index}`,
+            content: doc.content,
+            embedding: embeddings[index],
+            metadata: doc.metadata
+        }));
+    }
+}
+exports.EmbeddingPipeline = EmbeddingPipeline;
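`process()` above pairs each chunk with `embeddings[index]`, so a shorter embeddings list would leave some records with `embedding: undefined`. As a minimal sketch, assuming a hypothetical helper `attachEmbeddings` that is not in the package, the pairing step could check the lengths first:

```javascript
// Hypothetical helper: pair chunk records with embeddings by position,
// failing loudly if the two lists differ in length.
function attachEmbeddings(chunked, embeddings) {
    if (chunked.length !== embeddings.length) {
        throw new Error(
            `Expected ${chunked.length} embeddings, got ${embeddings.length}`
        );
    }
    // Same record shape as the objects returned by process() in the diff.
    return chunked.map((doc, index) => ({
        id: `chunk_${index}`,
        content: doc.content,
        embedding: embeddings[index],
        metadata: doc.metadata
    }));
}

// Made-up two-dimensional vectors keep the example readable.
const records = attachEmbeddings(
    [{ content: 'hello', metadata: {} }, { content: 'world', metadata: {} }],
    [[0.1, 0.2], [0.3, 0.4]]
);
```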

package/dist/index.d.ts

ADDED

package/dist/index.js

ADDED

@@ -0,0 +1,22 @@
+"use strict";
+var __createBinding = (this && this.__createBinding) || (Object.create ? (function(o, m, k, k2) {
+    if (k2 === undefined) k2 = k;
+    var desc = Object.getOwnPropertyDescriptor(m, k);
+    if (!desc || ("get" in desc ? !m.__esModule : desc.writable || desc.configurable)) {
+      desc = { enumerable: true, get: function() { return m[k]; } };
+    }
+    Object.defineProperty(o, k2, desc);
+}) : (function(o, m, k, k2) {
+    if (k2 === undefined) k2 = k;
+    o[k2] = m[k];
+}));
+var __exportStar = (this && this.__exportStar) || function(m, exports) {
+    for (var p in m) if (p !== "default" && !Object.prototype.hasOwnProperty.call(exports, p)) __createBinding(exports, m, p);
+};
+Object.defineProperty(exports, "__esModule", { value: true });
+exports.Dataset = exports.ThinkBase = void 0;
+var client_1 = require("./client");
+Object.defineProperty(exports, "ThinkBase", { enumerable: true, get: function () { return client_1.ThinkBase; } });
+var dataset_1 = require("./dataset");
+Object.defineProperty(exports, "Dataset", { enumerable: true, get: function () { return dataset_1.Dataset; } });
+__exportStar(require("./types"), exports);

@@ -0,0 +1,9 @@
+import { QueryResult } from '../types';
+export declare class NaturalLanguageQuery {
+    private client;
+    private schema;
+    constructor(schema?: any);
+    setSchema(schema: any): void;
+    translateToSQL(query: string, dialect?: string): Promise<string>;
+    executeQuery(query: string, executor: (sql: string) => Promise<any[]>): Promise<QueryResult>;
+}

@@ -0,0 +1,44 @@
+"use strict";
+var __importDefault = (this && this.__importDefault) || function (mod) {
+    return (mod && mod.__esModule) ? mod : { "default": mod };
+};
+Object.defineProperty(exports, "__esModule", { value: true });
+exports.NaturalLanguageQuery = void 0;
+const openai_1 = __importDefault(require("openai"));
+class NaturalLanguageQuery {
+    constructor(schema) {
+        this.client = new openai_1.default({
+            apiKey: process.env.OPENAI_API_KEY
+        });
+        this.schema = schema;
+    }
+    setSchema(schema) {
+        this.schema = schema;
+    }
+    async translateToSQL(query, dialect = 'postgres') {
+        const schemaContext = this.schema
+            ? `Database schema:\n${JSON.stringify(this.schema, null, 2)}\n\n`
+            : '';
+        const prompt = `${schemaContext}Convert this natural language query to ${dialect} SQL:
+"${query}"
+
+Return ONLY the SQL query, no explanations.`;
+        const response = await this.client.chat.completions.create({
+            model: 'gpt-4o-mini',
+            messages: [{ role: 'user', content: prompt }],
+            temperature: 0
+        });
+        return response.choices[0].message.content?.trim() || '';
+    }
+    async executeQuery(query, executor) {
+        const sql = await this.translateToSQL(query);
+        try {
+            const data = await executor(sql);
+            return { data, sql };
+        }
+        catch (error) {
+            throw new Error(`Query execution failed: ${error.message}`);
+        }
+    }
+}
+exports.NaturalLanguageQuery = NaturalLanguageQuery;
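`translateToSQL()` above returns the model reply after a plain `trim()`. Chat models can still wrap a reply in a Markdown code fence even when asked for SQL only, so a caller may want a clean-up step before passing the string to an executor. The following is a minimal sketch of such a step, assuming a hypothetical helper `stripCodeFence` that is not part of the package.

```javascript
// Hypothetical post-processing step, not part of the package: remove a
// surrounding Markdown code fence from a model reply before using it as SQL.
const FENCE = '`'.repeat(3); // built indirectly so this example nests cleanly

function stripCodeFence(reply) {
    const text = (reply || '').trim();
    // Leave the text alone unless it both starts and ends with a fence.
    if (!text.startsWith(FENCE) || !text.endsWith(FENCE) || text.length < 6) {
        return text;
    }
    const inner = text.slice(FENCE.length, text.length - FENCE.length);
    // Drop an optional language tag such as "sql" on the opening fence line.
    const firstNewline = inner.indexOf('\n');
    const body = firstNewline === -1 ? inner : inner.slice(firstNewline + 1);
    return body.trim();
}

// A fenced reply with a language tag, and a plain reply with stray spaces.
const fenced = `${FENCE}sql\nSELECT 1;\n${FENCE}`;
const cleanedSql = stripCodeFence(fenced);
const untouched = stripCodeFence('  SELECT 2;  ');
```

This only normalizes formatting. It does not validate the SQL or make generated statements safe to run.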

@@ -0,0 +1,8 @@
+import { DataChunk, SearchResult } from '../types';
+export declare class VectorSearch {
+    private chunks;
+    constructor(chunks: DataChunk[]);
+    cosineSimilarity(a: number[], b: number[]): number;
+    search(queryEmbedding: number[], limit?: number, threshold?: number): SearchResult[];
+    hybridSearch(queryEmbedding: number[], keywords: string[], limit?: number, vectorWeight?: number): SearchResult[];
+}

@@ -0,0 +1,65 @@
+"use strict";
+Object.defineProperty(exports, "__esModule", { value: true });
+exports.VectorSearch = void 0;
+class VectorSearch {
+    constructor(chunks) {
+        this.chunks = chunks;
+    }
+    cosineSimilarity(a, b) {
+        if (a.length !== b.length) {
+            throw new Error('Vectors must have the same length');
+        }
+        let dotProduct = 0;
+        let normA = 0;
+        let normB = 0;
+        for (let i = 0; i < a.length; i++) {
+            dotProduct += a[i] * b[i];
+            normA += a[i] * a[i];
+            normB += b[i] * b[i];
+        }
+        return dotProduct / (Math.sqrt(normA) * Math.sqrt(normB));
+    }
+    search(queryEmbedding, limit = 5, threshold = 0.7) {
+        const results = [];
+        for (const chunk of this.chunks) {
+            if (!chunk.embedding)
+                continue;
+            const score = this.cosineSimilarity(queryEmbedding, chunk.embedding);
+            if (score >= threshold) {
+                results.push({
+                    id: chunk.id,
+                    content: chunk.content,
+                    score,
+                    metadata: chunk.metadata
+                });
+            }
+        }
+        return results
+            .sort((a, b) => b.score - a.score)
+            .slice(0, limit);
+    }
+    hybridSearch(queryEmbedding, keywords, limit = 5, vectorWeight = 0.7) {
+        const results = [];
+        const keywordWeight = 1 - vectorWeight;
+        for (const chunk of this.chunks) {
+            if (!chunk.embedding)
+                continue;
+            const vectorScore = this.cosineSimilarity(queryEmbedding, chunk.embedding);
+            const lowerContent = chunk.content.toLowerCase();
+            const keywordScore = keywords.reduce((score, keyword) => {
+                return score + (lowerContent.includes(keyword.toLowerCase()) ? 1 : 0);
+            }, 0) / keywords.length;
+            const combinedScore = (vectorScore * vectorWeight) + (keywordScore * keywordWeight);
+            results.push({
+                id: chunk.id,
+                content: chunk.content,
+                score: combinedScore,
+                metadata: chunk.metadata
+            });
+        }
+        return results
+            .sort((a, b) => b.score - a.score)
+            .slice(0, limit);
+    }
+}
+exports.VectorSearch = VectorSearch;
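The scoring in `VectorSearch` above combines a cosine similarity with the fraction of keywords found in the chunk text, weighted by `vectorWeight` and `1 - vectorWeight`. Both divisions can produce `NaN`: the cosine when either vector is all zeros, and the keyword fraction when the keyword list is empty. The standalone sketch below reproduces the arithmetic with guards added for those two cases. The guards and the function name `hybridScore` are assumptions for illustration.

```javascript
// Standalone sketch of the scoring used by search() and hybridSearch() in the
// diff. The zero-norm and empty-keyword guards are added assumptions.
function cosineSimilarity(a, b) {
    if (a.length !== b.length) {
        throw new Error('Vectors must have the same length');
    }
    let dot = 0;
    let normA = 0;
    let normB = 0;
    for (let i = 0; i < a.length; i++) {
        dot += a[i] * b[i];
        normA += a[i] * a[i];
        normB += b[i] * b[i];
    }
    const denom = Math.sqrt(normA) * Math.sqrt(normB);
    return denom === 0 ? 0 : dot / denom; // avoid NaN for all-zero vectors
}

function hybridScore(vectorScore, content, keywords, vectorWeight = 0.7) {
    const lower = content.toLowerCase();
    const hits = keywords.filter(k => lower.includes(k.toLowerCase())).length;
    // Fraction of keywords found; 0 when no keywords were supplied.
    const keywordScore = keywords.length === 0 ? 0 : hits / keywords.length;
    return vectorScore * vectorWeight + keywordScore * (1 - vectorWeight);
}

// Identical, orthogonal, and all-zero inputs cover the main cases.
const same = cosineSimilarity([1, 0], [1, 0]);
const orthogonal = cosineSimilarity([1, 0], [0, 1]);
const zero = cosineSimilarity([0, 0], [1, 0]);

// One of two keywords matches, so the keyword fraction is 0.5.
const combined = hybridScore(0.5, 'Vector search in JS', ['vector', 'python']);
```

Note that keyword matching here, as in the diff, is a substring test, so an empty-string keyword would match every chunk.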

package/dist/types.d.ts

ADDED

@@ -0,0 +1,48 @@
+export interface ThinkBaseConfig {
+    apiKey?: string;
+    apiUrl?: string;
+}
+export type DataSourceType = 'postgres' | 'mysql' | 'mongodb' | 'rest' | 'graphql' | 'csv' | 'json' | 'webscrape';
+export interface DataSourceConfig {
+    source: DataSourceType;
+    connection?: string;