@qwen-code/qwen-code 0.14.0-preview.5 → 0.14.1-preview.0

This diff represents the content of publicly available package versions that have been released to one of the supported registries. The information contained in this diff is provided for informational purposes only and reflects changes between package versions as they appear in their respective public registries.

- package/README.md +18 -26

- package/bundled/qc-helper/docs/configuration/settings.md +9 -0

- package/bundled/qc-helper/docs/features/_meta.ts +1 -0

- package/bundled/qc-helper/docs/features/commands.md +86 -17

- package/bundled/qc-helper/docs/features/followup-suggestions.md +109 -0

- package/bundled/qc-helper/docs/overview.md +1 -0

- package/cli.js +5451 -3254

- package/locales/de.js +14 -2

- package/locales/en.js +13 -2

- package/locales/ja.js +12 -2

- package/locales/pt.js +14 -2

- package/locales/ru.js +14 -2

- package/locales/zh.js +11 -2

- package/package.json +2 -2

package/README.md

CHANGED

|

@@ -18,19 +18,23 @@

|

|

|

18

18

|

|

|

19

19

|

</div>

|

|

20

20

|

|

|

21

|

-

|

|

21

|

+

## 🎉 News

|

|

22

22

|

|

|

23

|

-

|

|

23

|

+

- **2026-04-02**: Qwen3.6-Plus is now live! Sign in via Qwen OAuth to use it directly, or get an API key from [Alibaba Cloud ModelStudio](https://modelstudio.console.alibabacloud.com/ap-southeast-1?tab=doc#/doc/?type=model&url=2840914_2&modelId=qwen3.6-plus) to access it through the OpenAI-compatible API.

|

|

24

24

|

|

|

25

|

-

|

|

25

|

+

- **2026-02-16**: Qwen3.5-Plus is now live!

|

|

26

26

|

|

|

27

27

|

## Why Qwen Code?

|

|

28

28

|

|

|

29

|

+

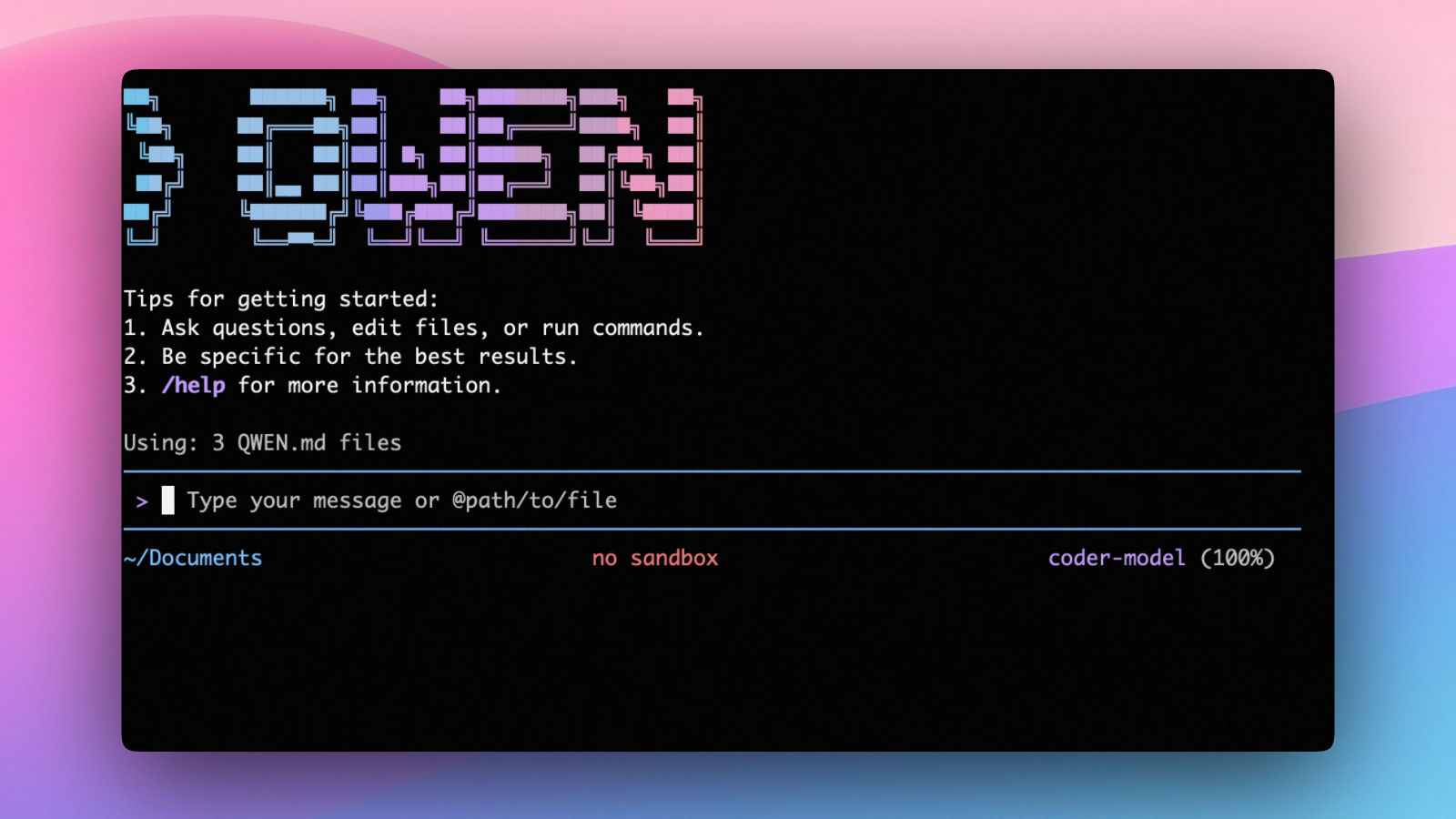

Qwen Code is an open-source AI agent for the terminal, optimized for Qwen series models. It helps you understand large codebases, automate tedious work, and ship faster.

|

|

30

|

+

|

|

29

31

|

- **Multi-protocol, OAuth free tier**: use OpenAI / Anthropic / Gemini-compatible APIs, or sign in with Qwen OAuth for 1,000 free requests/day.

|

|

30

32

|

- **Open-source, co-evolving**: both the framework and the Qwen3-Coder model are open-source—and they ship and evolve together.

|

|

31

33

|

- **Agentic workflow, feature-rich**: rich built-in tools (Skills, SubAgents) for a full agentic workflow and a Claude Code-like experience.

|

|

32

34

|

- **Terminal-first, IDE-friendly**: built for developers who live in the command line, with optional integration for VS Code, Zed, and JetBrains IDEs.

|

|

33

35

|

|

|

36

|

+

|

|

37

|

+

|

|

34

38

|

## Installation

|

|

35

39

|

|

|

36

40

|

### Quick Install (Recommended)

|

|

    @@ -148,8 +152,8 @@ Here is a complete example:
     "modelProviders": {
     "openai": [
     {
    -"id": "qwen3-
    -"name": "qwen3-
    +"id": "qwen3.6-plus",
    +"name": "qwen3.6-plus",
     "baseUrl": "https://dashscope.aliyuncs.com/compatible-mode/v1",
     "description": "Qwen3-Coder via Dashscope",
     "envKey": "DASHSCOPE_API_KEY"

    @@ -165,7 +169,7 @@ Here is a complete example:
     }
     },
     "model": {
    -"name": "qwen3-
    +"name": "qwen3.6-plus"
     }
     }
     ```

    @@ -175,7 +179,7 @@ Here is a complete example:
     | Field | What it does |
     | ---------------------------- | ------------------------------------------------------------------------------------------------------------------------------------- |
     | `modelProviders` | Declares which models are available and how to connect to them. Keys like `openai`, `anthropic`, `gemini` represent the API protocol. |
    -| `modelProviders[].id` | The model ID sent to the API (e.g. `qwen3-
    +| `modelProviders[].id` | The model ID sent to the API (e.g. `qwen3.6-plus`, `gpt-4o`). |
     | `modelProviders[].envKey` | The name of the environment variable that holds your API key. |
     | `modelProviders[].baseUrl` | The API endpoint URL (required for non-default endpoints). |
     | `env` | A fallback place to store API keys (lowest priority; prefer `.env` files or `export` for sensitive keys). |
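Read together, the field table describes a lookup: the protocol key selects the API family, `id` is the string sent to the API, and `envKey` names the variable that holds the key. The TypeScript below is a minimal sketch of that reading, not Qwen Code's implementation; the `ProviderEntry` and `Settings` types and the `resolveProvider` helper are hypothetical, and matching `model.name` against `modelProviders[].id` is an assumption based on the README examples.

```typescript
// Hypothetical shapes inferred from the README's field table; not Qwen Code's real types.
interface ProviderEntry {
  id: string; // model ID sent to the API
  name?: string; // display name
  baseUrl?: string; // endpoint, needed for non-default endpoints
  description?: string;
  envKey: string; // name of the env var holding the API key
  generationConfig?: { extra_body?: Record<string, unknown> };
}

interface Settings {
  // Keys such as "openai", "anthropic", "gemini" name the API protocol.
  modelProviders: Record<string, ProviderEntry[]>;
  model: { name: string };
}

interface ResolvedModel {
  protocol: string;
  entry: ProviderEntry;
}

// Assumption: model.name is matched against modelProviders[].id.
function resolveProvider(settings: Settings): ResolvedModel | undefined {
  for (const [protocol, entries] of Object.entries(settings.modelProviders)) {
    const entry = entries.find((e) => e.id === settings.model.name);
    if (entry) return { protocol, entry };
  }
  return undefined;
}

const example: Settings = {
  modelProviders: {
    openai: [
      {
        id: "qwen3.6-plus",
        name: "qwen3.6-plus",
        baseUrl: "https://dashscope.aliyuncs.com/compatible-mode/v1",
        envKey: "DASHSCOPE_API_KEY",
      },
    ],
  },
  model: { name: "qwen3.6-plus" },
};

const resolved = resolveProvider(example);
console.log(resolved?.protocol, resolved?.entry.envKey); // openai DASHSCOPE_API_KEY
```

Scanning every protocol key keeps the sketch agnostic to which API family a model lives under; a real loader would also need to decide what happens when two protocols declare the same `id`.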

    @@ -200,29 +204,17 @@ Use the `/model` command at any time to switch between all configured models.
     "modelProviders": {
     "openai": [
     {
    -"id": "qwen3.
    -"name": "qwen3.
    +"id": "qwen3.6-plus",
    +"name": "qwen3.6-plus (Coding Plan)",
     "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
    -"description": "qwen3.
    -"envKey": "BAILIAN_CODING_PLAN_API_KEY",
    -"generationConfig": {
    -"extra_body": {
    -"enable_thinking": true
    -}
    -}
    -},
    -{
    -"id": "qwen3-coder-plus",
    -"name": "qwen3-coder-plus (Coding Plan)",
    -"baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
    -"description": "qwen3-coder-plus from ModelStudio Coding Plan",
    +"description": "qwen3.6-plus from ModelStudio Coding Plan",
     "envKey": "BAILIAN_CODING_PLAN_API_KEY"
     },
     {
    -"id": "qwen3-
    -"name": "qwen3-
    +"id": "qwen3.5-plus",
    +"name": "qwen3.5-plus (Coding Plan)",
     "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
    -"description": "qwen3-
    +"description": "qwen3.5-plus with thinking enabled from ModelStudio Coding Plan",
     "envKey": "BAILIAN_CODING_PLAN_API_KEY",
     "generationConfig": {
     "extra_body": {
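In the Coding Plan example, the `qwen3.5-plus` entry keeps a `generationConfig.extra_body` block with `enable_thinking`. The settings documentation describes `extra_body` as a way to add custom parameters to the request body; a minimal sketch of that idea follows, assuming a shallow merge onto an OpenAI-compatible chat request. `buildRequestBody` and the rule that `extra_body` keys win on conflict are hypothetical, not Qwen Code's request builder.

```typescript
// Hypothetical sketch: layering generationConfig.extra_body onto a chat request.
type Json = Record<string, unknown>;

interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

interface GenerationConfig {
  extra_body?: Json; // extra top-level request fields, e.g. { enable_thinking: true }
}

// Spread extra_body last, so (by assumption) its keys override base fields on conflict.
function buildRequestBody(
  model: string,
  messages: ChatMessage[],
  config: GenerationConfig = {},
): Json {
  const base: Json = { model, messages };
  return { ...base, ...(config.extra_body ?? {}) };
}

const body = buildRequestBody("qwen3.5-plus", [{ role: "user", content: "hello" }], {
  extra_body: { enable_thinking: true },
});
console.log(JSON.stringify(body));
// {"model":"qwen3.5-plus","messages":[{"role":"user","content":"hello"}],"enable_thinking":true}
```

A shallow spread is the simplest reading of "custom parameters in the request body"; nested objects in `extra_body` would replace, not merge with, any same-named base field.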

    @@ -265,7 +257,7 @@ Use the `/model` command at any time to switch between all configured models.
     }
     },
     "model": {
    -"name": "qwen3-
    +"name": "qwen3.6-plus"
     }
     }
     ```

package/bundled/qc-helper/docs/configuration/settings.md

    @@ -109,6 +109,9 @@ Settings are organized into categories. All settings should be placed within the
     | `ui.accessibility.enableLoadingPhrases` | boolean | Enable loading phrases (disable for accessibility). | `true` |
     | `ui.accessibility.screenReader` | boolean | Enables screen reader mode, which adjusts the TUI for better compatibility with screen readers. | `false` |
     | `ui.customWittyPhrases` | array of strings | A list of custom phrases to display during loading states. When provided, the CLI will cycle through these phrases instead of the default ones. | `[]` |
    +| `ui.enableFollowupSuggestions` | boolean | Enable [followup suggestions](../features/followup-suggestions) that predict what you want to type next after the model responds. Suggestions appear as ghost text and can be accepted with Tab, Enter, or Right Arrow. | `true` |
    +| `ui.enableCacheSharing` | boolean | Use cache-aware forked queries for suggestion generation. Reduces cost on providers that support prefix caching (experimental). | `true` |
    +| `ui.enableSpeculation` | boolean | Speculatively execute accepted suggestions before submission. Results appear instantly when you accept (experimental). | `false` |
     
     #### ide
     
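Each of the three new `ui.*` rows has a documented default. As a sketch of how those defaults apply when a key is absent from `settings.json` (the `UiSettings` type and `effectiveUiFlags` helper are hypothetical, not the real settings loader):

```typescript
// Hypothetical subset of settings.json; defaults copied from the settings table.
interface UiSettings {
  enableFollowupSuggestions?: boolean; // default true
  enableCacheSharing?: boolean; // default true (experimental)
  enableSpeculation?: boolean; // default false (experimental)
}

interface EffectiveUiFlags {
  enableFollowupSuggestions: boolean;
  enableCacheSharing: boolean;
  enableSpeculation: boolean;
}

// `??` only falls back when a key is missing (undefined/null), so an explicit false is kept.
function effectiveUiFlags(ui: UiSettings = {}): EffectiveUiFlags {
  return {
    enableFollowupSuggestions: ui.enableFollowupSuggestions ?? true,
    enableCacheSharing: ui.enableCacheSharing ?? true,
    enableSpeculation: ui.enableSpeculation ?? false,
  };
}

console.log(JSON.stringify(effectiveUiFlags({ enableFollowupSuggestions: false })));
// {"enableFollowupSuggestions":false,"enableCacheSharing":true,"enableSpeculation":false}
```

Using `??` rather than `||` matters here: with `||`, a user's explicit `false` for a default-`true` flag would be silently overridden.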

    @@ -185,6 +188,12 @@ The `extra_body` field allows you to add custom parameters to the request body s
     - `"./custom-logs"` - Logs to `./custom-logs` relative to current directory
     - `"/tmp/openai-logs"` - Logs to absolute path `/tmp/openai-logs`
     
    +#### fastModel
    +
    +| Setting | Type | Description | Default |
    +| ----------- | ------ | ----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- | ------- |
    +| `fastModel` | string | Model for background tasks ([suggestion generation](../features/followup-suggestions), speculation). Leave empty to use the main model. A smaller/faster model (e.g., `qwen3.5-flash`) reduces latency and cost. Can also be set via `/model --fast`. | `""` |
    +
     #### context
     
     | Setting | Type | Description | Default |
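The `fastModel` row states that an empty value means the main model is used, and the new followup-suggestions page adds that thinking/reasoning mode is disabled for all background tasks. A minimal sketch of those two rules, with a hypothetical `backgroundTaskConfig` helper and `BackgroundTaskConfig` shape:

```typescript
// Hypothetical sketch; names are illustrative, not Qwen Code internals.
interface BackgroundTaskConfig {
  model: string; // model used for suggestion generation / speculation
  thinking: boolean; // whether reasoning mode is requested
}

// An empty or missing fastModel falls back to the main conversation model.
// Thinking is forced off for background tasks, whatever the main model uses.
function backgroundTaskConfig(mainModel: string, fastModel = ""): BackgroundTaskConfig {
  return { model: fastModel !== "" ? fastModel : mainModel, thinking: false };
}

console.log(backgroundTaskConfig("qwen3.6-plus").model); // qwen3.6-plus
console.log(backgroundTaskConfig("qwen3.6-plus", "qwen3.5-flash").model); // qwen3.5-flash
```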

package/bundled/qc-helper/docs/features/commands.md

    @@ -56,21 +56,90 @@ Commands specifically for controlling interface and output language.
     
     Commands for managing AI tools and models.
     
    -| Command | Description
    -| ---------------- |
    -| `/mcp` | List configured MCP servers and tools
    -| `/tools` | Display currently available tool list
    -| `/skills` | List and run available skills
    -| `/approval-mode` | Change approval mode for tool usage
    -| →`plan` | Analysis only, no execution
    -| →`default` | Require approval for edits
    -| →`auto-edit` | Automatically approve edits
    -| →`yolo` | Automatically approve all
    -| `/model` | Switch model used in current session
    -| `/
    -| `/
    -
    -
    +| Command | Description | Usage Examples |
    +| ---------------- | ------------------------------------------------- | --------------------------------------------- |
    +| `/mcp` | List configured MCP servers and tools | `/mcp`, `/mcp desc` |
    +| `/tools` | Display currently available tool list | `/tools`, `/tools desc` |
    +| `/skills` | List and run available skills | `/skills`, `/skills <name>` |
    +| `/approval-mode` | Change approval mode for tool usage | `/approval-mode <mode (auto-edit)> --project` |
    +| →`plan` | Analysis only, no execution | Secure review |
    +| →`default` | Require approval for edits | Daily use |
    +| →`auto-edit` | Automatically approve edits | Trusted environment |
    +| →`yolo` | Automatically approve all | Quick prototyping |
    +| `/model` | Switch model used in current session | `/model` |
    +| `/model --fast` | Set or select the fast model for background tasks | `/model --fast qwen3.5-flash` |
    +| `/extensions` | List all active extensions in current session | `/extensions` |
    +| `/memory` | Manage AI's instruction context | `/memory add Important Info` |
    +
    +### 1.5 Side Question (`/btw`)
    +
    +The `/btw` command allows you to ask quick side questions without interrupting or affecting the main conversation flow.
    +
    +| Command | Description |
    +| ---------------------- | ------------------------------------- |
    +| `/btw <your question>` | Ask a quick side question |
    +| `?btw <your question>` | Alternative syntax for side questions |
    +
    +**How It Works:**
    +
    +- The side question is sent as a separate API call with recent conversation context (up to the last 20 messages)
    +- The response is displayed above the Composer — you can continue typing while waiting
    +- The main conversation is **not blocked** — it continues independently
    +- The side question response does **not** become part of the main conversation history
    +- Answers are rendered with full Markdown support (code blocks, lists, tables, etc.)
    +
    +**Keyboard Shortcuts (Interactive Mode):**
    +
    +| Shortcut | Action |
    +| -------------------- | --------------------------------------------------- |
    +| `Escape` | Cancel (while loading) or dismiss (after completed) |
    +| `Space` or `Enter` | Dismiss the answer (when input is empty) |
    +| `Ctrl+C` or `Ctrl+D` | Cancel an in-flight side question |
    +
    +**Example:**
    +
    +```
    +(While the main conversation is about refactoring code)
    +
    +> /btw What's the difference between let and var in JavaScript?
    +
    +╭──────────────────────────────────────────╮
    +│ /btw What's the difference between let │
    +│ and var in JavaScript? │
    +│ │
    +│ + Answering... │
    +│ Press Escape, Ctrl+C, or Ctrl+D to cancel│
    +╰──────────────────────────────────────────╯
    +> (Composer remains active — keep typing)
    +
    +(After the answer arrives)
    +
    +╭──────────────────────────────────────────╮
    +│ /btw What's the difference between let │
    +│ and var in JavaScript? │
    +│ │
    +│ `let` is block-scoped, while `var` is │
    +│ function-scoped. `let` was introduced │
    +│ in ES6 and doesn't hoist the same way. │
    +│ │
    +│ Press Space, Enter, or Escape to dismiss │
    +╰──────────────────────────────────────────╯
    +> (Composer still active)
    +```
    +
    +**Supported Execution Modes:**
    +
    +| Mode | Behavior |
    +| -------------------- | -------------------------------------------- |
    +| Interactive | Shows above Composer with Markdown rendering |
    +| Non-interactive | Returns text result: `btw> question\nanswer` |
    +| ACP (Agent Protocol) | Returns stream_messages async generator |
    +
    +> [!tip]
    +>
    +> Use `/btw` when you need a quick answer without derailing your main task. It's especially useful for clarifying concepts, checking facts, or getting quick explanations while staying focused on your primary workflow.
    +
    +### 1.6 Information, Settings, and Help
     
     Commands for obtaining information and performing system settings.
     
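The `/btw` bullets above describe three properties: a separate call, a context window of at most the last 20 messages, and no write-back to the main history. The sketch below models them with hypothetical `Message`, `buildSideQuestion`, and `formatNonInteractive` names; it illustrates those stated rules and is not the CLI's code, and the exact non-interactive string is assumed from the `btw> question\nanswer` table entry.

```typescript
// Hypothetical model of the /btw rules; not Qwen Code's implementation.
interface Message {
  role: "user" | "assistant";
  text: string;
}

// "recent conversation context (up to the last 20 messages)"
const SIDE_QUESTION_CONTEXT_LIMIT = 20;

// Build the payload for a separate side-question call. slice() returns a copy,
// so the main history is never mutated and the answer is never appended to it.
function buildSideQuestion(history: readonly Message[], question: string): Message[] {
  return [...history.slice(-SIDE_QUESTION_CONTEXT_LIMIT), { role: "user", text: question }];
}

// Assumed shape of the non-interactive result: `btw> question\nanswer`.
function formatNonInteractive(question: string, answer: string): string {
  return `btw> ${question}\n${answer}`;
}

const history: Message[] = [];
for (let i = 0; i < 25; i++) {
  history.push({ role: i % 2 === 0 ? "user" : "assistant", text: `message ${i}` });
}
const payload = buildSideQuestion(history, "What's the difference between let and var?");
console.log(payload.length); // 21
console.log(payload[0].text); // message 5
console.log(history.length); // 25
```

With 25 messages of history, only messages 5 through 24 are sent alongside the question, and the original array keeps all 25 entries.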

    @@ -85,7 +154,7 @@ Commands for obtaining information and performing system settings.
     | `/copy` | Copy last output content to clipboard | `/copy` |
     | `/quit` | Exit Qwen Code immediately | `/quit` or `/exit` |
     
    -### 1.
    +### 1.7 Common Shortcuts
     
     | Shortcut | Function | Note |
     | ------------------ | ----------------------- | ---------------------- |

    @@ -95,7 +164,7 @@ Commands for obtaining information and performing system settings.
     | `Ctrl/cmd+Z` | Undo input | Text editing |
     | `Ctrl/cmd+Shift+Z` | Redo input | Text editing |
     
    -### 1.
    +### 1.8 CLI Auth Subcommands
     
     In addition to the in-session `/auth` slash command, Qwen Code provides standalone CLI subcommands for managing authentication directly from the terminal:
     

package/bundled/qc-helper/docs/features/followup-suggestions.md

    @@ -0,0 +1,109 @@
    +# Followup Suggestions
    +
    +Qwen Code can predict what you want to type next and show it as ghost text in the input area. This feature uses an LLM call to analyze the conversation context and generate a natural next step suggestion.
    +
    +This feature works end-to-end in the CLI. In the WebUI, the hook and UI plumbing are available, but host applications must trigger suggestion generation and wire the followup state for suggestions to appear.
    +
    +## How It Works
    +
    +After Qwen Code finishes responding, a suggestion appears as dimmed text in the input area after a short delay (~300ms). For example, after fixing a bug, you might see:
    +
    +```
    +> run the tests
    +```
    +
    +The suggestion is generated by sending the conversation history to the model, which predicts what you would naturally type next.
    +
    +## Accepting Suggestions
    +
    +| Key | Action |
    +| ------------- | ------------------------------------------------ |
    +| `Tab` | Accept the suggestion and fill it into the input |
    +| `Enter` | Accept the suggestion and submit it immediately |
    +| `Right Arrow` | Accept the suggestion and fill it into the input |
    +| Any typing | Dismiss the suggestion and type normally |
    +
    +## When Suggestions Appear
    +
    +Suggestions are generated when all of the following conditions are met:
    +
    +- The model has completed its response (not during streaming)
    +- At least 2 model turns have occurred in the conversation
    +- There are no errors in the most recent response
    +- No confirmation dialogs are pending (e.g., shell confirmation, permissions)
    +- The approval mode is not set to `plan`
    +- The feature is enabled in settings (enabled by default)
    +
    +Suggestions will not appear in non-interactive mode (e.g., headless/SDK mode).
    +
    +Suggestions are automatically dismissed when:
    +
    +- You start typing
    +- A new model turn begins
    +- The suggestion is accepted
    +
    +## Fast Model
    +
    +By default, suggestions use the same model as your main conversation. For faster and cheaper suggestions, configure a dedicated fast model:
    +
    +### Via command
    +
    +```
    +/model --fast qwen3.5-flash
    +```
    +
    +Or use `/model --fast` (without a model name) to open a selection dialog.
    +
    +### Via settings.json
    +
    +```json
    +{
    +  "fastModel": "qwen3.5-flash"
    +}
    +```
    +
    +The fast model is used for background tasks like suggestion generation. When not configured, the main conversation model is used as fallback.
    +
    +Thinking/reasoning mode is automatically disabled for all background tasks (suggestion generation and speculation), regardless of your main model's thinking configuration. This avoids wasting tokens on internal reasoning that isn't needed for these tasks.
    +
    +## Configuration
    +
    +These settings can be configured in `settings.json`:
    +
    +| Setting | Type | Default | Description |
    +| ------------------------------ | ------- | ------- | ------------------------------------------------------------------ |
    +| `ui.enableFollowupSuggestions` | boolean | `true` | Enable or disable followup suggestions |
    +| `ui.enableCacheSharing` | boolean | `true` | Use cache-aware forked queries to reduce cost (experimental) |
    +| `ui.enableSpeculation` | boolean | `false` | Speculatively execute suggestions before submission (experimental) |
    +| `fastModel` | string | `""` | Model for background tasks (suggestion generation, speculation) |
    +
    +### Example
    +
    +```json
    +{
    +  "fastModel": "qwen3.5-flash",
    +  "ui": {
    +    "enableFollowupSuggestions": true,
    +    "enableCacheSharing": true
    +  }
    +}
    +```
    +
    +## Monitoring
    +
    +Suggestion model usage appears in `/stats` output, showing tokens consumed by the fast model for suggestion generation.
    +
    +The fast model is also shown in `/about` output under "Fast Model".
    +
    +## Suggestion Quality
    +
    +Suggestions go through quality filters to ensure they are useful:
    +
    +- Must be 2-12 words (CJK: 2-30 characters), under 100 characters total
    +- Cannot be evaluative ("looks good", "thanks")
    +- Cannot use AI voice ("Let me...", "I'll...")
    +- Cannot be multiple sentences or contain formatting (markdown, newlines)
    +- Cannot be meta-commentary ("nothing to suggest", "silence")
    +- Cannot be error messages or prefixed labels ("Suggestion: ...")
    +- Single-word suggestions are only allowed for common commands (yes, commit, push, etc.)
    +- Slash commands (e.g., `/commit`) are always allowed as single-word suggestions
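The "Suggestion Quality" rules translate naturally into a predicate. This is a hypothetical TypeScript sketch of a subset of them, not Qwen Code's filter: the phrase lists hold only the examples quoted in the doc, the sentence and label checks are simple heuristics, error-message detection is omitted, and `isAcceptableSuggestion` is an illustrative name.

```typescript
// Hypothetical filter modeled on the documented rules; phrase lists are illustrative samples.
const SINGLE_WORD_ALLOWLIST = new Set(["yes", "commit", "push"]);
const BANNED_PHRASES = ["looks good", "thanks", "nothing to suggest", "silence"];
const AI_VOICE_PREFIXES = ["let me", "i'll"];
// Hiragana/Katakana, CJK Ext A + Unified Ideographs, Hangul syllables.
const CJK = /[\u3040-\u30ff\u3400-\u9fff\uac00-\ud7af]/;

function isAcceptableSuggestion(raw: string): boolean {
  const s = raw.trim();
  // Under 100 characters, and no newlines or markdown-ish formatting.
  if (s.length === 0 || s.length >= 100 || /[\n\r`*#]/.test(s)) return false;
  // A lone slash command such as "/commit" is always allowed.
  if (/^\/\S+$/.test(s)) return true;
  const lower = s.toLowerCase();
  if (/^\w+:\s/.test(s)) return false; // prefixed label, e.g. "Suggestion: ..."
  if (AI_VOICE_PREFIXES.some((p) => lower.startsWith(p))) return false;
  if (BANNED_PHRASES.some((p) => lower.includes(p))) return false; // evaluative or meta
  if (/[.!?]\s+\S/.test(s)) return false; // more than one sentence
  if (CJK.test(s)) {
    const chars = Array.from(s).length; // count code points, not UTF-16 units
    return chars >= 2 && chars <= 30;
  }
  const words = s.split(/\s+/);
  if (words.length === 1) return SINGLE_WORD_ALLOWLIST.has(lower);
  return words.length <= 12;
}

console.log(isAcceptableSuggestion("run the tests")); // true
console.log(isAcceptableSuggestion("Let me check the logs")); // false
console.log(isAcceptableSuggestion("/commit")); // true
```

The cheap structural checks run first so that the slash-command exception can short-circuit before the word-count rule, which would otherwise reject `/commit` as an unlisted single word.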

package/bundled/qc-helper/docs/overview.md

    @@ -56,6 +56,7 @@ You'll be prompted to log in on first use. That's it! [Continue with Quickstart
     - **Debug and fix issues**: Describe a bug or paste an error message. Qwen Code will analyze your codebase, identify the problem, and implement a fix.
     - **Navigate any codebase**: Ask anything about your team's codebase, and get a thoughtful answer back. Qwen Code maintains awareness of your entire project structure, can find up-to-date information from the web, and with [MCP](./features/mcp) can pull from external datasources like Google Drive, Figma, and Slack.
     - **Automate tedious tasks**: Fix fiddly lint issues, resolve merge conflicts, and write release notes. Do all this in a single command from your developer machines, or automatically in CI.
    +- **[Followup suggestions](./features/followup-suggestions)**: Qwen Code predicts what you want to type next and shows it as ghost text. Press Tab to accept, or just keep typing to dismiss.
     
     ## Why developers love Qwen Code
     
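The new overview bullet and the "Accepting Suggestions" table in the followup-suggestions page describe a small state machine: `Tab` or `Right Arrow` fills the input, `Enter` accepts and submits, and any other typing dismisses the ghost text. A hypothetical reducer sketching that behavior follows; the `InputState` shape, the `Key` type, and `handleKey` are illustrative assumptions, not the CLI's input handling.

```typescript
// Hypothetical reducer modeled on the "Accepting Suggestions" table; not the CLI's code.
type Key = "Tab" | "Enter" | "ArrowRight" | { char: string };

interface InputState {
  text: string; // what the user has typed so far
  suggestion: string | null; // ghost text currently shown, if any
  submitted: string | null; // prompt sent to the model by this key press, if any
}

function handleKey(state: InputState, key: Key): InputState {
  if (typeof key === "object") {
    // Any typing dismisses the ghost text and types normally.
    return { text: state.text + key.char, suggestion: null, submitted: null };
  }
  if (state.suggestion !== null) {
    if (key === "Tab" || key === "ArrowRight") {
      // Accept: fill the suggestion into the input without sending it.
      return { text: state.suggestion, suggestion: null, submitted: null };
    }
    // Remaining case is "Enter": accept the suggestion and submit it immediately.
    return { text: "", suggestion: null, submitted: state.suggestion };
  }
  if (key === "Enter") {
    // No suggestion shown: Enter submits whatever was typed.
    return { text: "", suggestion: null, submitted: state.text };
  }
  return { ...state, submitted: null }; // Tab/ArrowRight without a suggestion: no-op here
}

const ghost: InputState = { text: "", suggestion: "run the tests", submitted: null };
console.log(handleKey(ghost, "Tab").text); // run the tests
console.log(handleKey(ghost, "Enter").submitted); // run the tests
console.log(handleKey(ghost, { char: "g" }).suggestion); // null
```

Keeping the reducer pure (it returns a new state instead of mutating) makes each row of the table a one-line check, which is also how the behavior is easiest to test.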