wgit 0.8.0 → 0.9.0

- checksums.yaml +4 -4

- data/.yardopts +1 -1

- data/CHANGELOG.md +39 -0

- data/LICENSE.txt +1 -1

- data/README.md +118 -323

- data/bin/wgit +9 -5

- data/lib/wgit.rb +3 -1

- data/lib/wgit/assertable.rb +3 -3

- data/lib/wgit/base.rb +30 -0

- data/lib/wgit/crawler.rb +206 -76

- data/lib/wgit/database/database.rb +309 -134

- data/lib/wgit/database/model.rb +10 -3

- data/lib/wgit/document.rb +138 -95

- data/lib/wgit/{document_extensions.rb → document_extractors.rb} +11 -11

- data/lib/wgit/dsl.rb +324 -0

- data/lib/wgit/indexer.rb +65 -162

- data/lib/wgit/response.rb +5 -2

- data/lib/wgit/url.rb +133 -31

- data/lib/wgit/utils.rb +32 -20

- data/lib/wgit/version.rb +2 -1

- metadata +26 -14

checksums.yaml

CHANGED

|

@@ -1,7 +1,7 @@

|

|

|

1

1

|

---

|

|

2

2

|

SHA256:

|

|

3

|

-

metadata.gz:

|

|

4

|

-

data.tar.gz:

|

|

3

|

+

metadata.gz: 07e1146e7ddcbb35abb813ae1461520e581576181750d4b9dc654de3f3375d4c

|

|

4

|

+

data.tar.gz: 6f43949fcdf13c731362d242110348dd43c5183c10130605c2e022e15cbe8cdb

|

|

5

5

|

SHA512:

|

|

6

|

-

metadata.gz:

|

|

7

|

-

data.tar.gz:

|

|

6

|

+

metadata.gz: 7288c42fe7b8598572e8b4c8013f8614bd60caa048474a039d8c9a1f4ae231695148158293730998ac78b1f36a4ccd52c9664be1df0c49e218d740fd881d64c4

|

|

7

|

+

data.tar.gz: 0e36ea8f76aa41f5576044902cdc3e92c3affeb742c179a2fa5ba2b404ad057dede949b5e767bc09eb771b47bc153cf9462e56d9e5a393a63cb9e120bae870a9

|

data/.yardopts

CHANGED

data/CHANGELOG.md

CHANGED

|

@@ -9,6 +9,45 @@

|

|

|

9

9

|

- ...

|

|

10

10

|

---

|

|

11

11

|

|

|

12

|

+

## v0.9.0

|

|

13

|

+

This release is a big one with the introduction of a `Wgit::DSL` and Javascript parse support. The `README` has been revamped as a result with new usage examples. And all of the wiki articles have been updated to reflect the latest code base.

|

|

14

|

+

### Added

|

|

15

|

+

- `Wgit::DSL` module providing a wrapper around the underlying classes and methods. Check out the `README` for example usage.

|

|

16

|

+

- `Wgit::Crawler#parse_javascript` which when set to `true` uses Chrome to parse a page's Javascript before returning the fully rendered HTML. This feature is disabled by default.

|

|

17

|

+

- `Wgit::Base` class to inherit from, acting as an alternative form of using the DSL.

|

|

18

|

+

- `Wgit::Utils.sanitize` which calls `.sanitize_*` underneath.

|

|

19

|

+

- `Wgit::Crawler#crawl_site` now has a `follow:` named param - if set, its xpath value is used to retrieve the next urls to crawl. Otherwise the `:default` is used (as it was before). Use this to override how the site is crawled.

|

|

20

|

+

- `Wgit::Database` methods: `#clear_urls`, `#clear_docs`, `#clear_db`, `#text_index`, `#text_index=`, `#create_collections`, `#create_unique_indexes`, `#docs`, `#get`, `#exists?`, `#delete`, `#upsert`.

|

|

21

|

+

- `Wgit::Database#clear_db!` alias.

|

|

22

|

+

- `Wgit::Document` methods: `#at_xpath`, `#at_css` - which call nokogiri underneath.

|

|

23

|

+

- `Wgit::Document#extract` method to perform one off content extractions.

|

|

24

|

+

- `Wgit::Indexer#index_urls` method which can index several urls in one call.

|

|

25

|

+

- `Wgit::Url` methods: `#to_user`, `#to_password`, `#to_sub_domain`, `#to_port`, `#omit_origin`, `#index?`.

|

|

26

|

+

### Changed/Removed

|

|

27

|

+

- Breaking change: Moved all `Wgit.index*` convenience methods into `Wgit::DSL`.

|

|

28

|

+

- Breaking change: Removed `Wgit::Url#normalise`, use `#normalize` instead.

|

|

29

|

+

- Breaking change: Removed `Wgit::Database#num_documents`, use `#num_docs` instead.

|

|

30

|

+

- Breaking change: Removed `Wgit::Database#length` and `#count`, use `#size` instead.

|

|

31

|

+

- Breaking change: Removed `Wgit::Database#document?`, use `#doc?` instead.

|

|

32

|

+

- Breaking change: Renamed `Wgit::Indexer#index_page` to `#index_url`.

|

|

33

|

+

- Breaking change: Renamed `Wgit::Url.parse_or_nil` to be `.parse?`.

|

|

34

|

+

- Breaking change: Renamed `Wgit::Utils.process_*` to be `.sanitize_*`.

|

|

35

|

+

- Breaking change: Renamed `Wgit::Utils.remove_non_bson_types` to be `Wgit::Model.select_bson_types`.

|

|

36

|

+

- Breaking change: Changed `Wgit::Indexer.index*` named param default from `insert_externals: true` to `false`. Explicitly set it to `true` for the old behaviour.

|

|

37

|

+

- Breaking change: Renamed `Wgit::Document.define_extension` to `define_extractor`. Same goes for `remove_extension -> remove_extractor` and `extensions -> extractors`. See the docs for more information.

|

|

38

|

+

- Breaking change: Renamed `Wgit::Document#doc` to `#parser`.

|

|

39

|

+

- Breaking change: Renamed `Wgit::Crawler#time_out` to `#timeout`. Same goes for the named param passed to `Wgit::Crawler.initialize`.

|

|

40

|

+

- Breaking change: Refactored `Wgit::Url#relative?`, which now takes `:origin` instead of `:base`; the origin takes the port into account. This has a knock-on effect for some other methods too - check the docs if you're getting parameter errors.

|

|

41

|

+

- Breaking change: Renamed `Wgit::Url#prefix_base` to `#make_absolute`.

|

|

42

|

+

- Updated `Utils.printf_search_results` to return the number of results.

|

|

43

|

+

- Updated `Wgit::Indexer.new` which can now be called without parameters - the first param (for a database) now defaults to `Wgit::Database.new` which works if `ENV['WGIT_CONNECTION_STRING']` is set.

|

|

44

|

+

- Updated `Wgit::Document.define_extractor` to define a setter method (as well as the usual getter method).

|

|

45

|

+

- Updated `Wgit::Document#search` to support a `Regexp` query (in addition to a String).

|
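The `:origin` entry in the list above hinges on the port being part of the comparison. The sketch below illustrates that idea using only Ruby's standard `URI` library rather than Wgit itself; `origin_of` and `same_origin?` are hypothetical helper names, and the URLs are made up for illustration.

```ruby
require 'uri'

# Hypothetical helper (not Wgit's API): builds scheme://host[:port],
# leaving the port out when it is the default for the scheme.
def origin_of(url)
  uri = URI.parse(url)
  origin = "#{uri.scheme}://#{uri.host}"
  origin += ":#{uri.port}" unless uri.port == uri.default_port
  origin
end

# Hypothetical helper: two URLs share an origin only if the scheme,
# host and port all match.
def same_origin?(url_a, url_b)
  origin_of(url_a) == origin_of(url_b)
end

puts origin_of('http://example.com:8080/about')
# => http://example.com:8080
puts same_origin?('http://example.com/about', 'http://example.com:8080/about')
# => false
```

A comparison on host alone would treat both URLs as the same site; including the port keeps a service on `:8080` distinct from the one on the default port.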

|

46

|

+

### Fixed

|

|

47

|

+

- [Re-indexing bug](https://github.com/michaeltelford/wgit/issues/8) so that indexing content a 2nd time will update it in the database - before it simply discarded the document.

|

|

48

|

+

- `Wgit::Crawler#crawl_site` params `allow/disallow_paths` values can now start with a `/`.

|

|

49

|

+

---

|

|

50

|

+

|

|

12

51

|

## v0.8.0

|

|

13

52

|

### Added

|

|

14

53

|

- To the range of `Wgit::Document.text_elements`. Now (only and) all visible page text should be extracted into `Wgit::Document#text` successfully.

|

data/LICENSE.txt

CHANGED

|

@@ -1,6 +1,6 @@

|

|

|

1

1

|

The MIT License (MIT)

|

|

2

2

|

|

|

3

|

-

Copyright (c) 2016 -

|

|

3

|

+

Copyright (c) 2016 - 2020 Michael Telford

|

|

4

4

|

|

|

5

5

|

Permission is hereby granted, free of charge, to any person obtaining a copy

|

|

6

6

|

of this software and associated documentation files (the "Software"), to deal

|

data/README.md

CHANGED

|

@@ -8,382 +8,191 @@

|

|

|

8

8

|

|

|

9

9

|

---

|

|

10

10

|

|

|

11

|

-

Wgit is a

|

|

11

|

+

Wgit is a HTML web crawler, written in Ruby, that allows you to programmatically extract the data you want from the web.

|

|

12

12

|

|

|

13

|

-

|

|

13

|

+

Wgit was primarily designed to crawl static HTML websites to index and search their content - providing the basis of any search engine; but Wgit is suitable for many application domains including:

|

|

14

14

|

|

|

15

|

-

|

|

15

|

+

- URL parsing

|

|

16

|

+

- Document content extraction (data mining)

|

|

17

|

+

- Crawling entire websites (statistical analysis)

|

|

16

18

|

|

|

17

|

-

|

|

19

|

+

Wgit provides a high level, easy-to-use API and DSL that you can use in your own applications and scripts.

|

|

18

20

|

|

|

19

|

-

|

|

20

|

-

|

|

21

|

-

1. [Installation](#Installation)

|

|

22

|

-

2. [Basic Usage](#Basic-Usage)

|

|

23

|

-

3. [Documentation](#Documentation)

|

|

24

|

-

4. [Practical Examples](#Practical-Examples)

|

|

25

|

-

5. [Database Example](#Database-Example)

|

|

26

|

-

6. [Extending The API](#Extending-The-API)

|

|

27

|

-

7. [Caveats](#Caveats)

|

|

28

|

-

8. [Executable](#Executable)

|

|

29

|

-

9. [Change Log](#Change-Log)

|

|

30

|

-

10. [License](#License)

|

|

31

|

-

11. [Contributing](#Contributing)

|

|

32

|

-

12. [Development](#Development)

|

|

33

|

-

|

|

34

|

-

## Installation

|

|

35

|

-

|

|

36

|

-

Currently, the required Ruby version is:

|

|

37

|

-

|

|

38

|

-

`~> 2.5` a.k.a. `>= 2.5 && < 3`

|

|

39

|

-

|

|

40

|

-

Add this line to your application's `Gemfile`:

|

|

41

|

-

|

|

42

|

-

```ruby

|

|

43

|

-

gem 'wgit'

|

|

44

|

-

```

|

|

45

|

-

|

|

46

|

-

And then execute:

|

|

47

|

-

|

|

48

|

-

$ bundle

|

|

49

|

-

|

|

50

|

-

Or install it yourself as:

|

|

21

|

+

Check out this [demo search engine](https://search-engine-rb.herokuapp.com) - [built](https://github.com/michaeltelford/search_engine) using Wgit and Sinatra - deployed to [Heroku](https://www.heroku.com/). Heroku's free tier is used so the initial page load may be slow. Try searching for "Matz" or something else that's Ruby related.

|

|

51

22

|

|

|

52

|

-

|

|

23

|

+

## Table Of Contents

|

|

53

24

|

|

|

54

|

-

|

|

25

|

+

1. [Usage](#Usage)

|

|

26

|

+

2. [Why Wgit?](#Why-Wgit)

|

|

27

|

+

3. [Why Not Wgit?](#Why-Not-Wgit)

|

|

28

|

+

4. [Installation](#Installation)

|

|

29

|

+

5. [Documentation](#Documentation)

|

|

30

|

+

6. [Executable](#Executable)

|

|

31

|

+

7. [License](#License)

|

|

32

|

+

8. [Contributing](#Contributing)

|

|

33

|

+

9. [Development](#Development)

|

|

55

34

|

|

|

56

|

-

|

|

35

|

+

## Usage

|

|

57

36

|

|

|

58

|

-

|

|

37

|

+

Let's crawl a [quotes website](http://quotes.toscrape.com/) extracting its *quotes* and *authors* using the Wgit DSL:

|

|

59

38

|

|

|

60

39

|

```ruby

|

|

61

40

|

require 'wgit'

|

|

41

|

+

require 'json'

|

|

62

42

|

|

|

63

|

-

|

|

64

|

-

url = Wgit::Url.new 'https://wikileaks.org/What-is-Wikileaks.html'

|

|

65

|

-

|

|

66

|

-

doc = crawler.crawl url # Or use #crawl_site(url) { |doc| ... } etc.

|

|

67

|

-

crawler.last_response.class # => Wgit::Response is a wrapper for Typhoeus::Response.

|

|

68

|

-

|

|

69

|

-

doc.class # => Wgit::Document

|

|

70

|

-

doc.class.public_instance_methods(false).sort # => [

|

|

71

|

-

# :==, :[], :author, :base, :base_url, :content, :css, :description, :doc, :empty?,

|

|

72

|

-

# :external_links, :external_urls, :html, :internal_absolute_links,

|

|

73

|

-

# :internal_absolute_urls,:internal_links, :internal_urls, :keywords, :links, :score,

|

|

74

|

-

# :search, :search!, :size, :statistics, :stats, :text, :title, :to_h, :to_json,

|

|

75

|

-

# :url, :xpath

|

|

76

|

-

# ]

|

|

77

|

-

|

|

78

|

-

doc.url # => "https://wikileaks.org/What-is-Wikileaks.html"

|

|

79

|

-

doc.title # => "WikiLeaks - What is WikiLeaks"

|

|

80

|

-

doc.stats # => {

|

|

81

|

-

# :url=>44, :html=>28133, :title=>17, :keywords=>0,

|

|

82

|

-

# :links=>35, :text=>67, :text_bytes=>13735

|

|

83

|

-

# }

|

|

84

|

-

doc.links # => ["#submit_help_contact", "#submit_help_tor", "#submit_help_tips", ...]

|

|

85

|

-

doc.text # => ["The Courage Foundation is an international organisation that <snip>", ...]

|

|

86

|

-

|

|

87

|

-

results = doc.search 'corruption' # Searches doc.text for the given query.

|

|

88

|

-

results.first # => "ial materials involving war, spying and corruption.

|

|

89

|

-

# It has so far published more"

|

|

90

|

-

```

|

|

91

|

-

|

|

92

|

-

## Documentation

|

|

43

|

+

include Wgit::DSL

|

|

93

44

|

|

|

94

|

-

|

|

45

|

+

start 'http://quotes.toscrape.com/tag/humor/'

|

|

46

|

+

follow "//li[@class='next']/a/@href"

|

|

95

47

|

|

|

96

|

-

|

|

48

|

+

extract :quotes, "//div[@class='quote']/span[@class='text']", singleton: false

|

|

49

|

+

extract :authors, "//div[@class='quote']/span/small", singleton: false

|

|

97

50

|

|

|

98

|

-

|

|

51

|

+

quotes = []

|

|

99

52

|

|

|

100

|

-

|

|

101

|

-

|

|

102

|

-

|

|

103

|

-

|

|

104

|

-

|

|

105

|

-

|

|

106

|

-

|

|

107

|

-

|

|

108

|

-

### Website Downloader

|

|

109

|

-

|

|

110

|

-

Wgit uses itself to download and save fixture webpages to disk (used in tests). See the script [here](https://github.com/michaeltelford/wgit/blob/master/test/mock/save_site.rb) and edit it for your own purposes.

|

|

111

|

-

|

|

112

|

-

### Broken Link Finder

|

|

113

|

-

|

|

114

|

-

The `broken_link_finder` gem uses Wgit under the hood to find and report a website's broken links. Check out its [repository](https://github.com/michaeltelford/broken_link_finder) for more details.

|

|

115

|

-

|

|

116

|

-

### CSS Indexer

|

|

117

|

-

|

|

118

|

-

The below script downloads the contents of the first css link found on Facebook's index page.

|

|

119

|

-

|

|

120

|

-

```ruby

|

|

121

|

-

require 'wgit'

|

|

122

|

-

require 'wgit/core_ext' # Provides the String#to_url and Enumerable#to_urls methods.

|

|

123

|

-

|

|

124

|

-

crawler = Wgit::Crawler.new

|

|

125

|

-

url = 'https://www.facebook.com'.to_url

|

|

126

|

-

|

|

127

|

-

doc = crawler.crawl url

|

|

128

|

-

|

|

129

|

-

# Provide your own xpath (or css selector) to search the HTML using Nokogiri underneath.

|

|

130

|

-

hrefs = doc.xpath "//link[@rel='stylesheet']/@href"

|

|

131

|

-

|

|

132

|

-

hrefs.class # => Nokogiri::XML::NodeSet

|

|

133

|

-

href = hrefs.first.value # => "https://static.xx.fbcdn.net/rsrc.php/v3/y1/l/0,cross/NvZ4mNTW3Fd.css"

|

|

53

|

+

crawl_site do |doc|

|

|

54

|

+

doc.quotes.zip(doc.authors).each do |arr|

|

|

55

|

+

quotes << {

|

|

56

|

+

quote: arr.first,

|

|

57

|

+

author: arr.last

|

|

58

|

+

}

|

|

59

|

+

end

|

|

60

|

+

end

|

|

134

61

|

|

|

135

|

-

|

|

136

|

-

css[0..50] # => "._3_s0._3_s0{border:0;display:flex;height:44px;min-"

|

|

62

|

+

puts JSON.generate(quotes)

|

|

137

63

|

```

|
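The pairing step in the example above (`doc.quotes.zip(doc.authors)`) can be tried without crawling anything. This is a minimal sketch in plain Ruby: the two arrays are hypothetical stand-ins for the values the `quotes` and `authors` extractors would return, and their contents are placeholder strings.

```ruby
require 'json'

# Hypothetical stand-ins for doc.quotes and doc.authors on one page.
quotes_on_page  = ['Quote one', 'Quote two']
authors_on_page = ['Author A', 'Author B']

# zip pairs the elements by position, so each quote lines up with its author.
quotes = quotes_on_page.zip(authors_on_page).map do |quote, author|
  { quote: quote, author: author }
end

puts JSON.generate(quotes)
# => [{"quote":"Quote one","author":"Author A"},{"quote":"Quote two","author":"Author B"}]
```

Because `zip` pairs by index, this only produces sensible output when both extractors return their matches in the same document order.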

|

138

64

|

|

|

139

|

-

|

|

140

|

-

|

|

141

|

-

The below script downloads the contents of several webpages and pulls out their keywords for comparison. Such a script might be used by marketeers for search engine optimisation (SEO) for example.

|

|

65

|

+

The [DSL](https://github.com/michaeltelford/wgit/wiki/How-To-Use-The-DSL) makes it easy to write scripts for experimenting with. Wgit's DSL is simply a wrapper around the underlying classes however. For comparison, here is the above example written using the Wgit API *instead of* the DSL:

|

|

142

66

|

|

|

143

67

|

```ruby

|

|

144

68

|

require 'wgit'

|

|

145

|

-

require '

|

|

146

|

-

|

|

147

|

-

my_pages_keywords = ['Everest', 'mountaineering school', 'adventure']

|

|

148

|

-

my_pages_missing_keywords = []

|

|

149

|

-

|

|

150

|

-

competitor_urls = [

|

|

151

|

-

'http://altitudejunkies.com',

|

|

152

|

-

'http://www.mountainmadness.com',

|

|

153

|

-

'http://www.adventureconsultants.com'

|

|

154

|

-

].to_urls

|

|

69

|

+

require 'json'

|

|

155

70

|

|

|

156

71

|

crawler = Wgit::Crawler.new

|

|

157

|

-

|

|

158

|

-

|

|

159

|

-

|

|

160

|

-

|

|

161

|

-

|

|

162

|

-

|

|

72

|

+

url = Wgit::Url.new('http://quotes.toscrape.com/tag/humor/')

|

|

73

|

+

quotes = []

|

|

74

|

+

|

|

75

|

+

Wgit::Document.define_extractor(:quotes, "//div[@class='quote']/span[@class='text']", singleton: false)

|

|

76

|

+

Wgit::Document.define_extractor(:authors, "//div[@class='quote']/span/small", singleton: false)

|

|

77

|

+

|

|

78

|

+

crawler.crawl_site(url, follow: "//li[@class='next']/a/@href") do |doc|

|

|

79

|

+

doc.quotes.zip(doc.authors).each do |arr|

|

|

80

|

+

quotes << {

|

|

81

|

+

quote: arr.first,

|

|

82

|

+

author: arr.last

|

|

83

|

+

}

|

|

163

84

|

end

|

|

164

85

|

end

|

|

165

86

|

|

|

166

|

-

|

|

167

|

-

puts 'Your pages are missing no keywords, nice one!'

|

|

168

|

-

else

|

|

169

|

-

puts 'Your pages compared to your competitors are missing the following keywords:'

|

|

170

|

-

puts my_pages_missing_keywords.uniq

|

|

171

|

-

end

|

|

87

|

+

puts JSON.generate(quotes)

|

|

172

88

|

```

|

|

173

89

|

|

|

174

|

-

|

|

175

|

-

|

|

176

|

-

The next example requires a configured database instance. The use of a database for Wgit is entirely optional however and isn't required for crawling or URL parsing etc. A database is only needed when indexing (inserting crawled data into the database).

|

|

177

|

-

|

|

178

|

-

Currently the only supported DBMS is MongoDB. See [MongoDB Atlas](https://www.mongodb.com/cloud/atlas) for a (small) free account or provide your own MongoDB instance. Take a look at this [Docker Hub image](https://hub.docker.com/r/michaeltelford/mongo-wgit) for an already built example of a `mongo` image configured for use with Wgit; the source of which can be found in the [`./docker`](https://github.com/michaeltelford/wgit/tree/master/docker) directory of this repository.

|

|

90

|

+

But what if we want to crawl and store the content in a database, so that it can be searched? Wgit makes it easy to index and search HTML using [MongoDB](https://www.mongodb.com/):

|

|

179

91

|

|

|

180

|

-

|

|

181

|

-

|

|

182

|

-

### Versioning

|

|

183

|

-

|

|

184

|

-

The following versions of MongoDB are currently supported:

|

|

92

|

+

```ruby

|

|

93

|

+

require 'wgit'

|

|

185

94

|

|

|

186

|

-

|

|

187

|

-

| ------ | -------- |

|

|

188

|

-

| ~> 2.9 | ~> 4.0 |

|

|

95

|

+

include Wgit::DSL

|

|

189

96

|

|

|

190

|

-

|

|

97

|

+

Wgit.logger.level = Logger::WARN

|

|

191

98

|

|

|

192

|

-

|

|

99

|

+

connection_string 'mongodb://user:password@localhost/crawler'

|

|

100

|

+

clear_db!

|

|

193

101

|

|

|

194

|

-

|

|

195

|

-

|

|

196

|

-

| `urls` | Stores URL's to be crawled at a later date |

|

|

197

|

-

| `documents` | Stores web documents after they've been crawled |

|

|

102

|

+

extract :quotes, "//div[@class='quote']/span[@class='text']", singleton: false

|

|

103

|

+

extract :authors, "//div[@class='quote']/span/small", singleton: false

|

|

198

104

|

|

|

199

|

-

|

|

105

|

+

start 'http://quotes.toscrape.com/tag/humor/'

|

|

106

|

+

follow "//li[@class='next']/a/@href"

|

|

200

107

|

|

|

201

|

-

|

|

108

|

+

index_site

|

|

109

|

+

search 'prejudice'

|

|

110

|

+

```

|

|

202

111

|

|

|

203

|

-

|

|

112

|

+

The `search` call (on the last line) will return and output the results:

|

|

204

113

|

|

|

205

|

-

|

|

206

|

-

|

|

114

|

+

```text

|

|

115

|

+

Quotes to Scrape

|

|

116

|

+

“I am free of all prejudice. I hate everyone equally. ”

|

|

117

|

+

http://quotes.toscrape.com/tag/humor/page/2/

|

|

118

|

+

```

|

|

207

119

|

|

|

208

|

-

|

|

209

|

-

| ----------- | ------------------- | ------------------- |

|

|

210

|

-

| `urls` | `{ "url" : 1 }` | `{ unique : true }` |

|

|

211

|

-

| `documents` | `{ "url.url" : 1 }` | `{ unique : true }` |

|

|

120

|

+

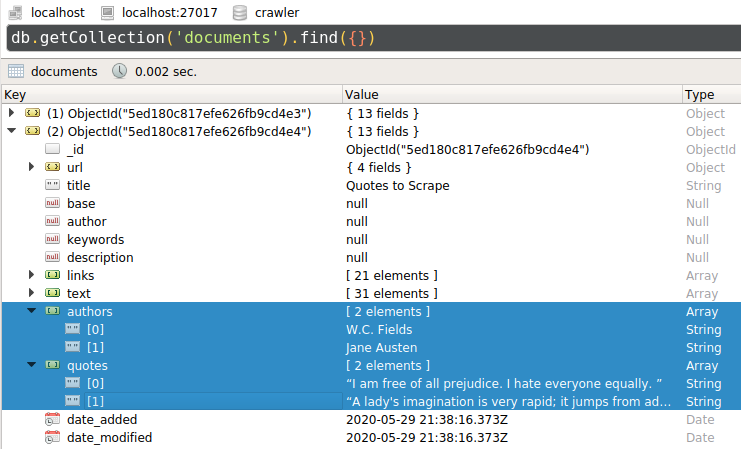

Using a Mongo DB [client](https://robomongo.org/), we can see that the two webpages have been indexed, along with their extracted *quotes* and *authors*:

|

|

212

121

|

|

|

213

|

-

|

|

214

|

-

4) Create a [*text search index*](https://docs.mongodb.com/manual/core/index-text/#index-feature-text) for the `documents` collection using:

|

|

215

|

-

```json

|

|

216

|

-

{

|

|

217

|

-

"text": "text",

|

|

218

|

-

"author": "text",

|

|

219

|

-

"keywords": "text",

|

|

220

|

-

"title": "text"

|

|

221

|

-

}

|

|

222

|

-

```

|

|

122

|

+

|

|

223

123

|

|

|

224

|

-

|

|

124

|

+

## Why Wgit?

|

|

225

125

|

|

|

226

|

-

|

|

126

|

+

There are many [other HTML crawlers](https://awesome-ruby.com/#-web-crawling) out there so why use Wgit?

|

|

227

127

|

|

|

228

|

-

|

|

128

|

+

- Wgit has excellent unit testing, 100% documentation coverage and follows [semantic versioning](https://semver.org/) rules.

|

|

129

|

+

- Wgit excels at crawling an entire website's HTML out of the box. Many alternative crawlers require you to provide the `xpath` needed to *follow* the next URLs to crawl. Wgit, by default, crawls the entire site by extracting its internal links pointing to the same host.

|

|

130

|

+

- Wgit allows you to define content *extractors* that will fire on every subsequent crawl; be it a single URL or an entire website. This enables you to focus on the content you want.

|

|

131

|

+

- Wgit can index (crawl and store) HTML to a database making it a breeze to build custom search engines. You can also specify which page content gets searched, making the search more meaningful. For example, here's a script that will index the Wgit [wiki](https://github.com/michaeltelford/wgit/wiki) articles:

|

|

229

132

|

|

|

230

133

|

```ruby

|

|

231

134

|

require 'wgit'

|

|

232

135

|

|

|

233

|

-

|

|

234

|

-

|

|

235

|

-

# In the absence of a connection string parameter, ENV['WGIT_CONNECTION_STRING'] will be used.

|

|

236

|

-

db = Wgit::Database.connect '<your_connection_string>'

|

|

136

|

+

ENV['WGIT_CONNECTION_STRING'] = 'mongodb://user:password@localhost/crawler'

|

|

237

137

|

|

|

238

|

-

|

|

138

|

+

wiki = Wgit::Url.new('https://github.com/michaeltelford/wgit/wiki')

|

|

239

139

|

|

|

240

|

-

#

|

|

241

|

-

|

|

242

|

-

|

|

243

|

-

'

|

|

244

|

-

|

|

245

|

-

)

|

|

246

|

-

db.insert doc

|

|

247

|

-

|

|

248

|

-

### SEARCH THE DATABASE ###

|

|

249

|

-

|

|

250

|

-

# Searching the database returns Wgit::Document's which have fields containing the query.

|

|

251

|

-

query = 'cow'

|

|

252

|

-

results = db.search query

|

|

253

|

-

|

|

254

|

-

# By default, the MongoDB ranking applies i.e. results.first has the most hits.

|

|

255

|

-

# Because results is an Array of Wgit::Document's, we can custom sort/rank e.g.

|

|

256

|

-

# `results.sort_by! { |doc| doc.url.crawl_duration }` ranks via page load times with

|

|

257

|

-

# results.first being the fastest. Any Wgit::Document attribute can be used, including

|

|

258

|

-

# those you define yourself by extending the API.

|

|

259

|

-

|

|

260

|

-

top_result = results.first

|

|

261

|

-

top_result.class # => Wgit::Document

|

|

262

|

-

doc.url == top_result.url # => true

|

|

263

|

-

|

|

264

|

-

### PULL OUT THE BITS THAT MATCHED OUR QUERY ###

|

|

265

|

-

|

|

266

|

-

# Searching each result gives the matching text snippets from that Wgit::Document.

|

|

267

|

-

top_result.search(query).first # => "How now brown cow."

|

|

268

|

-

|

|

269

|

-

### SEED URLS TO BE CRAWLED LATER ###

|

|

140

|

+

# Only index the most recent of each wiki article, ignoring the rest of Github.

|

|

141

|

+

opts = {

|

|

142

|

+

allow_paths: 'michaeltelford/wgit/wiki/*',

|

|

143

|

+

disallow_paths: 'michaeltelford/wgit/wiki/*/_history'

|

|

144

|

+

}

|

|

270

145

|

|

|

271

|

-

|

|

272

|

-

|

|

146

|

+

indexer = Wgit::Indexer.new

|

|

147

|

+

indexer.index_site(wiki, **opts)

|

|

273

148

|

```

|
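To get a feel for how the `allow_paths` and `disallow_paths` patterns in the script above narrow a crawl, the sketch below applies the same two patterns with Ruby's standard `File.fnmatch?`. It assumes glob-style matching; Wgit's own matching rules may differ in detail, `crawlable?` is a hypothetical helper, and the sample paths are made up.

```ruby
# Patterns copied from the indexing script above.
ALLOW    = 'michaeltelford/wgit/wiki/*'
DISALLOW = 'michaeltelford/wgit/wiki/*/_history'

# Hypothetical helper (not part of Wgit): a path is crawled only if it
# matches the allow pattern and does not match the disallow pattern.
def crawlable?(path)
  File.fnmatch?(ALLOW, path) && !File.fnmatch?(DISALLOW, path)
end

# Hypothetical paths, chosen only to exercise each branch.
[
  'michaeltelford/wgit/wiki/Home',
  'michaeltelford/wgit/wiki/Home/_history',
  'michaeltelford/wgit/issues'
].each do |path|
  puts "#{path}: #{crawlable?(path)}"
end
```

Under these assumptions only the first path passes: the second is excluded by the disallow pattern and the third never matches the allow pattern.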

|

274

149

|

|

|

275

|

-

##

|

|

276

|

-

|

|

277

|

-

Document serialising in Wgit is the means of downloading a web page and serialising parts of its content into accessible `Wgit::Document` attributes/methods. For example, `Wgit::Document#author` will return you the webpage's xpath value of `meta[@name='author']`.

|

|

278

|

-

|

|

279

|

-

There are two ways to extend the Document serialising behaviour of Wgit for your own means:

|

|

280

|

-

|

|

281

|

-

1. Add additional **textual** content to `Wgit::Document#text`.

|

|

282

|

-

2. Define `Wgit::Document` instance methods for specific HTML **elements**.

|

|

283

|

-

|

|

284

|

-

Below describes these two methods in more detail.

|

|

150

|

+

## Why Not Wgit?

|

|

285

151

|

|

|

286

|

-

|

|

152

|

+

So why might you not use Wgit, I hear you ask?

|

|

287

153

|

|

|

288

|

-

|

|

154

|

+

- Wgit doesn't allow for webpage interaction e.g. signing in as a user. There are better gems out there for that.

|

|

155

|

+

- Wgit can parse a crawled page's Javascript, but it doesn't do so by default. If your crawls are JS heavy then you might best consider a pure browser-based crawler instead.

|

|

156

|

+

- Wgit, while fast (using `libcurl` for HTTP etc.), isn't multi-threaded; so each URL gets crawled sequentially. You could hand each crawled document to a worker thread for processing - but if you need concurrent crawling then you should consider something else.

|

|

289

157

|

|

|

290

|

-

|

|

291

|

-

|

|

292

|

-

```ruby

|

|

293

|

-

require 'wgit'

|

|

294

|

-

|

|

295

|

-

# The default text_elements cover most visible page text but let's say we

|

|

296

|

-

# have a <table> element with text content that we want.

|

|

297

|

-

Wgit::Document.text_elements << :table

|

|

298

|

-

|

|

299

|

-

doc = Wgit::Document.new(

|

|

300

|

-

'http://some_url.com',

|

|

301

|

-

<<~HTML

|

|

302

|

-

<html>

|

|

303

|

-

<p>Hello world!</p>

|

|

304

|

-

<table>My table</table>

|

|

305

|

-

</html>

|

|

306

|

-

HTML

|

|

307

|

-

)

|

|

308

|

-

|

|

309

|

-

# Now every crawled Document#text will include <table> text content.

|

|

310

|

-

doc.text # => ["Hello world!", "My table"]

|

|

311

|

-

doc.search('table') # => ["My table"]

|

|

312

|

-

```

|

|

158

|

+

## Installation

|

|

313

159

|

|

|

314

|

-

|

|

160

|

+

Only MRI Ruby is tested and supported, but Wgit may work with other Ruby implementations.

|

|

315

161

|

|

|

316

|

-

|

|

162

|

+

Currently, the required MRI Ruby version is:

|

|

317

163

|

|

|

318

|

-

|

|

164

|

+

`~> 2.5` a.k.a. `>= 2.5 && < 3`

|
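The `~> 2.5` constraint quoted above can be checked with RubyGems' own `Gem::Requirement` class. This is a small sketch; the version numbers in the list are arbitrary examples picked to fall inside and outside the range.

```ruby
require 'rubygems' # Loaded by default in modern Ruby; required here for clarity.

# The pessimistic constraint quoted above.
requirement = Gem::Requirement.new('~> 2.5')

# Arbitrary example versions on either side of the allowed range.
%w[2.4.9 2.5.0 2.7.1 3.0.0].each do |version|
  ok = requirement.satisfied_by?(Gem::Version.new(version))
  puts "#{version}: #{ok ? 'satisfies' : 'does not satisfy'} ~> 2.5"
end
# 2.4.9: does not satisfy ~> 2.5
# 2.5.0: satisfies ~> 2.5
# 2.7.1: satisfies ~> 2.5
# 3.0.0: does not satisfy ~> 2.5
```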

|

319

165

|

|

|

320

|

-

|

|

166

|

+

### Using Bundler

|

|

321

167

|

|

|

322

|

-

|

|

168

|

+

Add this line to your application's `Gemfile`:

|

|

323

169

|

|

|

324

170

|

```ruby

|

|

325

|

-

|

|

171

|

+

gem 'wgit'

|

|

172

|

+

```

|

|

326

173

|

|

|

327

|

-

|

|

328

|

-

Wgit::Document.define_extension(

|

|

329

|

-

:tables, # Wgit::Document#tables will return the page's tables.

|

|

330

|

-

'//table', # The xpath to extract the tables.

|

|

331

|

-

singleton: false, # True returns the first table found, false returns all.

|

|

332

|

-

text_content_only: false, # True returns the table text, false returns the Nokogiri object.

|

|

333

|

-

) do |tables|

|

|

334

|

-

# Here we can inspect/manipulate the tables before they're set as Wgit::Document#tables.

|

|

335

|

-

tables

|

|

336

|

-

end

|

|

174

|

+

And then execute:

|

|

337

175

|

|

|

338

|

-

|

|

339

|

-

# is initialised e.g. manually (as below) or via Wgit::Crawler methods etc.

|

|

340

|

-

doc = Wgit::Document.new(

|

|

341

|

-

'http://some_url.com',

|

|

342

|

-

<<~HTML

|

|

343

|

-

<html>

|

|

344

|

-

<p>Hello world! Welcome to my site.</p>

|

|

345

|

-

<table>

|

|

346

|

-

<tr><th>Name</th><th>Age</th></tr>

|

|

347

|

-

<tr><td>Socrates</td><td>101</td></tr>

|

|

348

|

-

<tr><td>Plato</td><td>106</td></tr>

|

|

349

|

-

</table>

|

|

350

|

-

<p>I hope you enjoyed your visit :-)</p>

|

|

351

|

-

</html>

|

|

352

|

-

HTML

|

|

353

|

-

)

|

|

354

|

-

|

|

355

|

-

# Call our newly defined method to obtain the table data we're interested in.

|

|

356

|

-

tables = doc.tables

|

|

357

|

-

|

|

358

|

-

# Both the collection and each table within the collection are plain Nokogiri objects.

|

|

359

|

-

tables.class # => Nokogiri::XML::NodeSet

|

|

360

|

-

tables.first.class # => Nokogiri::XML::Element

|

|

361

|

-

|

|

362

|

-

# Note, the Document's stats now include our 'tables' extension.

|

|

363

|

-

doc.stats # => {

|

|

364

|

-

# :url=>19, :html=>242, :links=>0, :text=>8, :text_bytes=>91, :tables=>1

|

|

365

|

-

# }

|

|

366

|

-

```

|

|

176

|

+

$ bundle

|

|

367

177

|

|

|

368

|

-

|

|

178

|

+

### Using RubyGems

|

|

369

179

|

|

|

370

|

-

|

|

180

|

+

$ gem install wgit

|

|

371

181

|

|

|

372

|

-

|

|

373

|

-

- A `Wgit::Document` extension (once initialised) will become a Document instance variable, meaning that the value will be inserted into the Database if it's a primitive type e.g. `String`, `Array` etc. Complex types e.g. Ruby objects won't be inserted. It's up to you to ensure the data you want inserted, can be inserted.

|

|

374

|

-

- Once inserted into the Database, you can search a `Wgit::Document`'s extension attributes by updating the Database's *text search index*. See the [Database Example](#Database-Example) for more information.

|

|

182

|

+

Verify the install by using the executable (to start a REPL session):

|

|

375

183

|

|

|

376

|

-

|

|

184

|

+

$ wgit

|

|

377

185

|

|

|

378

|

-

|

|

186

|

+

## Documentation

|

|

379

187

|

|

|

380

|

-

-

|

|

381

|

-

-

|

|

382

|

-

-

|

|

188

|

+

- [Getting Started](https://github.com/michaeltelford/wgit/wiki/Getting-Started)

|

|

189

|

+

- [Wiki](https://github.com/michaeltelford/wgit/wiki)

|

|

190

|

+

- [Yardocs](https://www.rubydoc.info/github/michaeltelford/wgit/master)

|

|

191

|

+

- [CHANGELOG](https://github.com/michaeltelford/wgit/blob/master/CHANGELOG.md)

|

|

383

192

|

|

|

384

193

|

## Executable

|

|

385

194

|

|

|

386

|

-

Installing the Wgit gem

|

|

195

|

+

Installing the Wgit gem adds a `wgit` executable to your `$PATH`. The executable launches an interactive REPL session with the Wgit gem already loaded; making it super easy to index and search from the command line without the need for scripts.

|

|

387

196

|

|

|

388

197

|

The `wgit` executable does the following things (in order):

|

|

389

198

|

|

|

@@ -391,21 +200,7 @@ The `wgit` executable does the following things (in order):

|

|

|

391

200

|

2. `eval`'s a `.wgit.rb` file (if one exists in either the local or home directory, whichever is found first)

|

|

392

201

|

3. Starts an interactive shell (using `pry` if it's installed, or `irb` if not)

|

|

393

202

|

|

|

394

|

-

The `.wgit.rb` file can be used to seed fixture data or define helper functions for the session. For example, you could define a function which indexes your website for quick and easy searching every time you start a new session.

|

|

395

|

-

|

|

396

|

-

## Change Log

|

|

397

|

-

|

|

398

|

-

See the [CHANGELOG.md](https://github.com/michaeltelford/wgit/blob/master/CHANGELOG.md) for differences (including any breaking changes) between releases of Wgit.

|

|

399

|

-

|

|

400

|

-

### Gem Versioning

|

|

401

|

-

|

|

402

|

-

The `wgit` gem follows these versioning rules:

|

|

403

|

-

|

|

404

|

-

- The version format is `MAJOR.MINOR.PATCH` e.g. `0.1.0`.

|

|

405

|

-

- Since the gem hasn't reached `v1.0.0` yet, slightly different semantic versioning rules apply.

|

|

406

|

-

- The `PATCH` represents *non breaking changes* while the `MINOR` represents *breaking changes* e.g. updating from version `0.1.0` to `0.2.0` will likely introduce breaking changes necessitating updates to your codebase.

|

|

407

|

-

- To determine what changes are needed, consult the `CHANGELOG.md`. If you need help, raise an issue.

|

|

408

|

-

- Once `wgit v1.0.0` is released, *normal* [semantic versioning](https://semver.org/) rules will apply e.g. only a `MAJOR` version change should introduce breaking changes.

|

|

203

|

+

The `.wgit.rb` file can be used to seed fixture data or define helper functions for the session. For example, you could define a function which indexes your website for quick and easy searching every time you start a new session.

|

|

409

204

|

|

|

410

205

|

## License

|

|

411

206

|

|

|

@@ -431,14 +226,14 @@ And you're good to go!

|

|

|

431

226

|

|

|

432

227

|

### Tooling

|

|

433

228

|

|

|

434

|

-

Wgit uses the [`toys`](https://github.com/dazuma/toys) gem (instead of Rake) for task invocation

|

|

229

|

+

Wgit uses the [`toys`](https://github.com/dazuma/toys) gem (instead of Rake) for task invocation. For a full list of available tasks a.k.a. tools, run `toys --tools`. You can search for a tool using `toys -s tool_name`. The most commonly used tools are listed below...

|

|

435

230

|

|

|

436

|

-

Run `toys db` to see a list of database related tools, enabling you to run a Mongo DB instance locally using Docker. Run `toys test` to execute the tests

|

|

231

|

+

Run `toys db` to see a list of database related tools, enabling you to run a Mongo DB instance locally using Docker. Run `toys test` to execute the tests.

|

|

437

232

|

|

|

438

|

-

To generate code documentation run `toys yardoc`. To browse the

|

|

233

|

+

To generate code documentation locally, run `toys yardoc`. To browse the docs in a browser run `toys yardoc --serve`. You can also use the `yri` command line tool e.g. `yri Wgit::Crawler#crawl_site` etc.

|

|

439

234

|

|

|

440

|

-

To install this gem onto your local machine, run `toys install

|

|

235

|

+

To install this gem onto your local machine, run `toys install` and follow the prompt.

|

|

441

236

|

|

|

442

237

|

### Console

|

|

443

238

|

|

|

444

|

-

You can run `toys console` for an interactive shell using the `./bin/wgit` executable. The `toys setup` task will have created

|

|

239

|

+

You can run `toys console` for an interactive shell using the `./bin/wgit` executable. The `toys setup` task will have created an `.env` and `.wgit.rb` file which get loaded by the executable. You can use the contents of this [gist](https://gist.github.com/michaeltelford/b90d5e062da383be503ca2c3a16e9164) to turn the executable into a development console. It defines some useful functions, fixtures and connects to the database etc. Don't forget to set the `WGIT_CONNECTION_STRING` in the `.env` file.

|