waterdrop 2.6.7 → 2.6.8

This diff represents the content of publicly available package versions that have been released to one of the supported registries. The information contained in this diff is provided for informational purposes only and reflects changes between package versions as they appear in their respective public registries.

- checksums.yaml +4 -4

- checksums.yaml.gz.sig +0 -0

- data/.github/workflows/ci.yml +16 -9

- data/CHANGELOG.md +17 -8

- data/Gemfile.lock +6 -6

- data/README.md +19 -500

- data/docker-compose.yml +19 -12

- data/lib/waterdrop/clients/buffered.rb +59 -3

- data/lib/waterdrop/clients/dummy.rb +20 -0

- data/lib/waterdrop/clients/rdkafka.rb +6 -1

- data/lib/waterdrop/config.rb +5 -0

- data/lib/waterdrop/contracts/message.rb +4 -1

- data/lib/waterdrop/errors.rb +3 -0

- data/lib/waterdrop/instrumentation/callbacks/delivery.rb +6 -4

- data/lib/waterdrop/instrumentation/logger_listener.rb +21 -1

- data/lib/waterdrop/instrumentation/notifications.rb +5 -0

- data/lib/waterdrop/producer/async.rb +4 -2

- data/lib/waterdrop/producer/sync.rb +4 -2

- data/lib/waterdrop/producer/transactions.rb +165 -0

- data/lib/waterdrop/producer.rb +42 -3

- data/lib/waterdrop/version.rb +1 -1

- data/waterdrop.gemspec +3 -3

- data.tar.gz.sig +0 -0

- metadata +7 -6

- metadata.gz.sig +0 -0

data/README.md

CHANGED

|

@@ -4,80 +4,37 @@

|

|

|

4

4

|

[](http://badge.fury.io/rb/waterdrop)

|

|

5

5

|

[](https://slack.karafka.io)

|

|

6

6

|

|

|

7

|

-

|

|

7

|

+

WaterDrop is a standalone gem that sends messages to Kafka easily with an extra validation layer. It is a part of the [Karafka](https://github.com/karafka/karafka) ecosystem.

|

|

8

8

|

|

|

9

9

|

It:

|

|

10

10

|

|

|

11

11

|

- Is thread-safe

|

|

12

12

|

- Supports sync producing

|

|

13

13

|

- Supports async producing

|

|

14

|

+

- Supports transactions

|

|

14

15

|

- Supports buffering

|

|

15

16

|

- Supports producing messages to multiple clusters

|

|

16

17

|

- Supports multiple delivery policies

|

|

17

18

|

- Works with Kafka `1.0+` and Ruby `2.7+`

|

|

19

|

+

- Works with and without Karafka

|

|

18

20

|

|

|

19

|

-

##

|

|

21

|

+

## Documentation

|

|

20

22

|

|

|

21

|

-

|

|

22

|

-

- [Setup](#setup)

|

|

23

|

-

* [WaterDrop configuration options](#waterdrop-configuration-options)

|

|

24

|

-

* [Kafka configuration options](#kafka-configuration-options)

|

|

25

|

-

- [Usage](#usage)

|

|

26

|

-

* [Basic usage](#basic-usage)

|

|

27

|

-

* [Using WaterDrop across the application and with Ruby on Rails](#using-waterdrop-across-the-application-and-with-ruby-on-rails)

|

|

28

|

-

* [Using WaterDrop with a connection-pool](#using-waterdrop-with-a-connection-pool)

|

|

29

|

-

* [Buffering](#buffering)

|

|

30

|

-

+ [Using WaterDrop to buffer messages based on the application logic](#using-waterdrop-to-buffer-messages-based-on-the-application-logic)

|

|

31

|

-

+ [Using WaterDrop with rdkafka buffers to achieve periodic auto-flushing](#using-waterdrop-with-rdkafka-buffers-to-achieve-periodic-auto-flushing)

|

|

32

|

-

* [Idempotence](#idempotence)

|

|

33

|

-

* [Compression](#compression)

|

|

34

|

-

- [Instrumentation](#instrumentation)

|

|

35

|

-

* [Usage statistics](#usage-statistics)

|

|

36

|

-

* [Error notifications](#error-notifications)

|

|

37

|

-

* [Acknowledgment notifications](#acknowledgment-notifications)

|

|

38

|

-

* [Datadog and StatsD integration](#datadog-and-statsd-integration)

|

|

39

|

-

* [Forking and potential memory problems](#forking-and-potential-memory-problems)

|

|

40

|

-

- [Middleware](#middleware)

|

|

41

|

-

- [Note on contributions](#note-on-contributions)

|

|

23

|

+

Karafka ecosystem components documentation, including WaterDrop, can be found [here](https://karafka.io/docs/#waterdrop).

|

|

42

24

|

|

|

43

|

-

##

|

|

25

|

+

## Getting Started

|

|

44

26

|

|

|

45

|

-

|

|

27

|

+

If you want to both produce and consume messages, please use [Karafka](https://github.com/karafka/karafka/). It integrates WaterDrop automatically.

|

|

46

28

|

|

|

47

|

-

|

|

29

|

+

To get started with WaterDrop:

|

|

48

30

|

|

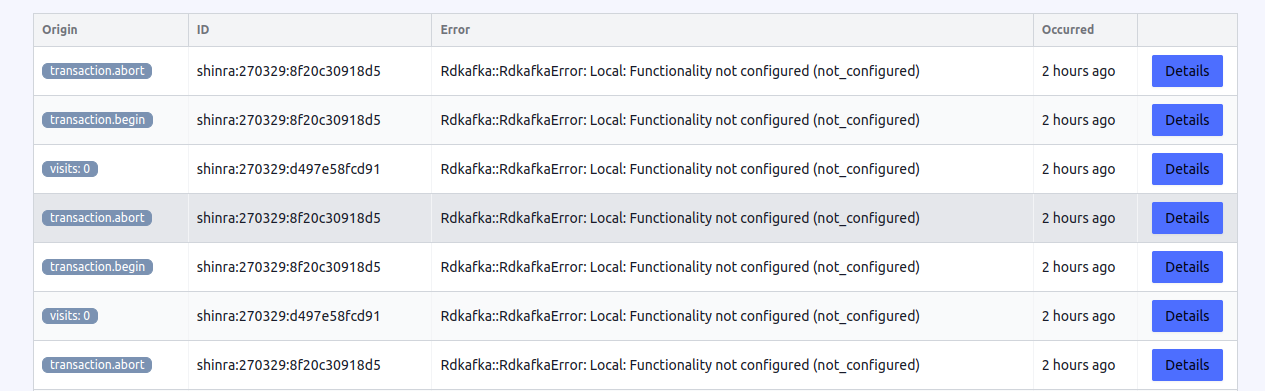

|

49

|

-

|

|

50

|

-

gem 'waterdrop'

|

|

51

|

-

```

|

|

52

|

-

|

|

53

|

-

and run

|

|

54

|

-

|

|

55

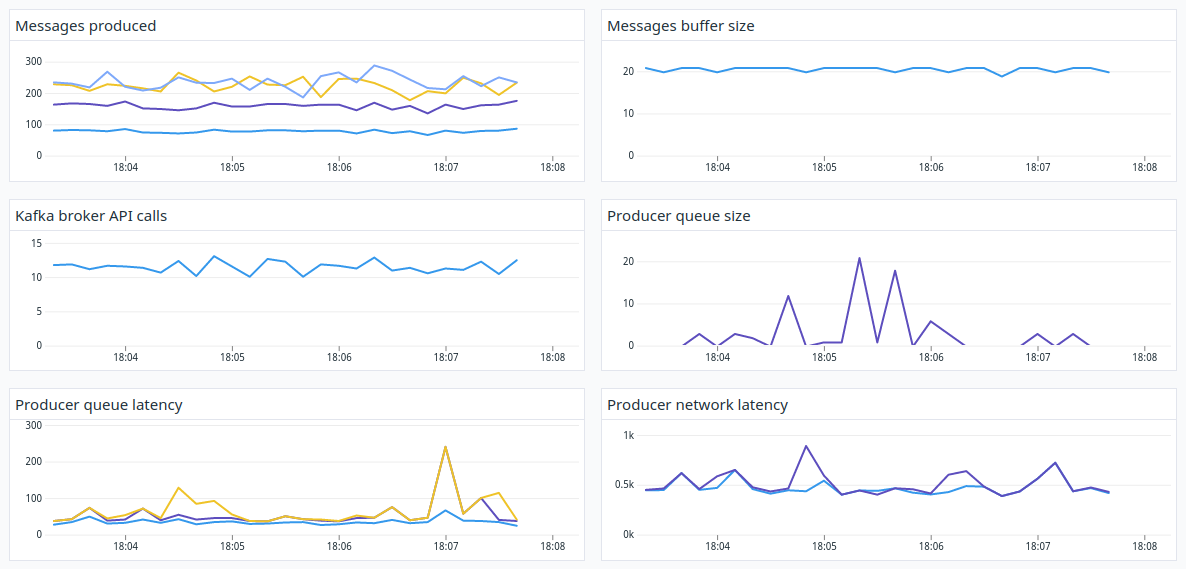

|

-

```

|

|

56

|

-

bundle install

|

|

57

|

-

```

|

|

58

|

-

|

|

59

|

-

## Setup

|

|

60

|

-

|

|

61

|

-

WaterDrop is a complex tool that contains multiple configuration options. To keep everything organized, all the configuration options were divided into two groups:

|

|

31

|

+

1. Add it to your Gemfile:

|

|

62

32

|

|

|

63

|

-

|

|

64

|

-

|

|

65

|

-

|

|

66

|

-

To apply all those configuration options, you need to create a producer instance and use the ```#setup``` method:

|

|

67

|

-

|

|

68

|

-

```ruby

|

|

69

|

-

producer = WaterDrop::Producer.new

|

|

70

|

-

|

|

71

|

-

producer.setup do |config|

|

|

72

|

-

config.deliver = true

|

|

73

|

-

config.kafka = {

|

|

74

|

-

'bootstrap.servers': 'localhost:9092',

|

|

75

|

-

'request.required.acks': 1

|

|

76

|

-

}

|

|

77

|

-

end

|

|

33

|

+

```bash

|

|

34

|

+

bundle add waterdrop

|

|

78

35

|

```

|

|

79

36

|

|

|

80

|

-

|

|

37

|

+

2. Create and configure a producer:

|

|

81

38

|

|

|

82

39

|

```ruby

|

|

83

40

|

producer = WaterDrop::Producer.new do |config|

|

|

@@ -89,46 +46,17 @@ producer = WaterDrop::Producer.new do |config|
 end
 ```
 
-
-
-Some of the options are:
-
-| Option | Description |
-|------------------------------|----------------------------------------------------------------------------|
-| `id` | id of the producer for instrumentation and logging |
-| `logger` | Logger that we want to use |
-| `deliver` | Should we send messages to Kafka or just fake the delivery |
-| `max_wait_timeout` | Waits that long for the delivery report or raises an error |
-| `wait_timeout` | Waits that long before re-check of delivery report availability |
-| `wait_on_queue_full` | Should be wait on queue full or raise an error when that happens |
-| `wait_backoff_on_queue_full` | Waits that long before retry when queue is full |
-| `wait_timeout_on_queue_full` | If back-offs and attempts that that much time, error won't be retried more |
-
-Full list of the root configuration options is available [here](https://github.com/karafka/waterdrop/blob/master/lib/waterdrop/config.rb#L25).
+3. Use it as follows:
 
-### Kafka configuration options
-
-You can create producers with different `kafka` settings. Documentation of the available configuration options is available on https://github.com/edenhill/librdkafka/blob/master/CONFIGURATION.md.
-
-## Usage
-
-### Basic usage
-
-To send Kafka messages, just create a producer and use it:
 
 ```ruby
-
-
-producer.setup do |config|
-  config.kafka = { 'bootstrap.servers': 'localhost:9092' }
-end
-
+# sync producing
 producer.produce_sync(topic: 'my-topic', payload: 'my message')
 
 # or for async
 producer.produce_async(topic: 'my-topic', payload: 'my message')
 
-# or in batches
+# or in sync batches
 producer.produce_many_sync(
   [
     { topic: 'my-topic', payload: 'my message'},

@@ -136,7 +64,7 @@ producer.produce_many_sync(
   ]
 )
 
-#
+# and async batches
 producer.produce_many_async(
   [
     { topic: 'my-topic', payload: 'my message'},

@@ -144,418 +72,9 @@ producer.produce_many_async(
   ]
 )
 
-#
-producer.
-
-
-Each message that you want to publish, will have its value checked.
-
-Here are all the things you can provide in the message hash:
-
-| Option | Required | Value type | Description |
-|-----------------|----------|---------------|----------------------------------------------------------|
-| `topic` | true | String | The Kafka topic that should be written to |
-| `payload` | true | String | Data you want to send to Kafka |
-| `key` | false | String | The key that should be set in the Kafka message |
-| `partition` | false | Integer | A specific partition number that should be written to |
-| `partition_key` | false | String | Key to indicate the destination partition of the message |
-| `timestamp` | false | Time, Integer | The timestamp that should be set on the message |
-| `headers` | false | Hash | Headers for the message |
-
-Keep in mind, that message you want to send should be either binary or stringified (to_s, to_json, etc).
-
-### Using WaterDrop across the application and with Ruby on Rails
-
-If you plan to both produce and consume messages using Kafka, you should install and use [Karafka](https://github.com/karafka/karafka). It integrates automatically with Ruby on Rails applications and auto-configures WaterDrop producer to make it accessible via `Karafka#producer` method:
-
-```ruby
-event = Events.last
-Karafka.producer.produce_async(topic: 'events', payload: event.to_json)
-```
-
-If you want to only produce messages from within your application without consuming with Karafka, since WaterDrop is thread-safe you can create a single instance in an initializer like so:
-
-```ruby
-KAFKA_PRODUCER = WaterDrop::Producer.new
-
-KAFKA_PRODUCER.setup do |config|
-  config.kafka = { 'bootstrap.servers': 'localhost:9092' }
-end
-
-# And just dispatch messages
-KAFKA_PRODUCER.produce_sync(topic: 'my-topic', payload: 'my message')
-```
-
-### Using WaterDrop with a connection-pool
-
-While WaterDrop is thread-safe, there is no problem in using it with a connection pool inside high-intensity applications. The only thing worth keeping in mind, is that WaterDrop instances should be shutdown before the application is closed.
-
-```ruby
-KAFKA_PRODUCERS_CP = ConnectionPool.new do
-  WaterDrop::Producer.new do |config|
-    config.kafka = { 'bootstrap.servers': 'localhost:9092' }
-  end
-end
-
-KAFKA_PRODUCERS_CP.with do |producer|
+# transactions
+producer.transaction do
+  producer.produce_async(topic: 'my-topic', payload: 'my message')
   producer.produce_async(topic: 'my-topic', payload: 'my message')
 end
-
-KAFKA_PRODUCERS_CP.shutdown { |producer| producer.close }
-```
-
-### Buffering
-
-WaterDrop producers support buffering messages in their internal buffers and on the `rdkafka` level via `queue.buffering.*` set of settings.
-
-This means that depending on your use case, you can achieve both granular buffering and flushing control when needed with context awareness and periodic and size-based flushing functionalities.
-
-### Idempotence
-
-When idempotence is enabled, the producer will ensure that messages are successfully produced exactly once and in the original production order.
-
-To enable idempotence, you need to set the `enable.idempotence` kafka scope setting to `true`:
-
-```ruby
-WaterDrop::Producer.new do |config|
-  config.deliver = true
-  config.kafka = {
-    'bootstrap.servers': 'localhost:9092',
-    'enable.idempotence': true
-  }
-end
-```
-
-The following Kafka configuration properties are adjusted automatically (if not modified by the user) when idempotence is enabled:
-
-- `max.in.flight.requests.per.connection` set to `5`
-- `retries` set to `2147483647`
-- `acks` set to `all`
-
-The idempotent producer ensures that messages are always delivered in the correct order and without duplicates. In other words, when an idempotent producer sends a message, the messaging system ensures that the message is only delivered once to the message broker and subsequently to the consumers, even if the producer tries to send the message multiple times.
-
-### Compression
-
-WaterDrop supports following compression types:
-
-- `gzip`
-- `zstd`
-- `lz4`
-- `snappy`
-
-To use compression, set `kafka` scope `compression.codec` setting. You can also optionally indicate the `compression.level`:
-
-```ruby
-producer = WaterDrop::Producer.new
-
-producer.setup do |config|
-  config.kafka = {
-    'bootstrap.servers': 'localhost:9092',
-    'compression.codec': 'gzip',
-    'compression.level': 6
-  }
-end
-```
-
-**Note**: In order to use `zstd`, you need to install `libzstd-dev`:
-
-```bash
-apt-get install -y libzstd-dev
-```
-
-#### Using WaterDrop to buffer messages based on the application logic
-
-```ruby
-producer = WaterDrop::Producer.new
-
-producer.setup do |config|
-  config.kafka = { 'bootstrap.servers': 'localhost:9092' }
-end
-
-# Simulating some events states of a transaction - notice, that the messages will be flushed to
-# kafka only upon arrival of the `finished` state.
-%w[
-  started
-  processed
-  finished
-].each do |state|
-  producer.buffer(topic: 'events', payload: state)
-
-  puts "The messages buffer size #{producer.messages.size}"
-  producer.flush_sync if state == 'finished'
-  puts "The messages buffer size #{producer.messages.size}"
-end
-
-producer.close
-```
-
-#### Using WaterDrop with rdkafka buffers to achieve periodic auto-flushing
-
-```ruby
-producer = WaterDrop::Producer.new
-
-producer.setup do |config|
-  config.kafka = {
-    'bootstrap.servers': 'localhost:9092',
-    # Accumulate messages for at most 10 seconds
-    'queue.buffering.max.ms': 10_000
-  }
-end
-
-# WaterDrop will flush messages minimum once every 10 seconds
-30.times do |i|
-  producer.produce_async(topic: 'events', payload: i.to_s)
-  sleep(1)
-end
-
-producer.close
-```
-
-## Instrumentation
-
-Each of the producers after the `#setup` is done, has a custom monitor to which you can subscribe.
-
-```ruby
-producer = WaterDrop::Producer.new
-
-producer.setup do |config|
-  config.kafka = { 'bootstrap.servers': 'localhost:9092' }
-end
-
-producer.monitor.subscribe('message.produced_async') do |event|
-  puts "A message was produced to '#{event[:message][:topic]}' topic!"
-end
-
-producer.produce_async(topic: 'events', payload: 'data')
-
-producer.close
-```
-
-See the `WaterDrop::Instrumentation::Notifications::EVENTS` for the list of all the supported events.
-
-### Karafka Web-UI
-
-Karafka [Web UI](https://karafka.io/docs/Web-UI-Getting-Started/) is a user interface for the Karafka framework. The Web UI provides a convenient way for monitor all producers related errors out of the box.
-
-
-
-### Logger Listener
-
-WaterDrop comes equipped with a `LoggerListener`, a useful feature designed to facilitate the reporting of WaterDrop's operational details directly into the assigned logger. The `LoggerListener` provides a convenient way of tracking the events and operations that occur during the usage of WaterDrop, enhancing transparency and making debugging and issue tracking easier.
-
-However, it's important to note that this Logger Listener is not subscribed by default. This means that WaterDrop does not automatically send operation data to your logger out of the box. This design choice has been made to give users greater flexibility and control over their logging configuration.
-
-To use this functionality, you need to manually subscribe the `LoggerListener` to WaterDrop instrumentation. Below, you will find an example demonstrating how to perform this subscription.
-
-```ruby
-LOGGER = Logger.new($stdout)
-
-producer = WaterDrop::Producer.new do |config|
-  config.kafka = { 'bootstrap.servers': 'localhost:9092' }
-  config.logger = LOGGER
-end
-
-producer.monitor.subscribe(
-  WaterDrop::Instrumentation::LoggerListener.new(
-    # You can use a different logger than the one assigned to WaterDrop if you want
-    producer.config.logger,
-    # If this is set to false, the produced messages details will not be sent to logs
-    # You may set it to false if you find the level of reporting in the debug too extensive
-    log_messages: true
-  )
-)
-
-producer.produce_sync(topic: 'my.topic', payload: 'my.message')
-```
-
-### Usage statistics
-
-WaterDrop is configured to emit internal `librdkafka` metrics every five seconds. You can change this by setting the `kafka` `statistics.interval.ms` configuration property to a value greater of equal `0`. Emitted statistics are available after subscribing to the `statistics.emitted` publisher event. If set to `0`, metrics will not be published.
-
-The statistics include all of the metrics from `librdkafka` (complete list [here](https://github.com/edenhill/librdkafka/blob/master/STATISTICS.md)) as well as the diff of those against the previously emitted values.
-
-For several attributes like `txmsgs`, `librdkafka` publishes only the totals. In order to make it easier to track the progress (for example number of messages sent between statistics emitted events), WaterDrop diffs all the numeric values against previously available numbers. All of those metrics are available under the same key as the metric but with additional `_d` postfix:
-
-
-```ruby
-producer = WaterDrop::Producer.new do |config|
-  config.kafka = {
-    'bootstrap.servers': 'localhost:9092',
-    'statistics.interval.ms': 2_000 # emit statistics every 2 seconds
-  }
-end
-
-producer.monitor.subscribe('statistics.emitted') do |event|
-  sum = event[:statistics]['txmsgs']
-  diff = event[:statistics]['txmsgs_d']
-
-  p "Sent messages: #{sum}"
-  p "Messages sent from last statistics report: #{diff}"
-end
-
-sleep(2)
-
-# Sent messages: 0
-# Messages sent from last statistics report: 0
-
-20.times { producer.produce_async(topic: 'events', payload: 'data') }
-
-# Sent messages: 20
-# Messages sent from last statistics report: 20
-
-sleep(2)
-
-20.times { producer.produce_async(topic: 'events', payload: 'data') }
-
-# Sent messages: 40
-# Messages sent from last statistics report: 20
-
-sleep(2)
-
-# Sent messages: 40
-# Messages sent from last statistics report: 0
-
-producer.close
-```
-
-Note: The metrics returned may not be completely consistent between brokers, toppars and totals, due to the internal asynchronous nature of librdkafka. E.g., the top level tx total may be less than the sum of the broker tx values which it represents.
-
-### Error notifications
-
-WaterDrop allows you to listen to all errors that occur while producing messages and in its internal background threads. Things like reconnecting to Kafka upon network errors and others unrelated to publishing messages are all available under `error.occurred` notification key. You can subscribe to this event to ensure your setup is healthy and without any problems that would otherwise go unnoticed as long as messages are delivered.
-
-```ruby
-producer = WaterDrop::Producer.new do |config|
-  # Note invalid connection port...
-  config.kafka = { 'bootstrap.servers': 'localhost:9090' }
-end
-
-producer.monitor.subscribe('error.occurred') do |event|
-  error = event[:error]
-
-  p "WaterDrop error occurred: #{error}"
-end
-
-# Run this code without Kafka cluster
-loop do
-  producer.produce_async(topic: 'events', payload: 'data')
-
-  sleep(1)
-end
-
-# After you stop your Kafka cluster, you will see a lot of those:
-#
-# WaterDrop error occurred: Local: Broker transport failure (transport)
-#
-# WaterDrop error occurred: Local: Broker transport failure (transport)
-```
-
-**Note:** `error.occurred` will also include any errors originating from `librdkafka` for synchronous operations, including those that are raised back to the end user.
-
-### Acknowledgment notifications
-
-WaterDrop allows you to listen to Kafka messages' acknowledgment events. This will enable you to monitor deliveries of messages from WaterDrop even when using asynchronous dispatch methods.
-
-That way, you can make sure, that dispatched messages are acknowledged by Kafka.
-
-```ruby
-producer = WaterDrop::Producer.new do |config|
-  config.kafka = { 'bootstrap.servers': 'localhost:9092' }
-end
-
-producer.monitor.subscribe('message.acknowledged') do |event|
-  producer_id = event[:producer_id]
-  offset = event[:offset]
-
-  p "WaterDrop [#{producer_id}] delivered message with offset: #{offset}"
-end
-
-loop do
-  producer.produce_async(topic: 'events', payload: 'data')
-
-  sleep(1)
-end
-
-# WaterDrop [dd8236fff672] delivered message with offset: 32
-# WaterDrop [dd8236fff672] delivered message with offset: 33
-# WaterDrop [dd8236fff672] delivered message with offset: 34
-```
-
-### Datadog and StatsD integration
-
-WaterDrop comes with (optional) full Datadog and StatsD integration that you can use. To use it:
-
-```ruby
-# require datadog/statsd and the listener as it is not loaded by default
-require 'datadog/statsd'
-require 'waterdrop/instrumentation/vendors/datadog/metrics_listener'
-
-# initialize your producer with statistics.interval.ms enabled so the metrics are published
-producer = WaterDrop::Producer.new do |config|
-  config.deliver = true
-  config.kafka = {
-    'bootstrap.servers': 'localhost:9092',
-    'statistics.interval.ms': 1_000
-  }
-end
-
-# initialize the listener with statsd client
-listener = ::WaterDrop::Instrumentation::Vendors::Datadog::MetricsListener.new do |config|
-  config.client = Datadog::Statsd.new('localhost', 8125)
-  # Publish host as a tag alongside the rest of tags
-  config.default_tags = ["host:#{Socket.gethostname}"]
-end
-
-# Subscribe with your listener to your producer and you should be ready to go!
-producer.monitor.subscribe(listener)
-```
-
-You can also find [here](https://github.com/karafka/waterdrop/blob/master/lib/waterdrop/instrumentation/vendors/datadog/dashboard.json) a ready to import DataDog dashboard configuration file that you can use to monitor all of your producers.
-
-
-
-### Forking and potential memory problems
-
-If you work with forked processes, make sure you **don't** use the producer before the fork. You can easily configure the producer and then fork and use it.
-
-To tackle this [obstacle](https://github.com/appsignal/rdkafka-ruby/issues/15) related to rdkafka, WaterDrop adds finalizer to each of the producers to close the rdkafka client before the Ruby process is shutdown. Due to the [nature of the finalizers](https://www.mikeperham.com/2010/02/24/the-trouble-with-ruby-finalizers/), this implementation prevents producers from being GCed (except upon VM shutdown) and can cause memory leaks if you don't use persistent/long-lived producers in a long-running process or if you don't use the `#close` method of a producer when it is no longer needed. Creating a producer instance for each message is anyhow a rather bad idea, so we recommend not to.
-
-## Middleware
-
-WaterDrop supports injecting middleware similar to Rack.
-
-Middleware can be used to provide extra functionalities like auto-serialization of data or any other modifications of messages before their validation and dispatch.
-
-Each middleware accepts the message hash as input and expects a message hash as a result.
-
-There are two methods to register middlewares:
-
-- `#prepend` - registers middleware as the first in the order of execution
-- `#append` - registers middleware as the last in the order of execution
-
-Below you can find an example middleware that converts the incoming payload into a JSON string by running `#to_json` automatically:
-
-```ruby
-class AutoMapper
-  def call(message)
-    message[:payload] = message[:payload].to_json
-    message
-  end
-end
-
-# Register middleware
-producer.middleware.append(AutoMapper.new)
-
-# Dispatch without manual casting
-producer.produce_async(topic: 'users', payload: user)
 ```
-
-**Note**: It is up to the end user to decide whether to modify the provided message or deep copy it and update the newly created one.
-
-## Note on contributions
-
-First, thank you for considering contributing to the Karafka ecosystem! It's people like you that make the open source community such a great community!
-
-Each pull request must pass all the RSpec specs, integration tests and meet our quality requirements.
-
-Fork it, update and wait for the Github Actions results.
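The `producer.transaction` block added to the README in this release groups several dispatches into one unit. As a rough illustration of what that grouping means, here is a minimal Ruby sketch that assumes the usual all-or-nothing semantics of Kafka transactions and models them with a toy in-memory producer. `SketchProducer`, its `delivered` list and its staging buffer are hypothetical names invented for this sketch; this is not WaterDrop's implementation, and a real Kafka transactional producer additionally needs a `transactional.id` in its Kafka settings.

```ruby
# Toy in-memory producer: NOT WaterDrop's implementation, only an
# illustration of the all-or-nothing behaviour of a transaction block.
class SketchProducer
  attr_reader :delivered

  def initialize
    @delivered = [] # messages that became visible ("committed")
    @staged = nil   # messages collected inside an open transaction
  end

  # Outside a transaction the message is delivered immediately;
  # inside one it is only staged until the block finishes.
  def produce_async(message)
    (@staged || @delivered) << message
    message
  end

  # Commits staged messages when the block returns, drops them when it raises.
  def transaction
    raise ArgumentError, 'block required' unless block_given?
    return yield if @staged # nested call: join the outer transaction (sketch assumption)

    @staged = []
    begin
      result = yield
      @delivered.concat(@staged) # commit
      result
    ensure
      @staged = nil # after an error nothing was committed, so this acts as the rollback
    end
  end
end

producer = SketchProducer.new

producer.transaction do
  producer.produce_async(topic: 'my-topic', payload: 'first')
  producer.produce_async(topic: 'my-topic', payload: 'second')
end

begin
  producer.transaction do
    producer.produce_async(topic: 'my-topic', payload: 'lost')
    raise 'something failed'
  end
rescue RuntimeError
  # the staged message was dropped together with the failed transaction
end

puts producer.delivered.size # prints 2
```

Only the two messages from the successful block end up in `delivered`; the message staged before the error is discarded, which is the property the sketch is meant to show.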

data/docker-compose.yml

CHANGED

|

@@ -1,18 +1,25 @@

|

|

|

1

1

|

version: '2'

|

|

2

|

+

|

|

2

3

|

services:

|

|

3

|

-

zookeeper:

|

|

4

|

-

image: wurstmeister/zookeeper

|

|

5

|

-

ports:

|

|

6

|

-

- "2181:2181"

|

|

7

4

|

kafka:

|

|

8

|

-

|

|

5

|

+

container_name: kafka

|

|

6

|

+

image: confluentinc/cp-kafka:7.5.1

|

|

7

|

+

|

|

9

8

|

ports:

|

|

10

|

-

-

|

|

9

|

+

- 9092:9092

|

|

10

|

+

|

|

11

11

|

environment:

|

|

12

|

-

|

|

13

|

-

|

|

14

|

-

|

|

12

|

+

CLUSTER_ID: kafka-docker-cluster-1

|

|

13

|

+

KAFKA_INTER_BROKER_LISTENER_NAME: PLAINTEXT

|

|

14

|

+

KAFKA_OFFSETS_TOPIC_REPLICATION_FACTOR: 1

|

|

15

|

+

KAFKA_PROCESS_ROLES: broker,controller

|

|

16

|

+

KAFKA_CONTROLLER_LISTENER_NAMES: CONTROLLER

|

|

17

|

+

KAFKA_LISTENERS: PLAINTEXT://:9092,CONTROLLER://:9093

|

|

18

|

+

KAFKA_LISTENER_SECURITY_PROTOCOL_MAP: CONTROLLER:PLAINTEXT,PLAINTEXT:PLAINTEXT

|

|

19

|

+

KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://127.0.0.1:9092

|

|

20

|

+

KAFKA_BROKER_ID: 1

|

|

21

|

+

KAFKA_CONTROLLER_QUORUM_VOTERS: 1@127.0.0.1:9093

|

|

22

|

+

ALLOW_PLAINTEXT_LISTENER: 'yes'

|

|

15

23

|

KAFKA_AUTO_CREATE_TOPICS_ENABLE: 'true'

|

|

16

|

-

|

|

17

|

-

|

|

18

|

-

- /var/run/docker.sock:/var/run/docker.sock

|

|

24

|

+

KAFKA_TRANSACTION_STATE_LOG_REPLICATION_FACTOR: 1

|

|

25

|

+

KAFKA_TRANSACTION_STATE_LOG_MIN_ISR: 1

|

data/lib/waterdrop/clients/buffered.rb

CHANGED

|

@@ -19,17 +19,73 @@ module WaterDrop

|

|

|

19

19

|

super

|

|

20

20

|

@messages = []

|

|

21

21

|

@topics = Hash.new { |k, v| k[v] = [] }

|

|

22

|

+

|

|

23

|

+

@transaction_mutex = Mutex.new

|

|

24

|

+

@transaction_active = false

|

|

25

|

+

@transaction_messages = []

|

|

26

|

+

@transaction_topics = Hash.new { |k, v| k[v] = [] }

|

|

27

|

+

@transaction_level = 0

|

|

22

28

|

end

|

|

23

29

|

|

|

24

30

|

# "Produces" message to Kafka: it acknowledges it locally, adds it to the internal buffer

|

|

25

31

|

# @param message [Hash] `WaterDrop::Producer#produce_sync` message hash

|

|

26

32

|

def produce(message)

|

|

27

|

-

|

|

28

|

-

|

|

29

|

-

|

|

33

|

+

if @transaction_active

|

|

34

|

+

@transaction_topics[message.fetch(:topic)] << message

|

|

35

|

+

@transaction_messages << message

|

|

36

|

+

else

|

|

37

|

+

# We pre-validate the message payload, so topic is ensured to be present

|

|

38

|

+

@topics[message.fetch(:topic)] << message

|

|

39

|

+

@messages << message

|

|

40

|

+

end

|

|

41

|

+

|

|

30

42

|

SyncResponse.new

|

|

31

43

|

end

|

|

32

44

|

|

|

45

|

+

# Yields the code pretending it is in a transaction

|

|

46

|

+

# Supports our aborting transaction flow

|

|

47

|

+

# Moves messages to the appropriate buffers only if the transaction is successful

|

|

48

|

+

def transaction

|

|

49

|

+

@transaction_level += 1

|

|

50

|

+

|

|

51

|

+

return yield if @transaction_mutex.owned?

|

|

52

|

+

|

|

53

|

+

@transaction_mutex.lock

|

|

54

|

+

@transaction_active = true

|

|

55

|

+

|

|

56

|

+

result = nil

|

|

57

|

+

commit = false

|

|

58

|

+

|

|

59

|

+

catch(:abort) do

|

|

60

|

+

result = yield

|

|

61

|

+

commit = true

|

|

62

|

+

end

|

|

63

|

+

|

|

64

|

+

commit || raise(WaterDrop::Errors::AbortTransaction)

|

|

65

|

+

|

|

66

|

+

# Transfer transactional data on success

|

|

67

|

+

@transaction_topics.each do |topic, messages|

|

|

68

|

+

@topics[topic] += messages

|

|

69

|

+

end

|

|

70

|

+

|

|

71

|

+

@messages += @transaction_messages

|

|

72

|

+

|

|

73

|

+

result

|

|

74

|

+

rescue StandardError => e

|

|

75

|

+

return if e.is_a?(WaterDrop::Errors::AbortTransaction)

|

|

76

|

+

|

|

77

|

+

raise

|

|

78

|

+

ensure

|

|

79

|

+

@transaction_level -= 1

|

|

80

|

+

|

|

81

|

+

if @transaction_level.zero? && @transaction_mutex.owned?

|

|

82

|

+

@transaction_topics.clear

|

|

83

|

+

@transaction_messages.clear

|

|

84

|

+

@transaction_active = false

|

|

85

|

+

@transaction_mutex.unlock

|

|

86

|

+

end

|

|

87

|

+

end

|

|

88

|

+

|
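The `transaction` method added above stages messages separately and moves them to the main buffers only when the block finishes without aborting. The following is a simplified sketch of that idea, built on the same `catch(:abort)` construct. `MiniBuffer` is a hypothetical class for illustration: it leaves out the mutex, the nesting counter, and the per-topic index that the real client keeps.

```ruby
# Hypothetical miniature of the staging approach; not the gem's Buffered client.
class MiniBuffer
  attr_reader :messages

  def initialize
    @messages = []
    @staged = []
    @active = false
  end

  # Inside a transaction messages are staged; outside they are buffered directly
  def produce(message)
    (@active ? @staged : @messages) << message
  end

  # Runs the block and commits staged messages unless :abort was thrown
  def transaction
    @active = true
    committed = false
    result = nil

    catch(:abort) do
      result = yield
      committed = true
    end

    @messages.concat(@staged) if committed
    result
  ensure
    # Staged messages are dropped on abort and on any raised error
    @staged.clear
    @active = false
  end
end

buffer = MiniBuffer.new

buffer.transaction { buffer.produce(topic: 'users', payload: 'kept') }

buffer.transaction do
  buffer.produce(topic: 'users', payload: 'dropped')
  throw(:abort)
end
```

After both calls only the first message is in `buffer.messages`, since `throw(:abort)` exits the `catch` block before `committed` is set.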

|

33

89

|

# Returns messages produced to a given topic

|

|

34

90

|

# @param topic [String]

|

|

35

91

|

def messages_for(topic)

|