tracebrain 0.1.0__tar.gz

This diff represents the content of publicly available package versions that have been released to one of the supported registries. The information contained in this diff is provided for informational purposes only and reflects changes between package versions as they appear in their respective public registries.

- tracebrain-0.1.0/PKG-INFO +798 -0

- tracebrain-0.1.0/README.md +745 -0

- tracebrain-0.1.0/pyproject.toml +115 -0

- tracebrain-0.1.0/setup.cfg +4 -0

- tracebrain-0.1.0/src/tracebrain/__init__.py +63 -0

- tracebrain-0.1.0/src/tracebrain/api/v1/__init__.py +9 -0

- tracebrain-0.1.0/src/tracebrain/api/v1/ai_features.py +168 -0

- tracebrain-0.1.0/src/tracebrain/api/v1/api_router.py +19 -0

- tracebrain-0.1.0/src/tracebrain/api/v1/common.py +53 -0

- tracebrain-0.1.0/src/tracebrain/api/v1/curriculum.py +138 -0

- tracebrain-0.1.0/src/tracebrain/api/v1/episodes.py +154 -0

- tracebrain-0.1.0/src/tracebrain/api/v1/operations.py +115 -0

- tracebrain-0.1.0/src/tracebrain/api/v1/schemas/__init__.py +77 -0

- tracebrain-0.1.0/src/tracebrain/api/v1/schemas/api_models.py +414 -0

- tracebrain-0.1.0/src/tracebrain/api/v1/system.py +201 -0

- tracebrain-0.1.0/src/tracebrain/api/v1/traces.py +387 -0

- tracebrain-0.1.0/src/tracebrain/cli.py +655 -0

- tracebrain-0.1.0/src/tracebrain/config.py +224 -0

- tracebrain-0.1.0/src/tracebrain/core/__init__.py +0 -0

- tracebrain-0.1.0/src/tracebrain/core/curator.py +301 -0

- tracebrain-0.1.0/src/tracebrain/core/librarian.py +704 -0

- tracebrain-0.1.0/src/tracebrain/core/llm_providers.py +1147 -0

- tracebrain-0.1.0/src/tracebrain/core/schema.py +121 -0

- tracebrain-0.1.0/src/tracebrain/core/seeder.py +68 -0

- tracebrain-0.1.0/src/tracebrain/core/services/__init__.py +1 -0

- tracebrain-0.1.0/src/tracebrain/core/services/embedding.py +129 -0

- tracebrain-0.1.0/src/tracebrain/core/store.py +1773 -0

- tracebrain-0.1.0/src/tracebrain/db/__init__.py +0 -0

- tracebrain-0.1.0/src/tracebrain/db/base.py +400 -0

- tracebrain-0.1.0/src/tracebrain/db/session.py +132 -0

- tracebrain-0.1.0/src/tracebrain/evaluators/__init__.py +1 -0

- tracebrain-0.1.0/src/tracebrain/evaluators/judge_agent.py +270 -0

- tracebrain-0.1.0/src/tracebrain/main.py +268 -0

- tracebrain-0.1.0/src/tracebrain/resources/docker/Dockerfile +54 -0

- tracebrain-0.1.0/src/tracebrain/resources/docker/README.md +132 -0

- tracebrain-0.1.0/src/tracebrain/resources/docker/docker-compose.yml +93 -0

- tracebrain-0.1.0/src/tracebrain/resources/samples/sample_10_partial_failure.json +118 -0

- tracebrain-0.1.0/src/tracebrain/resources/samples/sample_11_episode_group_attempt_1.json +71 -0

- tracebrain-0.1.0/src/tracebrain/resources/samples/sample_12_episode_group_attempt_2.json +69 -0

- tracebrain-0.1.0/src/tracebrain/resources/samples/sample_13_governance_status.json +53 -0

- tracebrain-0.1.0/src/tracebrain/resources/samples/sample_14_failed_status.json +55 -0

- tracebrain-0.1.0/src/tracebrain/resources/samples/sample_15_hallucination.json +64 -0

- tracebrain-0.1.0/src/tracebrain/resources/samples/sample_16_format_error.json +35 -0

- tracebrain-0.1.0/src/tracebrain/resources/samples/sample_17_context_overflow.json +35 -0

- tracebrain-0.1.0/src/tracebrain/resources/samples/sample_18_invalid_arguments.json +66 -0

- tracebrain-0.1.0/src/tracebrain/resources/samples/sample_19_multi_agent_interaction.json +52 -0

- tracebrain-0.1.0/src/tracebrain/resources/samples/sample_1_simple_success.json +72 -0

- tracebrain-0.1.0/src/tracebrain/resources/samples/sample_20_experience_retrieval.json +65 -0

- tracebrain-0.1.0/src/tracebrain/resources/samples/sample_2_complex_multistep.json +102 -0

- tracebrain-0.1.0/src/tracebrain/resources/samples/sample_3_tool_error.json +72 -0

- tracebrain-0.1.0/src/tracebrain/resources/samples/sample_4_self_correction.json +102 -0

- tracebrain-0.1.0/src/tracebrain/resources/samples/sample_5_multi_tool_orchestration.json +102 -0

- tracebrain-0.1.0/src/tracebrain/resources/samples/sample_6_no_tool_call.json +38 -0

- tracebrain-0.1.0/src/tracebrain/resources/samples/sample_7_parallel_calls.json +82 -0

- tracebrain-0.1.0/src/tracebrain/resources/samples/sample_8_clarifying_question.json +38 -0

- tracebrain-0.1.0/src/tracebrain/resources/samples/sample_9_looping_behavior.json +135 -0

- tracebrain-0.1.0/src/tracebrain/sdk/__init__.py +19 -0

- tracebrain-0.1.0/src/tracebrain/sdk/agent_tools.py +111 -0

- tracebrain-0.1.0/src/tracebrain/sdk/client.py +785 -0

- tracebrain-0.1.0/src/tracebrain/sdk/trace_context.py +20 -0

- tracebrain-0.1.0/src/tracebrain/static/assets/chat-dark-bg-BmOTGz3x.png +0 -0

- tracebrain-0.1.0/src/tracebrain/static/assets/chat-light-bg-DwNPDG7g.png +0 -0

- tracebrain-0.1.0/src/tracebrain/static/assets/dark-owl-CATNyvf8.png +0 -0

- tracebrain-0.1.0/src/tracebrain/static/assets/index-B6hMk-_K.js +286 -0

- tracebrain-0.1.0/src/tracebrain/static/assets/index-CXBZvQ1E.css +1 -0

- tracebrain-0.1.0/src/tracebrain/static/assets/light-owl-CAs_QdDB.png +0 -0

- tracebrain-0.1.0/src/tracebrain/static/chat-dark-bg.png +0 -0

- tracebrain-0.1.0/src/tracebrain/static/chat-light-bg.png +0 -0

- tracebrain-0.1.0/src/tracebrain/static/favicon-dark.png +0 -0

- tracebrain-0.1.0/src/tracebrain/static/favicon-light.png +0 -0

- tracebrain-0.1.0/src/tracebrain/static/index.html +16 -0

- tracebrain-0.1.0/src/tracebrain.egg-info/PKG-INFO +798 -0

- tracebrain-0.1.0/src/tracebrain.egg-info/SOURCES.txt +75 -0

- tracebrain-0.1.0/src/tracebrain.egg-info/dependency_links.txt +1 -0

- tracebrain-0.1.0/src/tracebrain.egg-info/entry_points.txt +2 -0

- tracebrain-0.1.0/src/tracebrain.egg-info/requires.txt +37 -0

- tracebrain-0.1.0/src/tracebrain.egg-info/top_level.txt +1 -0

|

@@ -0,0 +1,798 @@

|

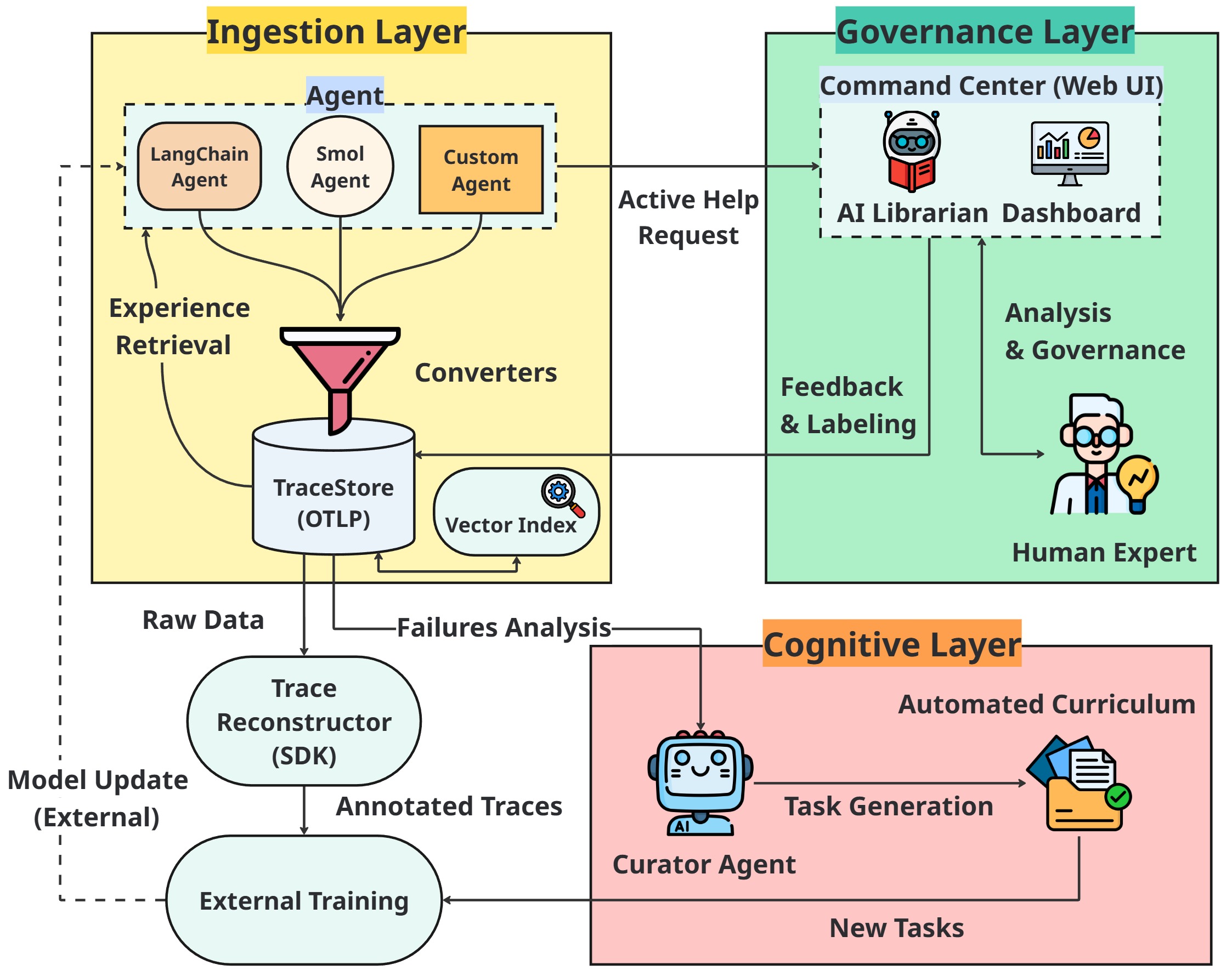

|

|

1

|

+

Metadata-Version: 2.4

|

|

2

|

+

Name: tracebrain

|

|

3

|

+

Version: 0.1.0

|

|

4

|

+

Summary: A standalone trace management platform for observability and continuous improvement of LLM-based agents.

|

|

5

|

+

Author: TraceBrain Team

|

|

6

|

+

License: MIT

|

|

7

|

+

Project-URL: Homepage, https://github.com/ToolBrain/TraceBrain

|

|

8

|

+

Project-URL: Repository, https://github.com/ToolBrain/TraceBrain

|

|

9

|

+

Project-URL: Issues, https://github.com/ToolBrain/TraceBrain/issues

|

|

10

|

+

Keywords: llm,llm-agents,agent-observability,tracing,trace-management,opentelemetry,langchain,agent-evaluation,debugging,monitoring

|

|

11

|

+

Classifier: Development Status :: 4 - Beta

|

|

12

|

+

Classifier: Intended Audience :: Developers

|

|

13

|

+

Classifier: License :: OSI Approved :: MIT License

|

|

14

|

+

Classifier: Programming Language :: Python :: 3

|

|

15

|

+

Classifier: Programming Language :: Python :: 3.8

|

|

16

|

+

Classifier: Programming Language :: Python :: 3.9

|

|

17

|

+

Classifier: Programming Language :: Python :: 3.10

|

|

18

|

+

Classifier: Programming Language :: Python :: 3.11

|

|

19

|

+

Classifier: Programming Language :: Python :: 3.12

|

|

20

|

+

Requires-Python: >=3.8

|

|

21

|

+

Description-Content-Type: text/markdown

|

|

22

|

+

Requires-Dist: sqlalchemy<3,>=2.0

|

|

23

|

+

Requires-Dist: psycopg2-binary>=2.9

|

|

24

|

+

Requires-Dist: fastapi<1,>=0.104

|

|

25

|

+

Requires-Dist: uvicorn[standard]<1,>=0.24

|

|

26

|

+

Requires-Dist: pydantic<3,>=2.0

|

|

27

|

+

Requires-Dist: pydantic-settings<3,>=2.0

|

|

28

|

+

Requires-Dist: typer<1,>=0.24

|

|

29

|

+

Requires-Dist: requests<3,>=2.31

|

|

30

|

+

Requires-Dist: sqlparse>=0.5

|

|

31

|

+

Requires-Dist: pgvector>=0.2

|

|

32

|

+

Requires-Dist: google-genai<2,>=1.60

|

|

33

|

+

Requires-Dist: python-dotenv>=1.0.0

|

|

34

|

+

Requires-Dist: platformdirs>=4.0

|

|

35

|

+

Provides-Extra: openai

|

|

36

|

+

Requires-Dist: openai<3,>=2.26; extra == "openai"

|

|

37

|

+

Provides-Extra: anthropic

|

|

38

|

+

Requires-Dist: anthropic<1,>=0.34; extra == "anthropic"

|

|

39

|

+

Provides-Extra: huggingface

|

|

40

|

+

Requires-Dist: huggingface_hub<2,>=1.0; extra == "huggingface"

|

|

41

|

+

Provides-Extra: all-llms

|

|

42

|

+

Requires-Dist: openai<3,>=2.26; extra == "all-llms"

|

|

43

|

+

Requires-Dist: anthropic<1,>=0.34; extra == "all-llms"

|

|

44

|

+

Requires-Dist: huggingface_hub<2,>=1.0; extra == "all-llms"

|

|

45

|

+

Provides-Extra: embeddings-local

|

|

46

|

+

Requires-Dist: sentence-transformers>=2.7.0; extra == "embeddings-local"

|

|

47

|

+

Provides-Extra: dev

|

|

48

|

+

Requires-Dist: pytest>=7.0.0; extra == "dev"

|

|

49

|

+

Requires-Dist: pytest-cov>=4.0.0; extra == "dev"

|

|

50

|

+

Requires-Dist: black>=23.0.0; extra == "dev"

|

|

51

|

+

Requires-Dist: isort>=5.12.0; extra == "dev"

|

|

52

|

+

Requires-Dist: mypy>=1.0.0; extra == "dev"

|

|

53

|

+

|

|

54

|

+

# TraceBrain: An Open-Source Framework for Agentic Trace Management 🧠🚀

|

|

55

|

+

|

|

56

|

+

<p align="center">

|

|

57

|

+

<picture>

|

|

58

|

+

<source media="(prefers-color-scheme: dark)" srcset="images/banner-dark.png">

|

|

59

|

+

<source media="(prefers-color-scheme: light)" srcset="images/banner-light.png">

|

|

60

|

+

<img src="https://raw.githubusercontent.com/ToolBrain/TraceBrain/main/images/banner-light.png" alt="TraceBrain Banner" width="100%">

|

|

61

|

+

</picture>

|

|

62

|

+

</p>

|

|

63

|

+

|

|

64

|

+

<p align="center">

|

|

65

|

+

<a href="https://pypi.org/project/tracebrain/">

|

|

66

|

+

<img src="https://img.shields.io/pypi/v/tracebrain" alt="PyPI Version">

|

|

67

|

+

</a>

|

|

68

|

+

<a href="https://pypistats.org/packages/tracebrain">

|

|

69

|

+

<img src="https://img.shields.io/badge/dynamic/json?url=https://pypistats.org/api/packages/tracebrain/recent&query=data.last_month&label=downloads/month" alt="Monthly Downloads">

|

|

70

|

+

</a>

|

|

71

|

+

<img src="https://img.shields.io/badge/License-MIT-green" alt="License">

|

|

72

|

+

</p>

|

|

73

|

+

|

|

74

|

+

**TraceBrain** is an open-source platform for collecting, managing, and analyzing execution traces from LLM agents.

|

|

75

|

+

|

|

76

|

+

The system standardizes heterogeneous agent logs into a unified trace format, enabling consistent inspection, evaluation, and downstream analysis across different frameworks.

|

|

77

|

+

|

|

78

|

+

By organizing historical traces as structured artifacts, TraceBrain supports agent observability, human oversight, and iterative improvement of agent workflows.

## ✨ Key Features

### 📥 Ingestion Layer (Standardization)

- **Standardized Trace Format**: Capture agent workflows using a unified OTLP-based schema.
- **Framework-Agnostic Integration**: Lightweight SDK and converters support agents built with various frameworks (e.g., LangChain, SmolAgents) or custom implementations.
- **Delta-based Tracing**: Stores only incremental context updates (`new_content`) to reduce redundant prompt storage.

### 🛡️ Governance Layer (Human-in-the-loop)

- **Active Help Request**: Allows agents to escalate uncertain decisions to human experts during execution.
- **Command Center UI**: Visualize multi-step agent traces and enable expert inspection and feedback.
- **Semi-Automated Evaluation**: An AI Judge generates draft evaluations (e.g., `rating`, `confidence`, `error_type`, and `feedback`) that experts can review and finalize.

### 🧠 Cognitive Layer (Trace-driven Learning)

- **Experience Retrieval**: Agents can query past successful trajectories to guide reasoning via in-context learning.
- **Automated Curriculum Generation**: Using error classifications produced by the AI Judge, a Curator agent analyzes clustered failure traces and synthesizes targeted training tasks.
- **Semantic Trace Search**: Vector-based retrieval (via `pgvector`) for locating similar reasoning trajectories.

## 🏗️ Architecture

- **Your AI Agent:** Any agent framework. Uses the TraceClient SDK to send data.
- **TraceStore API:** The central FastAPI server. Ingests, stores, and serves trace data.
- **Database:** The persistence layer (PostgreSQL or SQLite).
- **Admin Panel UI:** A React client in `web/` that consumes the TraceStore API.

**Tech Stack:**

- **Backend**: FastAPI, SQLAlchemy 2.0, Pydantic V2
- **Database**: PostgreSQL (production), SQLite (development), pgvector (semantic search)
- **Frontend**: React (Vite + MUI) in `web/`
- **Deployment**: Docker Compose
- **AI Integration**: LibrarianAgent + AI Judge + Curriculum Curator with multi-provider LLM support
- **Embeddings**: sentence-transformers (local) or OpenAI/Gemini (cloud)

## 📸 Platform Showcase

Take a look at the TraceBrain Command Center in action:

<p align="center">
<b>🌐 Welcome to the Command Center</b><br>
<i>The central hub for agentic trace management, featuring a clean, intuitive, and modern interface.</i><br>
<img src="https://raw.githubusercontent.com/ToolBrain/TraceBrain/main/images/homepage.jpg" alt="TraceBrain Homepage" width="100%">
</p>

<table>
<tr>
<td width="50%">
<b>📊 Command Center Dashboard</b><br>
<i>Real-time error distribution, confidence metrics, and active filters.</i><br>
<img src="https://raw.githubusercontent.com/ToolBrain/TraceBrain/main/images/dashboard_analytics.jpg" alt="Dashboard" style="width:100%; height:auto; border-radius:12px;">
</td>
<td width="50%">
<b>🔍 Trace Explorer & AI Judge</b><br>
<i>Side-by-side view of the execution tree, span properties, and Human-AI collaborative labeling.</i><br>
<img src="https://raw.githubusercontent.com/ToolBrain/TraceBrain/main/images/trace_explorer.jpg" alt="Trace Explorer" style="width:100%; height:auto; border-radius:12px;">
</td>
</tr>
<tr>
<td width="50%">
<b>🤖 AI Librarian</b><br>
<i>Query your trace database using natural language and intent-based UI filters.</i><br>
<img src="https://raw.githubusercontent.com/ToolBrain/TraceBrain/main/images/ai_librarian.jpg" alt="AI Librarian" style="width:100%; height:auto; border-radius:12px;">
</td>
<td width="50%">
<b>🗺️ Automated Curriculum</b><br>
<i>Transform diagnosed failures into targeted training tasks ready for export.</i><br>
<img src="https://raw.githubusercontent.com/ToolBrain/TraceBrain/main/images/training_roadmap.jpg" alt="Training Roadmap" style="width:100%; height:auto; border-radius:12px;">
</td>
</tr>
</table>

---

## 🚀 Quick Start

Choose one of three installation paths based on your needs. Each option ends with the
same result: the API at http://localhost:8000, served together with the UI (Option 3
additionally runs a hot-reloading frontend dev server on port 5173).

### Option 1: Docker (Recommended)

This is the default path for most users. It automatically provisions a production-ready
PostgreSQL + pgvector environment.

1. **Install the CLI**

   ```bash
   pip install tracebrain
   ```

2. **Initialize**

   ```bash
   tracebrain init
   ```

   This creates a template `.env` file for API keys and configuration.

   Open the `.env` file and add your API keys before continuing. If you skip this step,
   the containers will start, but the AI features (Librarian, Judge, Curator) will fail.

3. **Start the platform**

   ```bash
   tracebrain up
   ```

**Access:** http://localhost:8000 (UI + API)

Option 1 uses pre-built images from Docker Hub, so you don't need Node.js or local build tools.
You only need `pip install tracebrain` to get the CLI; all LLM and embedding dependencies are
already bundled in the Docker image, so you do not need to install them on your host machine.

### Option 2: Local with SQLite (Portable Mode)

Best for fast evaluation without Docker.

1. **Install the CLI**

   ```bash
   pip install tracebrain
   ```

2. **Initialize**

   ```bash
   tracebrain init
   ```

3. **Create the local database**

   ```bash
   tracebrain init-db
   ```

   This creates a local SQLite file and its tables.

4. **Launch**

   ```bash
   tracebrain start
   ```

**Access:** http://localhost:8000 (UI + API)

**Technical note:** the Python backend serves the bundled React build from its internal
static directory, so no separate frontend build step is required.

To keep a local (non-Docker) environment light, install only the core package first
(`pip install tracebrain`) and add a provider extra only when you need it, for example
`pip install "tracebrain[openai]"`. The quotes stop shells such as zsh from treating the
square brackets as a glob pattern.

### Option 3: Development Setup (Contributor Mode)

For contributors who plan to modify the TraceBrain source code.

1. **Clone the repository**

   ```bash
   git clone https://github.com/ToolBrain/TraceBrain.git
   cd TraceBrain
   ```

2. **Backend (editable install)**

   ```bash
   pip install -e .
   tracebrain start
   ```

3. **Frontend (HMR)**, in a second terminal while the backend keeps running

   ```bash
   cd web
   npm install
   npm run dev
   ```

**Access:**

- Frontend: http://localhost:5173 (Hot Module Replacement)
- API: http://localhost:8000

## 📦 Installation

TraceBrain supports optional extras to minimize dependencies. Install only what you need.

```bash
pip install tracebrain

# Optional extras (quoted so that shells such as zsh do not expand the brackets)
pip install "tracebrain[embeddings-local]"  # local embeddings (sentence-transformers)
pip install "tracebrain[openai]"            # OpenAI provider
pip install "tracebrain[anthropic]"         # Anthropic provider
pip install "tracebrain[huggingface]"       # Hugging Face provider SDK
pip install "tracebrain[all-llms]"          # OpenAI + Anthropic + Hugging Face
```

## 📖 Usage

### CLI Commands

| Command | Description |
| --- | --- |
| `tracebrain init` | Create a template `.env` file in the current directory. |
| `tracebrain init-db` | Initialize a local SQLite database. |
| `tracebrain up` | Launch the Docker-based infrastructure. |
| `tracebrain start` | Run the standalone FastAPI server. |

### API Endpoints

The API is organized around two concepts:

- **Trace**: A single execution attempt (an "experiment").
- **Episode**: A logical group of traces (attempts) aimed at solving a single user task.

**Traces**

- `POST /api/v1/traces` - Create a new trace
- `POST /api/v1/traces/init` - Initialize a trace before its spans are available
- `GET /api/v1/traces` - List all traces
- `GET /api/v1/traces/{trace_id}` - Get trace details
- `POST /api/v1/traces/{trace_id}/feedback` - Add feedback to a trace
- `GET /api/v1/export/traces` - Export raw OTLP traces as JSONL (filters: `status`, `min_rating`, `error_type`, `min_confidence`, `max_confidence`, `start_time`, `end_time`)
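
A minimal sketch of pulling a filtered export using only the standard library. It assumes the filters listed above are passed as query parameters and that each line of the response body is one JSON-encoded trace; `build_export_url`, `parse_jsonl`, and `export_traces` are hypothetical helpers, not part of the TraceBrain SDK.

```python
import json
import urllib.parse
import urllib.request
from typing import Any, Dict, Iterator, List

BASE_URL = "http://localhost:8000/api/v1"  # assumption: default local deployment


def build_export_url(**filters: Any) -> str:
    """Build the export URL, dropping filters that were not set (None)."""
    params = {key: value for key, value in filters.items() if value is not None}
    query = urllib.parse.urlencode(params)
    return f"{BASE_URL}/export/traces" + (f"?{query}" if query else "")


def parse_jsonl(text: str) -> Iterator[Dict[str, Any]]:
    """Yield one decoded trace per non-empty JSONL line."""
    for line in text.splitlines():
        if line.strip():
            yield json.loads(line)


def export_traces(**filters: Any) -> List[Dict[str, Any]]:
    """Download and decode the export (requires a running server)."""
    with urllib.request.urlopen(build_export_url(**filters), timeout=30) as resp:
        return list(parse_jsonl(resp.read().decode("utf-8")))
```

For example, `export_traces(status="failed", min_confidence=0.5)` would request only failed traces whose AI confidence is at least 0.5, assuming the server interprets the filters that way. Parsing line by line keeps memory use proportional to one trace at a time if you later switch to a streaming reader.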

**Episodes**

- `GET /api/v1/episodes` - List all episodes along with their full traces
- `GET /api/v1/episodes/summary` - List episodes with aggregated metrics
- `GET /api/v1/episodes/{episode_id}` - Get episode details with trace summaries
- `GET /api/v1/episodes/{episode_id}/traces` - Get all full traces in an episode

**Analytics**

- `GET /api/v1/stats` - Get overall statistics
- `GET /api/v1/analytics/tool_usage` - Get tool usage analytics

**Natural Language Queries**

- `POST /api/v1/natural_language_query` - Query traces with natural language
  - Uses the Librarian provider/model from Settings (stored in the database)
  - Requires an API key for that provider, either saved through Settings or set in the environment (`{PROVIDER}_API_KEY`)
  - Supports `session_id` for chat memory and returns `suggestions`
- `GET /api/v1/librarian_sessions/{session_id}` - Load stored chat history

**AI Evaluation**

- `POST /api/v1/ai_evaluate/{trace_id}` - Evaluate a trace using the configured Judge provider/model
- `POST /api/v1/ops/batch_evaluate` - Run the AI Judge over recent traces that have no `tracebrain.ai_evaluation` yet
- `POST /api/v1/traces` also triggers a background evaluation when the trace has no AI draft

**Operations**

- `DELETE /api/v1/ops/traces/cleanup` - Delete traces that match cleanup filters

**Semantic Search**

- `GET /api/v1/traces/search` - Find similar traces using vector similarity

**Governance Signals**

- `POST /api/v1/traces/{trace_id}/signal` - Update trace status/priority

**Curriculum**

- `POST /api/v1/curriculum/generate` - Generate tasks from failed or low-rated traces using the configured Curator provider/model
- `GET /api/v1/curriculum` - List pending curriculum tasks
- `GET /api/v1/curriculum/export` - Export curriculum tasks as JSONL
- `DELETE /api/v1/curriculum/{task_id}` - Delete a curriculum task
- `DELETE /api/v1/curriculum` - Delete all curriculum tasks
- `PATCH /api/v1/curriculum/{task_id}/complete` - Mark a curriculum task as complete
- `PATCH /api/v1/curriculum/complete` - Mark all curriculum tasks as complete

**History**

- `GET /api/v1/history` - Retrieve the history of viewed traces and episodes
- `POST /api/v1/history` - Add a trace or episode to the history, or update its last-viewed time
- `DELETE /api/v1/history` - Clear all traces and episodes from the viewed history

**Settings**

- `GET /api/v1/settings` - Retrieve the current LLM routing settings
- `POST /api/v1/settings` - Update LLM routing and provider API keys (`librarian_*`, `judge_*`, `curator_*`, `*_api_key`)

### Trace Status and Needs Review

Trace status is stored both in the database column `status` and in
`attributes.tracebrain.trace.status`, which keeps the UI and API consistent.

**Supported statuses:**

- `running` - Trace is in progress or not yet finalized.
- `completed` - Trace has been reviewed and finalized.
- `needs_review` - Trace requires human attention.
- `failed` - Trace is marked as failed.
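
When setting a status through `POST /api/v1/traces/{trace_id}/signal`, it can help to reject unsupported values on the client first. A minimal sketch; the request-body field names `status` and `priority` are assumptions inferred from the endpoint description, and `build_signal_payload` is a hypothetical helper.

```python
from typing import Dict, Optional

# The four statuses documented above.
SUPPORTED_STATUSES = {"running", "completed", "needs_review", "failed"}


def build_signal_payload(status: str, priority: Optional[str] = None) -> Dict[str, str]:
    """Validate a status locally before sending it to the signal endpoint.

    Assumption: the endpoint accepts a JSON body with "status" and an
    optional "priority" field.
    """
    if status not in SUPPORTED_STATUSES:
        raise ValueError(f"unsupported status: {status!r}")
    payload = {"status": status}
    if priority is not None:
        payload["priority"] = priority
    return payload
```

Failing fast on a typo such as `"complete"` avoids a round trip to the server and makes the error point at the calling code.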

**When `needs_review` is set** (any one of these conditions is sufficient):

- **Agent Signal:** The agent calls `request_human_intervention` (Active Help Request).
- **AI Judgment:** `tracebrain.ai_evaluation.confidence` < 0.75, or `tracebrain.ai_evaluation.error_type` is one of `logic_loop`, `hallucination`, `invalid_tool_usage`, `tool_execution_error`, `format_error`, `misinterpretation`, `context_overflow`.
- **System Error:** Any span has `otel.status_code` = `ERROR`.
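
The three triggers above can be read as a single predicate. A minimal sketch, assuming the AI evaluation is available as a plain dict with `confidence` and `error_type` keys and that `otel.status_code` lives in each span's `attributes`; the `help_requested` flag is a hypothetical stand-in for detecting a `request_human_intervention` call, and this is not TraceBrain's own implementation.

```python
from typing import Any, Dict, List

CONFIDENCE_THRESHOLD = 0.75
REVIEW_ERROR_TYPES = {
    "logic_loop", "hallucination", "invalid_tool_usage", "tool_execution_error",
    "format_error", "misinterpretation", "context_overflow",
}


def needs_review(
    spans: List[Dict[str, Any]],
    ai_evaluation: Dict[str, Any],
    help_requested: bool = False,  # hypothetical: agent called request_human_intervention
) -> bool:
    """Return True if any documented needs_review trigger fires."""
    # Agent Signal: the agent explicitly asked for human help.
    if help_requested:
        return True
    # AI Judgment: confidence strictly below the threshold ...
    confidence = ai_evaluation.get("confidence")
    if confidence is not None and confidence < CONFIDENCE_THRESHOLD:
        return True
    # ... or an error type that always warrants review.
    if ai_evaluation.get("error_type") in REVIEW_ERROR_TYPES:
        return True
    # System Error: any span reported an OTel ERROR status.
    return any(
        span.get("attributes", {}).get("otel.status_code") == "ERROR" for span in spans
    )
```

Note that the threshold is strict: a confidence of exactly 0.75 does not trigger review on its own.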

### Configuration (Settings + Provider Keys)

TraceBrain separates configuration into two layers:

- **Runtime routing settings (DB-backed):** the provider and model for the Librarian, Judge, and Curator.
- **Secrets and infrastructure flags (env):** provider API keys, embedding configuration, and debug flags.

Runtime settings can be edited from the UI or via `POST /api/v1/settings`, and are persisted in the database.
On first startup (when no settings row exists in the database yet), their values are bootstrapped from the `DEFAULT_*` environment variables.

#### 1) Provider API keys (environment variables)

Use provider-specific key names only:

```bash
OPENAI_API_KEY=your_openai_api_key_here
GEMINI_API_KEY=your_gemini_api_key_here
# ANTHROPIC_API_KEY=your_anthropic_api_key_here
# HUGGINGFACE_API_KEY=your_huggingface_api_key_here
```

Optional provider base URLs:

```bash
# Optional: custom endpoints/proxies
# OPENAI_BASE_URL=https://your-openai-compatible-endpoint/v1
# ANTHROPIC_BASE_URL=https://your-anthropic-endpoint
# HUGGINGFACE_BASE_URL=https://your-huggingface-endpoint
```

**Hugging Face local inference (vLLM/TGI):**

If you run a local inference server (vLLM or TGI), set `HUGGINGFACE_BASE_URL` to its URL.
TraceBrain then routes Hugging Face traffic to your local endpoint instead of the
Hugging Face cloud API.

```bash
# Example: local vLLM/TGI endpoint. TraceBrain itself listens on port 8000,
# so run the inference server on a different port (8001 is only an example).
HUGGINGFACE_BASE_URL=http://localhost:8001
HUGGINGFACE_API_KEY=your_token_if_required
```

#### 2) Bootstrap defaults for first run (environment variables)

These defaults are used only when no settings are stored in the database yet:

```bash
DEFAULT_LIBRARIAN_PROVIDER=openai
DEFAULT_LIBRARIAN_MODEL=gpt-4o-mini

DEFAULT_JUDGE_PROVIDER=gemini
DEFAULT_JUDGE_MODEL=gemini-2.5-flash

DEFAULT_CURATOR_PROVIDER=gemini
DEFAULT_CURATOR_MODEL=gemini-2.5-flash
```
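
The bootstrap rule (DB row wins; `DEFAULT_*` variables only seed a missing row) can be sketched as follows. This is an illustration under the assumption that the stored settings behave like a dict keyed with the same names as the Settings API payload; `bootstrap_settings` is a hypothetical helper, not TraceBrain's code.

```python
import os
from typing import Dict, Mapping, Optional

ROLES = ("librarian", "judge", "curator")


def bootstrap_settings(
    stored: Optional[Dict[str, str]],
    env: Mapping[str, str] = os.environ,
) -> Dict[str, str]:
    """Return stored settings if present, else seed them from DEFAULT_* variables."""
    if stored is not None:
        return stored  # a settings row exists, so env defaults are ignored
    settings: Dict[str, str] = {}
    for role in ROLES:
        for field in ("provider", "model"):
            value = env.get(f"DEFAULT_{role.upper()}_{field.upper()}")
            if value:
                settings[f"{role}_{field}"] = value  # e.g. "judge_model"
    return settings
```

One consequence of this design: after the first run, editing `DEFAULT_*` in `.env` has no effect; change the routing through the UI or the Settings API instead.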

#### 3) Global flags and embedding configuration

```bash
LLM_DEBUG=false

EMBEDDING_PROVIDER=local
EMBEDDING_MODEL=all-MiniLM-L6-v2

# For cloud embeddings
# EMBEDDING_API_KEY=your_embedding_key
# EMBEDDING_BASE_URL=https://your-embedding-endpoint/v1
```

#### Settings API payload

`GET /api/v1/settings` and `POST /api/v1/settings` use this shape:

```json
{
  "librarian_provider": "openai",
  "librarian_model": "gpt-4o-mini",
  "judge_provider": "gemini",
  "judge_model": "gemini-2.5-flash",
  "curator_provider": "gemini",
  "curator_model": "gemini-2.5-flash",
  "openai_api_key": "sk-...abcd",
  "gemini_api_key": "AIza...wxyz",
  "anthropic_api_key": null,
  "huggingface_api_key": null
}
```

Notes:

- `GET /api/v1/settings` returns masked API keys for safety.
- `POST /api/v1/settings` accepts plain-text API keys when you want to add or rotate keys.
- If no key is stored in the database for a provider, TraceBrain falls back to the corresponding environment variable (`OPENAI_API_KEY`, `GEMINI_API_KEY`, `ANTHROPIC_API_KEY`, `HUGGINGFACE_API_KEY`).
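
The fallback rule in the last note can be expressed as a small lookup. A minimal sketch, assuming DB settings use the `*_api_key` field names from the payload above and that both `null` and an empty string count as "no key stored"; `resolve_api_key` is a hypothetical helper.

```python
import os
from typing import Mapping, Optional

PROVIDERS = ("openai", "gemini", "anthropic", "huggingface")


def resolve_api_key(
    provider: str,
    db_settings: Mapping[str, Optional[str]],
    env: Mapping[str, str] = os.environ,
) -> Optional[str]:
    """Prefer the key stored in DB settings; fall back to {PROVIDER}_API_KEY."""
    if provider not in PROVIDERS:
        raise ValueError(f"unknown provider: {provider!r}")
    db_key = db_settings.get(f"{provider}_api_key")
    if db_key:  # None and "" are both treated as empty
        return db_key
    return env.get(f"{provider.upper()}_API_KEY")
```

Returning `None` when neither source has a key lets the caller report a clear "missing API key" error instead of sending an unauthenticated request.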

**Example API Usage:**

```python
import requests

BASE_URL = "http://localhost:8000/api/v1"

# Create a trace containing a single user-request span
response = requests.post(f"{BASE_URL}/traces", json={
    "trace_id": "trace-001",
    "spans": [
        {
            "span_id": "span-001",
            "trace_id": "trace-001",
            "name": "User Request",
            "start_time": "2024-01-01T10:00:00Z",
            "end_time": "2024-01-01T10:00:05Z",
            "attributes": {
                "tracebrain.span.type": "user_request",
                "tracebrain.content.new_content": "What's the stock price of NVIDIA?"
            }
        }
    ]
}, timeout=10)
response.raise_for_status()  # fail loudly if the server rejected the trace

# Add human feedback to the trace created above
response = requests.post(f"{BASE_URL}/traces/trace-001/feedback", json={
    "rating": 5,
    "tags": ["accurate", "fast"],
    "comment": "Great response!",
    "metadata": {
        "outcome": "success",
        "efficiency_score": 0.95
    }
}, timeout=10)
response.raise_for_status()
```

### React Frontend

The admin UI provides:
- **Trace Browser**: View all traces with filters
- **Trace Details**: Expandable span tree visualization and comparison of related traces
- **Feedback Form**: Rate and tag traces
- **Analytics Dashboard**: Summary stats and tool usage charts
- **AI Librarian**: Session-aware chat with suggestions and history restore
- **AI Evaluation**: An auto-generated AI draft evaluation that experts verify or edit before finalizing
- **Governance Signal**: Mark traces with status and priority
- **Curriculum**: Generate and review training tasks

Frontend dev server (local development only):

```bash
cd web
npm install
npm run dev
```

### Embeddings and Semantic Search

Semantic search is used in these places:
- **API:** `GET /api/v1/traces/search` for vector similarity over traces
- **Experience Retrieval:** `search_similar_traces` and `search_past_experiences` agent tools
- **AI Librarian:** uses semantic search to surface relevant past traces when enabled

Configure embeddings for vector search and experience retrieval:

```bash
# local (default)
EMBEDDING_PROVIDER=local
EMBEDDING_MODEL=all-MiniLM-L6-v2

# cloud (OpenAI/Gemini)
EMBEDDING_PROVIDER=openai
EMBEDDING_API_KEY=your-key
EMBEDDING_MODEL=text-embedding-3-small

# optional for OpenAI-compatible endpoints
EMBEDDING_BASE_URL=https://your-endpoint/v1
```

**When embeddings run:** Embeddings are created at trace ingest time, not during server startup.

**Do I need local embeddings?** No. You can skip `embeddings-local` entirely and still run the platform. If no embedding provider is configured, traces are still ingested and all non-semantic features work normally; only vector search (and the features that rely on it) is unavailable.
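
To make the split between ingest-time embedding and query-time search concrete, here is a toy in-memory index. It is an illustrative sketch only: `TinyVectorIndex` and `keyword_embed` are hypothetical, the keyword-count embedder stands in for a real embedding model, and cosine similarity is assumed as the ranking metric (this README does not say which metric TraceBrain uses). A real deployment stores vectors in the database via the configured provider.

```python
import math
from typing import Callable, List, Sequence, Tuple

Vector = Sequence[float]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class TinyVectorIndex:
    """Hypothetical index: embeds once at ingest, then ranks stored vectors per query."""

    def __init__(self, embed: Callable[[str], Vector]):
        self.embed = embed
        self.items: List[Tuple[str, Vector]] = []

    def ingest(self, trace_id: str, text: str) -> None:
        # Embedding happens here, when the trace is stored, not at startup.
        self.items.append((trace_id, self.embed(text)))

    def search(self, query: str, limit: int = 3) -> List[Tuple[str, float]]:
        # Only the query is embedded at search time; stored vectors are reused.
        q = self.embed(query)
        scored = [(tid, cosine(q, vec)) for tid, vec in self.items]
        return sorted(scored, key=lambda pair: pair[1], reverse=True)[:limit]


# Toy embedder: counts of a few keywords (a stand-in for a real model).
VOCAB = ["stock", "price", "weather", "report"]


def keyword_embed(text: str) -> List[float]:
    words = text.lower().split()
    return [float(words.count(term)) for term in VOCAB]


index = TinyVectorIndex(keyword_embed)
index.ingest("trace-001", "What's the stock price of NVIDIA?")
index.ingest("trace-002", "Summarize this weather report")
print(index.search("stock price today", limit=1))
```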

## 🔌 Integration with Your Agent

### Using the TraceStore Client (read/query)

This section focuses on read/query operations. For logging traces, see the
`trace_scope` section below.

```python
import json

from tracebrain.sdk.client import TraceClient, TraceScope

client = TraceClient(base_url="http://localhost:8000")

# Query traces
traces = client.list_traces()

# Export traces as JSONL
jsonl_payload = client.export_traces(min_rating=4, limit=100)

# Parse JSONL into Python objects
trace_items = [json.loads(line) for line in jsonl_payload.splitlines() if line.strip()]

# Reconstruct messages or turns from OTLP
trace_data = client.get_trace("my-trace-001")

# to_messages: rebuilds chat message list (role/content) from spans
messages = TraceScope.to_messages(trace_data)
# Example: messages[:2] -> [{"role": "user", "content": "..."}, {"role": "assistant", "content": "..."}]

# to_turns: groups messages into conversation turns for UI/analysis
turns = TraceScope.to_turns(trace_data)
# Example: turns[0] -> {"user": "...", "assistant": "..."}

# to_tracebrain_turns: returns TraceBrain-native turn objects with metadata
tracebrain_turns = TraceScope.to_tracebrain_turns(trace_data)
# Example: tracebrain_turns[0] -> {"turn_id": "...", "messages": [...], "span_ids": [...]}
```
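
The exact logic of `TraceScope.to_turns` is not documented here. As a rough mental model, the `turns[0]` shape shown above can be approximated by pairing each user message with the assistant messages that follow it. `group_into_turns` is a hypothetical helper, and joining several assistant messages in one turn with newlines is this sketch's own choice, not necessarily the SDK's behavior.

```python
from typing import Dict, List


def group_into_turns(messages: List[Dict[str, str]]) -> List[Dict[str, str]]:
    """Pair each user message with the assistant reply (or replies) that follow it."""
    turns: List[Dict[str, str]] = []
    for msg in messages:
        role, content = msg.get("role"), msg.get("content", "")
        if role == "user":
            turns.append({"user": content, "assistant": ""})
        elif role == "assistant" and turns:
            current = turns[-1]
            # Join consecutive assistant messages (e.g. around tool calls) into one reply.
            current["assistant"] = "\n".join(filter(None, [current["assistant"], content]))
        # Other roles (system, tool) are ignored in this simplified sketch.
    return turns


messages = [
    {"role": "system", "content": "You are a helpful assistant"},
    {"role": "user", "content": "What's the stock price of NVIDIA?"},
    {"role": "assistant", "content": "Calling the stock tool."},
    {"role": "assistant", "content": "Here is the latest quote."},
]
print(group_into_turns(messages))
```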

### Trace Init and trace_scope (recommended for all runs)

Use `trace_scope` for every agent run you plan to log. It pre-registers a trace
via `/api/v1/traces/init`, sets the trace ID in a context-local store (safe for
async and multi-threaded usage), and uploads the trace when the scope exits. This
is required if your agent might call `request_human_intervention` (Active Help
Request), so that the help signal is attached to the correct trace.

**Recommended: use `trace_scope` (auto init + auto log)**

```python
from tracebrain import TraceClient
from my_converters import convert_smolagent_to_otlp  # your own converter module

client = TraceClient(base_url="http://localhost:8000")

with client.trace_scope(system_prompt="You are a helpful assistant") as trace:
    agent = MyAgent(system_prompt="You are a helpful assistant")  # MyAgent: your agent class
    agent.run("Summarize this report")

    # Convert the run to OTLP spans inside the scope; they are uploaded when it exits
    otlp_trace = convert_smolagent_to_otlp(agent)
    trace["spans"] = otlp_trace.get("spans", [])
```

**Advanced: manual trace ID + manual log**

```python
from tracebrain import TraceClient
from tracebrain.sdk.trace_context import set_trace_id, get_trace_id
from my_converters import convert_smolagent_to_otlp  # your own converter module

client = TraceClient(base_url="http://localhost:8000")
set_trace_id("trace_123")

agent = MyAgent(system_prompt="You are a helpful assistant")  # MyAgent: your agent class
agent.run("Summarize this report")

# Convert the run, stamp it with the active trace ID, and upload it explicitly
otlp_trace = convert_smolagent_to_otlp(agent)
otlp_trace["trace_id"] = get_trace_id() or "trace_123"
client.log_trace(otlp_trace)
```
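
The "context-local store" behind `set_trace_id`/`get_trace_id` can be pictured with Python's `contextvars`. The sketch below is an assumption-labeled illustration, not TraceBrain's `trace_context` module: `set_current_trace_id`, `get_current_trace_id`, `trace_id_scope`, and `handle_request` are hypothetical names. It demonstrates the async case, where each concurrent asyncio task sees only its own trace ID.

```python
import asyncio
import contextvars
from contextlib import contextmanager
from typing import Iterator, Optional

# Hypothetical context-local slot for the active trace ID.
_current_trace_id = contextvars.ContextVar("current_trace_id", default=None)


def set_current_trace_id(trace_id: str) -> contextvars.Token:
    return _current_trace_id.set(trace_id)


def get_current_trace_id() -> Optional[str]:
    return _current_trace_id.get()


@contextmanager
def trace_id_scope(trace_id: str) -> Iterator[str]:
    """Set the trace ID for the duration of the block, then restore the previous value."""
    token = set_current_trace_id(trace_id)
    try:
        yield trace_id
    finally:
        _current_trace_id.reset(token)


async def handle_request(trace_id: str) -> Optional[str]:
    with trace_id_scope(trace_id):
        await asyncio.sleep(0)  # let the other task run mid-request
        return get_current_trace_id()  # still this task's own ID


async def main() -> list:
    # Each task runs in a copy of the current context, so IDs never leak between them.
    return await asyncio.gather(handle_request("trace_a"), handle_request("trace_b"))


print(asyncio.run(main()))     # ['trace_a', 'trace_b']
print(get_current_trace_id())  # None
```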

### Agent Tools (Experience Retrieval + Active Help Request)

When to use:

- Use `search_past_experiences` to fetch high-quality, previously successful traces for similar tasks.
- Use `search_similar_traces` when you need semantic similarity over trace content.
- Use `request_human_intervention` when the agent is blocked, uncertain, or needs clarification.

```python
from tracebrain.sdk import (
    search_past_experiences,
    search_similar_traces,
    request_human_intervention,
)

# Retrieve prior successful experiences
experiences = search_past_experiences("resolve a tool error", min_rating=4, limit=3)

# Semantic search over traces
similar = search_similar_traces("multi-step planning", min_rating=4, limit=3)

# Escalate to a human when the agent is blocked
help_request = request_human_intervention("User request is ambiguous, need clarification")
```
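
One way to combine these tools is a "retrieve first, escalate if nothing useful comes back" step. This is a hypothetical pattern, not part of the SDK: `retrieve_or_escalate` is an invented helper, the tool functions are passed in as parameters so the sketch runs without a server, and it assumes the search tools return a list (possibly empty).

```python
from typing import Any, Callable, Dict, List


def retrieve_or_escalate(
    task: str,
    search_fn: Callable[..., List[Any]],
    escalate_fn: Callable[[str], Any],
    min_rating: int = 4,
    limit: int = 3,
) -> Dict[str, Any]:
    """Use prior experiences when available; otherwise ask a human for help."""
    hits = search_fn(task, min_rating=min_rating, limit=limit)
    if hits:
        return {"action": "use_experience", "experiences": hits}
    reason = f"No prior successful traces found for task: {task!r}"
    return {"action": "escalated", "help_request": escalate_fn(reason)}


# With the SDK this might be wired as (assuming list-like return values):
#   retrieve_or_escalate(task, search_past_experiences, request_human_intervention)
# Stubs keep this example self-contained:
result = retrieve_or_escalate(
    "book a flight",
    search_fn=lambda task, **kwargs: [],
    escalate_fn=lambda reason: {"status": "pending", "reason": reason},
)
print(result["action"])  # escalated
```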

### Building a Custom Converter

TraceBrain uses the **TraceBrain OTLP (OpenTelemetry Protocol) format**, a delta-based trace schema that links spans through `parent_id` chains so conversations can be reconstructed.

See [docs/Converter.md](docs/Converter.md) for:
- OTLP schema explanation (`parent_id`, `new_content`, delta-based design)
- Step-by-step conversion recipe
- Python template code with examples

**Quick Example:**

```python
import uuid

from tracebrain.core.schema import TraceBrainAttributes, SpanType


def convert_my_agent_to_otlp(agent_data):
    spans = []
    parent_id = None
    for step in agent_data.steps:
        spans.append({
            "span_id": str(uuid.uuid4()),
            "parent_id": parent_id,  # Chain spans together
            "name": step.action,
            "attributes": {
                TraceBrainAttributes.SPAN_TYPE: SpanType.LLM_INFERENCE,
                TraceBrainAttributes.LLM_NEW_CONTENT: step.output,  # Delta content only
                TraceBrainAttributes.TOOL_NAME: step.tool_name,
            },
        })
        parent_id = spans[-1]["span_id"]
    return {"trace_id": agent_data.id, "spans": spans}
```
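
Because each span stores only its delta and points to its predecessor through `parent_id`, the full context at any span can be rebuilt by walking the chain back to the root and reading the deltas in order. The sketch below illustrates that idea on plain dicts. `rebuild_context` is hypothetical, and the top-level `new_content` key is a simplification standing in for whichever attribute carries the delta; see the Trace Reconstruction Guide (`docs/Reconstructor.md`) for TraceBrain's own approach.

```python
from typing import Dict, List, Optional


def rebuild_context(spans: List[Dict], leaf_span_id: str) -> List[str]:
    """Return the deltas from the root span down to `leaf_span_id`, in order."""
    by_id = {span["span_id"]: span for span in spans}
    chain: List[str] = []
    current: Optional[str] = leaf_span_id
    seen = set()
    while current is not None:
        if current in seen:  # guard against malformed, cyclic parent links
            raise ValueError(f"cycle detected at span {current}")
        seen.add(current)
        span = by_id[current]
        chain.append(span["new_content"])
        current = span.get("parent_id")
    return list(reversed(chain))  # root first


spans = [
    {"span_id": "s1", "parent_id": None, "new_content": "user: Summarize this report"},
    {"span_id": "s2", "parent_id": "s1", "new_content": "assistant: calling read_file"},
    {"span_id": "s3", "parent_id": "s2", "new_content": "tool: <file contents>"},
]
print(rebuild_context(spans, "s3"))
```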

## 📁 Project Structure

```
TraceBrain/
├── src/
│   ├── tracebrain/          # Core package logic
│   │   ├── api/v1/          # FastAPI REST endpoints
│   │   ├── core/            # TraceStore, schema, agent logic
│   │   ├── db/              # Database session management
│   │   ├── resources/       # Bundled Docker + sample data
│   │   ├── static/          # Bundled React build artifacts
│   │   ├── sdk/             # Client SDK
│   │   ├── cli.py           # CLI commands
│   │   └── main.py          # FastAPI app entry
├── docs/                    # Documentation
├── web/                     # React source code (contributors)
├── pyproject.toml           # Project metadata
└── README.md
```

## 🛠️ Development

### Running Tests

No automated test suite is included yet.

### Seeding Sample Data

```bash
tracebrain seed
```

### Database Migrations

No migration tooling is included yet. For schema changes:

1. Update models in `src/tracebrain/db/base.py`
2. Recreate the database:
   - **SQLite (local):** delete `tracebrain_traces.db`, then run `tracebrain init-db`
   - **PostgreSQL (Docker):** `docker compose -f docker/docker-compose.yml down -v` then `tracebrain up`

### Working with JSONB Queries (PostgreSQL)

When querying JSONB fields:

```python
from sqlalchemy import func, cast
from sqlalchemy.dialects.postgresql import JSONB

from tracebrain.db.base import Span, Trace  # assumed: ORM models defined in db/base.py

# Extract text from JSONB
span_type = func.jsonb_extract_path_text(Span.attributes, "tracebrain.span.type")

# Cast for complex queries
rating = func.jsonb_extract_path_text(cast(Trace.feedback, JSONB), "rating")
```

## 📚 Documentation

- **[Building Your Own Trace Converter](docs/Converter.md)** - Complete guide for integrating custom agent frameworks
- **[LLM Provider Guide](docs/LLMProviders.md)** - Use TraceBrain LLM providers and attach usage metadata
- **[Trace Reconstruction Guide](docs/Reconstructor.md)** - Rebuild full context from delta traces for training
- **[Sample OTLP Traces](src/tracebrain/resources/samples)** - Example trace files
- **[API Documentation](http://localhost:8000/docs)** - Interactive OpenAPI docs (available while the server is running)
- **[Docker Setup Guide](src/tracebrain/resources/docker/README.md)** - Docker-specific instructions

## 🤝 Contributing

Contributions are welcome! Here's how to get started:

1. Fork the repository
2. Create a feature branch: `git checkout -b feature/amazing-feature`
3. Make your changes and test thoroughly
4. Commit with clear messages: `git commit -m 'Add amazing feature'`
5. Push to your fork: `git push origin feature/amazing-feature`
6. Open a Pull Request

**Development Guidelines:**
- Follow the PEP 8 style guide
- Add tests for new features
- Update documentation as needed
- Ensure Docker builds pass

## 🐛 Troubleshooting

### Docker changes not reflected

If code changes aren't picked up after `tracebrain up --build`:

```bash
tracebrain down
docker compose -f docker/docker-compose.yml build --no-cache
tracebrain up
```

### PostgreSQL connection errors

Ensure PostgreSQL is running and check the connection string in `src/tracebrain/config.py`:

```python
DATABASE_URL = "postgresql://traceuser:tracepass@localhost:5432/tracedb"
```
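
A quick way to check both parts of that advice (that the URL points where you expect, and that something is listening there) is a small stdlib-only script. It is a diagnostic sketch, not a TraceBrain command: `describe_db_url` and `port_is_open` are hypothetical helpers, and a successful TCP connection only shows that the port is open, not that the credentials or database name are valid.

```python
import socket
from urllib.parse import urlsplit


def describe_db_url(url: str) -> dict:
    """Split a database URL into the parts worth double-checking (password omitted)."""
    parts = urlsplit(url)
    return {
        "scheme": parts.scheme,
        "user": parts.username,
        "host": parts.hostname,
        "port": parts.port or 5432,  # assume PostgreSQL's default port if omitted
        "database": parts.path.lstrip("/"),
    }


def port_is_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, unreachable, or timed out
        return False


info = describe_db_url("postgresql://traceuser:tracepass@localhost:5432/tracedb")
print(info)
print("PostgreSQL reachable:", port_is_open(info["host"], info["port"]))
```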

### Tool usage analytics showing incorrect data

After updating `store.py`, rebuild the Docker containers so the JSONB query fixes take effect.

## 📄 License

This project is licensed under the MIT License - see the LICENSE file for details.

## 🙏 Acknowledgments

- Built with [FastAPI](https://fastapi.tiangolo.com/)
- Database powered by [SQLAlchemy](https://www.sqlalchemy.org/)
- UI with [React (Vite)](https://vitejs.dev/) + [MUI](https://mui.com/)
- Inspired by [OpenTelemetry](https://opentelemetry.io/) standards

---

**Made with ❤️ for the AI agent community**

For questions or support, please open an issue on GitHub.