livefold 0.1.0__tar.gz

This diff represents the content of publicly available package versions that have been released to one of the supported registries. The information contained in this diff is provided for informational purposes only and reflects changes between package versions as they appear in their respective public registries.

- livefold-0.1.0/.gitignore +2 -0

- livefold-0.1.0/LICENSE +21 -0

- livefold-0.1.0/PKG-INFO +162 -0

- livefold-0.1.0/README.md +152 -0

- livefold-0.1.0/assets/query_animation.gif +0 -0

- livefold-0.1.0/assets/render_query_animation.py +194 -0

- livefold-0.1.0/assets/render_resize_animation.py +243 -0

- livefold-0.1.0/assets/resize_animation.gif +0 -0

- livefold-0.1.0/livefold/__init__.py +15 -0

- livefold-0.1.0/livefold/livefold.py +402 -0

- livefold-0.1.0/livefold/py.typed +0 -0

- livefold-0.1.0/pyproject.toml +51 -0

- livefold-0.1.0/uv.lock +2157 -0

livefold-0.1.0/LICENSE

ADDED

@@ -0,0 +1,21 @@

MIT License

Copyright (c) 2025 Danny

Permission is hereby granted, free of charge, to any person obtaining a copy
of this software and associated documentation files (the "Software"), to deal
in the Software without restriction, including without limitation the rights
to use, copy, modify, merge, publish, distribute, sublicense, and/or sell
copies of the Software, and to permit persons to whom the Software is
furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all
copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR
IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,
FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE
AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER
LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,
OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE
SOFTWARE.

livefold-0.1.0/PKG-INFO

ADDED

@@ -0,0 +1,162 @@

Metadata-Version: 2.4

Name: livefold

Version: 0.1.0

Summary: A primitive for online sequential aggregation in Python

Project-URL: Source code, https://github.com/danielenricocahall/livefold

Author: Daniel Cahall

License-File: LICENSE

Requires-Python: >=3.10

Description-Content-Type: text/markdown

# livefold

*live + fold — incremental folds over a mutable sequence.*

[](https://github.com/danielenricocahall/livefold/actions/workflows/ci.yaml)
[](https://github.com/danielenricocahall/livefold/blob/main/pyproject.toml)
[](https://github.com/danielenricocahall/livefold/blob/main/LICENSE)

> A primitive for online sequential aggregation in Python.
> Maintain a mutable numeric sequence; query exact aggregates over any range
> in **O(√n)**; plug in any associative reducer (any monoid).

## When to reach for it

| Need | Use |
|---|---|
| Immutable series, range aggregates | Prefix sums |
| Frequent point updates, log-time queries | Segment tree / Fenwick tree |
| Fixed-width rolling windows | `pandas.rolling()` / `polars.rolling()` |
| **Mutable series, arbitrary range queries, multi-fold** | **livefold** |

Common shapes: request latencies, sensor readings, trade prices, telemetry events.

## Quickstart

```bash
pip install livefold
```

```python
from livefold import LiveFold

lf = LiveFold([1, 2, 3, 4, 5, 6], folds={"sum": sum, "max": max, "min": min})

lf.append(7)
lf.query(0, 5)
# → {"sum": 21, "max": 6, "min": 1}

# Mutate freely; aggregates stay current
lf[2] = -1
lf.query(2, 5)
# → {"sum": 14, "max": 6, "min": -1}
```
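
Because `query` bounds are inclusive, the Quickstart results can be cross-checked against plain slicing. The `naive_query` helper below is hypothetical (it is not part of livefold): it simply applies every fold to `data[left:right + 1]`, which costs O(n) per call and is exactly the work the block decomposition avoids.

```python
from typing import Any, Callable, Sequence


def naive_query(
    data: Sequence[Any], folds: dict[str, Callable], left: int, right: int
) -> dict[str, Any]:
    """Hypothetical O(n) reference: apply every fold to the inclusive slice."""
    window = data[left : right + 1]  # inclusive right bound, as in LiveFold.query
    return {name: fn(window) for name, fn in folds.items()}


folds = {"sum": sum, "max": max, "min": min}
data = [1, 2, 3, 4, 5, 6]
data.append(7)
print(naive_query(data, folds, 0, 5))  # {'sum': 21, 'max': 6, 'min': 1}

data[2] = -1
print(naive_query(data, folds, 2, 5))  # {'sum': 14, 'max': 6, 'min': -1}
```

A brute-force reference like this is also a convenient oracle when testing custom folds against `LiveFold` on random inputs.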

|

|

54

|

+

|

|

55

|

+

## Performance

|

|

56

|

+

|

|

57

|

+

|

|

58

|

+

|

|

59

|

+

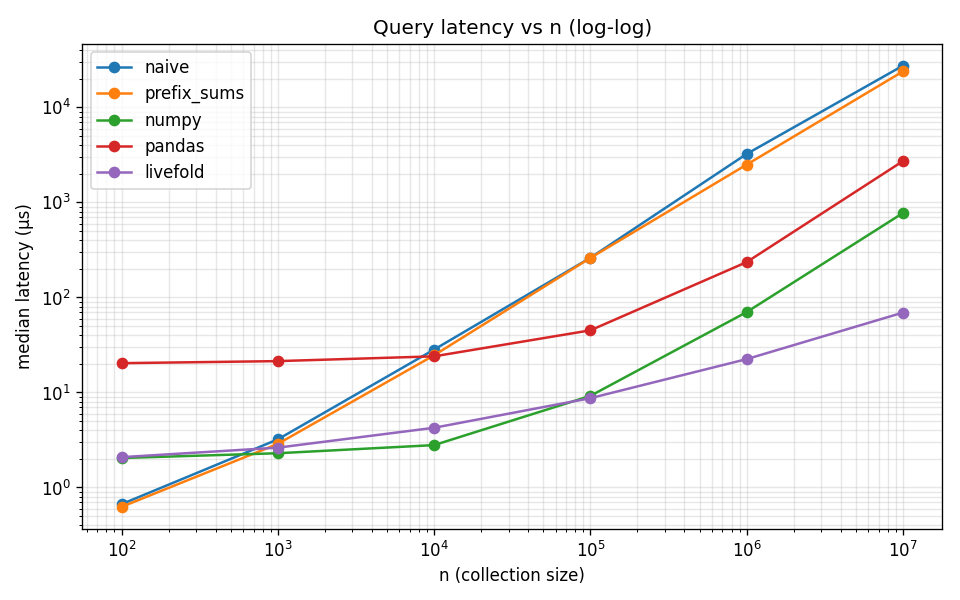

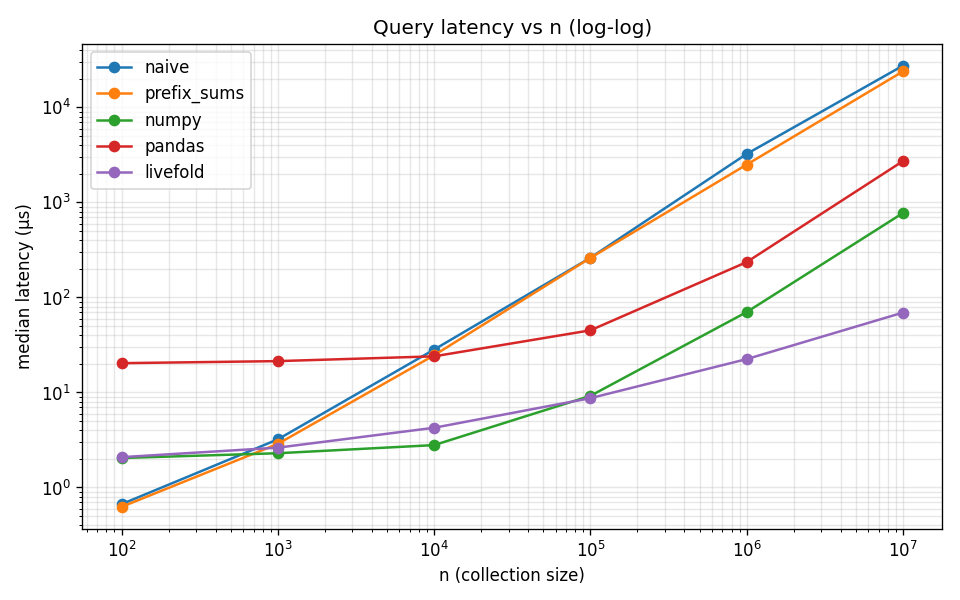

At n = 10⁷, `livefold`'s median range query is **69 µs vs naive Python's 29 ms** (~400× faster), and median append cost is **~2 µs across all n** while every other backend with a competitive query path (numpy, pandas) degrades linearly on *every* append. livefold's amortized append is O(√n) — boundary-crossing rebuilds are rare (~one per √n appends) but real — so the median characterizes typical streaming latency, not the rare rebuild spike.

Full methodology, append benchmarks, comparison against four backends, and the reproduction script: [`benchmarks/`](https://github.com/danielenricocahall/livefold/tree/main/benchmarks).

## Examples

Two runnable Streamlit demos in [`examples/`](https://github.com/danielenricocahall/livefold/tree/main/examples):

- **[`system_metrics/`](https://github.com/danielenricocahall/livefold/tree/main/examples/system_metrics)** — live `psutil`-driven CPU/memory dashboard with arbitrary-range aggregate queries. Runs entirely offline.
- **[`crypto_ticks/`](https://github.com/danielenricocahall/livefold/tree/main/examples/crypto_ticks)** — synthetic BTC/USD tick stream with high/low/avg-price queries. Includes a drop-in recipe for real Binance ticks.

## API

```python
LiveFold(data: Iterable, folds: dict[str, Callable])
```

| Member | Returns | Notes |
|---|---|---|
| `lf.append(x)` | `None` | Amortized O(√n); worst-case O(n) on perfect-square crossings |
| `lf.query(left, right)` | `dict[str, Any]` | O(√n); inclusive bounds |
| `lf.blocks` | `list[list]` | Underlying √n-sized blocks |
| `lf.folded_values` | `dict[str, list]` | Per-fold, per-block aggregates |
| `lf.insert / pop / extend / remove / sort / ...` | — | Standard `list` methods; blocks and folds updated in place |

`LiveFold` subclasses `list`, so it can be passed to any code that expects a plain list; `query`, `blocks`, and `folded_values` are extras layered on top.
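
A minimal sketch of the "list subclass whose blocks and folds stay in sync" pattern may help. `BlockSumList` is hypothetical and is not livefold's code: it keeps a single sum fold per block, re-folds only the owning block on integer assignment, extends the last block on `append` (re-blocking when the length reaches a perfect square), and, for simplicity, falls back to a full O(n) re-block after every other mutator.

```python
from math import isqrt


class BlockSumList(list):
    """Hypothetical sketch, not livefold's implementation: a list subclass that
    keeps one per-block fold (a sum) in sync with the list contents."""

    def __init__(self, data=()):
        super().__init__(data)
        self._rebuild()

    def _rebuild(self) -> None:
        # Full O(n) re-block with block_size = isqrt(n), never below 1.
        self.block_size = max(1, isqrt(len(self)))
        self.block_sums = [
            sum(self[i : i + self.block_size])
            for i in range(0, len(self), self.block_size)
        ]

    def __setitem__(self, index, value):
        if isinstance(index, slice):
            super().__setitem__(index, value)
            self._rebuild()  # a slice can change the length, so re-block
            return
        index = range(len(self))[index]  # normalise negatives; raises IndexError
        super().__setitem__(index, value)
        # Re-fold only the owning block: O(√n), and valid for any fold,
        # not just invertible ones like sum.
        b = index // self.block_size
        start = b * self.block_size
        self.block_sums[b] = sum(self[start : start + self.block_size])

    def append(self, value) -> None:
        super().append(value)
        if max(1, isqrt(len(self))) != self.block_size:
            self._rebuild()  # length hit a perfect square: re-block
        elif (len(self) - 1) % self.block_size == 0:
            self.block_sums.append(value)  # value opens a new last block
        else:
            self.block_sums[-1] += value  # value joins the current last block


def _rebuilding(name: str):
    """Wrap a list mutator so it does a full re-block afterwards."""

    def method(self, *args, **kwargs):
        result = getattr(list, name)(self, *args, **kwargs)
        self._rebuild()
        return result

    method.__name__ = name
    return method


# Every other mutator takes the simple O(n) path in this sketch.
for _name in ("extend", "insert", "pop", "remove", "clear", "sort", "reverse",
              "__delitem__", "__iadd__", "__imul__"):
    setattr(BlockSumList, _name, _rebuilding(_name))
```

Usage mirrors the Quickstart: after `xs = BlockSumList([1, 2, 3, 4, 5, 6]); xs.append(7); xs[2] = -1`, `xs.block_sums` is `[3, 3, 11, 7]` and `isinstance(xs, list)` is `True`.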

## Time-indexed queries: `TimeIndexedLiveFold`

For monotonic streams where you want to query by *time* instead of *index*, `TimeIndexedLiveFold` carries a parallel timestamp per element and exposes `query_time_range` — still O(√n).

```python
from livefold import TimeIndexedLiveFold

lf = TimeIndexedLiveFold(
    [1, 2, 3],
    folds={"sum": sum, "max": max},
    timestamps=[1.0, 2.0, 3.0],
)
lf.append(4, timestamp=4.0)
lf.append(5, timestamp=5.0)

lf.query_time_range(2.0, 4.0)
# → {"sum": 9, "max": 4}
```

If you omit `timestamps` / `timestamp`, `time.time()` is used by default. The class is generic over the timestamp type — anything orderable works (`float`, `int`, `datetime`, ...). Pass explicit timestamps for any non-`float` type:

```python
from datetime import datetime
from livefold import TimeIndexedLiveFold

lf = TimeIndexedLiveFold[datetime](
    [1, 2, 3],
    folds={"sum": sum},
    timestamps=[datetime(2025, 1, 1), datetime(2025, 6, 1), datetime(2026, 1, 1)],
)
lf.query_time_range(datetime(2025, 5, 1), datetime(2026, 6, 1))
# → {"sum": 5}
```

`TimeIndexedLiveFold` is **append-only by design**. Operations that would break time ordering raise `MonotonicityError`:

- `insert`, `sort`, `reverse` — would break the ordering invariant
- slice `__setitem__`, slice `__delitem__` — would desync data and timestamps
- `append` / `extend` with a timestamp earlier than the last stored one
- `+` / `+=` with anything other than another `TimeIndexedLiveFold` (use `extend(values, timestamps=...)` instead)

Integer indexing (`lf[i] = x`, `del lf[i]`), `pop`, `remove`, and `clear` work normally and keep the parallel timestamp list in sync. `+` and `+=` between two `TimeIndexedLiveFold` instances concatenate timestamps and re-check monotonicity.
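
The append-only rule can be sketched with two parallel lists. `TimestampedSeries` and this `MonotonicityError` class are hypothetical stand-ins, not livefold's code; the sketch assumes, as the list above describes, that equal timestamps are allowed (non-decreasing order) and that a rejected batch should leave the series untouched.

```python
class MonotonicityError(ValueError):
    """Hypothetical stand-in for livefold's exception of the same name."""


class TimestampedSeries:
    """Hypothetical sketch (not livefold's code) of the append-only rule:
    values and timestamps live in parallel lists, and timestamps may never
    decrease. Any orderable timestamp type works (float, int, datetime, ...)."""

    def __init__(self) -> None:
        self.values: list = []
        self.timestamps: list = []

    def append(self, value, timestamp) -> None:
        # Equal timestamps are allowed: the order is non-decreasing, not strict.
        if self.timestamps and timestamp < self.timestamps[-1]:
            raise MonotonicityError(
                f"timestamp {timestamp!r} is earlier than {self.timestamps[-1]!r}"
            )
        self.values.append(value)
        self.timestamps.append(timestamp)

    def extend(self, values, timestamps) -> None:
        values, timestamps = list(values), list(timestamps)
        if len(values) != len(timestamps):
            raise ValueError("values and timestamps must have the same length")
        # Validate the whole batch (plus the last stored stamp) before mutating,
        # so a rejected batch leaves both lists untouched.
        chain = self.timestamps[-1:] + timestamps
        if any(later < earlier for earlier, later in zip(chain, chain[1:])):
            raise MonotonicityError("timestamps must be non-decreasing")
        self.values.extend(values)
        self.timestamps.extend(timestamps)

    def pop(self, index: int = -1):
        # Removing an element can never break the ordering; keep lists in sync.
        self.timestamps.pop(index)
        return self.values.pop(index)

    def insert(self, index: int, value) -> None:
        raise MonotonicityError("insert would break the time-ordering invariant")
```

Validating before mutating is the design choice that matters here: the two lists can never end up with different lengths, even when an `extend` is rejected halfway through its input.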

## How it works

`LiveFold` splits its underlying list into roughly √n blocks of ⌊√n⌋ elements each, precomputes the configured folds for each block, and updates them incrementally on mutation. A `query(left, right)` scans at most two partial blocks element by element (fewer than 2√n elements) and combines the precomputed folds of the whole blocks in between (at most about √n values), so each fold does O(√n) work per query. In other words, this is square-root decomposition, made mutable, with a dict-shaped output.
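
The query path described above can be sketched in a few lines. `build` and `query` are hypothetical names, not livefold's API; the sketch assumes `0 <= left <= right < len(data)` and folds that satisfy the associativity contract below. It replays the `query(2, 7)` walk-through from the package's `assets/render_query_animation.py` on the 9-element list with blocks of size 3.

```python
from math import isqrt
from typing import Any, Callable


def build(data: list, folds: dict[str, Callable]) -> tuple[int, dict[str, list]]:
    """Split data into blocks of size isqrt(n) and fold each block once."""
    size = max(1, isqrt(len(data)))
    folded = {
        name: [fn(data[i : i + size]) for i in range(0, len(data), size)]
        for name, fn in folds.items()
    }
    return size, folded


def query(data: list, size: int, folded: dict[str, list],
          folds: dict[str, Callable], left: int, right: int) -> dict[str, Any]:
    """Inclusive range query: scan the two partial blocks, reuse whole-block folds."""
    lb, rb = left // size, right // size
    result = {}
    for name, fn in folds.items():
        if lb == rb:  # range sits inside one block: just scan it
            result[name] = fn(data[left : right + 1])
            continue
        parts = [fn(data[left : (lb + 1) * size])]  # left partial block
        parts += folded[name][lb + 1 : rb]  # precomputed whole blocks
        parts.append(fn(data[rb * size : right + 1]))  # right partial block
        result[name] = fn(parts)  # associativity lets fn combine its own results
    return result


data = [3, 1, 4, 1, 5, 9, 2, 6, 5]
folds = {"sum": sum, "max": max, "min": min}
size, folded = build(data, folds)
print(size, folded["sum"])  # 3 [8, 15, 13]
print(query(data, size, folded, folds, 2, 7))  # {'sum': 27, 'max': 9, 'min': 1}
```

For the sum fold this is exactly `4 + 15 + (2 + 6) = 27`: one scanned element on the left, one reused block in the middle, two scanned elements on the right.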

`append` is **amortized O(√n)**. Most appends just push onto the last block at O(1), but each time `n` crosses a perfect square, `block_size = isqrt(n)` increments and the whole structure re-blocks — an O(n) cost. The gap between consecutive perfect squares is `2k + 1` (linear in `k = √n`, not geometric), so over `n` appends total rebuild work sums to Σ_{k=1}^{√n} O(k²) = O(n^(3/2)) — i.e. O(√n) per append amortized. Boundary crossings happen only ~once per √n appends, though, so the *median* append latency stays in the low µs range across all `n` (this is what the benchmarks show); the O(√n) amortized cost only shows up in the mean or the total.
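
Written out, the rebuild bound goes as follows, assuming (as the paragraph above does) that the k-th rebuild happens when the length reaches k² and costs on the order of k² element moves. With K = ⌊√n⌋ rebuilds after n appends:

$$
\begin{aligned}
(k+1)^2 - k^2 &= 2k + 1 && \text{(appends between consecutive rebuilds)} \\
W(n) = \sum_{k=1}^{K} k^2 &= \frac{K(K+1)(2K+1)}{6} \approx \frac{K^3}{3} \approx \frac{n^{3/2}}{3} && \text{(total rebuild work)} \\
\frac{W(n)}{n} &\approx \frac{\sqrt{n}}{3} = O(\sqrt{n}) && \text{(amortized rebuild cost per append)}
\end{aligned}
$$

For n = 10⁴ (K = 100), the closed form gives W = 338,350 element moves, or about 34 per append, against a median of O(1).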

`TimeIndexedLiveFold` layers a parallel monotonically non-decreasing timestamp list on top. `query_time_range(start, end)` calls `bisect_left`/`bisect_right` to map timestamps to indices in O(log n), then routes through the same √n-decomposed query path — so overall query cost stays O(√n).
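
The timestamp-to-index mapping can be sketched with the standard `bisect` module. `time_range_to_indices` is a hypothetical helper, not livefold's API; it assumes a sorted timestamp list and inclusive bounds on both ends, which is what the README's `query_time_range(2.0, 4.0) → {"sum": 9, ...}` example implies.

```python
from bisect import bisect_left, bisect_right


def time_range_to_indices(timestamps, start, end):
    """Map an inclusive [start, end] time window to an inclusive index range.

    Hypothetical helper: assumes `timestamps` is sorted in non-decreasing
    order. Returns None when no timestamp falls inside the window.
    """
    left = bisect_left(timestamps, start)  # first index with ts >= start
    right = bisect_right(timestamps, end) - 1  # last index with ts <= end
    return (left, right) if left <= right else None


timestamps = [1.0, 2.0, 3.0, 4.0, 5.0]
values = [1, 2, 3, 4, 5]
left, right = time_range_to_indices(timestamps, 2.0, 4.0)
print(left, right, sum(values[left : right + 1]))  # 1 3 9
print(time_range_to_indices(timestamps, 5.5, 9.0))  # None
```

Using `bisect_left` for the start and `bisect_right` for the end is what makes duplicate timestamps at either boundary fall inside the window.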

For the full derivation, complexity analysis, and other implementation details, see the [corresponding blog post](https://dannycahall.substack.com/p/square-root-decomposition-made-mutable).

> *Note: the blog post predates the rebrand from `pysquagg` and uses the old singular `aggregator_function=` API. The math and structural choices are unchanged; only the package name and the `folds={"name": fn, ...}` dict shape have evolved.*

## Fold contract

A fold is a single-argument callable `fn(items) -> result` that:

1. Accepts an **iterable** of elements (or, when called internally to combine block results, an iterable of prior fold results) and returns a single value.
2. Is **associative**: `fn([fn([a, b]), fn([c, d])]) == fn([a, b, c, d])`. This lets `query` combine precomputed block-level folds with the partial folds at each end.
3. Returns a value of the same shape it accepts as elements — i.e., feeding the result back through `fn` together with other results must work. `len` is a common-but-broken choice: it returns an `int` regardless of input shape, so `len([len(block_a), len(block_b)])` gives `2`, not the total element count.
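
A quick way to probe a candidate fold against this contract is to fold per block, re-fold the block results, and compare with a single fold over everything. `satisfies_contract` is a hypothetical helper, not part of livefold, and a `True` on one input is evidence rather than a proof of associativity.

```python
from typing import Callable, Sequence


def satisfies_contract(fn: Callable, items: Sequence, block_size: int) -> bool:
    """Hypothetical smoke test (not part of livefold): does re-folding the
    per-block results give the same answer as folding all items at once?
    A True result on one input is evidence, not a proof of associativity."""
    blocks = [items[i : i + block_size] for i in range(0, len(items), block_size)]
    return fn([fn(block) for block in blocks]) == fn(items)


items = list(range(1, 11))  # 1..10, split into blocks of 3: sizes 3, 3, 3, 1
print(satisfies_contract(sum, items, 3))  # True
print(satisfies_contract(max, items, 3))  # True
print(satisfies_contract(len, items, 3))  # False: len([3, 3, 3, 1]) == 4, not 10
```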

Examples that satisfy the contract: `sum`, `max`, `min`, `math.prod`, `math.gcd` (via `functools.reduce`), bitwise `or`/`and`/`xor`, `"".join`, and any mergeable sketch or accumulator (t-digest, HyperLogLog, Count-Min, Welford mean/variance) wrapped in a fold-shaped callable. Commutativity is *not* required — string concatenation, matrix multiplication, and other ordered monoids work too.
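
A few of the listed folds can be written as fold-shaped callables with `functools.reduce`. The wrappers below (`gcd_fold`, `or_fold`, `concat_fold`, `matmul_fold`) are hypothetical illustrations, with 2x2 matrices stored as nested tuples as an assumption. Each is checked by folding blocks of three and then folding the block results in their original order, which is why commutativity is not needed.

```python
import math
import operator
from functools import reduce

Matrix = tuple[tuple[int, int], tuple[int, int]]  # 2x2 matrix as ((a, b), (c, d))
IDENTITY: Matrix = ((1, 0), (0, 1))


def gcd_fold(xs):
    return reduce(math.gcd, xs, 0)  # gcd(0, x) == abs(x), so 0 is a neutral start


def or_fold(xs):
    return reduce(operator.or_, xs, 0)  # bitwise OR; 0 is the identity


def concat_fold(xs):
    return "".join(xs)  # ordered: concatenation is not commutative


def matmul2(a: Matrix, b: Matrix) -> Matrix:
    return (
        (a[0][0] * b[0][0] + a[0][1] * b[1][0], a[0][0] * b[0][1] + a[0][1] * b[1][1]),
        (a[1][0] * b[0][0] + a[1][1] * b[1][0], a[1][0] * b[0][1] + a[1][1] * b[1][1]),
    )


def matmul_fold(ms):
    return reduce(matmul2, ms, IDENTITY)  # associative but not commutative


def blockwise(fn, items, size):
    """Fold each block, then fold the block results in their original order."""
    return fn([fn(items[i : i + size]) for i in range(0, len(items), size)])


A, B = ((1, 1), (0, 1)), ((1, 0), (1, 1))
cases = [
    (gcd_fold, [12, 18, 24, 30, 42, 60, 84]),
    (or_fold, [1, 2, 4, 8, 16, 3, 5]),
    (concat_fold, list("livefold")),
    (matmul_fold, [A, B, ((2, 0), (0, 1)), B, A]),
]
for fn, items in cases:
    print(fn.__name__, blockwise(fn, items, 3) == fn(items))  # True for all four
print(matmul2(A, B) == matmul2(B, A))  # False: order matters
```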

## Constraints

- **Not thread-safe.** Single-process, single-thread workloads only.

## Contributing

See [CONTRIBUTING.md](https://github.com/danielenricocahall/livefold/blob/main/CONTRIBUTING.md).

livefold-0.1.0/README.md

ADDED

@@ -0,0 +1,152 @@

# livefold

*live + fold — incremental folds over a mutable sequence.*

[](https://github.com/danielenricocahall/livefold/actions/workflows/ci.yaml)
[](https://github.com/danielenricocahall/livefold/blob/main/pyproject.toml)
[](https://github.com/danielenricocahall/livefold/blob/main/LICENSE)

> A primitive for online sequential aggregation in Python.
> Maintain a mutable numeric sequence; query exact aggregates over any range
> in **O(√n)**; plug in any associative reducer (any monoid).

## When to reach for it

| Need | Use |
|---|---|
| Immutable series, range aggregates | Prefix sums |
| Frequent point updates, log-time queries | Segment tree / Fenwick tree |
| Fixed-width rolling windows | `pandas.rolling()` / `polars.rolling()` |
| **Mutable series, arbitrary range queries, multi-fold** | **livefold** |

Common shapes: request latencies, sensor readings, trade prices, telemetry events.

## Quickstart

```bash
pip install livefold
```

```python
from livefold import LiveFold

lf = LiveFold([1, 2, 3, 4, 5, 6], folds={"sum": sum, "max": max, "min": min})

lf.append(7)
lf.query(0, 5)
# → {"sum": 21, "max": 6, "min": 1}

# Mutate freely; aggregates stay current
lf[2] = -1
lf.query(2, 5)
# → {"sum": 14, "max": 6, "min": -1}
```

## Performance

At n = 10⁷, `livefold`'s median range query is **69 µs vs naive Python's 29 ms** (~400× faster), and median append cost is **~2 µs across all n** while every other backend with a competitive query path (numpy, pandas) degrades linearly on *every* append. livefold's amortized append is O(√n) — boundary-crossing rebuilds are rare (~one per √n appends) but real — so the median characterizes typical streaming latency, not the rare rebuild spike.

Full methodology, append benchmarks, comparison against four backends, and the reproduction script: [`benchmarks/`](https://github.com/danielenricocahall/livefold/tree/main/benchmarks).

## Examples

Two runnable Streamlit demos in [`examples/`](https://github.com/danielenricocahall/livefold/tree/main/examples):

- **[`system_metrics/`](https://github.com/danielenricocahall/livefold/tree/main/examples/system_metrics)** — live `psutil`-driven CPU/memory dashboard with arbitrary-range aggregate queries. Runs entirely offline.
- **[`crypto_ticks/`](https://github.com/danielenricocahall/livefold/tree/main/examples/crypto_ticks)** — synthetic BTC/USD tick stream with high/low/avg-price queries. Includes a drop-in recipe for real Binance ticks.

## API

```python
LiveFold(data: Iterable, folds: dict[str, Callable])
```

| Member | Returns | Notes |
|---|---|---|
| `lf.append(x)` | `None` | Amortized O(√n); worst-case O(n) on perfect-square crossings |
| `lf.query(left, right)` | `dict[str, Any]` | O(√n); inclusive bounds |
| `lf.blocks` | `list[list]` | Underlying √n-sized blocks |
| `lf.folded_values` | `dict[str, list]` | Per-fold, per-block aggregates |
| `lf.insert / pop / extend / remove / sort / ...` | — | Standard `list` methods; blocks and folds updated in place |

`LiveFold` subclasses `list`, so it can be passed to any code that expects a plain list; `query`, `blocks`, and `folded_values` are extras layered on top.

## Time-indexed queries: `TimeIndexedLiveFold`

For monotonic streams where you want to query by *time* instead of *index*, `TimeIndexedLiveFold` carries a parallel timestamp per element and exposes `query_time_range` — still O(√n).

```python
from livefold import TimeIndexedLiveFold

lf = TimeIndexedLiveFold(
    [1, 2, 3],
    folds={"sum": sum, "max": max},
    timestamps=[1.0, 2.0, 3.0],
)
lf.append(4, timestamp=4.0)
lf.append(5, timestamp=5.0)

lf.query_time_range(2.0, 4.0)
# → {"sum": 9, "max": 4}
```

If you omit `timestamps` / `timestamp`, `time.time()` is used by default. The class is generic over the timestamp type — anything orderable works (`float`, `int`, `datetime`, ...). Pass explicit timestamps for any non-`float` type:

```python
from datetime import datetime
from livefold import TimeIndexedLiveFold

lf = TimeIndexedLiveFold[datetime](
    [1, 2, 3],
    folds={"sum": sum},
    timestamps=[datetime(2025, 1, 1), datetime(2025, 6, 1), datetime(2026, 1, 1)],
)
lf.query_time_range(datetime(2025, 5, 1), datetime(2026, 6, 1))
# → {"sum": 5}
```

`TimeIndexedLiveFold` is **append-only by design**. Operations that would break time ordering raise `MonotonicityError`:

- `insert`, `sort`, `reverse` — would break the ordering invariant
- slice `__setitem__`, slice `__delitem__` — would desync data and timestamps
- `append` / `extend` with a timestamp earlier than the last stored one
- `+` / `+=` with anything other than another `TimeIndexedLiveFold` (use `extend(values, timestamps=...)` instead)

Integer indexing (`lf[i] = x`, `del lf[i]`), `pop`, `remove`, and `clear` work normally and keep the parallel timestamp list in sync. `+` and `+=` between two `TimeIndexedLiveFold` instances concatenate timestamps and re-check monotonicity.

## How it works

`LiveFold` splits its underlying list into roughly √n blocks of ⌊√n⌋ elements each, precomputes the configured folds for each block, and updates them incrementally on mutation. A `query(left, right)` scans at most two partial blocks element by element (fewer than 2√n elements) and combines the precomputed folds of the whole blocks in between (at most about √n values), so each fold does O(√n) work per query. In other words, this is square-root decomposition, made mutable, with a dict-shaped output.

`append` is **amortized O(√n)**. Most appends just push onto the last block at O(1), but each time `n` crosses a perfect square, `block_size = isqrt(n)` increments and the whole structure re-blocks — an O(n) cost. The gap between consecutive perfect squares is `2k + 1` (linear in `k = √n`, not geometric), so over `n` appends total rebuild work sums to Σ_{k=1}^{√n} O(k²) = O(n^(3/2)) — i.e. O(√n) per append amortized. Boundary crossings happen only ~once per √n appends, though, so the *median* append latency stays in the low µs range across all `n` (this is what the benchmarks show); the O(√n) amortized cost only shows up in the mean or the total.

`TimeIndexedLiveFold` layers a parallel monotonically non-decreasing timestamp list on top. `query_time_range(start, end)` calls `bisect_left`/`bisect_right` to map timestamps to indices in O(log n), then routes through the same √n-decomposed query path — so overall query cost stays O(√n).

For the full derivation, complexity analysis, and other implementation details, see the [corresponding blog post](https://dannycahall.substack.com/p/square-root-decomposition-made-mutable).

> *Note: the blog post predates the rebrand from `pysquagg` and uses the old singular `aggregator_function=` API. The math and structural choices are unchanged; only the package name and the `folds={"name": fn, ...}` dict shape have evolved.*

## Fold contract

A fold is a single-argument callable `fn(items) -> result` that:

1. Accepts an **iterable** of elements (or, when called internally to combine block results, an iterable of prior fold results) and returns a single value.
2. Is **associative**: `fn([fn([a, b]), fn([c, d])]) == fn([a, b, c, d])`. This lets `query` combine precomputed block-level folds with the partial folds at each end.
3. Returns a value of the same shape it accepts as elements — i.e., feeding the result back through `fn` together with other results must work. `len` is a common-but-broken choice: it returns an `int` regardless of input shape, so `len([len(block_a), len(block_b)])` gives `2`, not the total element count.

Examples that satisfy the contract: `sum`, `max`, `min`, `math.prod`, `math.gcd` (via `functools.reduce`), bitwise `or`/`and`/`xor`, `"".join`, and any mergeable sketch or accumulator (t-digest, HyperLogLog, Count-Min, Welford mean/variance) wrapped in a fold-shaped callable. Commutativity is *not* required — string concatenation, matrix multiplication, and other ordered monoids work too.

## Constraints

- **Not thread-safe.** Single-process, single-thread workloads only.

## Contributing

See [CONTRIBUTING.md](https://github.com/danielenricocahall/livefold/blob/main/CONTRIBUTING.md).

Binary file

livefold-0.1.0/assets/render_query_animation.py

ADDED

@@ -0,0 +1,194 @@

```python
"""Render an animated GIF illustrating LiveFold's √n-decomposed query.

Walks through `query(2, 7)` on a 9-element list with 3 blocks of size 3:
1. Idle state with precomputed per-block sums
2. Query is announced
3. Left partial block is scanned (1 element)
4. Middle whole block uses its precomputed fold (no scan)
5. Right partial block is scanned (2 elements)
6. Combined answer revealed

Run with: uv run --group bench python -m assets.render_query_animation
Output: assets/query_animation.gif
"""

from __future__ import annotations

from pathlib import Path

import matplotlib.patches as mpatches
import matplotlib.pyplot as plt
from matplotlib.animation import FuncAnimation, PillowWriter

DATA = [3, 1, 4, 1, 5, 9, 2, 6, 5]
BLOCK_SIZE = 3
BLOCKS = [DATA[i : i + BLOCK_SIZE] for i in range(0, len(DATA), BLOCK_SIZE)]
BLOCK_SUMS = [sum(b) for b in BLOCKS]

QUERY_LEFT, QUERY_RIGHT = 2, 7  # inclusive

IDLE_CELL = "#e5e7eb"
PARTIAL = "#fbbf24"
PRECOMPUTED = "#a78bfa"
ANSWER = "#34d399"
TEXT_DARK = "#111827"
TEXT_MUTED = "#6b7280"

NUM_PHASES = 6
# Hold each phase by emitting one frame per phase + extra holds on the answer.
# Total frames at fps=1 → seconds in the GIF.
PHASE_DURATIONS = [1, 1, 1, 1, 1, 3]  # final answer holds for 3 seconds


def phase_for_frame(frame: int) -> int:
    cumulative = 0
    for phase, duration in enumerate(PHASE_DURATIONS):
        cumulative += duration
        if frame < cumulative:
            return phase
    return NUM_PHASES - 1


def draw(frame: int, ax) -> None:
    phase = phase_for_frame(frame)
    ax.clear()
    ax.set_xlim(-0.6, 9.6)
    ax.set_ylim(-2.6, 3.0)
    ax.set_aspect("equal")
    ax.axis("off")

    title = "LiveFold(data, folds={'sum': sum})"
    if phase >= 1:
        title = "query(left=2, right=7) → ?"
    if phase >= 5:
        title = "query(left=2, right=7) → 27"
    ax.text(
        4.5,
        2.55,
        title,
        ha="center",
        va="center",
        fontsize=14,
        fontweight="bold",
        color=TEXT_DARK,
    )

    for i, val in enumerate(DATA):
        color = IDLE_CELL
        if phase >= 2 and i == QUERY_LEFT:
            color = PARTIAL
        if phase >= 4 and QUERY_RIGHT - 1 <= i <= QUERY_RIGHT:
            color = PARTIAL
        ax.add_patch(
            mpatches.FancyBboxPatch(
                (i + 0.05, 1.05),
                0.9,
                0.9,
                boxstyle="round,pad=0.02,rounding_size=0.08",
                facecolor=color,
                edgecolor="black",
                linewidth=1.2,
            )
        )
        ax.text(
            i + 0.5,
            1.5,
            str(val),
            ha="center",
            va="center",
            fontweight="bold",
            fontsize=14,
            color=TEXT_DARK,
        )
        ax.text(
            i + 0.5,
            2.15,
            str(i),
            ha="center",
            va="center",
            fontsize=9,
            color=TEXT_MUTED,
        )

    for b_idx in range(len(BLOCKS)):
        start_x = b_idx * BLOCK_SIZE + 0.05
        end_x = start_x + BLOCK_SIZE - 0.1
        ax.plot(
            [start_x, start_x, end_x, end_x],
            [0.95, 0.65, 0.65, 0.95],
            color="#374151",
            lw=1.5,
        )

        block_color = IDLE_CELL
        if phase == 3 and b_idx == 1:
            block_color = PRECOMPUTED
        elif phase >= 4 and b_idx == 1:
            block_color = PRECOMPUTED

        label = f"sum = {BLOCK_SUMS[b_idx]}"
        ax.text(
            (start_x + end_x) / 2,
            0.15,
            label,
            ha="center",
            va="center",
            fontsize=11,
            fontweight="bold",
            color=TEXT_DARK,
            bbox=dict(
                boxstyle="round,pad=0.4",
                facecolor=block_color,
                edgecolor="#374151",
                linewidth=1.0,
            ),
        )

    annotations: list[tuple[str, str]] = []
    if phase >= 2:
        annotations.append(("left partial → scan data[2] = 4", PARTIAL))
    if phase >= 3:
        annotations.append(("middle block → reuse precomputed sum = 15", PRECOMPUTED))
    if phase >= 4:
        annotations.append(("right partial → scan data[6:8] = 2 + 6 = 8", PARTIAL))
    if phase >= 5:
        annotations.append(("answer = 4 + 15 + 8 = 27", ANSWER))

    for i, (text, color) in enumerate(annotations):
        ax.text(
            4.5,
            -0.7 - i * 0.45,
            text,
            ha="center",
            va="center",
            fontsize=11,
            color=TEXT_DARK,
            bbox=dict(
                boxstyle="round,pad=0.3",
                facecolor=color,
                edgecolor="none",
                alpha=0.7,
            ),
        )


def main() -> None:
    fig, ax = plt.subplots(figsize=(10, 4.2), dpi=100)
    fig.patch.set_facecolor("white")

    total_frames = sum(PHASE_DURATIONS)
    anim = FuncAnimation(
        fig,
        lambda f: draw(f, ax),
        frames=total_frames,
        interval=1000,
        blit=False,
    )

    out = Path(__file__).parent / "query_animation.gif"
    anim.save(out, writer=PillowWriter(fps=1))
    print(f"Wrote {out}")


if __name__ == "__main__":
    main()
```